AMD Radeon RX Vega 64: Bundles, Specs, And Aug. 14 Availability

Spoiler alert: Nvidia is going to maintain its top spot in the ultra-high-end graphics market with GeForce GTX 1080 Ti and Titan Xp. But AMD claims that its anticipated Vega 10 architecture closes the current gap in a big way, setting it up to compete where most enthusiasts can afford to play.

The company isn’t getting too specific about performance (beyond a handful of ranges), but it does have three versions of the upcoming desktop-oriented flagship to announce: Radeon RX Vega 64, Radeon RX Vega 64 Limited Edition, and a Radeon RX Vega 64 Liquid Cooled. They're all expected to launch in August, and in a clear positioning move, the base RX Vega 64 will start at $500, setting up a bout with GeForce GTX 1080. You can also expect to see a Radeon RX Vega 56 for $400. Asked how Vega 56 will fare against GeForce GTX 1070, company representatives responded that “it’ll be very competitive.”

The story gets more interesting. Recognizing that the Polaris-based cards in its product stack are being gobbled up by cryptocurrency miners and largely unavailable to gamers, AMD came up with the concept of Radeon Packs. These packs promise discounts when PC builders buy certain components at the same time as their new graphics card.

There will be three packs: the Radeon Aqua Pack at $700, the Radeon Black Pack for $600, and a Radeon Red Pack priced at $500. Obviously, you can spend $500 and buy the standard Radeon RX Vega 64. If history is a good predictor of the future, though, those will get gobbled up quickly (and probably not for the price AMD suggests).

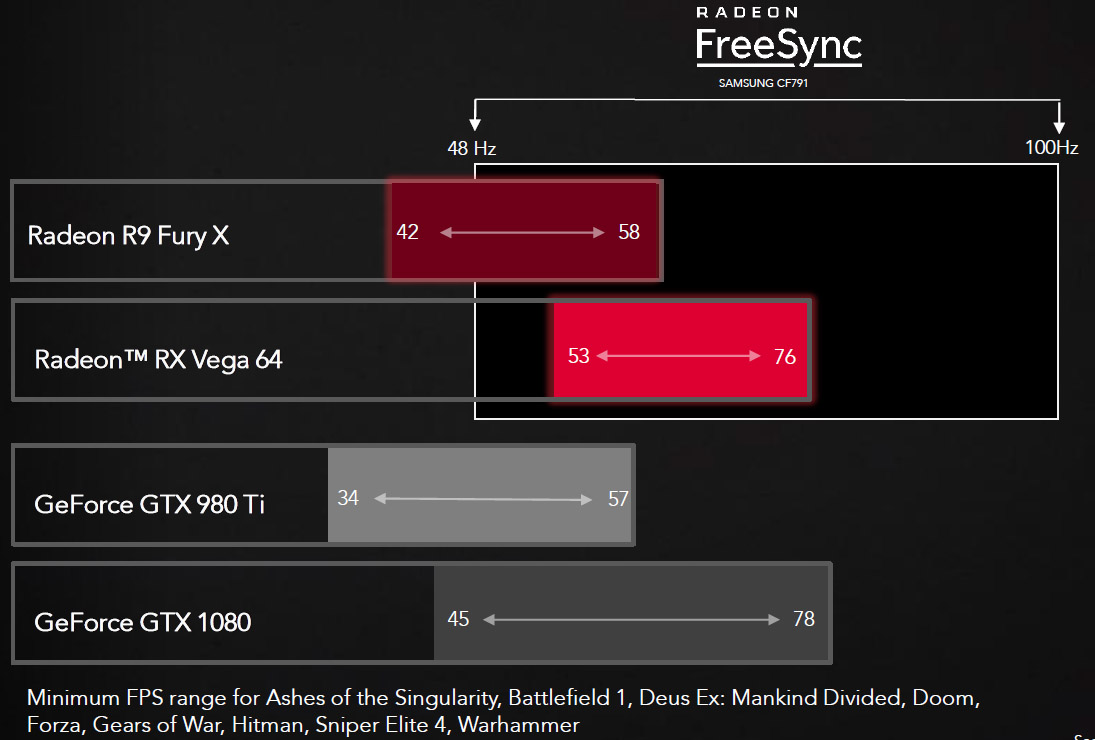

Drop $600 on one of the two air-cooled cards and you’ll get a couple of free games as well (Wolfenstein II and Prey in the U.S.), plus the option to buy Samsung’s FreeSync-capable CF791 at a $200 discount and a Ryzen 7/motherboard combo for $100 off. Take the discounts or leave them; you’ll pay $100 extra either way.

AMD's Aqua Pack jumps to $700, getting you the liquid-cooled card plus those discounts and bundled games. Meanwhile, the $500 Red Pack substitutes in a Radeon RX Vega 56.
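To make the pack math concrete, here is a minimal sketch of the net effect on a buyer's total spend, using only the figures above: a $100 premium over the standalone card, $200 off the monitor, and $100 off the CPU/motherboard combo. The function and constant names are ours for illustration, and the sketch ignores the value of the bundled games and the price of the discounted hardware itself.

```python
# Figures taken from the article; names are illustrative, not AMD's.
PACK_PREMIUM = 100      # extra cost of a pack over the standalone card ($)
MONITOR_DISCOUNT = 200  # off Samsung's CF791 ($)
COMBO_DISCOUNT = 100    # off the Ryzen 7/motherboard combo ($)


def net_pack_delta(take_monitor: bool, take_combo: bool) -> int:
    """Return the change in total spend versus buying everything without a pack.

    Positive means the pack costs you more; negative means it saves money.
    """
    savings = 0
    if take_monitor:
        savings += MONITOR_DISCOUNT
    if take_combo:
        savings += COMBO_DISCOUNT
    return PACK_PREMIUM - savings


for monitor in (False, True):
    for combo in (False, True):
        delta = net_pack_delta(monitor, combo)
        print(f"monitor={monitor!s:5} combo={combo!s:5} -> {delta:+d} USD")
```

Under these assumptions, the pack only pays for itself if you were already planning to buy the monitor; taking the CPU/motherboard discount alone merely breaks even.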

The Radeon Packs aren't coupons you can redeem later. You pay more for your card of choice and have the option to save on gaming-oriented components at the same time. Conceptually, this is meant to discourage cryptocurrency miners who have no interest in variable refresh monitors, Ryzen 7-based platforms, or free games. Given how much extra miners are willing to pay for mid-range Radeon RX cards, however, we don't see a $100 or $200 premium discouraging that community from spending extra on a Radeon Pack if Vega's hash rates come to warrant the “investment” at some point.


Originally, AMD said its Radeon Packs would be available through August. However, representatives later clarified that they’d be extended for longer. Add-in board partners are expected to follow AMD’s reference designs with their own interpretations toward the end of Q3 or beginning of Q4.

Radeon RX Vega 64 Specifications: Something For Everyone

Since the Radeon Vega Frontier Edition is already available, we had a good idea of what the gaming-oriented models would look like leading up to AMD's embargoed briefing. Like the Radeon R9 Fury X's Fiji processor, Radeon RX Vega 64 employs four shader engines, each with its own geometry processor and rasterizer.

Also similar to Fiji, there are 16 Compute Units per Shader Engine, each CU sporting 64 Stream processors and four texture units. Multiply all of that out and you get 4096 Stream processors and 256 texture units.
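The multiplication is easy to check. The sketch below uses a hypothetical `GpuLayout` class of our own; the even split of Radeon RX Vega 56's 56 CUs into 14 per Shader Engine is our assumption, not something AMD stated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuLayout:
    """Illustrative model of a GCN-style shader array (names are ours)."""
    shader_engines: int
    cus_per_engine: int
    stream_processors_per_cu: int = 64
    texture_units_per_cu: int = 4

    @property
    def compute_units(self) -> int:
        return self.shader_engines * self.cus_per_engine

    @property
    def stream_processors(self) -> int:
        return self.compute_units * self.stream_processors_per_cu

    @property
    def texture_units(self) -> int:
        return self.compute_units * self.texture_units_per_cu


vega64 = GpuLayout(shader_engines=4, cus_per_engine=16)
# Assumption: Vega 56's 56 CUs are spread evenly across four engines.
vega56 = GpuLayout(shader_engines=4, cus_per_engine=14)

for name, gpu in (("RX Vega 64", vega64), ("RX Vega 56", vega56)):
    print(name, gpu.compute_units, gpu.stream_processors, gpu.texture_units)
```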

Clock rates are way up, though. Whereas Fiji topped out at 1050 MHz, a GlobalFoundries 14nm FinFET LPP process and targeted optimizations for higher frequencies allow the Vega 10 GPU on Radeon RX Vega 64 to operate at a base clock rate of 1247 MHz with a boost rate of 1546 MHz. Obviously, AMD's peak FP32 specification of 12.66 TFLOPS is based on that best-case frequency. We typically use the guaranteed base in our calculations, though. Even then, 10.2 TFLOPS is still an almost 20% increase over Radeon R9 Fury X.
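For readers who want to reproduce those throughput figures, peak FP32 rate is simply Stream processors times two floating-point operations per clock (a fused multiply-add) times frequency. The helper below is a sketch with names of our choosing; the Fury X comparison assumes Fiji's 4096 Stream processors at 1050 MHz.

```python
def peak_fp32_tflops(stream_processors: int, clock_mhz: float,
                     ops_per_clock: int = 2) -> float:
    """Peak FP32 throughput in TFLOPS, counting an FMA as two operations."""
    return stream_processors * ops_per_clock * clock_mhz * 1e6 / 1e12


vega64_base = peak_fp32_tflops(4096, 1247)
vega64_boost = peak_fp32_tflops(4096, 1546)
fury_x = peak_fp32_tflops(4096, 1050)

# Generational uplift using the guaranteed base clock.
uplift_pct = (vega64_base / fury_x - 1) * 100

print(f"Vega 64 base:  {vega64_base:.2f} TFLOPS")
print(f"Vega 64 boost: {vega64_boost:.2f} TFLOPS")
print(f"Fury X:        {fury_x:.2f} TFLOPS")
print(f"Base-clock uplift over Fury X: {uplift_pct:.1f}%")
```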

| Specification | Radeon RX Vega 64 Liquid Cooled | Radeon RX Vega 64 | Radeon RX Vega 56 |
|---|---|---|---|
| Next-Gen CUs | 64 | 64 | 56 |
| Stream Processors | 4096 | 4096 | 3584 |
| Texture Units | 256 | 256 | 224 |
| ROPs | 64 | 64 | 64 |
| Base Clock Rate | 1406 MHz | 1247 MHz | 1156 MHz |
| Boost Clock Rate | 1677 MHz | 1546 MHz | 1471 MHz |
| Memory | 8GB HBM2 | 8GB HBM2 | 8GB HBM2 |
| Memory Bandwidth | 484 GB/s | 484 GB/s | 410 GB/s |
| Peak FP32 Perf. | 13.7 TFLOPS | 12.66 TFLOPS | 10.5 TFLOPS |
| Peak FP16 Perf. | 27.5 TFLOPS | 25.3 TFLOPS | 21 TFLOPS |
| Peak FP64 Perf. | 856 GFLOPS | 791 GFLOPS | 656 GFLOPS |
| Board Power | 345W | 295W | 210W |
| Price | $700 | $500 | $400 |

The liquid-cooled model steps those numbers up to a 1406 MHz base with boost clock rates as high as 1677 MHz. That’s an almost 13% higher base and ~8%-higher boost frequency, pushing AMD’s specified peak FP32 rate to 13.7 TFLOPS. You’ll pay more than just a $200 premium for the closed-loop liquid cooler, though. Board power rises from 295W on Radeon RX Vega 64 to the Liquid Cooled Edition’s 345W—a disproportionate 17% increase. Both figures exceed Nvidia’s 250W rating on GeForce GTX 1080 Ti, which isn’t even in Vega’s crosshairs.
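Those percentages, and what they imply for efficiency, can be worked out from the table. The sketch below uses AMD's peak FP32 and board power specifications only; the GFLOPS-per-watt figure is a paper calculation of ours, not a measurement, and the helper name is hypothetical.

```python
def pct_increase(new: float, old: float) -> float:
    """Percentage by which `new` exceeds `old`."""
    return (new / old - 1) * 100


base_gain = pct_increase(1406, 1247)   # base clock, liquid vs. air
boost_gain = pct_increase(1677, 1546)  # boost clock, liquid vs. air
power_gain = pct_increase(345, 295)    # board power, liquid vs. air

# Peak FP32 GFLOPS per watt of board power, from AMD's specs.
air_gflops_per_watt = 12.66e3 / 295
liquid_gflops_per_watt = 13.7e3 / 345

print(f"Base clock:  +{base_gain:.1f}%")
print(f"Boost clock: +{boost_gain:.1f}%")
print(f"Board power: +{power_gain:.1f}%")
print(f"Air:    {air_gflops_per_watt:.1f} GFLOPS/W")
print(f"Liquid: {liquid_gflops_per_watt:.1f} GFLOPS/W")
```

On paper, at least, the liquid-cooled card gives up some efficiency for its extra clock rate.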

Each of Vega 10's Shader Engines sports four render back-ends capable of 16 pixels per clock cycle, yielding 64 ROPs. These render back-ends become clients of the L2, as we already know. That L2 is now 4MB in size, whereas Fiji included 2MB of L2 capacity (already a doubling of Hawaii’s 1MB L2). Ideally, this means the GPU goes out to HBM2 less often, reducing Vega 10’s reliance on external bandwidth. Since Vega 10’s clock rates can get up to ~60% higher than Fiji’s, while memory bandwidth actually drops by 28 GB/s, a larger cache should help prevent bottlenecks.
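One rough way to see why the bigger cache matters is to divide memory bandwidth by peak compute. The sketch below assumes Fiji's 512 GB/s (the article's 484 GB/s plus the 28 GB/s difference), 4096 Stream processors on both chips, and each GPU's highest rated clock; the function name is ours, and bytes per FLOP is only a coarse indicator of balance.

```python
def bytes_per_flop(bandwidth_gbs: float, stream_processors: int,
                   clock_mhz: float) -> float:
    """Memory bandwidth available per peak FP32 operation."""
    peak_gflops = stream_processors * 2 * clock_mhz / 1000
    return bandwidth_gbs / peak_gflops


fiji = bytes_per_flop(512, 4096, 1050)
vega64_air = bytes_per_flop(484, 4096, 1546)
vega64_liquid = bytes_per_flop(484, 4096, 1677)

print(f"Fiji (Fury X):    {fiji:.4f} B/FLOP")
print(f"Vega 64 (air):    {vega64_air:.4f} B/FLOP")
print(f"Vega 64 (liquid): {vega64_liquid:.4f} B/FLOP")
print(f"Liquid vs. Fiji:  {(vega64_liquid / fiji - 1) * 100:.0f}%")
```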

Incidentally, AMD's Mike Mantor says all of the SRAM on Vega 10 adds up to more than 45MB. Wow. No wonder this is a 12.5-billion-transistor chip measuring 486 square millimeters. That's more transistors than Nvidia's GP102 in an even larger die.

Adoption of HBM2 allows AMD to halve the number of memory stacks on its interposer compared to Fiji, cutting an aggregate 4096-bit bus to 2048 bits. And yet, rather than the 4GB ceiling that dogged Radeon R9 Fury X, RX Vega 64 comfortably offers 8GB using 4-hi stacks (AMD's Frontier Edition card boasts 16GB). An odd 1.89 Gb/s data rate facilitates a 484 GB/s bandwidth figure, matching what GeForce GTX 1080 Ti achieves using 11 Gb/s GDDR5X.
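The bandwidth arithmetic is bus width times per-pin data rate, divided by eight bits per byte. In the sketch below, the 352-bit bus for GeForce GTX 1080 Ti and the 1 Gb/s rate for Fiji's first-generation HBM are figures we are supplying from those cards' published specifications rather than from AMD's briefing; the function name is illustrative.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Aggregate memory bandwidth in GB/s from bus width and per-pin rate."""
    return bus_width_bits * data_rate_gbps / 8


vega64 = memory_bandwidth_gbs(2048, 1.89)     # two HBM2 stacks
fury_x = memory_bandwidth_gbs(4096, 1.0)      # four HBM stacks (assumed 1 Gb/s)
gtx_1080_ti = memory_bandwidth_gbs(352, 11)   # GDDR5X (assumed 352-bit bus)

print(f"RX Vega 64:  {vega64:.2f} GB/s")
print(f"R9 Fury X:   {fury_x:.2f} GB/s")
print(f"GTX 1080 Ti: {gtx_1080_ti:.2f} GB/s")
```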

Picking Out the Newness

Despite a long list of similarities to Fiji, AMD insists that Vega 10 improves on operating frequency, power, and features. A 40%-higher transistor count and almost 50%-higher peak boost clocks would seem to support such a claim. Again, we've covered much of Vega's architecture in past tech day wrap-ups, and if you want more detail on the High-Bandwidth Cache Controller, Next-Gen Compute Units, programmable geometry pipeline, and next-gen pixel engine, check out AMD Teases Vega Architecture: More Than 200+ New Features, Ready First Half Of 2017. But the company’s architects did share some new information.

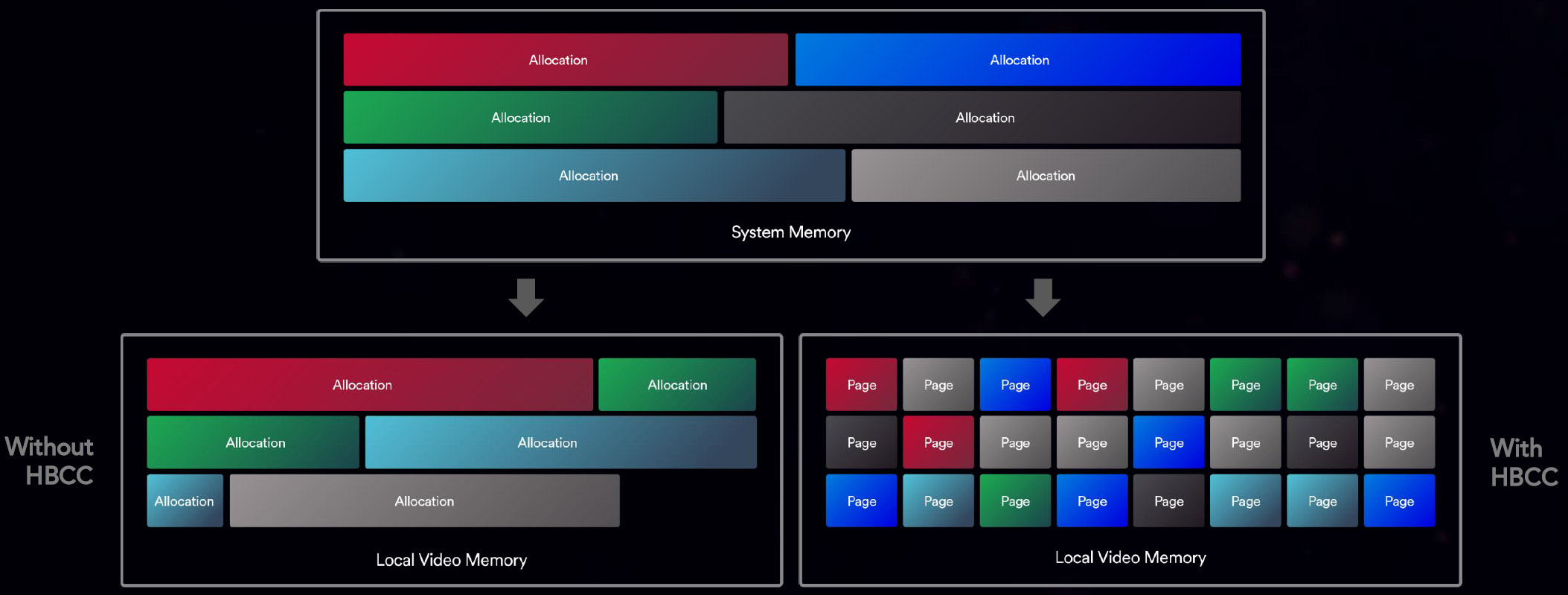

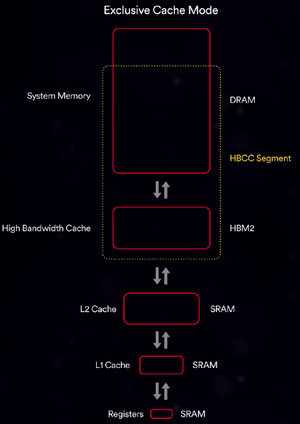

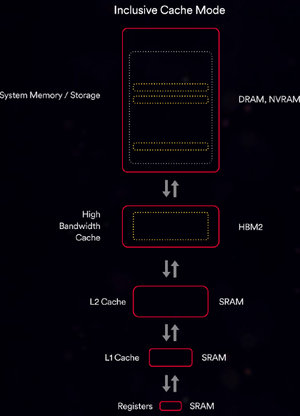

For instance, in our previous coverage, we touched on the HBCC’s hardware-assisted memory management capabilities that allow Vega to move pages in fine-grained fashion using multiple, programmable techniques. The controller can receive a request to bring in data and then retrieve it through a DMA transfer while the GPU switches to another thread and continues work without stalling. It can go get data on demand but also bring it back in predictively. Information in the HBM can be replicated in system memory like an inclusive cache, or the HBCC can maintain just one copy to save space.

AMD expounded on those concepts by illustrating Vega’s memory hierarchy, from the smallest and fastest registers all the way through system DRAM, which the HBCC can address. By default, the architecture is configured in an exclusive cache mode, extending the HBCC segment beyond the card’s HBM2. But in systems with lots of storage capability, the on-package memory can be used as a cache with inclusive backing in DRAM or NVRAM. The practical significance of this was shown in a demo using the exclusive model to access more than 27GB of assets in real-time—a workload that purportedly won’t even run on competing hardware.

AMD’s early-2017 update on Vega’s Next-Gen Compute Units made Rapid Packed Math support official, even if previous PlayStation 4 Pro deep-dives had suggested Vega would embrace new data types. From our coverage:

“…with 64 shaders [per NCU] and a peak of two floating-point operations/cycle, you end up with a maximum of 128 32-bit ops per clock. Using packed FP16 math, that number turns into 256 16-bit ops per clock. AMD even claimed it can do up to 512 eight-bit ops per clock. Double-precision is a different animal—AMD doesn’t seem to have a problem admitting it sets FP64 rates based on target market.”

We confirmed that Vega 10’s FP64 rate, like Fiji’s, is 1:16 of its single-precision specification. This is another gaming-specific architecture; it’s not going to live in the HPC space at all.
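The per-precision rates follow directly from those ratios. The sketch below starts from AMD's rounded peak FP32 figures, doubles them for packed FP16, and divides by 16 for FP64; the function names are ours. Doubling the rounded 13.7 TFLOPS gives 27.4 rather than the 27.5 in AMD's table, which would be consistent with AMD starting from the unrounded figure.

```python
def ops_per_clock_per_ncu(shaders: int = 64, flops_per_shader: int = 2) -> dict:
    """Peak operations per clock for one NCU at each packed width."""
    fp32 = shaders * flops_per_shader
    return {"fp32": fp32, "fp16": fp32 * 2, "int8": fp32 * 4}


def derived_rates(peak_fp32_tflops: float) -> dict:
    """Packed FP16 (2x) and FP64 (1:16) rates derived from peak FP32."""
    return {
        "fp16_tflops": peak_fp32_tflops * 2,
        "fp64_gflops": peak_fp32_tflops * 1000 / 16,
    }


print(ops_per_clock_per_ncu())
for name, fp32 in (("Vega 64 Liquid", 13.7), ("Vega 64", 12.66), ("Vega 56", 10.5)):
    rates = derived_rates(fp32)
    print(f"{name}: FP16 {rates['fp16_tflops']:.2f} TFLOPS, "
          f"FP64 {rates['fp64_gflops']:.0f} GFLOPS")
```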

But Vega’s NCU can process IEEE-compliant FP16 and integer ops at double-rate, plus it supports mixed-precision ops for accumulating natively into 32-bit registers with 16-bit (or a mixture of 16- and 32-bit) operands. Of course, leveraging this functionality requires developer support, so it’s not going to manifest as a clear benefit at launch.
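To illustrate why accumulating 16-bit operands into a 32-bit register matters, here is a small software emulation. It uses Python's `struct` module to round values to IEEE half and single precision; it models only the rounding behavior, not Vega's hardware or instruction set, and the helper names are ours.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def accumulate(values, round_sum) -> float:
    """Sum values, rounding the running total after every addition."""
    total = 0.0
    for v in values:
        total = round_sum(total + v)
    return total


# 4096 identical FP16 operands, each the half-precision rounding of 0.1.
operands = [to_fp16(0.1)] * 4096
exact = sum(operands)  # double precision is ample for this reference sum

fp16_total = accumulate(operands, to_fp16)  # 16-bit accumulator
fp32_total = accumulate(operands, to_fp32)  # 32-bit accumulator

print(f"reference:        {exact}")
print(f"FP16 accumulator: {fp16_total} (error {abs(fp16_total - exact):.3f})")
print(f"FP32 accumulator: {fp32_total} (error {abs(fp32_total - exact):.3f})")
```

The 16-bit accumulator stalls once the spacing between adjacent FP16 values outgrows the addend, while the 32-bit accumulator tracks the reference sum. That is the practical appeal of mixed-precision accumulation.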

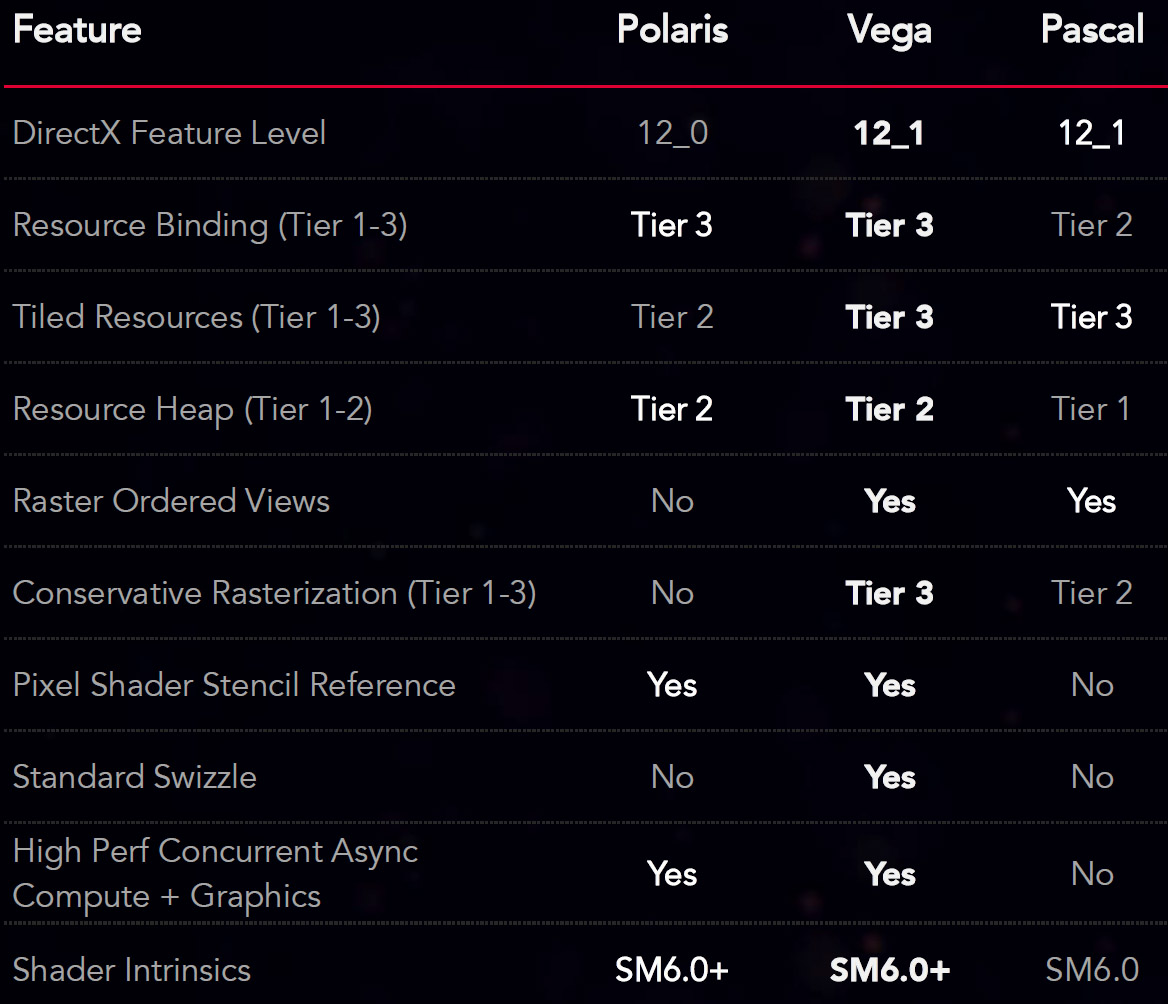

Along the same lines, AMD and Nvidia both like talking about what future-looking capabilities their designs offer, even when the apps don’t yet exist to exploit them. For developers, though, access to hardware that goes beyond current APIs facilitates experimentation. Vega 10 goes beyond Polaris or Nvidia’s Pascal in supporting DirectX 12.

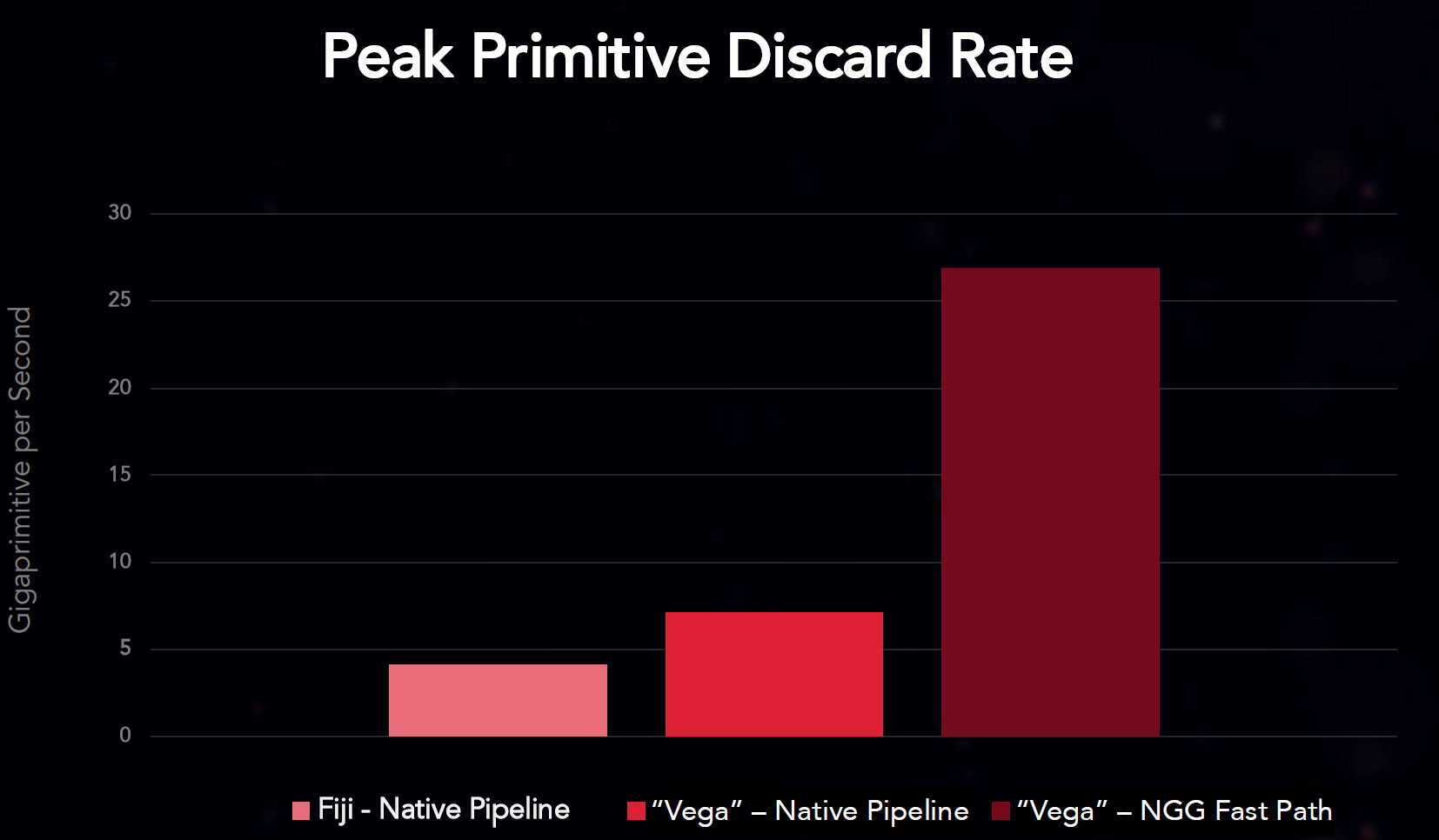

As mentioned in our architectural sneak-peek seven months ago, Vega 10 complements the traditional geometry pipeline with a new primitive shader stage that combines the vertex and primitive shading steps. The primitive shader’s functionality includes a lot of what the DirectX vertex, hull, domain, and geometry shader stages can do, but with potentially higher performance and greater flexibility. AMD’s most recent update added some peak performance data to the story, illustrating its geometry pipeline’s peak primitive discard rate using primitive shaders. Granted, this is another feature developers have to dig their nails into before gamers realize any benefit, so its potential won’t be apparent near-term.

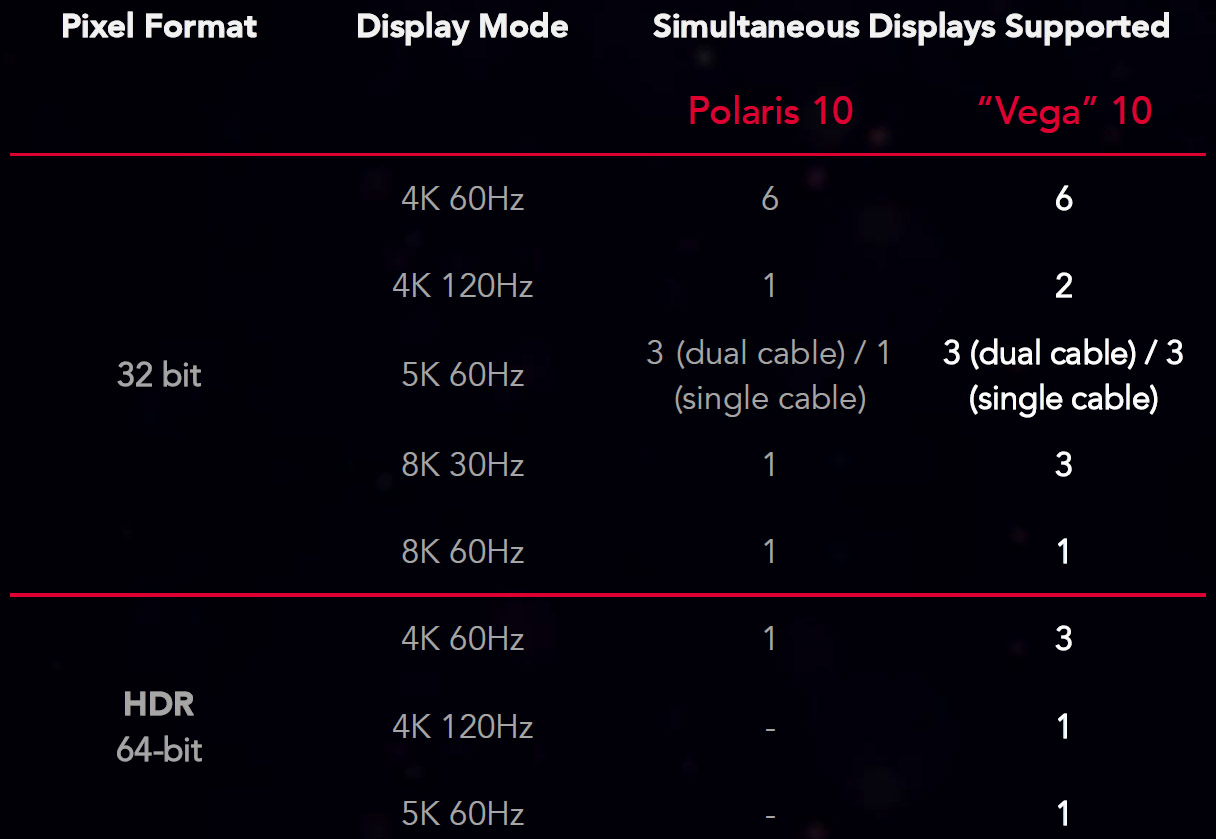

The same goes for Vega 10’s improved display engine—though we eagerly anticipate an opportunity to bask in support for two 4K/120Hz displays or three HDR-capable 4K/60Hz monitors. All three of AMD’s Radeon RX Vega models will offer three DisplayPort 1.4-ready outputs and an HDMI 2.0 connector, all with HDCP 2.2 and FreeSync support.

AMD went into depth on Vega 10’s virtualization and security capabilities as well, including the integration of an AMD Secure Processor for hardware-validated boot and firmware. Trusted memory zone support theoretically should allow playback of protected content like Ultra HD Blu-ray discs, although we’d need compatible software, too. Currently, Cyberlink’s PowerDVD 17 only works with Kaby Lake-based processors sporting HD Graphics 630 or Iris Plus Graphics 640.

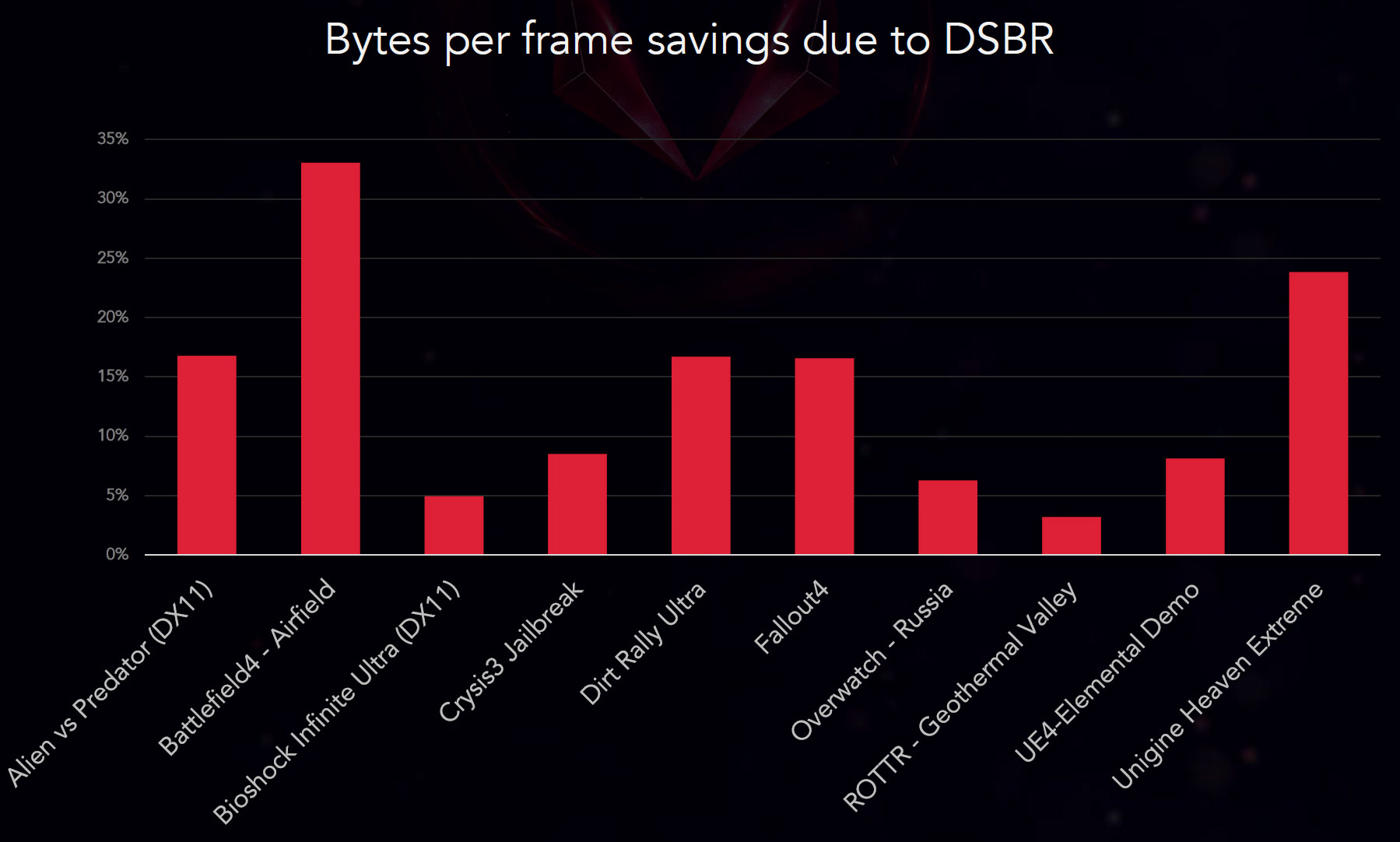

As an interesting side-note, it sounds like the pixel engine’s Draw Stream Binning Rasterizer, which we introduced back in January, is currently disabled on Radeon Vega Frontier Edition cards. However, AMD says it’ll be turned on for Radeon RX Vega’s impending launch. Don’t expect any miracles from the feature’s activation. After all, AMD is assuredly projecting performance with DSBR enabled. But a slide of presumably best-case scenarios shows bandwidth savings as high as 30%.

The Wait Continues

Deep technical details aside, we know that Vega 10 is both dense and physically large, outweighing Nvidia’s GP102 in transistor count and die size. It’s going to be hot; official board power specs from 295W to 345W for the RX Vega 64 assure us of this. And AMD’s own guidance puts the card up against a more than year-old GeForce GTX 1080. Make no mistake, we’re excited to see AMD closing the gap with its competition, and we know die-hard AMD fans are glad to see Radeon RX Vega finally coming to fruition (well, almost). But when it comes time to recommend where you should spend at least $500 on a graphics upgrade, we’re going to want to see these cards win somewhere. It looks like relative value could be the company’s best hope this round. The wait is almost over. August 14th is just around the corner.

-

Bob_8_ Know what it is - Feels just like you open your main Big Christmas present - and it's not what you dreamed it would be =(

-

rwinches I would like to see more multi monitor tests included. I believe that AMD does better with those setups.

-

pacdrum_88 Man, I'm stoked. Everyone seems down. But looking at the monitor I'm getting, the Acer XR342CK which uses FreeSync, and the crazy price of all decent GPU's right now, and the $399 Vega 56 sounds like a steal to me. I'll be getting it as soon as it launches. Video editing, gaming, this thing will be doing it all for me!

-

none12345 Hrm im torn. I was thinking i wasnt going to get a vega. But the vega 56 at $400 seems like a decent deal.

Need to see benchmarks. But with the mining craze inflating gpu prices right now, thats not a bad price, if you can get it for that.

Cant even get a 580 atm, sold out, and even if you tried, it would be up near $300 if not more. Vega 56 should be at like 70% faster, so for $100 more it looks pretty good.

I guess it depends on where the board partner vegas come out price wise. It doesnt look as good if its $450, which is probably what the non reference ones will be.

At $400 for a non reference vega 56 with a good cooler....i think it might be hard for me to pass it up.

All depends on benchmarks tho. IM expecting vega 56 to be about halfway between a 1070 and 1080.

-

Lucky_SLS AMD should release their own game engine to make it easier for the devs to exploit all the Vega features. The same goes for Nvidia.

All the new features and performance gains, just wasted without proper support :(

-

mpampis84 I don't see this going well for AMD. You can get a water cooled EVGA GTX 1080 for less money than the water cooled RX Vega, and less power hungry, too.

For example, had I decided to upgrade from my RX 480, I'd also have to buy a PSU.

Why choose the RX Vega?

-

It's a shame the 980 Ti successor isn't the 1080 as the AMD marketing team would have you believe in that first slide. It's the 1080 Ti.

980 Ti to 1080 Ti is a 65% gain. Fury X to Vega is a 25% gain. :spamafote:

I wanted this to be a win. Competition is great for my wallet. This is a fail though and a big one. A $699, 350w liquid cooled card competing with a vanilla, 180w GTX 1080 Founder's Edition from 2015. Give the AMD driver team a year and it might compete with a factory overclocked 1080. Of course Nvidia will have a new generation out by then. You don't have to tout things like 'platform cost ( that includes the monitor )' and ' higher minimum frame rates' and offer games and coupons on monitors when you have a winning product.

Ryzen is an epic win and it obviously ate all the R&D budget. Hopefully they will reinvest some of that profit in the graphics card division and have something to compete in the high end in a few years.

I think this will mostly be a product for the "AMD can do no wrong" crowd and people with disposable income.

Who knows, real reviews might prove me wrong. But when I saw the 16GB Frontier Edition competing with my 2 year old 980 Ti in benchmarks I knew this wasn't a world beater.

-

LORD_ORION Nvidia probably won't even adjust their prices...

When you see this kind of marketing crap, it's because the product isn't good where it counts.