Graphcore Bow IPU Introduces TSMC 3D Wafer-on-Wafer Processor

Graphcore claims up to 40% higher performance and 16% better power efficiency than the chip's predecessors.

Graphcore introduced a new AI processor today with considerable fanfare. Called the Bow Intelligence Processing Unit, or Bow IPU for short, it is designed to power Graphcore's next-generation Bow Pod AI computer systems.

The Bow IPU is particularly interesting for how it is fabricated. It is the world's first 3D Wafer-on-Wafer (WoW) processor, and was designed in close cooperation with TSMC, which will be offering similar technology to its other customers. 3D fabrication technology is already on its way to some consumer chips, and Graphcore's claimed success can help shine a light on the possibilities ahead.

TSMC Adds the WoW Factor

Graphcore has worked closely with TSMC to prepare the Bow IPU. This is a TSMC 7nm processor, like its predecessor, but the new mojo comes from 3D stacking technology. With the Bow IPU, two wafers are bonded together to make a 3D die. Graphcore explains that the Bow IPU has one wafer for AI processing, with 1,472 independent IPU-Core tiles capable of handling 8,800 threads, enhanced by 900MB of in-processor memory. The second wafer in the stack, connected with Back Side Through Silicon Via (BTSV) and WoW hybrid bonding, is designed for power delivery.

The new 3D packaging innovation, with its improved power delivery, enables significant clock speed uplifts on the same process node. It is largely thanks to these packaging enhancements that the Bow IPU can outpace its otherwise very similar 2D predecessor, by as much as 40% in AI compute tasks according to Graphcore. Additionally, a performance-per-Watt improvement of up to 16% is claimed.
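The two headline claims can be combined for a rough sense of what they imply about power draw. The sketch below assumes both "up to" figures apply to the same workload at the same time, which Graphcore's announcement does not confirm, so treat the result as a back-of-the-envelope estimate rather than a Graphcore figure. The function name is hypothetical.

```python
# Back-of-the-envelope estimate, NOT a figure published by Graphcore.
# Assumption: the "up to 40%" performance gain and the "up to 16%"
# performance-per-Watt gain both hold for the same workload.
PERF_GAIN = 0.40        # claimed performance uplift over the predecessor
EFFICIENCY_GAIN = 0.16  # claimed performance-per-Watt uplift


def implied_power_ratio(perf_gain: float, efficiency_gain: float) -> float:
    """Return new power / old power implied by the two gains.

    efficiency = performance / power, so
    power_ratio = performance_ratio / efficiency_ratio.
    """
    return (1.0 + perf_gain) / (1.0 + efficiency_gain)


ratio = implied_power_ratio(PERF_GAIN, EFFICIENCY_GAIN)
print(f"Implied power draw vs. predecessor: {ratio:.2f}x")
```

Under that assumption, the Bow IPU would draw roughly a fifth more power than its predecessor when running at the full 40% uplift, which fits with the clock speed increase being the main source of the gain.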

Pondering Performance

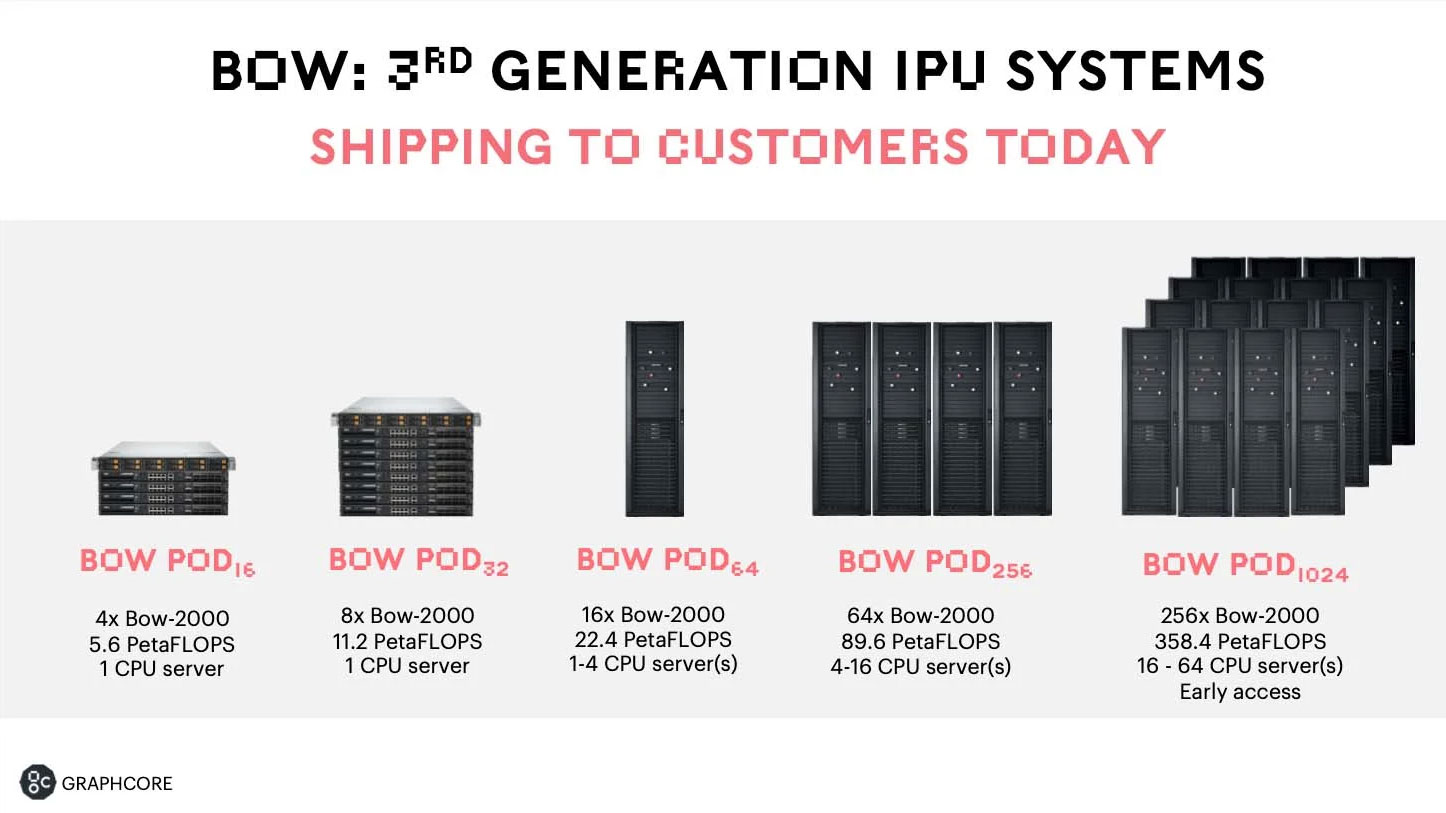

The new Bow Pod systems are all built from Bow-2000 IPU Machines, each of which contains four Bow IPUs and delivers 1.4 PetaFLOPS of AI compute. The smallest system shipping, the Bow Pod 16, contains four Bow-2000 machines, thus offering 5.6 PetaFLOPS of AI compute.

Graphcore says that its top-of-the-range superscale Bow Pod 1024 delivers up to 350 PetaFLOPS of AI compute. Importantly, if you are already working with Graphcore systems, the new Bow IPU systems can run your existing software without modification.
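The pod figures above follow from simple multiplication, sketched here. The sketch assumes that the number in a pod's name is its IPU count (consistent with the Bow Pod 16 holding four machines of four IPUs each) and that compute scales linearly with machine count. The function name is hypothetical.

```python
# Rough scaling arithmetic from the figures quoted in the article.
# Assumptions: the pod name's number equals its IPU count, and AI compute
# scales linearly with the number of Bow-2000 machines.
IPUS_PER_MACHINE = 4      # Bow IPUs in one Bow-2000 machine
PFLOPS_PER_MACHINE = 1.4  # AI compute of one Bow-2000, in PetaFLOPS


def pod_pflops(ipu_count: int) -> float:
    """Estimate a pod's AI compute in PetaFLOPS from its IPU count."""
    if ipu_count <= 0 or ipu_count % IPUS_PER_MACHINE != 0:
        raise ValueError("IPU count must be a positive multiple of 4")
    machines = ipu_count // IPUS_PER_MACHINE
    return machines * PFLOPS_PER_MACHINE


for ipus in (16, 1024):
    print(f"Bow Pod {ipus}: ~{pod_pflops(ipus):.1f} PetaFLOPS")
```

For the Bow Pod 16 this reproduces the quoted 5.6 PetaFLOPS. For the Bow Pod 1024, linear scaling gives about 358 PetaFLOPS, in the same range as the "up to 350 PetaFLOPS" figure Graphcore quotes; the article does not explain the small difference.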

According to Graphcore, the new Bow Pod systems packing the Bow IPU deliver "up to 40% higher performance and 16% better power efficiency for real world AI applications than their predecessors."

We have learned to take first-party performance claims, benchmarks, and comparisons with a healthy pinch of salt. Today's announcement from Graphcore has already been picked apart somewhat by analyst Dylan Patel, who highlights a number of unfair comparisons against rival Nvidia AI systems.

Among the largest bones of contention are that the Graphcore systems pack much more silicon, and that Graphcore chose AI models which hide the Bow Pod systems' memory capacity deficits. Moreover, the price comparisons weren't fair, asserts Patel, as Graphcore compared its systems against 80GB Nvidia A100s rather than 40GB ones.

Graphcore says Bow Pod systems are available immediately from its sales partners worldwide.

Graphcore Good Computer

Today Graphcore also announced that it is developing "an ultra-intelligence AI computer that will surpass the parametric capacity of the brain." Its so-called Good Computer, named after Bletchley Park veteran and computer science pioneer Jack Good, is scheduled for launch by 2024.

The Good Computer will be powered by a next-gen Bow IPU, and aims to provide over 10 ExaFLOPS of AI floating point compute, up to 4 Petabytes of memory with more than 10 Petabytes/s of bandwidth, and support for AI models with up to 500 trillion parameters.

Mark Tyson is a news editor at Tom's Hardware. He enjoys covering the full breadth of PC tech, from business and semiconductor design to products approaching the edge of reason.