Alleged Intel Arc A580 Shows Odd Performance in Ashes of the Singularity

Early Arc A580 results from a questionable benchmark

The first benchmark results of the Intel Arc A580 RI (we don't know what "RI" means) have been added to the Ashes of the Singularity database. This occurred just hours after Intel published specifications of its upcoming Arc Alchemist graphics cards, including the midrange Arc A580 — a card that should go up against midrange GPUs in our GPU benchmarks hierarchy, and it might even have a shot at displacing one of the best graphics cards if it's priced right.

Testing unreleased hardware is always a challenge as drivers may not be optimized and software might need tweaking. But these Arc A580 performance numbers raise more questions than answers. The main one is whether the published results were indeed obtained on an Arc A580, and whether it was running final clocks and decent drivers.

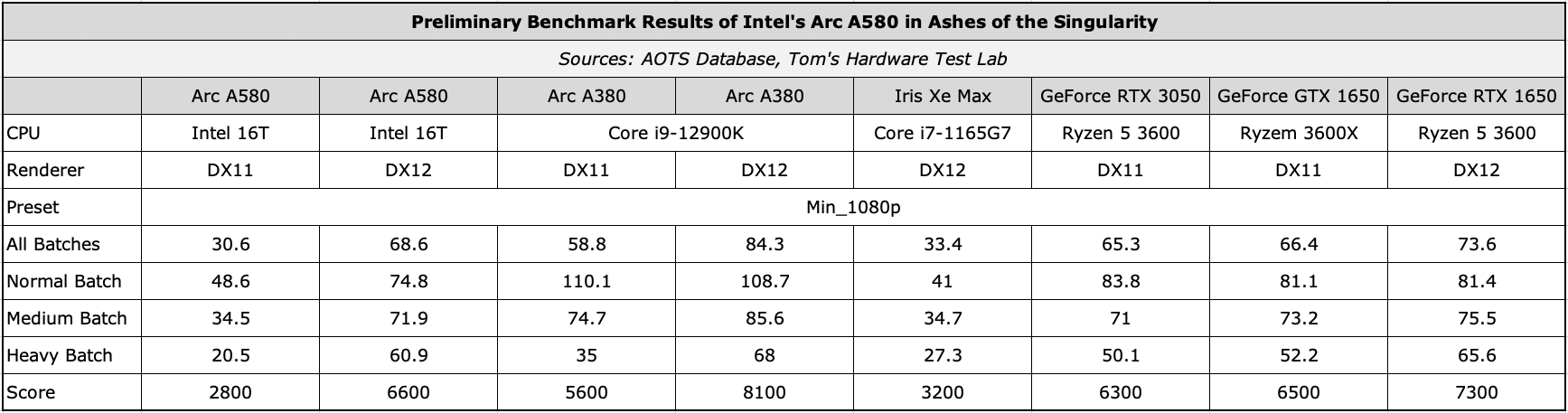

@Benchleaks discovered four "Intel Arc A580 Graphics RI" benchmark results in the AOTS database (1, 2, 3, 4) obtained on a system based on an unreleased Intel 16-thread processor with a 2.50GHz default clock rate (and an unknown turbo frequency) and equipped with 16GB of memory. The results were obtained with the Min_1080p preset. We decided to compare leaked results of Intel's upcoming midrange Arc A580 graphics board to our own test results of Intel's Arc A380 as well as results obtained on Nvidia's entry-level graphics cards from the AOTS database.

It should be noted that some of the Arc A580 results were obtained using a DirectX 11 renderer and some using a DirectX 12 renderer. Considering that Intel has openly admitted that it did not optimize its discrete GPU drivers for the DirectX 11 application programming interface, the AOTS DX11 results are barely worth considering. Still, we added the best Arc A580 results with both the DX11 and DX12 renderers to the table just to show the difference.

Note: we did not include performance numbers for Nvidia graphics cards obtained on systems based on high-performance CPUs.

Now, when compared to Nvidia's GeForce GTX 1650, our own test results for the Arc A380 look pretty good, which is logical as the GTX 1650 is an entry-level gaming GPU based on a several-year-old architecture. The RTX 3050 results, meanwhile, look bad because they're using DX11; we couldn't find any RTX 3050 DX12 numbers.

So Jarred pulled out a few other GPUs and tested the same settings to put things into proper perspective, all tested on the same i9-12900K platform. You can see how things stack up, and it doesn't lend much credence to the supposed A580 results. The Arc A580 appears to be slower than the Arc A380 in these AOTS benchmarks. There can be several reasons for these odd results.

- The CPU in the system used to benchmark the Arc A580 is limiting performance so badly that a GPU which is supposed to be three times faster than the Arc A380 turns out to be slower.

- Drivers used for the Arc A580 were not optimized for the ACM-G10 GPU.

- The Arc A580 RI is a mobile part that has a power limitation.

- This is not an Arc A580 and someone cheated the benchmark into thinking that it's dealing with this card when it's actually something else.
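The first possibility above, a CPU bottleneck, can be illustrated with a deliberately simplified model in which the slower of the CPU and GPU sets the frame rate. This is only a sketch: the function name and every number below are hypothetical placeholders chosen for illustration, not measurements of any real card or processor.

```python
def effective_fps(cpu_limit_fps: float, gpu_limit_fps: float) -> float:
    """Simplified bottleneck model: the slower stage sets the frame rate."""
    return min(cpu_limit_fps, gpu_limit_fps)


# Placeholder numbers for illustration only, not measured results.
small_gpu_limit = 100.0               # what a small GPU could render on its own
big_gpu_limit = 3 * small_gpu_limit   # a GPU assumed to be three times faster
fast_cpu_limit = 250.0                # frames per second a fast CPU can prepare
slow_cpu_limit = 80.0                 # frames per second a slow CPU can prepare

small_on_fast_cpu = effective_fps(fast_cpu_limit, small_gpu_limit)
big_on_slow_cpu = effective_fps(slow_cpu_limit, big_gpu_limit)

print(f"small GPU, fast CPU: {small_on_fast_cpu:.0f} fps")
print(f"big GPU, slow CPU:   {big_on_slow_cpu:.0f} fps")
```

Under these assumed limits, the nominally faster GPU paired with the slow CPU lands below the smaller GPU paired with the fast CPU, which is the kind of inversion the leaked numbers would imply if the CPU were the cause.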

Truth be told, Ashes of the Singularity is not a good GPU benchmark: the game launched in 2016 and should be a fairly easy nut to crack for modern high-end graphics processors, especially with the Min_1080p preset. The benchmark also favors AMD GPUs and takes huge advantage of fast CPUs. It's really more of a CPU benchmark than a GPU benchmark, particularly with the Min_1080p setting. Also, AOTS tends to show better performance on its second run (though not always!), which means results can vary noticeably from run to run if you're not careful.
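One common way to deal with that kind of run-to-run variation is to repeat the benchmark, set aside the first run as a warm-up, and report both the average and the spread of the rest. The sketch below assumes that approach; `summarize_runs` is a hypothetical helper, and it is not a description of how the AOTS database or our own testing handles repeated runs.

```python
from statistics import mean, stdev


def summarize_runs(fps_runs, discard_first=True):
    """Return (average fps, spread as a percentage of the average).

    The first run is optionally dropped as a warm-up pass, since the
    second run of a benchmark is often faster than the first.
    """
    runs = list(fps_runs[1:] if discard_first else fps_runs)
    if len(runs) < 2:
        raise ValueError("need at least two runs to estimate the spread")
    average = mean(runs)
    # Coefficient of variation: sample standard deviation relative to the mean.
    spread_percent = stdev(runs) / average * 100
    return average, spread_percent
```

A large spread after discarding the warm-up run is a hint that a single leaked result, like the ones discussed here, should not be read too precisely.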

In other words, all AOTS benchmark results need to be viewed with a helping of salt, and perhaps a slice of lemon and a shot of tequila at the viewer's discretion. Keeping in mind that we are dealing with a pre-release piece of hardware with unknown drivers, it's really hard to draw any conclusions about the performance of Intel's Arc A580 in AOTS, and we're not even sure this is a real Arc A580. We should hopefully have actual hardware in hand where we can run our own tests in the not-too-distant future, based on what we're seeing on the Intel Graphics channel.

Jarred Walton contributed to the story.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

Darkbreeze: Huh.

https://www.tweaktown.com/news/88393/intel-arc-gpu-effectively-cancelled-decision-has-been-made/index.html