Intel Arc AV1 Encoder Easily Beats AMD and Nvidia H.264

The future is bright for AV1 streaming

AV1 encoding has finally reached the public through Intel's new Arc Alchemist GPUs, the first GPUs to include a hardware AV1 encoder. In a new video, EposVox tests the encoder on an Arc A380 and finds it remarkably efficient for low-bit-rate video streaming, beating every H.264 hardware encoder in the comparison, including Nvidia's tried-and-true NVENC encoder.

AV1 is an open-source, royalty-free video coding format developed by the Alliance for Open Media, a consortium founded in 2015. Anyone can use the codec without paying licensing fees, and it compresses considerably better than H.264, with file sizes up to 50 percent smaller.

AV1 has gained major traction in the video streaming industry in recent years. AV1 decode engines are already supported in many of the latest GPUs, including Nvidia's RTX 30 series Ampere architecture, AMD's RX 6000 series RDNA2 GPUs, and Intel's latest integrated graphics. Even older gaming consoles, such as Sony's PlayStation 4 Pro, support the AV1 codec.

According to EposVox, video streaming platforms such as YouTube have also adopted AV1 extensively in recent years; the YouTuber says nearly half the videos he watches on a daily basis are available in the new codec.

AV1 encoding for content creation and video streaming, however, has been largely absent despite the codec's adoption. None of the other current GPUs include an AV1 encode engine. You can encode AV1 in software on the CPU, but hardware-accelerated AV1 encoding had been missing until now, with Intel leading the charge with its Arc GPUs.

Arc A380 AV1 Quality Comparison

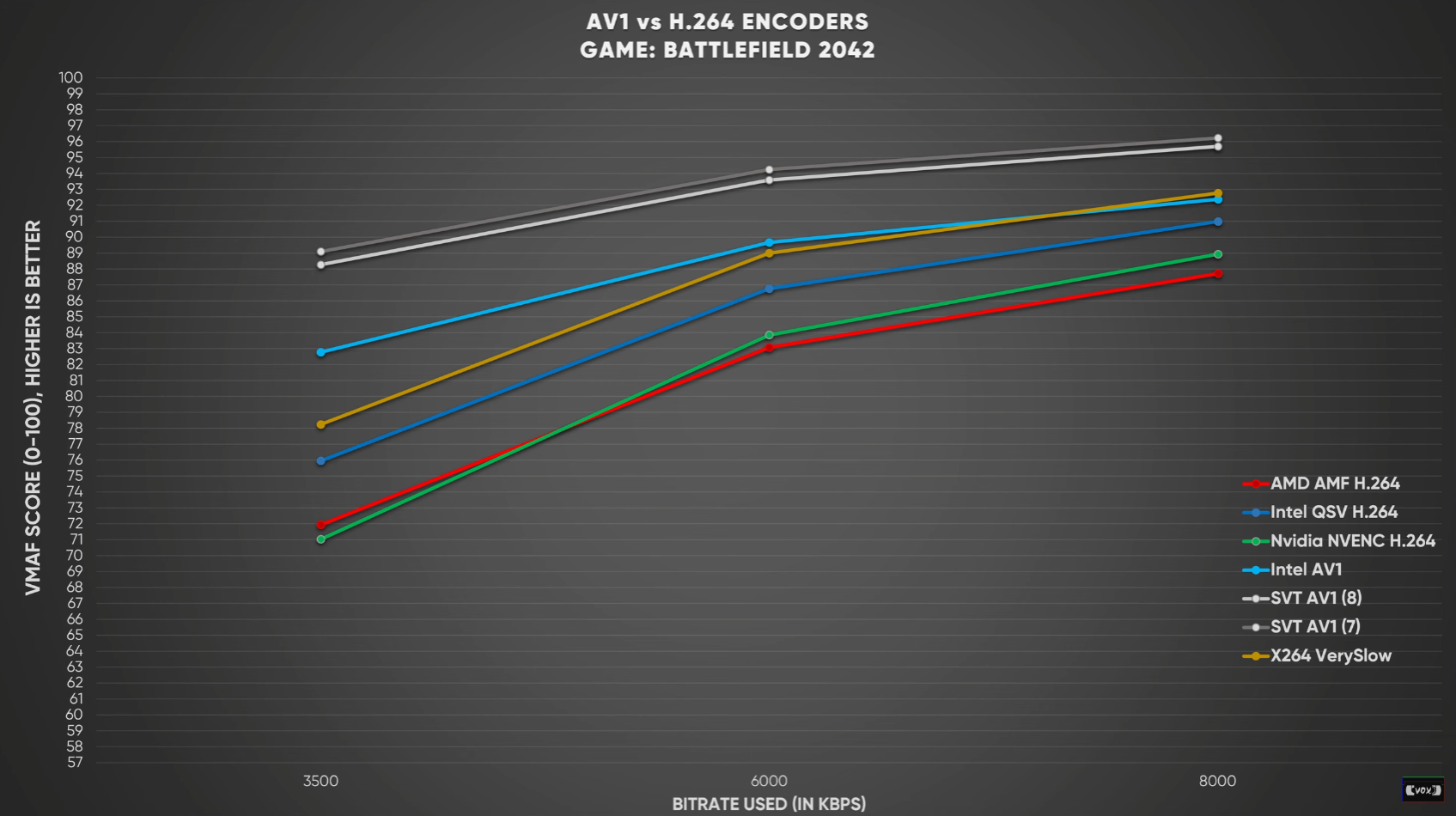

EposVox tested Intel's new AV1 encoder against a variety of H.264 encoders, including Intel's own Quick Sync technology, Nvidia's NVENC, AMD's AMF, and software-based H.264 encoders such as the one found in OBS.

Testing was performed at 3.5 Mbps, 6 Mbps, and 8 Mbps using Netflix's VMAF benchmarking tool, which scores video quality from 0 to 100 relative to the uncompressed source (0 is unwatchable, 100 is perfect video quality).
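For readers who want to try a similar measurement, the sketch below builds an ffmpeg command that compares an encoded clip against its source with the `libvmaf` filter. It is only an illustration: the article does not say which tooling EposVox used, the file names are hypothetical, and it assumes an ffmpeg build with libvmaf enabled and two clips with matching resolution and frame rate.

```python
import shlex


def vmaf_command(encoded_path, reference_path, log_path="vmaf.json"):
    """Build an ffmpeg command that scores encoded_path against reference_path."""
    # The libvmaf filter expects the encoded (distorted) clip as the first
    # input and the reference clip as the second.
    vmaf_filter = f"libvmaf=log_path={log_path}:log_fmt=json"
    return [
        "ffmpeg",
        "-i", encoded_path,
        "-i", reference_path,
        "-lavfi", vmaf_filter,
        "-f", "null", "-",  # discard the video output; only the score is needed
    ]


# Hypothetical file names, used purely for illustration.
cmd = vmaf_command("av1_3500k.mkv", "capture_lossless.mkv")
print(shlex.join(cmd))
```

Running the printed command writes the per-frame and pooled VMAF scores to the JSON log, which can then be compared across encoders and bitrates.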

The AV1 results are impressive: the new Intel encoder scored 83 points at 3.5 Mbps and 90 points at 6 Mbps, higher than any of the H.264 encoders at these bit rates. (This first example was taken from videos recorded from Battlefield 2042.)

The Nvidia NVENC encoder, surprisingly, produced one of the lowest test results: 71 points at 3.5 Mbps and 85 points at 8 Mbps. The AMD AMF encoder scored close to Nvidia, while Intel's Quick Sync encoder (the Alder Lake variant) scored higher with 76 and 87 points at the same bitrates. At 3.5 Mbps, that puts Intel's new AV1 encoder roughly 16 percent ahead of both the Nvidia NVENC and AMD AMF encoders in VMAF score.
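The percentage comparison can be reproduced from the 3.5 Mbps scores quoted in this article. The sketch below is a simple relative-difference calculation, not EposVox's own method. The rounded scores give a slightly higher figure than the one quoted, presumably because the published numbers are rounded.

```python
def relative_gain(new_score, old_score):
    """Percentage by which new_score exceeds old_score."""
    return (new_score - old_score) / old_score * 100.0


# VMAF scores at 3.5 Mbps as quoted in the article (rounded values).
AV1_ARC = 83
H264_SCORES = {"NVENC": 71, "Quick Sync": 76, "x264 VerySlow": 78}

for name, score in H264_SCORES.items():
    print(f"AV1 vs {name}: +{relative_gain(AV1_ARC, score):.1f}%")
```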

The only H.264 encoder that came close to the AV1 results was the x264 software encoder in OBS using the VerySlow preset, which scored 78 points at 3.5 Mbps and 88 points at 6 Mbps. That makes the AV1 results all the more impressive, since the VerySlow preset is so resource-intensive that it can't be run in real time (and is therefore useless for live streaming).

For more details on the AV1 tests, including software-based AV1 encoding, check out EposVox's video.

3.5 Mbps is the Sweet Spot for AV1 Live Streaming

The 3.5 Mbps results are where Intel's AV1 encoder shows the biggest gains. According to the testing, the encoder is so good at 3.5 Mbps that it outperformed both Nvidia's and AMD's encoders running at 6 Mbps, while using a little over half the bitrate.

Above 6 Mbps, however, there seem to be diminishing returns. This is especially true of Intel's AV1 encoder, whose quality score at 8 Mbps (the Twitch limit) is only 2.2 percent higher than at 6 Mbps.

As a result, we can expect bitrates to drop substantially when streaming platforms make the switch to AV1. This will be great for viewers, as AV1 streams will need significantly less internet bandwidth than H.264 streams of the same quality, and will put less strain on the internet as a whole.
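To put the bandwidth difference in concrete terms, the sketch below converts the three tested bitrates into data transferred per hour of viewing. It assumes a constant video bitrate and ignores audio and container overhead, so the numbers are rough estimates only.

```python
def gigabytes_per_hour(bitrate_mbps):
    """Data transferred in one hour at a constant bitrate, in gigabytes."""
    # megabits/s * 3600 s/hour / 8 bits per byte / 1000 MB per GB
    return bitrate_mbps * 3600 / 8 / 1000


def bandwidth_saving(low_mbps, high_mbps):
    """Percentage of bandwidth saved by streaming at low_mbps instead of high_mbps."""
    return (1 - low_mbps / high_mbps) * 100.0


for mbps in (3.5, 6.0, 8.0):
    print(f"{mbps} Mbps -> {gigabytes_per_hour(mbps):.3f} GB per hour")

print(f"3.5 Mbps instead of 6 Mbps saves {bandwidth_saving(3.5, 6.0):.1f}%")
```

A 3.5 Mbps stream works out to about 1.6 GB per hour, versus 2.7 GB per hour at 6 Mbps.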

These new AV1 results are very impressive overall. In Intel's implementation, AV1 comfortably beats H.264 encoding, delivering superior image quality at significantly lower bandwidth.

So far we've only seen Intel's implementation of AV1 encoding. We should get a better understanding of AV1's capabilities when Nvidia and AMD implement their own AV1 encoding engines in their next-gen GPUs. If H.264 hardware encoders have taught us anything, it's that encoding efficiency can change drastically between brands and versions.

Nvidia's and AMD's AV1 encoder implementations could be even more efficient and produce higher quality than what Intel is providing right now, but we'll have to wait until the RTX 40 series and RX 7000 series launch to see if that's true.

Aaron Klotz is a contributing writer for Tom’s Hardware, covering news related to computer hardware such as CPUs and graphics cards.

-

shady28 This isn't real surprising when you consider that ARC came from HPC data center GPUs. One of the biggest uses for those data center GPUs is real time streaming of many different encoding types to end users consuming that content - millions of streams.

Good to note its performance is good there, but that small win for Intel was already pretty well known.

It might eventually be good for twitch streamers and such, but they have to get the basic 3D performance and driver issues fixed first, especially in DX 10/11 where most e-sports titles are sitting at.

DraugTheWhopper What is this article even about, quality or speed? Seems the author is getting very confused between the two.

TheOtherOne H.264 was introduced in 2004, and NVENC version back in 2012. I guess yay! for releasing something faster and better after 18 / 10 years! :unsure:

TerryLaze

TheOtherOne said: H.264 was introduced in 2004, and NVENC version back in 2012. I guess yay! for releasing something faster and better after 18 / 10 years! :unsure:

AV1 was created in 2019 and ARC does it faster than nvidia cards that have been doing this for 18 and 10 years on the old codecs, that's the whole point of the article.

-Fran- AV1 being compared to H264 is like comparing H265 to VP8 and/or 9. It's just apples to oranges in terms of generations of each technology.

AMD's and nVidia's HEVC encoders are the AV1 match and we already know, at least on the AMD side, that HEVC is quite good.

While I haven't tested nVidia's NVENC in a good while, I've been toying around with AMD's (Vega64 and RX6800M, RX6900XT) for streaming and recording video. HEVC/H265 is hands down better than H264 by a lot.

Regards.

InvalidError

DraugTheWhopper said: What is this article even about, quality or speed? Seems the author is getting very confused between the two.

The graph makes it pretty clear: image quality vs 3.5/6/8Mbps bitrate for each implementation.

Lorien Silmaril cool story bro - so when is arc coming out lol? until it's a purchasable product is all kind of pointless no?

InvalidError

Lorien Silmaril said: cool story bro - so when is arc coming out lol?

It is available now, if you are willing to jump through some hoops to get one shipped from China :)

The drivers being what they are while 6+ months behind the original launch plans, I'd recommend waiting another six months for drivers to mature some more before considering it. The GN post-review software package impressions looks like Intel has little to no software QA.

InvalidError

spentshells said: its better at encoding because it was far less frames to encode?

It is a video encoding quality comparison. The same video captures get run through each encoder at the different streaming bitrates and scored based on how close the transcoded videos are to the originals.