Nvidia's Grace CPU Superchip to Power Two Supercomputers, Up to Ten 'AI ExaFlops'

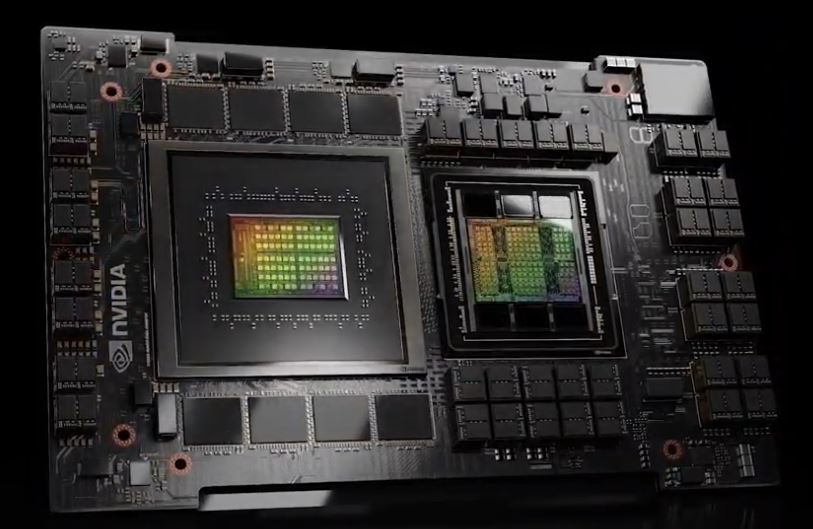

Arm-based Grace CPUs and Hopper GPUs

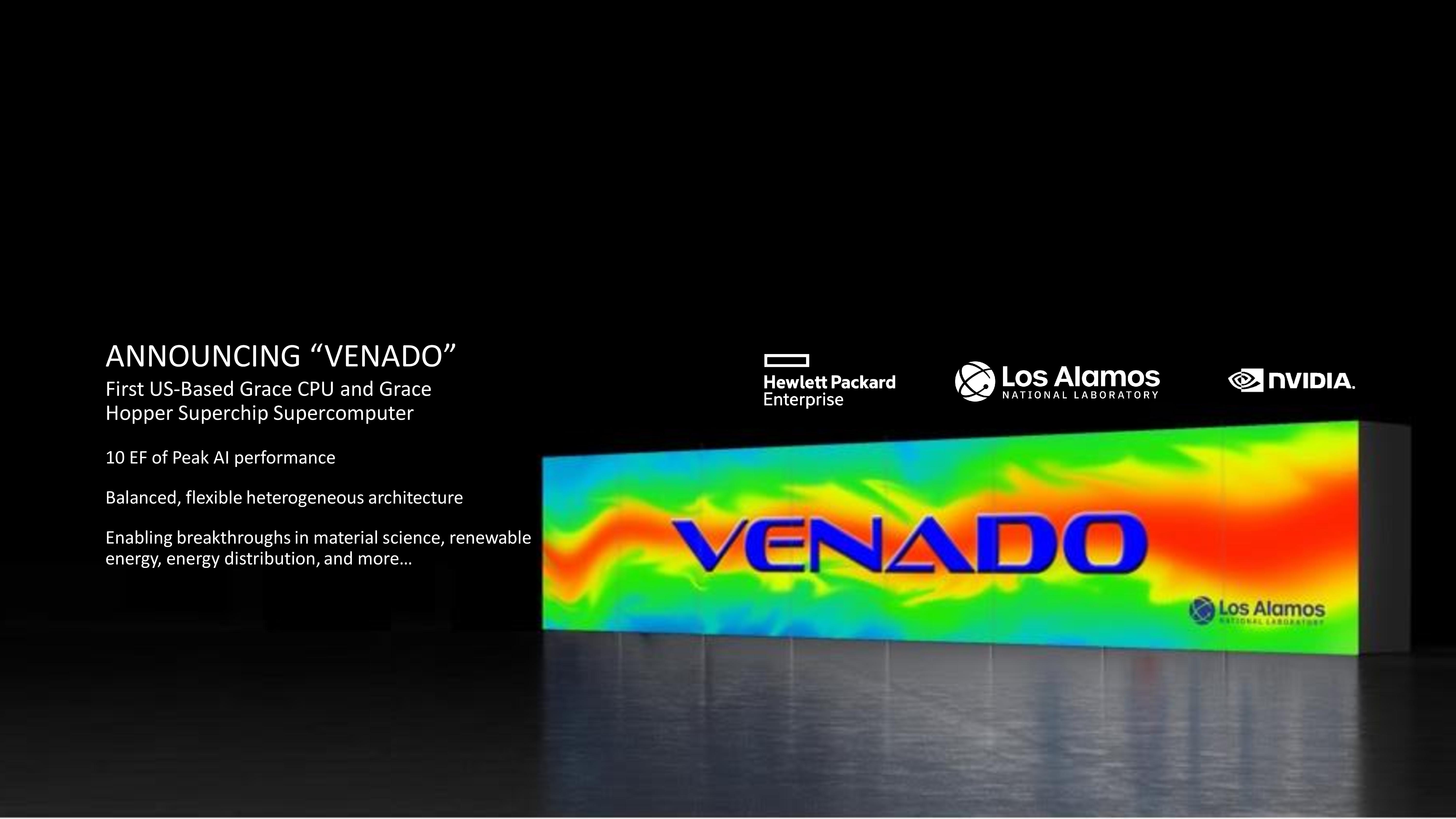

Nvidia's expansion into designing and producing Arm-based CPUs was quite the shocking revelation when the company announced it last year, and adding Hopper GPUs to the design upped the ante. Nvidia has yet to ship the silicon, but those efforts are already paying off. The company announced that its Grace CPU Superchips and Grace Hopper Superchips will power two future supercomputers. The Department of Energy's (DOE) Los Alamos National Laboratory will deploy the flagship Grace-based Venado supercomputer, which will deliver up to ten 'AI ExaFlops' of performance.

The Swiss National Supercomputing Centre's (CSCS) existing Alps supercomputer, rated at 20 AI ExaFlops, will also deploy Grace CPU Superchips in HPE Cray EX cabinets. Alps is already operational as a general-purpose machine for the research community.

There is a caveat, though: 'AI ExaFlops' are measured with lower-precision math (FP32 or lower, like INT8) than the FP64 used for the official rankings, and we have yet to receive confirmation of the data type used for these projections. As such, it is unclear whether Venado will deliver an ExaFlop of FP64 performance, as Frontier, the first true Exascale-class supercomputer, does. Notably, Frontier has achieved a 6.88 HPL-AI ExaFlops result, and it will be interesting to see whether the Nvidia-powered systems can match or exceed that benchmark.

Nvidia's Grace supercomputer announcements come hot on the heels of the company's news that six major OEMs will ship systems based on its CGX, OVX, and HGX reference designs in the first half of 2023, springboarding the company into the broader AI server market.

Nvidia and the Los Alamos National Laboratory (LANL) aren't sharing many details about Venado yet, but we do know that it will come with a mixture of the dual-CPU 144-core Grace CPU Superchips and the single CPU + Hopper GPU combo that comes in the form of the Grace Hopper Superchip. This will be the first US-based Grace supercomputer.

As a quick reminder, Nvidia's Grace CPU Superchip is the company's first CPU-only Arm chip designed for the data center, and it comes as two chips on one motherboard, while the Grace Hopper Superchip combines a Hopper GPU and a Grace CPU on the same board. In addition, the Neoverse-based CPUs support the Armv9 instruction set, and both designs fuse their two chips together with Nvidia's newly branded NVLink-C2C interconnect tech.

Overall, Nvidia claims the Grace CPU Superchip will be the fastest processor on the market for a wide range of applications, like hyperscale computing, data analytics, and scientific computing, when it ships in early 2023.

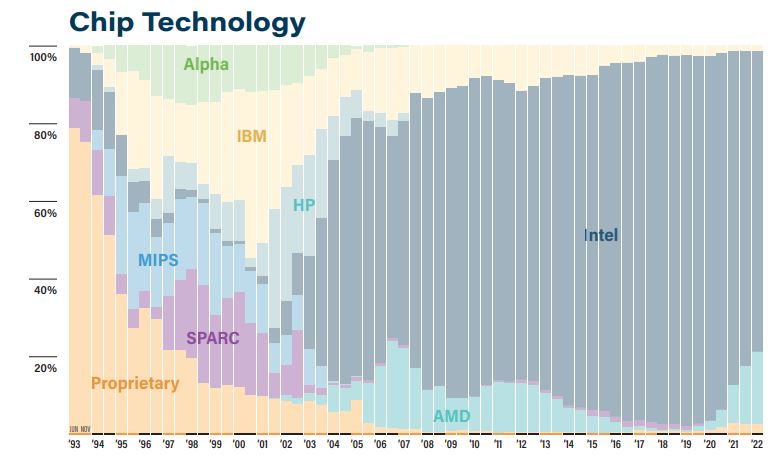

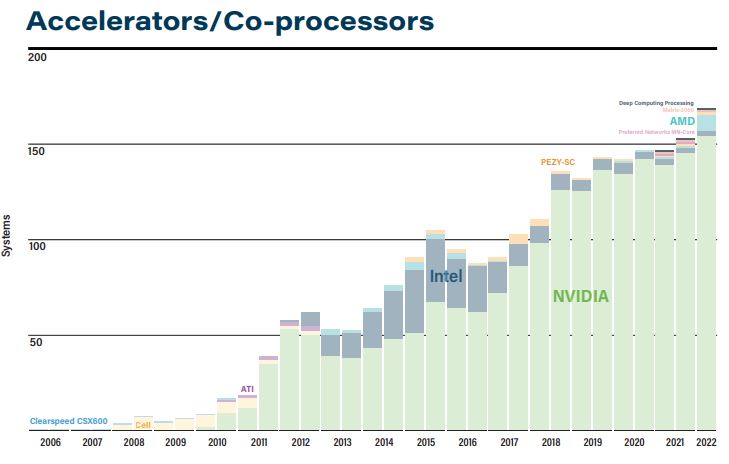

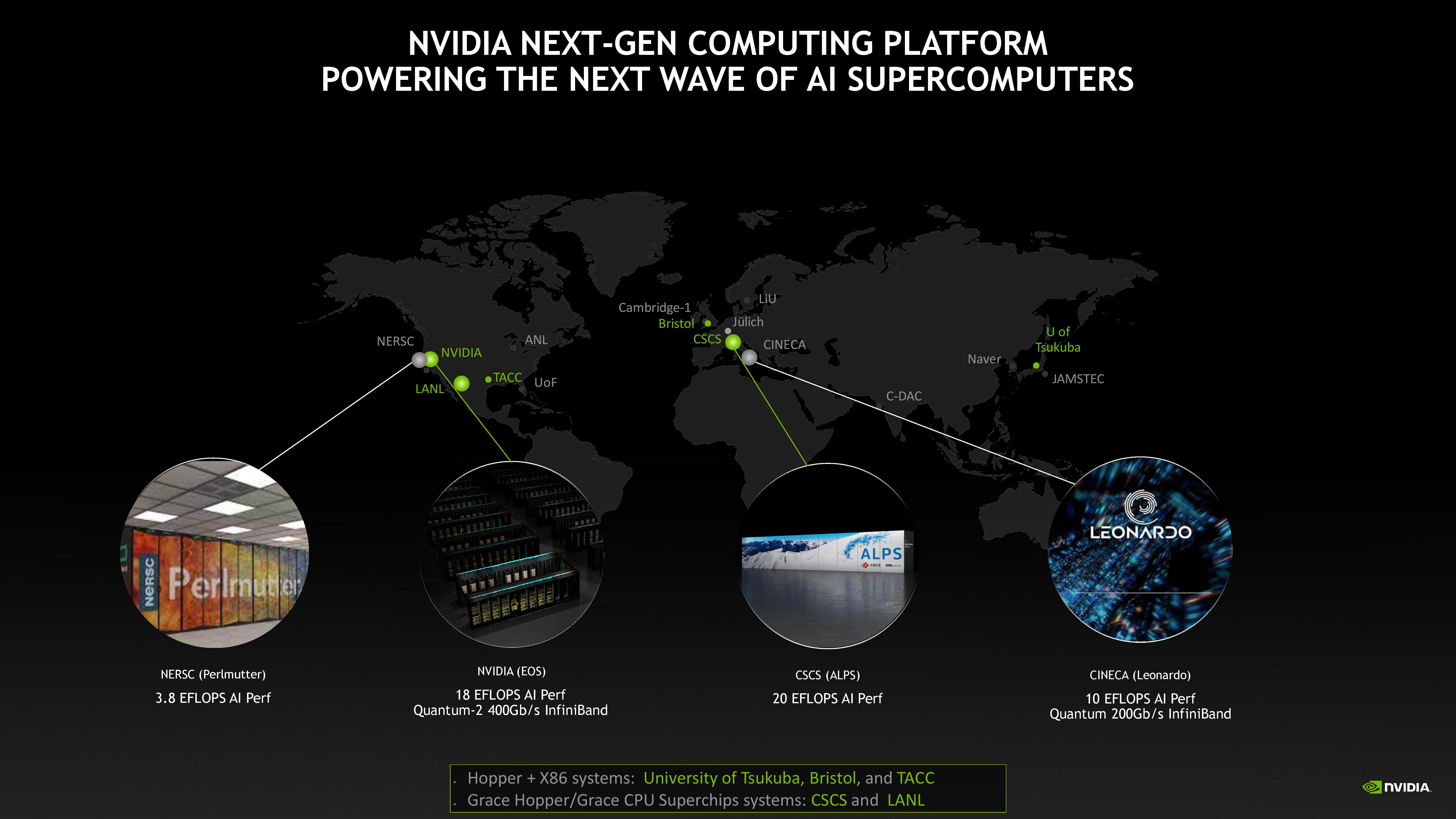

As you can see in the album above, Nvidia GPUs still serve as the go-to accelerator for supercomputers and HPC, but Intel and AMD dominate the CPU landscape with x86 as IBM recedes. Naturally, Nvidia wants to change that with its Arm-based Grace CPUs. However, it's important to note that Nvidia's focus of late has been on systems that tout tremendous AI computing power, meaning performance in lower-precision and mixed-precision numerical formats, not the standard FP64 used in traditional HPC and supercomputing. As such, Nvidia touts its performance in 'AI ExaFlops,' and the album above shows a roster of supercomputers that Nvidia says already meet this criterion.

The two Grace-equipped supercomputers will join a plethora of other Nvidia GPU-powered supercomputers, including the 3.8 AI ExaFlops Perlmutter system at NERSC, Nvidia's own Eos with 18 AI ExaFlops, and the 10 AI ExaFlops Leonardo supercomputer at CINECA.

Nvidia now has multiple publicly announced Grace Superchip-powered supercomputers in the works. We expect that number to grow rapidly as the company moves closer to shipping the Grace Superchip silicon in early 2023.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.