Nvidia's Arm-Powered Grace CPU Debuts, Claims 10X More Performance Than x86 Servers in AI, HPC

Nvidia comes for the data center CPU market

Nvidia introduced its Arm-based Grace CPU architecture, which it says delivers 10X more performance than today's fastest servers in AI and HPC workloads. The new chips will power two new AI supercomputers and are built on unspecified "next-generation" Arm Neoverse CPU cores paired with LPDDR5x memory that delivers 500 GBps of throughput, along with a 900 GBps NVLink connection to an unspecified GPU for the leading-edge devices.

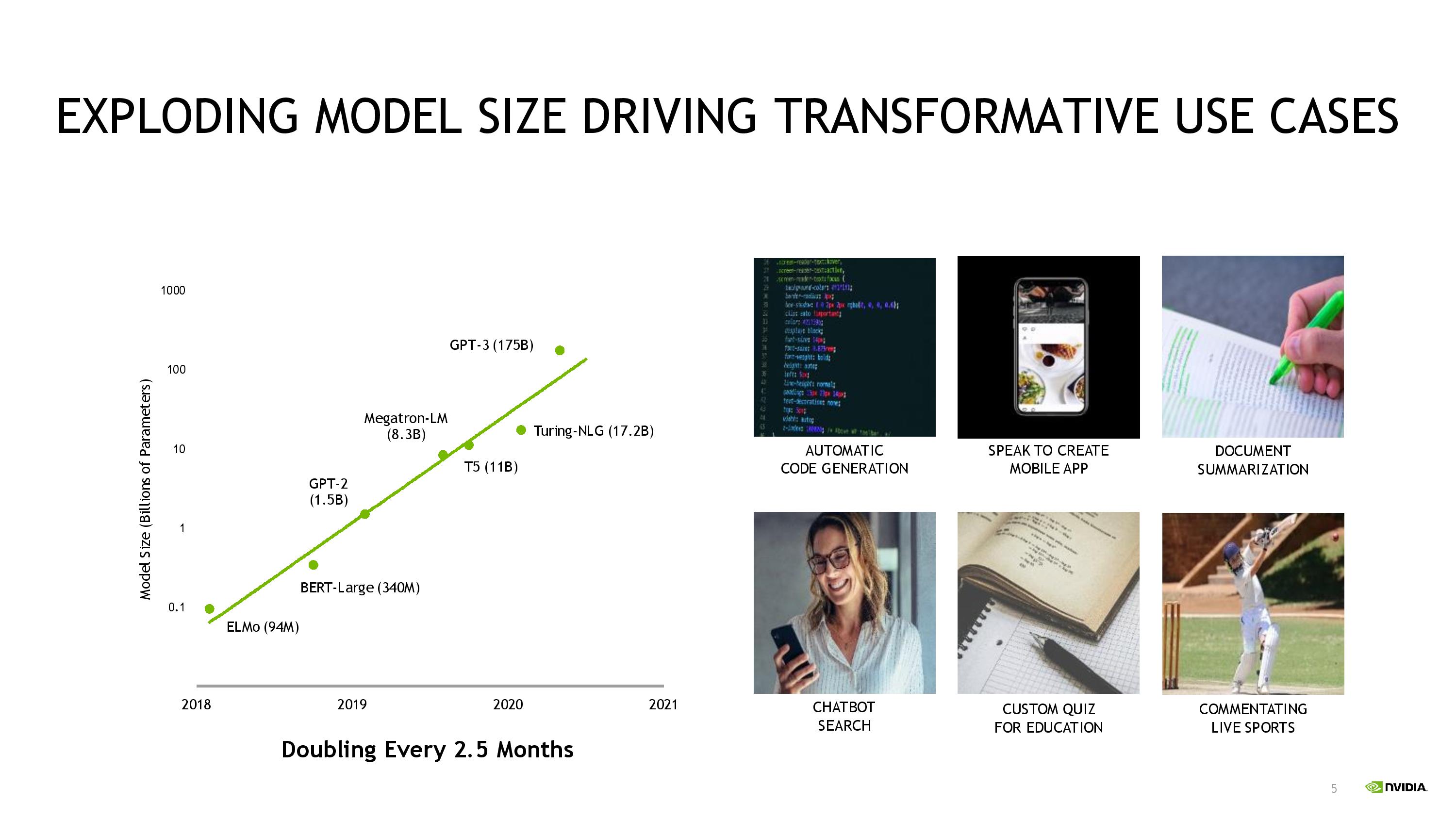

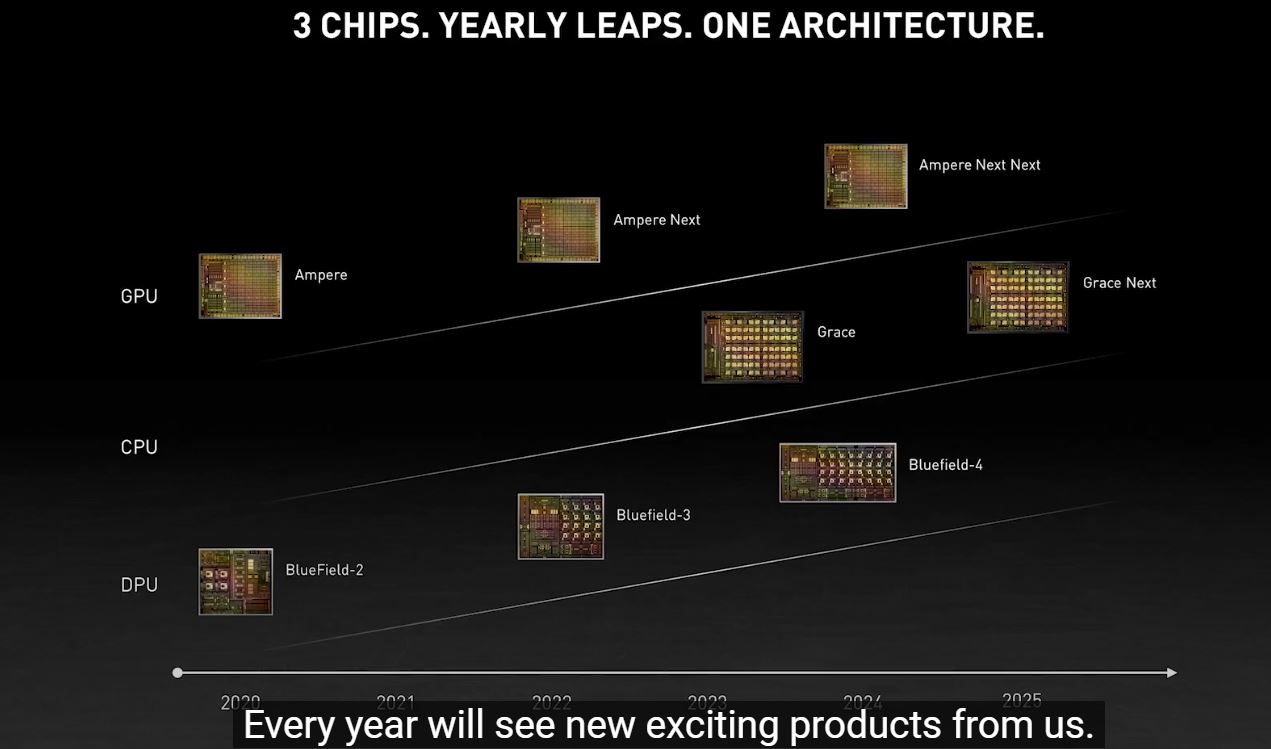

Nvidia also revealed a new roadmap (below) that shows a "Grace Next" CPU coming in 2025, along with a new "Ampere Next Next" GPU that will arrive in mid-2024. Nvidia's pending Arm acquisition, which is still winding its way through global regulatory bodies, has led to plenty of speculation that we could see Nvidia-branded Arm-based CPUs. Nvidia CEO Jensen Huang had previously confirmed that was a distinct possibility. The first instantiation of the Grace CPU architecture doesn't come as a general-purpose design in the socketed form factor we're accustomed to (instead, the chips come mounted on a motherboard with a GPU), but it is clear that Nvidia is serious about deploying its own Arm-based data center CPUs.

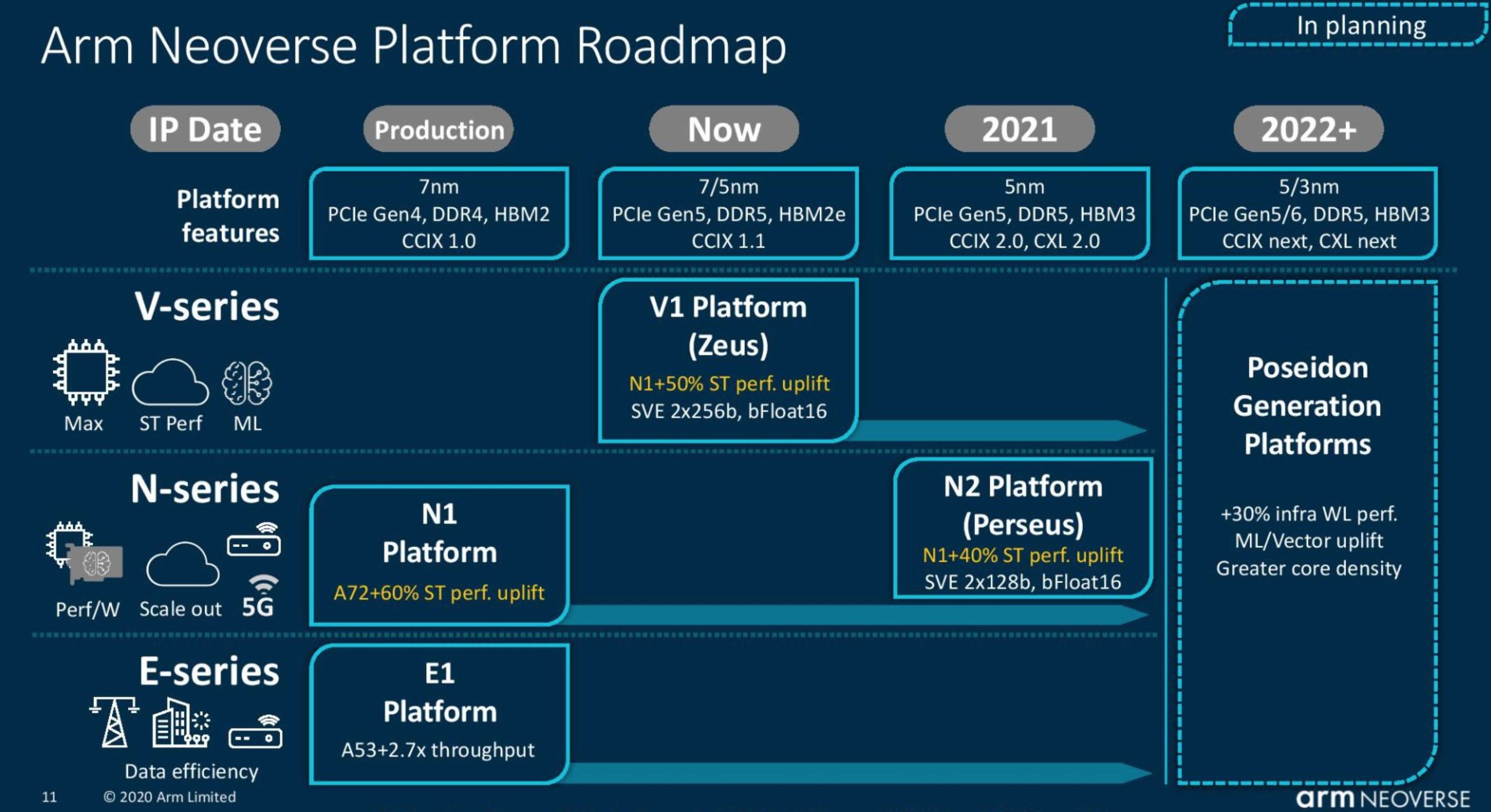

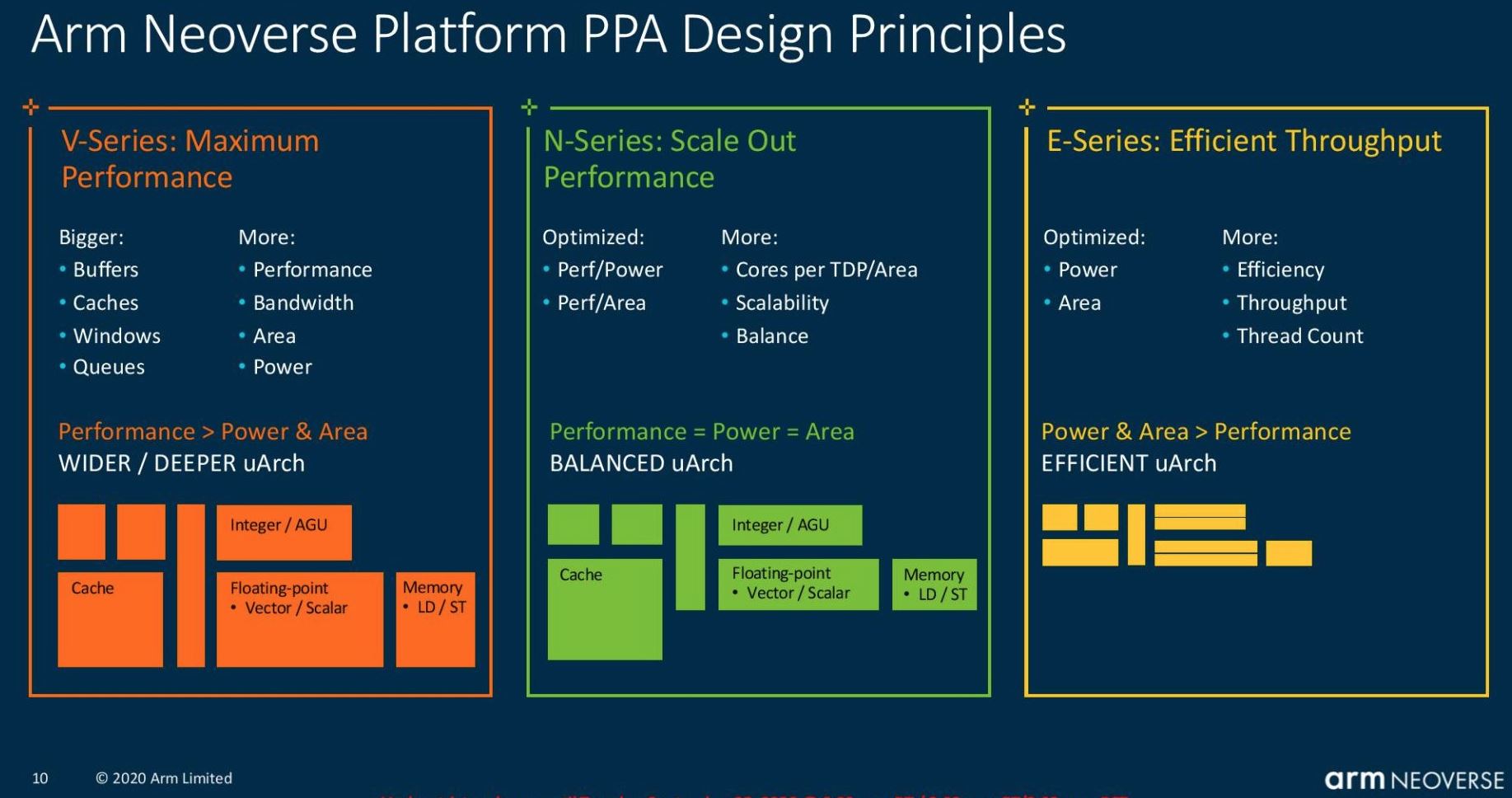

Nvidia hasn't shared core counts or frequency information yet, which isn't entirely surprising given that the Grace CPUs won't come to market until early 2023. The company did specify that these are next-generation Arm Neoverse cores. Given what we know about Arm's current public roadmap (slides below), these are likely the V1 Platform 'Zeus' cores that are optimized for maximum performance at the cost of power and die area.

Chips based on the Zeus cores could come in either 7nm or 5nm flavors and offer a 50% increase in IPC over the current Arm N1 cores. The Arm V1 platform supports all the latest high-end tech, like PCIe 5.0, DDR5, and either HBM2e or HBM3, along with the CCIX 1.1 interconnect. It appears that, at least for now, Nvidia is utilizing its own NVLink instead of CCIX to connect its CPU and GPU.

Nvidia projects that its Grace CPU will score above 300 in the SPECrate_2017_int_base benchmark. Nvidia claims that, with eight GPUs in a single DGX system, the system would scale linearly to a SPECrate_2017_int_base score of 2,400. That's impressive compared to today's DGX, which tops out at 450.

AMD's EPYC Milan chips, the current performance leader in the data center, have posted SPEC results ranging from 382 to 424, putting a single Grace CPU more on par with AMD's previous-gen 64-core Rome chips. Given Nvidia's '10X' performance claims relative to existing servers, it appears the company is referring to GPU-driven workloads.
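The SPEC figures quoted above can be sanity-checked with a few lines of arithmetic. The sketch below is only illustrative: it takes 300 as the floor of Nvidia's "300+" projection, and it assumes one Grace CPU per GPU in an eight-GPU DGX, which the article does not state outright. All constant and function names are hypothetical.

```python
# Figures quoted in the article; 300 is the floor of Nvidia's "300+" projection.
GRACE_SPECRATE_INT = 300
CPUS_PER_DGX = 8            # assumption: one Grace CPU per GPU in an eight-GPU DGX
TODAYS_DGX_SCORE = 450
MILAN_SCORES = (382, 424)   # range of EPYC Milan results cited in the article


def linear_scaling(per_cpu_score: float, cpu_count: int) -> float:
    """Aggregate score if throughput scales perfectly linearly with CPU count."""
    return per_cpu_score * cpu_count


grace_dgx = linear_scaling(GRACE_SPECRATE_INT, CPUS_PER_DGX)
uplift_vs_today = grace_dgx / TODAYS_DGX_SCORE
grace_vs_milan = [GRACE_SPECRATE_INT / score for score in MILAN_SCORES]

print(f"Projected Grace DGX score: {grace_dgx}")
print(f"Uplift over today's DGX: {uplift_vs_today:.1f}x")
print("Single Grace CPU relative to Milan: "
      + ", ".join(f"{ratio:.0%}" for ratio in grace_vs_milan))
```

Under these assumptions the system-level CPU uplift lands at a little over 5X, well short of 10X, which is consistent with the reading that the headline claim refers to GPU-driven workloads.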

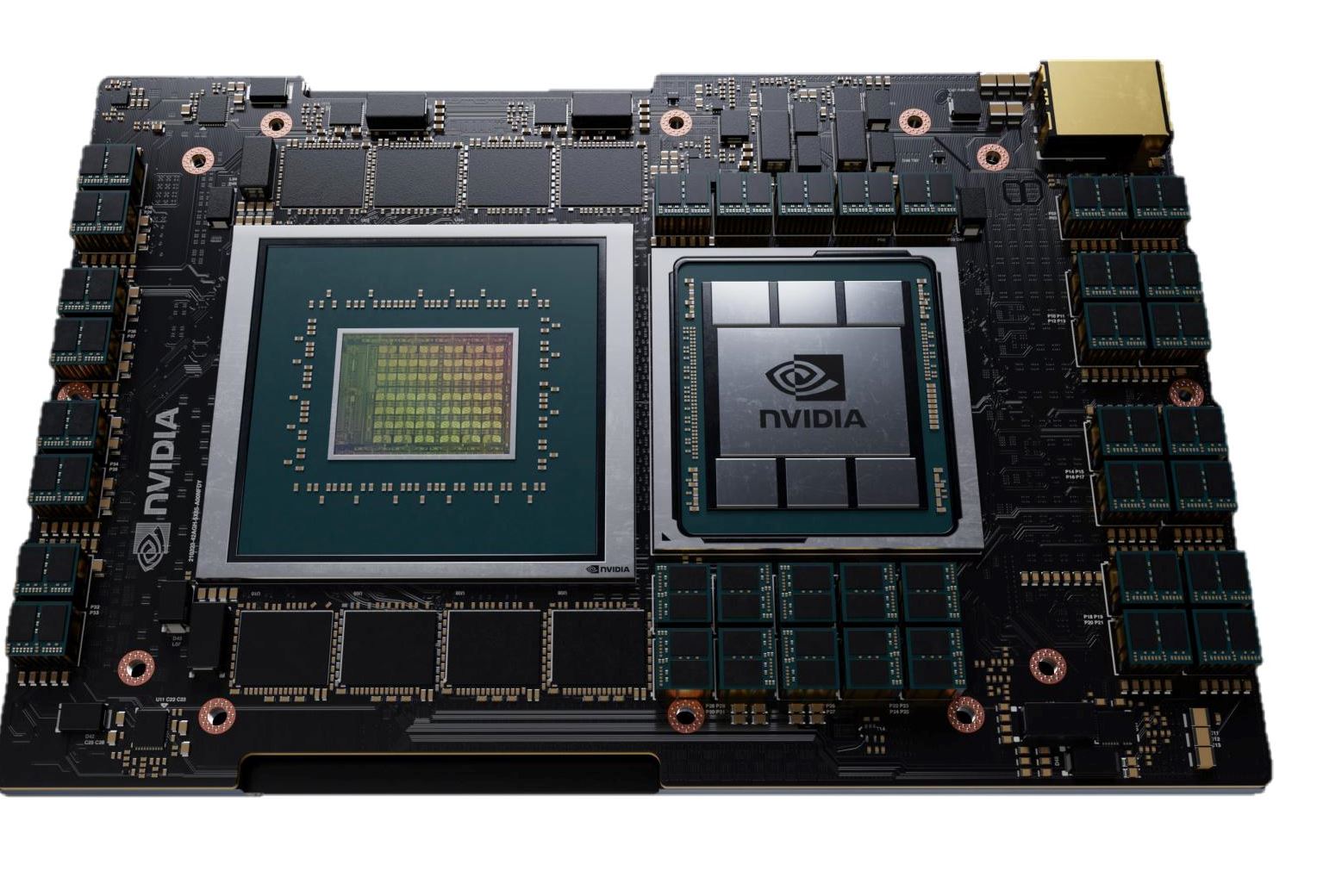

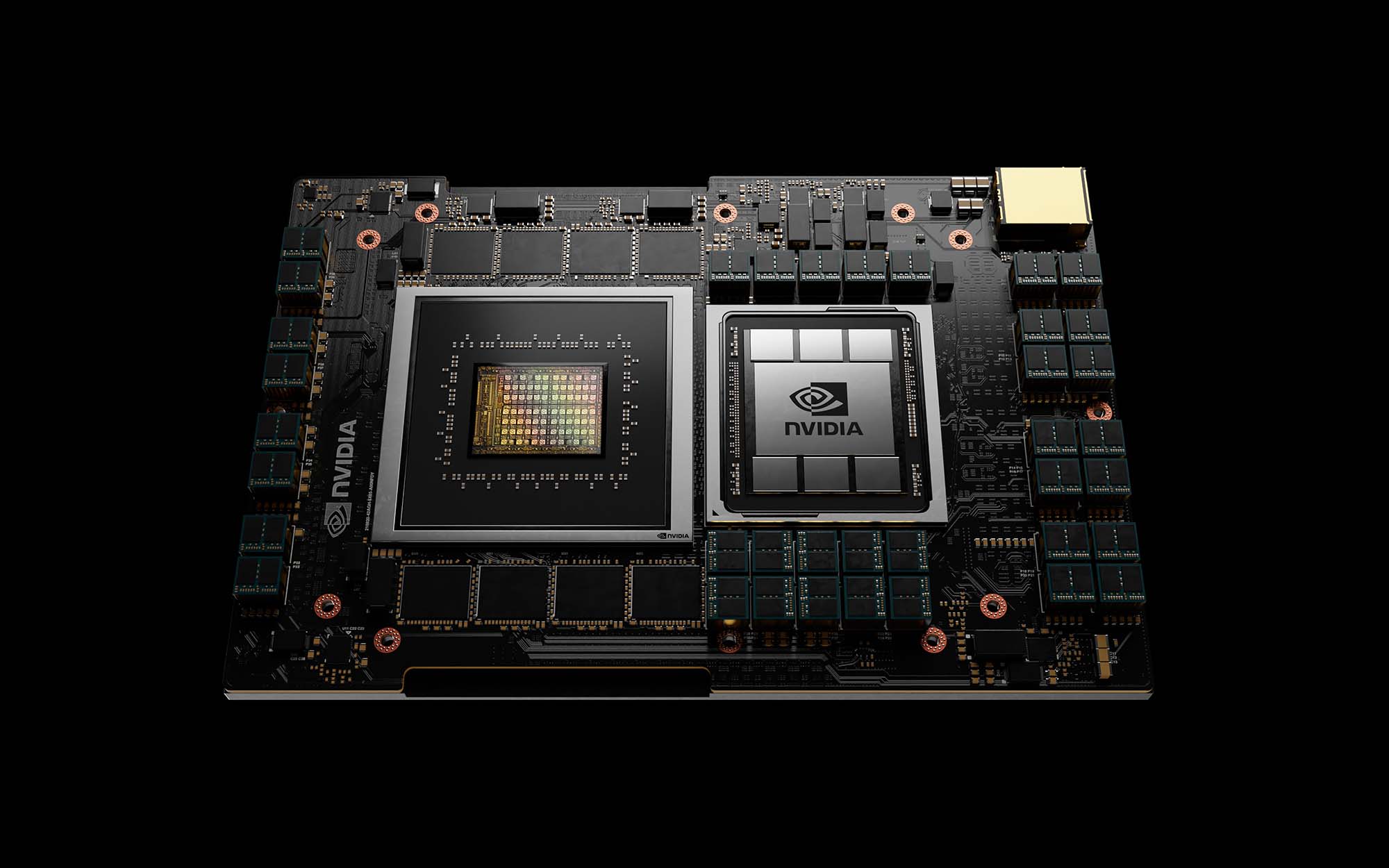

The first versions of the Nvidia Grace CPU will come mounted as a BGA package (meaning it won't be a socketed part like traditional x86 server chips) and come flanked by what appear to be eight packages of LPDDR5x memory. Nvidia says that LPDDR5x ECC memory provides twice the bandwidth and 10X better power efficiency over standard DDR4 memory subsystems.

Nvidia's next-generation NVLink, about which it hasn't shared many details yet, connects the chip to the adjacent GPU with a 900 GBps transfer rate (14X faster), outstripping the data transfer rates that are traditionally available from a CPU to a GPU by 30X. The company also claims the new design can transfer data between CPUs at twice the rate of standard designs, breaking the shackles of suboptimal data transfer rates between the various compute elements, like CPUs, GPUs, and system memory.

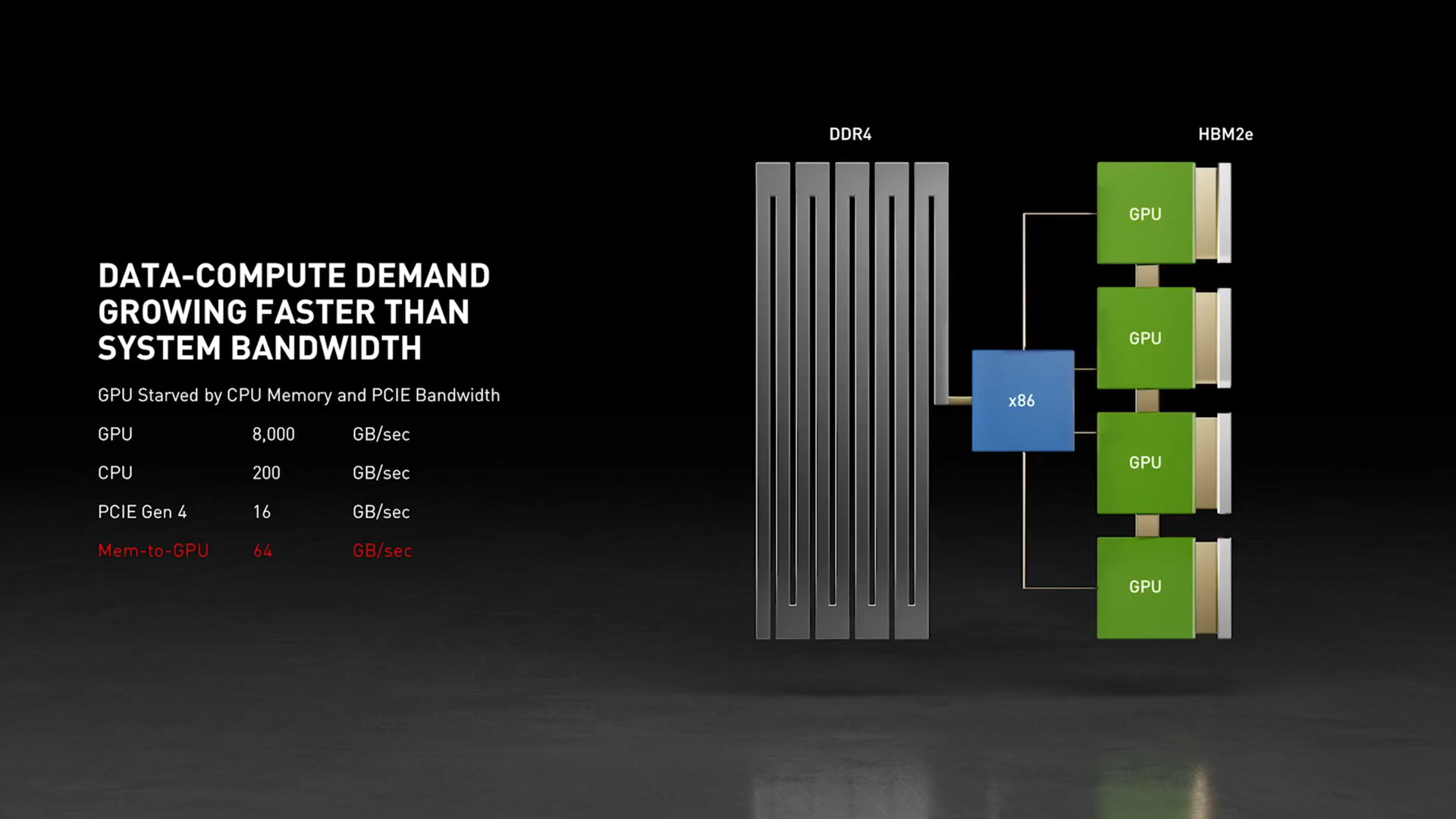

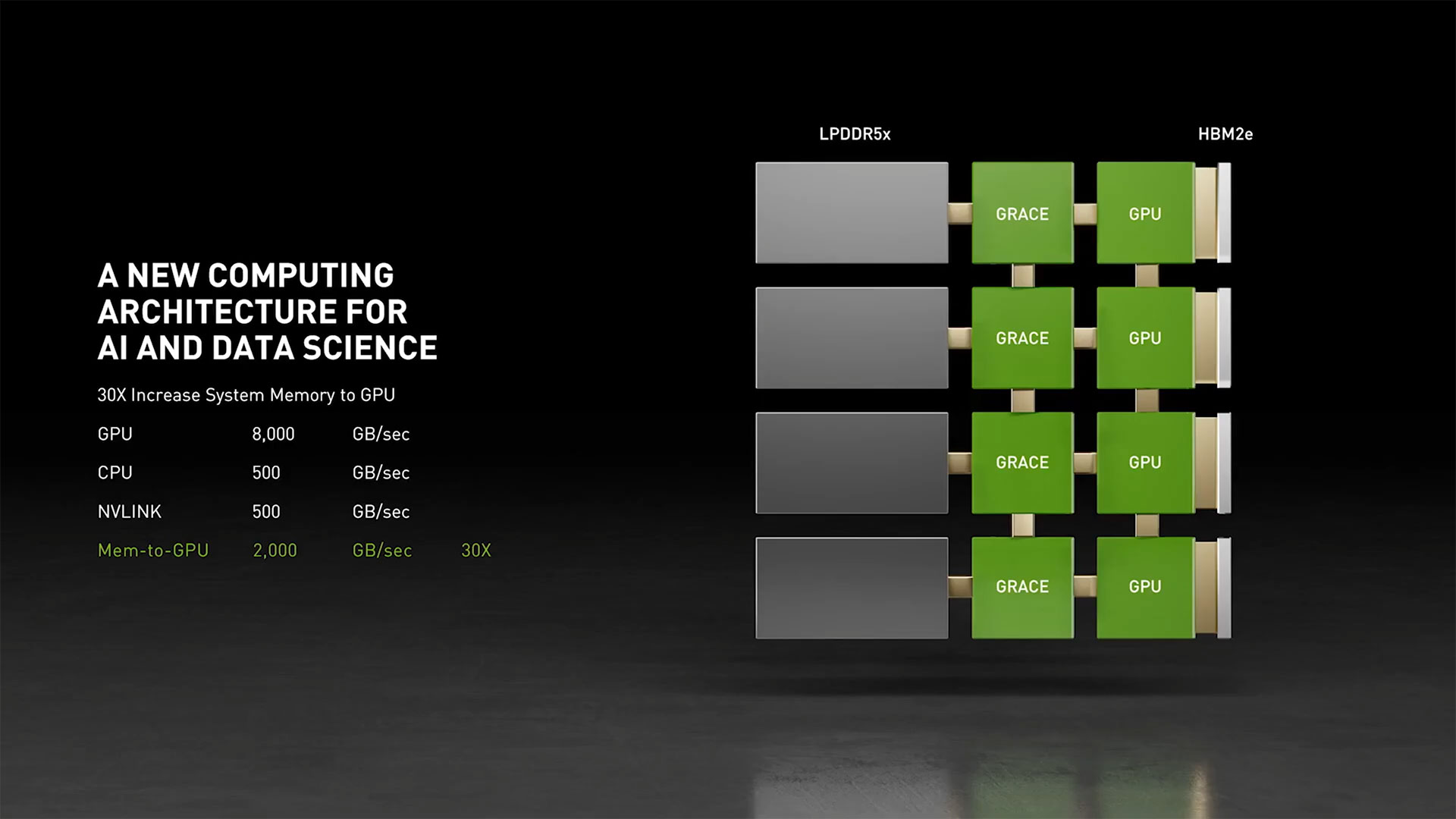

The graphics above outline Nvidia's primary problem with feeding its GPUs with enough bandwidth in a modern system. The first slide shows the bandwidth limitation of 64 GBps from memory to GPU in an x86 CPU-driven system, with the limitations of PCIe throughput (16 GBps) exacerbating the low throughput and ultimately limiting how much system memory the GPU can utilize fully. The second slide shows throughput with the Grace CPUs: With four NVLinks, throughput is boosted to 500 GBps, while memory-to-GPU throughput increases 30X to 2,000 GBps.
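The ratios in the two slides are easy to reproduce. The sketch below uses only the bandwidth figures quoted in this article. Pairing the 900 GBps NVLink rate with the 64 GBps x86 baseline is an assumption (it happens to yield roughly 14X, but the article does not state which baseline that figure uses), and the 1,000 GB payload is a hypothetical value chosen purely for illustration.

```python
# Bandwidth figures quoted in the article, all in GBps.
X86_MEMORY_TO_GPU = 64       # memory-to-GPU limit in an x86 CPU-driven system
GRACE_MEMORY_TO_GPU = 2000   # memory-to-GPU throughput with Grace CPUs
GRACE_NVLINK = 900           # CPU-to-GPU NVLink transfer rate


def speedup(new_gbps: float, old_gbps: float) -> float:
    """How many times faster the new link is than the old one."""
    return new_gbps / old_gbps


def transfer_seconds(size_gb: float, gbps: float) -> float:
    """Idealized transfer time, ignoring latency and protocol overhead."""
    return size_gb / gbps


memory_speedup = speedup(GRACE_MEMORY_TO_GPU, X86_MEMORY_TO_GPU)
# Assumption: the "14X faster" claim is measured against the same 64 GBps baseline.
nvlink_speedup = speedup(GRACE_NVLINK, X86_MEMORY_TO_GPU)

PAYLOAD_GB = 1000  # hypothetical dataset size, not from the article

print(f"Memory-to-GPU speedup: {memory_speedup:.2f}x")
print(f"NVLink vs. 64 GBps baseline: {nvlink_speedup:.2f}x")
print(f"x86 system:   {transfer_seconds(PAYLOAD_GB, X86_MEMORY_TO_GPU):.1f} s")
print(f"Grace system: {transfer_seconds(PAYLOAD_GB, GRACE_MEMORY_TO_GPU):.1f} s")
```

The memory-to-GPU ratio works out to just over 31, which Nvidia rounds to 30X.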

The NVLink implementation also provides cache coherency, which brings the system and GPU memory (LPDDR5x and HBM) under the same memory address space to simplify programming. Cache coherency also reduces data movement between the CPU and GPU, thus increasing both performance and efficiency. This addition allows Nvidia to offer similar functionality to AMD's pairing of EPYC CPUs with Radeon Instinct GPUs in the Frontier exascale supercomputer, and also Intel's combination of the Ponte Vecchio graphics cards with the Sapphire Rapids CPUs in the Aurora supercomputer, another world-leading exascale supercomputer.

Nvidia says this combination of features will reduce the amount of time it takes to train GPT-3, the world's largest natural language AI model, with the 2.8 AI-exaflops Selene (the world's current fastest AI supercomputer) from fourteen days to two.

Nvidia also revealed a new roadmap that it says will dictate its pace of updates over the next several years, with GPUs, CPUs (Arm and x86), and DPUs all co-existing and evolving on a steady cadence. Huang said the company would advance each architecture every two years, with x86 advancing one year and Arm the next, plus a possible "kicker" generation in between that will likely consist of smaller advances in process technology rather than architecture.

Notably, Nvidia named the Grace CPU architecture after Grace Hopper, a famous computer scientist. Nvidia is also rumored to be working on its chiplet-based Hopper GPUs, which would make for an interesting pairing of CPU and GPU codenames that we could see more of in the future.

The US Department of Energy's Los Alamos National Laboratory will build a Grace-powered supercomputer. This system will be built by HPE (the division formerly known as Cray) and will come online in 2023, but the DOE hasn't shared many details about the new system.

The Grace CPU will also power what Nvidia touts as the world's most powerful AI-capable supercomputer, the Alps system that will be deployed at the Swiss National Supercomputing Centre (CSCS). When it comes online in 2023, Alps will primarily serve European scientists and researchers for workloads such as climate, molecular dynamics, and computational fluid dynamics.

Given Nvidia's interest in purchasing Arm, it's natural to expect the company to begin broadening its relationships with existing Arm customers. To that end, Nvidia will also bring support for its GPUs to Amazon Web Services' powerful Graviton2 Arm chips, which is a key addition as AWS adoption of the Arm architecture has led to broader uptake for cloud workloads. Nvidia's collaboration will enable streaming games from its GPUs to Android gaming, along with enabling AI inference workloads, all from the AWS cloud. Those efforts will come to fruition in the second half of 2021.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


rluker5 I read "When tightly coupled with NVIDIA GPUs, a Grace CPU-based system will deliver 10x faster performance than today’s state-of-the-art NVIDIA DGX-based systems, which run on x86 CPUs." which would likely be gpu based and communication performance improvements. Not quite the same as future arm is 10x current x86.

Jim90

rluker5 said: I read "When tightly coupled with NVIDIA GPUs, a Grace CPU-based system will deliver 10x faster performance than today’s state-of-the-art NVIDIA DGX-based systems, which run on x86 CPUs." which would likely be gpu based and communication performance improvements. Not quite the same as future arm is 10x current x86.

Exactly. Beware of headlines and marketing. "10x current x86" is about as bullcrap a headline as you can get.

funguseater This CPU didn't "debut" today, it won't reach market for 2 more years. Until then it's just vaporware.

Houndsteeth Early 2023.

AMD and Intel (as well as others) have some time to revise their roadmaps to take this new player into account.

I think Intel has been way too complacent sitting on their laurels.

If you think only AMD was offering competition, now we can see both Apple and NVidia taking shots.

For anything that doesn't require outright x86/AMD64 compatibility, these new processors offer a very competitive option.

And (at least) the Apple Silicon has proven to be competitive even taking x86/AMD64 compatibility into account, if they would ever consider licensing or selling processing units outside of their own machines.

spongiemaster

rluker5 said: I read "When tightly coupled with NVIDIA GPUs, a Grace CPU-based system will deliver 10x faster performance than today’s state-of-the-art NVIDIA DGX-based systems, which run on x86 CPUs." which would likely be gpu based and communication performance improvements. Not quite the same as future arm is 10x current x86.

The actual statement is:

"Nvidia introduced its Arm-based Grace CPU architecture that it says delivers 10X more performance than today's fastest servers in AI and HPC workloads"

That last part, which is nowhere in your statement, is the important part. They're not claiming 10x across the board. The current Epyc CPUs in DGX boxes have no AI-specific acceleration. It's not really that hard to believe that two years from now Nvidia could release a purpose-built customized CPU that beats today's general-purpose CPUs by 10x in specific workloads.

VforV

spongiemaster said: The actual statement is:

"Nvidia introduced its Arm-based Grace CPU architecture that it says delivers 10X more performance than today's fastest servers in AI and HPC workloads"

That last part, which is nowhere in your statement, is the important part. They're not claiming 10x across the board. The current Epyc CPUs in DGX boxes have no AI-specific acceleration. It's not really that hard to believe that two years from now Nvidia could release a purpose-built customized CPU that beats today's general-purpose CPUs by 10x in specific workloads.

Exactly, specific AI and HPC workloads. But some people don't know how to read, apparently...

LordVile

enewmen said: Will Nvidia make a RISC-V M1 killer (before someone else does)?

Nah, Apple can do it because they control the OS; others have to deal with Windows, whose Arm implementation is horrendous. Also Qualcomm have been trying to catch Apple for years and haven’t managed it.

Drazen Yeah, NVidia and marketing crap.

Do you remember Tegra1 days and how NVidia claimed Tegra1 is faster than Intel Core CPUs?

Of course it will be 1000000 times faster if NVidia runs code written in C++ and x86 Python. But do not tell anyone this unimportant detail :p