Owlchemy Labs Innovates New Mixed Reality VR Techniques: Depth Sensing Camera, In-Game Compositing


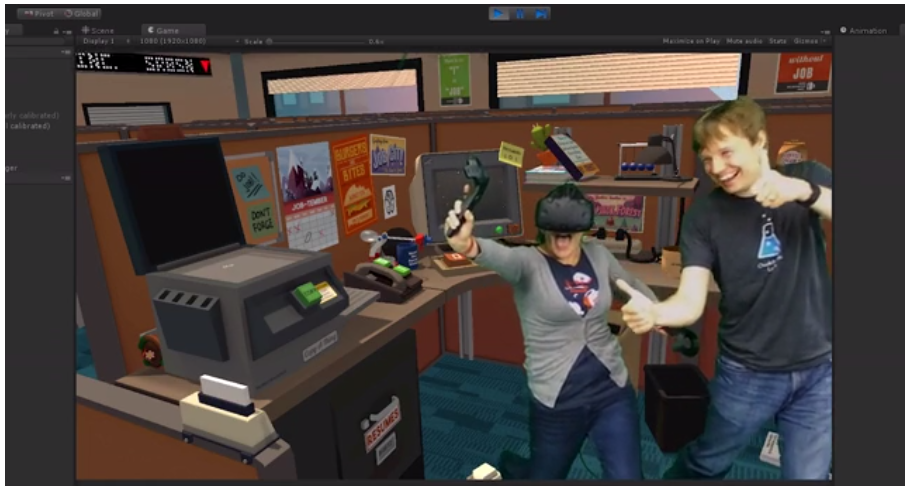

Owlchemy Labs (maker of Job Simulator, one of the first Vive titles ever shown to the press) is one of the pioneering developers in the VR industry, and it's once again proving itself by innovating a new way to shoot in mixed reality.

Before the HTC Vive launched, Owlchemy Labs released a mixed reality trailer for Job Simulator as part of its marketing campaign. Soon after, Valve got involved and created tools to allow it to film mixed reality video, which it used for the Vive launch commercial. Valve later released instructions to the developer community that explained how to enable mixed reality rendering in Unity games.

Since then, developers, streamers, YouTubers and HTC have embraced mixed reality VR, but the process is cumbersome, to say the least. Today it involves rendering foreground and background objects in separate windows, then compositing them with the camera footage sandwiched in between.
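The layered approach described above can be sketched in a few lines of Python. This is only an illustration of the general idea, not Valve's or any tool's actual pipeline: the function names `over` and `composite_layers` are hypothetical, images are plain nested lists of RGBA tuples, and the camera layer is assumed to already carry an alpha mask (for example, from a green screen key).

```python
# Hypothetical sketch of layered ("sandwich") compositing.
# A pixel is (r, g, b, a), with every channel in the range 0.0..1.0.

def over(top, bottom):
    """Alpha-blend one RGBA pixel over another (the standard 'over' operator)."""
    tr, tg, tb, ta = top
    br, bg, bb, ba = bottom
    out_a = ta + ba * (1.0 - ta)
    if out_a == 0.0:
        # Both pixels are fully transparent.
        return (0.0, 0.0, 0.0, 0.0)

    def blend(t, b):
        return (t * ta + b * ba * (1.0 - ta)) / out_a

    return (blend(tr, br), blend(tg, bg), blend(tb, bb), out_a)


def composite_layers(background, camera, foreground):
    """Stack three equally sized images: foreground over camera over background."""
    return [
        [over(f, over(c, b)) for b, c, f in zip(b_row, c_row, f_row)]
        for b_row, c_row, f_row in zip(background, camera, foreground)
    ]
```

Because each layer is sorted as a whole, the player can only ever appear entirely in front of the background layer and entirely behind the foreground layer, which is the limitation the article goes on to discuss.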

The results from the current mixed reality method are impressive, but Owlchemy thought it could do better. And so it did. Owlchemy Labs built a custom shader and custom plugin that allow the developer to record video with a depth-sensing camera. In other words, Owlchemy is bringing three-dimensional video footage directly into the game through Unity and compositing it in-game. It doesn’t rely on third-party software to composite the scene.

"'Job Simulator' presents a huge problem for mixed reality because the close-quarter environments surround the player in all directions and players can interact with the entire world," said the Owlchemy Labs team. "Essentially, 'Job Simulator' is the worst case scenario for mixed reality, as we can’t get away with simple foreground/background sorting where the player is essentially static, and all the action happens in front of them (a la 'Space Pirate Trainer')."

Owlchemy Labs figured out a way to bring 1080p video with depth data at 30fps into the game, composite it together with the game with accurate depth compensation, and render the game in stereoscopic VR inside the HMD without dipping below 90fps—all on one PC.

By importing the depth camera data into Unity, the player becomes a Unity asset, just like any other 3D scan or render. The player’s scan can cast a shadow in the game environment, and it can react to dynamic lighting effects. It effectively becomes part of the game environment. Owlchemy can use this information to calculate per-pixel depth, which allows you to reach behind any object in the game, not just a pre-established foreground barrier.
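To make the per-pixel idea concrete, here is a minimal Python sketch of depth-based compositing. It is an assumption-laden illustration, not Owlchemy's shader: the article does not publish the implementation, the function name `composite_by_depth` is hypothetical, and the sketch assumes the camera and game images are already aligned to the same viewpoint, with depth in the same units and `None` marking pixels where the depth camera returned no reading.

```python
# Hypothetical sketch of per-pixel depth compositing.
# Images are nested lists; depth values are distances from the camera.

def composite_by_depth(game_color, game_depth, cam_color, cam_depth):
    """For every pixel, keep whichever source is closer to the camera."""
    output = []
    for gc_row, gd_row, cc_row, cd_row in zip(
        game_color, game_depth, cam_color, cam_depth
    ):
        row = []
        for gc, gd, cc, cd in zip(gc_row, gd_row, cc_row, cd_row):
            # Fall back to the game pixel when the camera has no depth reading.
            camera_is_closer = cd is not None and cd < gd
            row.append(cc if camera_is_closer else gc)
        output.append(row)
    return output


# Usage: a hand at 0.5 m hides a desk at 1.0 m, but a hand at 2.0 m
# is hidden by that same desk.
frame = composite_by_depth(
    game_color=[["desk", "desk", "wall"]],
    game_depth=[[1.0, 1.0, 3.0]],
    cam_color=[["hand", "hand", "none"]],
    cam_depth=[[0.5, 2.0, None]],
)
print(frame)
```

Unlike fixed foreground and background layers, the decision is made independently for each pixel, so any game object can occlude the player or be occluded by them.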


Owlchemy Labs is still perfecting its depth-based, real-time, in-app mixed reality compositing solution, but the company expects that it could ultimately become a universal solution for mixed reality streaming. It may be a while before we see games from other developers integrate this technology, but we have to assume that Owlchemy Labs is building this tech more for its future content, such as Rick and Morty: Virtual Rick-ality, than for Job Simulator.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.


Sharky36566666: This type of feedback will become a required, must-have feature for AAA multi-user xR experiences. Not to use to see myself, but for the remote users within the same experience to see me experiencing in the experience alongside them.

Jeff Fx, replying to Sharky36566666:

If it was used for that, others would see you with your VR headset on. Scanned avatars with no headset on would be a lot better for that purpose.

This will be most useful for being able to see our own bodies in VR, which will make it much more immersive.