Futuremark's VRMark: First Look

Futuremark, creators of the famous 3DMark graphics benchmarking utility, is hard at work developing a new benchmarking tool designed to measure your system's ability to handle virtual reality head-mounted displays (HMDs). It will also be used by reviewers to measure latency of HMDs. VRMark is still very much under development, but Tom’s Hardware was recently invited to take part in the press preview and was provided some experimental hardware to try out the upcoming benchmark.

Step By Step

Futuremark’s VRMark utility is being developed for release in 2016, but not all of it will be released to the public. You can break VRMark down into several different parts, and your access to the various levels will depend very much on what you do for a living. The reason for this is that much of the testing for VR is actually done with extra equipment.

Take latency, for example. In a virtual reality system, several different stages each add latency. Futuremark said VRMark is able to measure four different latency events:

- Time from physical event to API event (Step 1 to Step 2)

- Time from API event to draw call (Step 2 to Step 3)

- Time from draw call to image on display (Step 3 to Step 4)

- Total latency (time from Step 1 to Step 4)
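As a minimal sketch, the four intervals above can be treated as differences between four timestamps, one per step. The class and field names here are hypothetical and chosen only for illustration; they are not part of VRMark.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class LatencyTimestamps:
    """Timestamps in milliseconds for the four steps (hypothetical names)."""
    physical_event: float    # Step 1: the physical movement happens
    api_event: float         # Step 2: the VR API reports the movement
    draw_call: float         # Step 3: the application issues the draw call
    image_on_display: float  # Step 4: the image appears on the display


def latency_breakdown(t: LatencyTimestamps) -> Dict[str, float]:
    """Return the three stage latencies plus the total, in milliseconds."""
    return {
        "physical_to_api": t.api_event - t.physical_event,
        "api_to_draw": t.draw_call - t.api_event,
        "draw_to_display": t.image_on_display - t.draw_call,
        "total": t.image_on_display - t.physical_event,
    }
```

By construction, the three stage latencies add up to the total, which is why a lab that can only measure some of the stages still gets a useful partial picture.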

The first step, the physical movement, can’t be measured accurately without a device that can consistently perform the same action and do it on command. This sort of test will therefore be limited to hardware manufacturers and other groups that have access to this kind of specialized equipment and a laboratory environment. The second measurement, the time from API event to draw call (Step 2 to Step 3), is taken by software as part of the latency test. It does not require extra hardware.

The test that Tom’s Hardware has been privy to is the third measurement: the time from draw call to image on display (Step 3 to Step 4). Futuremark said this test won’t be available to the general public either, because it also requires some specialized equipment to perform. It will be accessible to members of the press for VR headset reviews, though.

We’ve been asked to not show the hardware that was sent to us and to avoid describing it in detail because it is still subject to changes, but what I can tell you is that it involves an external sensor that takes tens of thousands of samples per second to detect when the display draws the image. It then compares that result against when the benchmark initiated the draw call. This test gives us three results measured in milliseconds: Photon Latency, Photon Persistence and Total Latency.

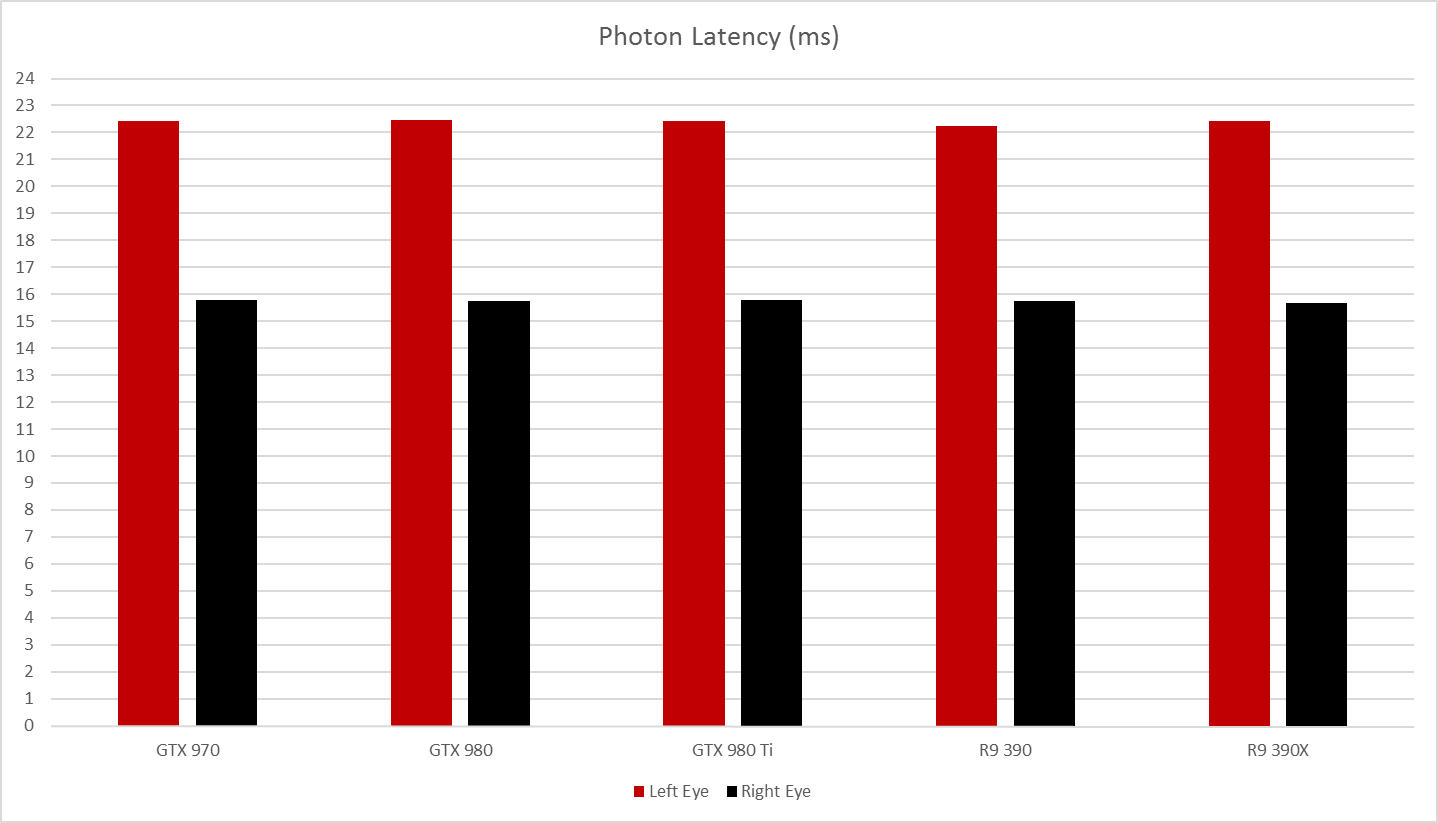

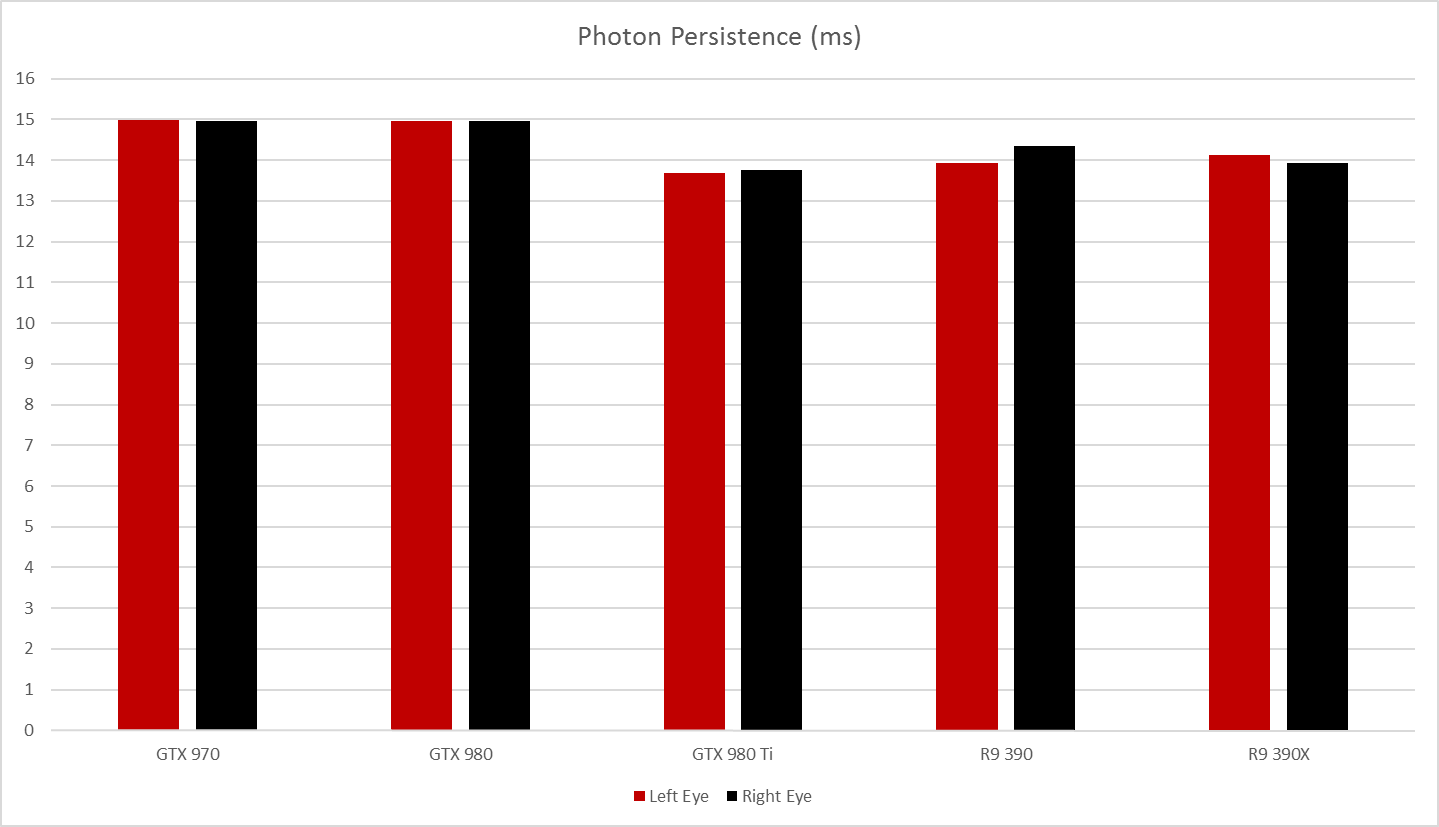

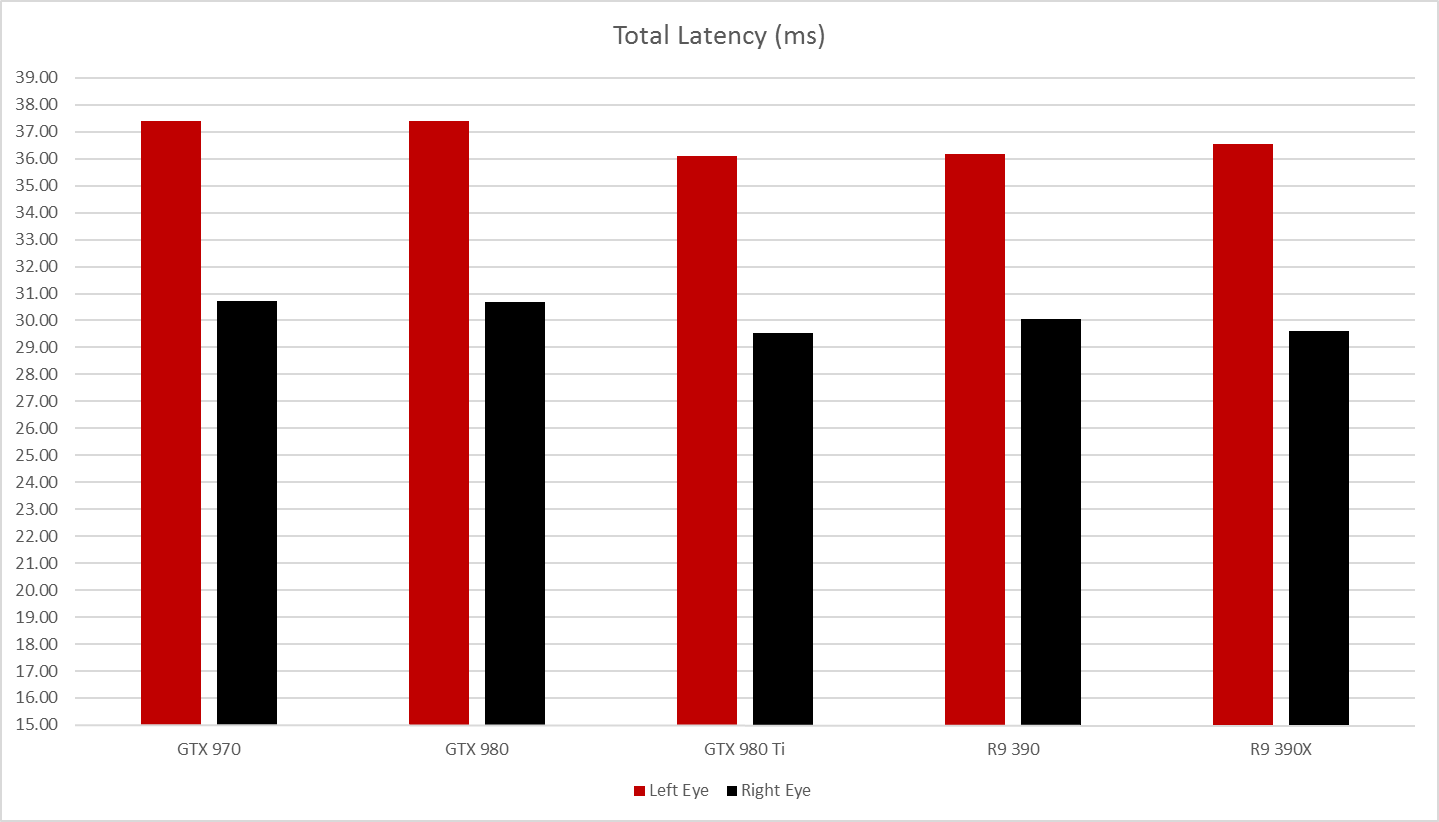

Photon Latency is the time it takes for the screen to react to a draw call. Photon Persistence, also known as ghosting, is the time it takes for the screen to transition from light to dark. VRMark also measures the total latency, which is the time from the physical event to when the image displays on screen.
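To make the two display metrics concrete, here is a rough sketch of how they could be derived from a stream of brightness samples. This is one plausible interpretation, not Futuremark's actual method: the function name, the normalised 0-to-1 brightness scale, and the simple threshold are all assumptions made for illustration.

```python
from typing import Sequence, Tuple


def photon_metrics(samples: Sequence[float], sample_rate_hz: float,
                   draw_call_index: int,
                   threshold: float = 0.5) -> Tuple[float, float]:
    """Return (photon_latency_ms, photon_persistence_ms).

    samples: brightness readings normalised to 0..1 (assumed format).
    draw_call_index: index of the sample taken when the draw call was issued.
    """
    ms_per_sample = 1000.0 / sample_rate_hz

    # First sample at or after the draw call where the display is lit.
    rise = next((i for i in range(draw_call_index, len(samples))
                 if samples[i] >= threshold), None)
    if rise is None:
        raise ValueError("display never lit up after the draw call")

    # First sample after that where the display has gone dark again.
    fall = next((i for i in range(rise, len(samples))
                 if samples[i] < threshold), None)
    if fall is None:
        raise ValueError("display never returned to dark")

    latency_ms = (rise - draw_call_index) * ms_per_sample
    persistence_ms = (fall - rise) * ms_per_sample
    return latency_ms, persistence_ms
```

At tens of thousands of samples per second, each sample covers well under a tenth of a millisecond, which is what makes sub-millisecond differences between runs visible at all.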

Although Futuremark supplied the experimental hardware and early VRMark build, we were on our own to get a headset for testing. For this reason, we are currently limited to one headset for the benchmark: an Oculus Rift DK2.

Testing Display Latency

The Display Latency test (Photon Latency and Photon Persistence) is meant to measure the VR HMD rather than the hardware in the computer, but for curiosity's sake we ran the test on a small selection of VR-ready graphics cards to see what effect, if any, different GPUs have on these metrics.

From Nvidia, we tested a Gigabyte GeForce GTX 970 SC Windforce, Asus GeForce GTX 980 Matrix Platinum and a Gigabyte GeForce GTX 980 Ti Xtreme Gaming. We ran the tests using Nvidia GeForce Driver 359.06.

From AMD, we had only two cards on hand to test: Sapphire’s Radeon R9 390 Nitro and PowerColor’s Radeon R9 390X Devil. As much as we’d love to test a Fiji-based card in VRMark, we don’t currently have one at this lab. We used AMD’s latest Radeon Software Crimson release, version 15.12.

The test system that we used for VRMark is the same test system we use for GPU reviews. The CPU is Intel’s Core i7-5930K overclocked to 4.2 GHz. The system has 16 GB of Crucial Ballistix DDR4 and two 500 GB M200 SSDs, and everything is plugged into an MSI X99S Xpower motherboard. Power is delivered from a be quiet! 850 W Dark Power Pro 10 platinum-rated power supply.

The way the sensor works, it can measure only one eye at a time, so we ran the test for each graphics card on both eyes for comparison. This revealed an interesting trait that should have been obvious, but at first left us with some concerns for the hardware: The left eye was consistently around seven milliseconds slower than the right. It turns out this should happen. The screen in the Rift DK2 draws from right to left, so the left side of the screen will, naturally, have slightly more latency. Presumably, a headset with two screens would be able to have matched latency in each eye, but we’ll have to wait for one to be made available to verify that theory.
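A back-of-the-envelope check suggests the roughly seven-millisecond gap is about what a single scanned-out panel should produce. The sketch below assumes the DK2 runs at a 75 Hz refresh rate (a figure not stated in this article), that the panel is scanned at a uniform rate across the whole refresh period with blanking ignored, and that the sensor sits near the center of each eye's half of the screen, so the two measurement points are half a screen apart.

```python
def scanout_offset_ms(refresh_hz: float, fraction_of_scan: float) -> float:
    """Delay between two points on the panel separated by the given
    fraction of the scan direction, assuming a uniform scan rate."""
    frame_time_ms = 1000.0 / refresh_hz
    return frame_time_ms * fraction_of_scan


# Assumed values: 75 Hz refresh, measurement points half a screen apart.
offset = scanout_offset_ms(refresh_hz=75.0, fraction_of_scan=0.5)
print(f"Estimated left/right offset: {offset:.2f} ms")
```

Under those assumptions the estimate comes out at a little under seven milliseconds, in line with what we measured.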

The performance level of the graphics card doesn’t appear to have any effect on the display latency, although the architecture of the GPU may have some effect, albeit an extremely minor one. This aligns with our expectations: photon latency and persistence are largely dependent on the screen's performance, so we didn’t expect to see any dramatic differences between the various graphics cards. There did appear to be some slight differences in the photon persistence results, however.

The variance was slight, to put it mildly, but it appears as though AMD’s drivers and hardware have a slight advantage. AMD’s R9 390 and R9 390X were able to fade back to black nearly a full millisecond quicker than comparably priced Nvidia cards. (The 980 Ti was even better, but for more than twice the price.) Keep in mind that these results are just a small run of tests. We would have to do far more sampling to definitively say AMD’s photon persistence performance is better.
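One way to judge whether a sub-millisecond gap like this is real would be to repeat the test many times per card and compare the difference in means against its standard error. The sketch below computes Welch's t-statistic using only the Python standard library; the function name is hypothetical, and no values from our test runs are used.

```python
import math
import statistics
from typing import Sequence, Tuple


def welch_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float, float]:
    """Return (mean difference, standard error, Welch's t-statistic)
    for two independent sets of runs, e.g. persistence times in ms.
    Each set needs at least two runs so a sample variance exists."""
    diff = statistics.mean(a) - statistics.mean(b)
    # Sample variances (n - 1 denominator), one per group.
    se = math.sqrt(statistics.variance(a) / len(a)
                   + statistics.variance(b) / len(b))
    return diff, se, diff / se
```

A t-statistic close to zero means the gap is within run-to-run noise. The larger its magnitude, the less likely the difference is down to chance, and more runs per card shrink the standard error.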

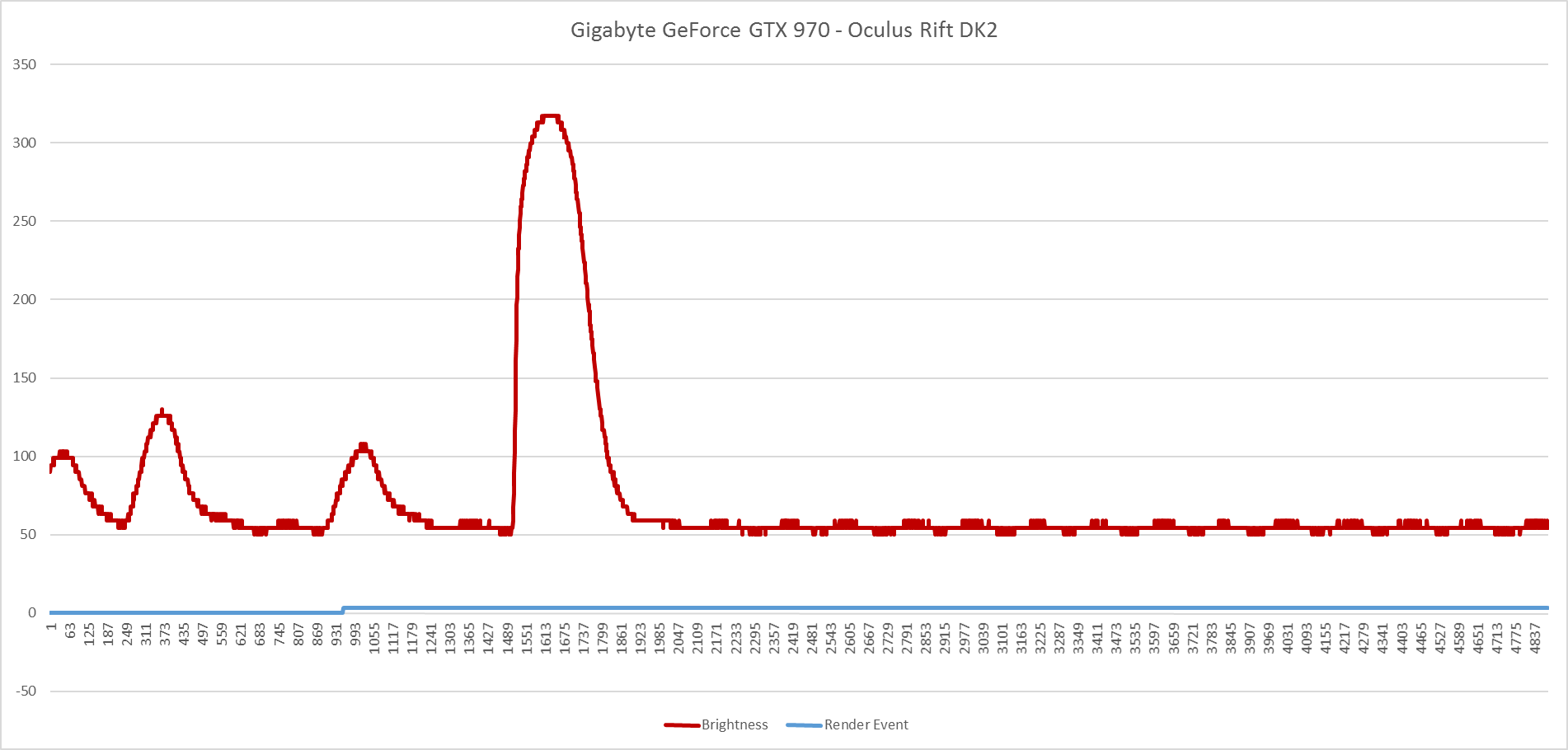

The report that VRMark spits out isn't built into the software yet, so we have to create our own graphs with the data that the benchmark records. The graph data is supposed to indicate where the events took place, though in the current build it doesn't record these events for you. We've been told the next build will incorporate that into the reporting system, but for now this is how the graph looks with the data provided.

A Preview And A Promise

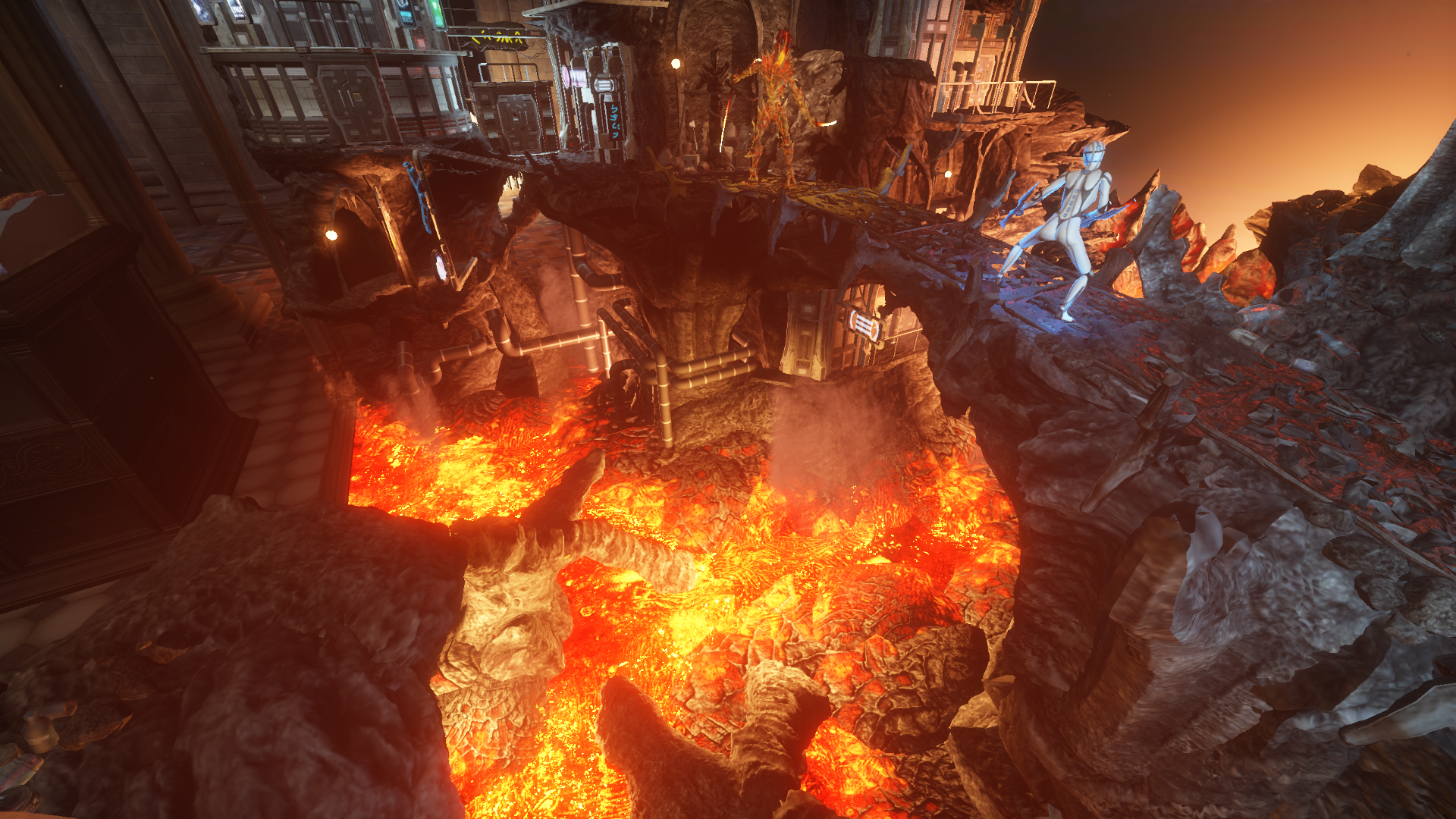

Futuremark has only released the latency test to us, but we’ve been told the next phase is coming soon. There is a VR performance test being developed that you will be able to use at home to gauge the ability of your own system to render VR scenes. Just days before Christmas, the company released a preview that allows you to view an interactive demo of the upcoming benchmark.

The preview will work with or without a VR HMD connected to your PC. It places you inside a museum lobby between four glass displays. Inside the displays are recreations of past 3DMark environments. The blizzard scene from 3DMark06, the jungle and submarine scenes from 3DMark 11, and the fight scene from 3DMark Fire Strike are all present. The fight sequence plays out in miniature in front of you, and one of the floating bug machine things from the demo actually flies around outside of the display cabinet. It’s a clever way to create a VR benchmark, and I look forward to running it as a test rather than a demo.

It’s great to see a company like Futuremark take on VR so seriously. In the coming months, interest in VR will likely accelerate, and many people will want to know how their current hardware will handle virtual reality. A benchmark like VRMark has the potential to be very popular, very soon. We’ll be doing much more testing with Futuremark’s new benchmark as further builds are released to us and the public.

Follow Kevin Carbotte @pumcypuhoy. Follow us on Facebook, Google+, RSS, Twitter and YouTube.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.

AdviserKulikov:

When the numbers have such small differences, it's really a pain that your website still has the link to tiny images when you're trying to get a full-sized image.

Sakkura:

> 17209429 said: You just have to click on the smaller picture to get a larger one.

That's still annoying. When people click on an image, it's generally to make it larger rather than smaller. Yet the first click makes it smaller, and you have to click again to get the full-size image.

kcarbotte:

> TL-DR: All VR headsets will have an inherent lazy eye. =)

LOL!

That's one way of putting it, though not entirely accurate.

Single-screen VR headsets will.

kcarbotte:

> 17209429 said: You just have to click on the smaller picture to get a larger one.
>
> That's still annoying. When people click on an image, it's generally to make it larger rather than smaller. Yet the first click makes it smaller, and you have to click again to get the full-size image.

If I had the power to change that I would in a heartbeat.

AMD27:

> 17209429 said: You just have to click on the smaller picture to get a larger one.
>
> That's still annoying. When people click on an image, it's generally to make it larger rather than smaller. Yet the first click makes it smaller, and you have to click again to get the full-size image.

Totally agree, this is so annoying and I am wondering how a popular website like tomshardware can't/won't fix this issue!

jasonelmore:

so you say amd has better vr but the 980 ti beats all of the cards, but it doesn't count because its more expensive?

lol i like your logic.

both are going to be great, i wouldnt even start recommending hardware until HMD's are available for purchase and Nvidia VR and AMD VR drivers are mature.

Gillerer:

> so you say amd has better vr but the 980 ti beats all of the cards, but it doesn't count because its more expensive?
>
> lol i like your logic.
>
> both are going to be great, i wouldnt even start recommending hardware until HMD's are available for purchase and Nvidia VR and AMD VR drivers are mature.

AMD also have more expensive cards, but they were not tested. In order to gauge the relative strengths of different GPU architectures, you need to compare GPUs in similar price brackets. It really doesn't say anything about the architecture if a $700 card performs better than a $300 card.