
Nvidia RTX 5070 Ti Rasterization Gaming Performance

We're dividing gaming performance into two categories: traditional rasterization games and ray-tracing games. We benchmark each game using four different test settings: 1080p medium, 1080p ultra, 1440p ultra, and 4K ultra.

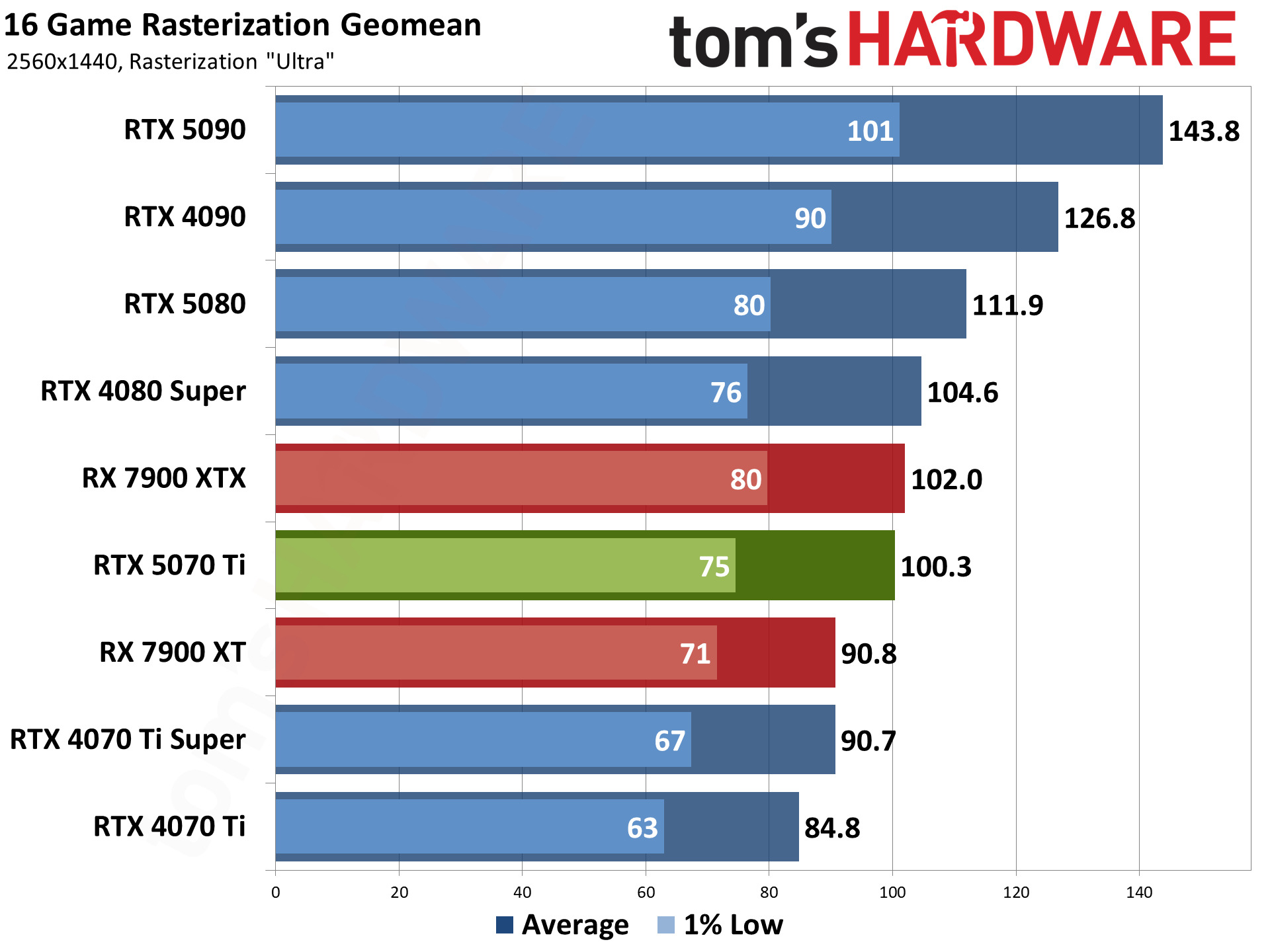

For the RTX 5070 Ti, the 1440p ultra results might be the most interesting. They're useful in their own right, but they're also a stand-in for 4K with quality mode upscaling, which renders at 1440p internally (1440p upscaled to 4K will run a bit slower than native 1440p due to the overhead of DLSS). If you want higher framerates, you can also use balanced or performance mode, and since 4K performance mode renders at 1080p, the 1080p ultra results are useful as well.
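To show why the lower-resolution results work as proxies for upscaled 4K, here's a minimal sketch that converts an output resolution into the internal render resolution for each upscaling mode. The per-axis scale factors (about 66.7% for quality, 58% for balanced, and 50% for performance) are the commonly cited DLSS defaults and are an assumption here, not figures from our testing; individual games can override them.

```python
# Commonly cited DLSS per-axis render scales (assumption: defaults; games may override).
DLSS_SCALE = {
    "quality": 2 / 3,
    "balanced": 0.58,
    "performance": 0.5,
}


def render_resolution(out_w: int, out_h: int, mode: str) -> tuple[int, int]:
    """Return the internal render resolution for an output size and upscaling mode."""
    scale = DLSS_SCALE[mode]
    # Scale each axis independently and round to whole pixels.
    return round(out_w * scale), round(out_h * scale)


for mode in DLSS_SCALE:
    w, h = render_resolution(3840, 2160, mode)
    print(f"4K {mode}: renders at {w}x{h}")
```

Quality mode at 4K lands on 2560x1440 and performance mode on 1920x1080, which is why the 1440p ultra and 1080p ultra charts roughly bracket 4K upscaled performance, minus the upscaler's own overhead.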

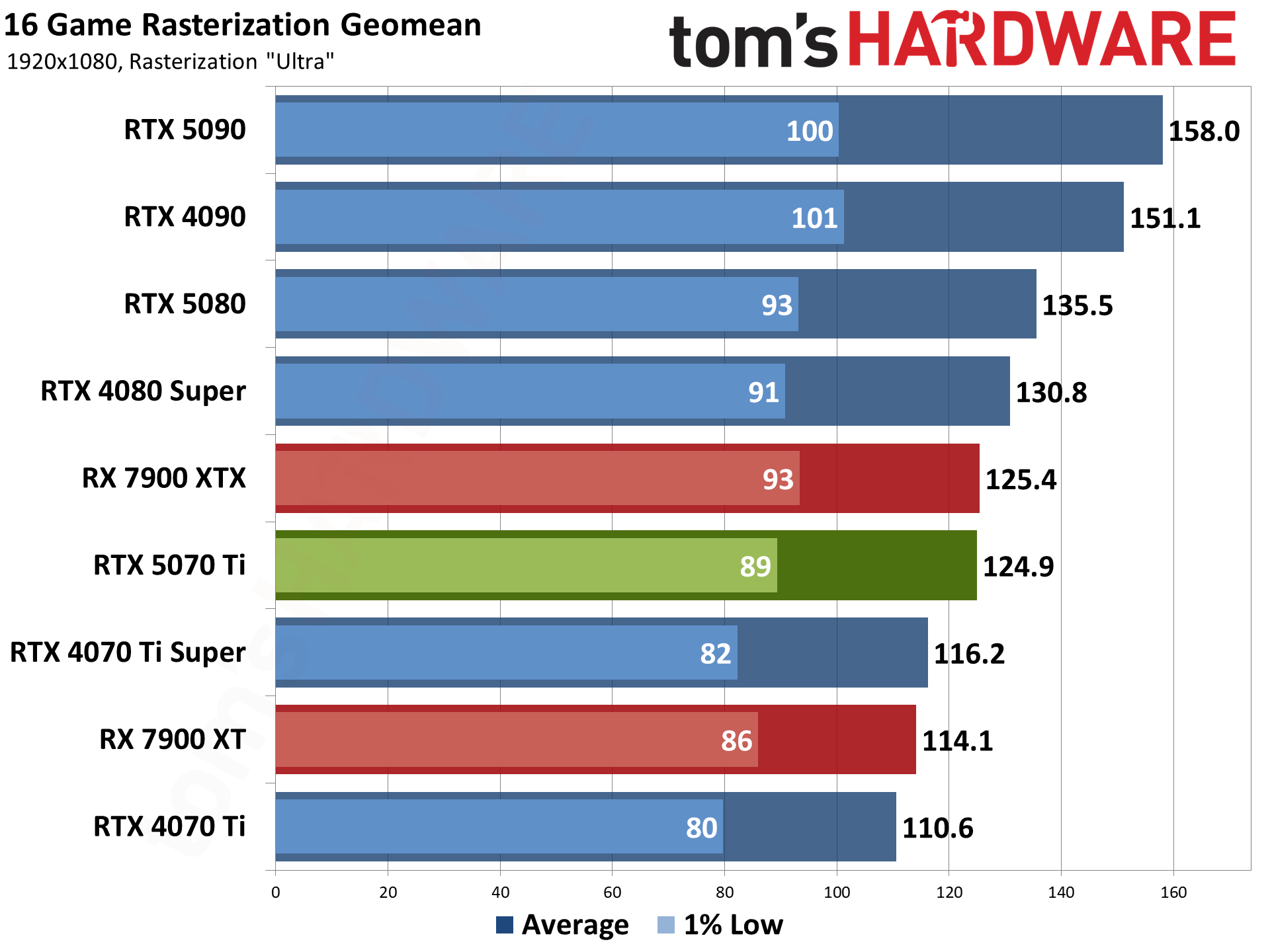

As a $750 card, the 5070 Ti can generally handle 1440p and 4K, particularly with a bit of upscaling help at the latter. Along with the individual game results, we have the overall performance geomean, the rasterization geomean, and the ray tracing geomean. To keep things easier to parse, each group of charts is ordered from highest resolution to lowest.
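The geomean charts summarize each card with the geometric mean of its per-game framerates. A minimal sketch of that calculation follows; the fps values are made up for illustration and are not our test results. Compared with a simple average, the geometric mean keeps one very high-fps game from dominating the summary.

```python
import math


def geomean(values: list[float]) -> float:
    """Geometric mean: the n-th root of the product, computed via logs to avoid overflow."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("geomean needs a non-empty list of positive values")
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical per-game fps for one card at one setting (not real results).
fps = [40.0, 60.0, 90.0, 240.0]

print(f"arithmetic mean: {sum(fps) / len(fps):.1f} fps")
print(f"geometric mean:  {geomean(fps):.1f} fps")
```

Python's standard library also provides `statistics.geometric_mean`, which computes the same value; the log-based version is spelled out here only to make the math visible.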

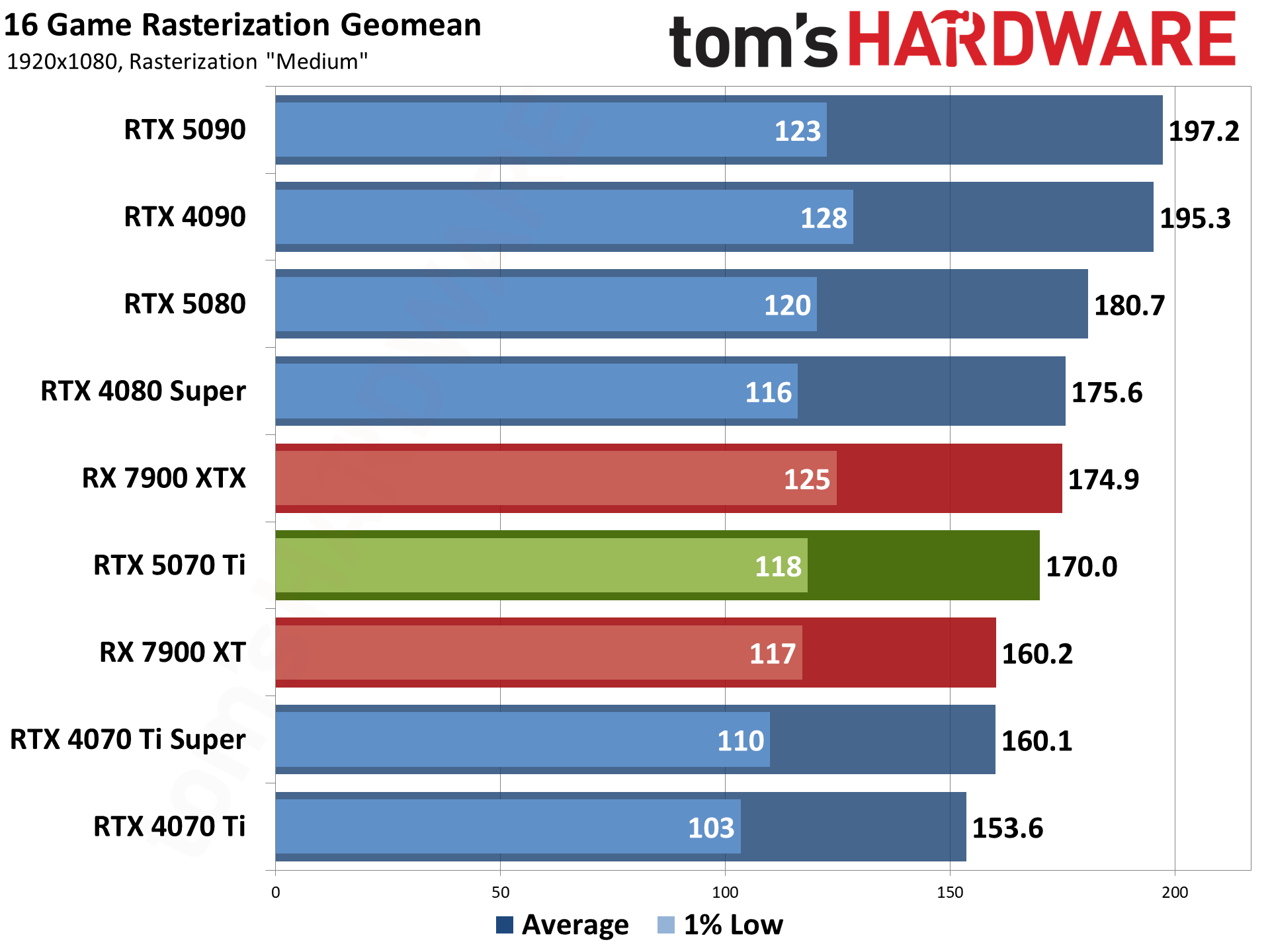

We'll start with the rasterization suite of 16 games, as that's arguably still the most useful measurement of gaming performance. Plenty of games that have ray tracing support end up running so poorly that it's more of a feature checkbox than something useful.

We'll provide limited to no commentary on most of the individual game charts, as the numbers speak for themselves. The geomean charts will be the main focus, as they provide the big picture of how the 5070 Ti compares to other GPUs.

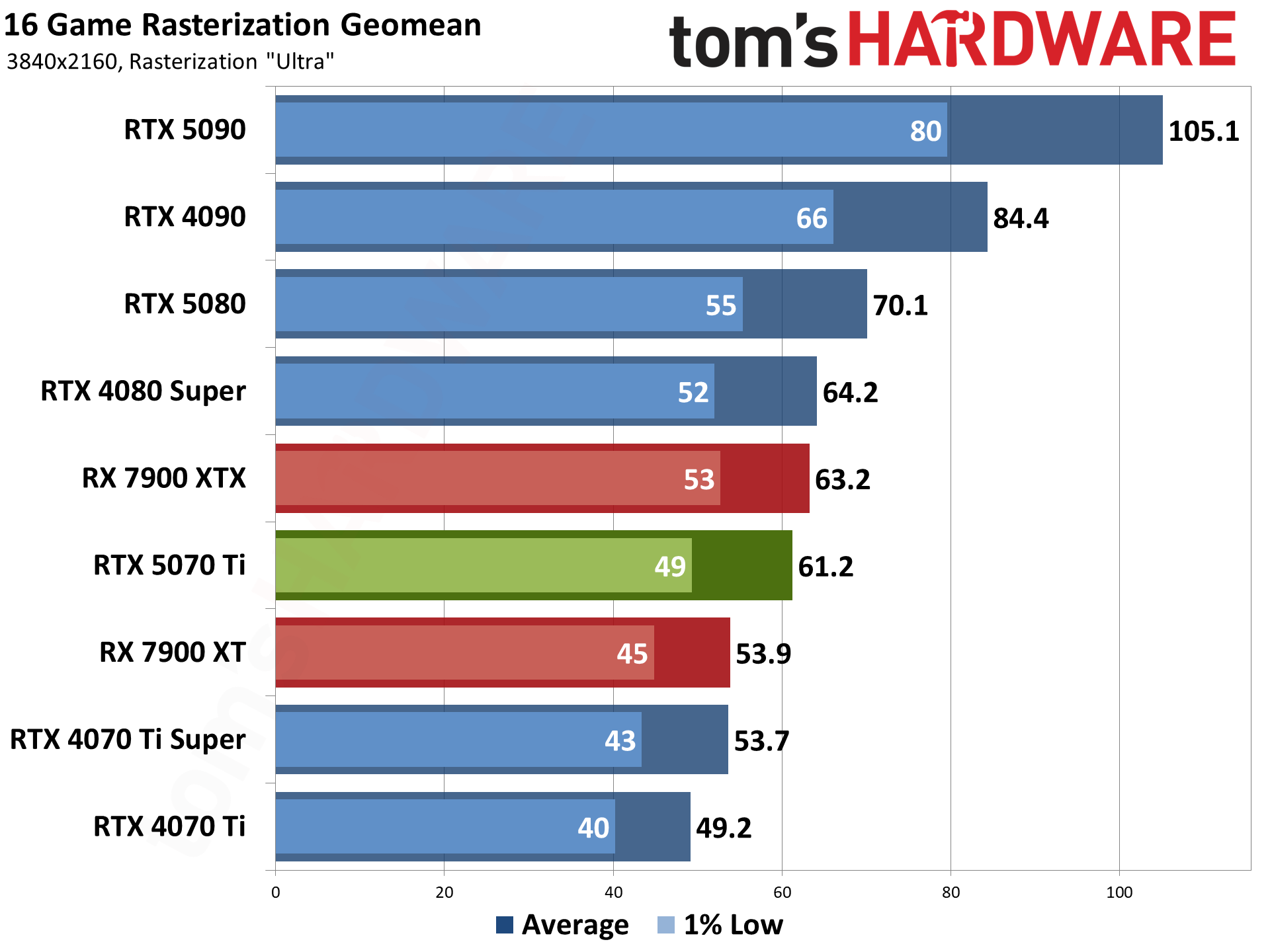

There are two primary comparison points for the RTX 5070 Ti: the RTX 4070 Ti that launched in January 2023, and the RTX 4070 Ti Super that replaced the original card in January 2024. The expectation is that we'll see a bigger generational improvement over the vanilla 4070 Ti, while the 4070 Ti Super will be within striking distance of the 5070 Ti (unless you factor in Multi Frame Generation, or MFG, which only the 50-series supports).

Rasterization performance tends to be higher, so we can even look at the 4K results without reaching beyond the capabilities of the 5070 Ti. It averages just over 60 fps at 4K ultra across our test suite, beating the 4070 Ti by 25% overall. Drop to 1440p and the gap shrinks a bit to 18%, then to 13% at 1080p ultra and 11% at 1080p medium. That's not too surprising, as even a $750 GPU can be CPU limited at 1080p.
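The percentage leads quoted here are simply the ratio of two framerates minus one. Why the gap narrows at lower resolutions can be illustrated with a deliberately simplified bottleneck model, in which the delivered framerate is whichever is lower: what the GPU can render or what the CPU can feed it. The fps numbers and the `min()` model below are hypothetical illustrations, not measurements or our actual methodology.

```python
def percent_lead(a_fps: float, b_fps: float) -> float:
    """How much faster card A is than card B, in percent."""
    return (a_fps / b_fps - 1.0) * 100.0


def delivered_fps(gpu_fps: float, cpu_cap_fps: float) -> float:
    """Simplified bottleneck model: the slower component sets the framerate."""
    return min(gpu_fps, cpu_cap_fps)


CPU_CAP = 150.0  # hypothetical CPU-limited framerate ceiling

# Hypothetical GPU-bound framerates: card A is 25% faster than card B at every setting.
for label, b_gpu in [("4K", 48.0), ("1440p", 96.0), ("1080p", 144.0)]:
    a_gpu = b_gpu * 1.25
    lead = percent_lead(delivered_fps(a_gpu, CPU_CAP), delivered_fps(b_gpu, CPU_CAP))
    print(f"{label}: A leads by {lead:.0f}%")
```

Real games are rarely this clean, since CPU and GPU limits shift from scene to scene and game to game, which is why the measured gaps taper gradually (25%, 18%, 13%, 11%) rather than collapsing at a single threshold.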

The comparison to the 4070 Ti Super is decidedly less impressive. The 5070 Ti takes a 14% lead at 4K, where its additional bandwidth proves most beneficial. The lead decreases to 11% at 1440p and 7–8% at 1080p. It's a small enough difference that, outside of running benchmarks like we're doing here, most people wouldn't be able to tell the two GPUs apart while gaming.

It's also worth noting that the 4070 Ti Super comes out ahead of the 5070 Ti in some games, and there are even a handful of cases where the 4070 Ti claims a victory. Based on what we know of the architectures and specifications, this shouldn't happen unless the drivers aren't fully tuned for the new Blackwell GPUs. As we've previously noted with the RTX 5080 and 5090 cards, it seems Nvidia has more tuning work to do to properly leverage the new features in Blackwell chips.

One final point of comparison is AMD's RX 7900 XTX. Originally a $999 part, it dropped as low as $800 during sales before the supply dried up. AMD has traditionally fared better against Nvidia's GPUs in rasterization performance, and that holds true here as well, though the margins aren't really meaningful in this case. The 7900 XTX beats the 5070 Ti by a few percent across all tested settings, with individual games going to one card or the other depending on the engine and other factors.

It's basically a tie, with AMD having a slight performance edge, but the 7900 XTX is no longer worth buying now that it starts at around $1,350. So while AMD's enthusiast RDNA 3 GPU ties Nvidia's new high-end card on performance, it isn't a sensible alternative unless it comes back in stock at more reasonable prices.

Below are the 16 rasterization game results, in alphabetical order, with short notes on the testing where something worth pointing out is present.

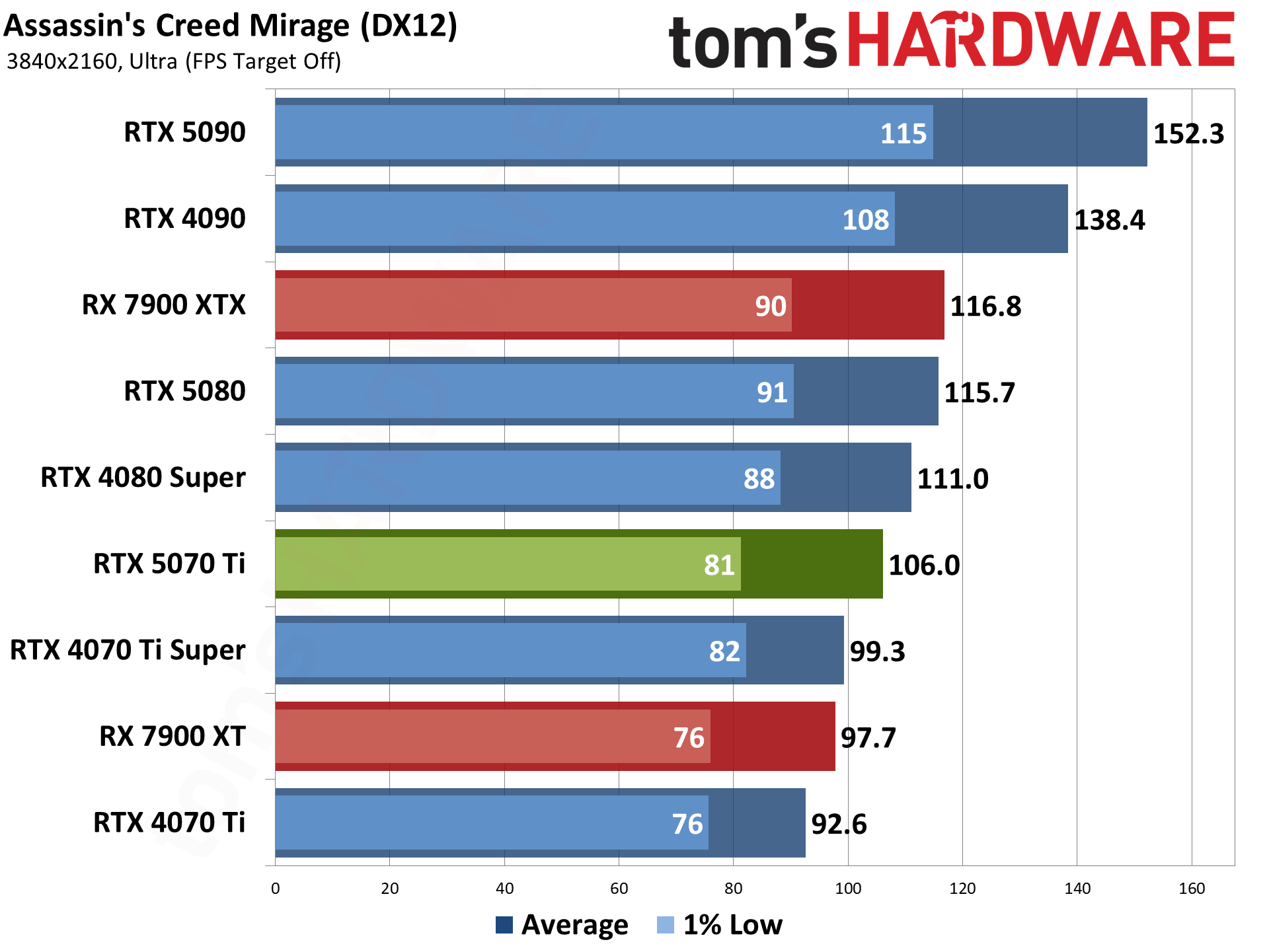

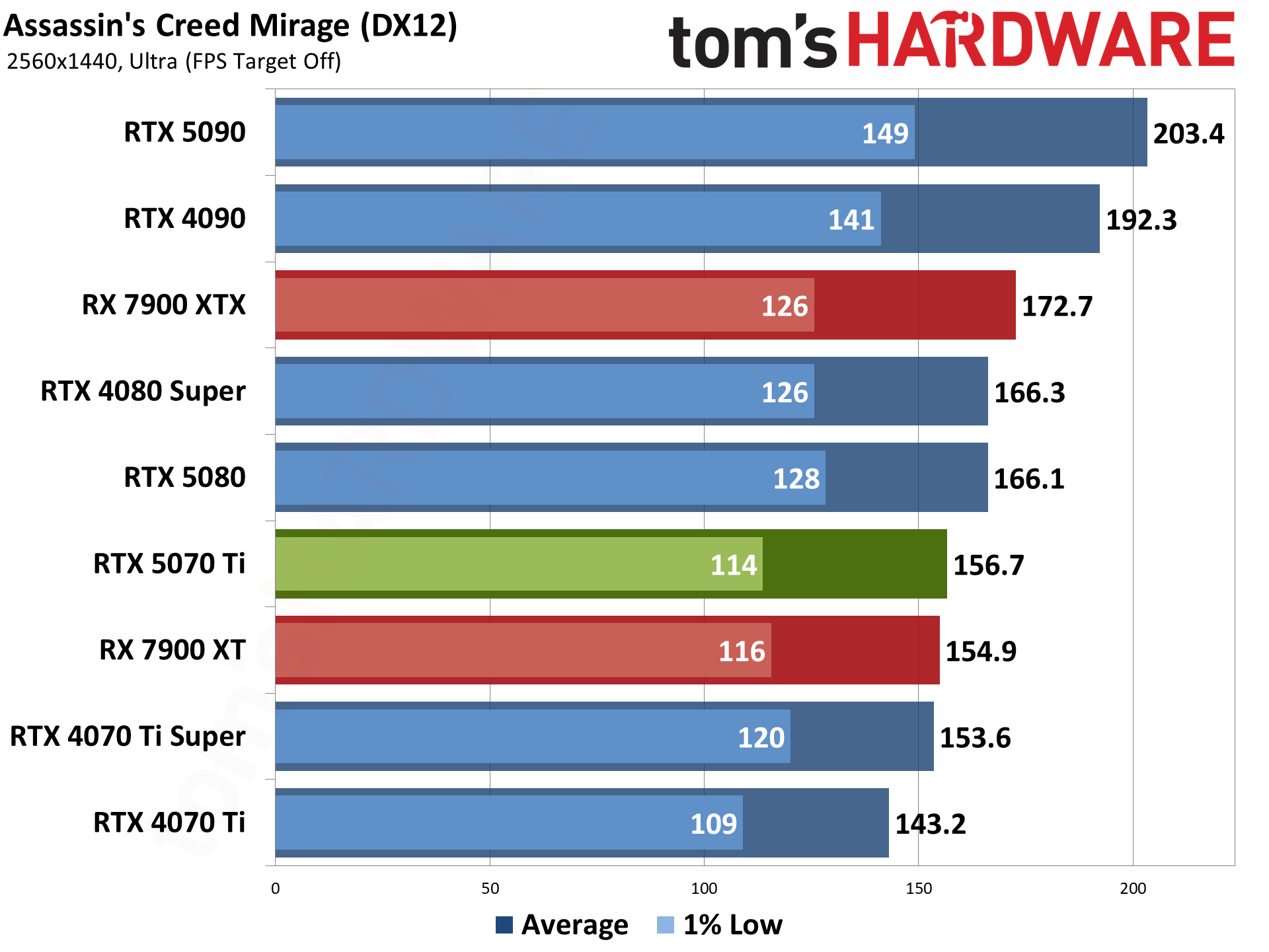

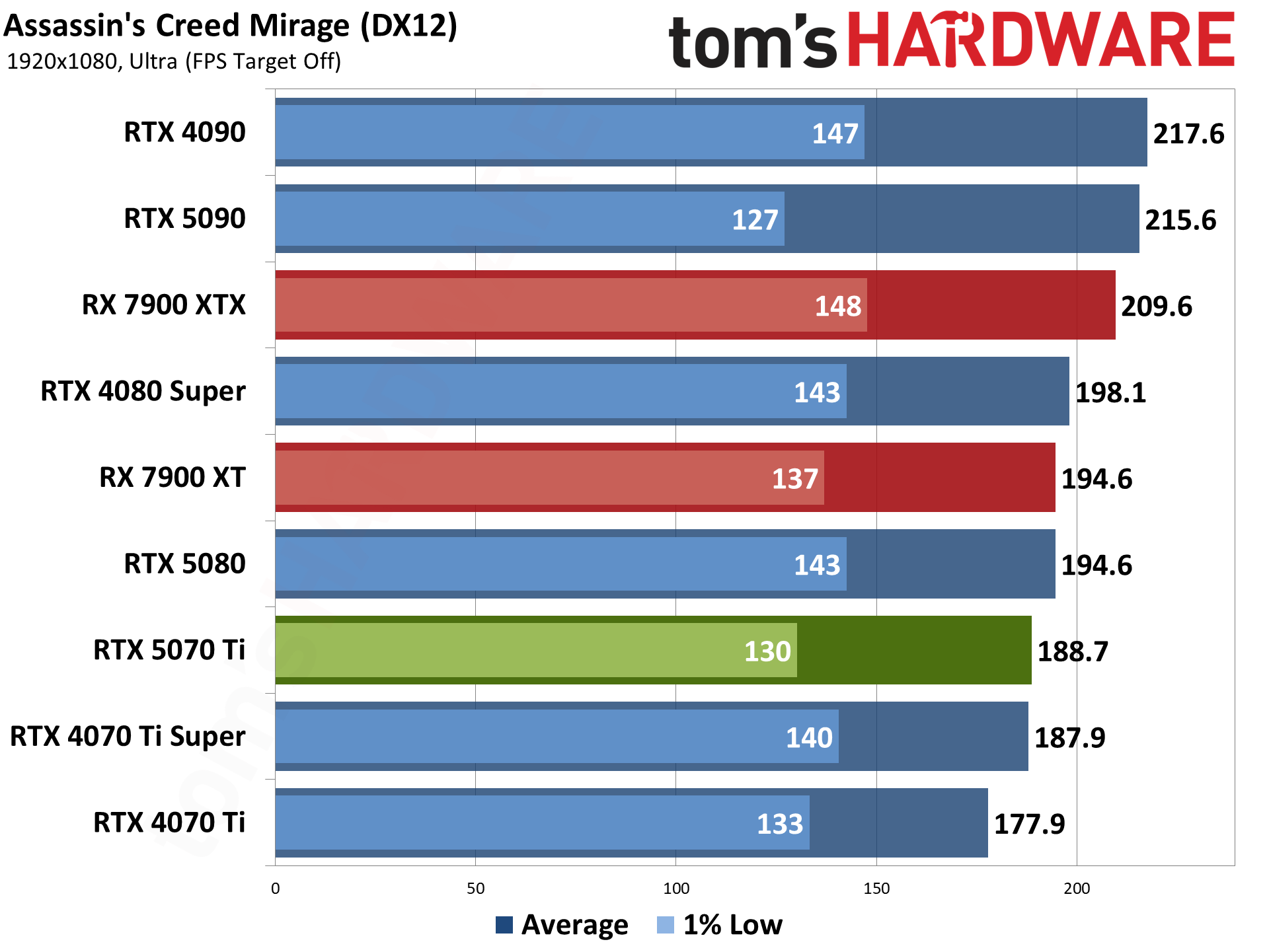

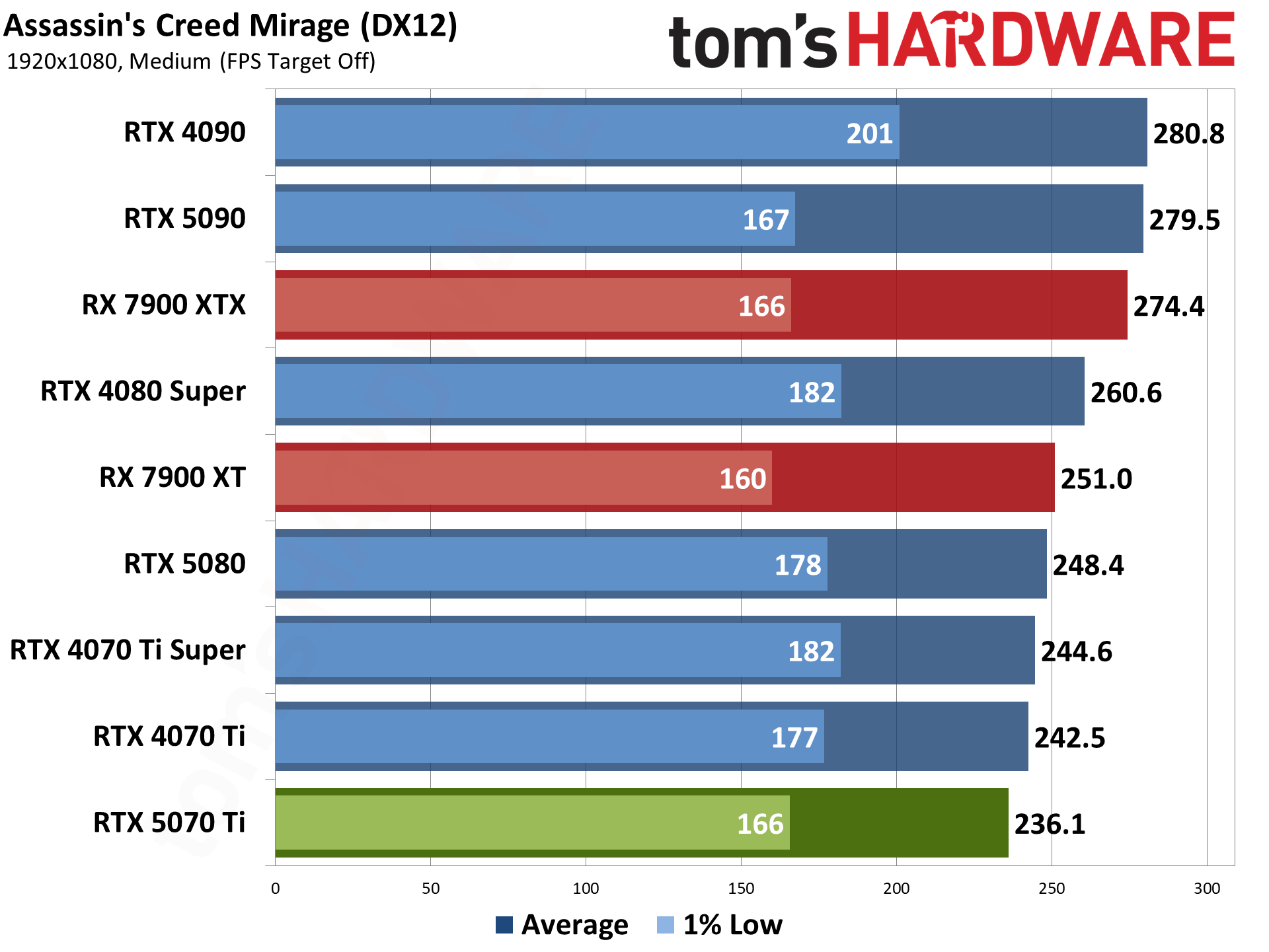

Assassin's Creed Mirage uses the Ubisoft Anvil engine and DirectX 12. It's also an AMD-promoted game, though these days, that doesn't necessarily mean it always runs better on AMD GPUs. It could be CPU optimizations for Ryzen, or more often, it just means a game has FSR2 or FSR3 support — FSR2 in this case. It also supports DLSS and XeSS upscaling.

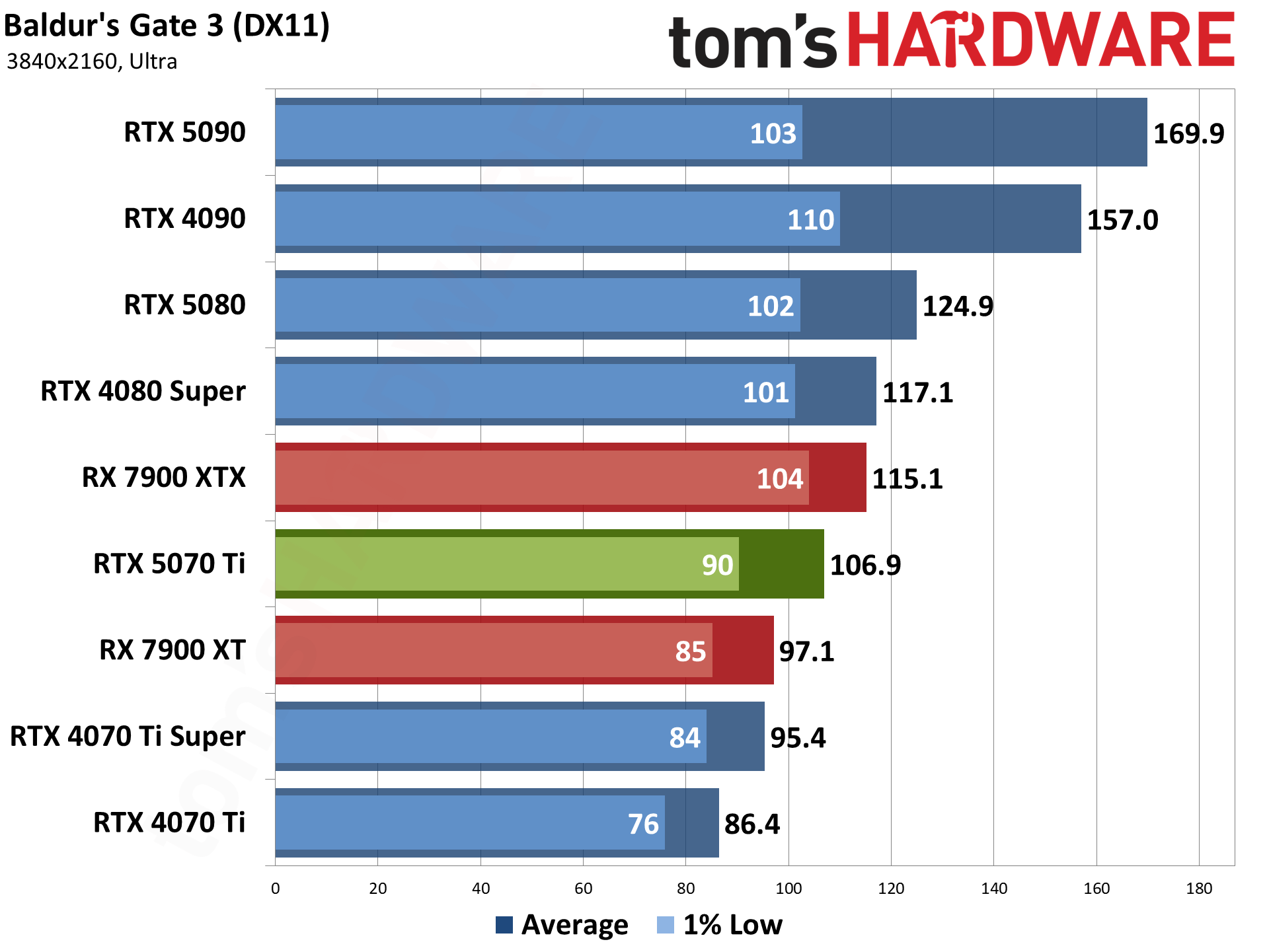

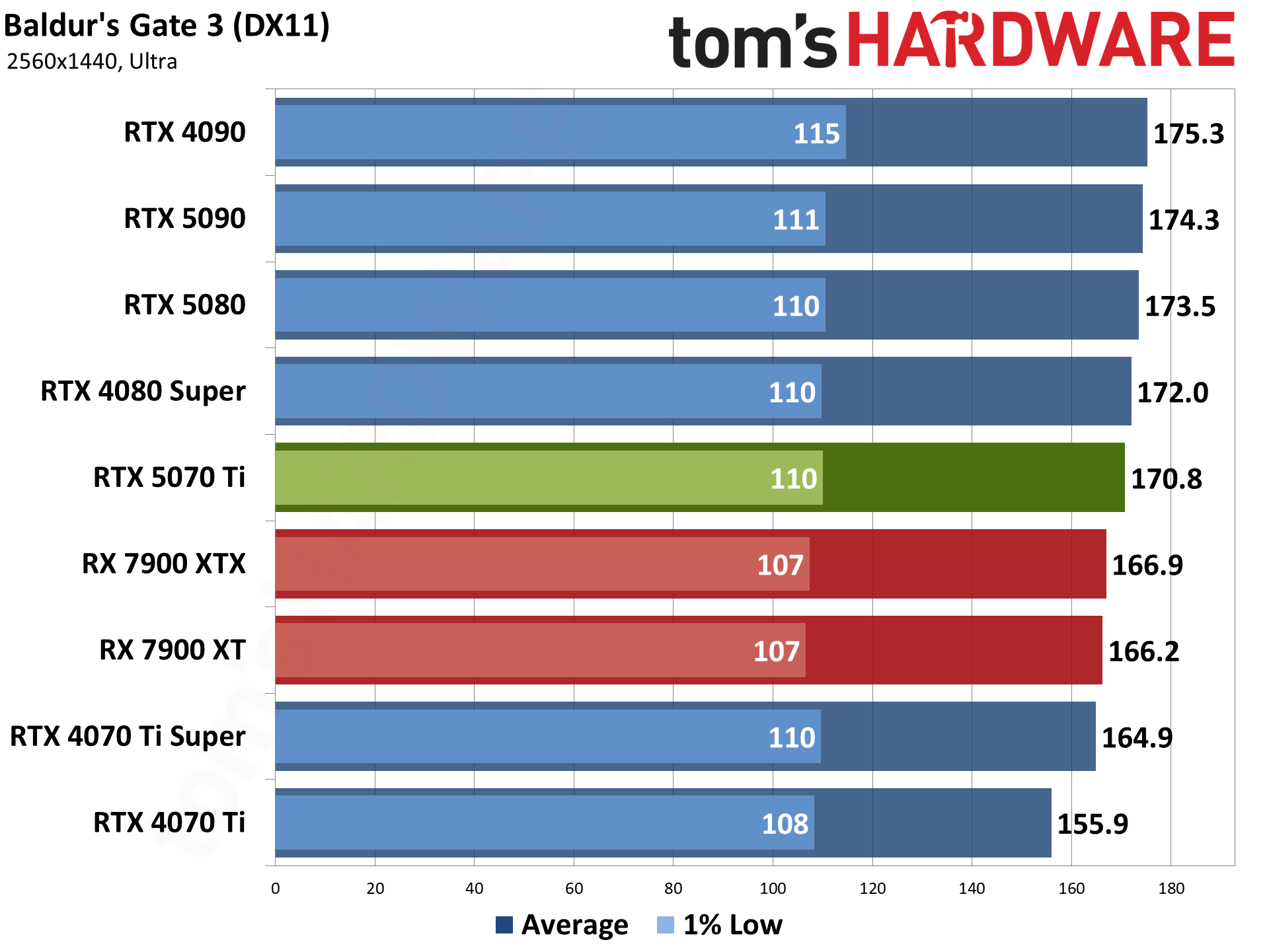

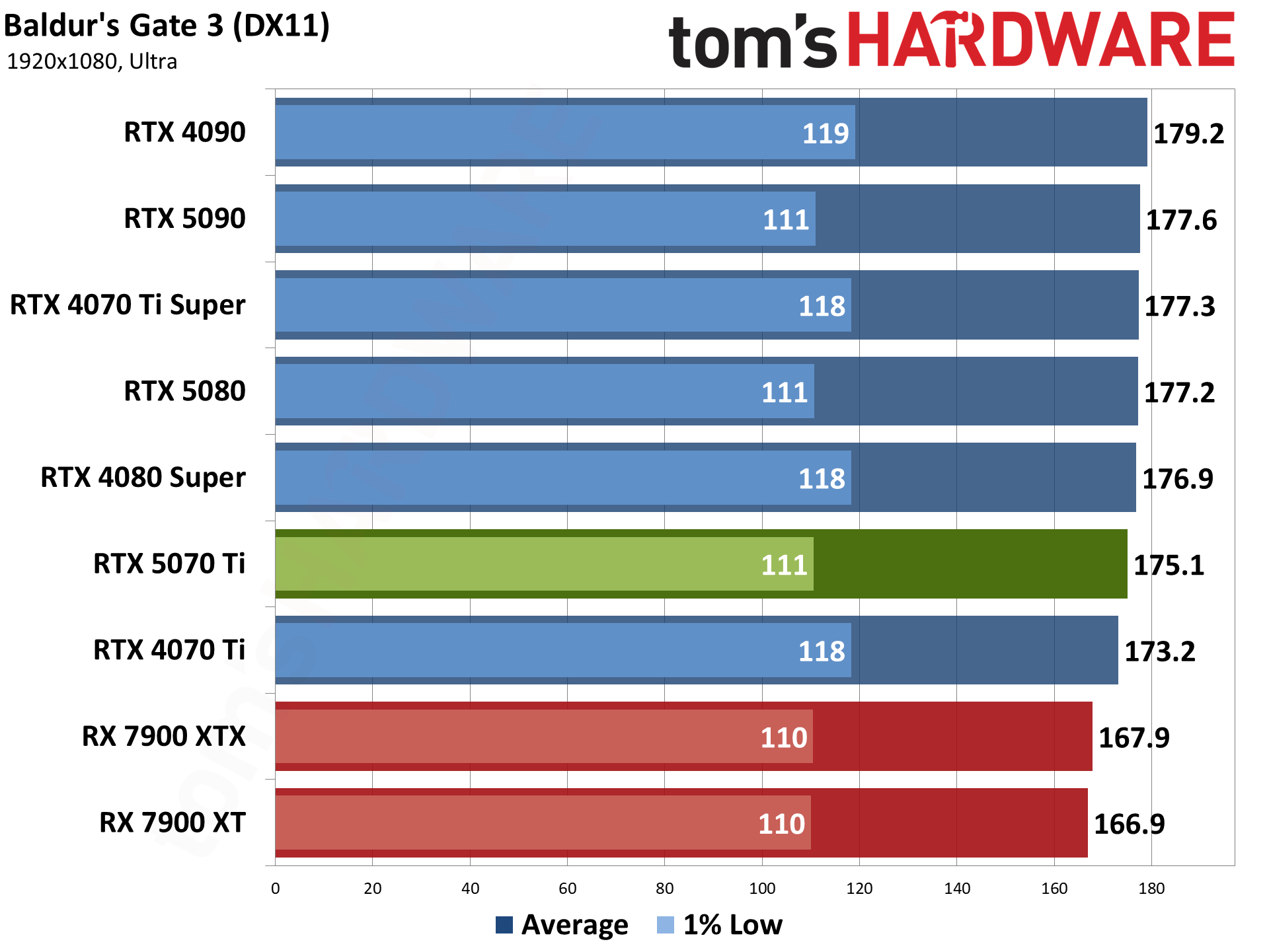

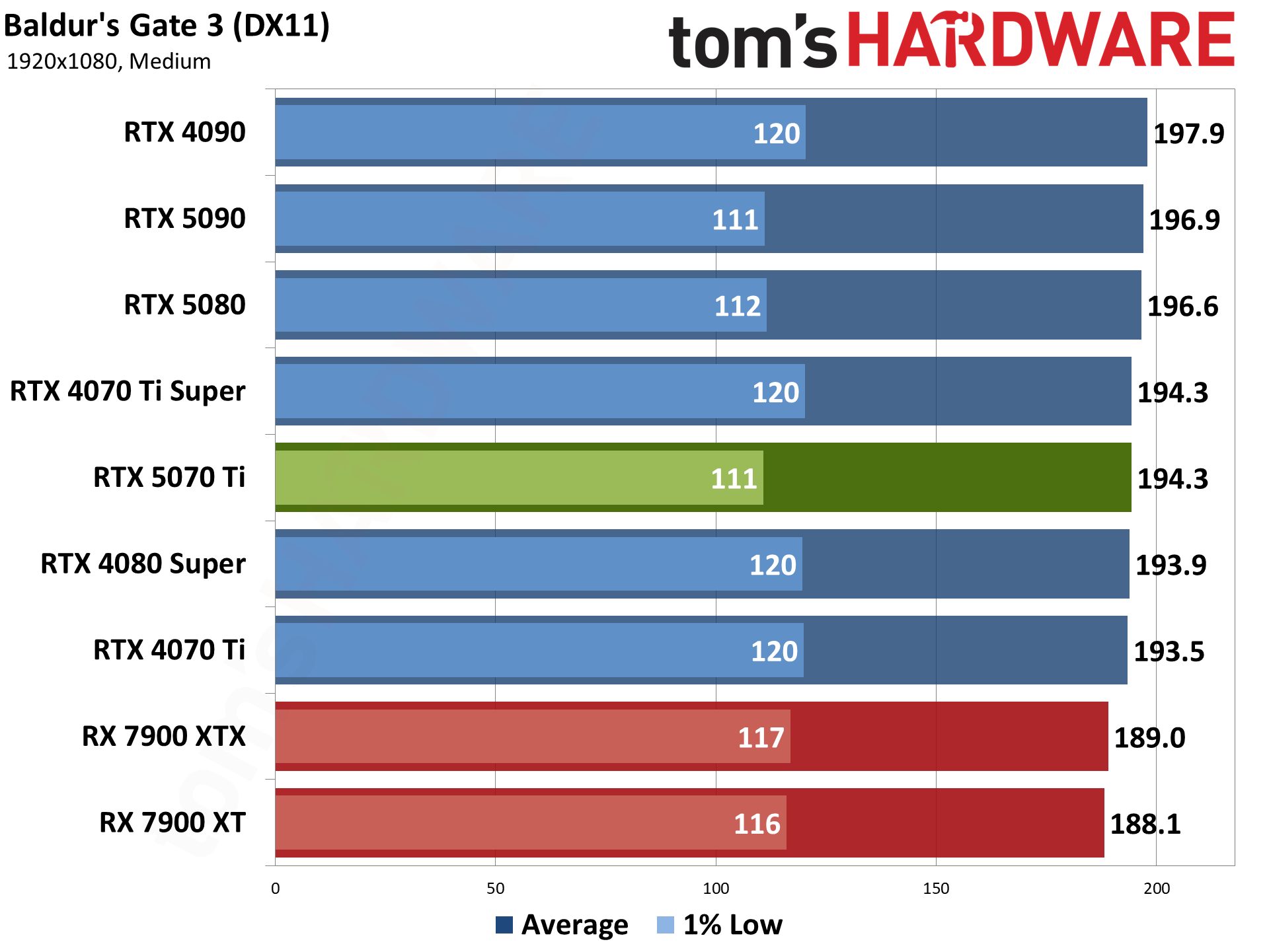

Baldur's Gate 3 is our sole DirectX 11 holdout — it also supports Vulkan, but that performed worse on the GPUs we checked, so we opted to stick with DX11. Built on Larian Studios' Divinity Engine, it's a top-down perspective game, which is a nice change of pace from the many first-person games in our test suite.

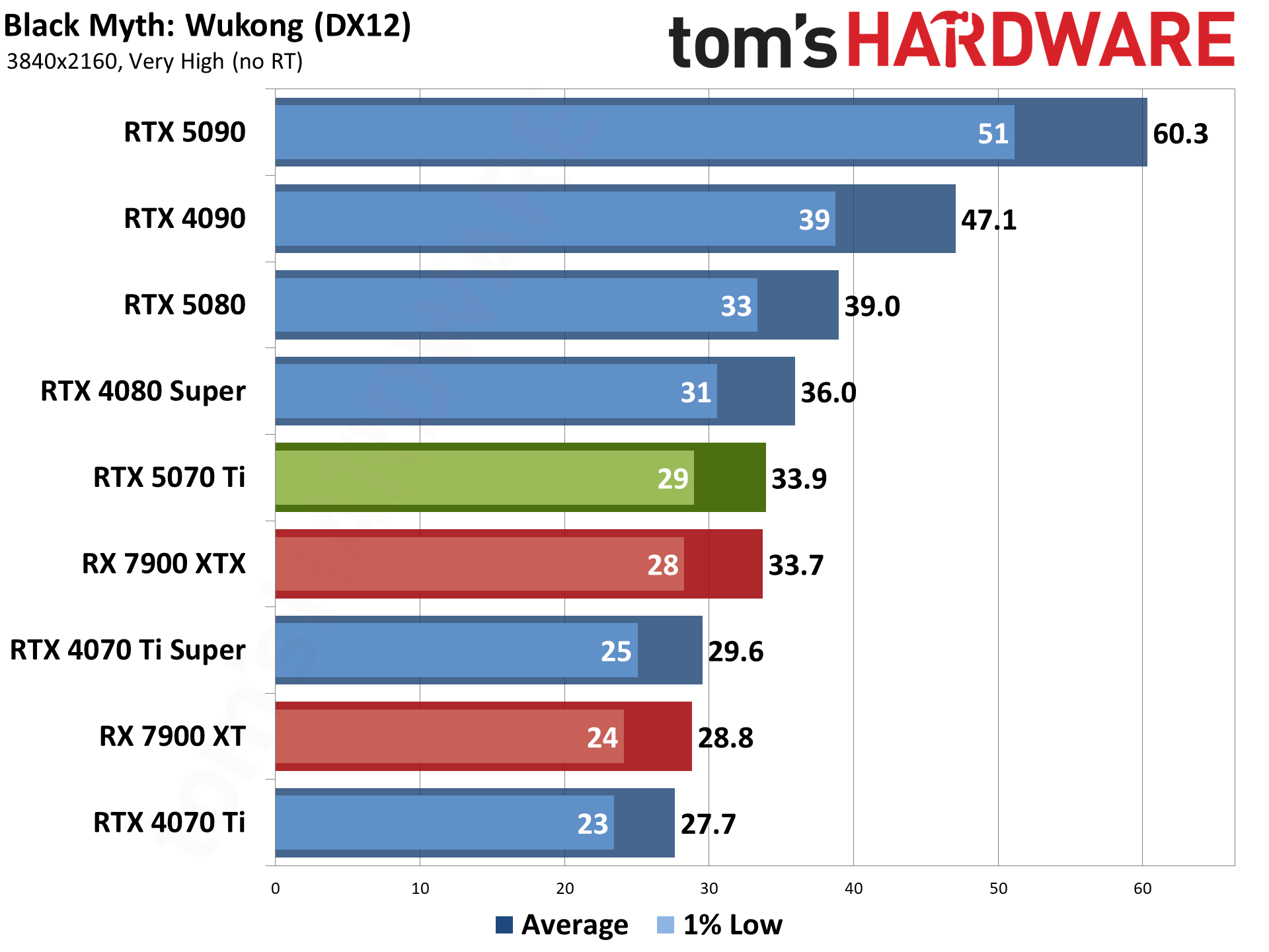

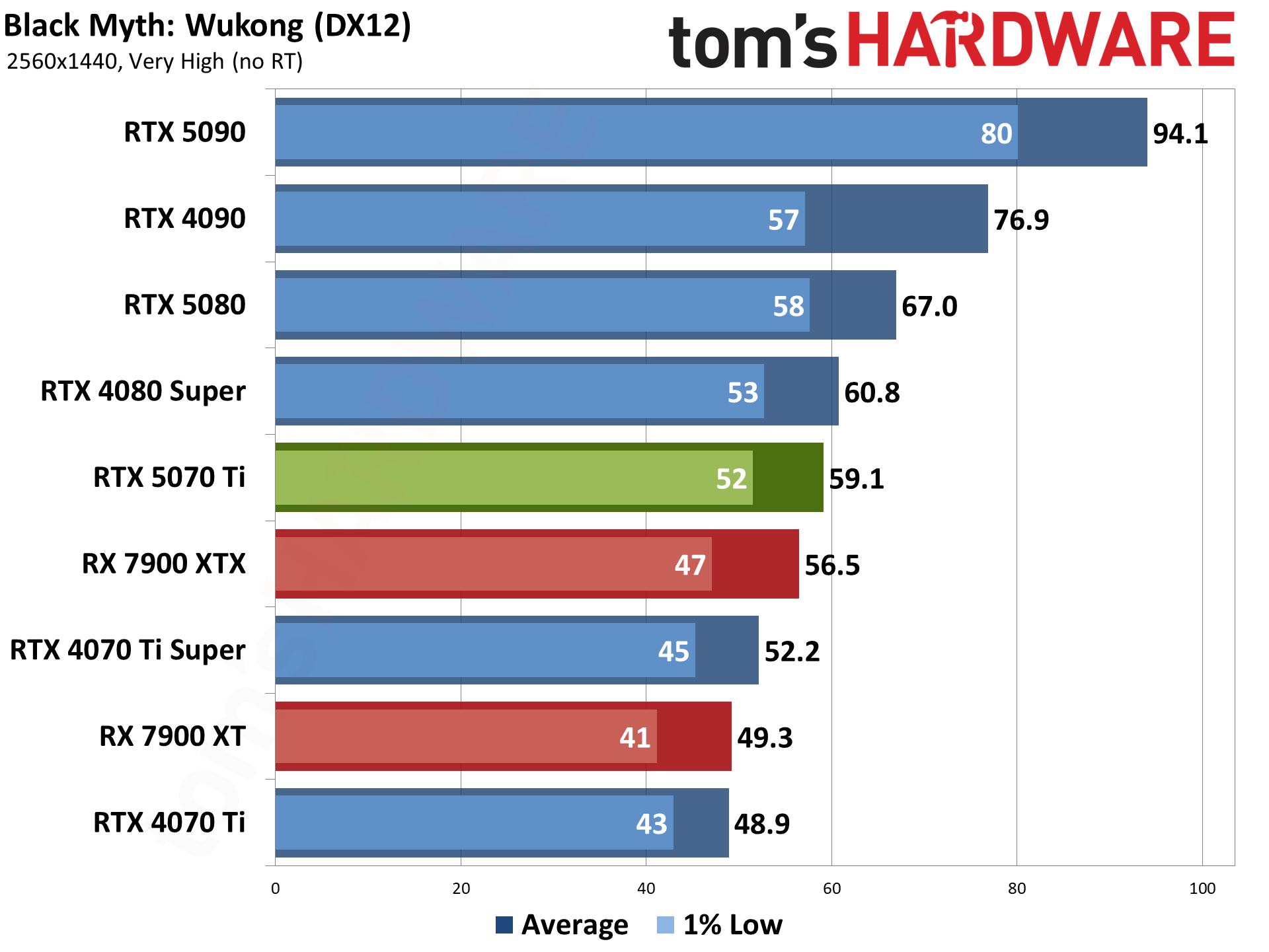

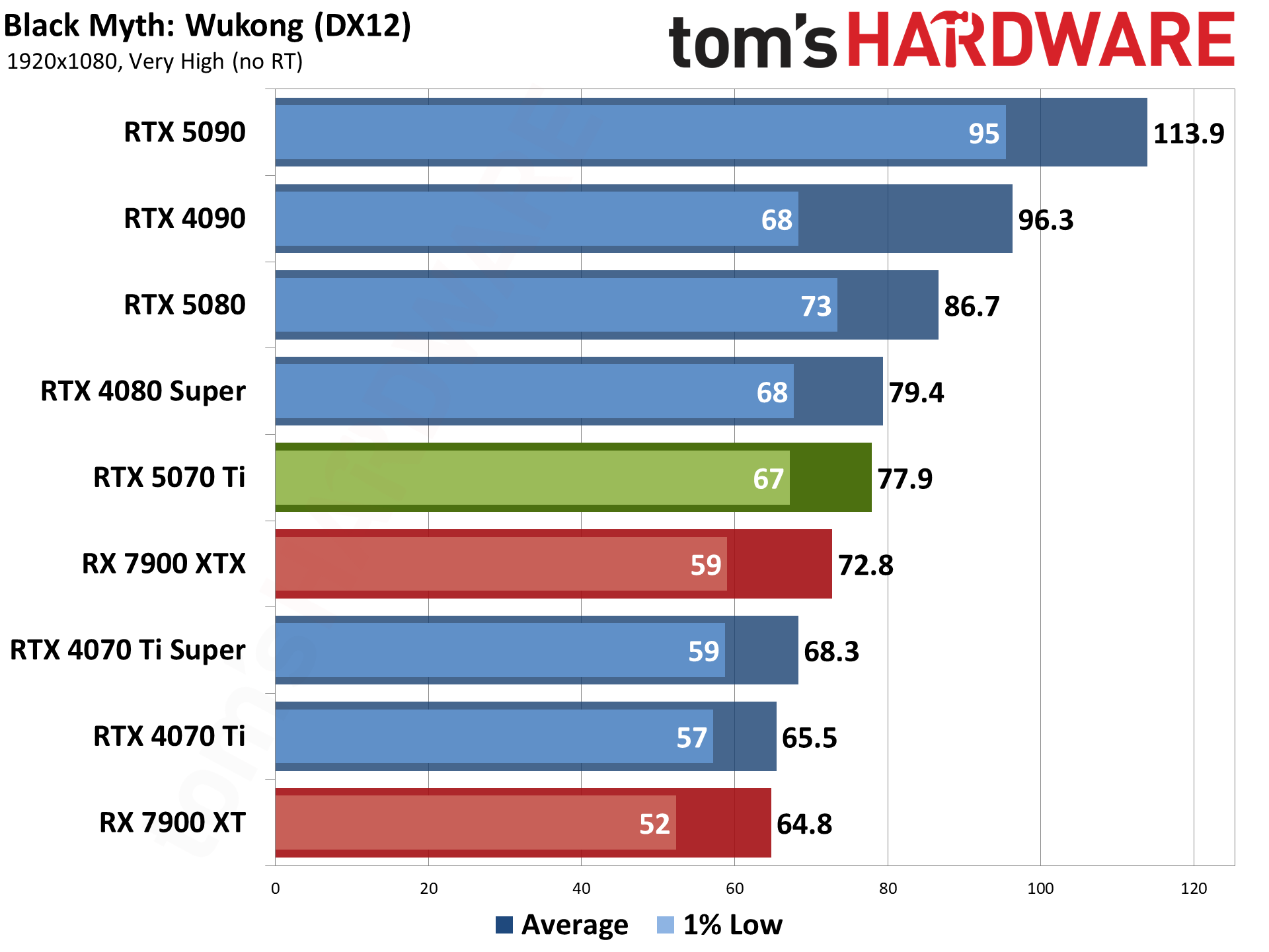

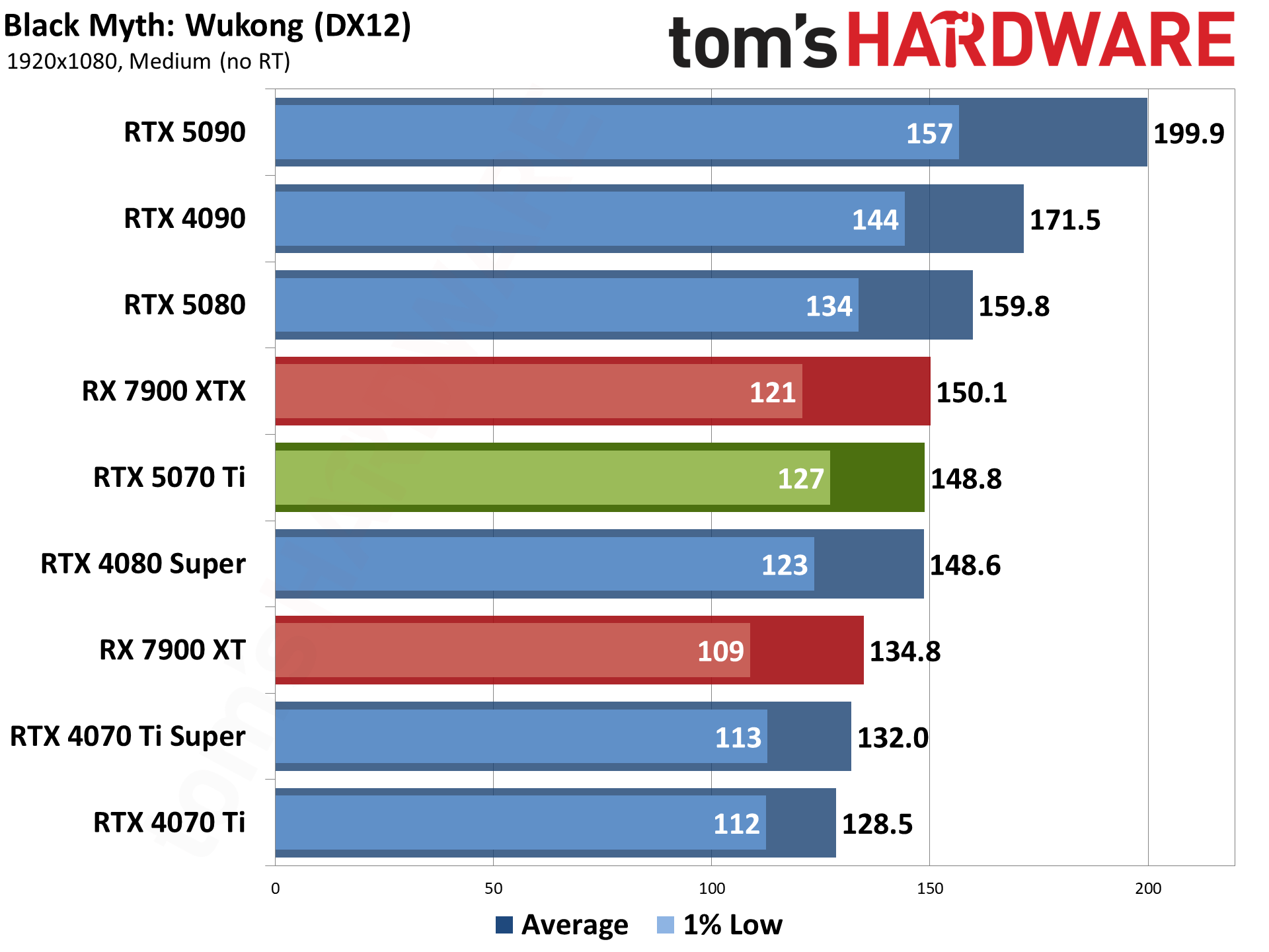

Black Myth: Wukong is one of the newer games in our test suite. It's built on Unreal Engine 5 and supports full ray tracing as a high-end option, but we opted to test in pure rasterization mode. Full RT may look a bit nicer, but the performance hit is quite severe. (Check our linked article for our initial launch benchmarks if you want to see how it runs with full RT enabled. We've got supplemental testing coming as well.)

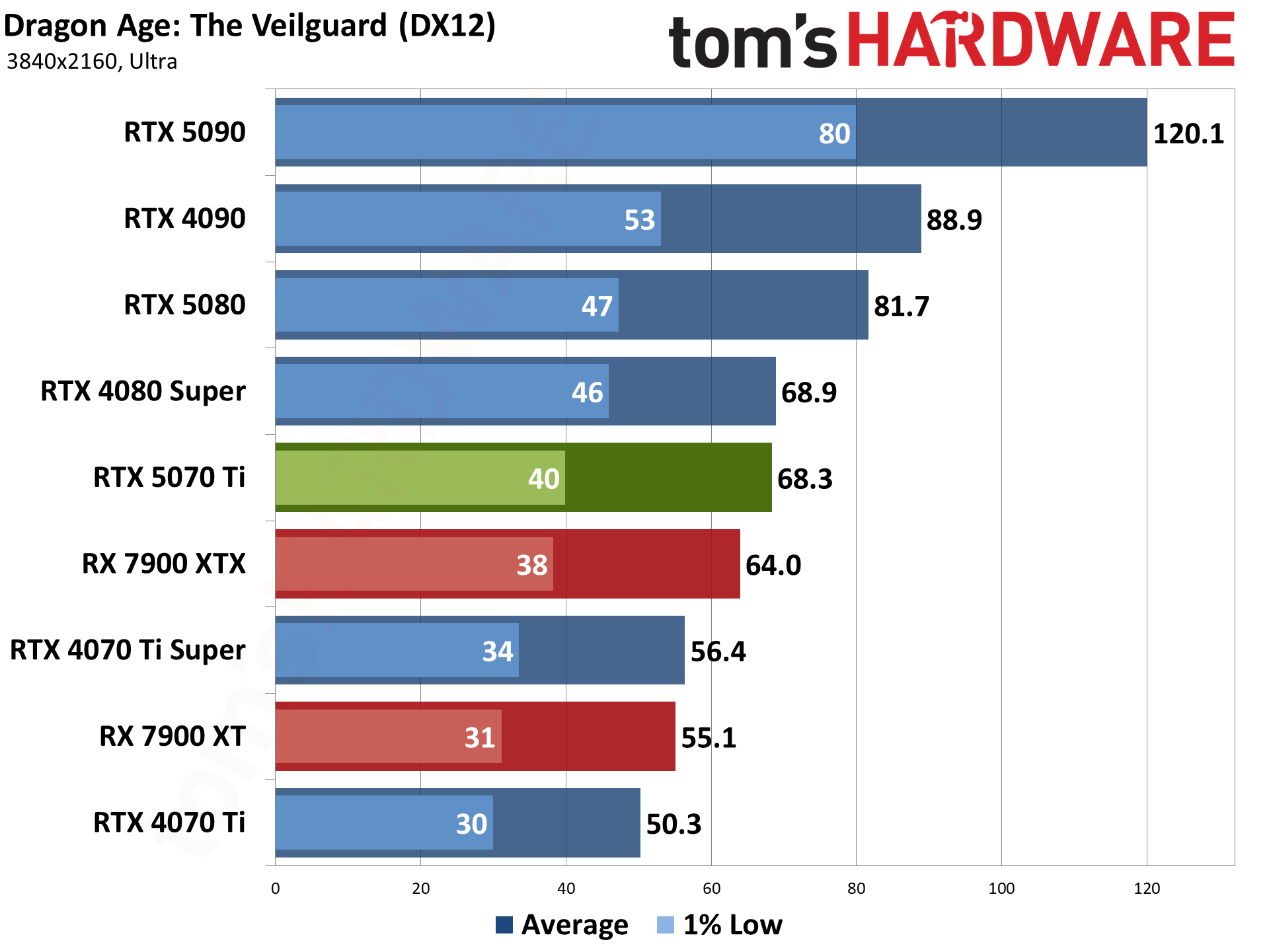

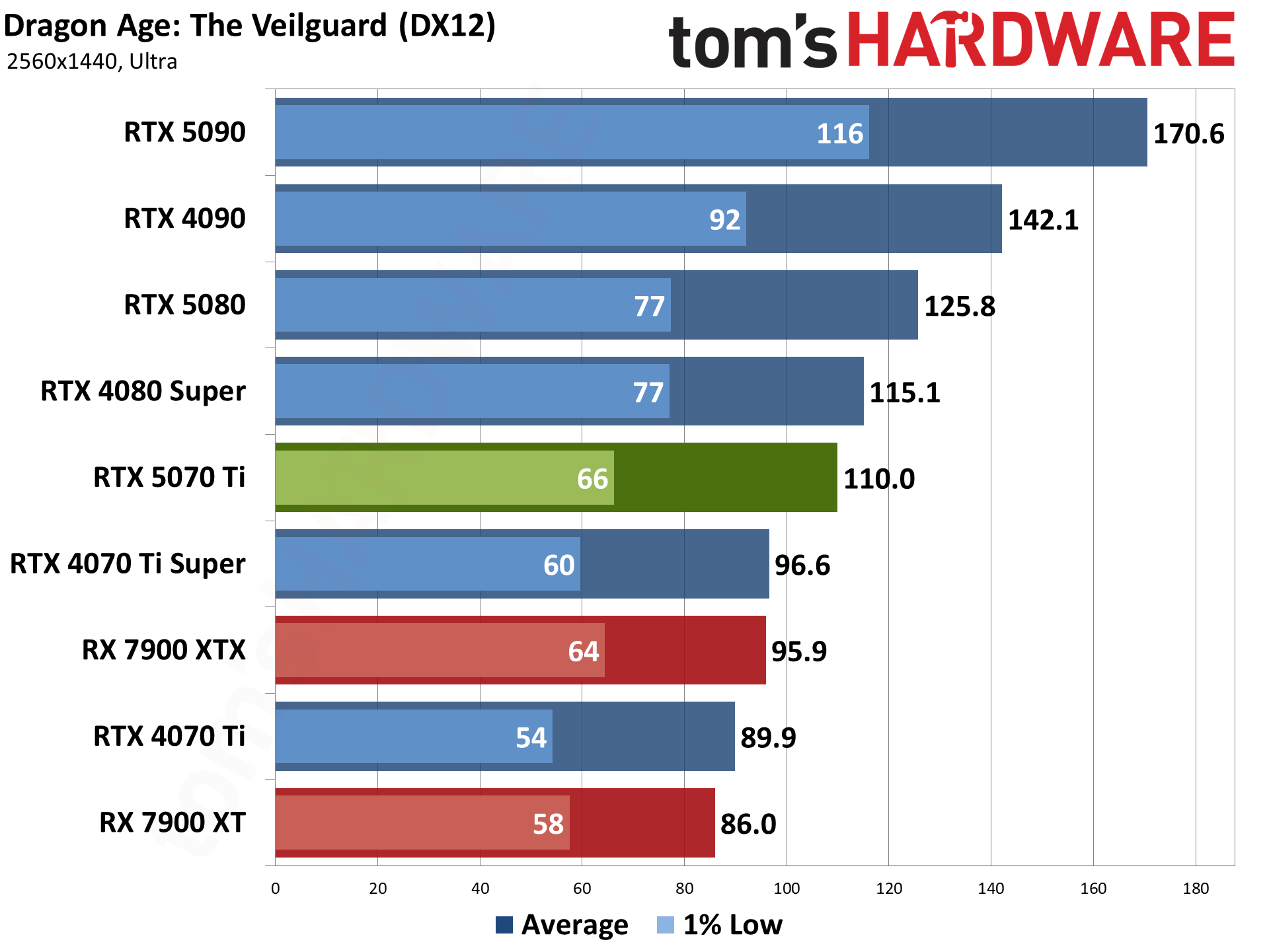

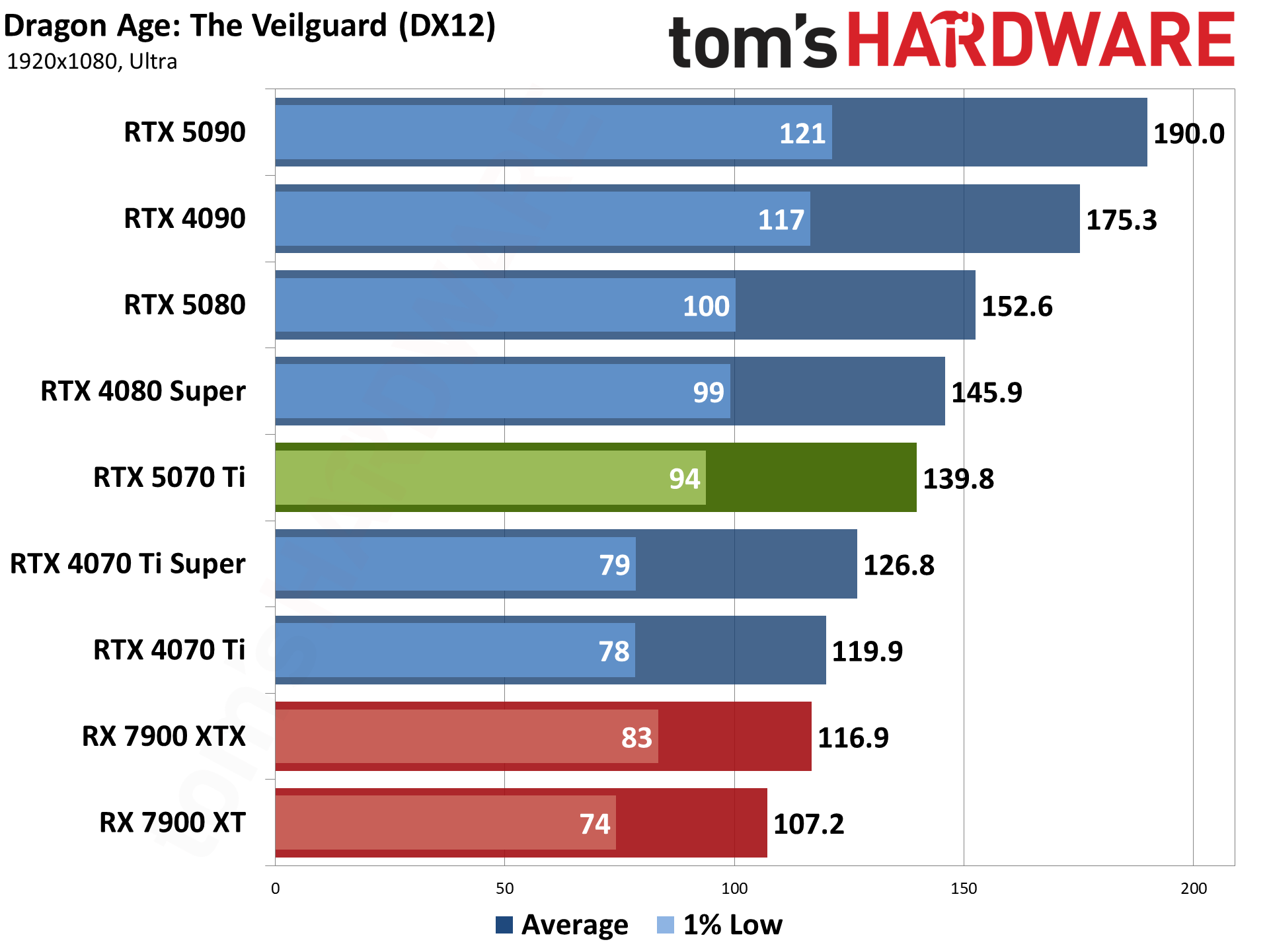

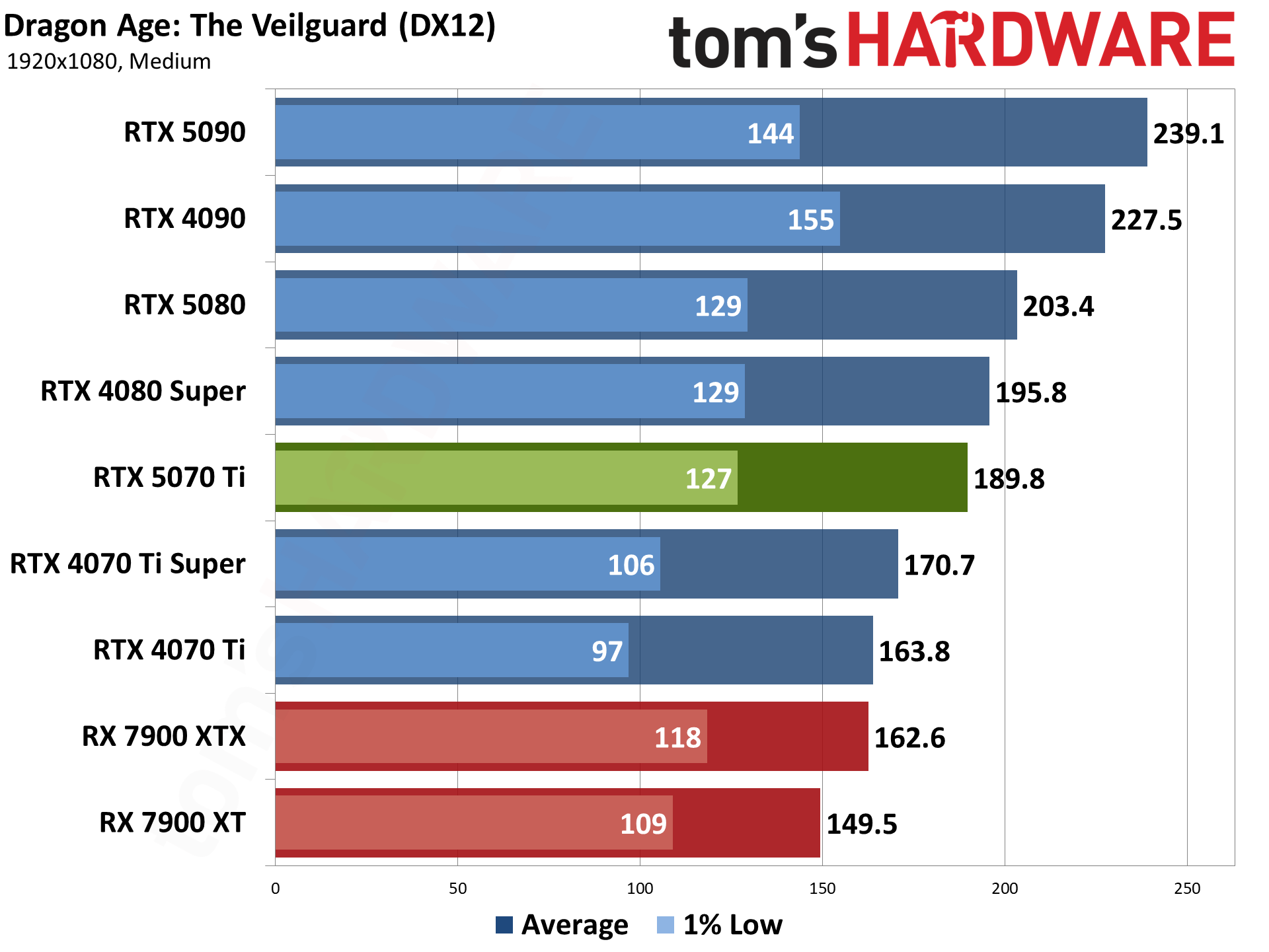

Dragon Age: The Veilguard uses the Frostbite engine and runs via the DX12 API. It's one of the newest games in my test suite, having launched this past Halloween. It's been well received, and in terms of visuals, I'd put it right up there with Unreal Engine 5 games, without some of the LOD pop-in that happens so frequently with UE5.

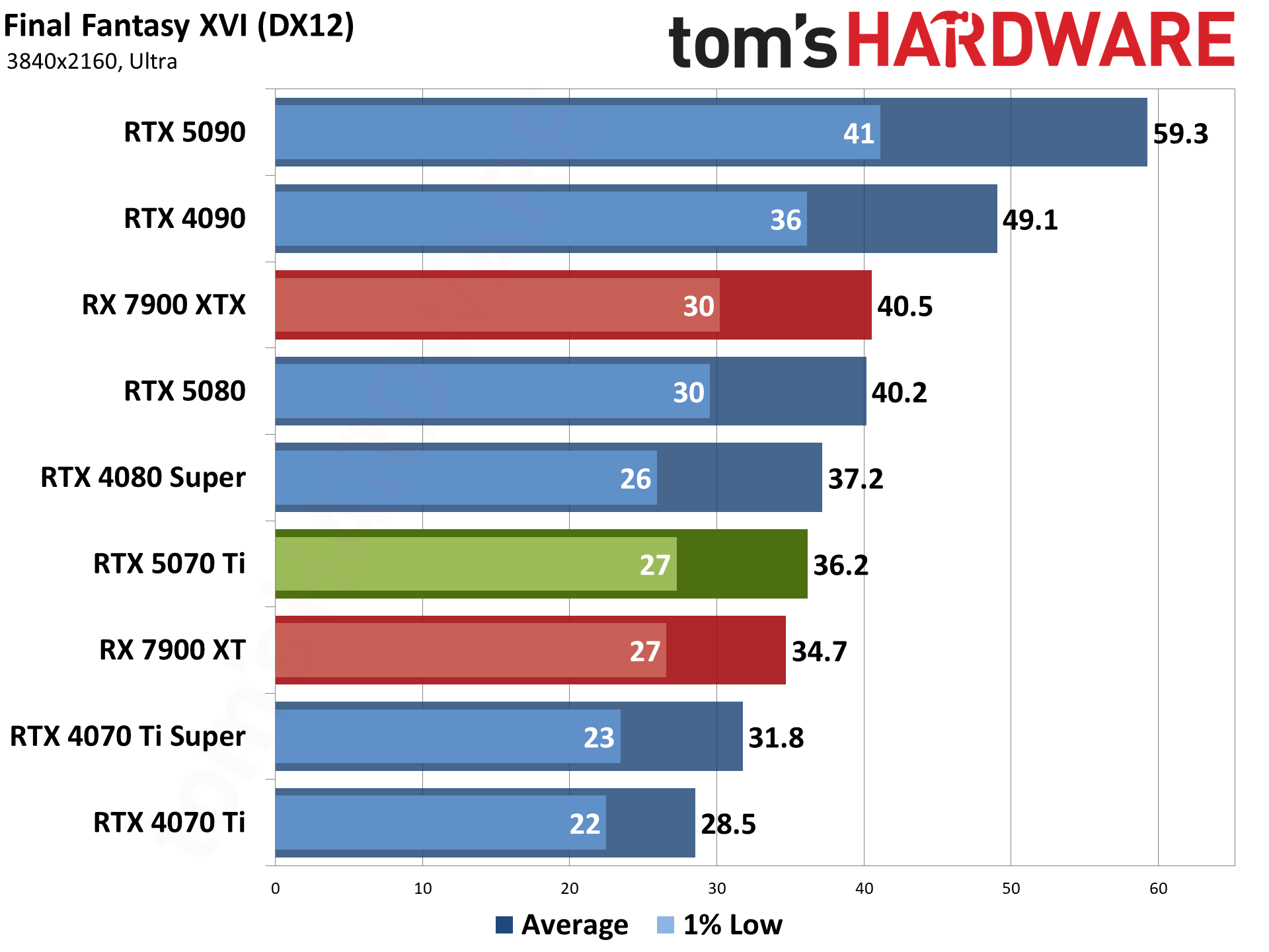

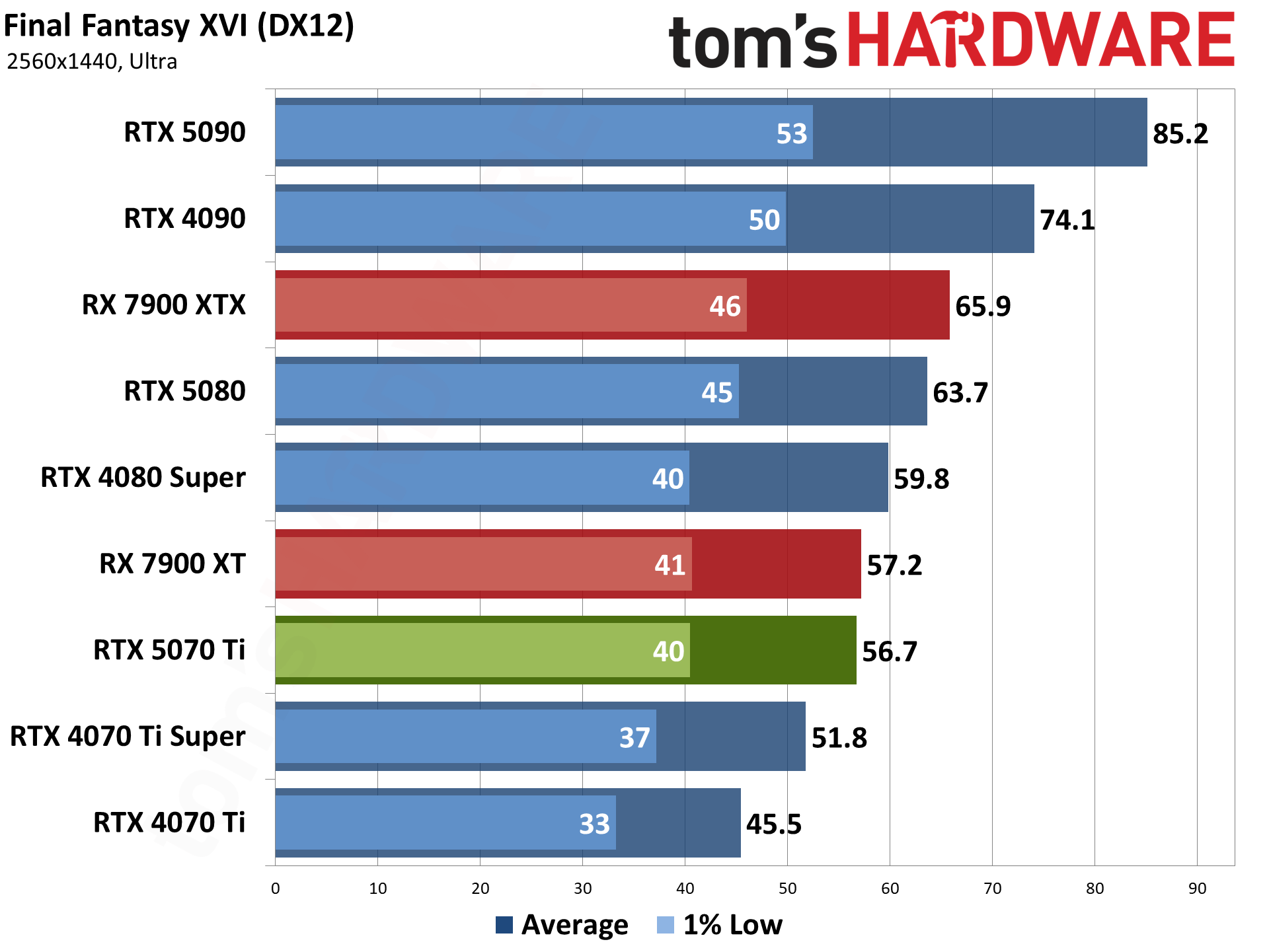

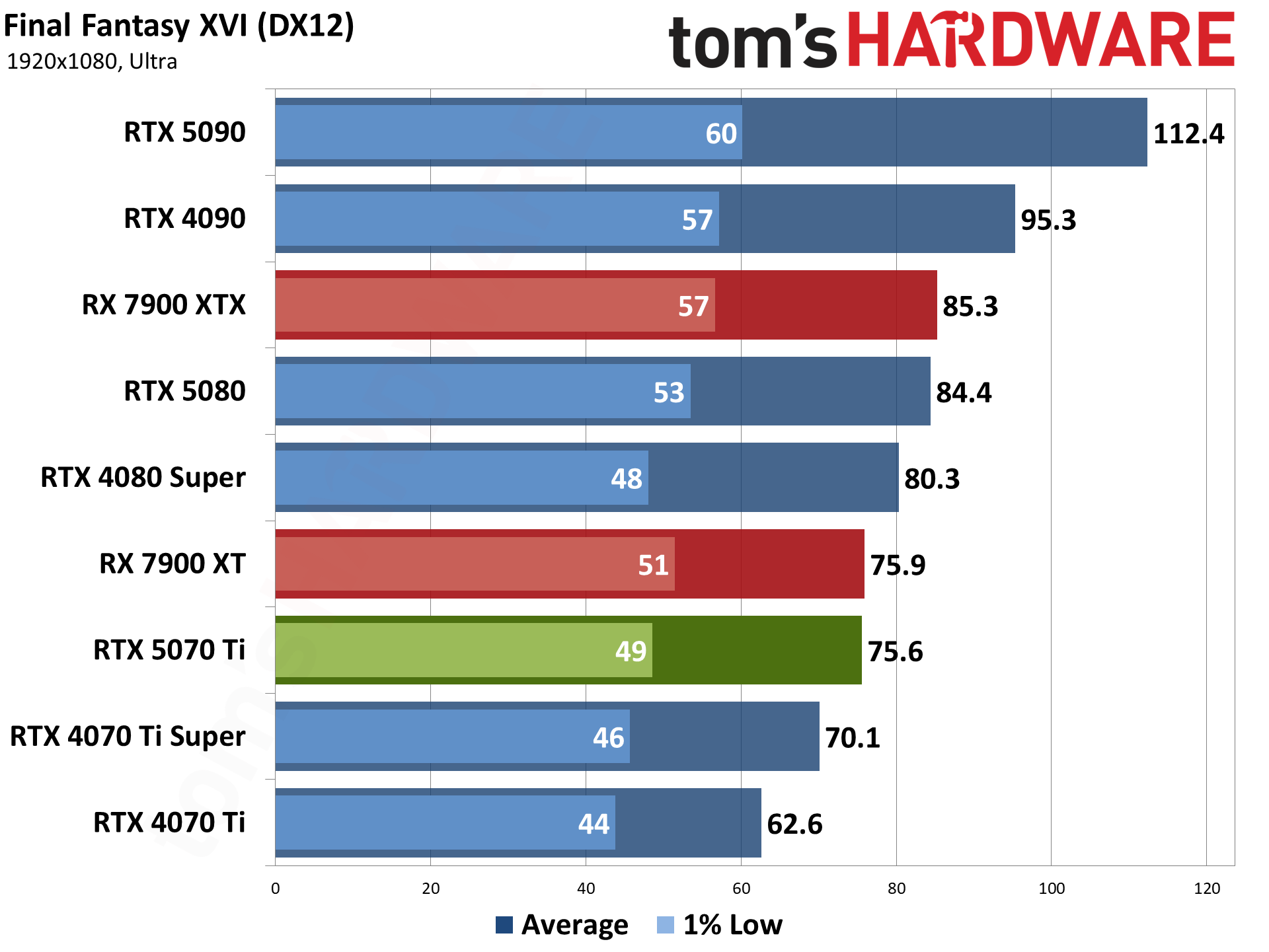

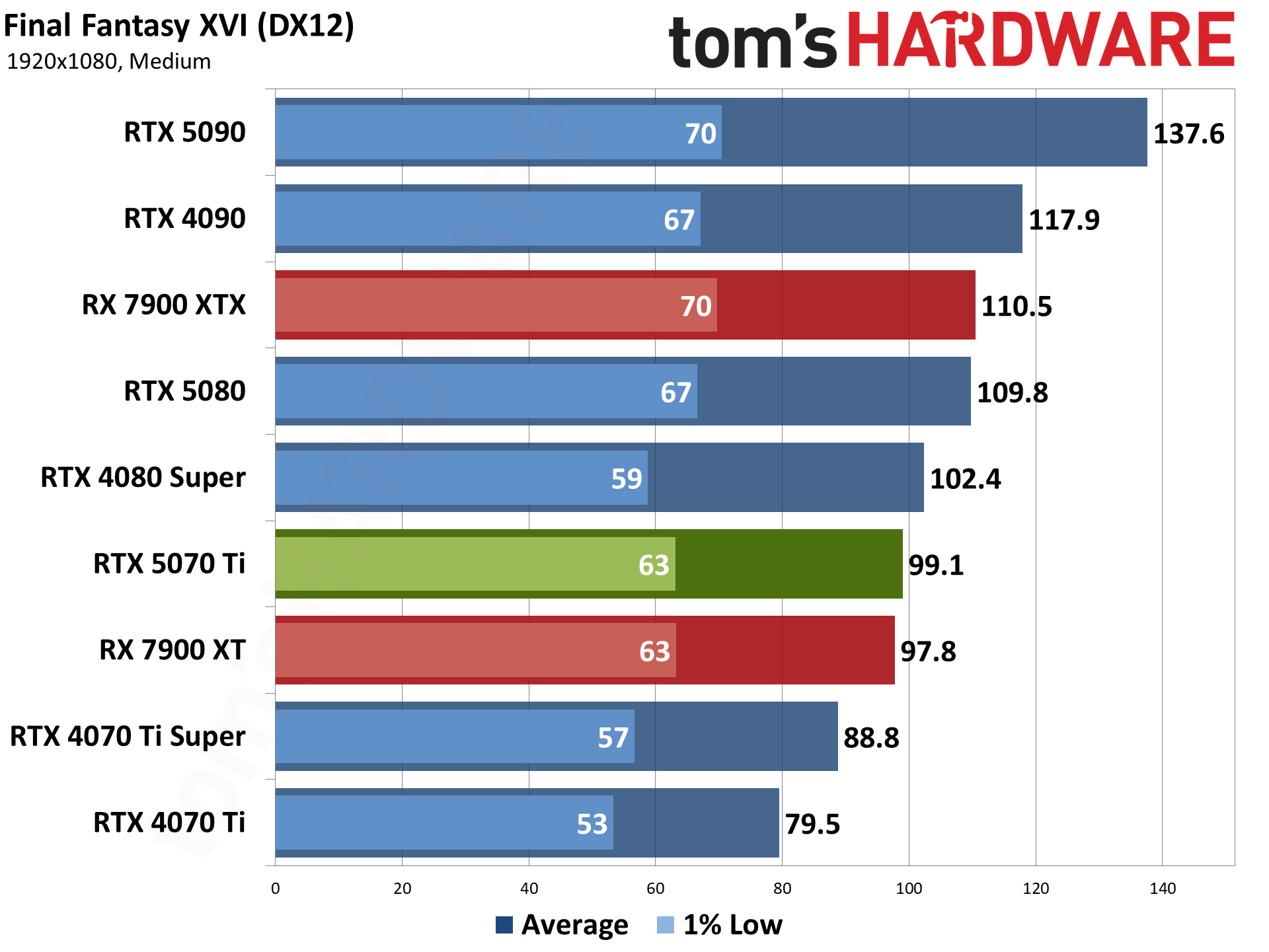

Final Fantasy XVI came out for the PS5 last year, but it only recently saw a Windows release. It's also either incredibly demanding or quite poorly optimized (or both), but it does tend to be very GPU limited. Our test sequence consists of running a set path around the town of Lost Wing.


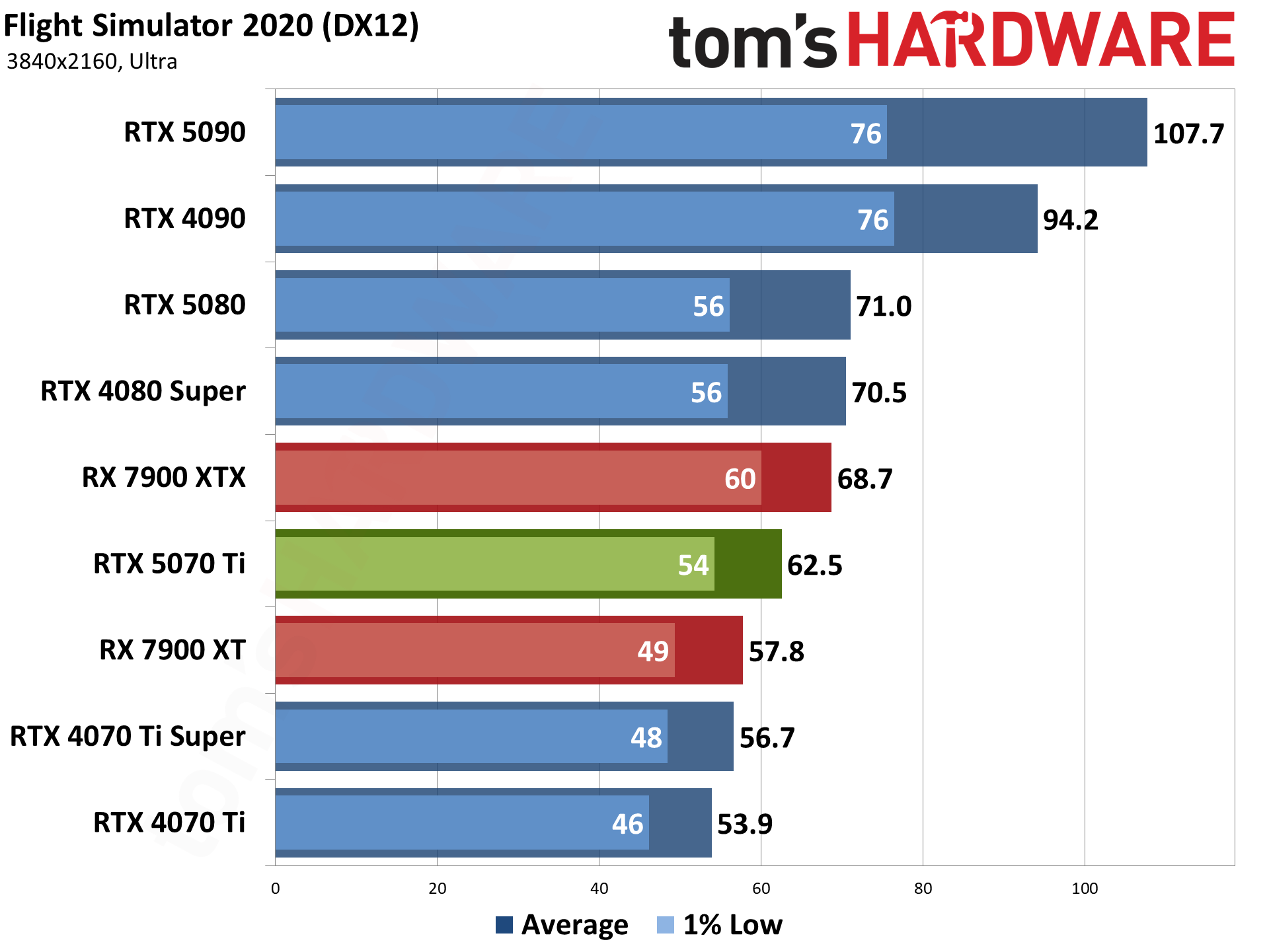

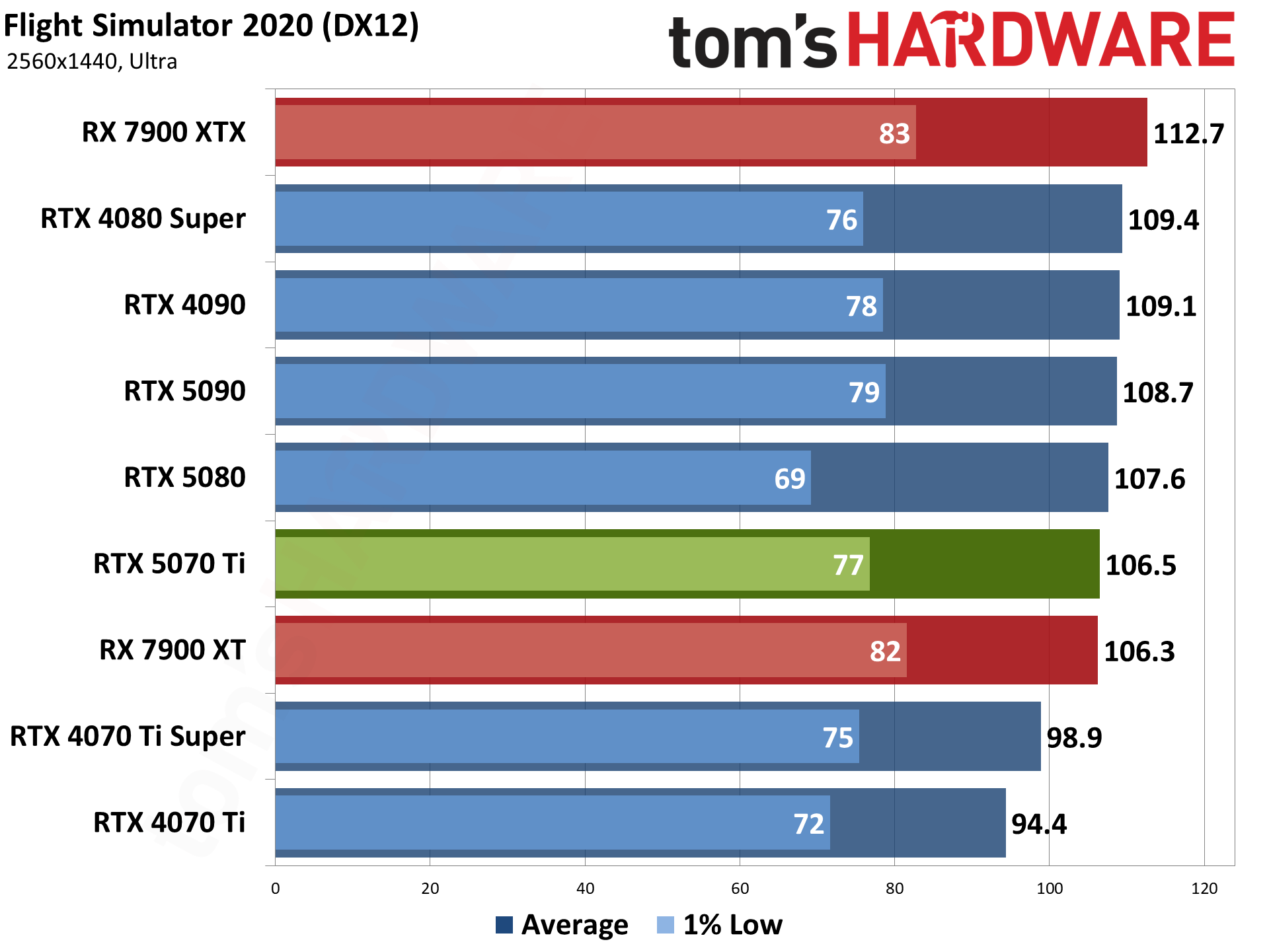

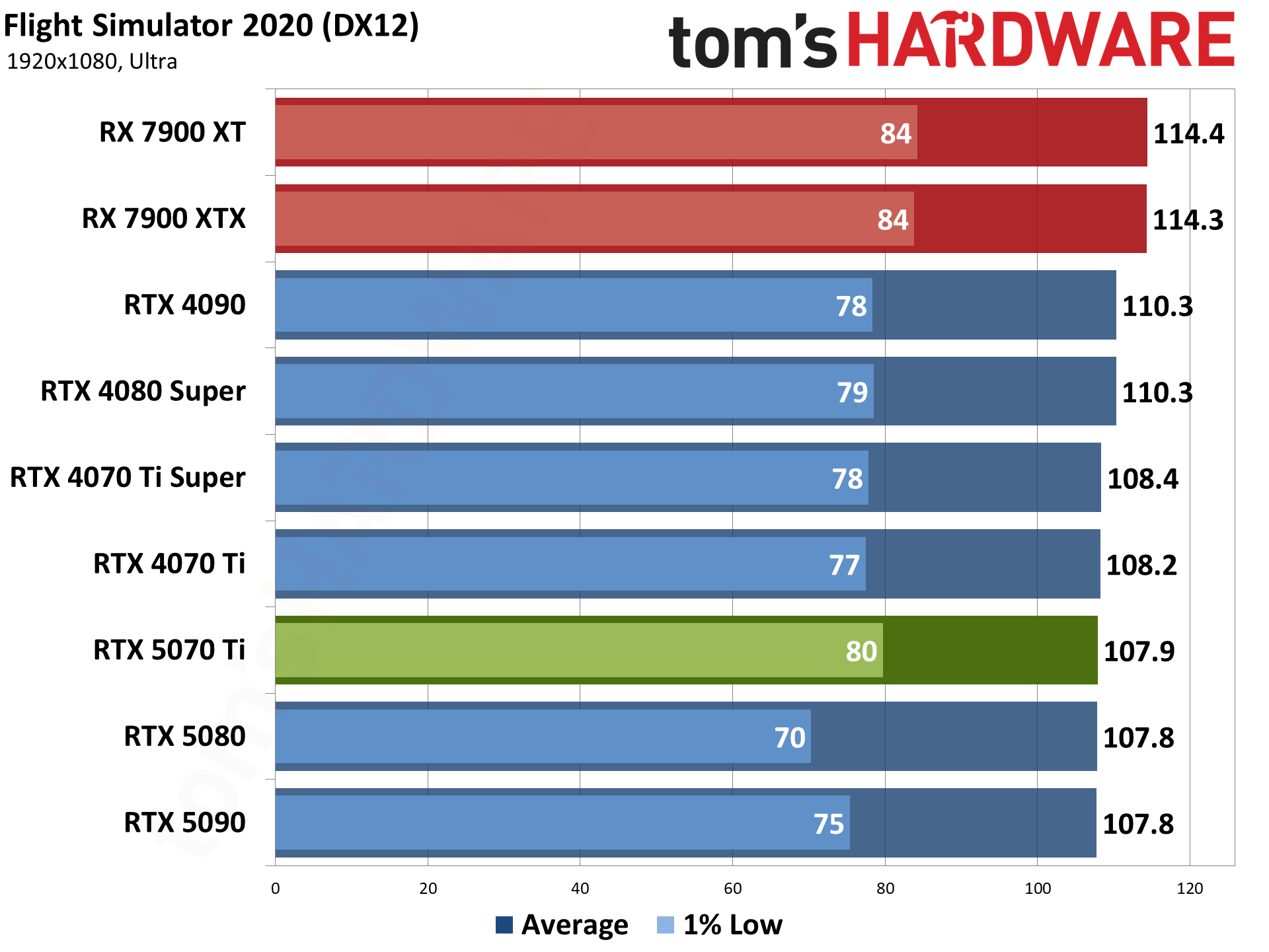

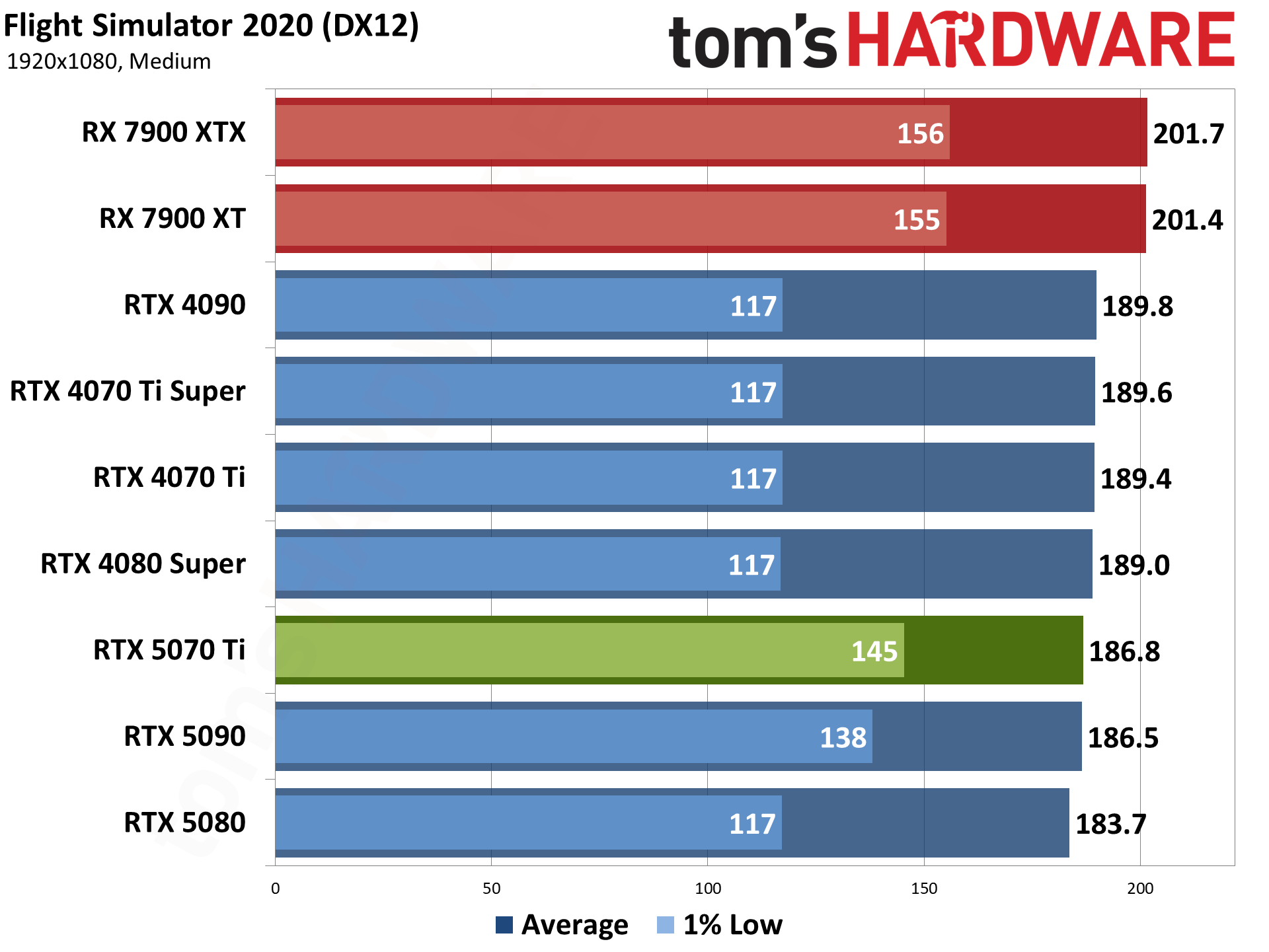

We've been using Flight Simulator 2020 for several years, and the new 2024 release follows below. But that release is so new that we also wanted to keep the original around a bit longer as a point of reference. We've now switched to the 'beta' (eternal beta) DX12 path for our testing, as it's required for DLSS frame generation, even if it runs a bit slower on Nvidia GPUs.

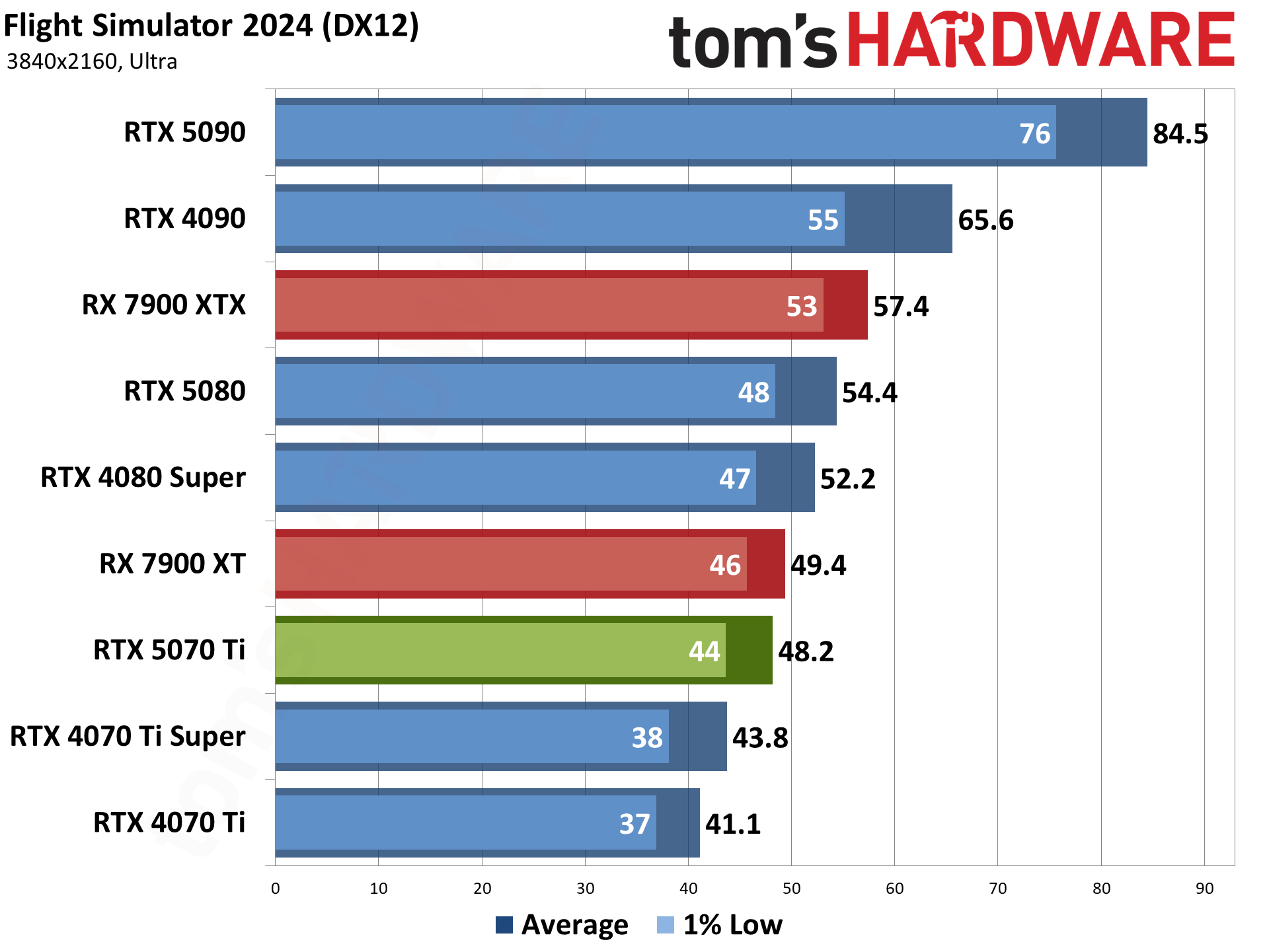

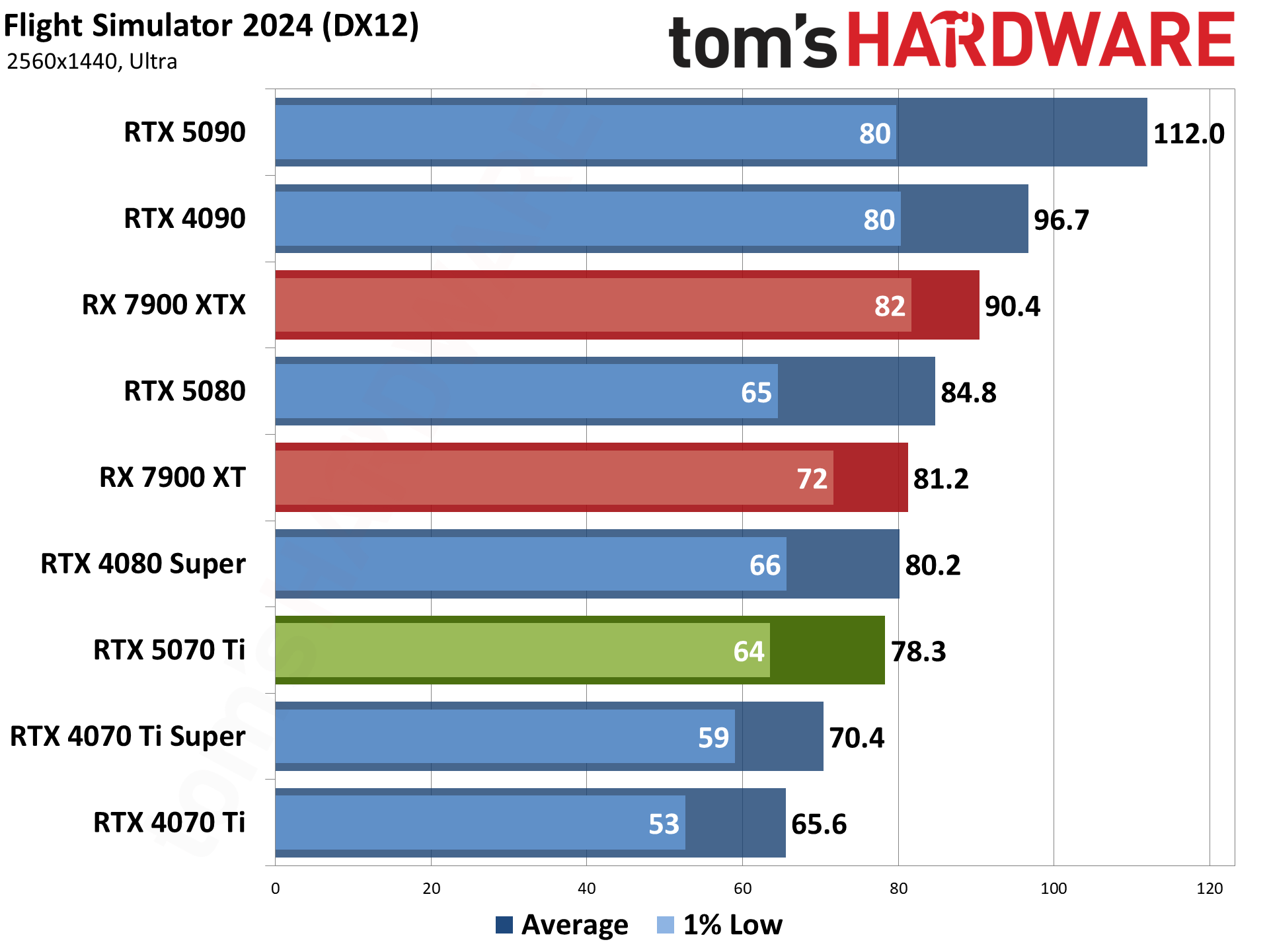

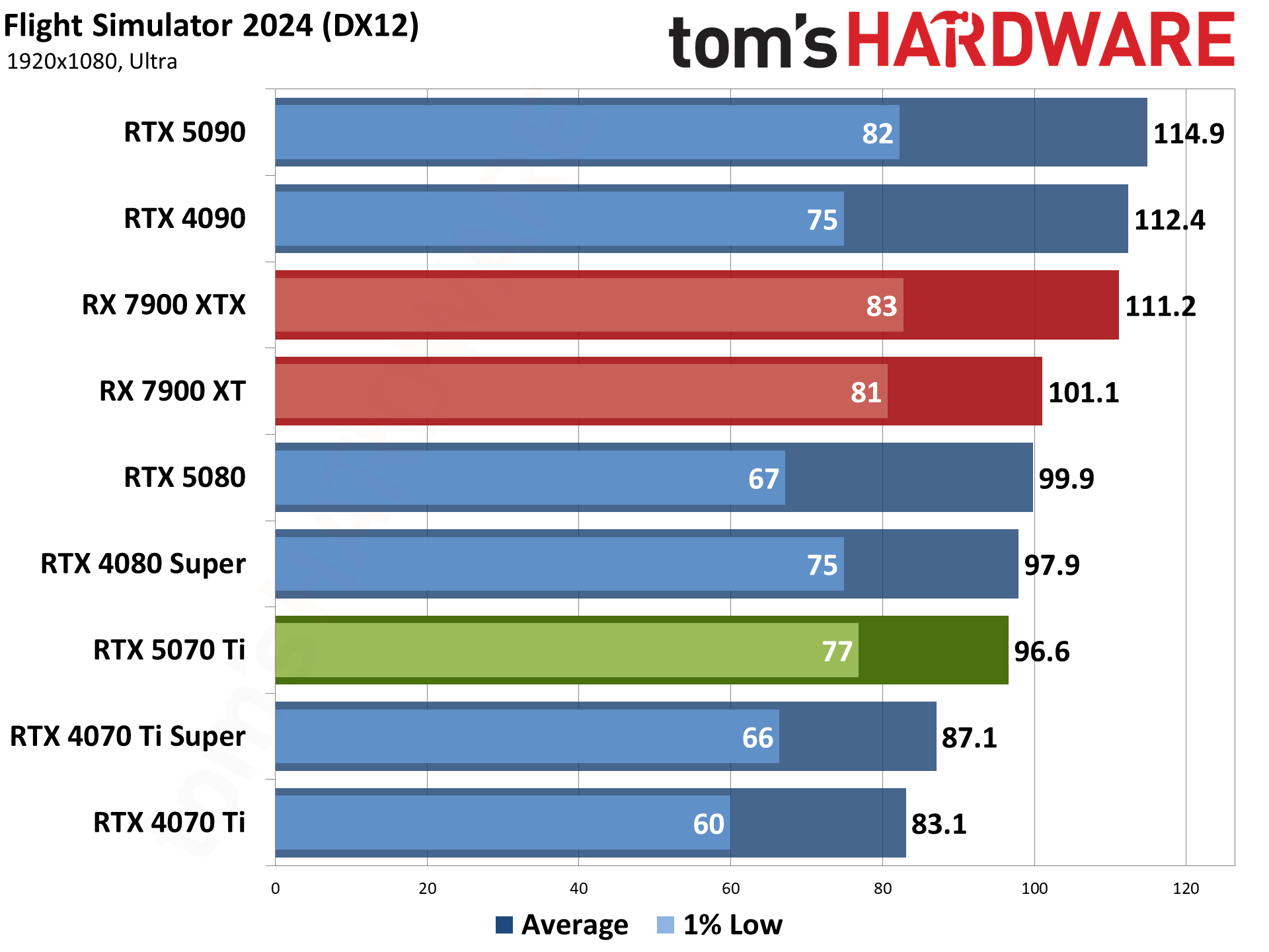

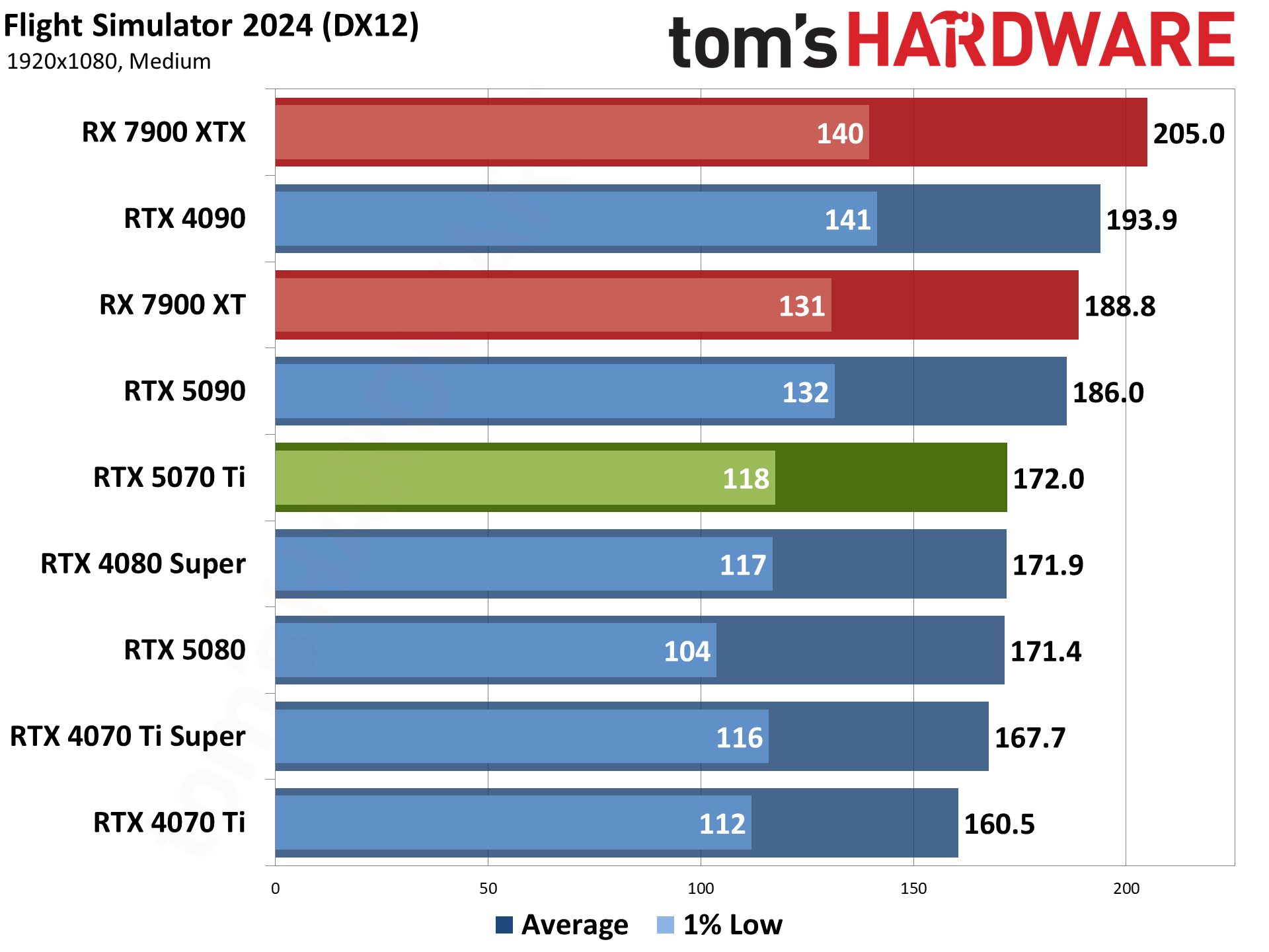

Flight Simulator 2024 is the latest release of the storied franchise, and it's even more demanding than the above 2020 release — with some differences in what sort of hardware it seems to like best. Where the 2020 version really appreciated AMD's X3D processors, the 2024 release tends to be more forgiving to Intel CPUs, thanks to improved DirectX 12 code (DX11 is no longer supported).

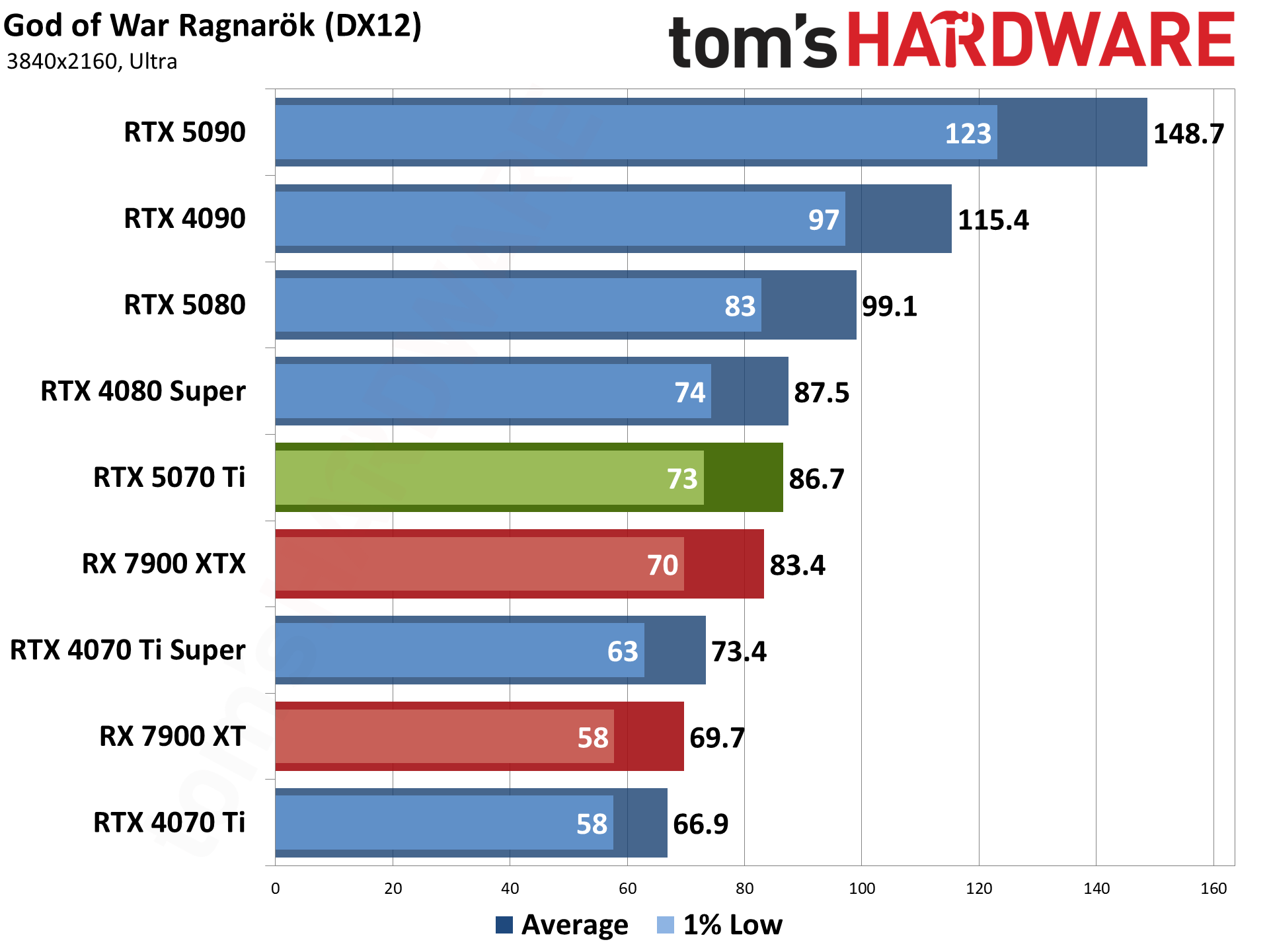

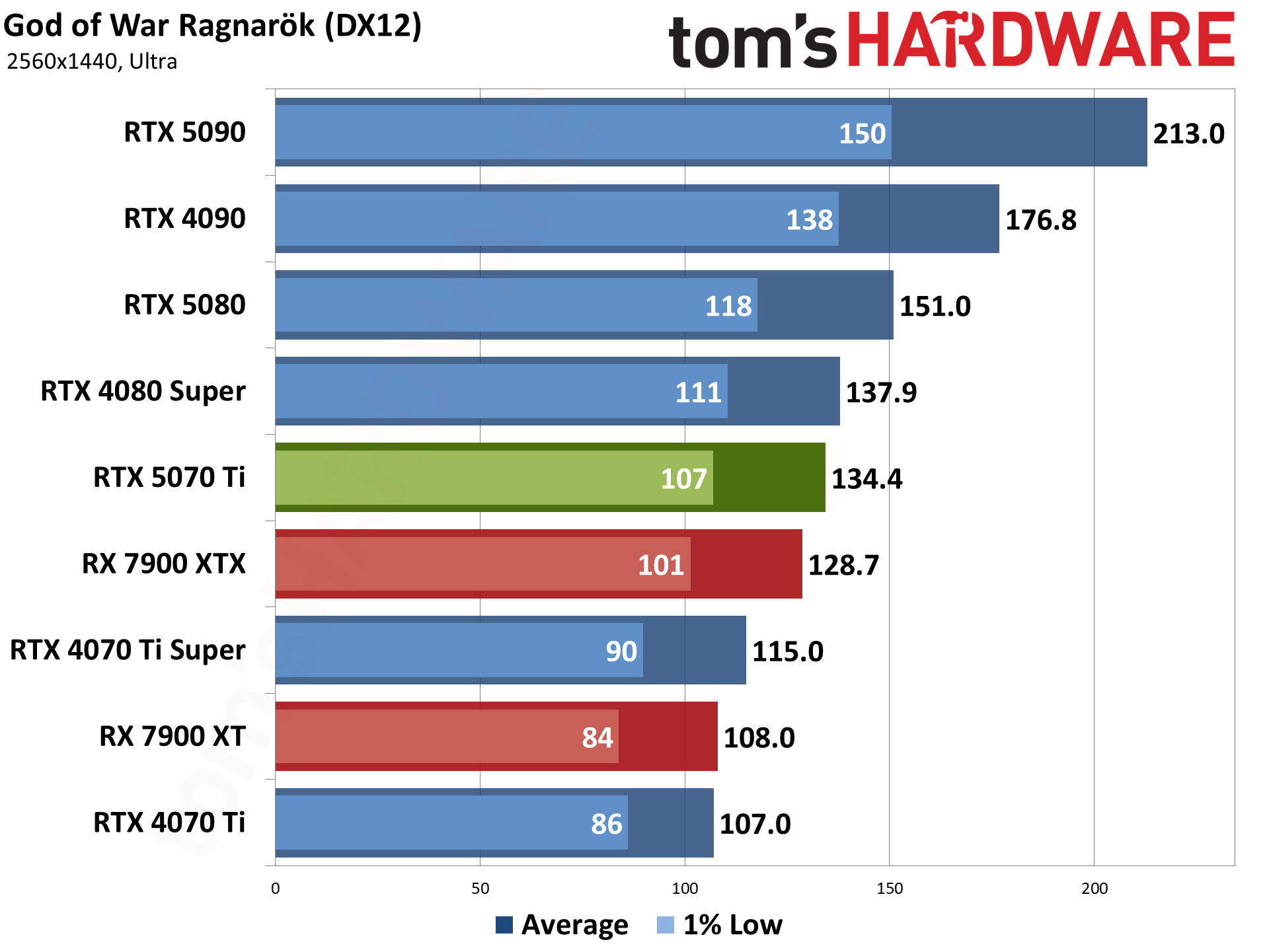

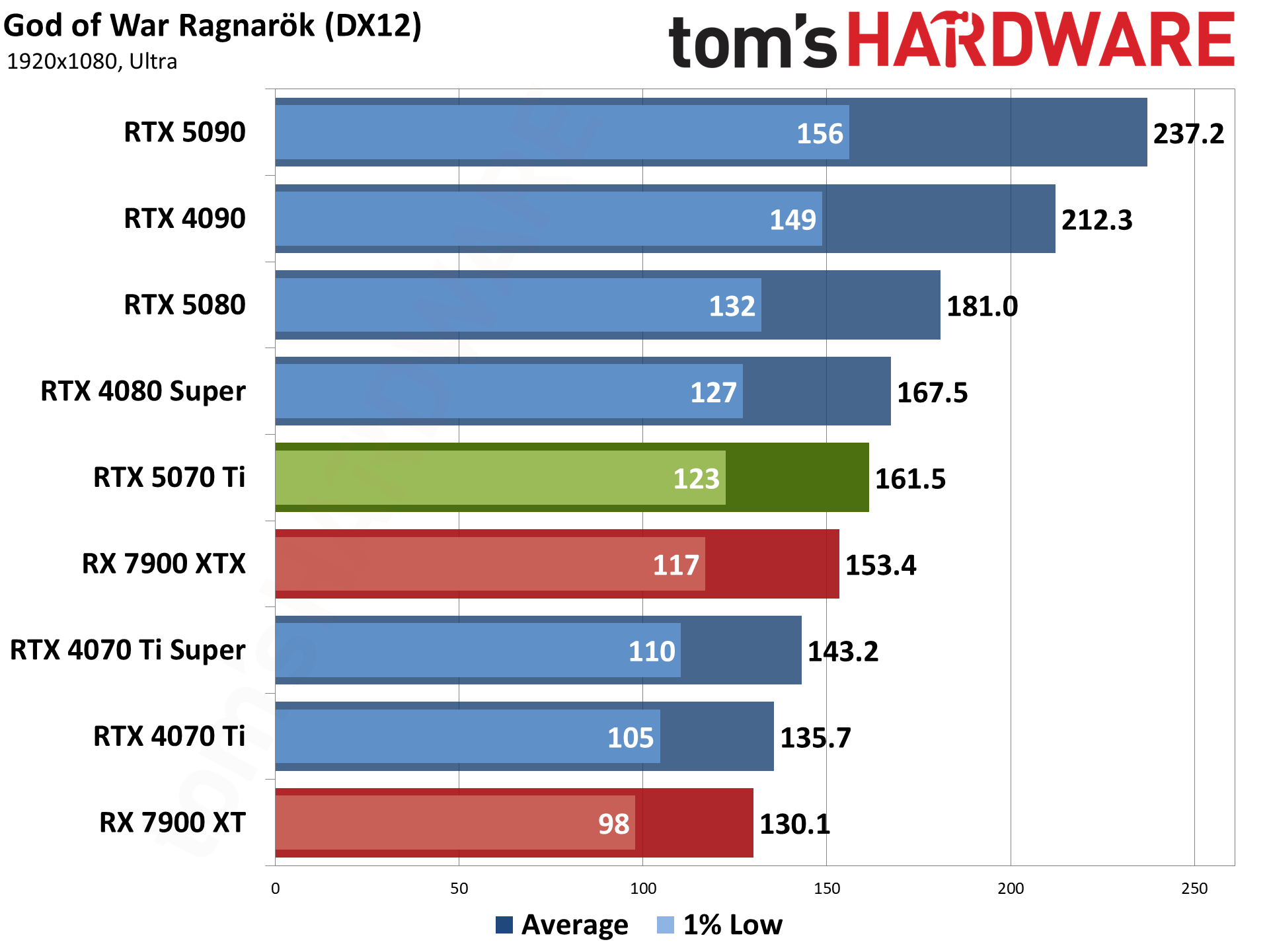

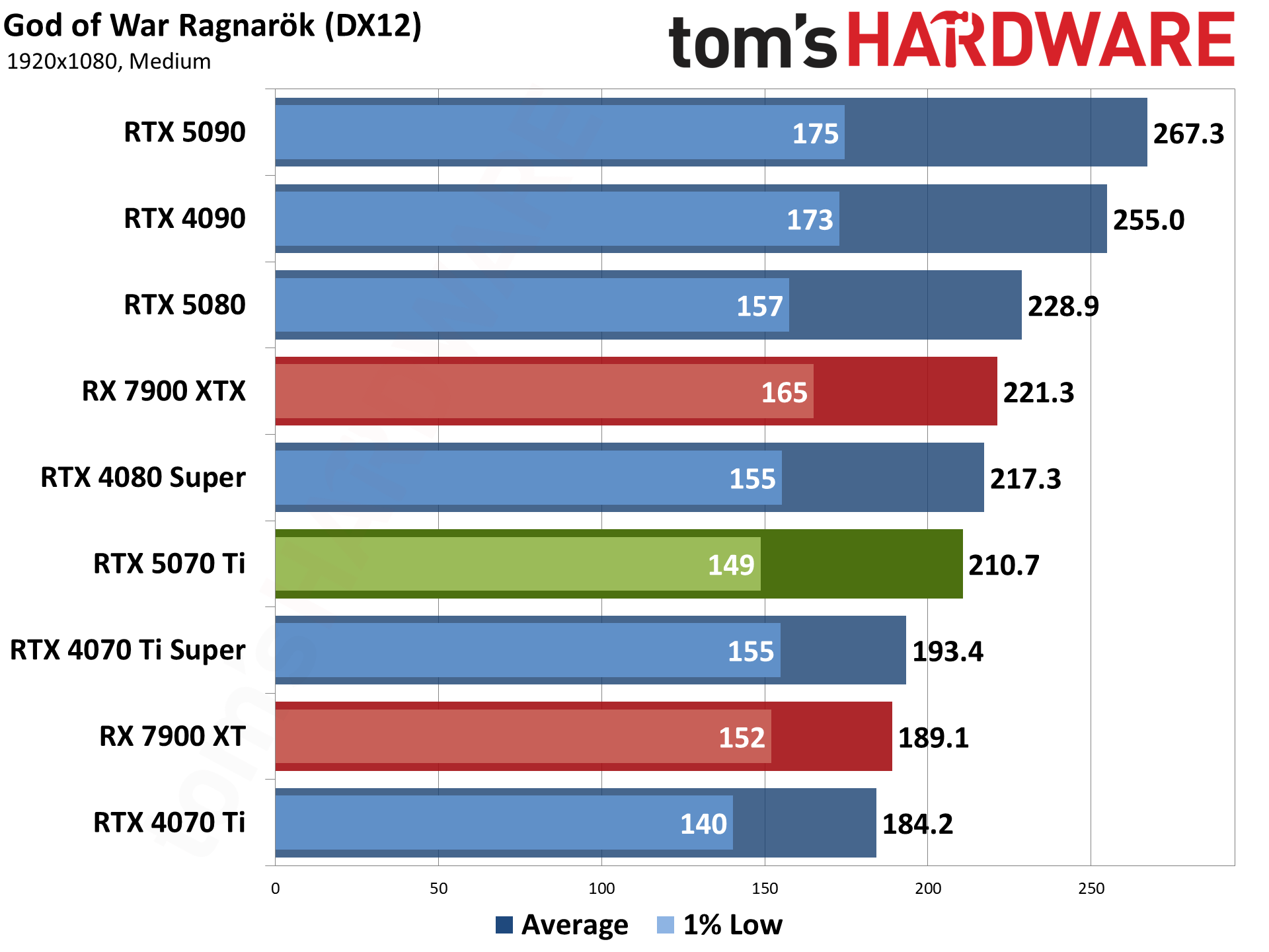

God of War Ragnarök released for the PlayStation two years ago and only recently saw a Windows version. It's AMD promoted, but it also supports DLSS and XeSS alongside FSR3. We ran around the village of Svartalfheim, which is one of the most demanding areas in the game that we've encountered.

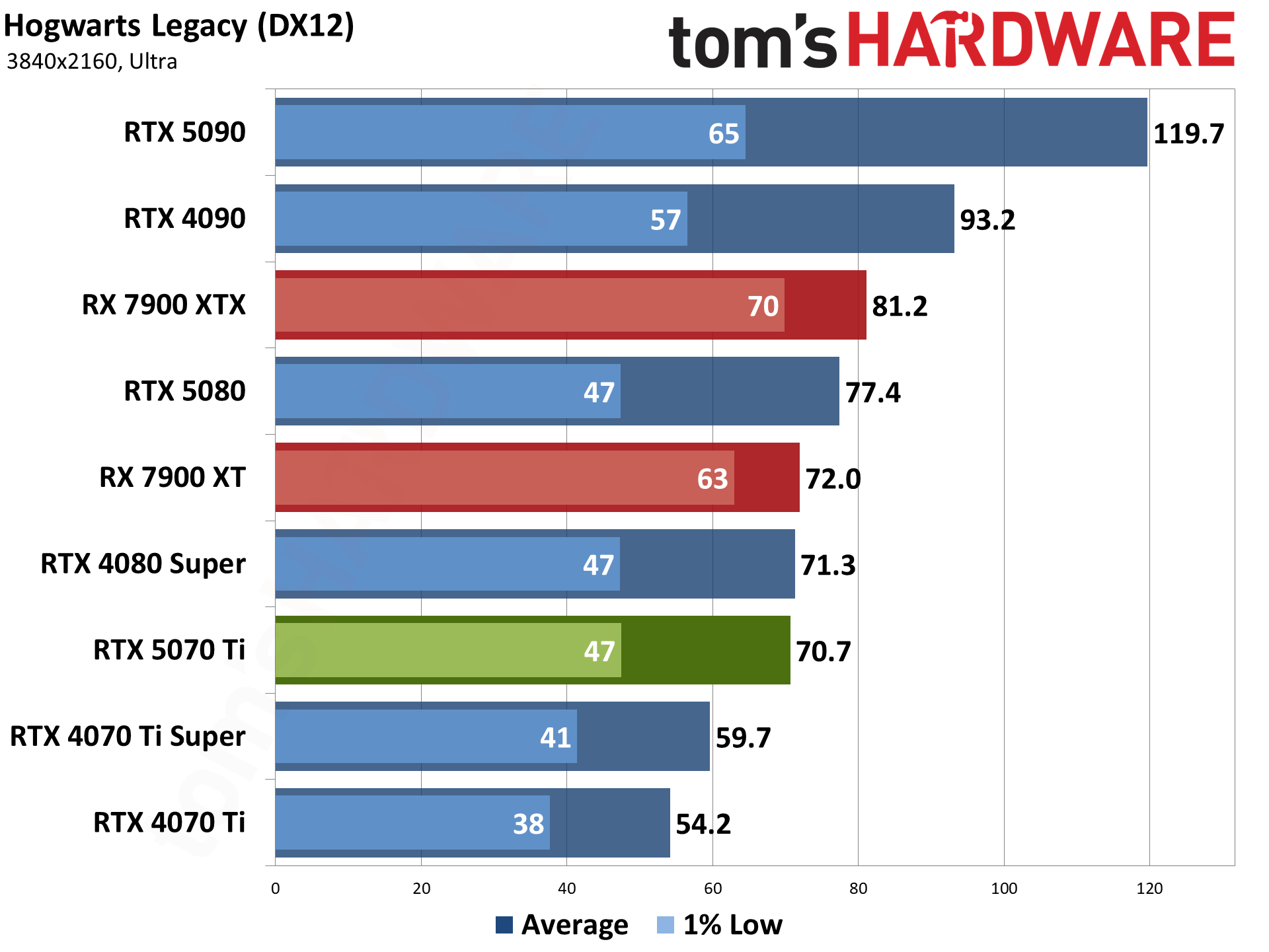

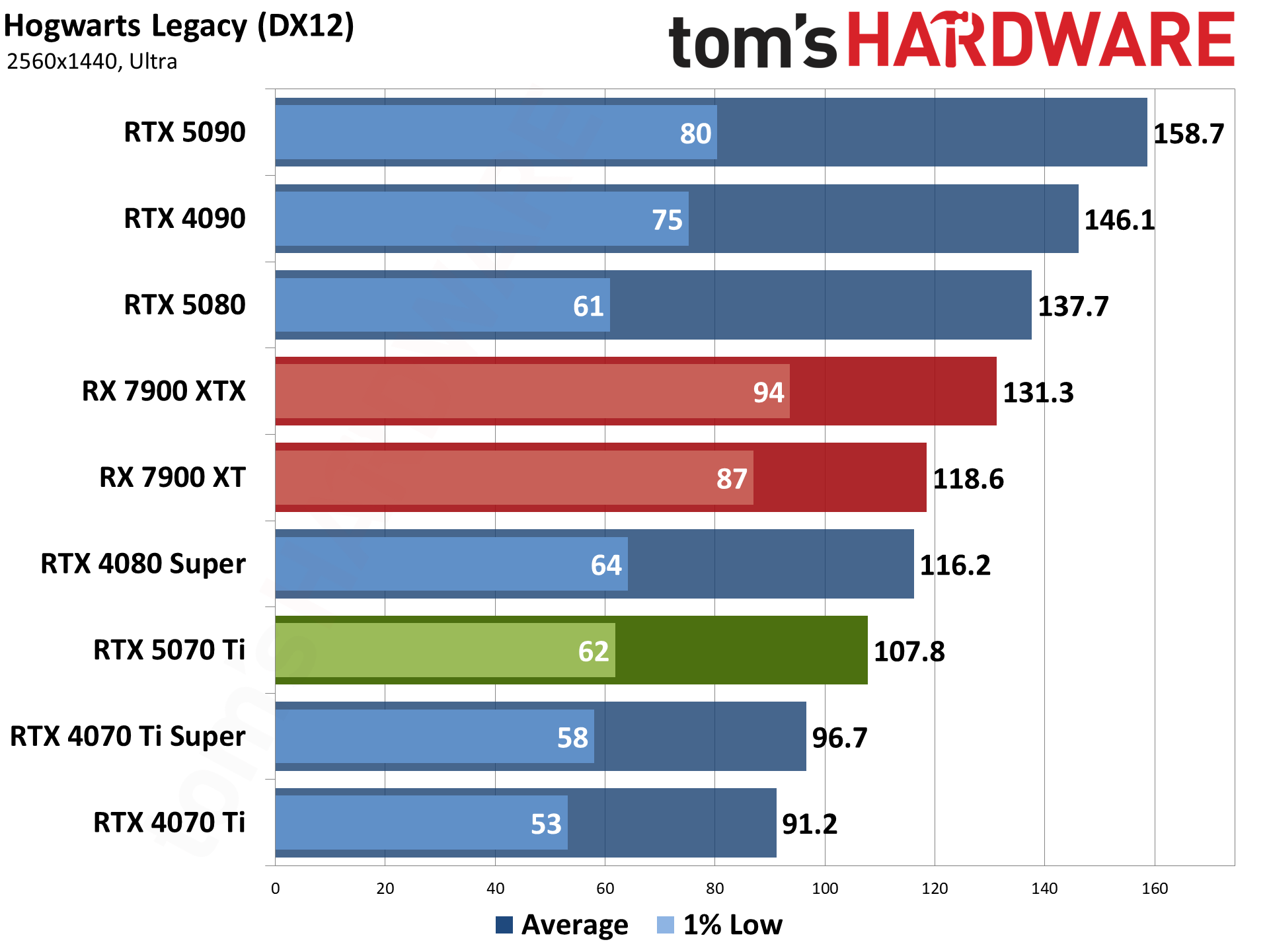

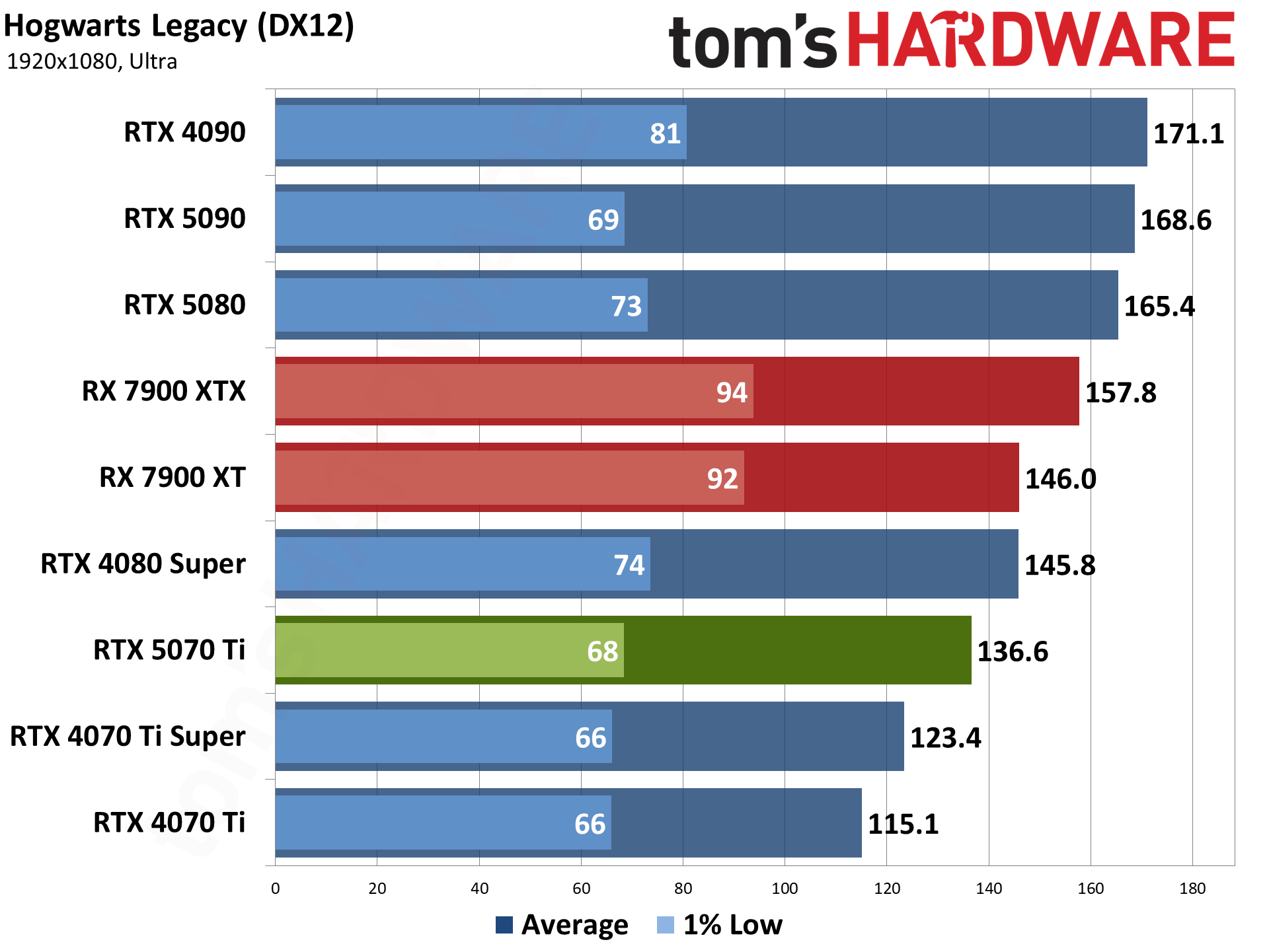

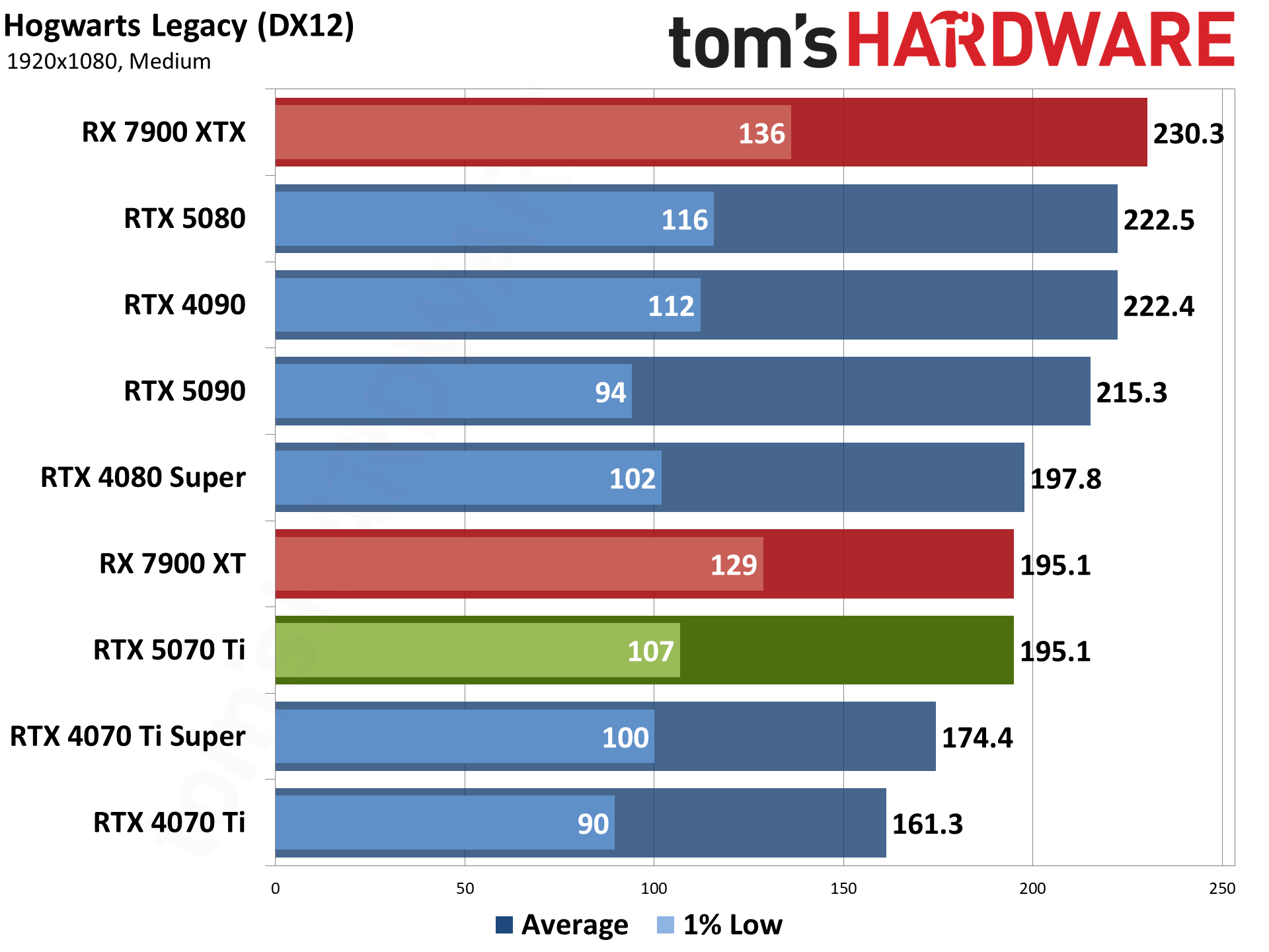

Hogwarts Legacy came out in early 2023, and it uses Unreal Engine 4. Like so many Unreal Engine games, it can look quite nice but also has some performance issues with certain settings. Ray tracing, in particular, can bloat memory use, tank framerates, and cause hitching, so we've opted to test without ray tracing. (At maximum RT settings, our 9800X3D CPU only manages around 60 fps, even at 1080p with upscaling!)

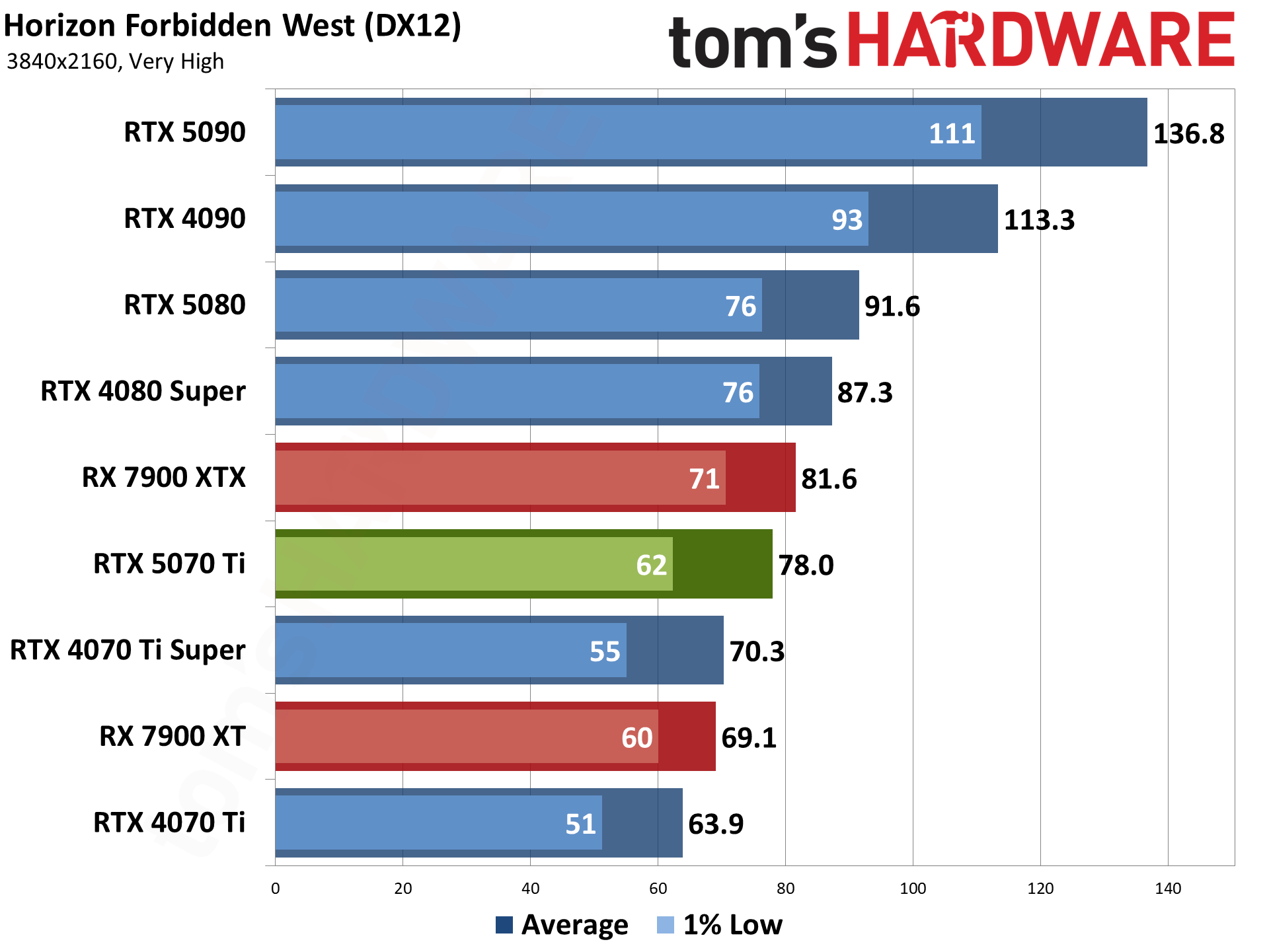

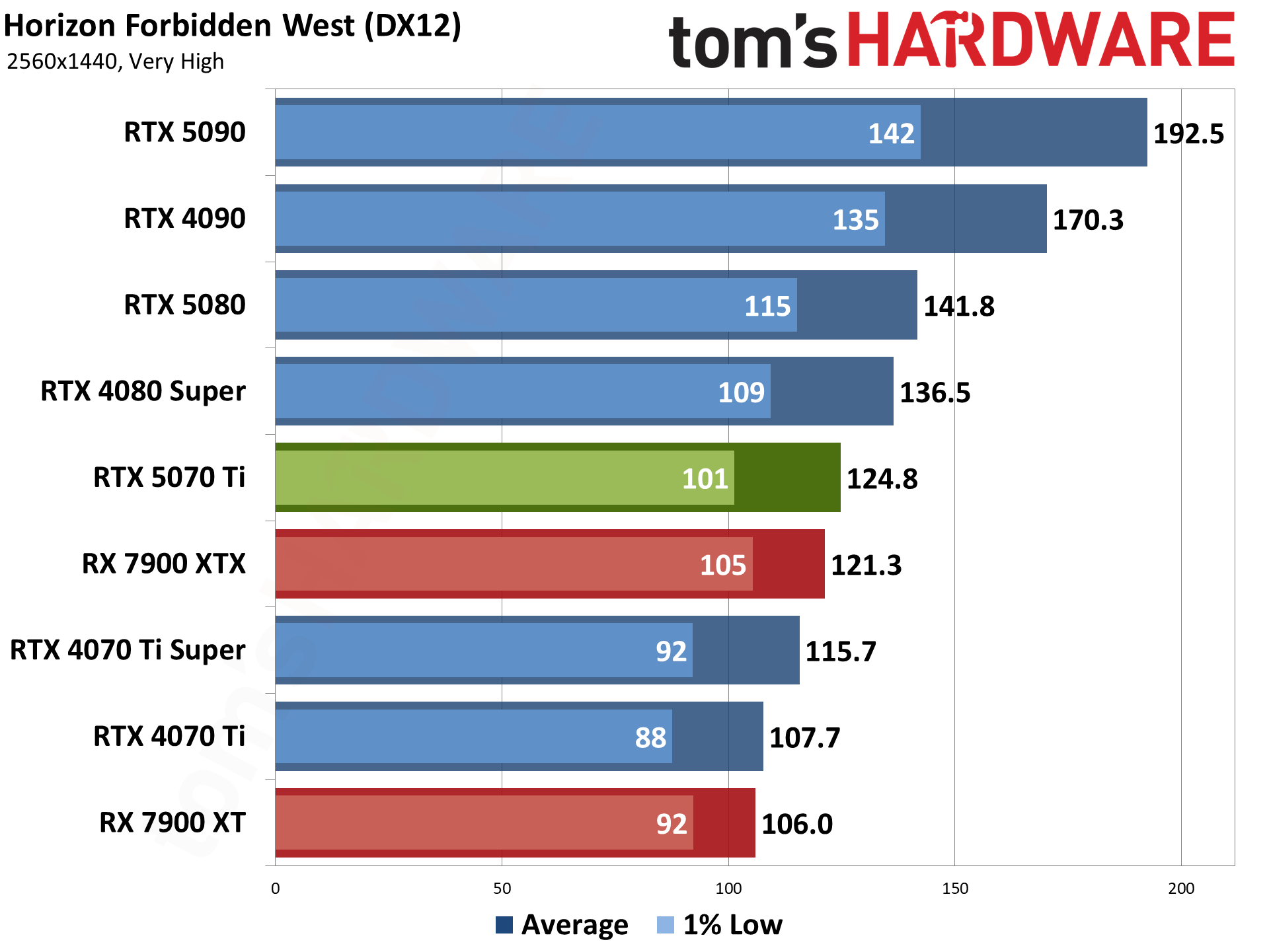

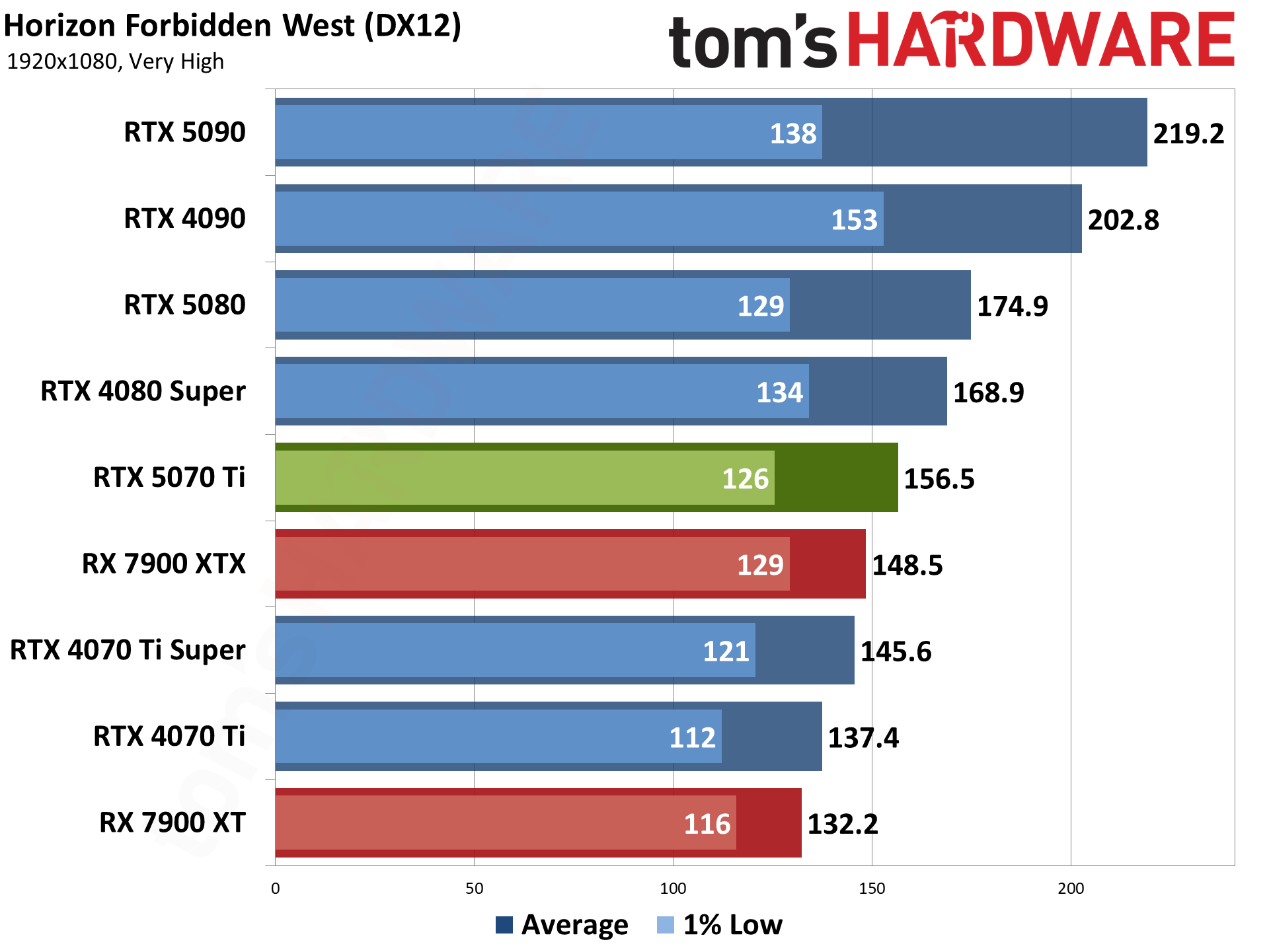

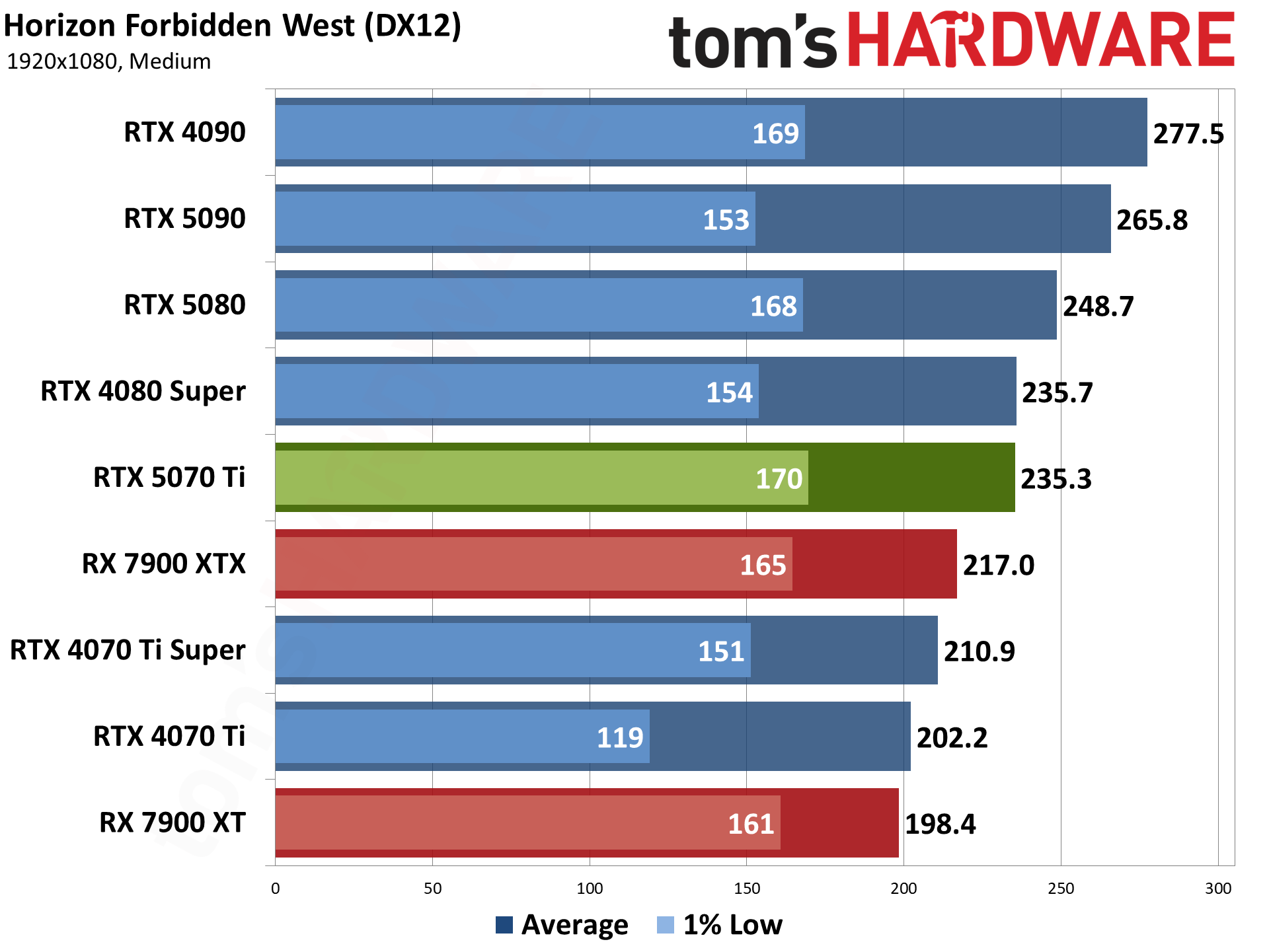

Horizon Forbidden West is another two-year-old PlayStation port, using the Decima engine. The graphics are good, though I've heard at least a few people say it looks worse than its predecessor, with excessive blurriness being a key complaint. But after using Horizon Zero Dawn for a few years, it felt like a good time to replace it.

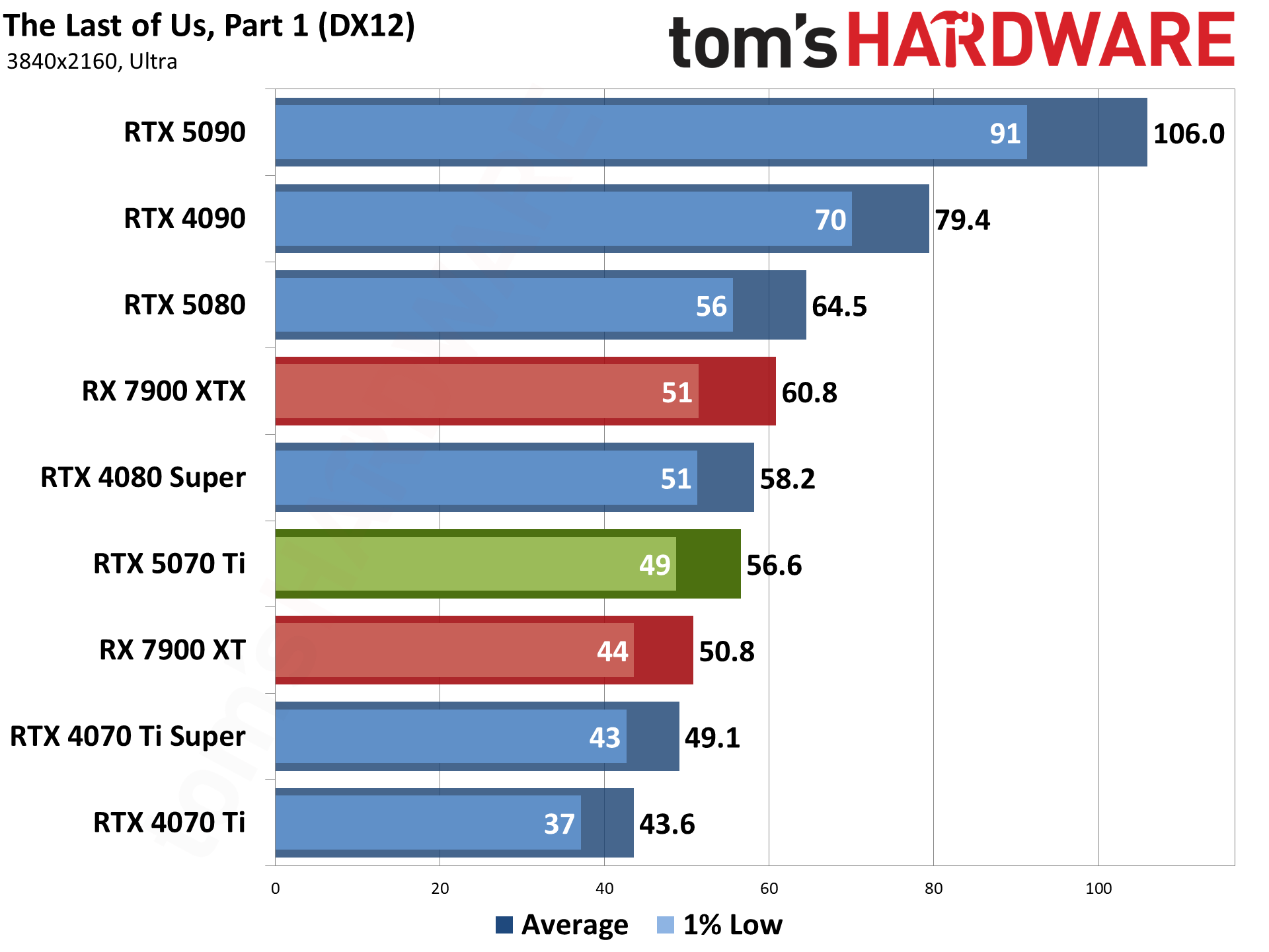

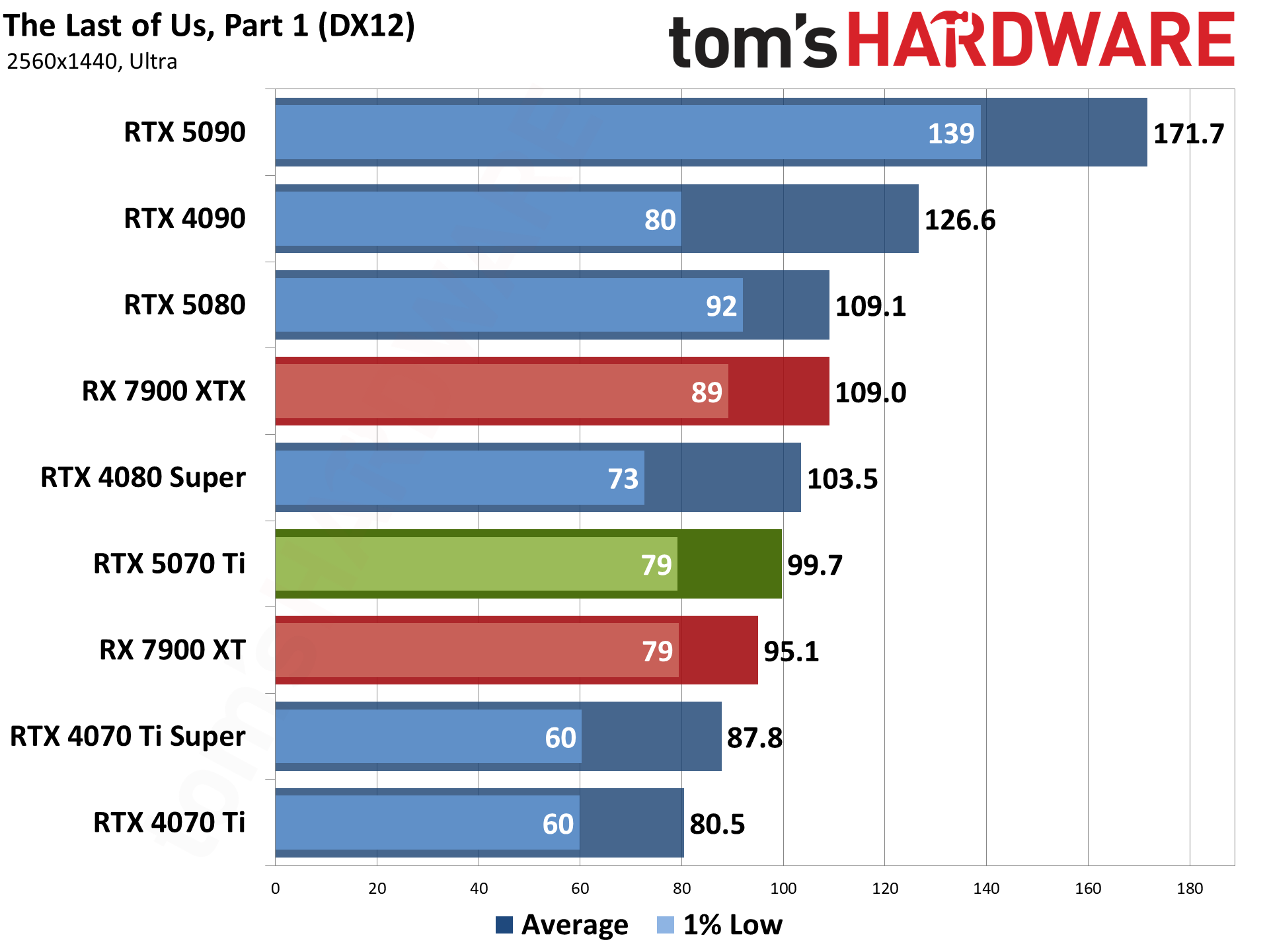

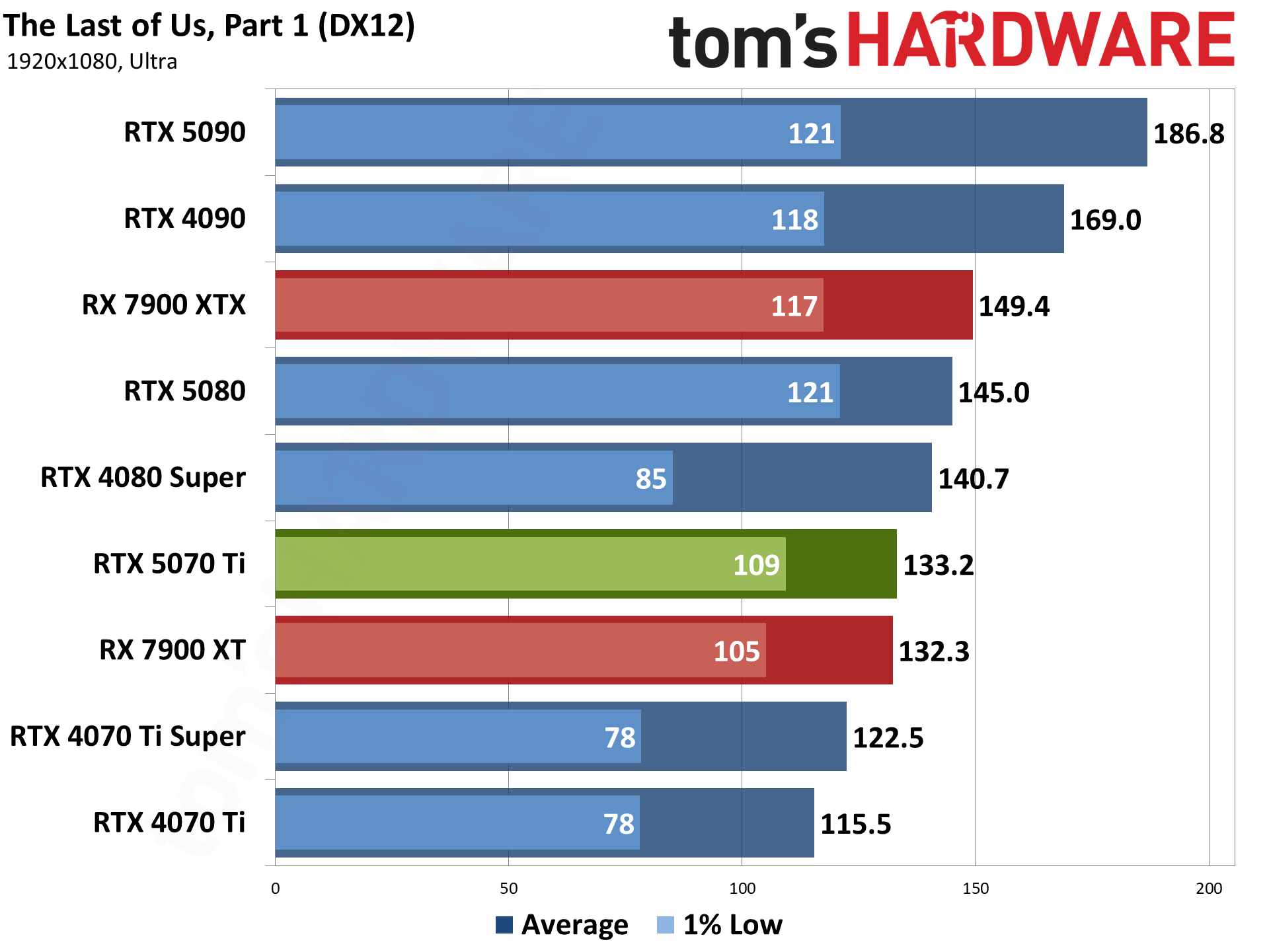

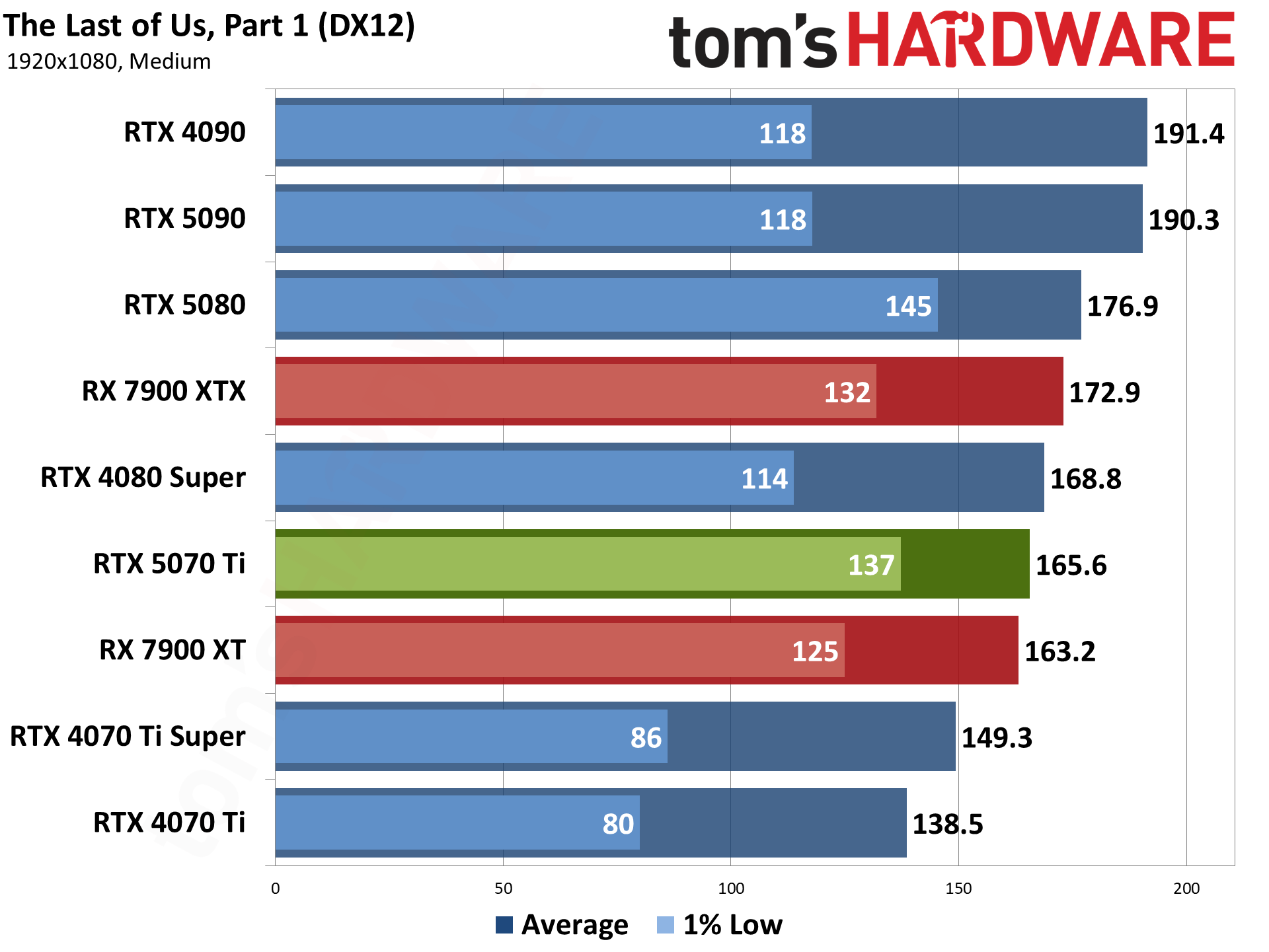

The Last of Us, Part 1 is another PlayStation port, though it's been out on PC for about 20 months now. It's also an AMD-promoted game and really hits the VRAM hard at higher-quality settings. In general, cards with 16GB or more of memory do just fine.

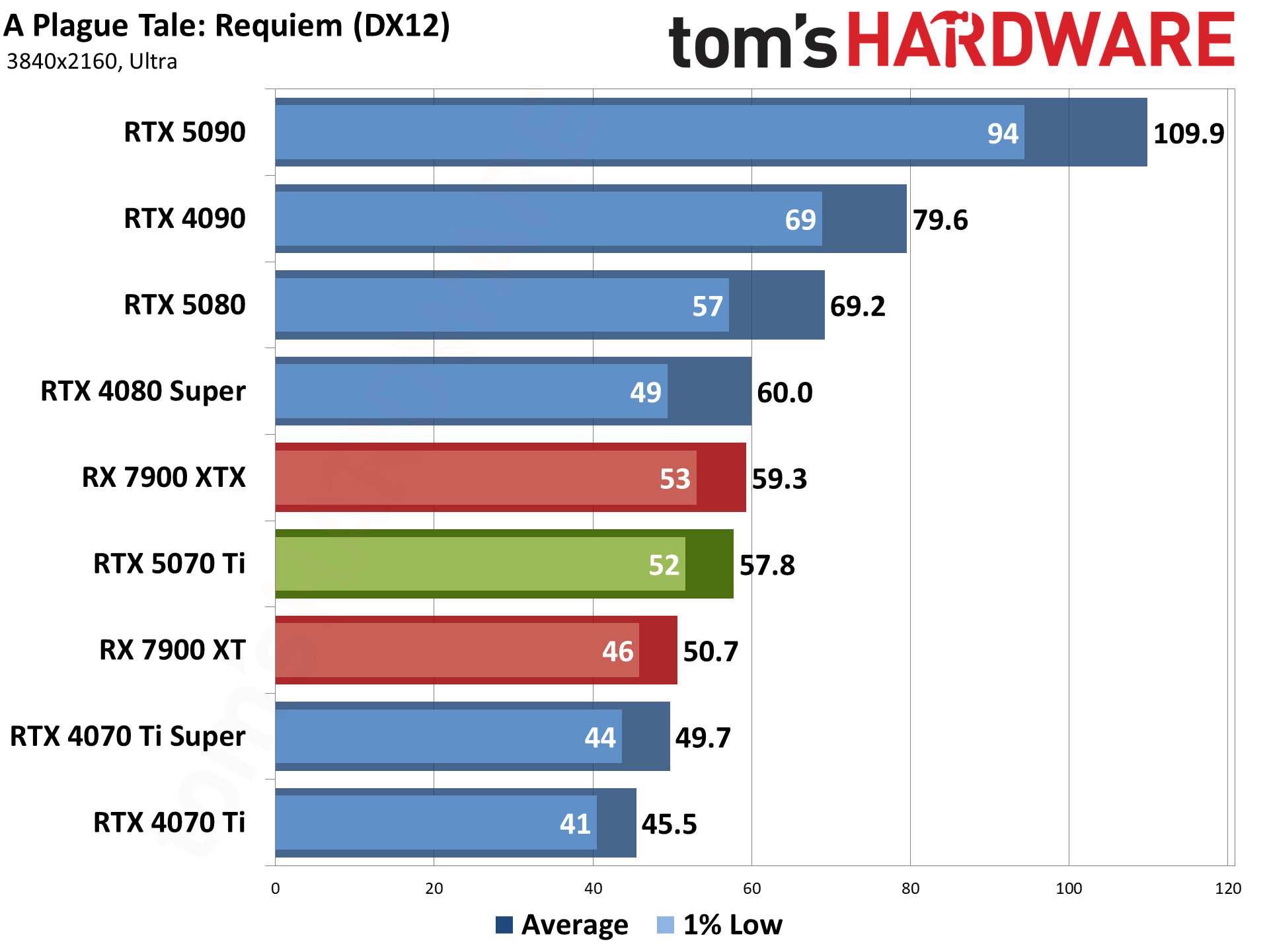

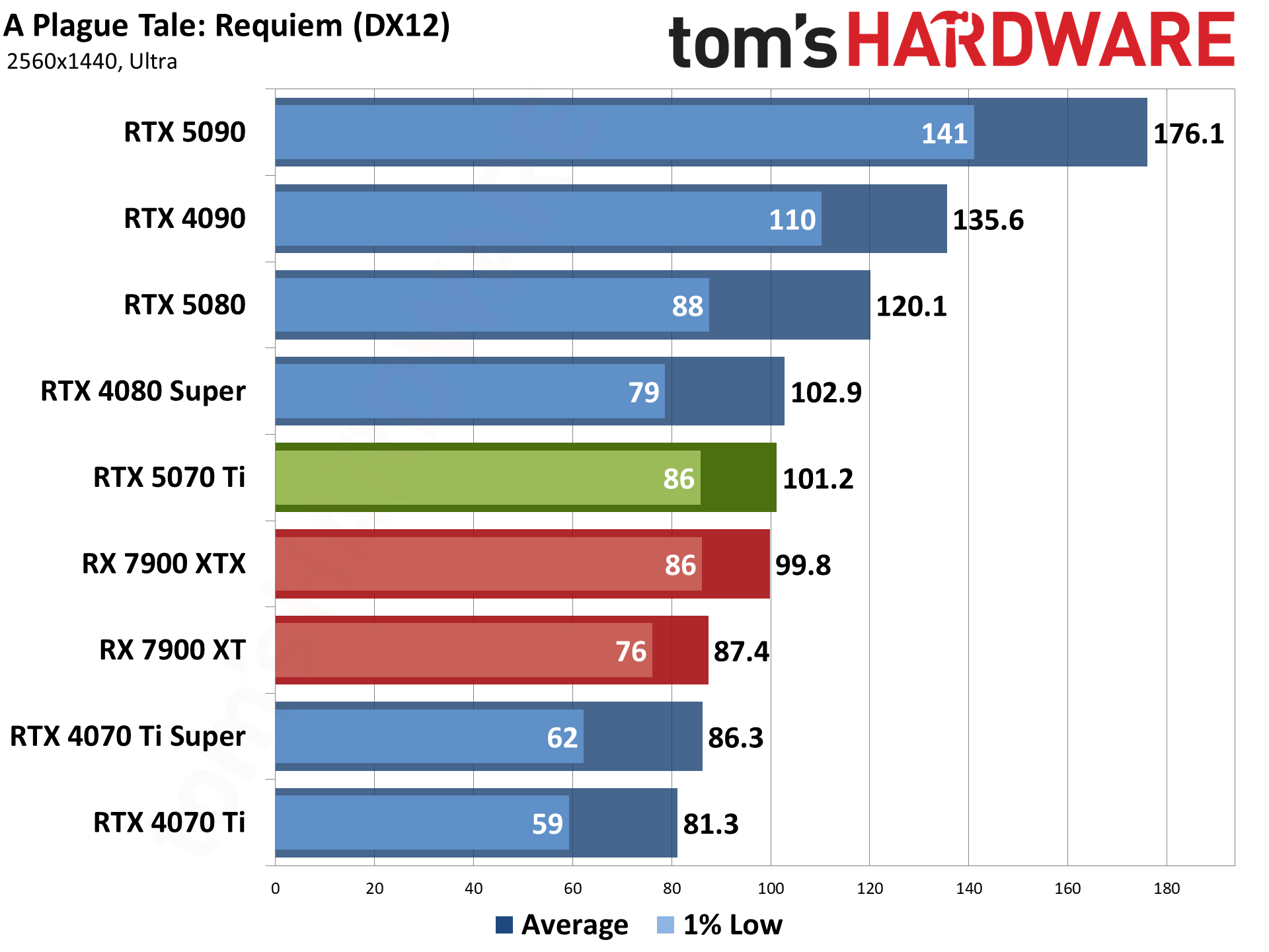

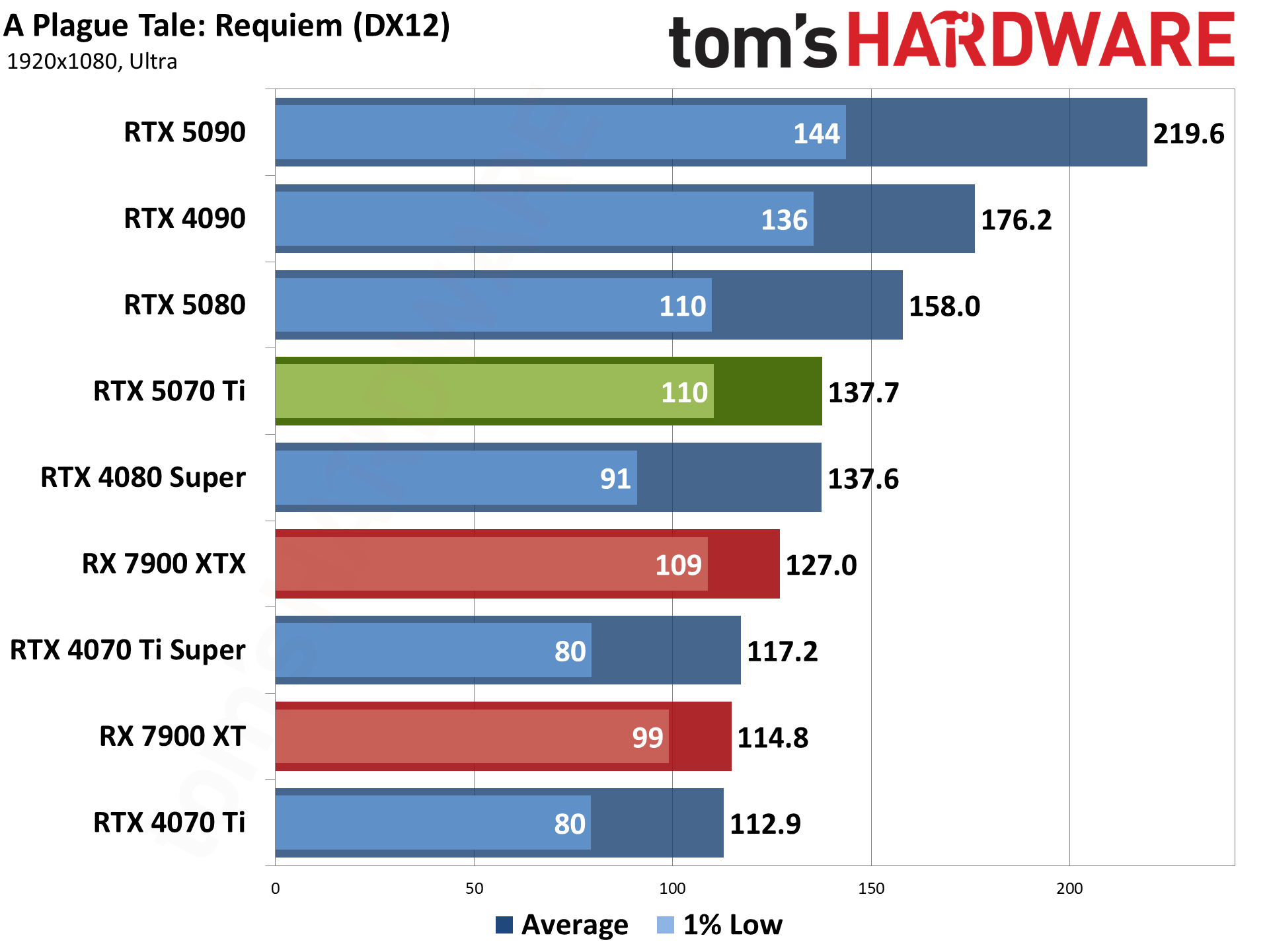

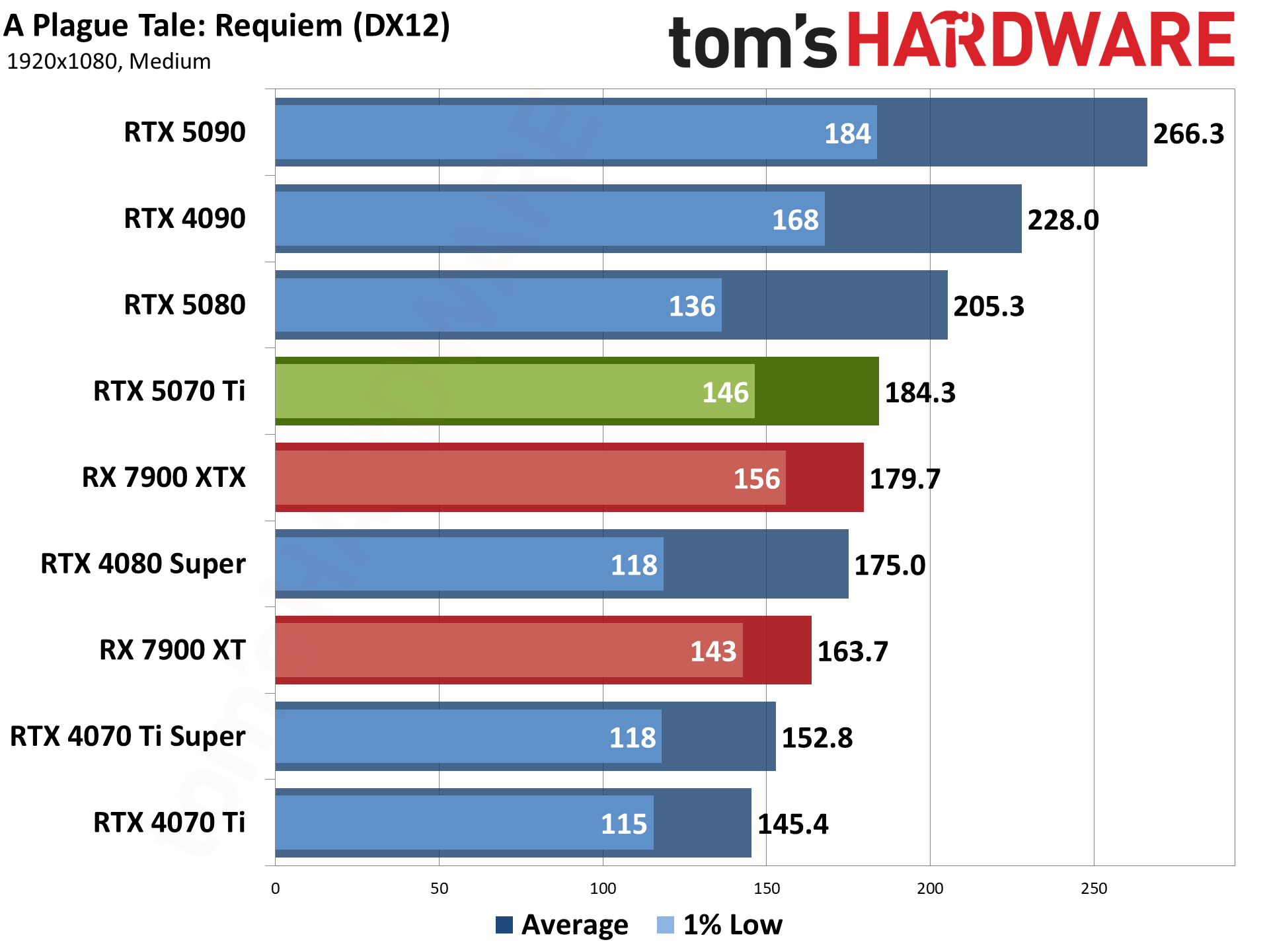

A Plague Tale: Requiem uses the Zouna engine and runs on the DirectX 12 API. It's an Nvidia-promoted game that supports DLSS 3, but neither FSR nor XeSS. (It was one of the first DLSS 3-enabled games as well.) It has RT effects, but only for shadows, which don't really improve the look of the game yet still tank performance.

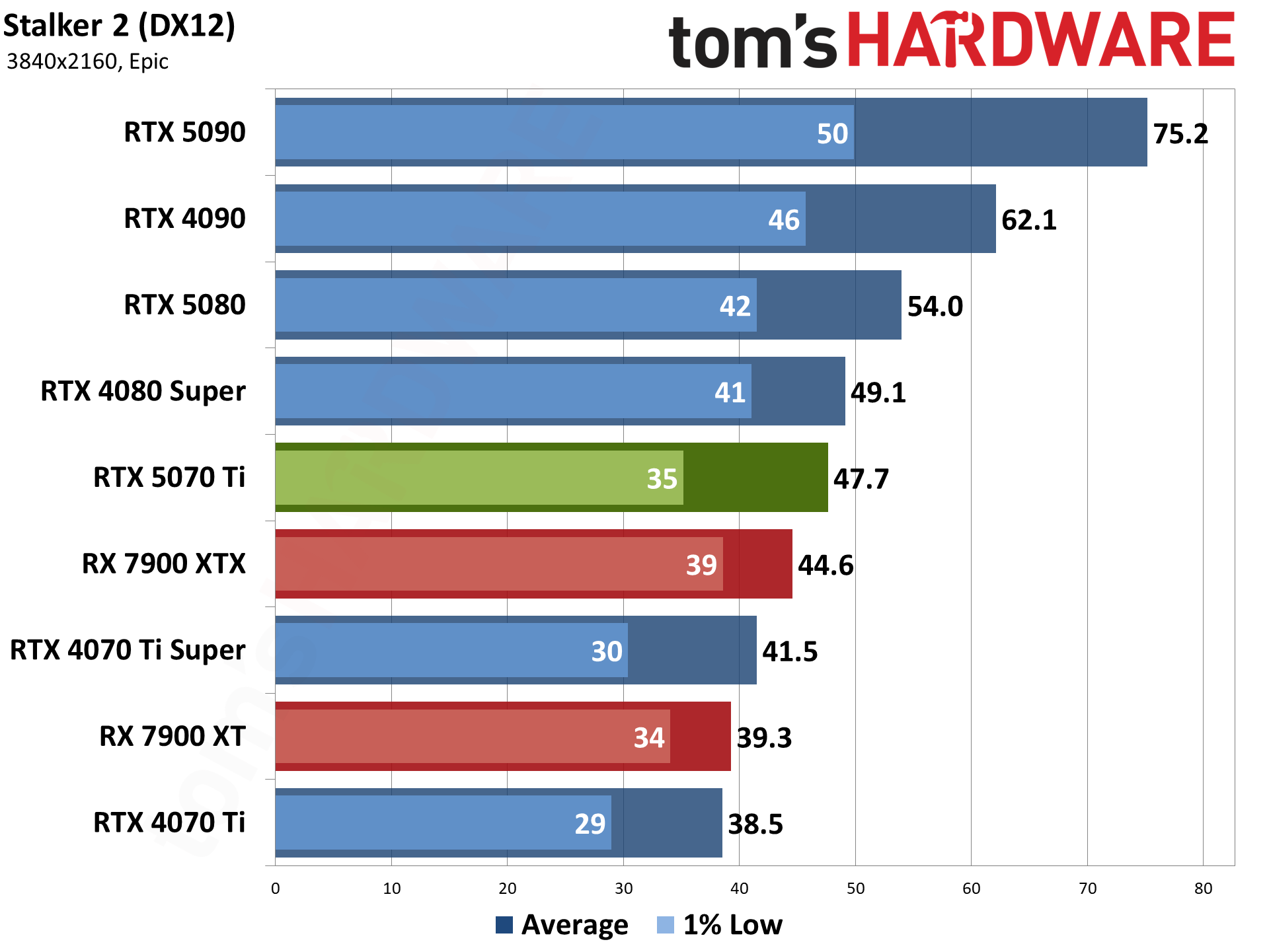

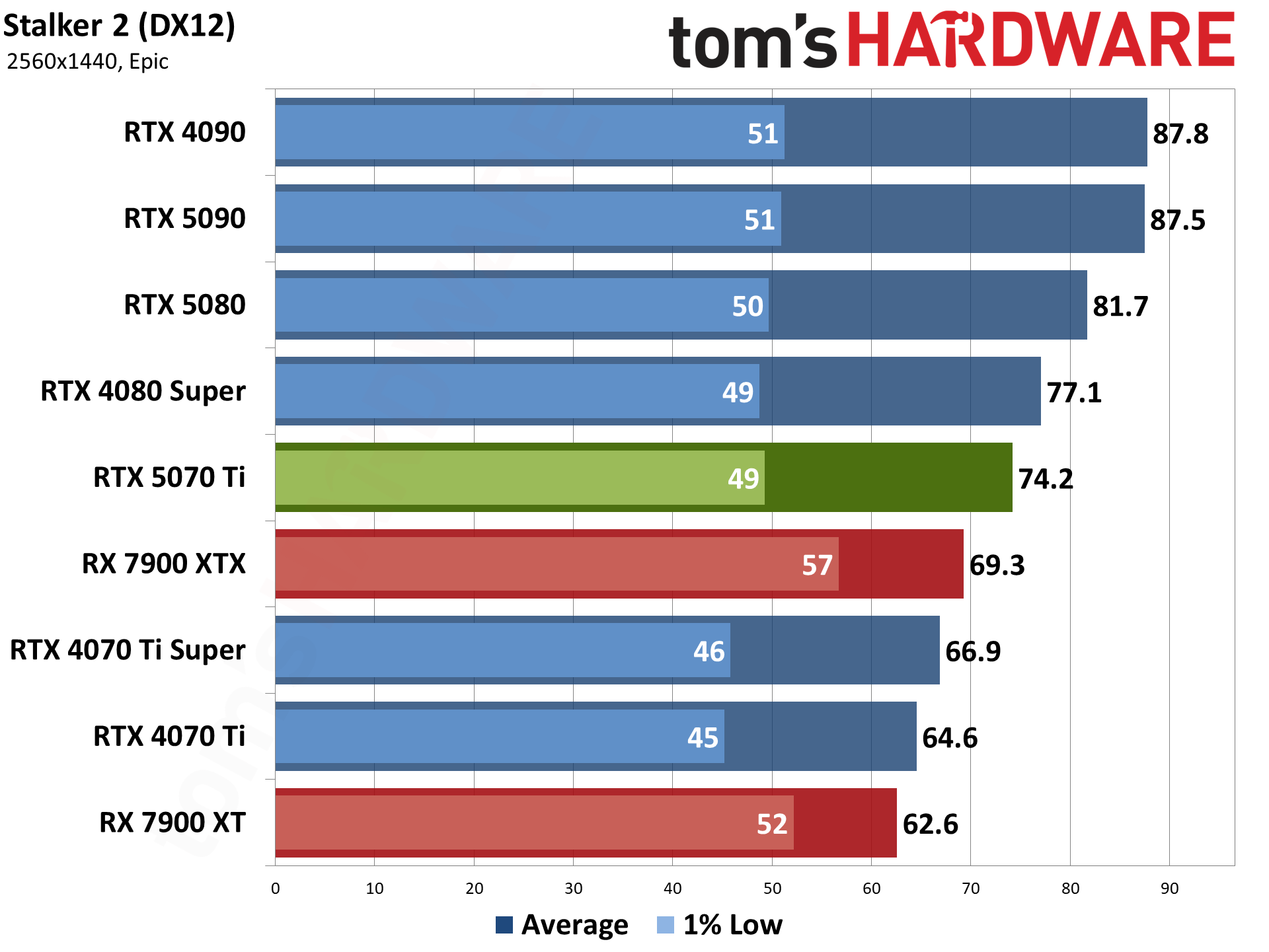

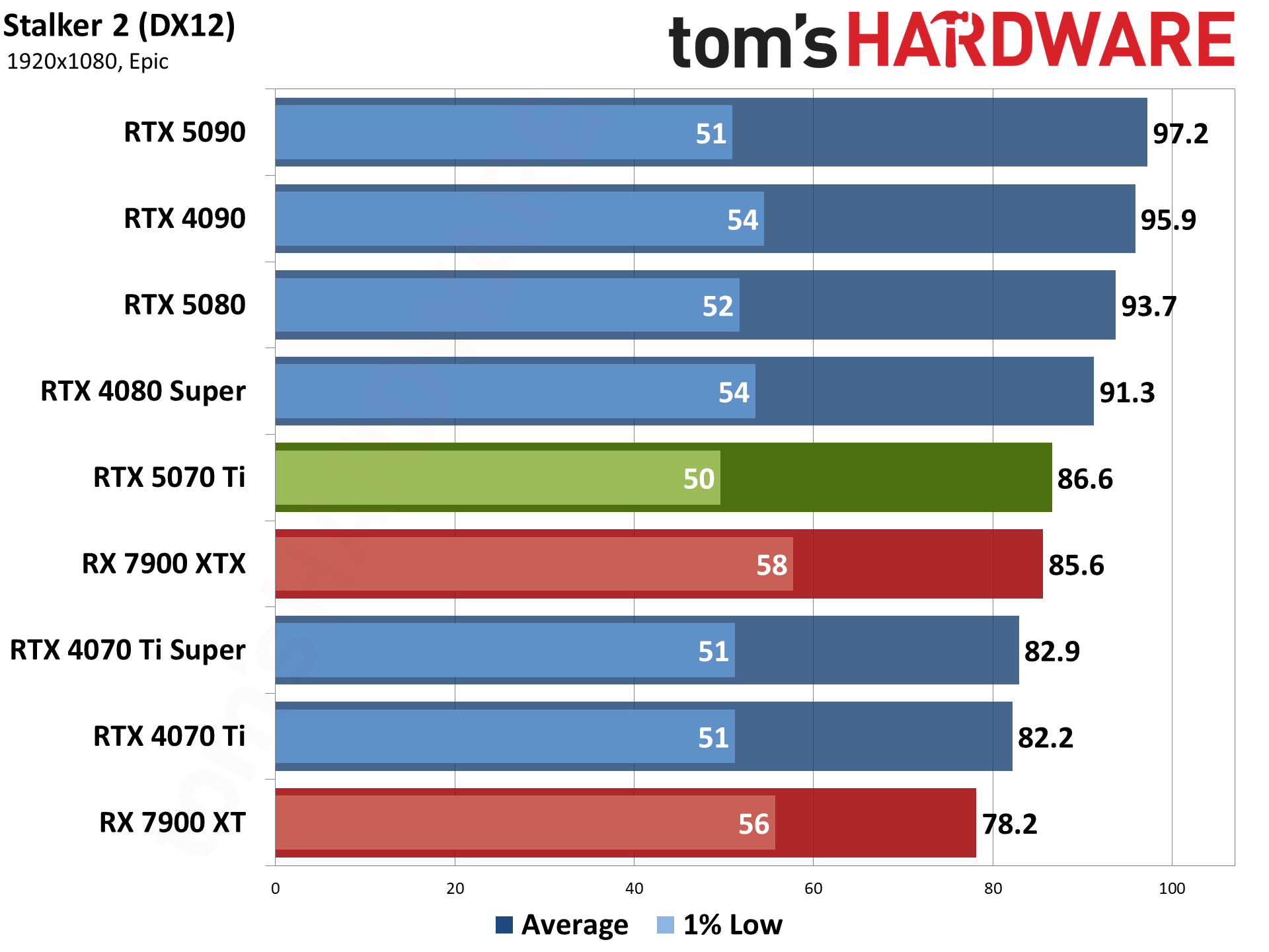

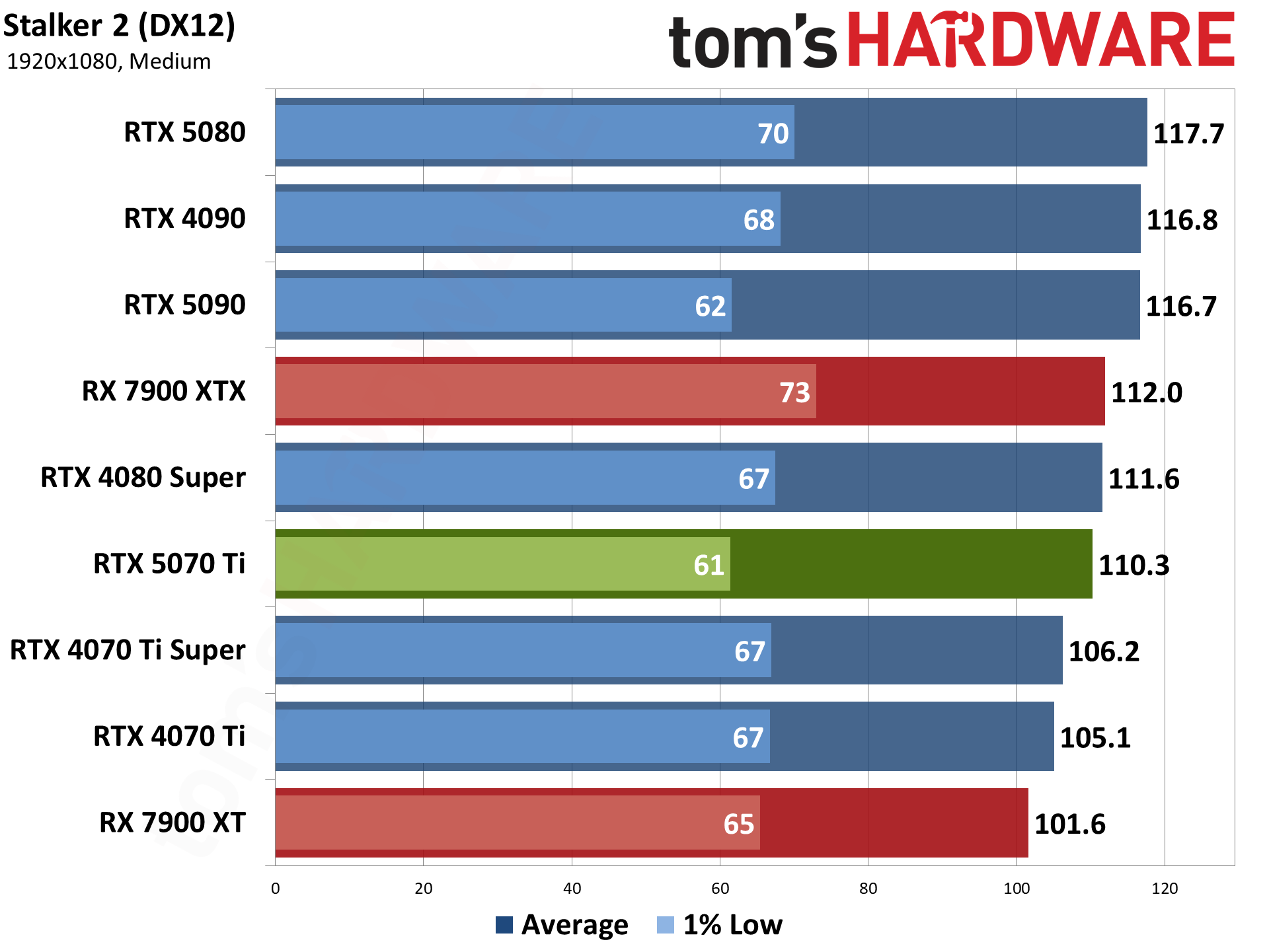

Stalker 2 is another Unreal Engine 5 game, but without any hardware ray tracing support. UE5's Lumen lighting system also does "software RT," which is basically just fancy rasterization as far as the visuals are concerned, though it's still quite taxing. VRAM can also be a serious problem when trying to run the epic preset, with 8GB cards struggling at most resolutions. There's also quite a bit of microstuttering in Stalker 2, which framegen can help smooth out. (In my experience, UE5 games tend to benefit more from framegen than some other games.)

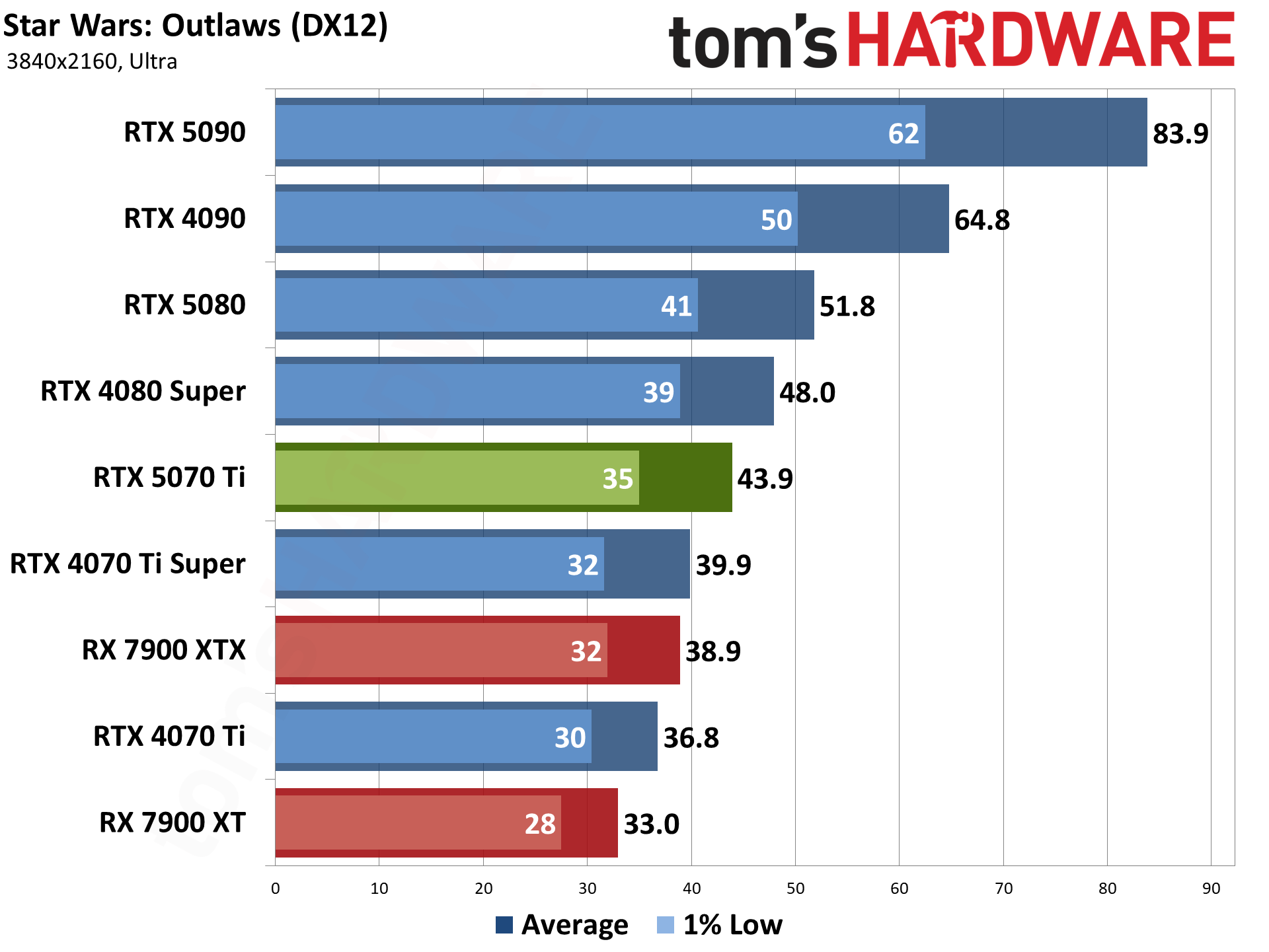

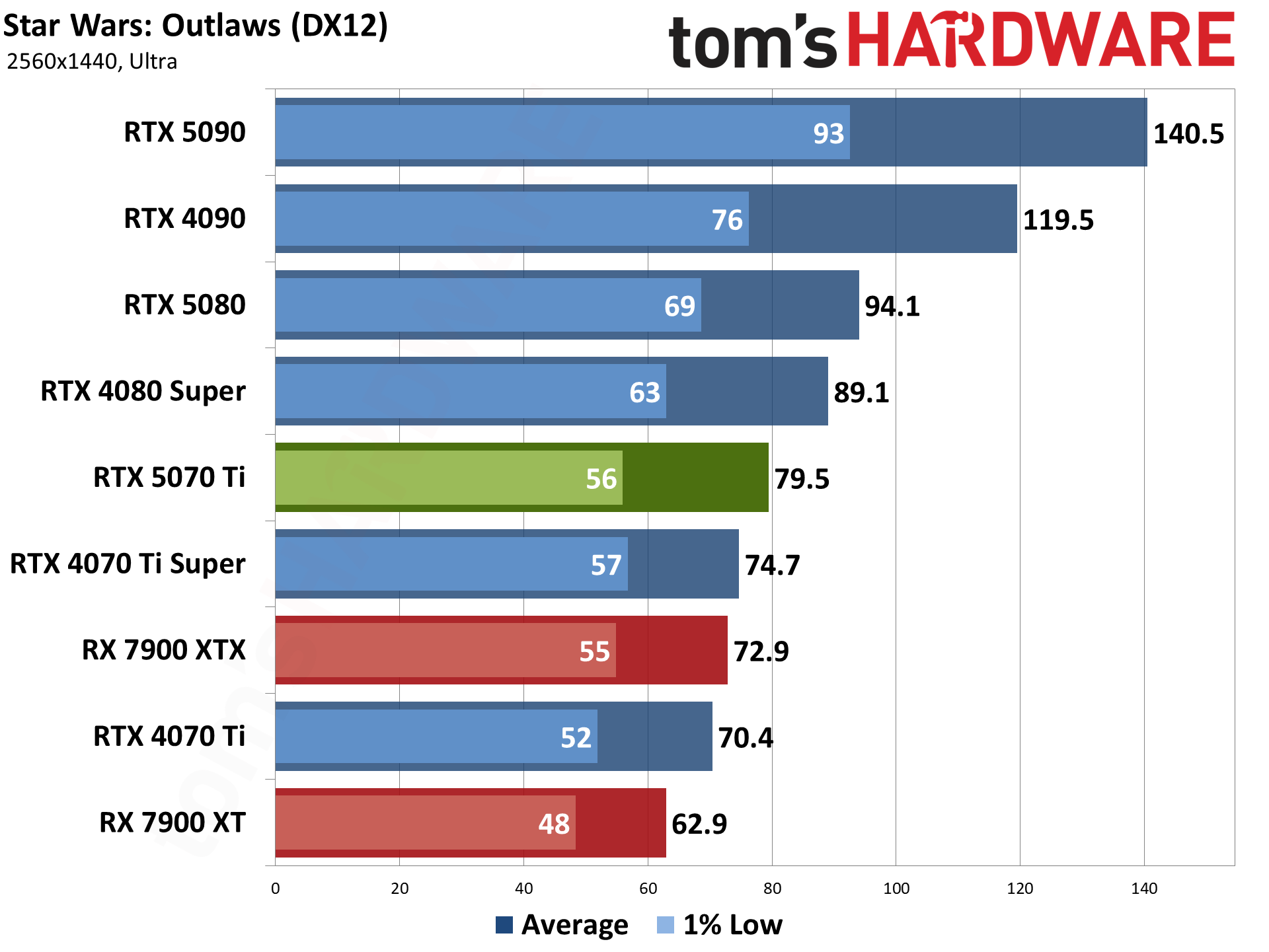

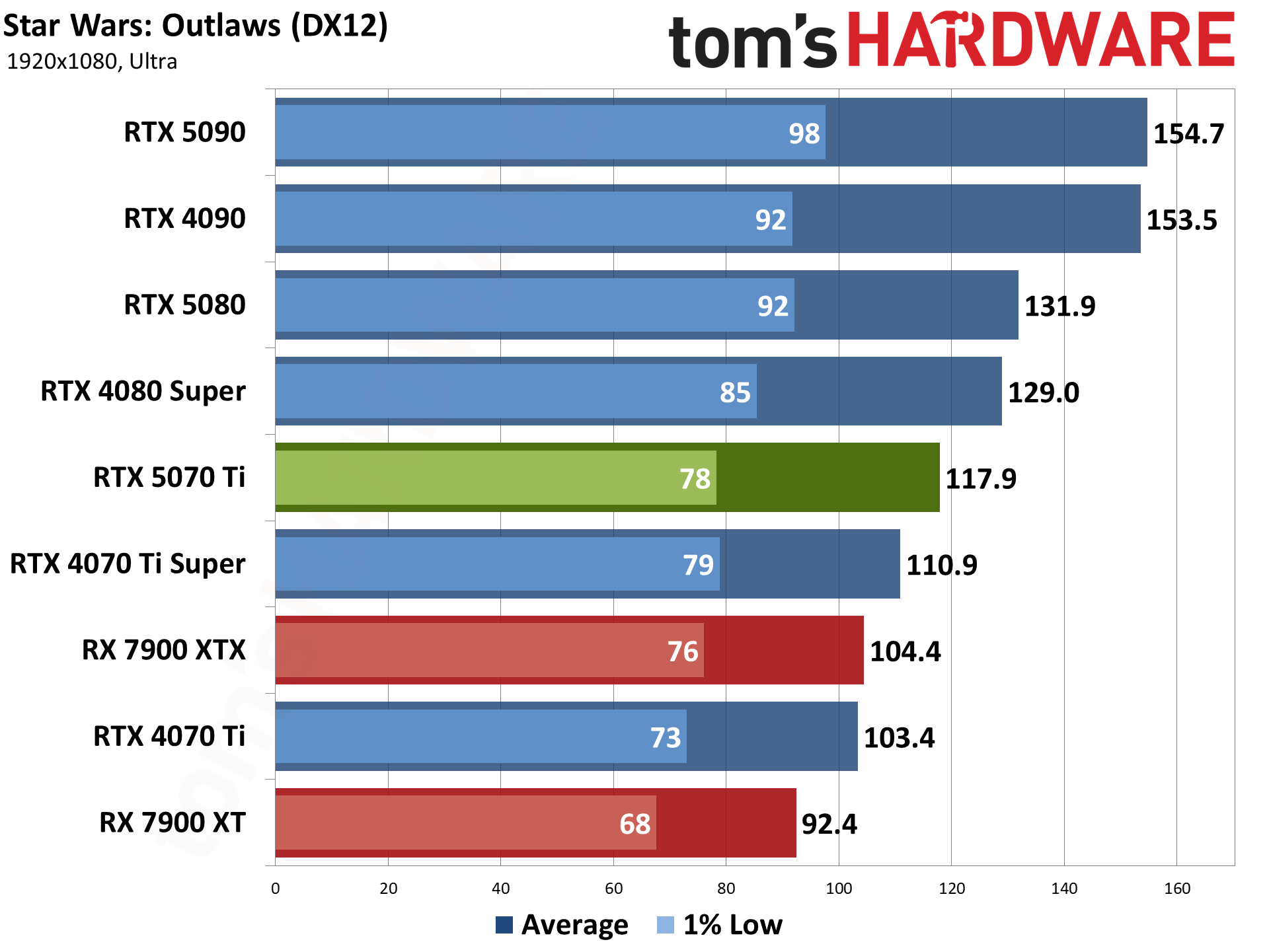

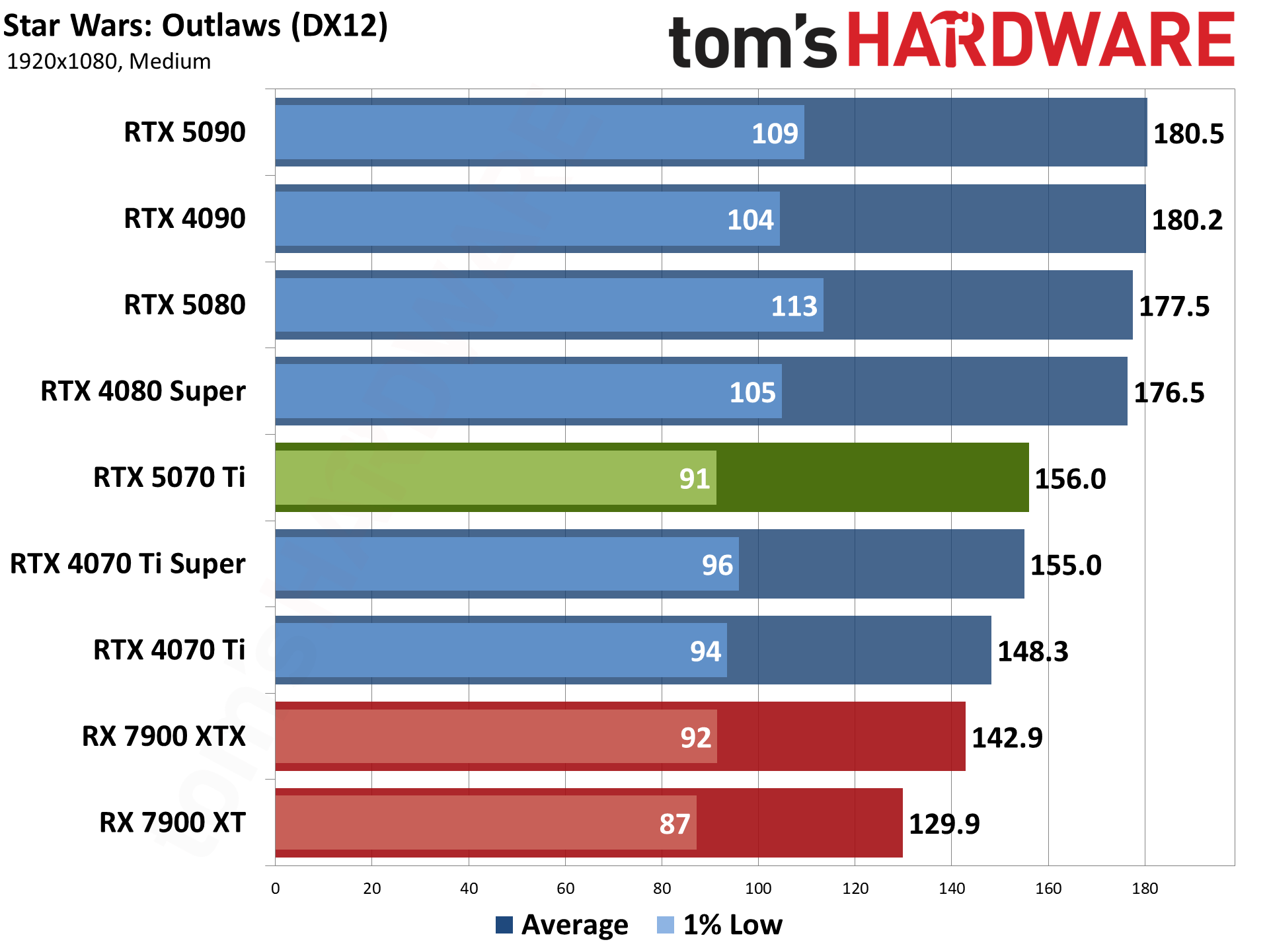

Star Wars Outlaws uses the Snowdrop engine, and we wanted to include a mix of options. It also has a bunch of RT options, though our tests don't enable ray tracing. As with several other games, turning on maximum RT settings in Outlaws tends to result in a less than ideal gaming experience, with stuttering and hitching.

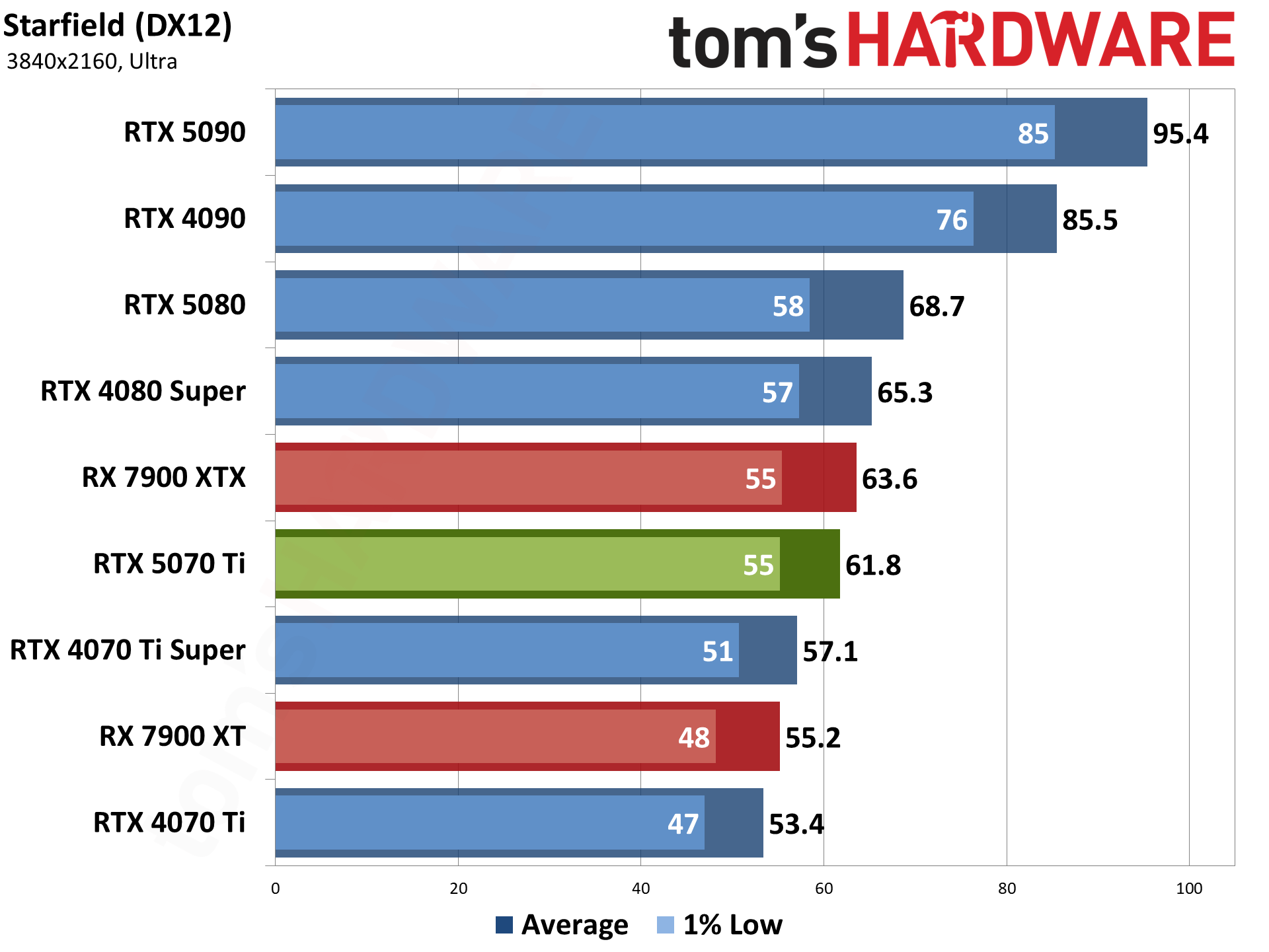

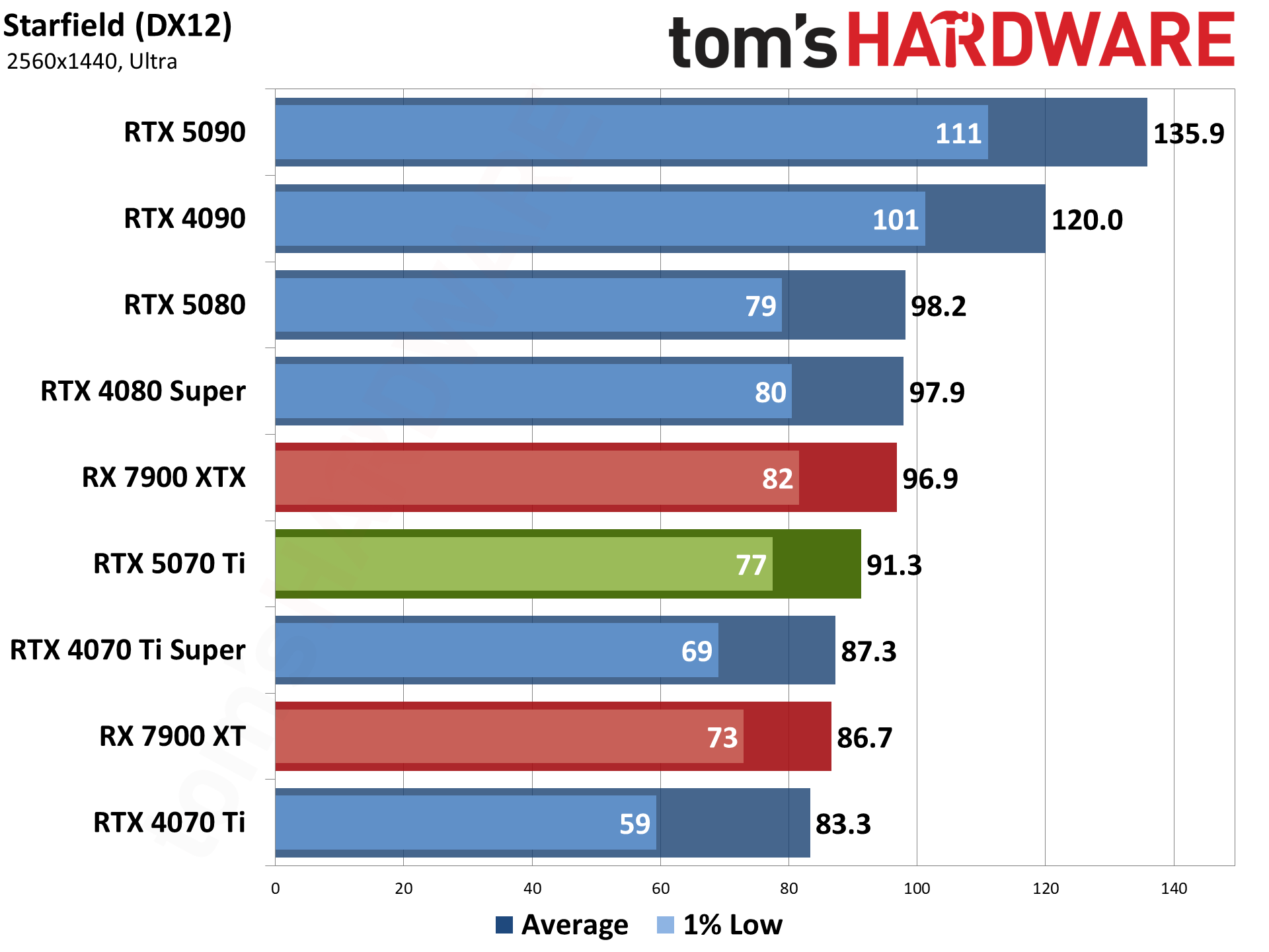

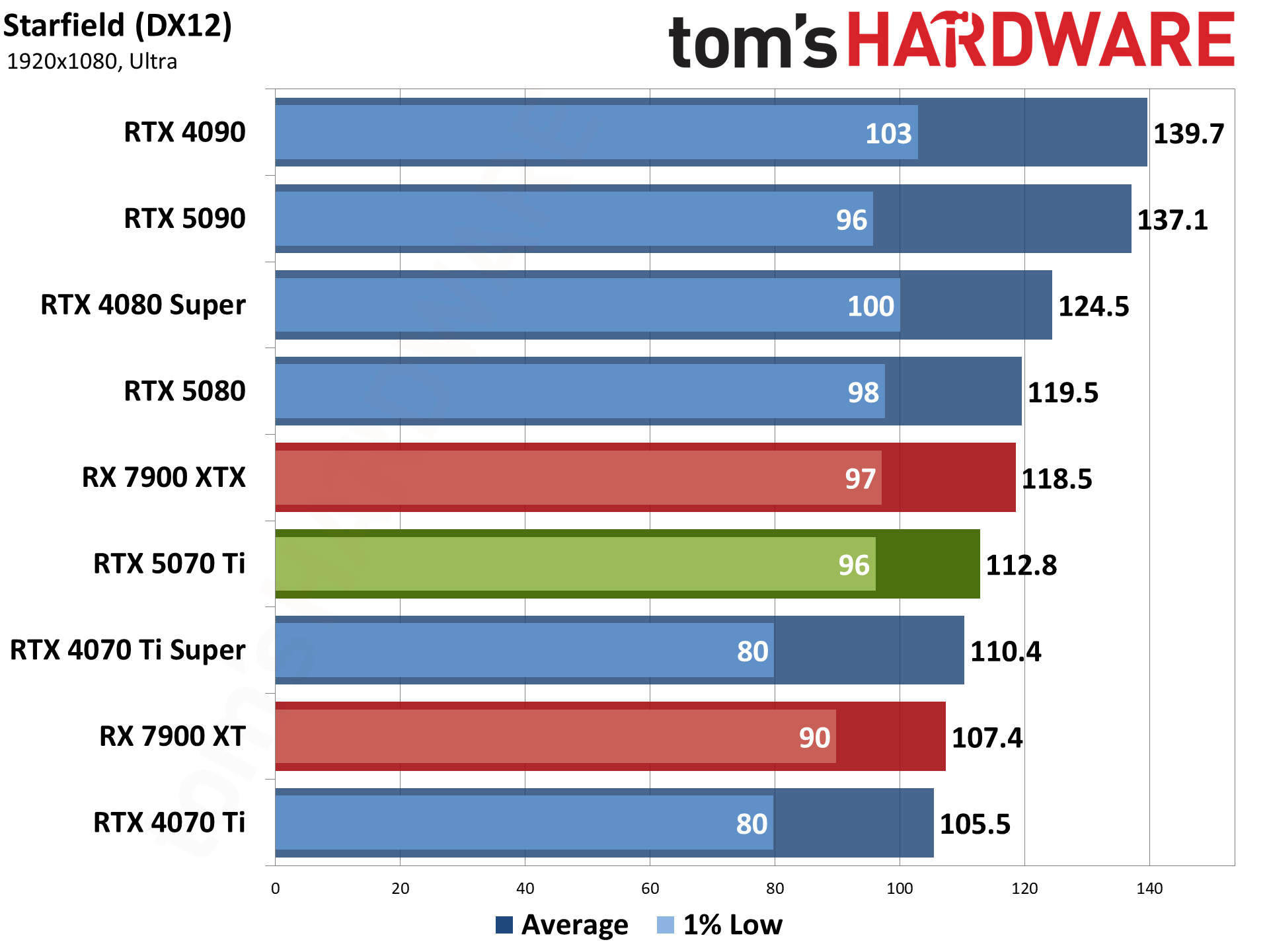

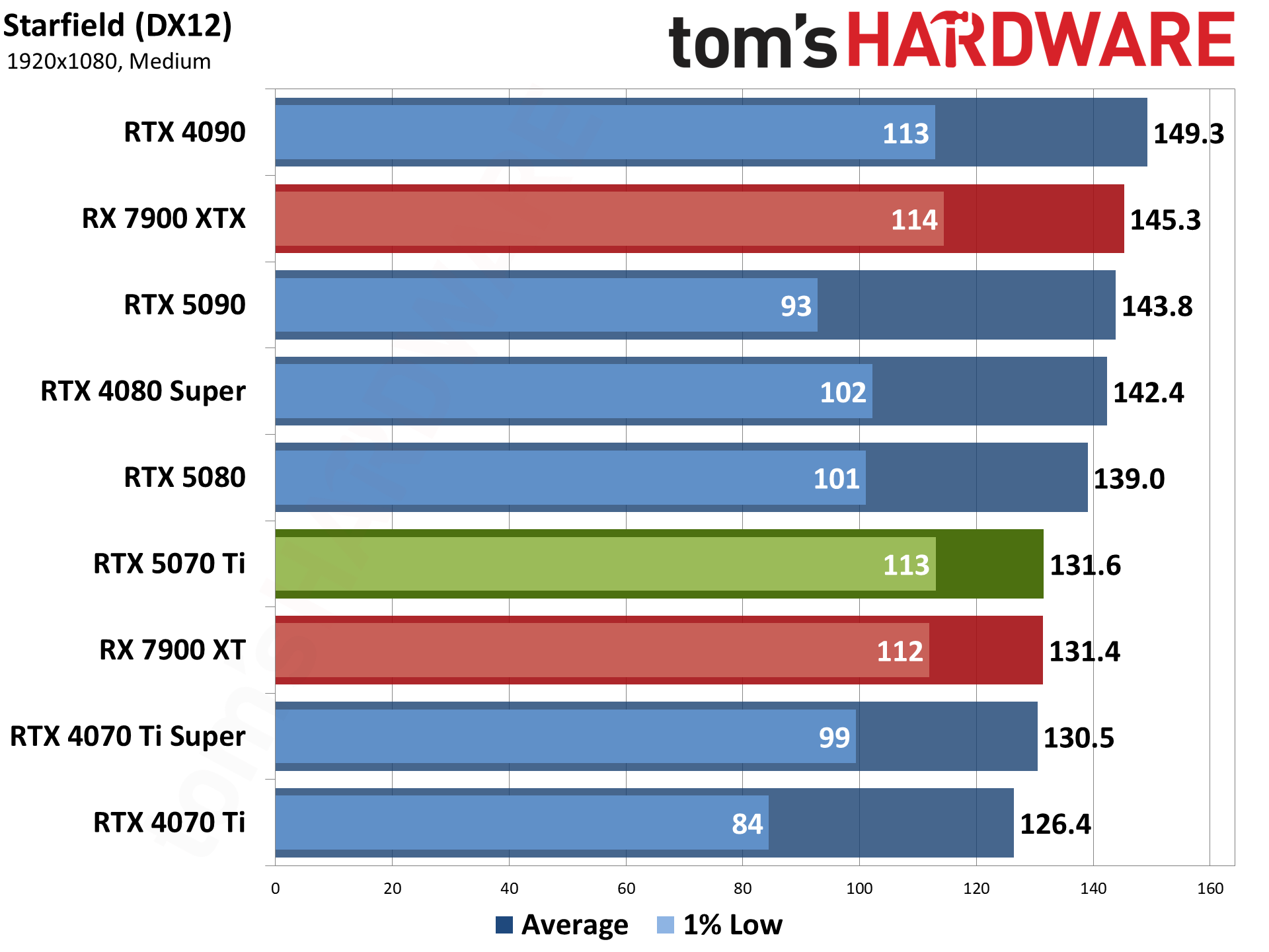

Starfield uses Creation Engine 2, Bethesda's update to the engine that powered its Fallout and Elder Scrolls games. It's another fairly demanding game, and we run around the city of Akila, one of the more taxing locations in the game.

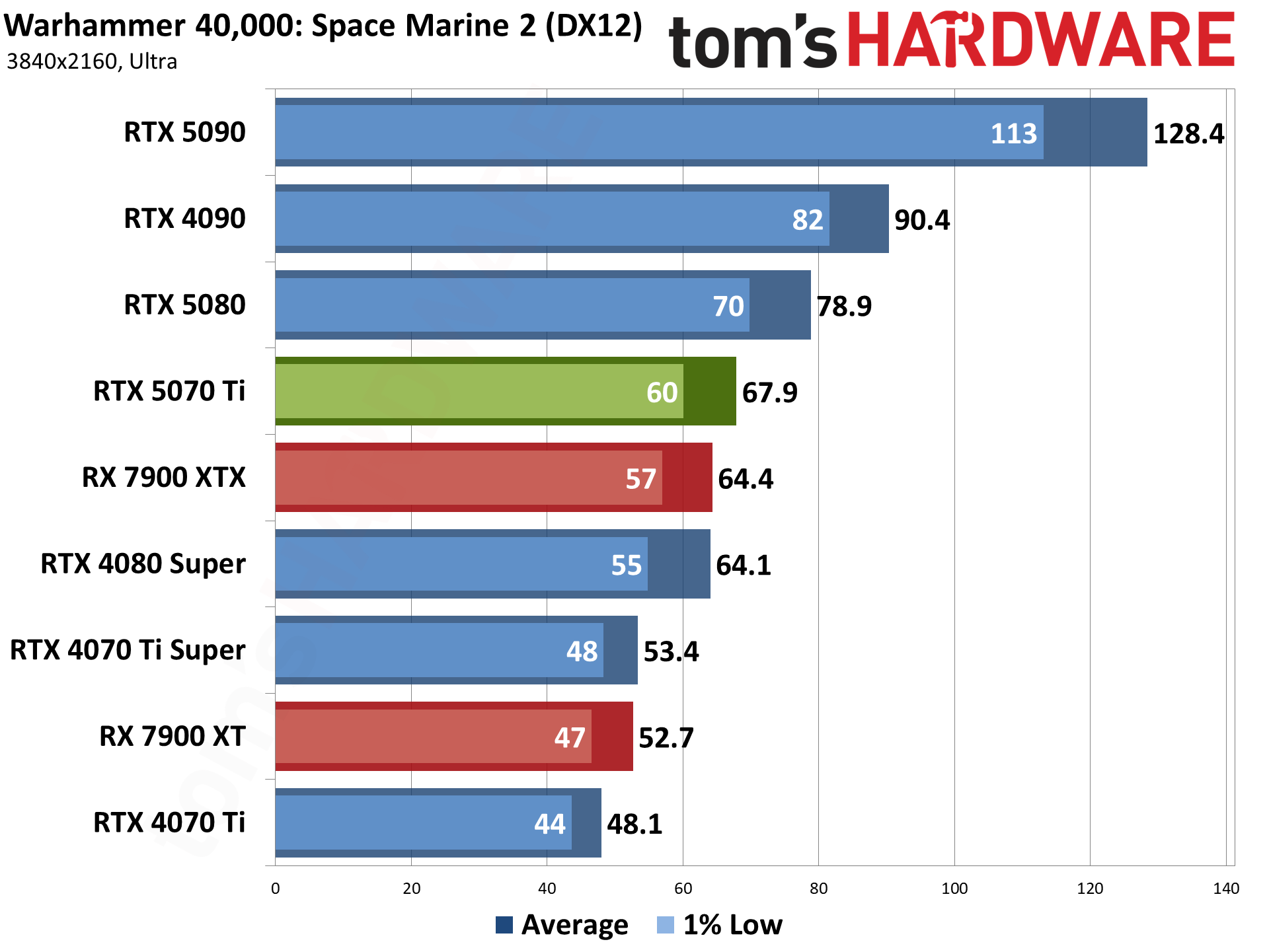

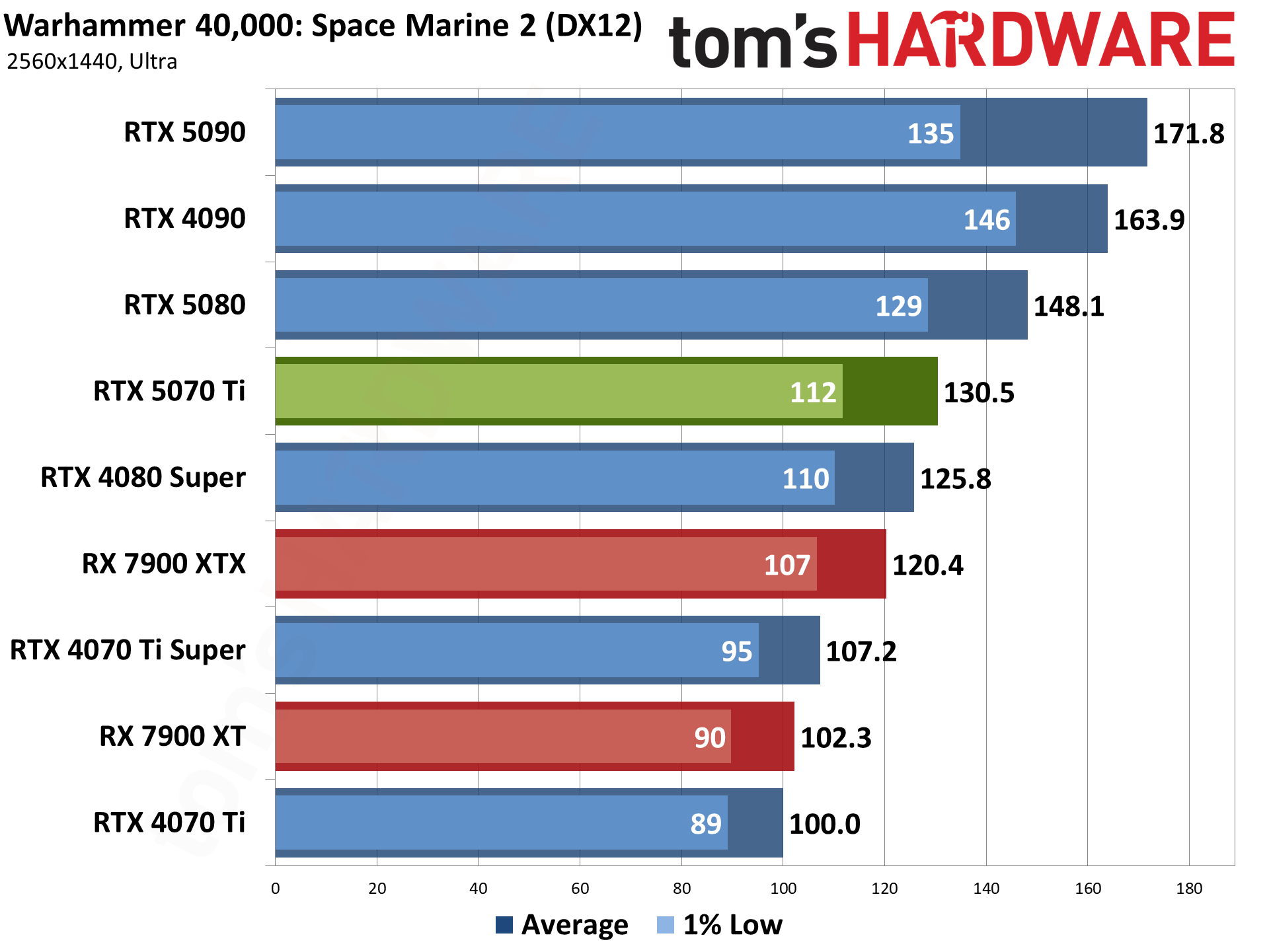

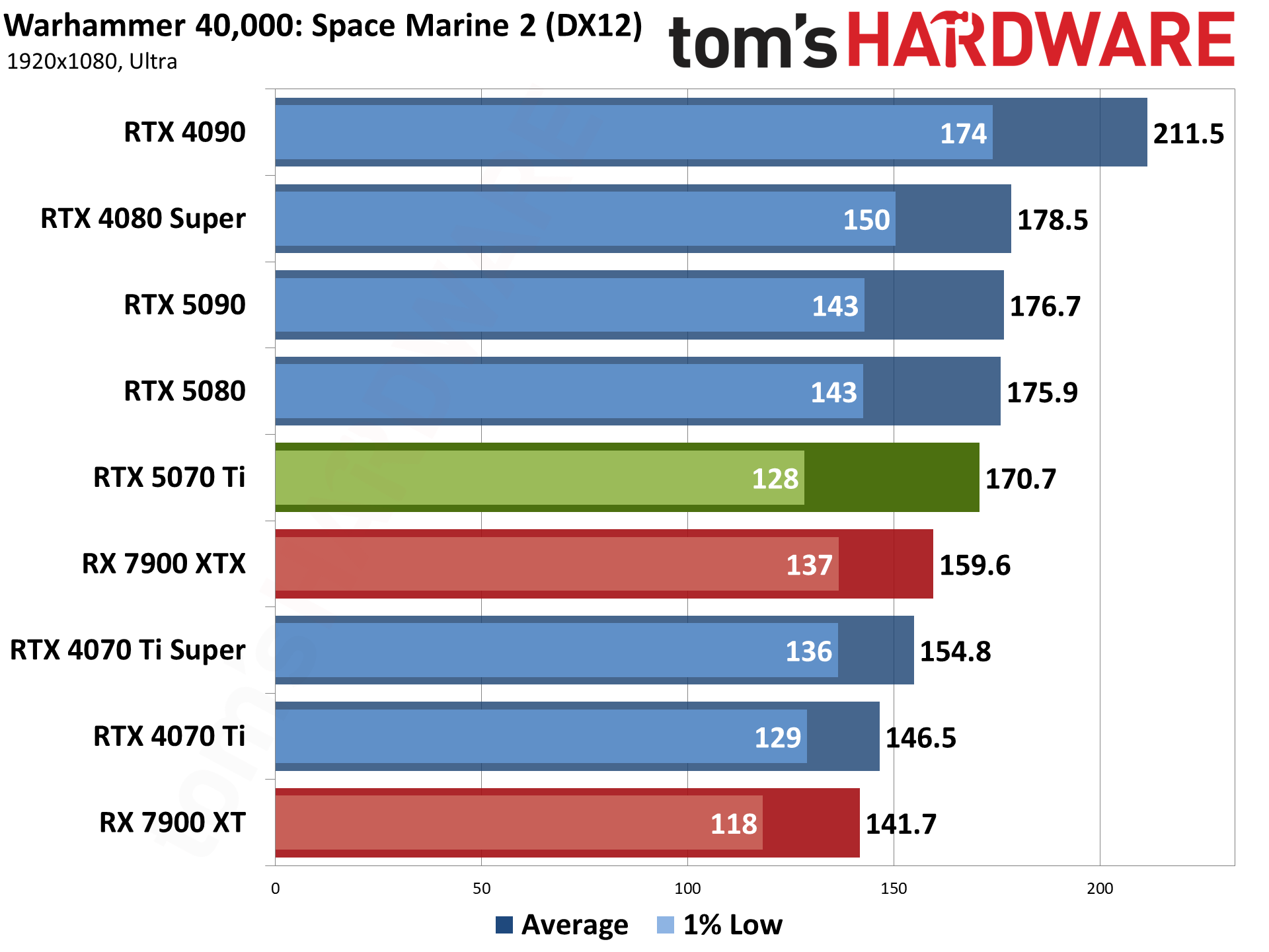

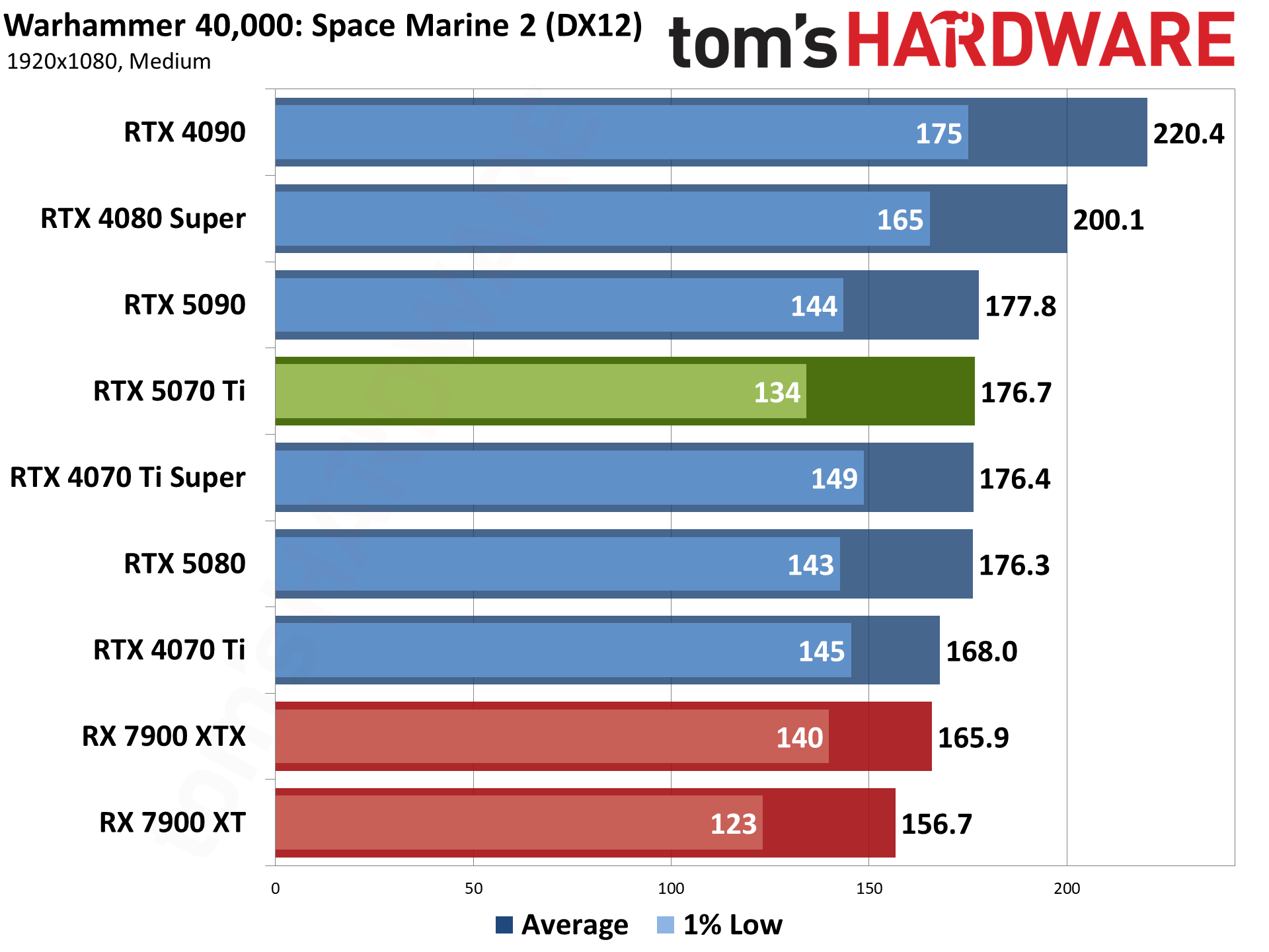

Wrapping things up, Warhammer 40,000: Space Marine 2 is yet another AMD-promoted game. It runs on the Swarm engine and uses DirectX 12, without any support for ray tracing hardware. We use a sequence from the introduction, which is generally less demanding than the various missions you get to later in the game but has the advantage of being repeatable and not having enemies everywhere.


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


Jame5

Basing any performance/$ valuation on this card at MSRP is foolish. There is no FE to anchor it to MSRP. The cards released to the press are slated to be sold $150 above MSRP.

So why even discuss the card as a decent value at $749 when it will cost 20% more than that at launch?

*Edit to correct for the fact that it was 20% over MSRP, so $150, not $200 above.

JarredWaltonGPU

> Jame5 said: Basing any performance/$ valuation on this card at MSRP is foolish. There is no FE to anchor it to MSRP. The cards released to the press are slated to be sold $200 above MSRP.
>
> So why even discuss the card as a decent value at $749 when it will cost 20% more than that at launch?

The further down the stack you go, the less likely pricing is to be completely bonkers. RTX 5090? Yeah, it was always going to sell like hotcakes. 5080 is the step down option so it's not too surprising to see it sell out. But the 5070 Ti? I suspect it will be reasonably available at $749.

Yes, there will be $799 to $899 variants, with more bling and a modest overclock. But you don't need to buy those to get a decent card. And we've added the caveat that it's only a good card if you can find it at MSRP.

The same thing basically happened with the 40-series. 4090 and 4080 were mostly sold above MSRP. But 4070 Ti and 4070 were pretty readily available at close to MSRP. The 4070 Ti Super supply is gone now, but it was pretty easy to acquire one at MSRP since it launched a year ago.

DRagor

> the 5070 Ti can get away with 16GB by virtue of costing $749

Except it will not cost 749 so it makes no sense to say it.

Jame5

> JarredWaltonGPU said: The further down the stack you go, the less likely pricing is to be completely bonkers. RTX 5090? Yeah, it was always going to sell like hotcakes. 5080 is the step down option so it's not too surprising to see it sell out. But the 5070 Ti? I suspect it will be reasonably available at $749.
>
> Yes, there will be $799 to $899 variants, with more bling and a modest overclock. But you don't need to buy those to get a decent card. And we've added the caveat that it's only a good card if you can find it at MSRP.
>
> The same thing basically happened with the 40-series. 4090 and 4080 were mostly sold above MSRP. But 4070 Ti and 4070 were pretty readily available at close to MSRP. The 4070 Ti Super supply is gone now, but it was pretty easy to acquire one at MSRP since it launched a year ago.

You should go check out Microcenter.

They just (this morning in time for the reviews) conveniently have a sale on the Asus card that was passed to reviewers. The list price is $899. They have magically slashed it for review day today back to MSRP at $749.

It is the ONLY listing available at MSRP.

*Edit: To be clear, before that all of the available options start at $899. Your high end guess is the floor for where people are starting their profit margins.

ingtar33

> JarredWaltonGPU said: The further down the stack you go, the less likely pricing is to be completely bonkers. RTX 5090? Yeah, it was always going to sell like hotcakes. 5080 is the step down option so it's not too surprising to see it sell out. But the 5070 Ti? I suspect it will be reasonably available at $749.
>
> Yes, there will be $799 to $899 variants, with more bling and a modest overclock. But you don't need to buy those to get a decent card. And we've added the caveat that it's only a good card if you can find it at MSRP.
>
> The same thing basically happened with the 40-series. 4090 and 4080 were mostly sold above MSRP. But 4070 Ti and 4070 were pretty readily available at close to MSRP. The 4070 Ti Super supply is gone now, but it was pretty easy to acquire one at MSRP since it launched a year ago.

there is only one. count them, one. sku at 749. it's made by PNY. no one else has one at MSRP. so your whole 3 paragraphs of nvidia glazing is pointless. because there aren't any cards available at 750

JarredWaltonGPU

> Jame5 said: You should go check out Microcenter.
>
> They just (this morning in time for the reviews) conveniently have a sale on the Asus card that was passed to reviewers. The list price is $899. They have magically slashed it for review day today back to MSRP at $749.
>
> It is the ONLY listing available at MSRP.

We'll see what happens tomorrow AM. Early listings are always bunk. I would not buy or recommend the 5070 Ti as an $899 or higher card, at all. Even $799 is a reach, but for a blinged-out model it would be okay.

The graphics card companies and retail outlets are getting greedy at launch, but give it a couple of weeks and I wager we'll see plenty of $749~$799 5070 Ti cards on Newegg.

Gururu

Thank you for the review. It's expectedly pricey, but still great performance for $250 less than the next tier. Still out of my league; Nvidia definitely isn't throwing bones yet.

HideOut

> Admin said: The Nvidia GeForce RTX 5070 Ti replaces the prior-generation RTX 4070 Ti and the 4070 Ti Super in the high-end segment. It offers solid performance improvements over the former but only modest gains over the Super. Thankfully, it's also $50 cheaper.
>
> Nvidia GeForce RTX 5070 Ti review: A proper high-end GPU

50 reviews appeared online in the last hour or whatever. The only one that recommends this card is the one with affiliate links. Amazing how that works.

YSCCC

LMAO, a proper high-end GPU that struggles to find points to list in the Pros:

Pros

- Good balance of performance and price - Price... seriously? We all know that nobody will be getting it near MSRP, maybe as bad as Ampere, where MSRP didn't exist till the release of Ada.
- 16GB VRAM and 256-bit interface - Which will not be enough for most titles really soon above 1440p.
- Latest Nvidia architecture and features - Which bring... MFG? And....?

At this point in time, I think the now cheaper 7900 XTX with 24GB of VRAM, the old 4080 Super, and the 7900 XT 20GB will be the real proper high-end cards... at least if we don't turn on RT, we can be gaming without FG for a year or so longer.

JayGau

All the tech channels on YouTube are saying that this card will not be sold at $750. Jaytwocents even slightly broke the embargo on purpose to expose this craziness. There is no FE for this GPU, and AIBs are cranking up the prices. Stock will be awful like the other 5000 cards, so they have no reason to sell it at MSRP. The 5080 is now sold at $1,300+ (even $1,600), and the 5090 at $3,000. So thinking that the 5070 Ti will magically go to $750 in two weeks is either naive or dishonest.