AMD Radeon RX 5700 XT and Radeon RX 5700 Review: New Prices Keep Navi In The Game

The Radeon RX 5700 series bests its Nvidia competitors but lacks ray tracing and tensor core hardware.


Power Consumption: Radeon RX 5700

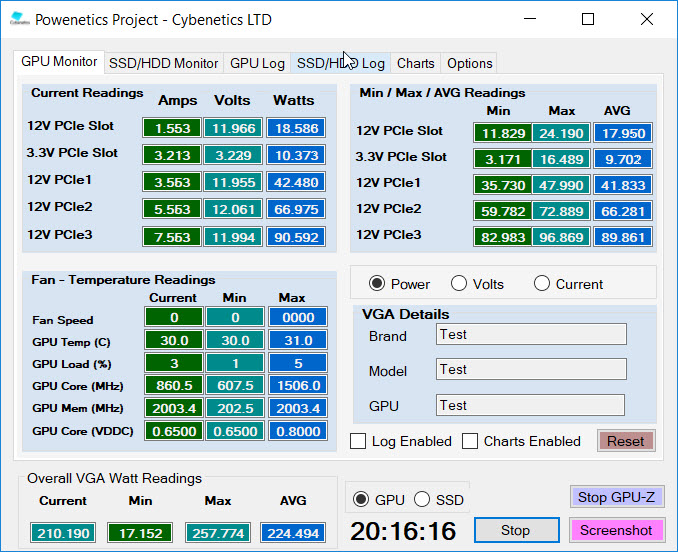

The U.S. Tom’s Hardware graphics lab continues to use Cybenetics’ Powenetics hardware/software solution to accurately measure power consumption.

Powenetics, In Depth

For a closer look at our U.S. lab’s power consumption measurement platform, check out Powenetics: A Better Way To Measure Power Draw for CPUs, GPUs & Storage.

In brief, Powenetics utilizes Tinkerforge Master Bricks, to which Voltage/Current bricklets are attached. The bricklets are installed between the load and power supply, and they monitor consumption through each of the modified PSU’s auxiliary power connectors and through the PCIe slot by way of a PCIe riser. Custom software logs the readings, allowing us to dial in a sampling rate, pull that data into Excel, and very accurately chart everything from average power across a benchmark run to instantaneous spikes.
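As a rough illustration of how average and peak figures fall out of a log like this, here is a minimal sketch. It is not the Powenetics software: the `Sample` layout, the rail names, and every number in `log` are hypothetical and chosen only to make the arithmetic easy to follow.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Sample:
    """One logged reading for a single rail (hypothetical layout)."""
    volts: float
    amps: float


def rail_power(samples: List[Sample]) -> List[float]:
    """Instantaneous power in watts for each sample on one rail."""
    return [s.volts * s.amps for s in samples]


def total_power(rails: Dict[str, List[Sample]]) -> List[float]:
    """Sum power across all rails, sample by sample."""
    per_rail = [rail_power(samples) for samples in rails.values()]
    return [sum(column) for column in zip(*per_rail)]


def summarize(rails: Dict[str, List[Sample]]) -> Dict[str, float]:
    """Average and peak total power over the whole run."""
    total = total_power(rails)
    return {"average_w": sum(total) / len(total), "peak_w": max(total)}


# Made-up readings for illustration only; not measurements from this review.
log = {
    "pcie_slot_12v": [Sample(12.0, 4.0), Sample(12.0, 4.5)],
    "eight_pin": [Sample(12.0, 7.0), Sample(12.0, 8.0)],
    "six_pin": [Sample(12.0, 4.0), Sample(12.0, 4.0)],
}
summary = summarize(log)
print(summary)
```

Summing per sample before averaging is what lets the same data yield both a run-long average and a single-sample peak.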

The software is set up to log the power consumption of graphics cards, storage devices, and CPUs. However, we’re only using the bricklets relevant to graphics card testing. AMD's Radeon RX 5700 and Radeon RX 5700 XT get all of their power from the PCIe slot, one eight-pin auxiliary connector, and one six-pin connector.

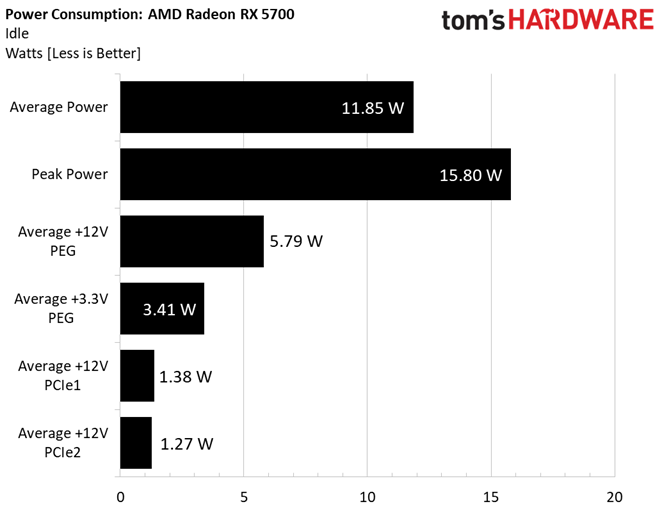

Idle

AMD Radeon RX 5700 gets most of its power from the PCIe slot’s +12V rail at idle, followed by the slot’s +3.3V rail. Power over the auxiliary connectors is kept to a minimum.

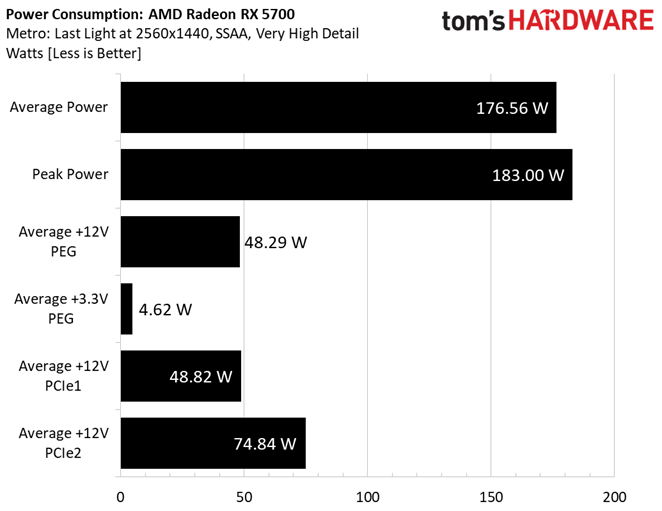

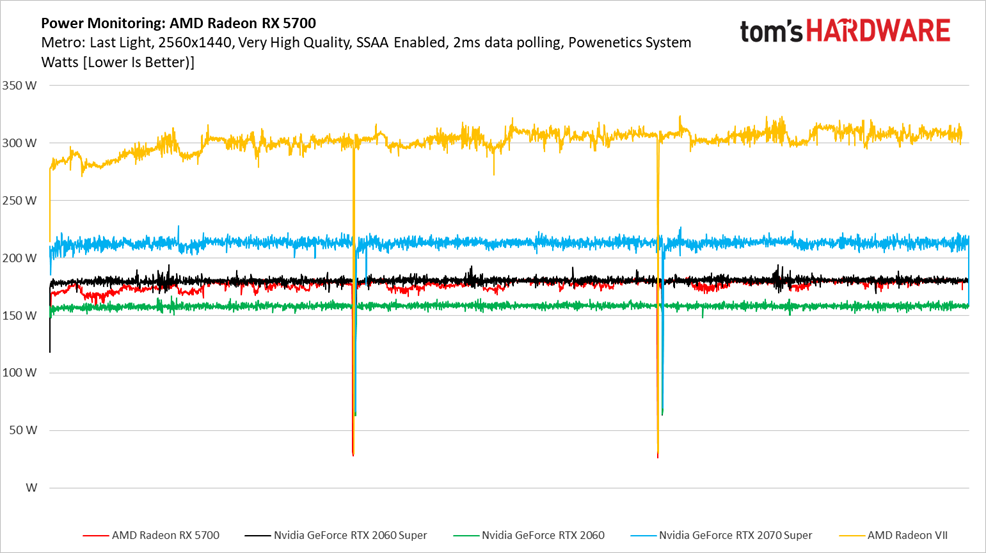

Gaming

In what might come as a surprise to some, the Radeon RX 5700 averages less power consumption through our Metro benchmark sequence than the company’s 185W board power specification. We’re accustomed to seeing launch numbers that push the boundaries of what companies like AMD and Nvidia tell us to expect. But in this case, even the 5700’s worst-case peak lands shy of the paper ceiling.


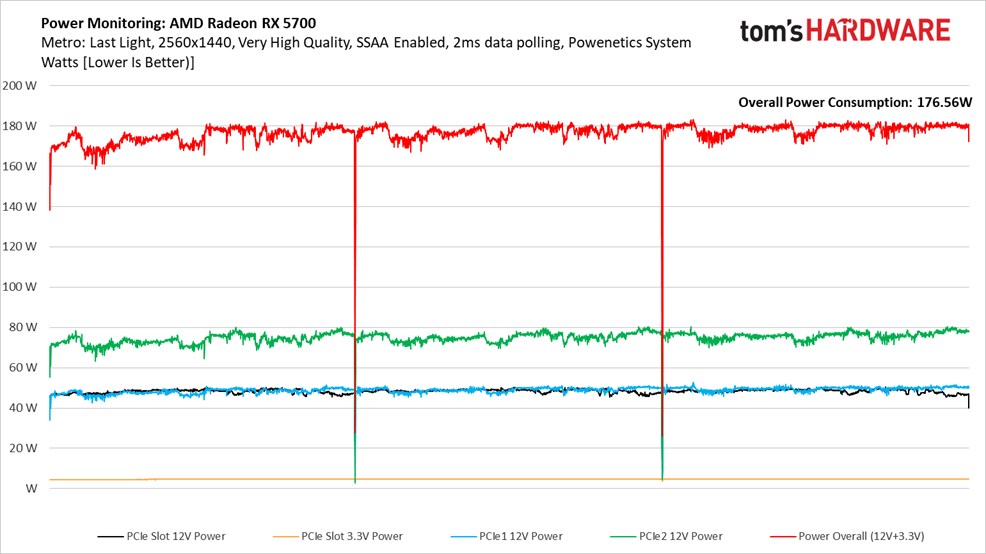

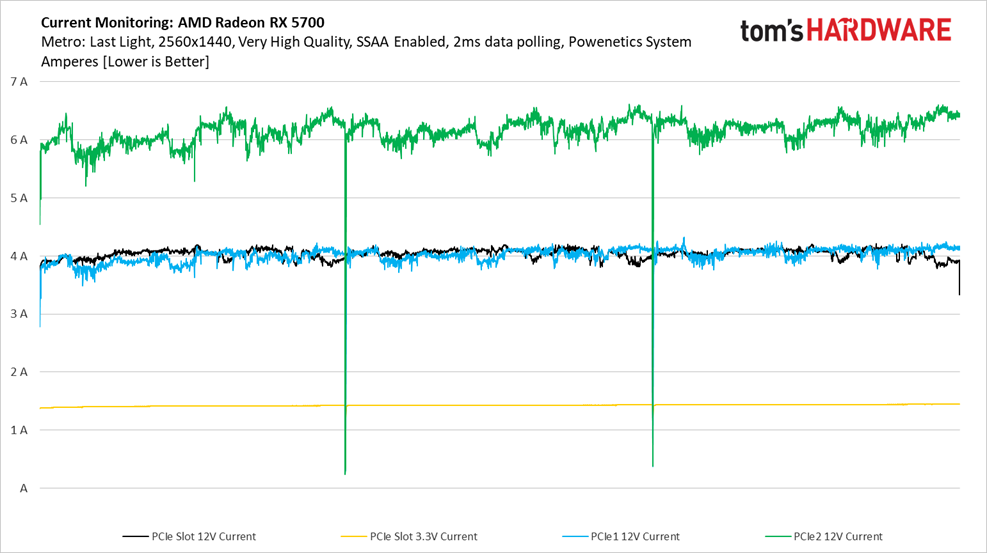

Breaking power consumption up by rail over time shows that the Radeon RX 5700 gets most of what it needs from the eight-pin auxiliary connector. Meanwhile, the PCIe slot and six-pin connector each deliver roughly 48W.

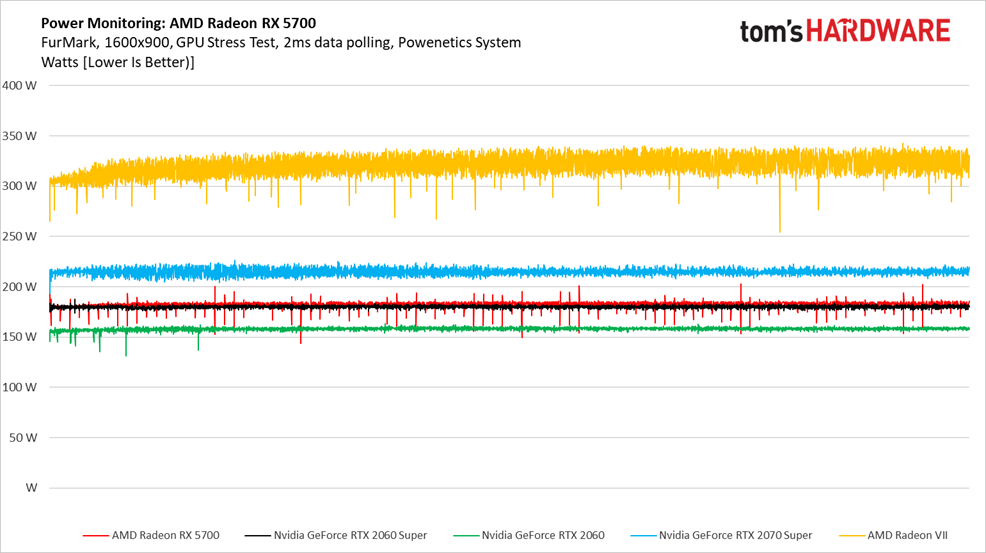

Radeon RX 5700 is represented by the red line in this chart. By matching or dipping under GeForce RTX 2060 Super, AMD demonstrates just how much Navi improves upon Vega’s performance per watt.

Of course, the GeForce RTX 2060 uses significantly less power, while GeForce RTX 2070 Super uses quite a bit more. But it’s the Radeon VII that stands out most for spending time well above the 300W mark.

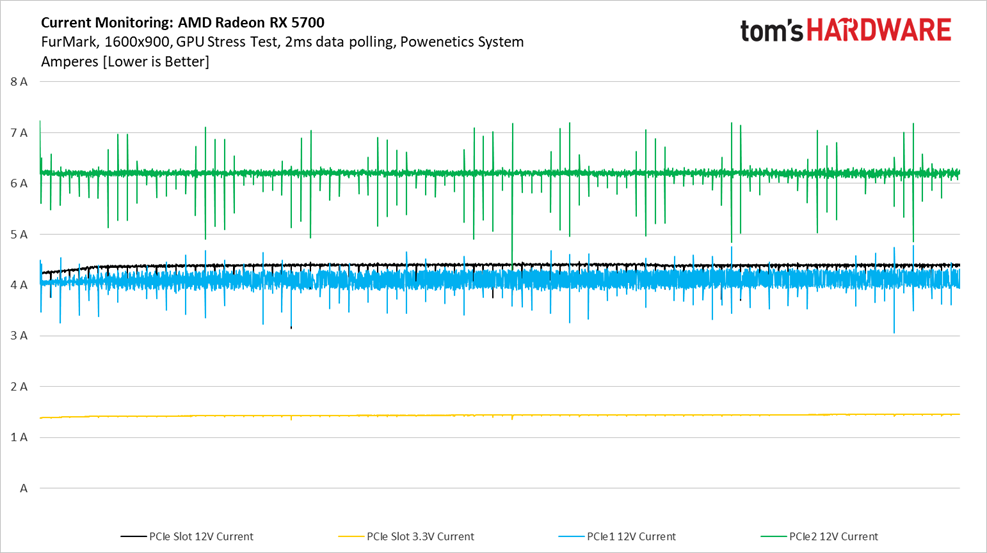

Current draw over the PCIe slot’s +12V rail lands below the slot’s 5.5A maximum (five +12V pins rated at 1.1A each).
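For a sense of scale, slot current can be estimated from power. The sketch below assumes a nominal 12.0V rail and a 5.5A slot budget (five +12V pins at 1.1A each). Both figures are assumptions for illustration, not values taken from the measurement log. The 48W input borrows the approximate slot power quoted earlier and treats all of it as +12V draw, which slightly overstates the current on that rail.

```python
SLOT_12V_LIMIT_A = 5.5  # assumed slot budget: 5 pins x 1.1 A per pin
NOMINAL_VOLTS = 12.0    # assumed nominal rail voltage


def slot_current_a(power_w: float, volts: float = NOMINAL_VOLTS) -> float:
    """Estimate current in amps from power in watts (I = P / V)."""
    return power_w / volts


def headroom_a(power_w: float, limit_a: float = SLOT_12V_LIMIT_A,
               volts: float = NOMINAL_VOLTS) -> float:
    """Remaining margin in amps before the assumed slot limit is reached."""
    return limit_a - slot_current_a(power_w, volts)


current = slot_current_a(48.0)
margin = headroom_a(48.0)
print(f"{current:.2f} A drawn, {margin:.2f} A of headroom")
```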

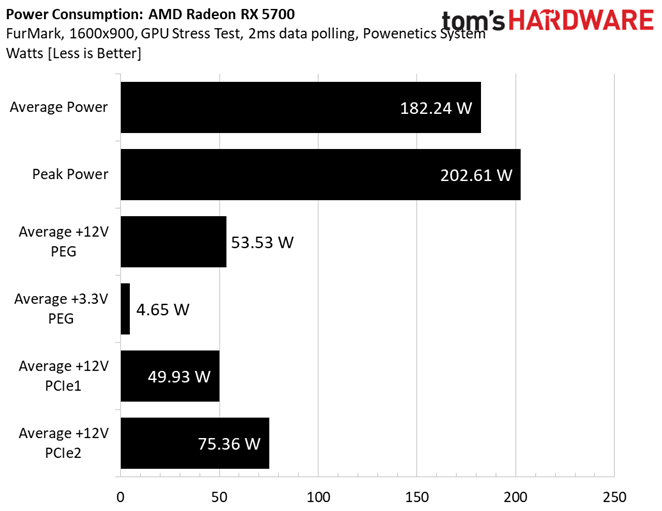

FurMark

Power consumption jumps a bit under FurMark. However, our 182W average is still lower than AMD’s 185W board power specification.

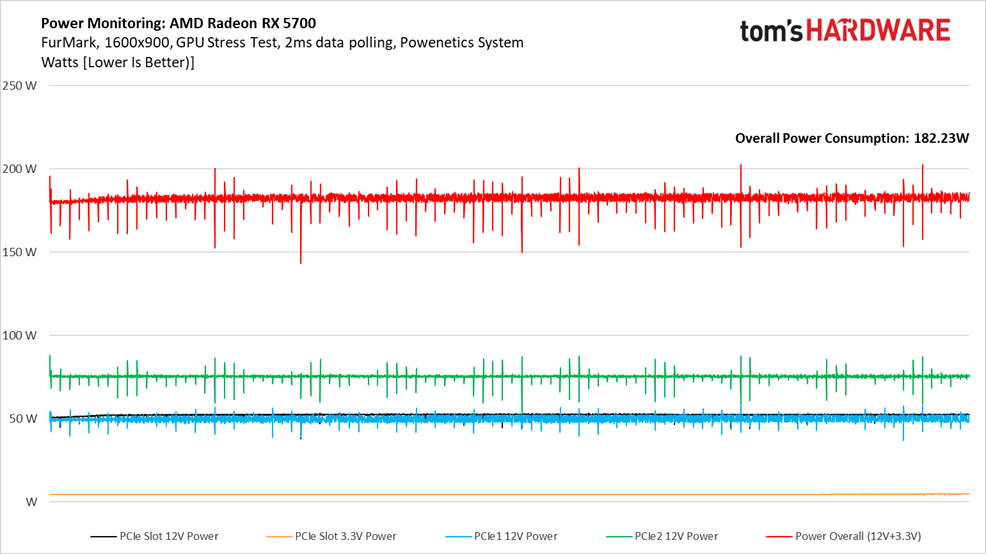

Firing up FurMark reveals dips and spikes over the two auxiliary power connectors that weren’t an issue in Metro. When we drill down into the data, current draw over the eight-pin connector dips by more than 1A for one sample and sometimes pops back up in the next 2ms sampling window. The six-pin connector does this to a lesser extent, while the PCIe slot’s +12V rail encounters periodic dips (but no spikes). Cumulatively, we end up with an overall line chart that appears more dramatic. Peak power consumption, however, only gets up to about 202W.
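A dip of that kind could be flagged in a logged current trace with something like the sketch below. It is an illustration only: the function name, the 1A threshold default, and the `trace` values are hypothetical, and it catches only dips that recover in the very next sample, which the text above says happens only some of the time.

```python
from typing import List

SAMPLE_PERIOD_MS = 2  # sampling window mentioned in the text


def find_single_sample_dips(amps: List[float],
                            threshold_a: float = 1.0) -> List[int]:
    """Return indices where current falls by more than threshold_a
    relative to the previous sample and rises by more than threshold_a
    again in the next sample."""
    dips = []
    for i in range(1, len(amps) - 1):
        drop = amps[i - 1] - amps[i]
        recovery = amps[i + 1] - amps[i]
        if drop > threshold_a and recovery > threshold_a:
            dips.append(i)
    return dips


# Made-up trace in amps; not data from this review.
trace = [8.0, 8.1, 6.8, 8.0, 7.9, 7.8, 6.5, 6.6]
dip_indices = find_single_sample_dips(trace)
dip_times_ms = [i * SAMPLE_PERIOD_MS for i in dip_indices]
print(dip_indices, dip_times_ms)
```

The second drop in the made-up trace is not reported because the current stays low afterward, so it is a step down and not a one-sample dip.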

We asked AMD to look at our data and help diagnose the cause of those spikes. Its official response is:

“The behavior you are seeing under a purely synthetic power virus (FurMark) workload is within our specified product parameters.

There are many different sensors and related algorithms that work to keep the GPU working within its prescribed product limits; the behavior depicted and described suggests that specific workload segments in FurMark are periodically causing a rapid ramp in temperature. When the algorithms respond, there is an intentional, designed-in delay which results in the observed ‘spike’.

The absolute values displayed are still within spec, showing that the electrical and thermal protection mechanisms built in to the RX 5700 series are working per design.”

Radeon RX 5700’s power consumption in FurMark is just barely higher than that of GeForce RTX 2060 Super.

Here’s the data we were talking about under the power graph. Current draw over the auxiliary power connectors is affected in a way not seen in our gaming workload, and the PCIe slot’s +12V rail shows signs of the same behavior.


ICWiener

Honestly I'd pay the extra just for Nvidia's decent cooler, let alone power consumption, RTX, etc. Hard pass on another blower.

justin.m.beauvais

Dear AMD,

Why? Wasn't your line GTX 1080 performance at RX 580 prices? *points at Navi* This is not that.

Regrettably yours,

Your Fans

alextheblue

ICWiener said: Honestly I'd pay the extra just for Nvidia's decent cooler, let alone power consumption, RTX, etc. Hard pass on another blower.

I read the review, don't know what you mean by "let alone power consumption". Efficiency is virtually the same. I personally prefer blowers as long as they're not crazy loud, and the review says they're not so I'm all for it. If you DON'T like blowers, I'm sure there will be third-party coolers that improve cooling performance and acoustics further (and dump heat into the chassis like crazy).

justin.m.beauvais said: Dear AMD, Why? Wasn't your line GTX 1080 performance at RX 580 prices? *points at Navi* This is not that. Regrettably yours, Your Fans

Who ever promised that? They are 10-11% faster than same-priced Nvidia models. They're not going to drop 5700 $100 bucks when they're already ahead by 11%, even though I would love lower prices, there's no incentive for them to do so.

face-plants

I'm pleasantly surprised by both the performance AND the last-minute drop in price. Personally I don't like blower style cards and will be on the lookout for third-party offerings with more traditional dual fan coolers. This is totally personal preference as I tend to build in bigger cases with plenty of ventilation to deal with the extra heat. If the prices don't get too out of line from the AIBs then I'll gladly give a few of these cards a go in upcoming builds. I'm also expecting a decent improvement in thermals so hopefully these FE cards aren't exemplary of the best cooling you can get on air. The prospect of building all AMD machines in the coming months has got me totally nerding out.

kinggremlin

alextheblue said: I read the review, don't know what you mean by "let alone power consumption". Efficiency is virtually the same. I personally prefer blowers as long as they're not crazy loud, and the review says they're not so I'm all for it. If you DON'T like blowers, I'm sure there will be third-party coolers that improve cooling performance and acoustics further (and dump heat into the chassis like crazy).

Who ever promised that? They are 10-11% faster than same-priced Nvidia models. They're not going to drop 5700 $100 bucks when they're already ahead by 11%, even though I would love lower prices, there's no incentive for them to do so.

You didn't read the review. Ignoring the Furmark results which don't mirror any realworld scenario. The 5700xt is slower than the 2070 Super while using more power and running over 10degrees C hotter in gaming.

alextheblue

kinggremlin said: You didn't read the review. Ignoring the Furmark results which don't mirror any realworld scenario. The 5700xt is slower than the 2070 Super while using more power and running over 10degrees C hotter in gaming.

Yes, I did, stop being obstinate. Ah, I get it, you must have read "efficiency" in my post as "power consumption", or something. Why are you comparing it to the 2070 Super? The 5700 is the same price as the 2060 and 11-12% faster on average (between TH and AT), and the 5700XT is the same price as the 2060 Super and roughly 10-11% (TH-AT) faster. Yes, they use more power, but they're faster. As a result the efficiency is pretty close. It varies based on workload (game title), but I have read the reviews here and AT (so far, haven't looked at a third review yet). Here:

https://www.anandtech.com/show/14618/the-amd-radeon-rx-5700-xt-rx-5700-review/15

Factor in the performance gain (in AT's suite it was 11% for the XT and 12 for vanilla 5700) and you'll see their power consumption is pretty good. Average that with TH's results in Metro: LL and the final efficiency is pretty neck and neck with their direct competitors.

I didn't say they didn't run hot. For people that don't like blowers (as I already said) there will be cooler, quieter third party options.

digitalgriffin

alextheblue said: I read the review, don't know what you mean by "let alone power consumption". Efficiency is virtually the same. I personally prefer blowers as long as they're not crazy loud, and the review says they're not so I'm all for it. If you DON'T like blowers, I'm sure there will be third-party coolers that improve cooling performance and acoustics further (and dump heat into the chassis like crazy).

Who ever promised that? They are 10-11% faster than same-priced Nvidia models. They're not going to drop 5700 $100 bucks when they're already ahead by 11%, even though I would love lower prices, there's no incentive for them to do so.

In all honesty, all these cards are expensive. $350 would have been the most I wanted to pay for a 5700XT. And the card does run hot. Pascal was a small move up in prices. Turing was just insane pricing wise.

That being said I bought one today and said "F"-it. I just don't like NVIDIA's business ethics. It will get the job done for two to three years.

randomizer

How many engineers looked at those fan curves in lab testing and thought they were good?

I think this release is a bit underwhelming. Local pricing here makes the RX 5700 fairly unattractive compared to a 2060, but the XT is better positioned against the 2070. Not sure it's really worthwhile upgrading a 970 though.

daglesj

So for the many of us on a RX480?...

Worth it? Could someone not dig out the previous AMD midrange value demon to test against? C'mon...