
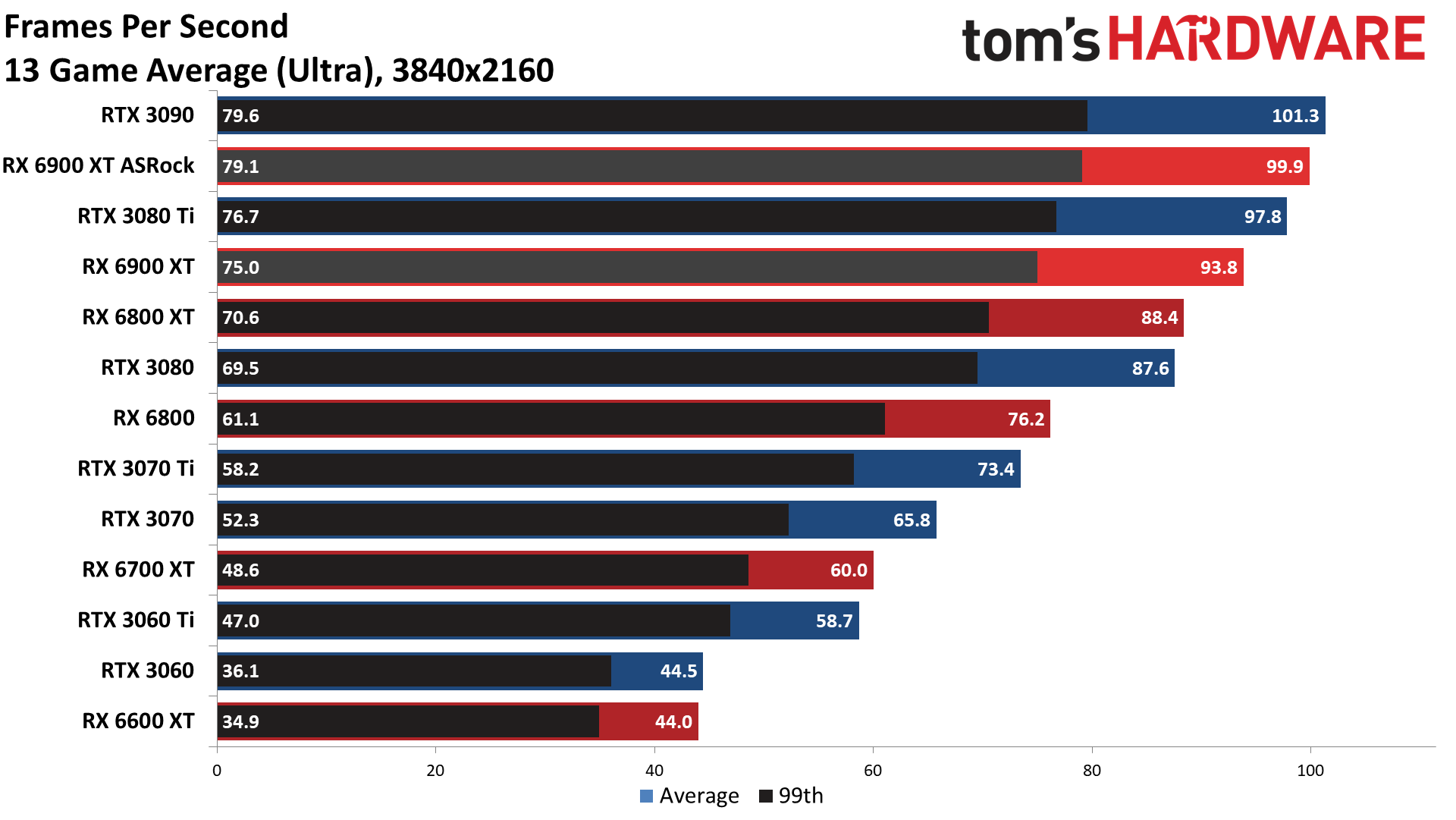

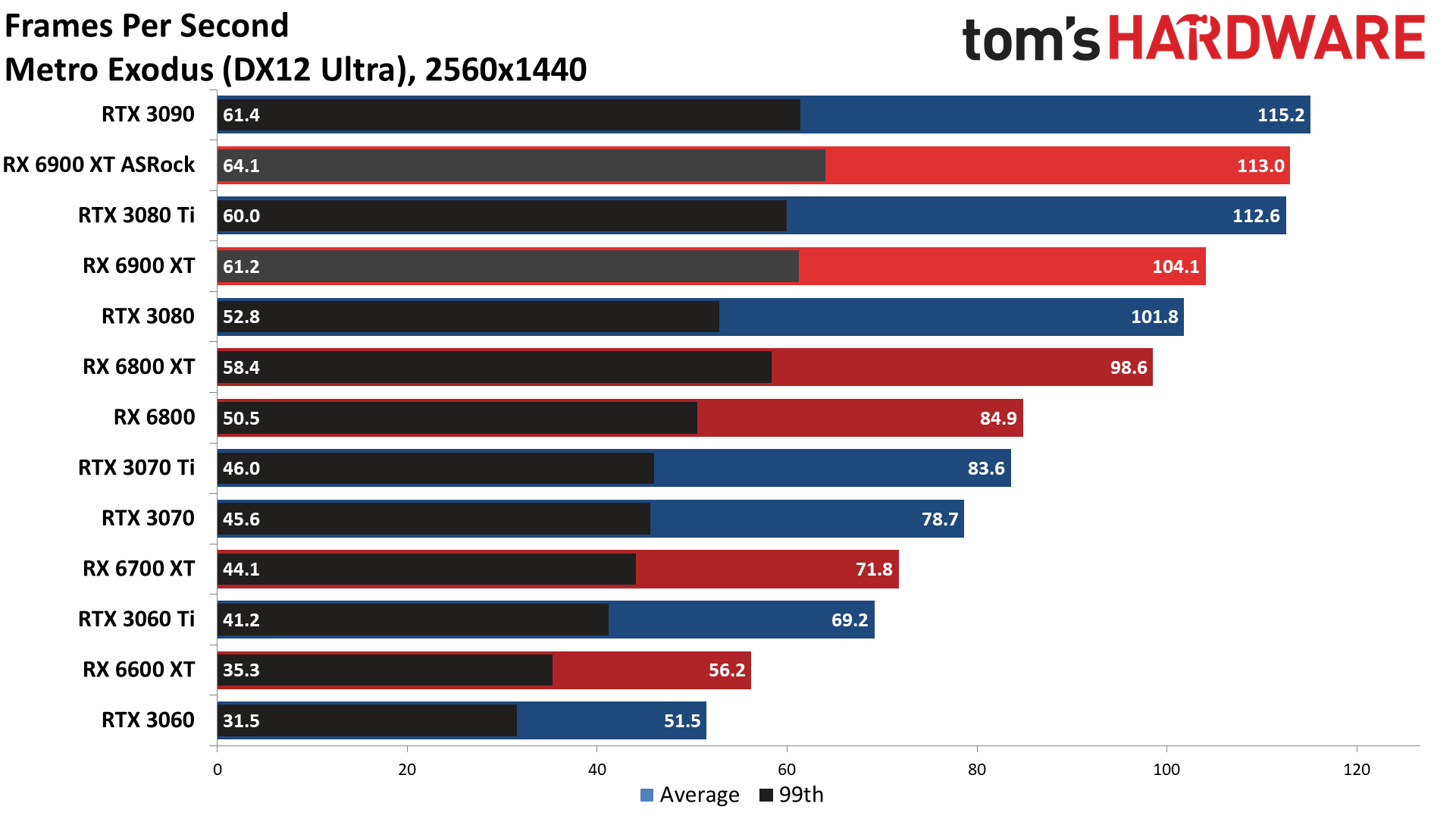

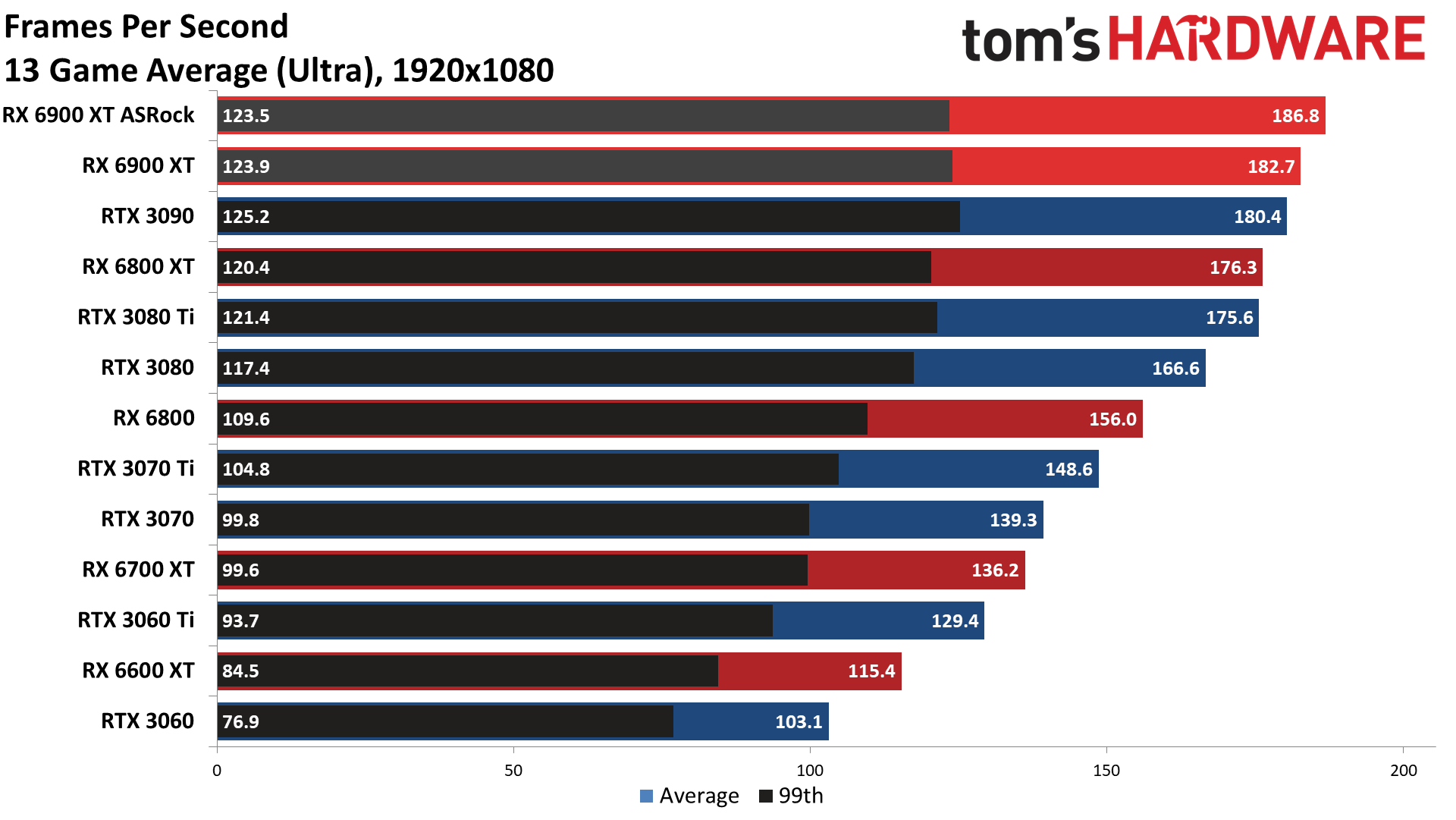

With a large cooler and a healthy factory overclock, we expected the ASRock RX 6900 XT Formula to easily beat the reference model across our test suite. It did, but our last round of testing on the reference RX 6900 XT is now about four months old, and a few games actually ran slower on the Formula, likely due to driver and/or game updates. There were also a few larger jumps in performance, especially at 4K, which may also be partly thanks to updated drivers.

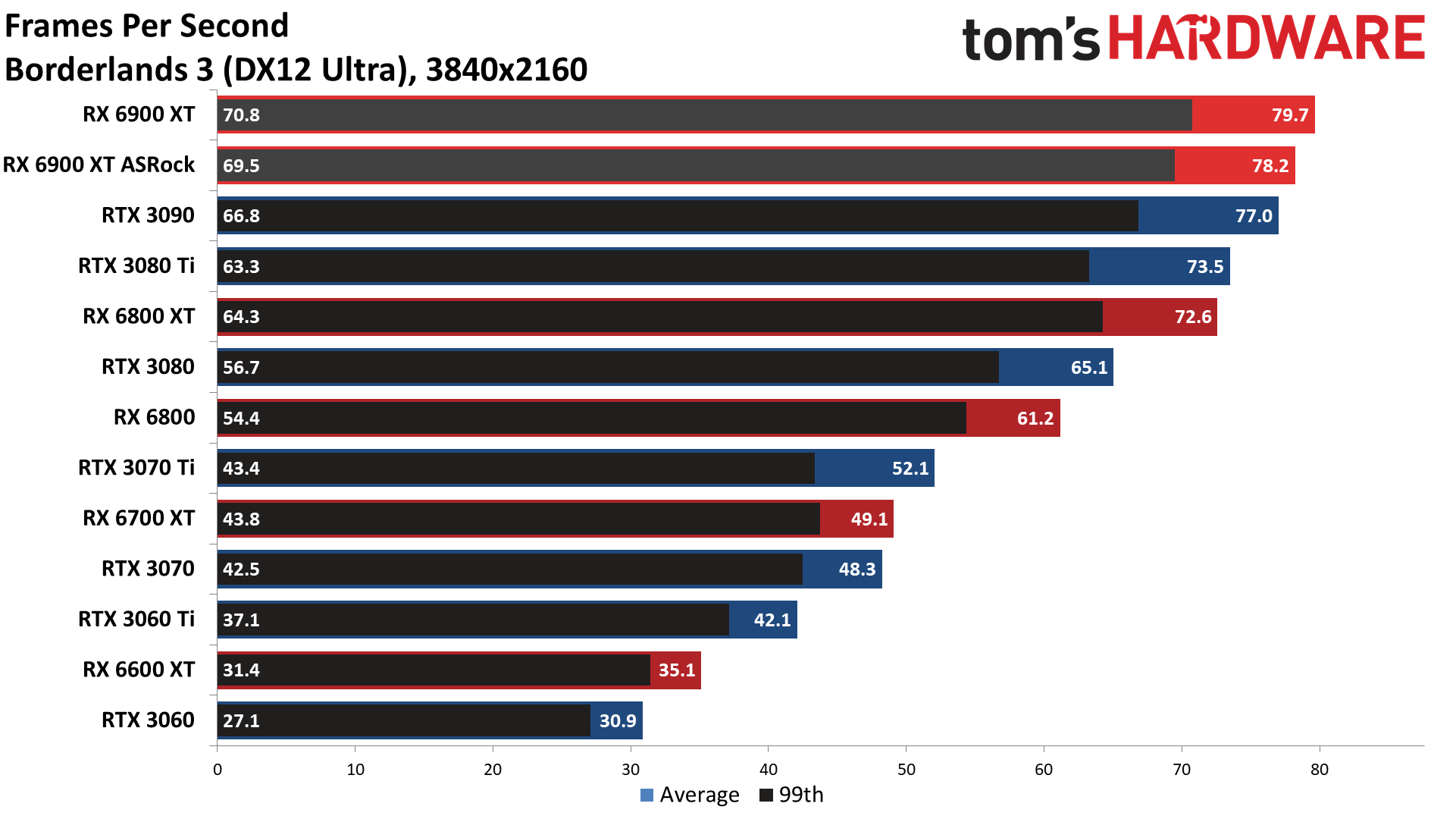

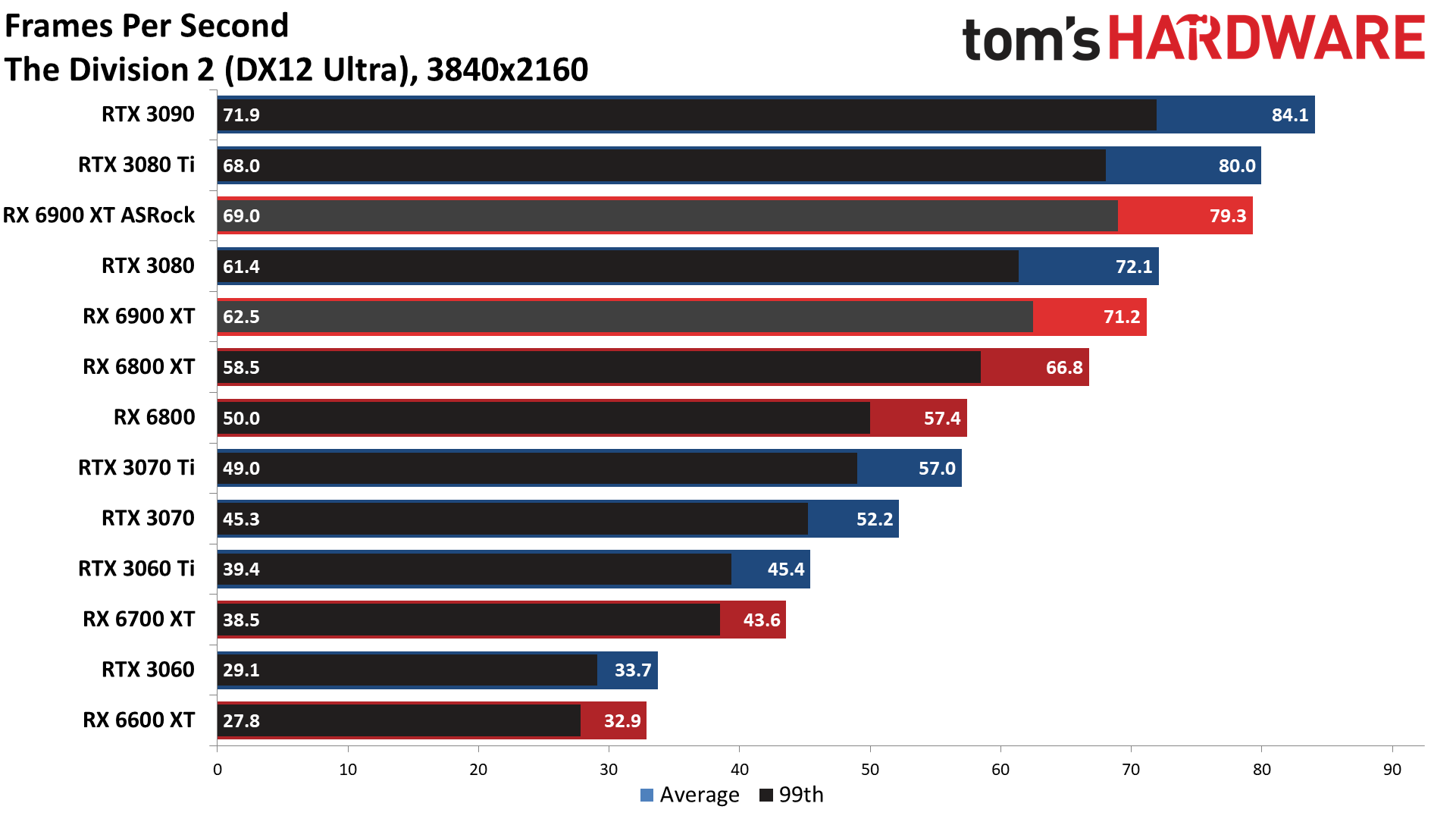

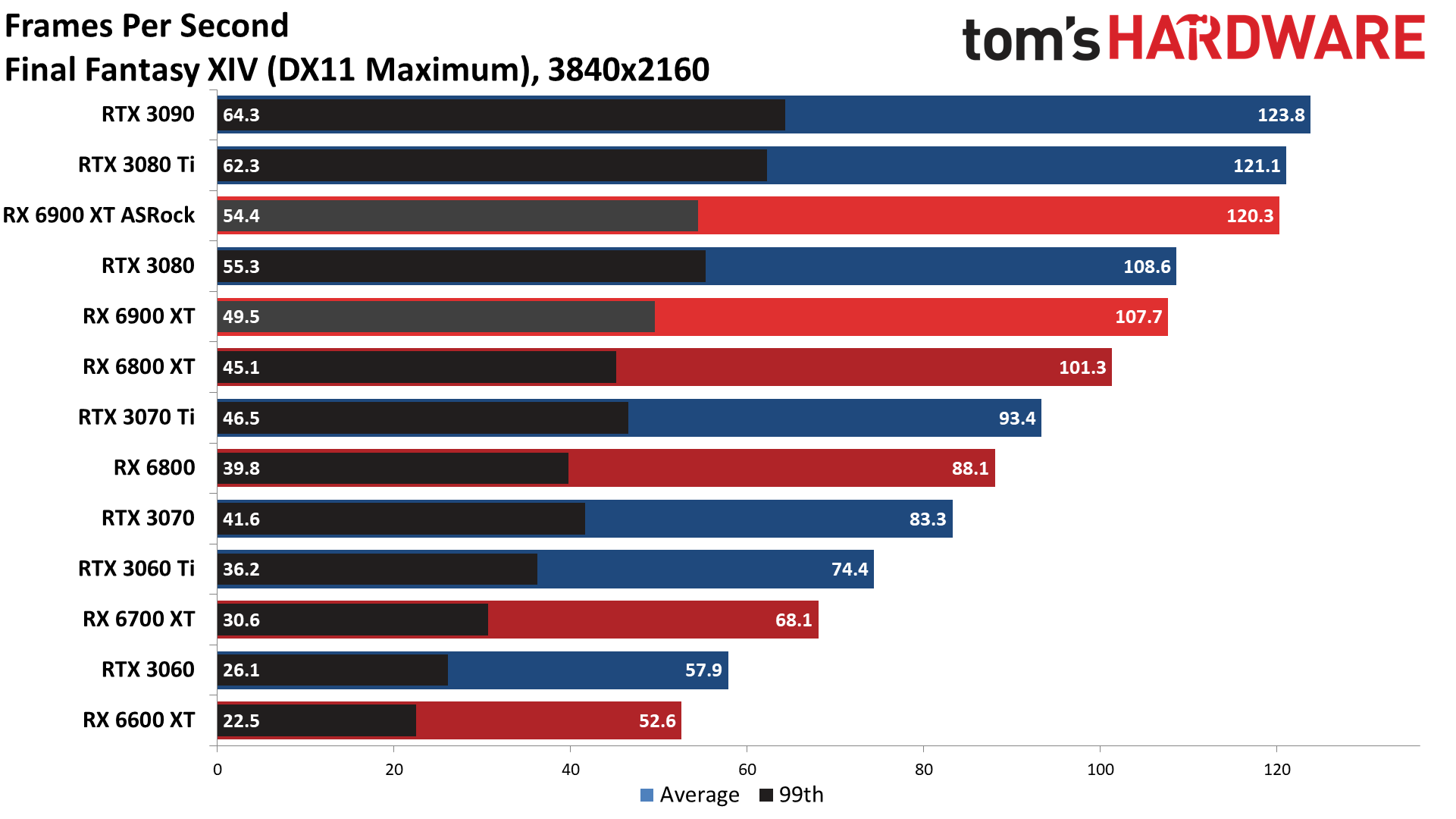

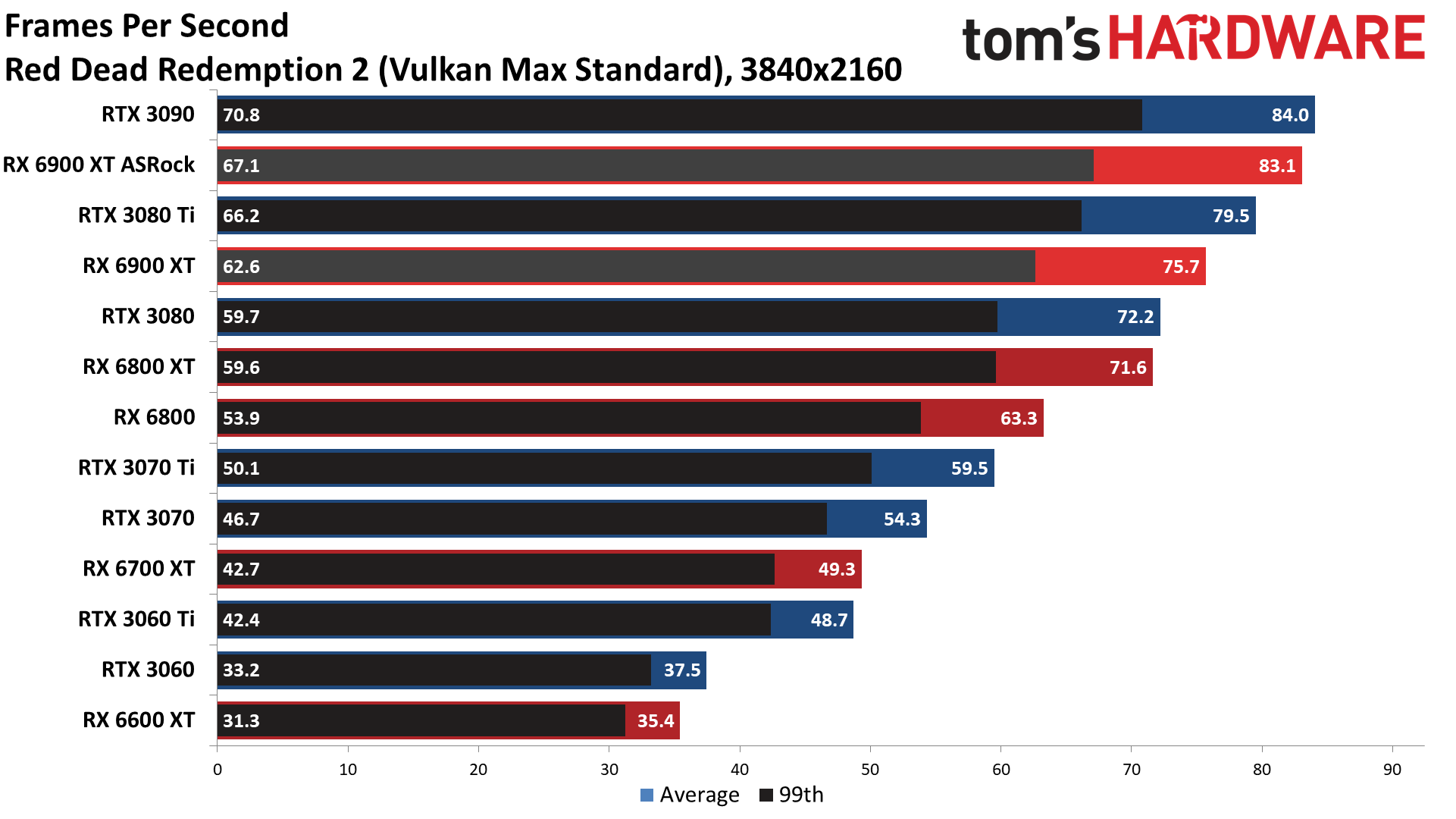

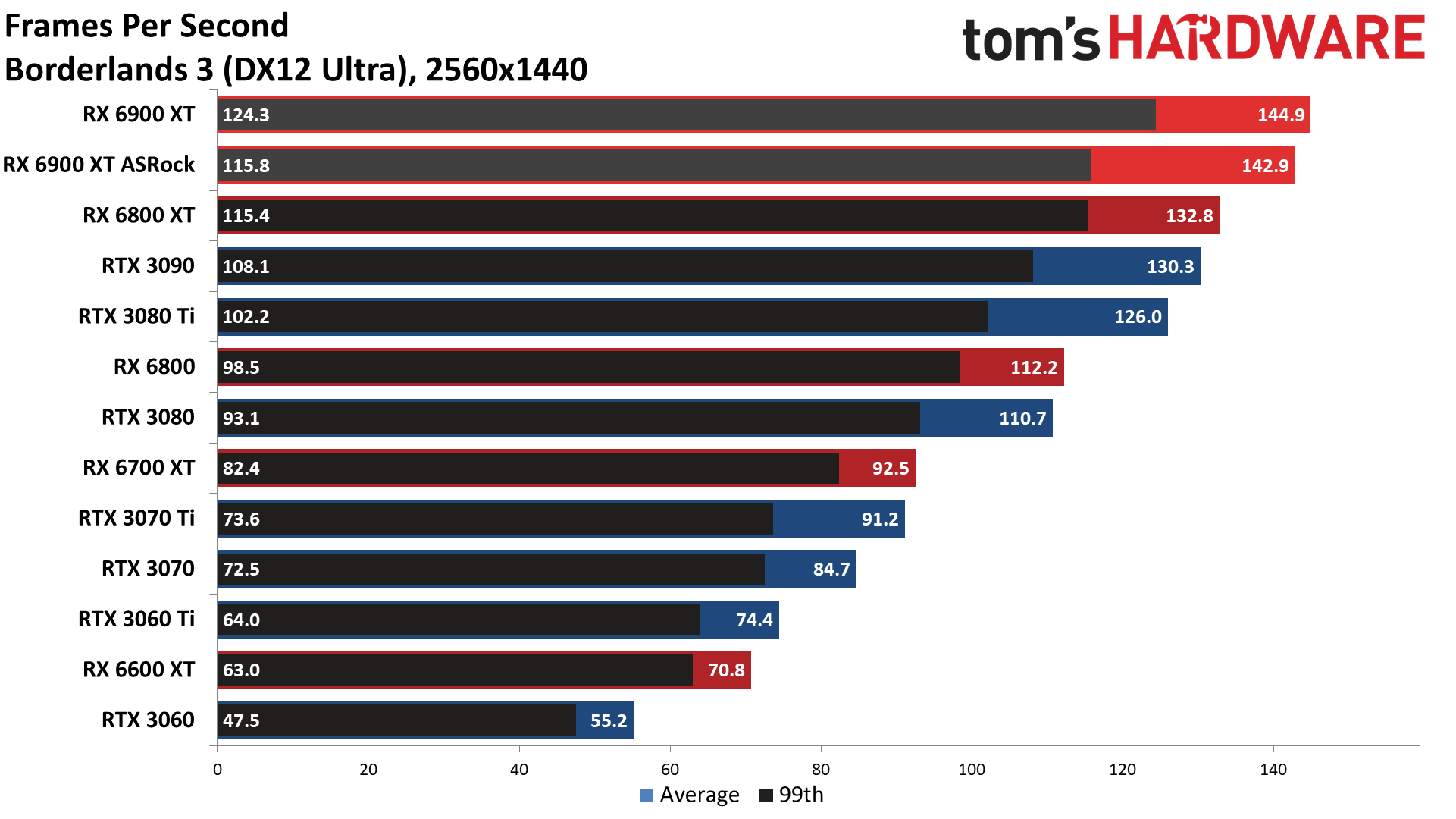

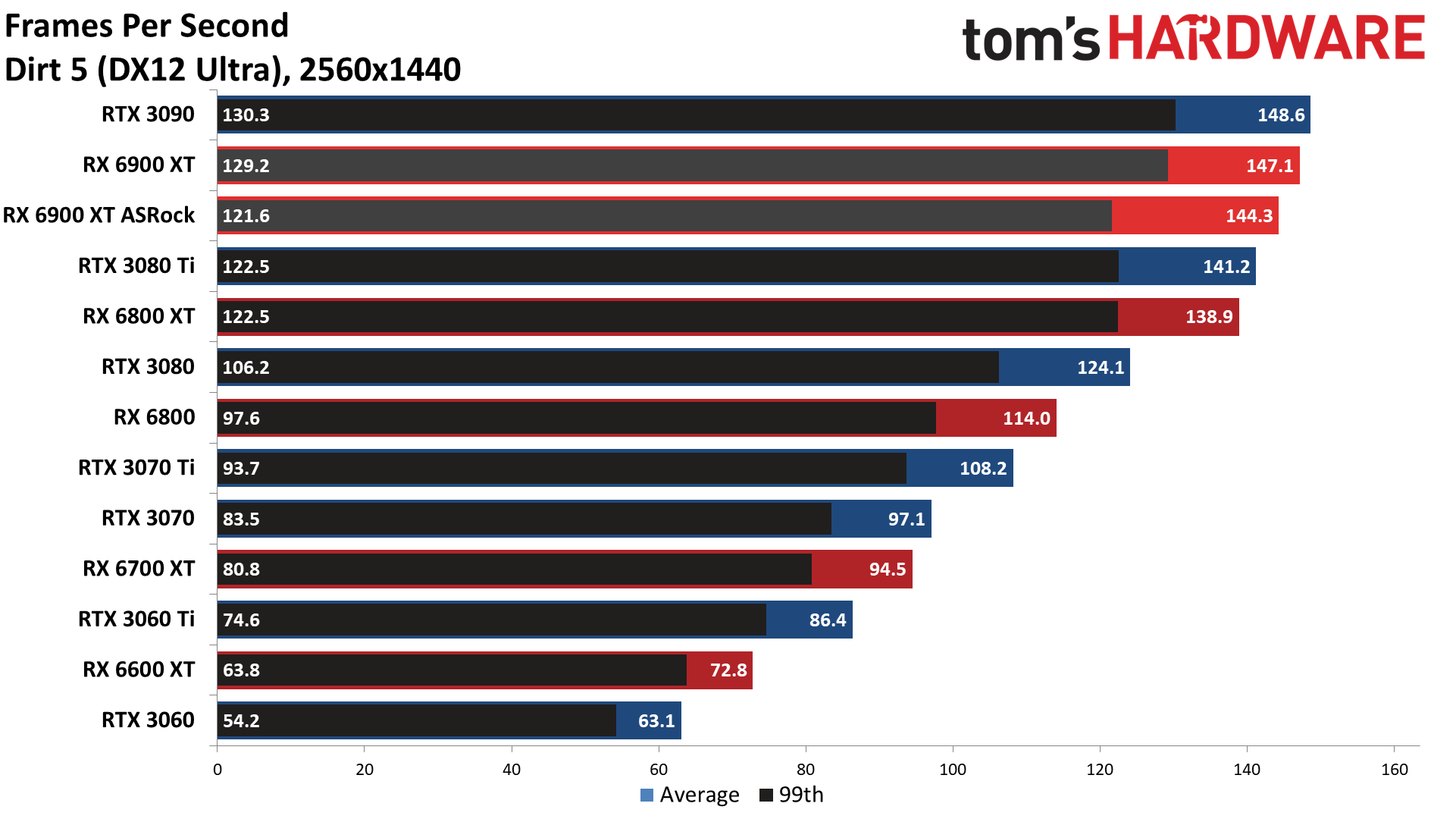

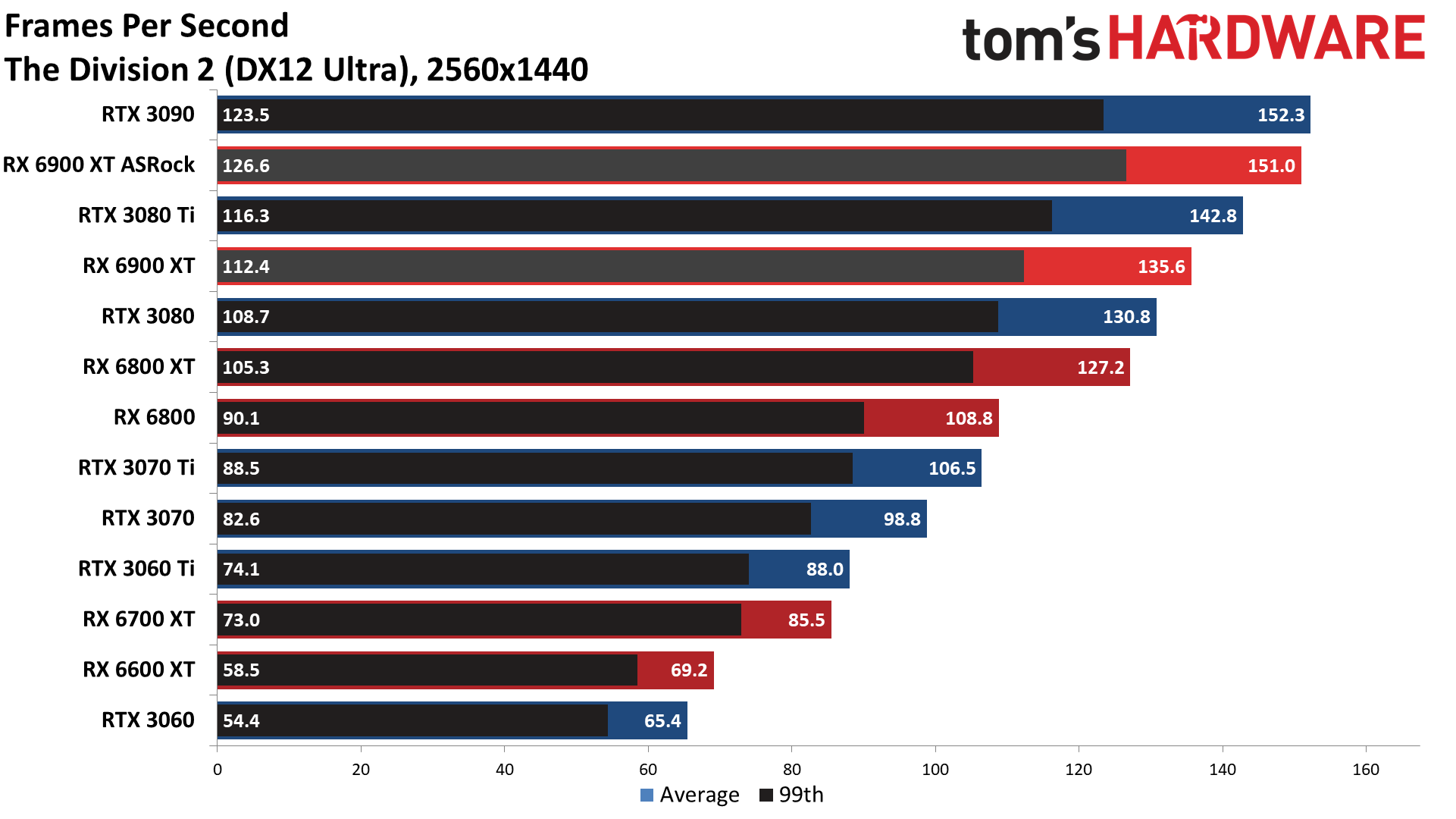

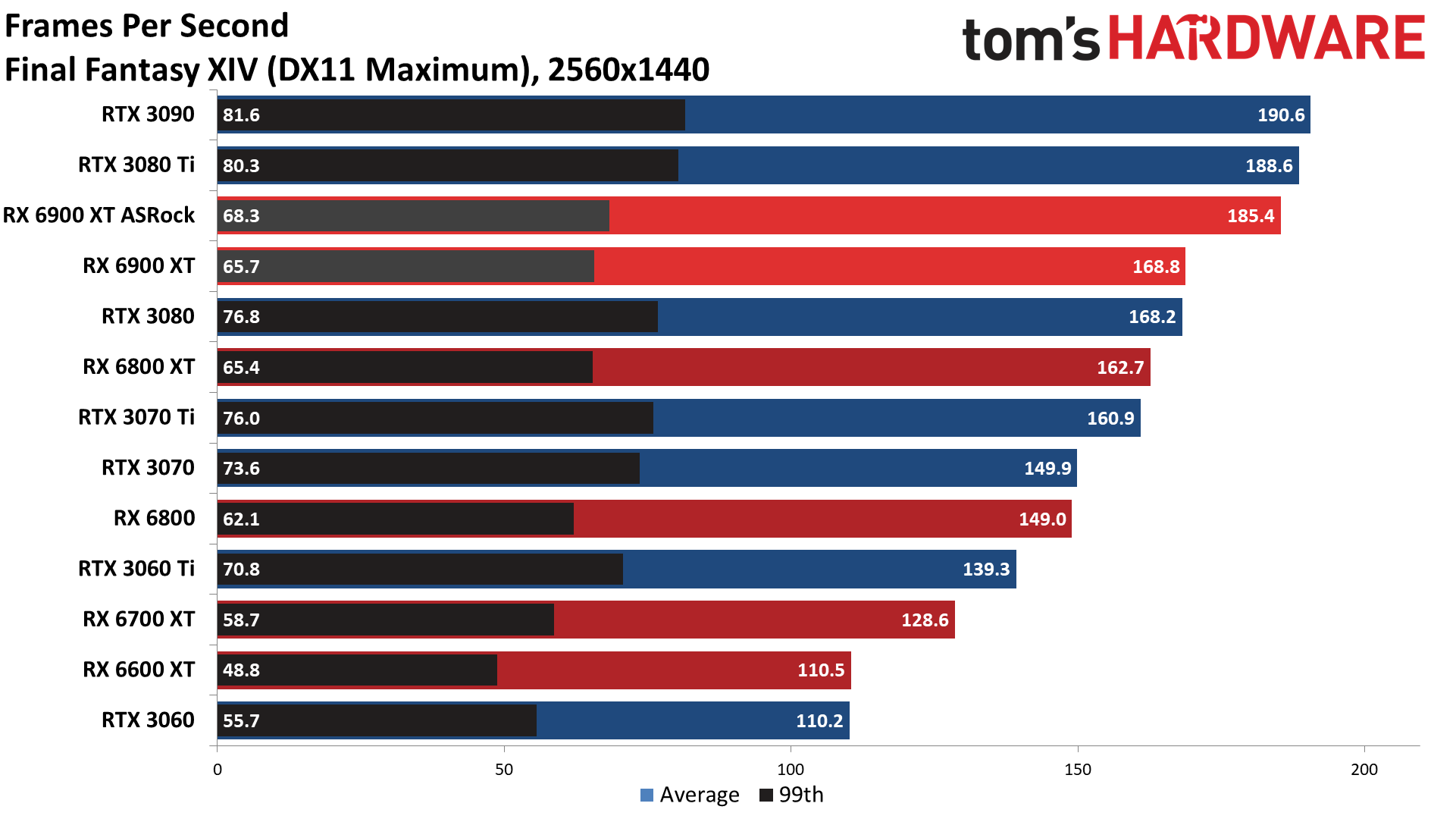

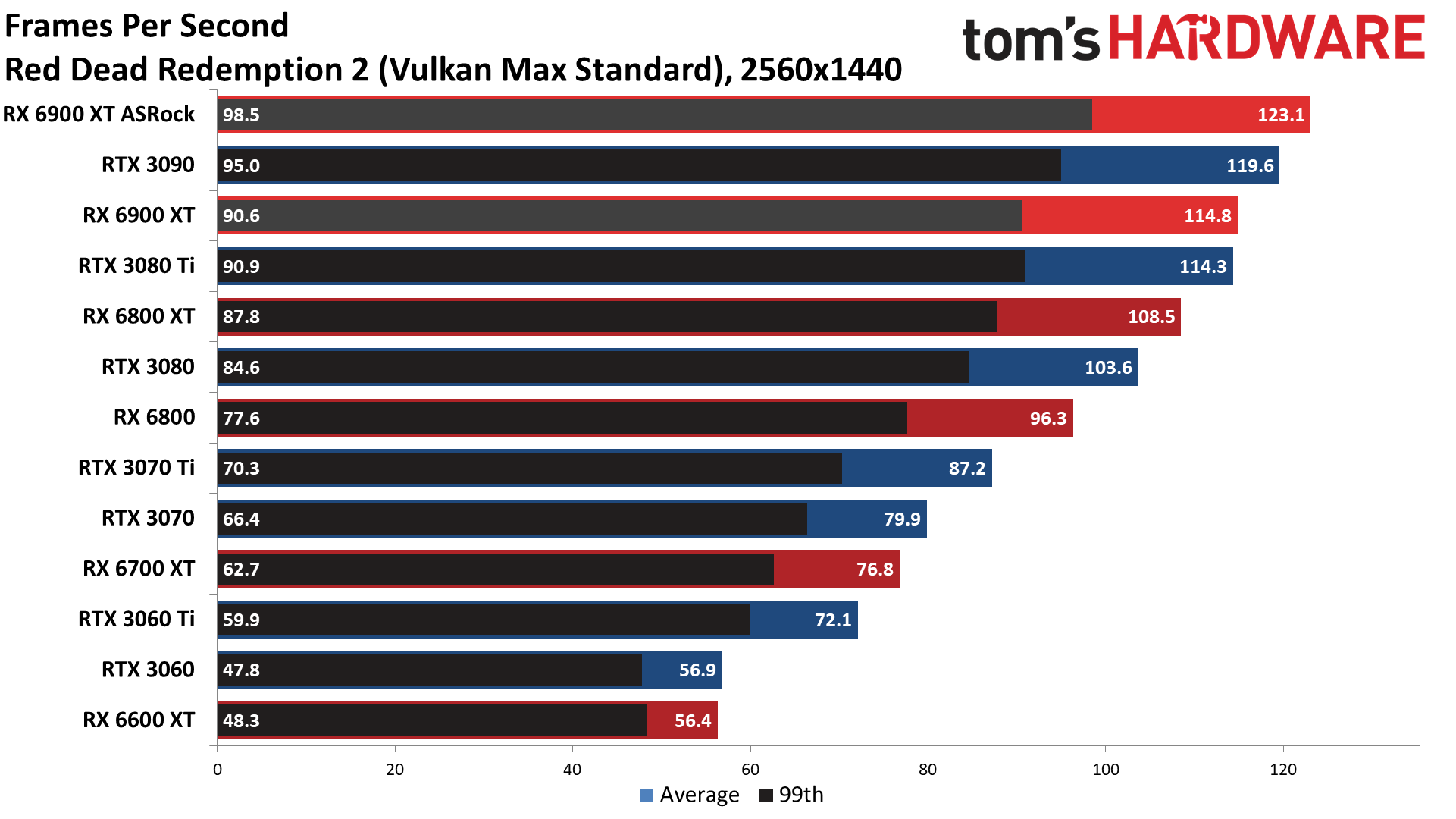

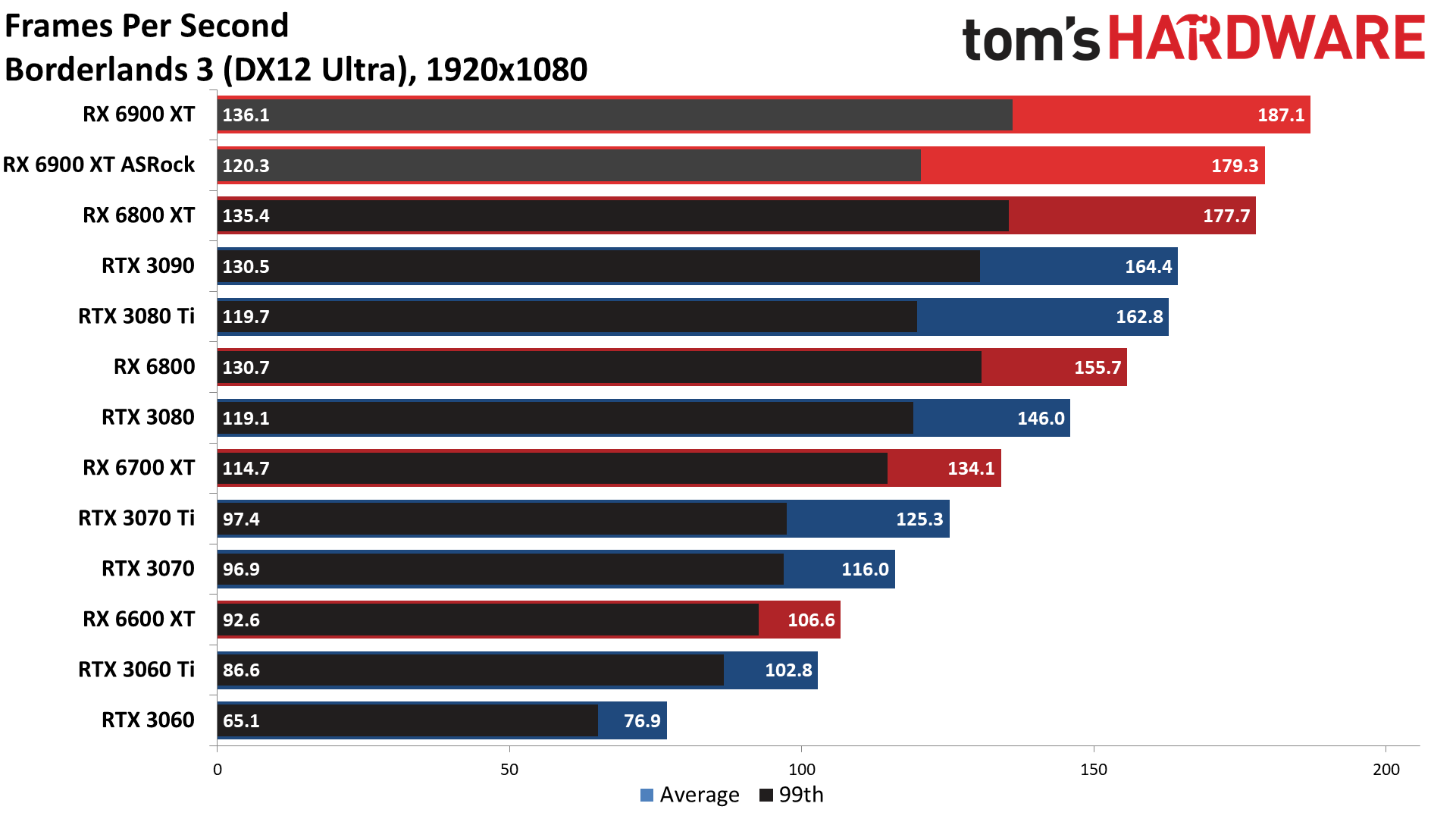

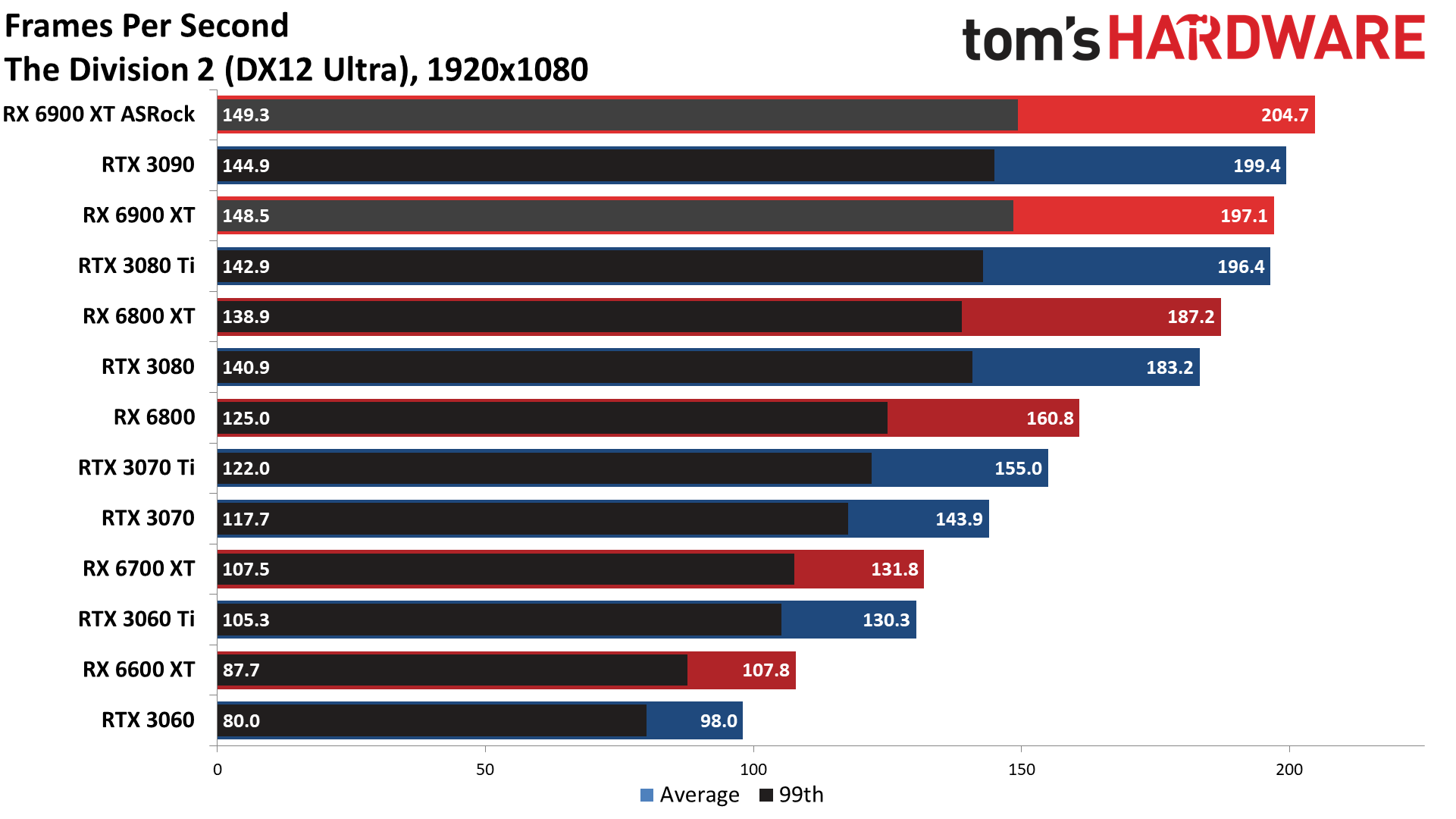

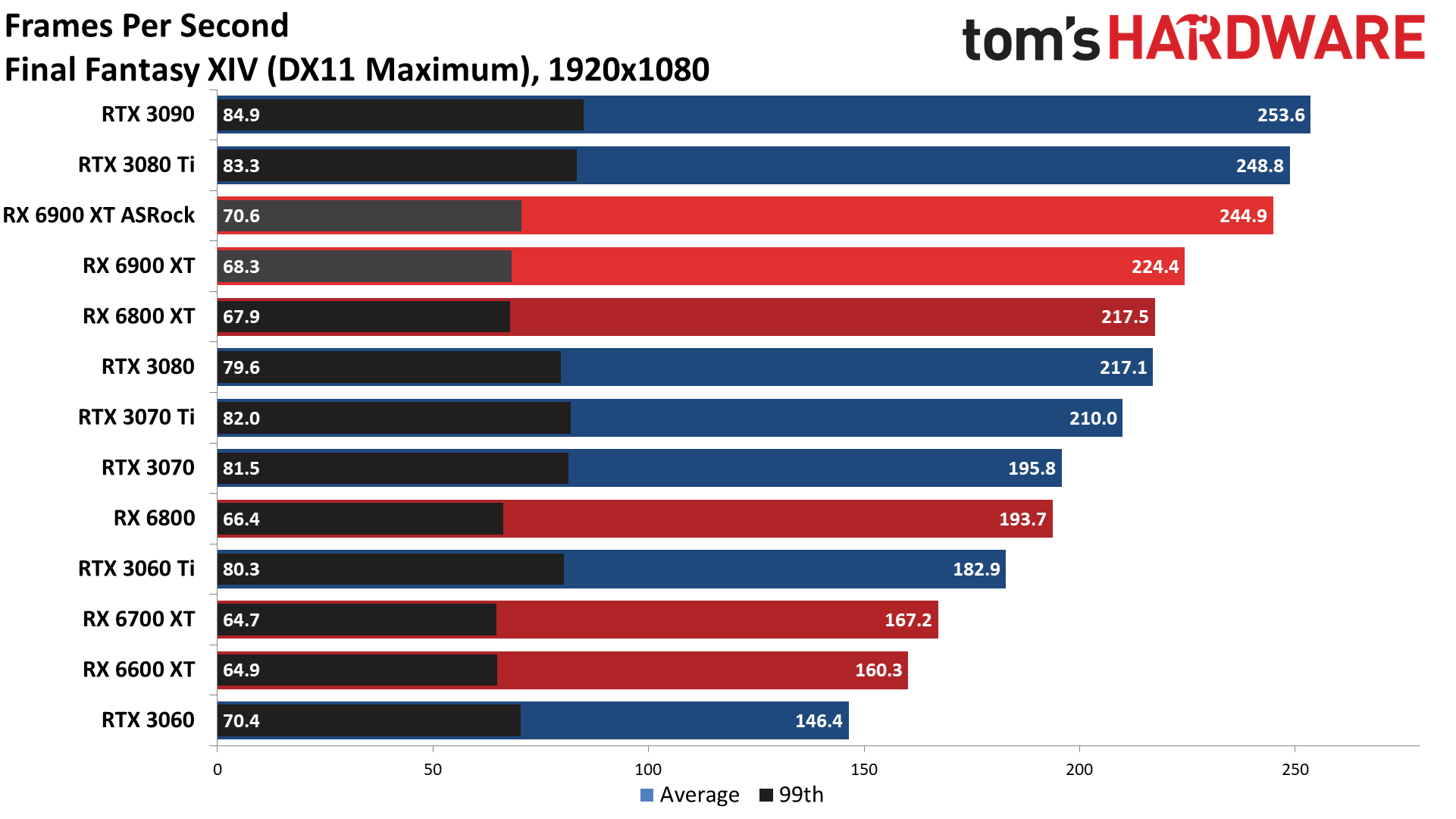

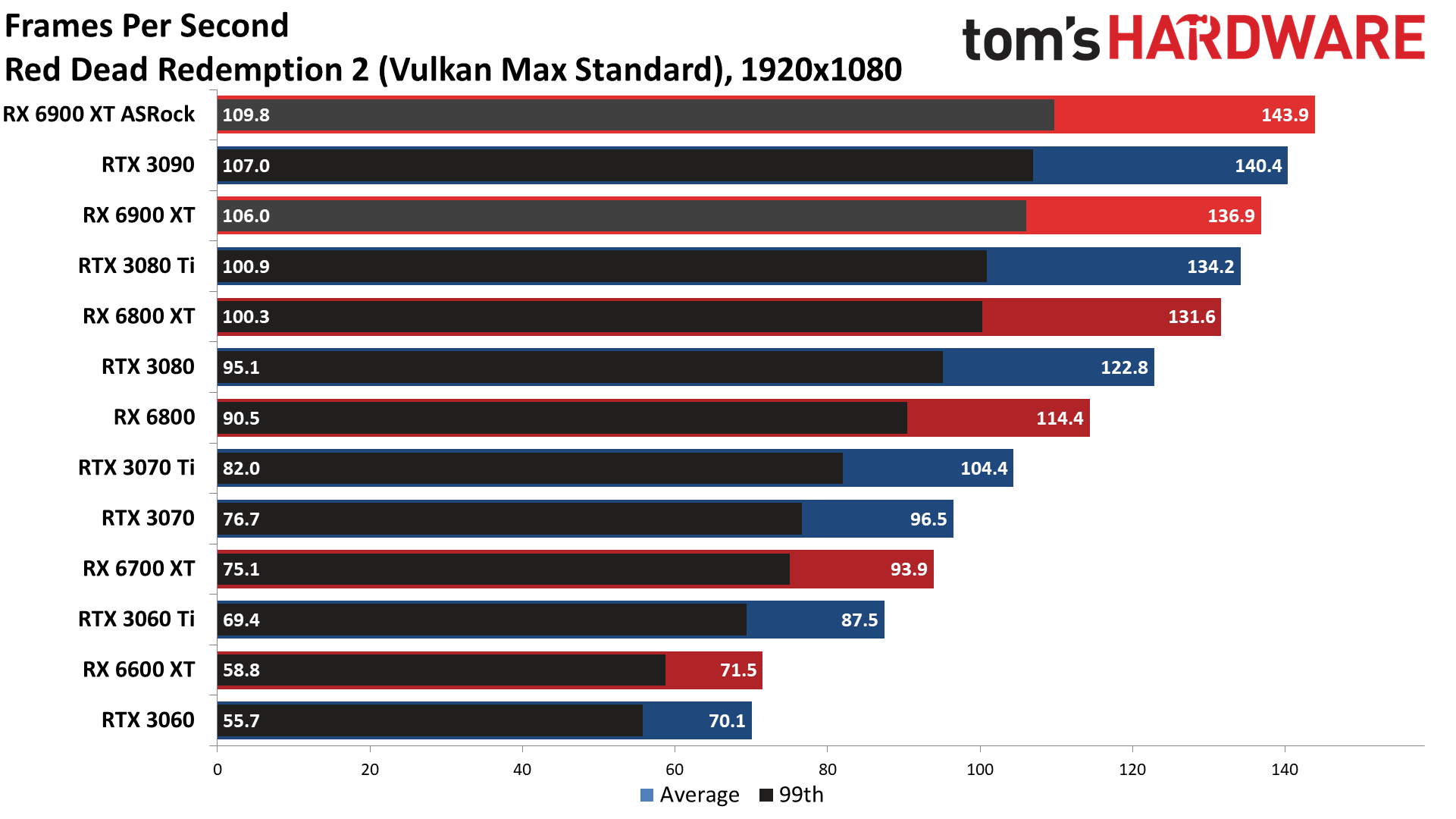

Across our test suite, the ASRock Formula was 6% faster than the reference RX 6900 XT. Only Borderlands 3 showed a slight 2% drop in performance, while the remaining dozen games improved by anywhere from 3% to 12%. Final Fantasy XIV, The Division 2, and Red Dead Redemption 2 all showed double-digit gains of 10–12%.
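An overall figure like "6% faster" condenses a dozen-plus per-game results into one number. One common way to do that, and an assumption here since this page doesn't spell out the exact method, is to take the geometric mean of the per-game fps ratios, which keeps a single high-fps game from dominating the average. A minimal Python sketch, with hypothetical game names and fps values that are not the review's measurements:

```python
import math


def geometric_mean(values):
    """Geometric mean of positive numbers, computed via logs for numerical stability."""
    if not values:
        raise ValueError("need at least one value")
    if any(v <= 0 for v in values):
        raise ValueError("all values must be positive")
    return math.exp(sum(math.log(v) for v in values) / len(values))


def overall_ratio(card_fps, baseline_fps):
    """Geometric mean of per-game fps ratios (card / baseline).

    A result of 1.06 would mean the card is 6% faster overall.
    """
    if card_fps.keys() != baseline_fps.keys():
        raise ValueError("both cards must be tested on the same games")
    return geometric_mean([card_fps[g] / baseline_fps[g] for g in card_fps])


# Hypothetical fps numbers for illustration only, NOT the review's data.
card = {"Game A": 112.0, "Game B": 98.0, "Game C": 105.0}
reference = {"Game A": 100.0, "Game B": 100.0, "Game C": 100.0}

ratio = overall_ratio(card, reference)
print(f"Overall: {(ratio - 1) * 100:+.1f}%")
```

The geometric mean treats gains and losses symmetrically: a card that is twice as fast in one game and half as fast in another comes out exactly even (a ratio of 1.0), whereas a plain arithmetic mean of those two ratios would report a misleading 25% lead.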

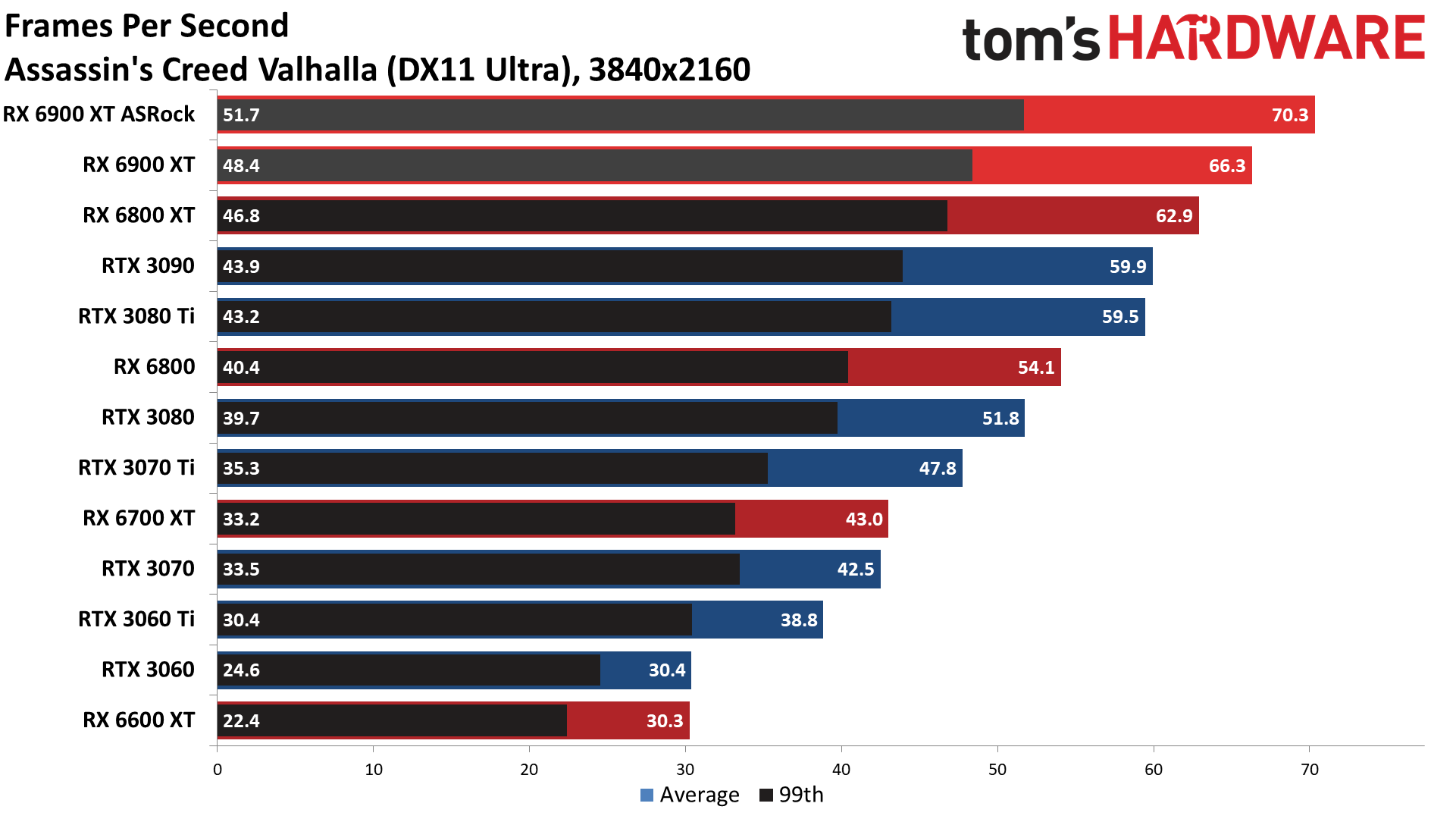

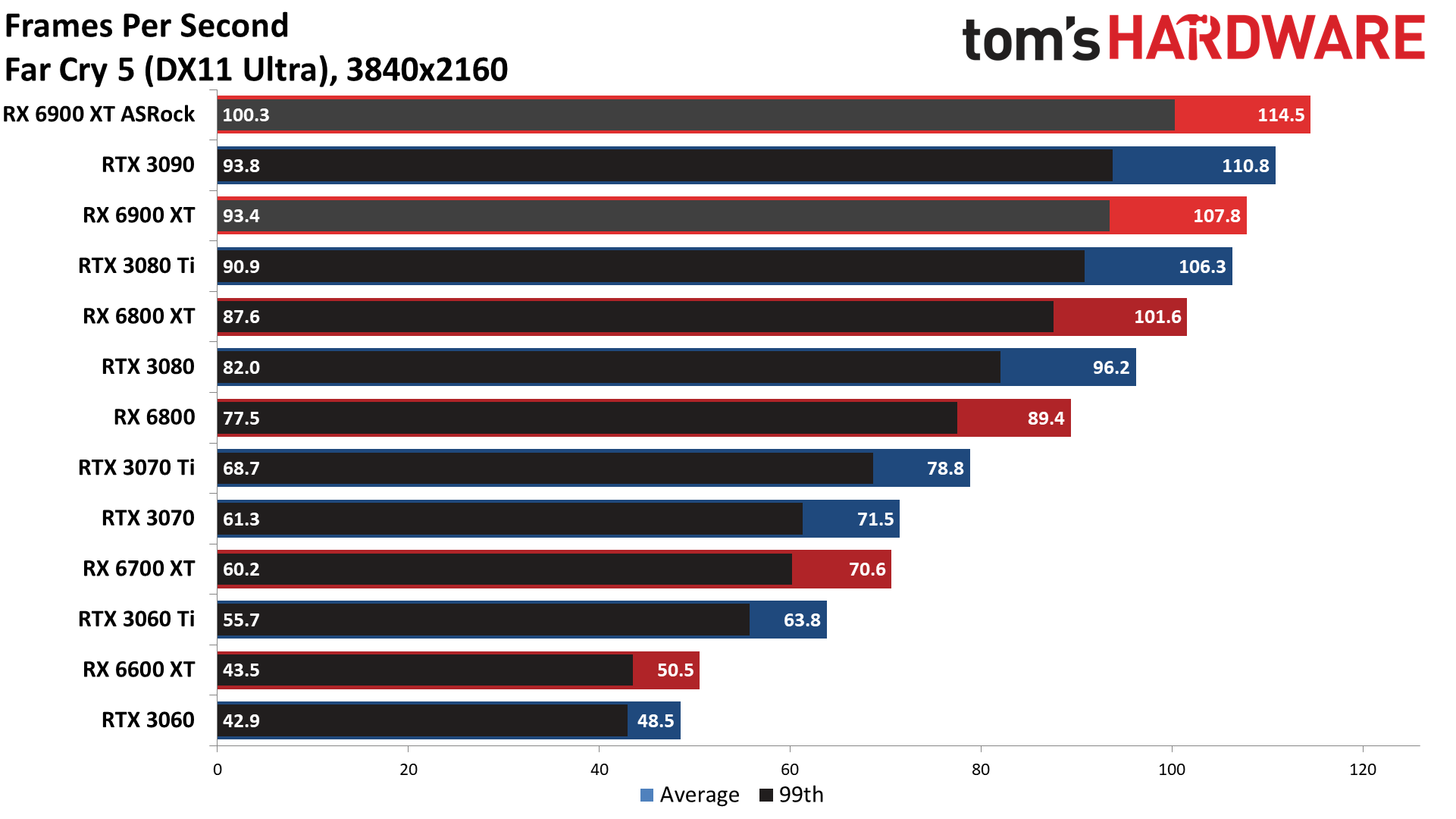

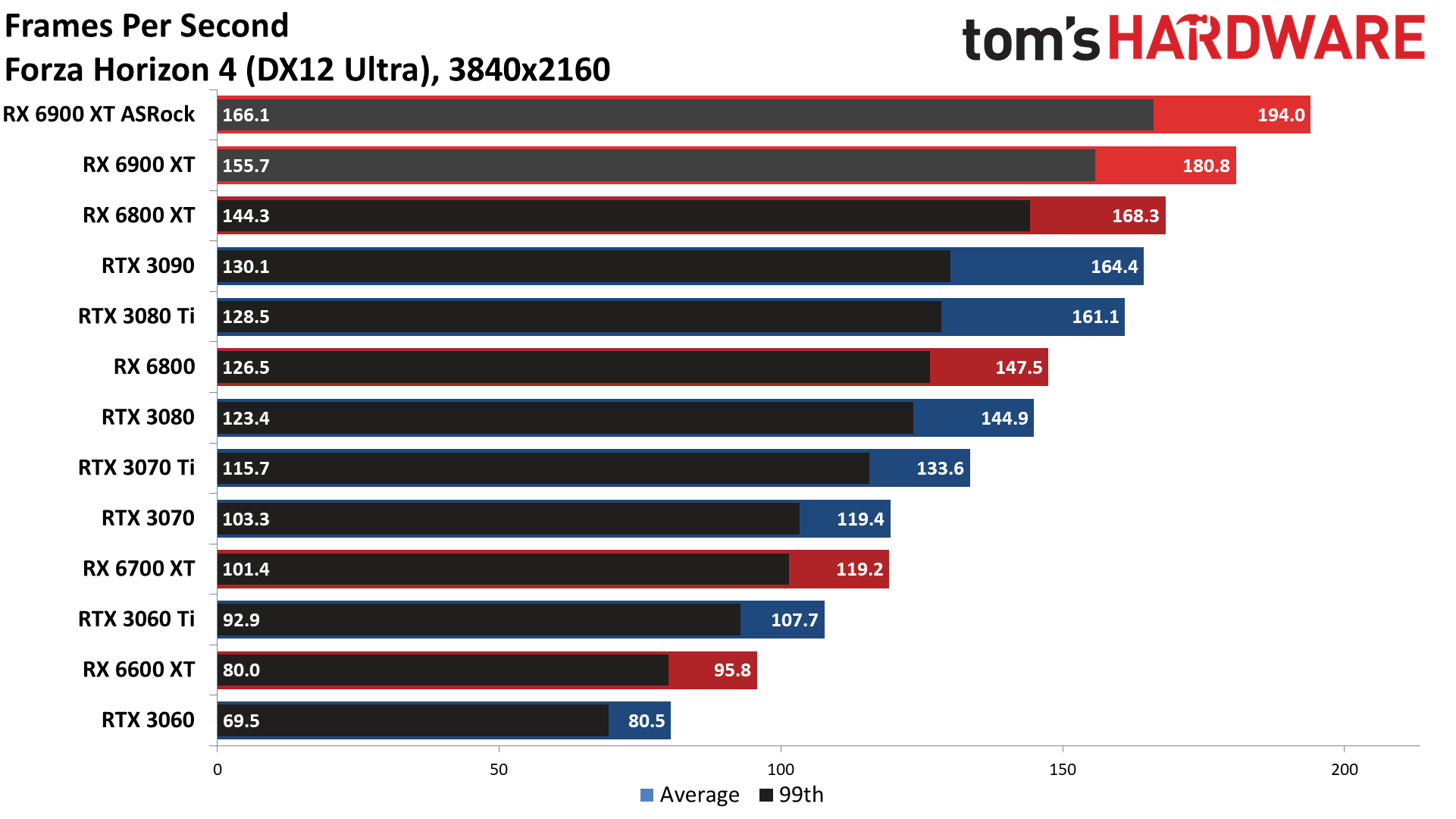

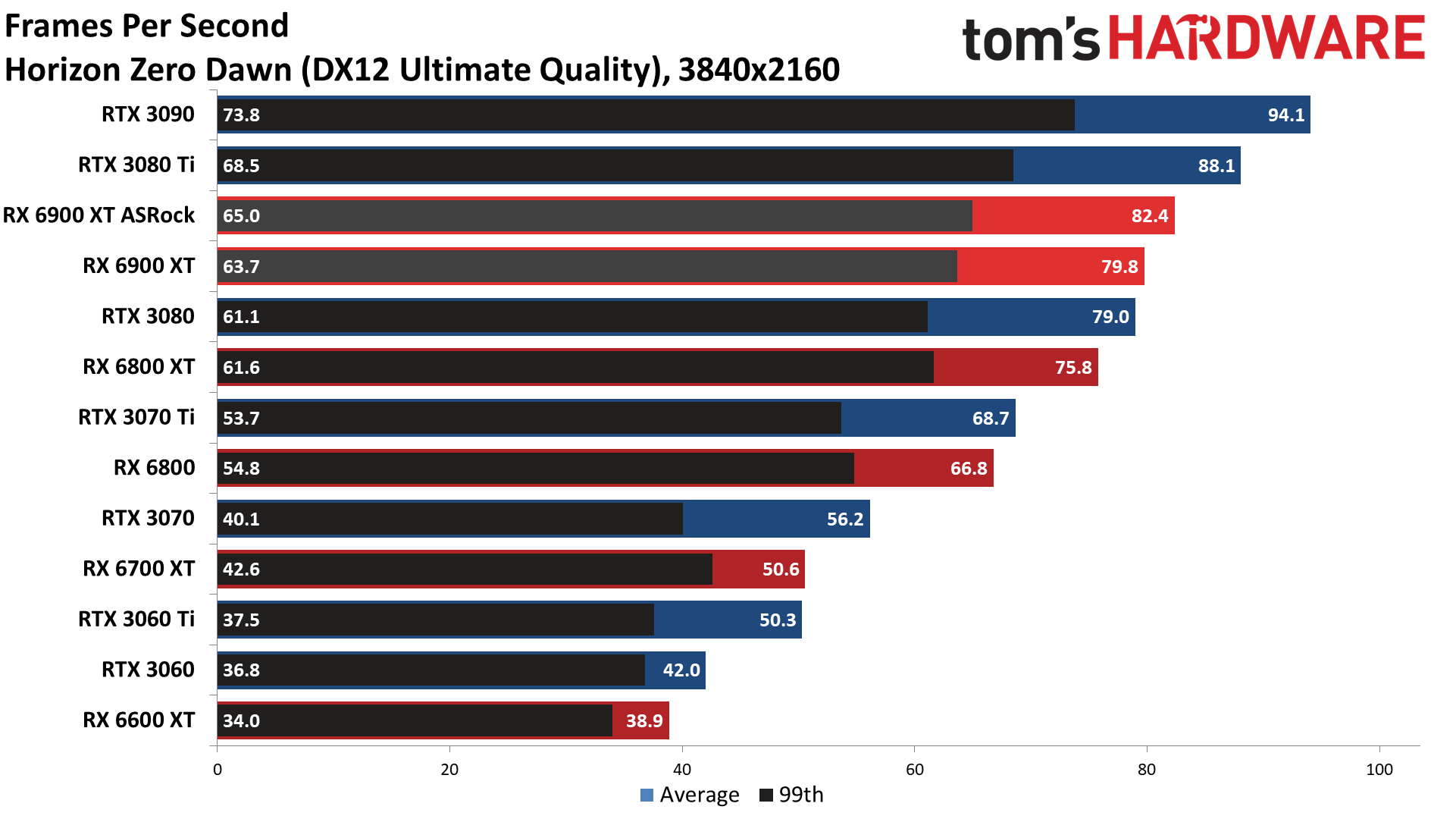

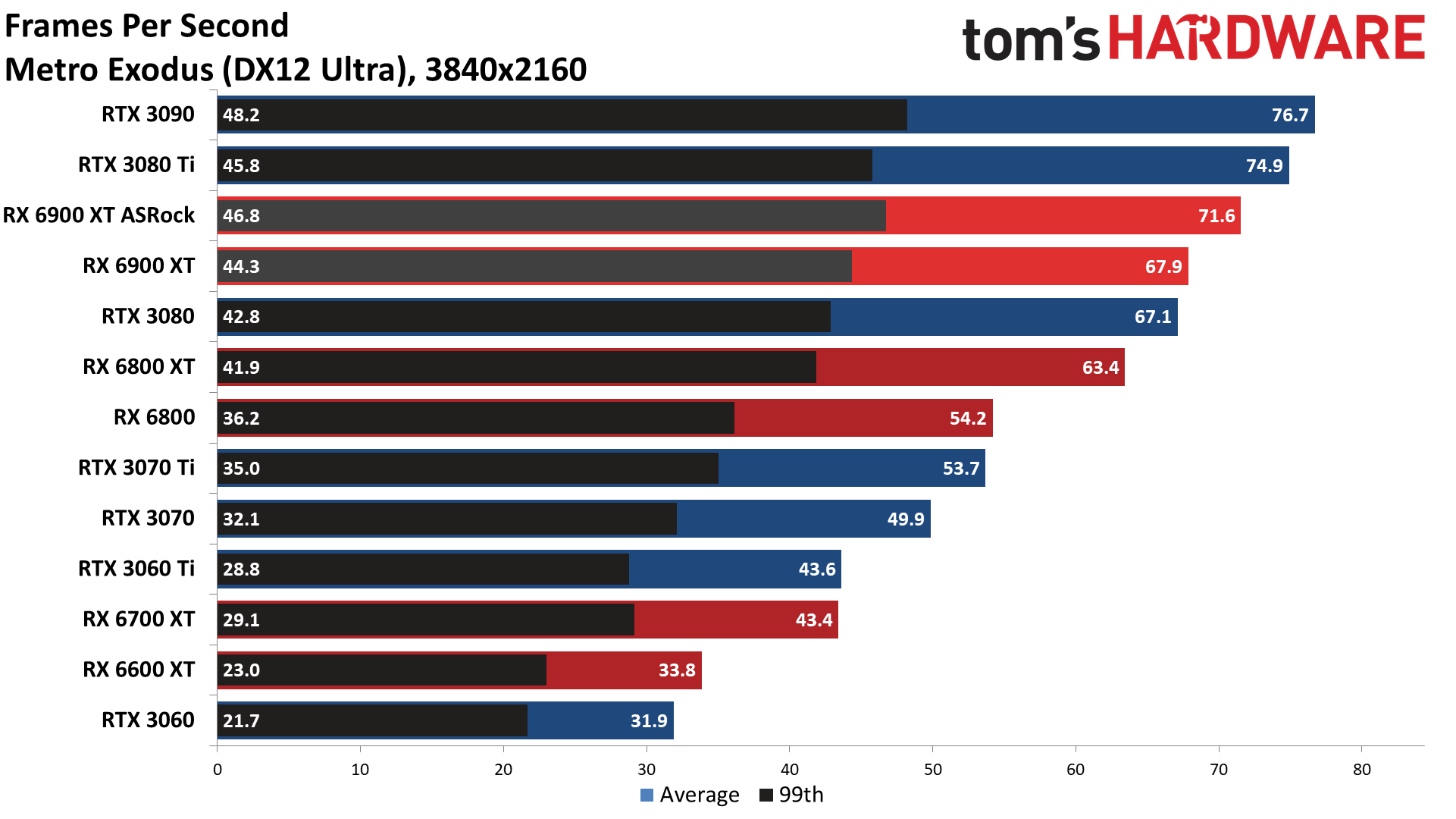

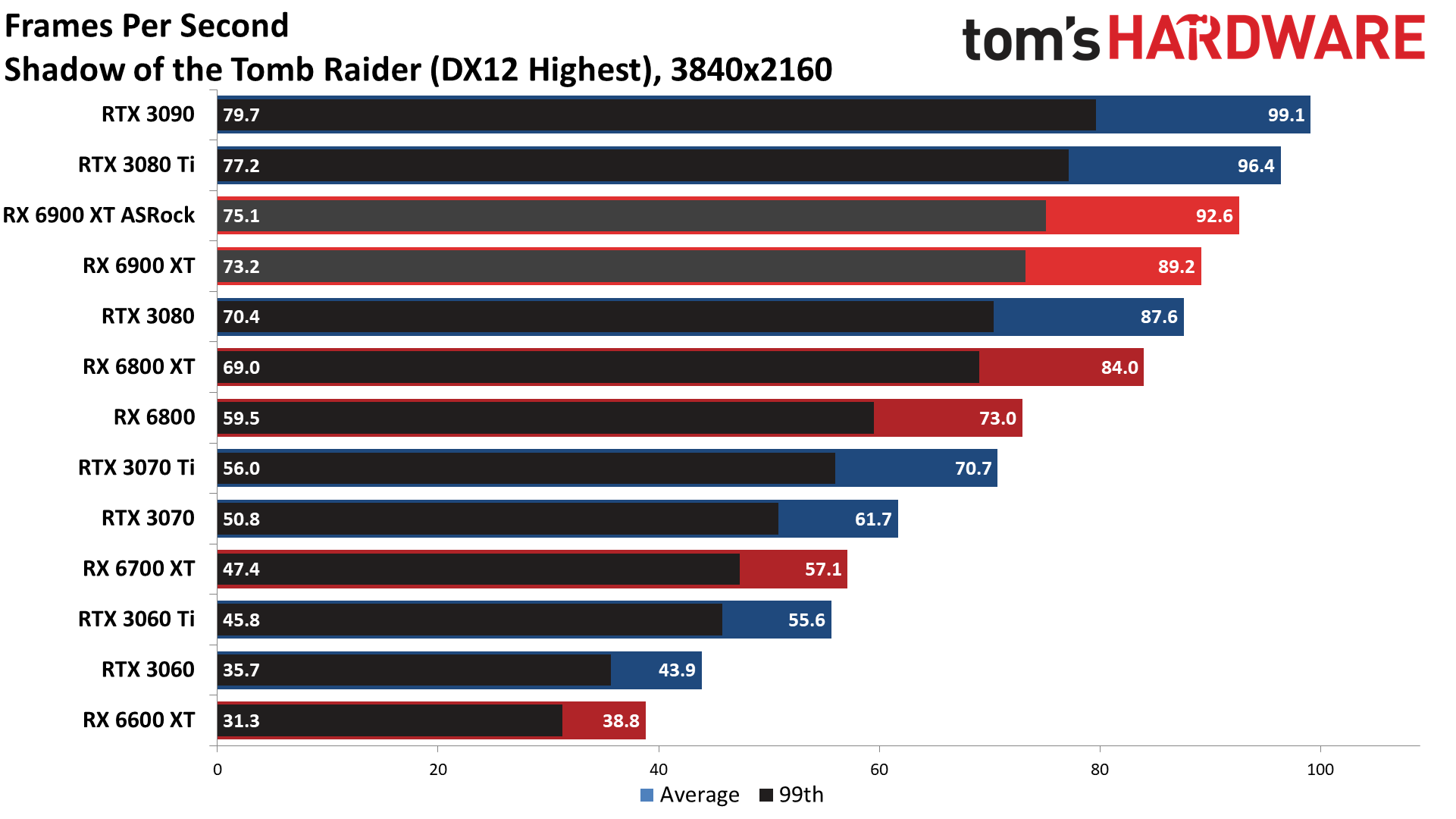

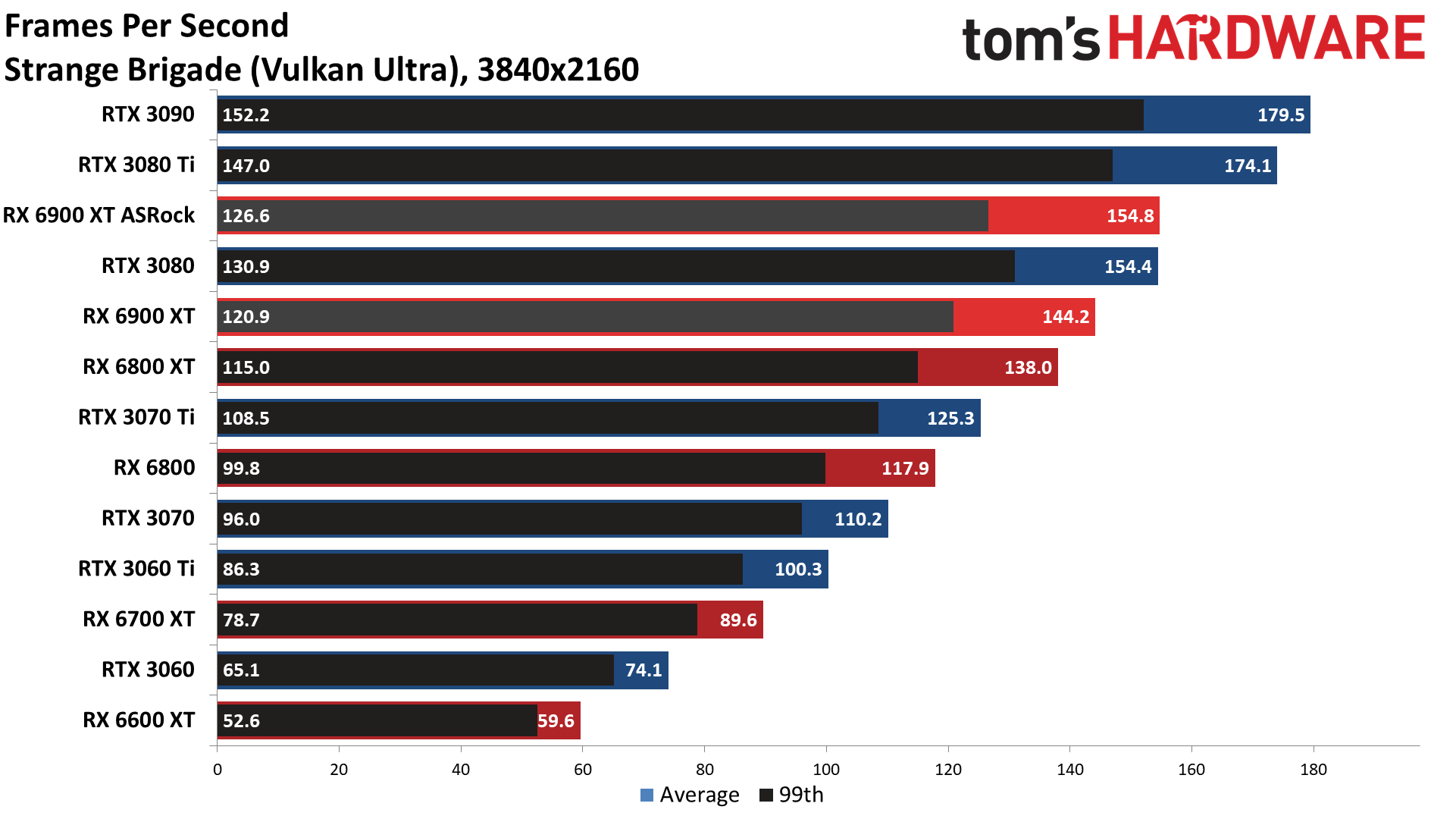

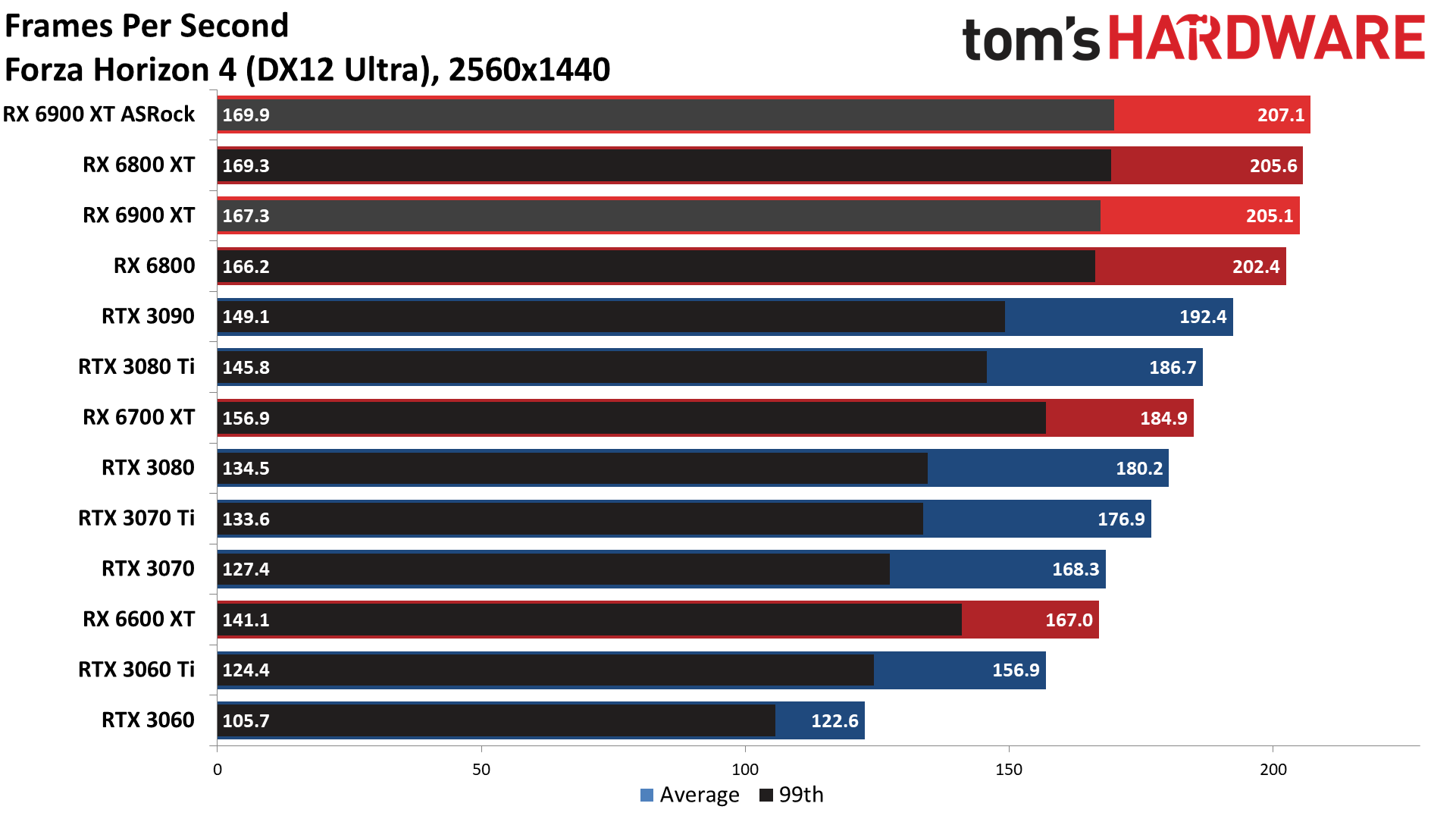

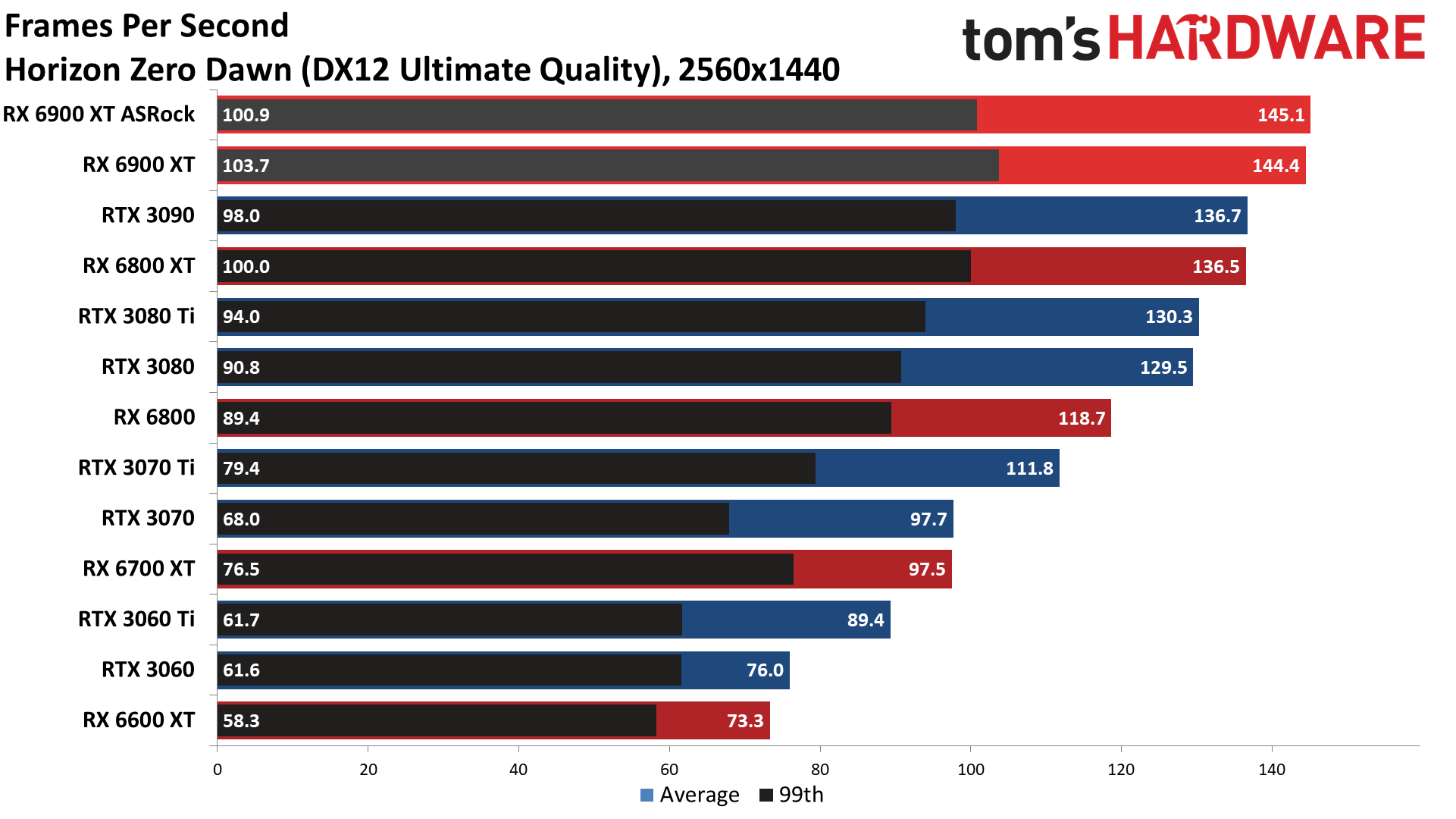

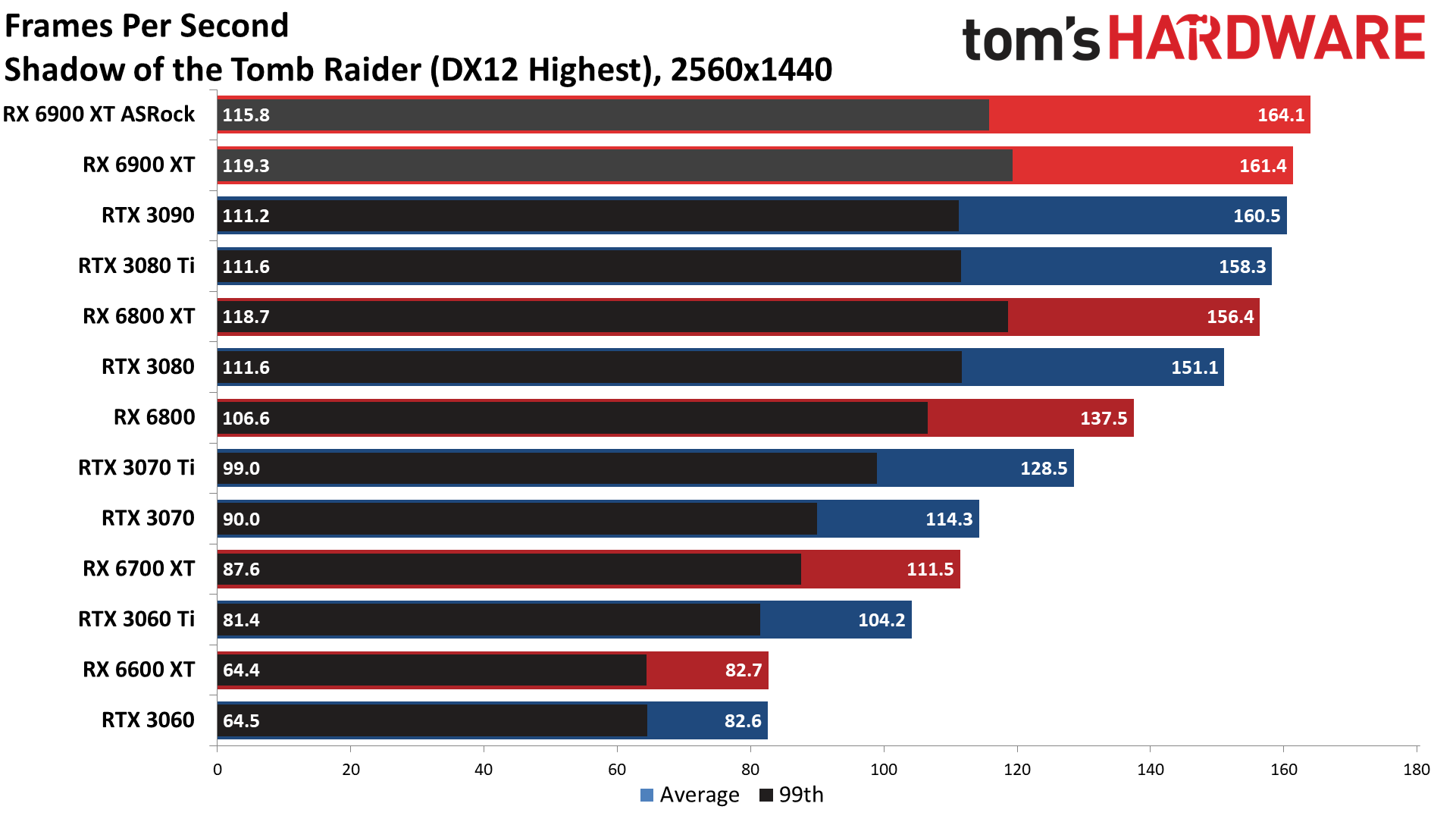

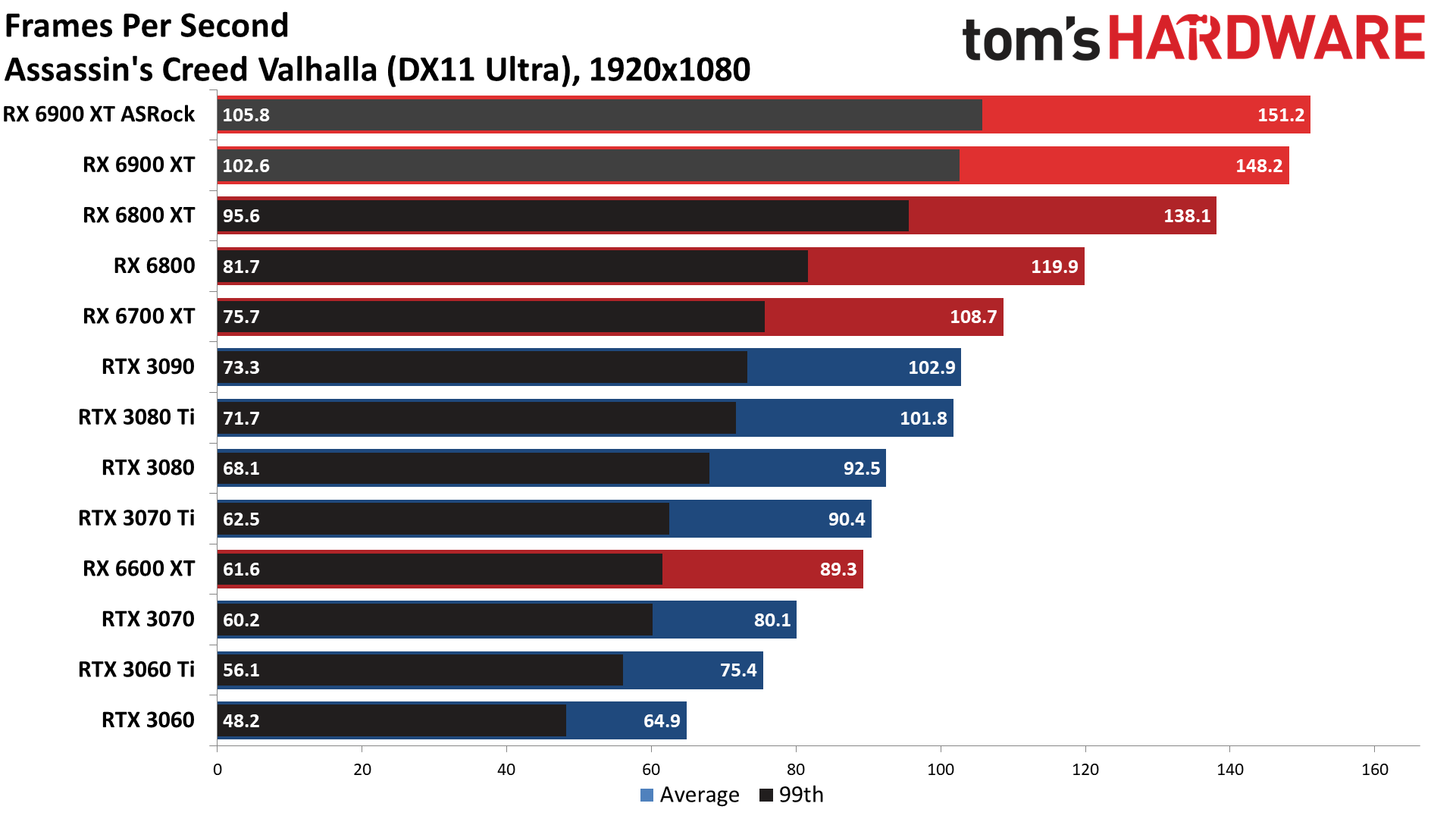

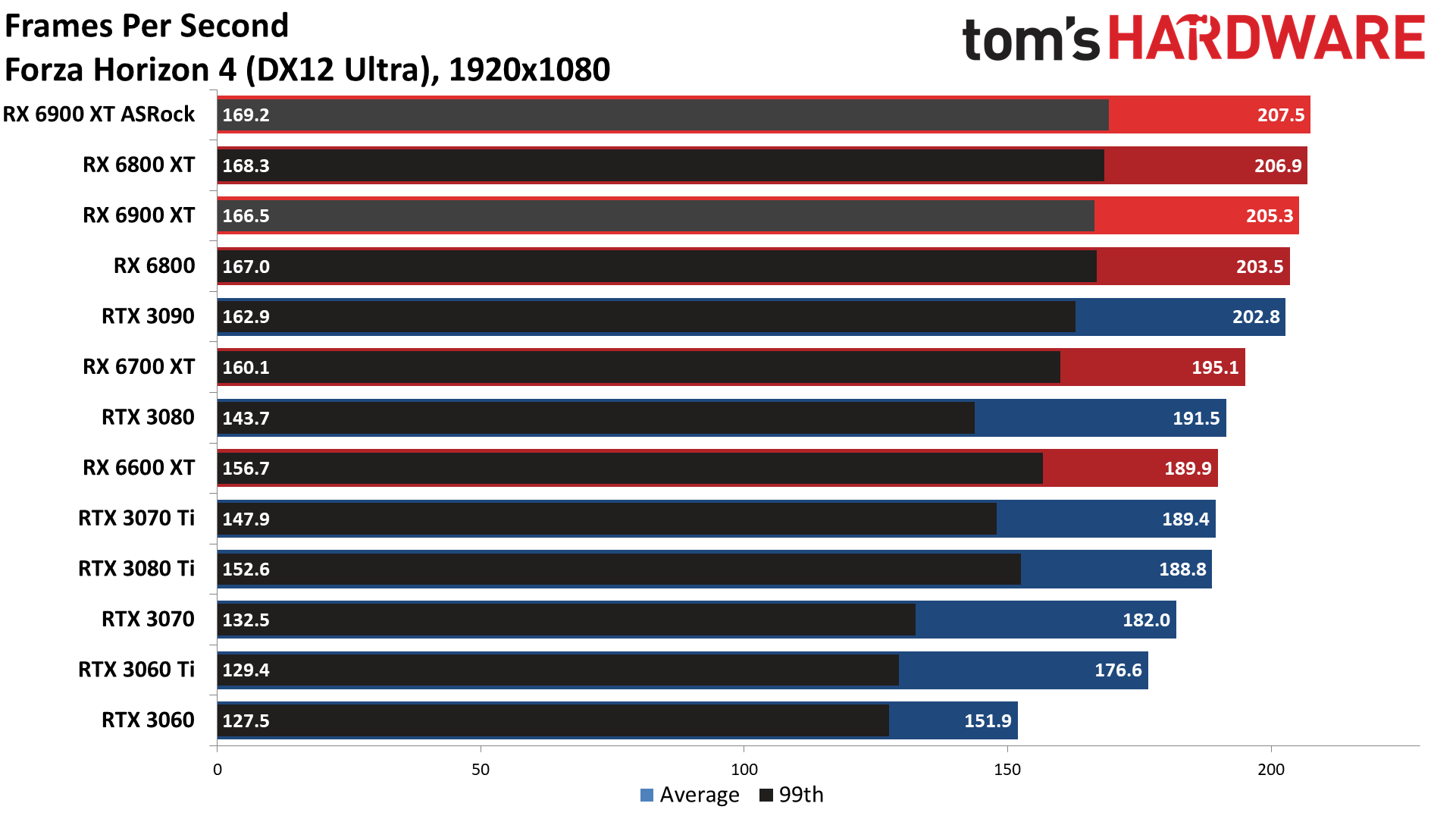

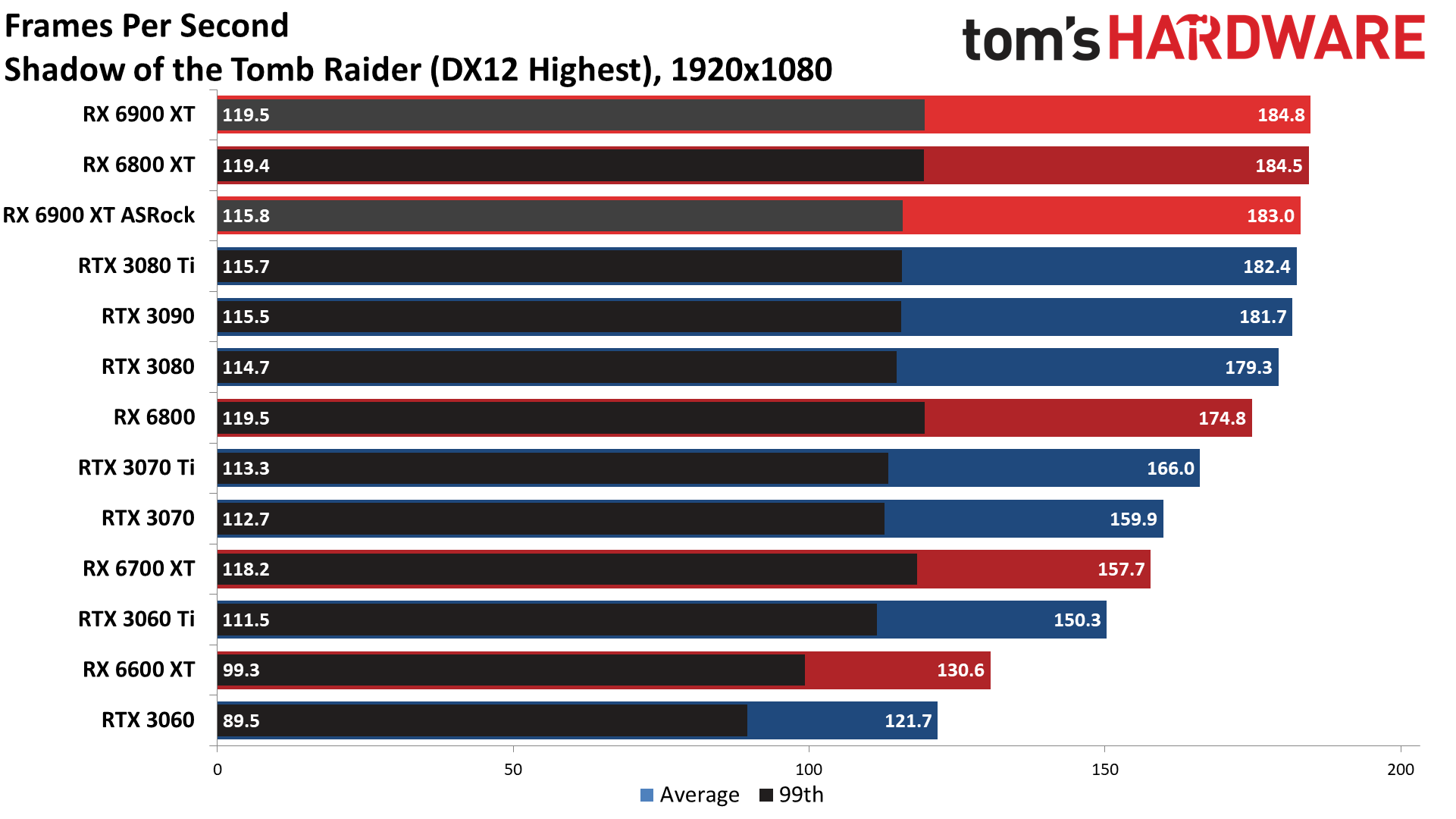

Looking elsewhere, the ASRock Formula also came relatively close to matching the performance of the Asus ROG Strix LC RTX 3080 Ti, trailing it by 5% overall. A big part of the reason for ASRock's good showing is our inclusion of Assassin's Creed Valhalla, which heavily favors AMD's latest GPUs (and is an AMD-promoted game), though Forza Horizon 4 also favors ASRock's card by 17%, the largest win at 4K. Meanwhile, Horizon Zero Dawn, Strange Brigade, The Division 2, and Shadow of the Tomb Raider all have the Asus RTX 3080 Ti leading by 12% or more.

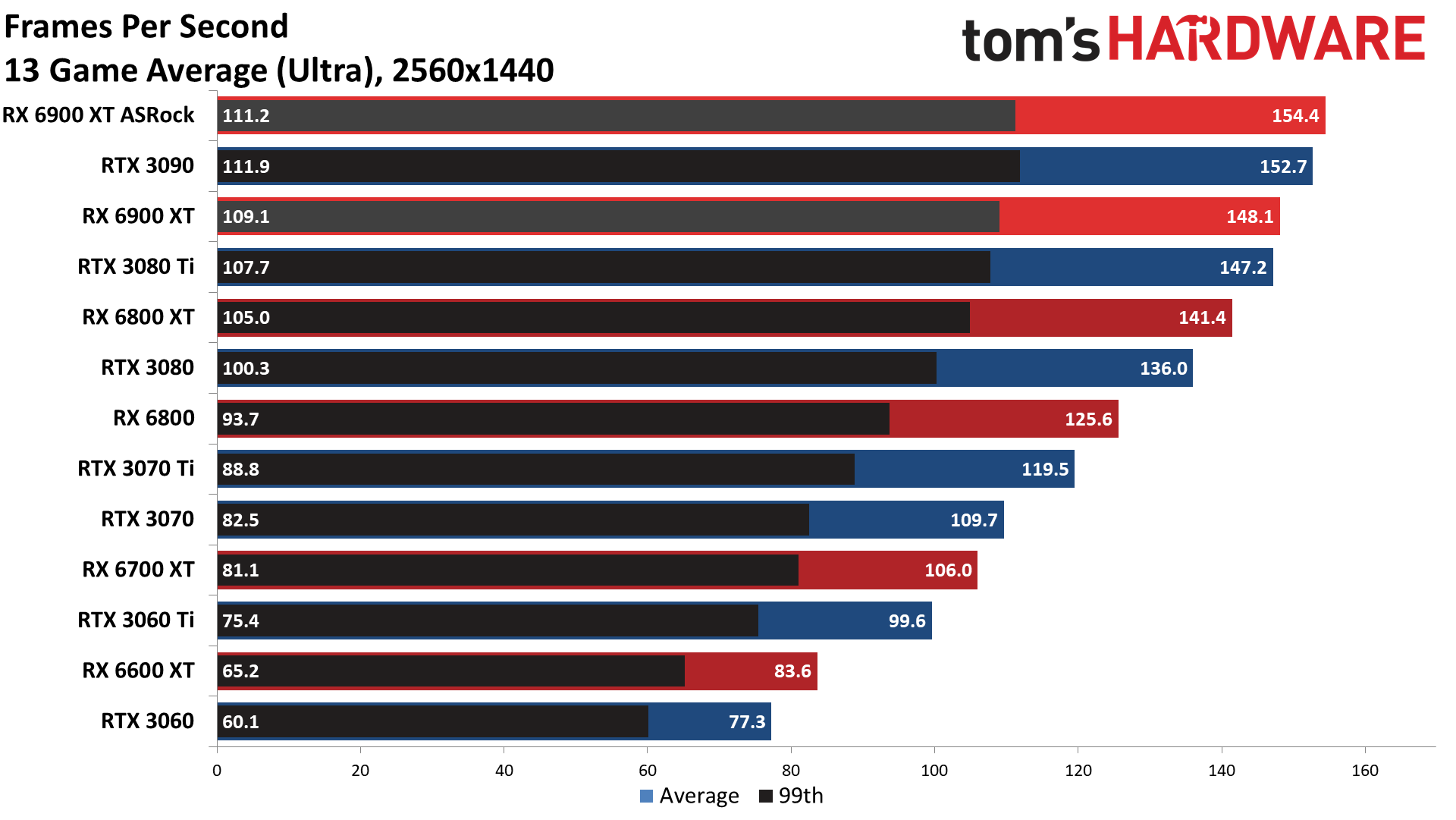

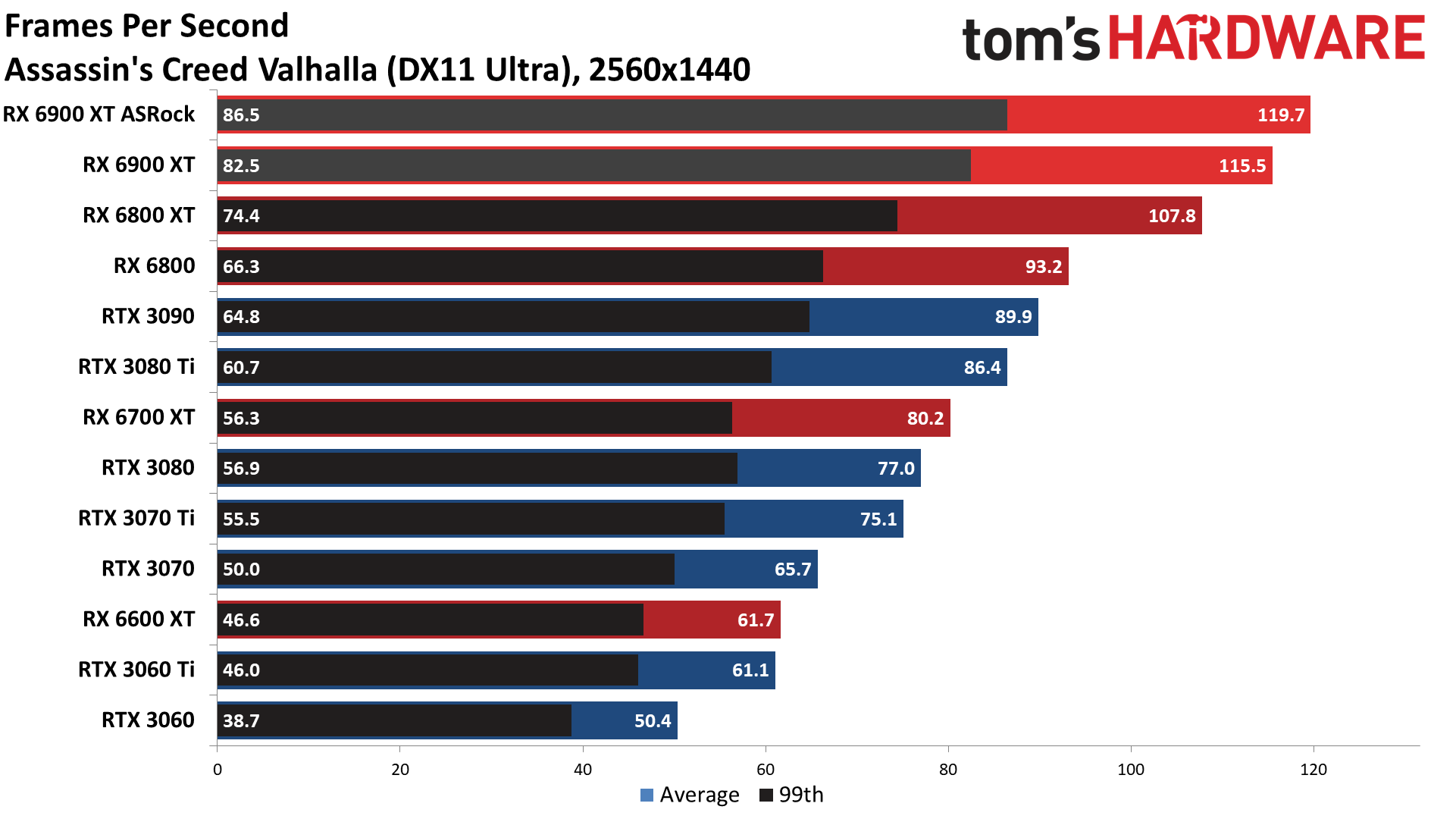

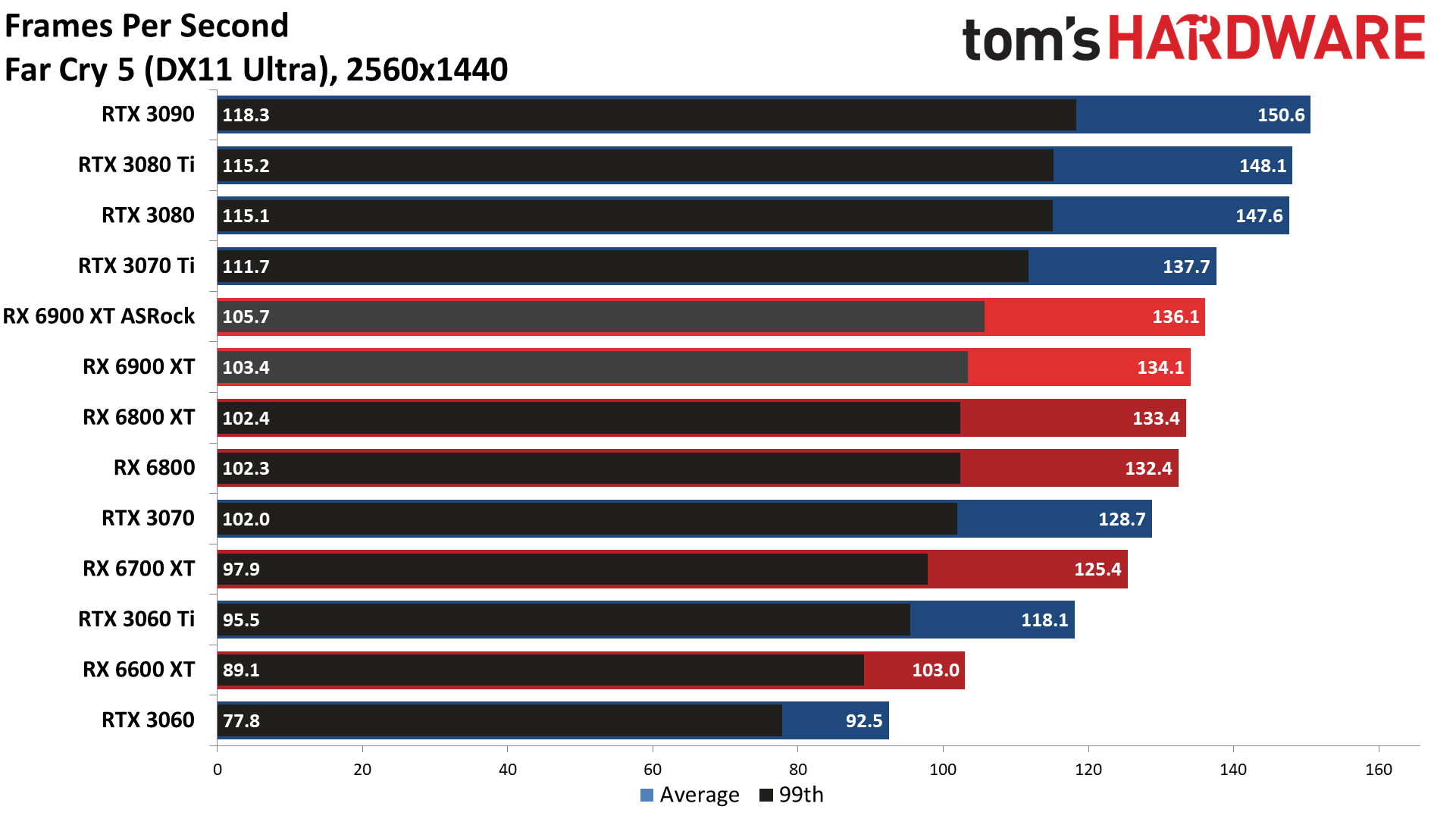

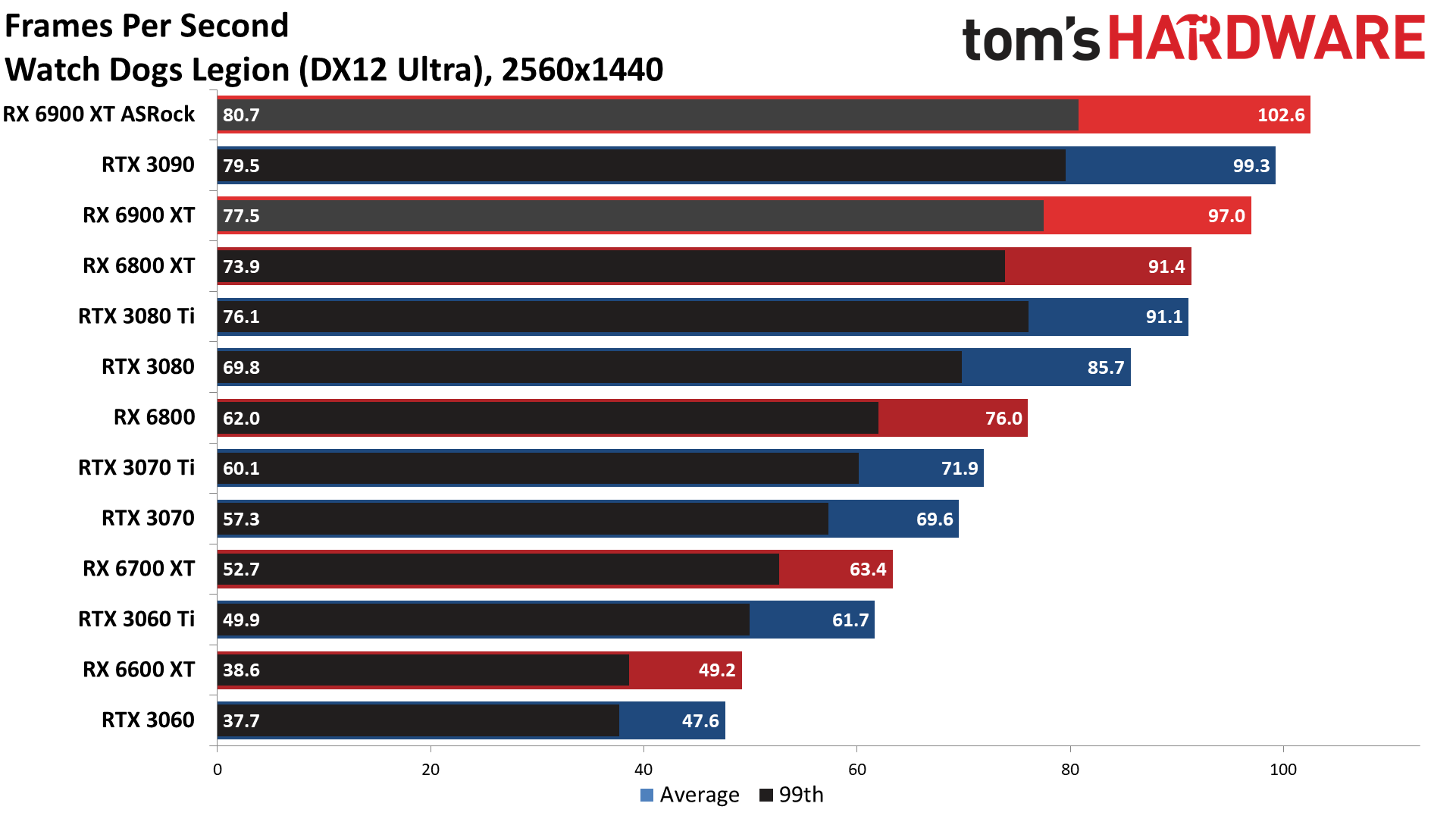

AMD's Big Navi architecture generally fares better against Nvidia's Ampere architecture at lower resolutions. That's because the large 128MB Infinity Cache can hold a proportionately larger share of the working data at lower resolutions; at 4K, the various frame buffers take up most of the cache, leaving less room for things like textures. Whereas the ASRock card was slightly slower than the Asus RTX 3080 Ti at 4K ultra, at 1440p ultra the two swap places, and the ASRock card now leads by about 1% overall. The games that favor AMD GPUs do so even more at 1440p, with Assassin's Creed Valhalla giving the ASRock card a 34% lead over the Asus card, which is very much the exception rather than the rule. Remove that one game from the test suite and Nvidia's GPU would still hold onto a slight lead.
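To put rough numbers on the Infinity Cache point, the sketch below computes the size of one full-screen buffer at each test resolution and how much of the 128MB cache a handful of such buffers would occupy. The 4 bytes per pixel and the count of four buffers are illustrative assumptions, not figures from this review; real games use a mix of formats (depth, HDR, G-buffer targets) and many more resources.

```python
# Illustrative assumptions (not from the review): 4 bytes per pixel, as in an
# RGBA8 render target, and four full-screen buffers per frame.
INFINITY_CACHE_MIB = 128  # AMD's "128MB" Infinity Cache, treated as 128 MiB
BYTES_PER_PIXEL = 4
NUM_BUFFERS = 4

RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def buffer_mib(width, height, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of one full-screen buffer in MiB."""
    return width * height * bytes_per_pixel / (1024 * 1024)


def cache_share(num_buffers, width, height):
    """Fraction of the Infinity Cache that num_buffers full-screen buffers would take."""
    return num_buffers * buffer_mib(width, height) / INFINITY_CACHE_MIB


for name, (w, h) in RESOLUTIONS.items():
    share = cache_share(NUM_BUFFERS, w, h)
    print(f"{name}: {buffer_mib(w, h):.1f} MiB per buffer, "
          f"{NUM_BUFFERS} buffers fill {share:.0%} of the cache")
```

Under these assumptions, four buffers fill roughly a quarter of the cache at 1080p, a bit under half at 1440p, and nearly all of it at 4K, which is consistent with AMD's relative advantage shrinking as resolution rises.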

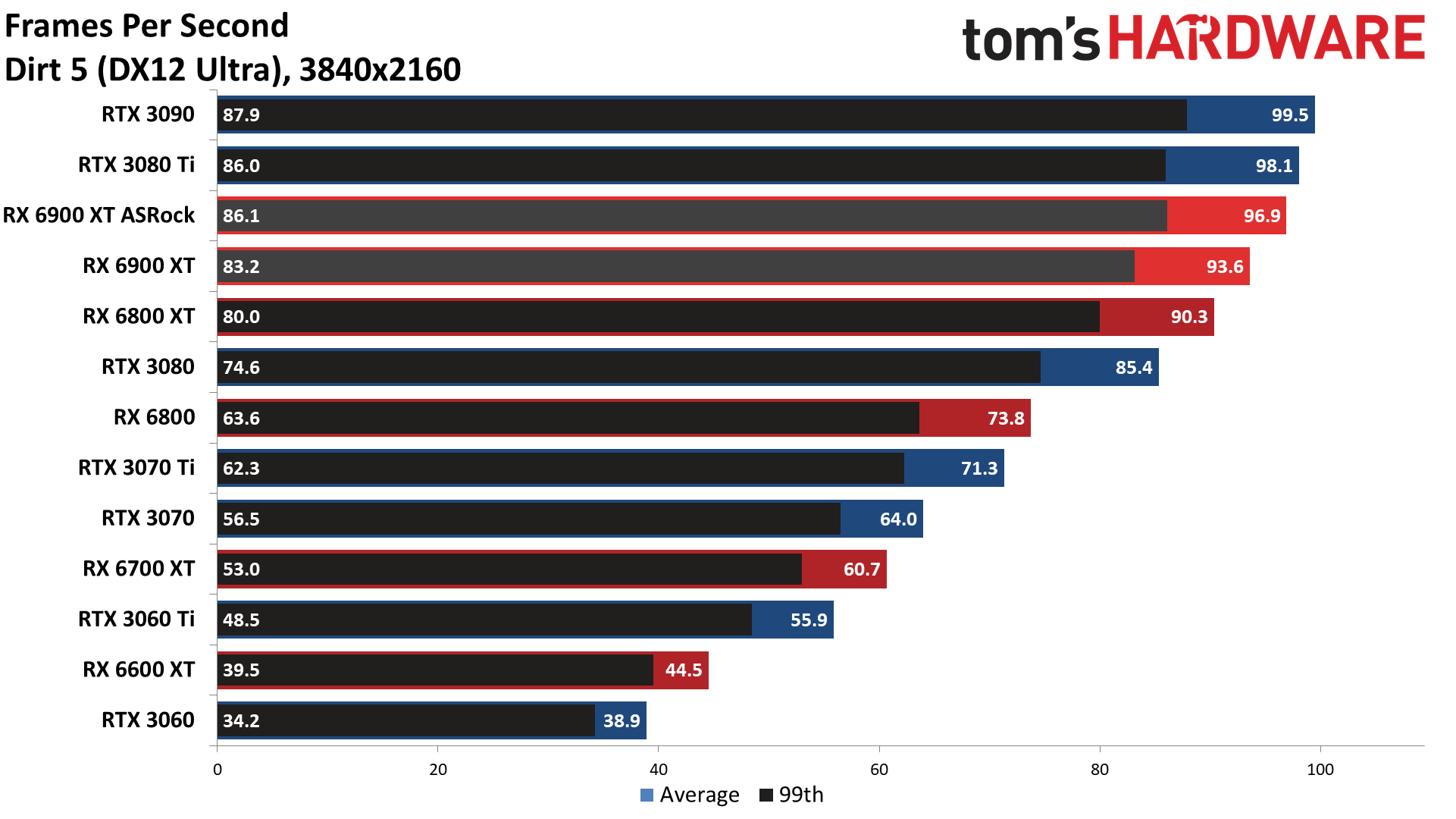

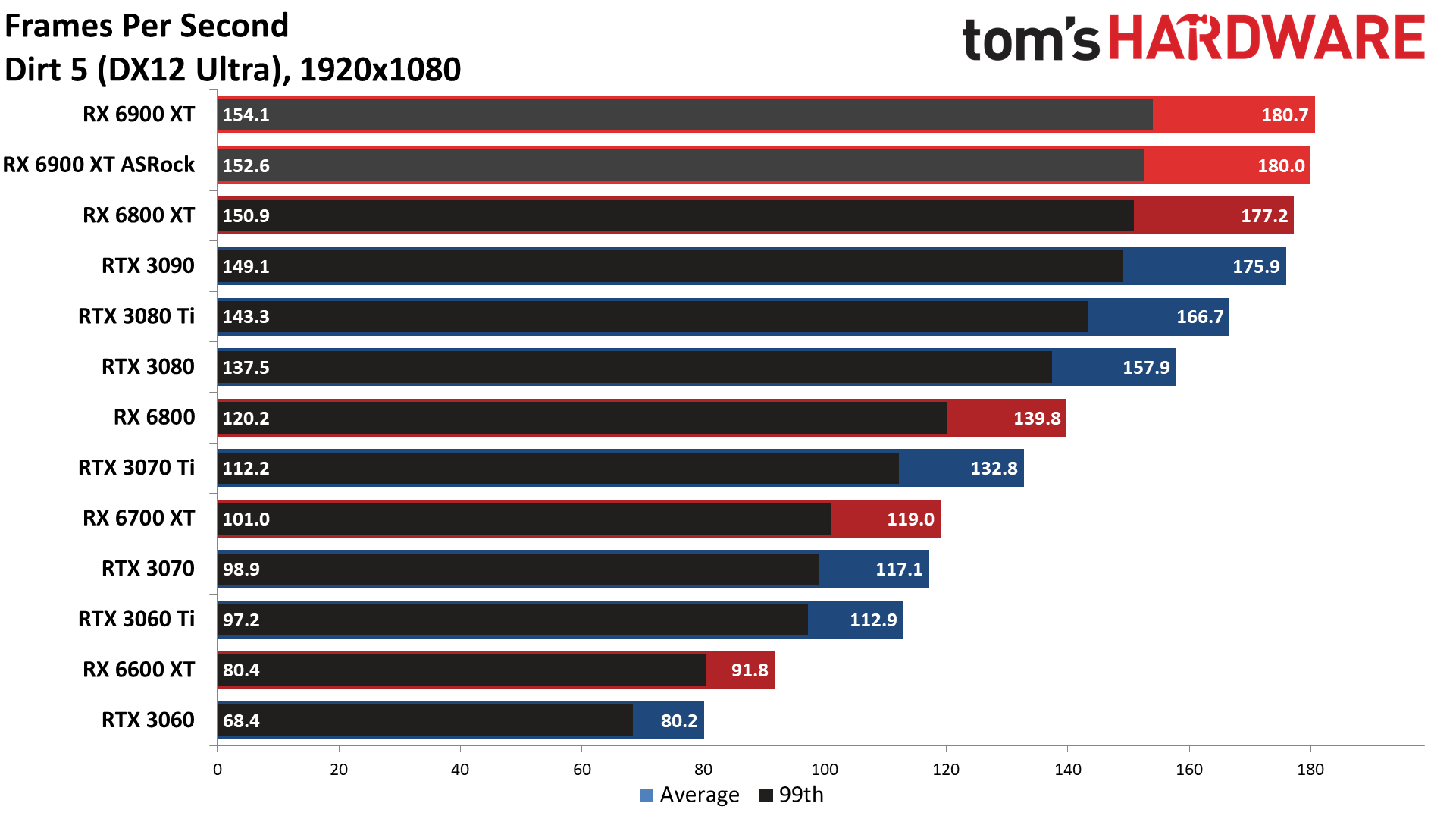

The ASRock card also has less of an advantage over the reference RX 6900 XT at lower resolutions and is only 4% faster at 1440p. There are also now two games (Borderlands 3 and Dirt 5) where the reference card's results were slightly ahead of the ASRock card's, but again we suspect that's due to driver and game updates over the past four months.

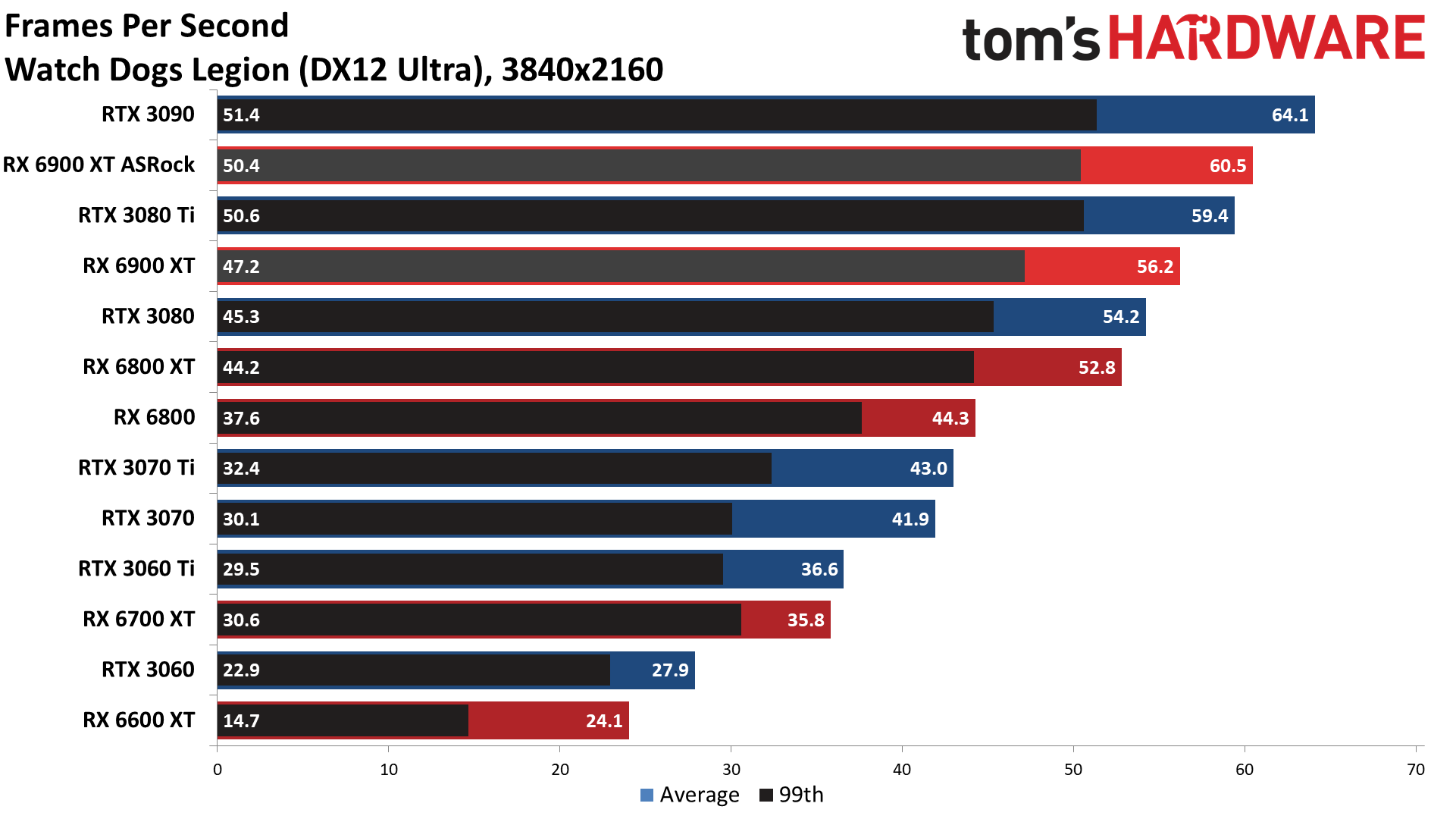

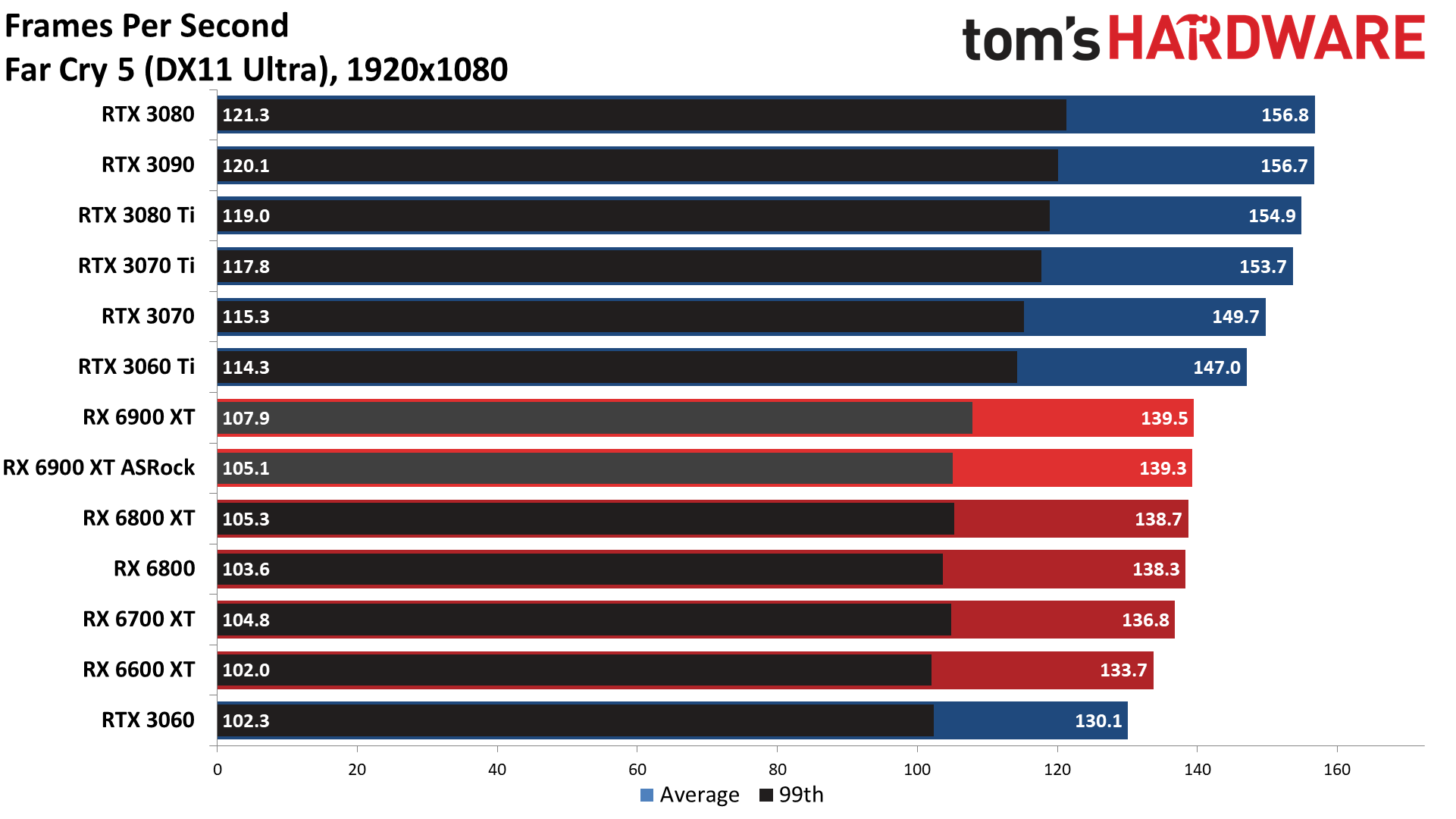

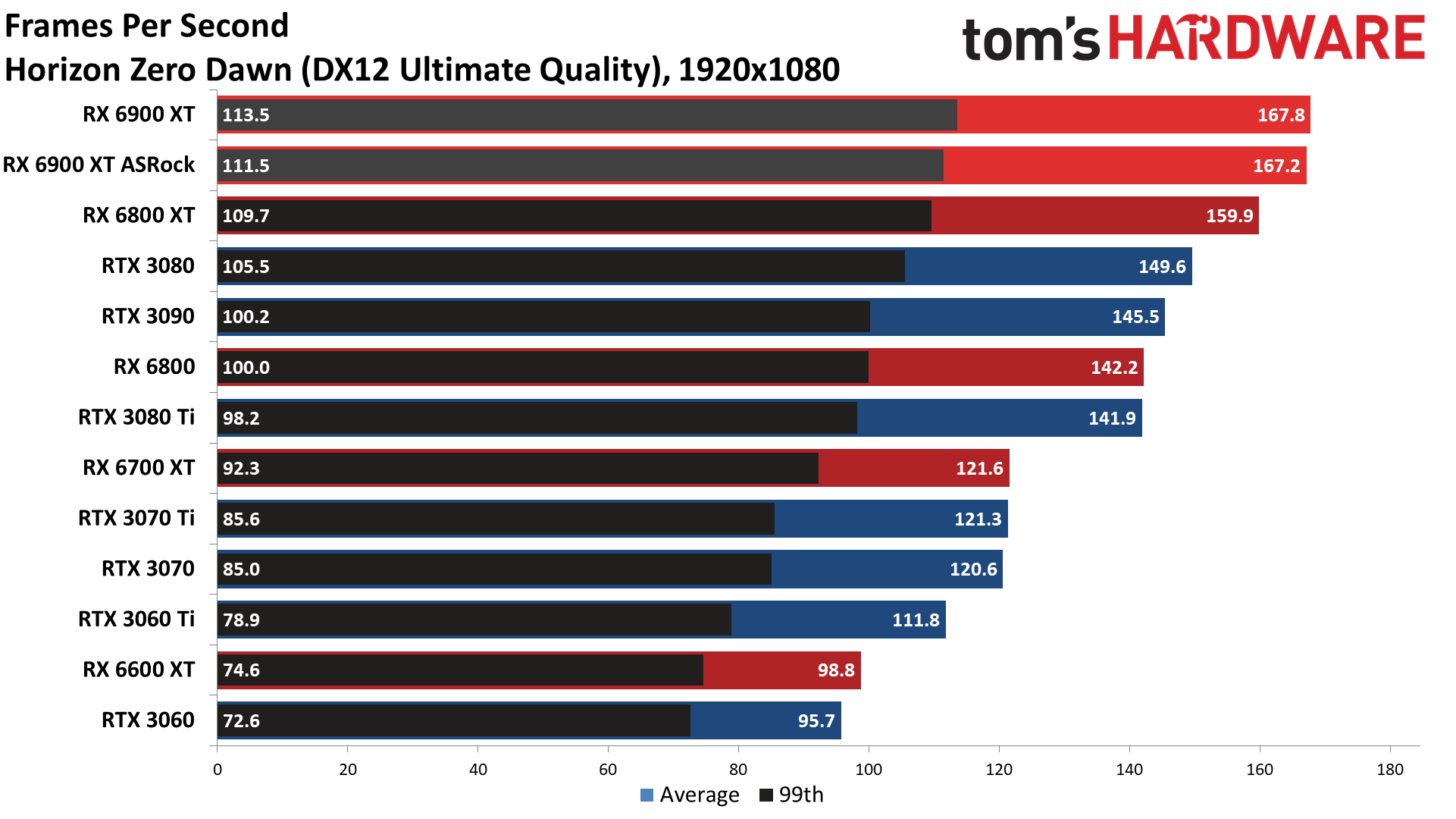

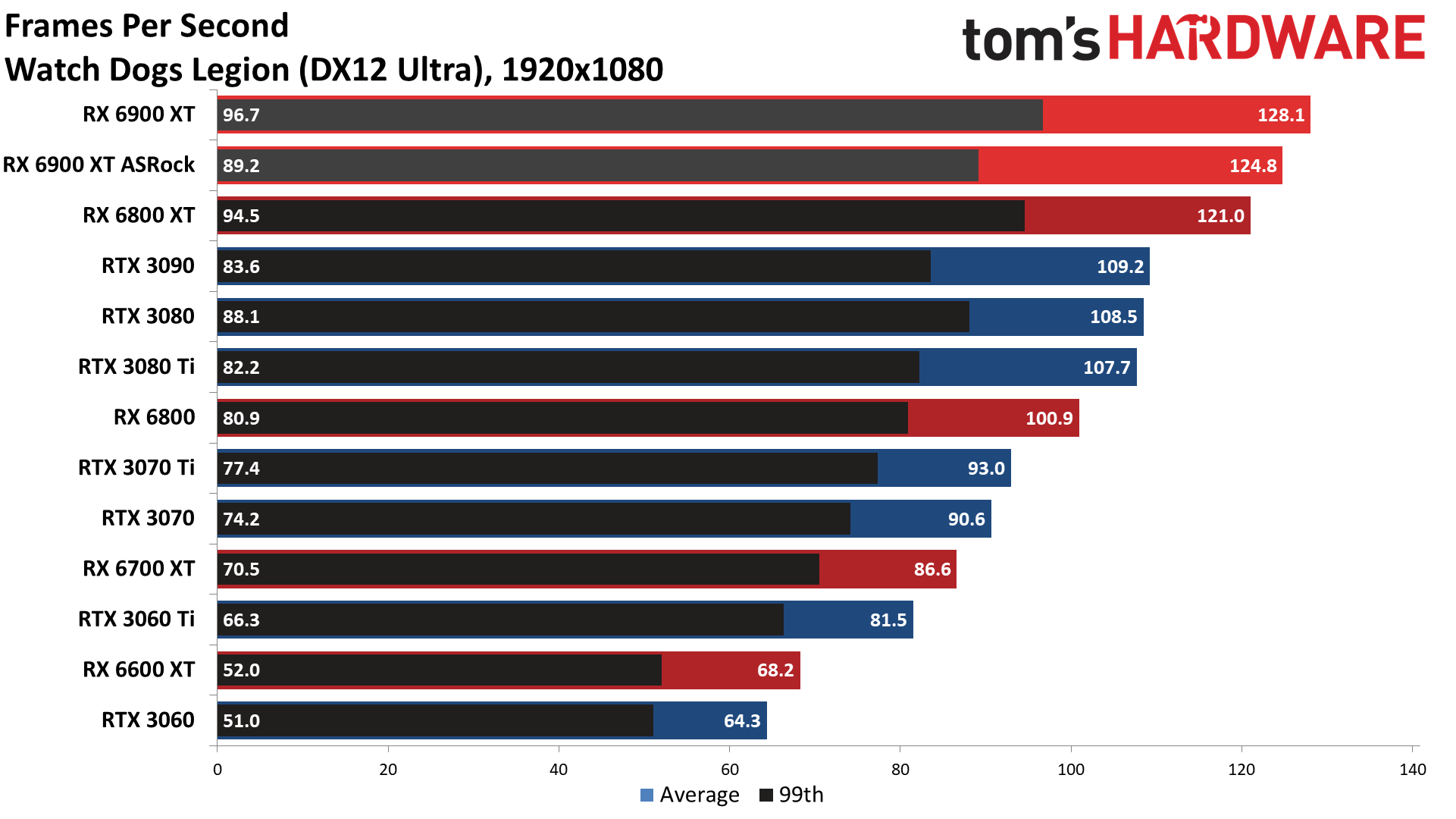

At 1080p, the Infinity Cache really starts to flex its muscle, and the ASRock Formula now beats the Asus ROG Strix LC by 5%. Valhalla remains an outlier, now showing a 46% lead for the AMD GPU, but Horizon Zero Dawn and Watch Dogs Legion also have the ASRock card leading the Asus card by 15% and 13%, respectively. CPU limitations also play a role, particularly in Far Cry 5 and Forza Horizon 4, where the 1080p results are only slightly higher than the 1440p results.

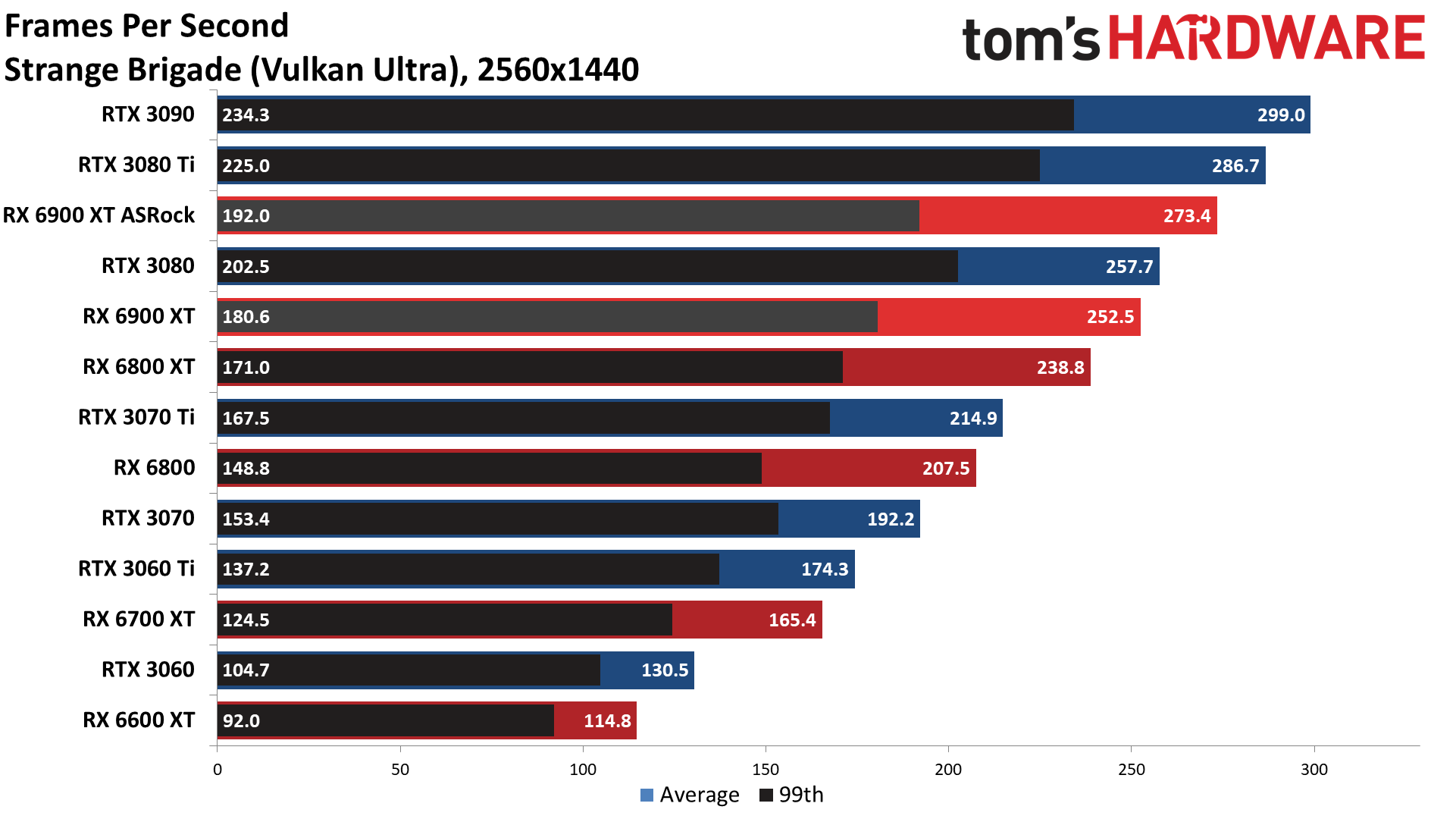

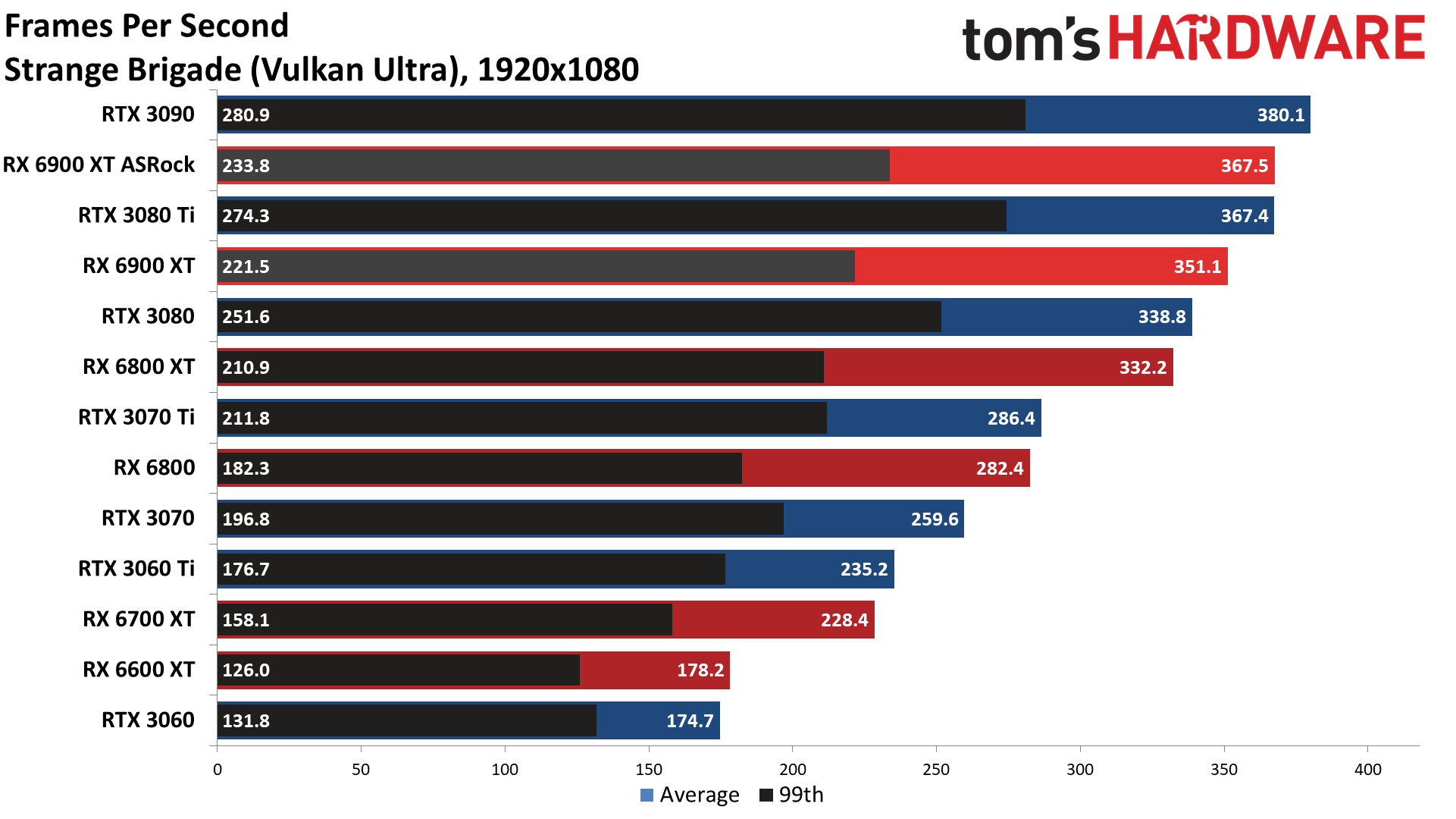

Given the price and target market, we're not nearly as concerned with 1080p performance as we are with 1440p and 4K performance, though esports fans might want to look for 1080p benchmarks of the various GPUs to see which ones can max out a 240 Hz or even 360 Hz monitor. Most of the games we test don't come anywhere close to 240 fps, never mind 360 fps. Strange Brigade is the exception, though it isn't an esports game.



Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


husker: For some, the "sanity check" on this card might be the price. But for me, it's the power draw. Prices are set at the whim of market forces, but the huge power draw on this card is something built in by the engineers at ASRock. To me, that was one advantage AMD had over Nvidia in this go-round: lower overall power draw, which leads to less heat to dissipate, quieter cooling, and so on.

Alvar "Miles" Udell: 48dB at 52% fan speed and 60dB at 75%!

Any GPU that requires headphones because it's so loud at NORMAL fan speeds is not even a consideration in my book. Tack on a 400W power draw and an insane price tag, and that's an easy three strikes.

Makaveli (replying to Alvar "Miles" Udell): Yep, that is crazy.

If these cards ever come back to MSRP, which may take a year or more, I would only look at an AIO model. I'm liking the temps and noise level you would get with that Asus 3080 Ti.

Alvar "Miles" Udell (replying to Makaveli): I never knew what I was missing until I got my Fury Nano and now my 2070 Super. Being able to game and still hear yourself whisper is amazing, especially with an AIO CPU cooler.

Makaveli (replying to Alvar "Miles" Udell): That is my plan. I'm already on an AIO CPU cooler, and next I want to go AIO on the GPU for a nice quiet rig even at full load. I cannot stand fan noise, and more specifically, fans ramping up.

Alvar "Miles" Udell (replying to Makaveli): The only thing that kept me from going AIO on my GPU was the finite lifespan of an AIO system, between the pump failing and the very slow but steady loss of coolant through evaporation.

Makaveli (replying to Alvar "Miles" Udell): True, it's a trade-off versus a full custom loop.

watzupken (replying to Alvar "Miles" Udell): The noise level is insane. 60 decibels is not funny at all and is definitely a reason to recommend against buying this card. Even an RTX 3090 with a 450W power limit doesn't have fans that sound this loud. I've used a Sapphire RX 6800 XT Nitro+ with a 350W power limit, and its fans are nowhere near this loud even at 70%. It was only when it hit 100% fan speed that I thought a siren had gone off in my computer.

Price-wise, I think AIB partners can price their cards however they want, but the chances of moving a large number of units are slim to none, unless it's to a desperate miner. While the GPU shortage is still a thing now and will be in the near future, I feel the RX 6900 XT's price has been pushed way past what it's worth. That's why it's not uncommon to see RX 6900 XT and 6700 XT cards sitting on shelves with inflated prices.

-Fran-: I have the 6900XT Nitro+ SE in my HTPC for VR, and it's silent while operating; I've capped it to 280W. I torture tested it a while ago, and it never went over 85°C junction and 80°C GPU while using 300W. It was probably throttling regardless. I have it in a small-ish case under the TV, paired with a 5600X.

Anyway, the point is these cards can do pretty well in "hot" environments, which is something I can't say about the Ampere generation; friends have told me their cards run too hot even with fans pointed at them at full speed and open cases. That's bonkers. Some of them throttle hard or just shut down at times.

As for this card... I don't think it's worth it, simply because the Sapphire 6900XT Nitro+ SE exists, and that card is close to vanilla 6900XT MSRP all the time. At least, I bought it close to MSRP, so I'm quite happy with that. Could I have just gone for a 6800XT? Yes, but the Nitro+ was better than all the alternatives, including 6800XTs, for a few extra shekels.

Regards.