How Seagate Tests Its Hard Drives

Tom's Hardware gets a rare and in-depth look at how Seagate designs and tests its hard drives. Join us for a tour through the company's Longmont, Colorado R&D center.

Build It Better

It bears repeating that the tests and analyses we’ve seen throughout this article, and many others besides, happen over and over. A component change in a new design might perform fine at room temperature but throw off a residue at prolonged high temperature. Fixing this might alter the drive’s balance characteristics during RV testing or introduce a structural weakness, which in turn must be fixed. Often, it all comes down to assessing defective parts per million (DPPM), one of the key metrics used throughout the PDP and on into manufacturing and OEM integration. When the DPPM numbers are low enough and all other performance and reliability metrics for a given Integration stage have been met, a drive design advances to the next testing stage, with higher production quantities and tougher metrics, and the testing and analysis start all over again. That a design can go from a few hundred hours MTBF to over 2 million hours in six months of refinement is slightly miraculous.
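DPPM and MTBF are both simple ratios, and it can help to see how they translate into numbers a buyer recognizes. The sketch below is illustrative only: it assumes a constant failure rate (the common exponential model) and 24x7 operation to turn an MTBF figure into an annualized failure rate (AFR). It is not necessarily how Seagate computes its own figures, and the function names and the sample lot of 3 failures in 12,000 drives are hypothetical.

```python
import math

# Assumption: drives run continuously, 24 hours x 365 days.
HOURS_PER_YEAR = 8760


def dppm(defective_units: int, total_units: int) -> float:
    """Defective parts per million: defect count scaled to a per-million-units rate."""
    if total_units <= 0:
        raise ValueError("total_units must be positive")
    return defective_units / total_units * 1_000_000


def afr_from_mtbf(mtbf_hours: float, hours_per_year: float = HOURS_PER_YEAR) -> float:
    """Annualized failure rate, assuming a constant (exponential) failure rate.

    Probability that a drive fails within one year: 1 - exp(-t / MTBF).
    """
    if mtbf_hours <= 0:
        raise ValueError("mtbf_hours must be positive")
    return 1.0 - math.exp(-hours_per_year / mtbf_hours)


if __name__ == "__main__":
    # Hypothetical lot: 3 failures out of 12,000 drives tested.
    print(f"DPPM: {dppm(3, 12_000):.0f}")
    # Early prototype versus refined design (MTBF values from the text above).
    for mtbf in (500, 2_000_000):
        print(f"MTBF {mtbf:>9,} h -> AFR {afr_from_mtbf(mtbf):.2%}")
```

Under these assumptions, a design at a few hundred hours MTBF would be expected to fail almost certainly within a year of continuous use, while 2 million hours works out to an AFR of roughly 0.44 percent.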

As if Longmont weren’t busy enough tending to its own HDD development projects, Seagate factories also regularly ship in production samples for testing and analysis, to confirm that designs still hold up when built with different manufacturing processes at other locations.

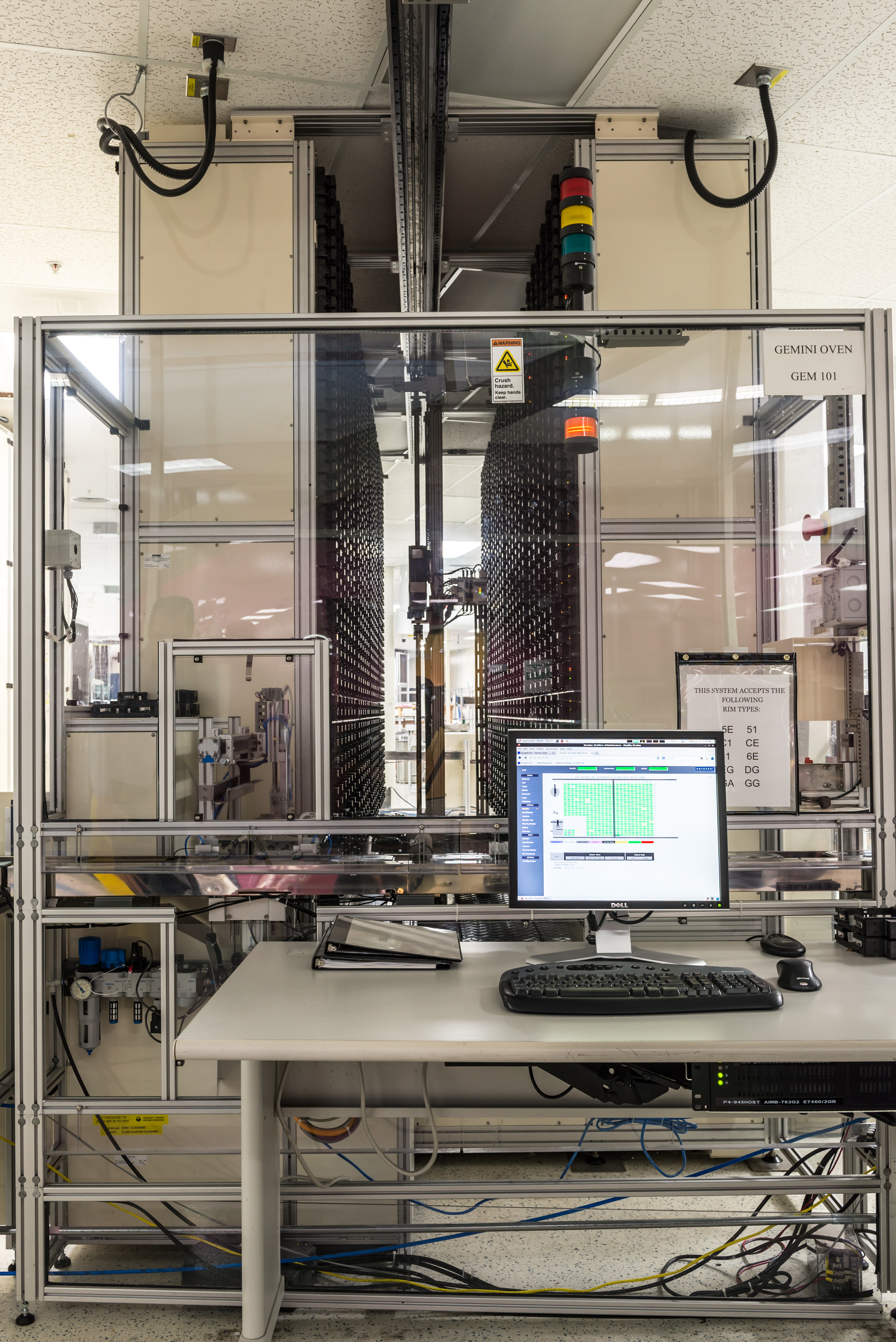

One of our favorite stops through Longmont was also one of our last. In these final two images, you see one of the facility’s five Gemini testers, a 6,288-slot robotic behemoth that serves to calibrate, set up, download and test firmware. From the beginning of PDP through its end, the Geminis run a dizzying number of scripts across all drive designs, including those destined for cloud applications. After months and months of arduous design refinement, drives must still pass this lengthy challenge. The Gemini makes it easier to apply different firmware revisions for different script workloads and to conduct high-quantity tests. It offers a 60 percent improvement in test space footprint and 28 percent lower energy consumption, making it 40 percent cheaper for Seagate to operate than its prior-generation approach.

Everything we’ve seen ultimately leads to affordable, reliable drives being inside the type of system you’re probably using now as well as enterprise titans such as the rack setup below. Perhaps now you see why Seagate R&D can cost $2 billion annually and ultimately result in a 1.2 percent AFR. It’s one thing to read the numbers; it’s another to stand inside the process and witness the untold thousands of hours of testing and retesting that make such promises of reliability a reality. The experience was as humbling as it was inspirational, and we’ll likely never look at a hard drive (especially an enterprise model) in quite the same way again.


William Van Winkle is a Writer-at-Large for Tom's Hardware. Follow him on Twitter.


tom10167 Awesome photos. I don't know what the last picture is, but I know I need one of those in my house.

Rookie_MIB (quoting tom10167): "Awesome photos. I don't know what the last picture is, but I know I need one of those in my house."

That is an enterprise storage rack full of 2U hot-swap chassis. 18 chassis x 12 drives per chassis = 216 drives; at 6 TB (?) per drive, that's 1,296 TB, or about 1.3 petabytes.

You could store a lot of TV shows or movies on that thing. Imagine how many of those YouTube uses. Yikes. They get 300 hours of footage uploaded every minute.

Mike-TH So if their testing is so good, why are their drives among the worst for reliability, to the point where most IT people I know actually refuse to use them, or, if forced to use them, keep (and use) more spares than for other makers?

Tom20160027 The article explains the different types of drive/MTBF and why the Backblaze test is useless information. It's a marketing ploy to get folks talking about it and re-posting its link. It seems to work, as we keep seeing the link over and over... They are not getting my data. They put drives designed for desktops into servers, run them into the ground and call it a "reliability test." Let's test my kid's bicycle with training wheels at the Tour de France and complain about its quality...

I know IT folks who refuse to use other brands of drives as well. I know IT folks who refuse to use servers from this brand or that brand. We can find anecdotal information about anything. It does not make it true.

Glock24 Seagate tests their drives? I thought they didn't!

I've had more Seagate drives die without warning than any other brand. The only ones that have survived are some old 250GB Barracuda ES drives. All the other models I've owned developed lots of bad sectors or just stopped working within the first year, yet SMART almost always says the drive is fine!

zodiacfml Yawn. All I think of right now is that HDDs will become the tape drives of the past.

Garrek99 The only drives I've ever had go bad on me were Seagate drives.

Every other drive I've ever purchased simply became obsolete due to its size and was replaced.

They should read about how the other drive makers do their testing and learn from that. Hahaha

rosen380 Maybe things changed... but all of my old SGI machines always had Seagate drives in them, and the 20+ year old drives all still work. Hell, look at what these drives *sell* for on eBay:

http://www.ebay.com/sch/i.html?_sc=1&_udlo=0&_fln=1&_udhi=200&LH_Complete=1&_ssov=1&_mPrRngCbx=1&LH_Sold=1&_from=R40&_sacat=0&_nkw=%28st31200N%2C+st32171N%2C+st32272N%2C+ST34371N%2C+st34520N%2C+st34573n%2C+st39173N%2C+st318417N%2C+st52160N%29&_sop=16

4.5 GB drives are *selling* for $150+. I see a 2 GB one for $120.

They must have been pretty decent at some point if SGI was putting them in its $5,000-$20,000 workstations and people are spending $40+ per GB to get them now...