Which Web Browser Is Best Under Windows 8?

Welcome to our first-ever Web Browser Grand Prix on Windows 8! Will Chrome remain the reigning Windows champion? Is Internet Explorer 10 going to smash the competition like its predecessor? Does Opera 12.10 finally deliver on what version 12 promised?

Reliability And Security

Reliability

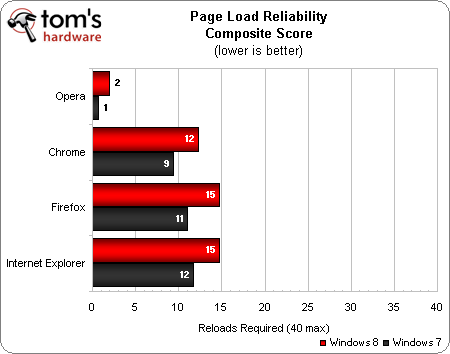

The reliability rating measures how many pages a browser renders properly under a heavy workload of 40 tabs. A point is added every time a page must be reloaded due to broken or missing elements, so the best possible score is zero and the worst is 40. The page load reliability score is the average of three iterations.
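The scoring rule described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the tooling used for the article; the function name `reliability_score` and the sample reload counts are hypothetical.

```python
TAB_COUNT = 40  # size of the tab workload described in the article


def reliability_score(reloads_per_iteration):
    """Average the number of tabs that needed a reload across iterations.

    Lower is better: 0 means every tab rendered properly on every run,
    TAB_COUNT means every tab had to be reloaded on every run.
    """
    if not reloads_per_iteration:
        raise ValueError("at least one iteration is required")
    for reloads in reloads_per_iteration:
        if not 0 <= reloads <= TAB_COUNT:
            raise ValueError(f"reload count {reloads} is outside 0..{TAB_COUNT}")
    return sum(reloads_per_iteration) / len(reloads_per_iteration)


# Made-up reload counts for three iterations (not measured data).
example_runs = [2, 1, 3]
print(reliability_score(example_runs))
```

Because the score is an average, a single bad iteration shifts the result by only a third of its reload count, which is presumably why three runs are used rather than one.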

Opera once again claims the reliability crown, with just two reloads in Windows 8 and only one in Windows 7. Chrome places second with about one-quarter of the 40-tab workload requiring a refresh. Firefox and Internet Explorer place third and fourth, respectively, both with an average of 15 reloads in Windows 8. Strangely enough, all four browsers are less reliable in Windows 8 than in Windows 7.

Security

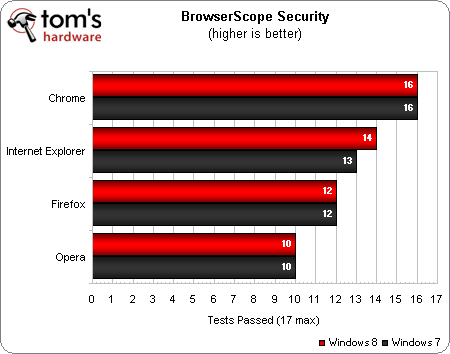

BrowserScope Security is currently the only security benchmark that hasn't been defeated by any of the top four Web browsers, remaining the sole security test in our Web Browser Grand Prix.

Chrome passes 16 out of the 17 security checkpoints in this benchmark, earning a strong first-place finish. IE10 passes 14 checkpoints to place second in Windows 8, while IE9 manages to pass 13 checkpoints to earn a second-place finish in Windows 7. Firefox earns third, followed by Opera.

-

mayankleoboy1 1. Did you ensure that Opera has hardware acceleration and WebGL enabled in about:config? AFAIK, Opera does not enable HWA by default.

2. I find the over-reliance on "Internet Explorer Test drive" benchmarks disturbing. Most use code that is inefficient and not used anywhere else on the web, making it quite theoretical.

3. +1 for using Google Octane benchmark. Both google and mozilla agree that this is a good real-world benchmark.

4. Addition of the "Maze solver" benchmark is disappointing.

5. Why remove the subjective smoothness ? 95% of the time, subjective smoothness is what lures a person to use a specific browser. People use a browser, not run benchmarks on it all day. Subjectively, no browser can beat Google Chrome. Then comes Opera , Firefox and far lastly, IE10.

-

mayankleoboy1 Any technical reason why browsers generally perform better in Win8? Even the 'WHQL' drivers from Nvidia and AMD aren't quite mature for Win8.

Games and applications did not show any improvement in Win8 over Win7.

-

adamovera (replying to mayankleoboy1) 1) We use fresh installs at default settings; Opera does not enable HWA by default.

2) The only IETestDrive tests we use are Psychedelic Browsing and Maze Solver, and IE regularly loses to competitors on both.

3) Octane was not used because it had issues with IE9 and Opera 12.10.

4) We definitely need a new CSS test, but the only other options are outdated or on IETestDrive - unfortunately, Kaizoumark doesn't work with IE10.

5) It's really difficult to see that kind of stuff on a modern test system, but I will say that Chrome and IE10 are about equal in that department, with Firefox and Opera noticeably more choppy right at the beginning of the 40-tab load.

-

adamovera (replying to mayankleoboy1) Not sure, the Nvidia drivers used were the same version on both OSes.

-

And we're also passing the torch from Windows 7 to Windows 8.

We are going to miss you on Web Browser Grand Prix, Windows 7

-

mayankleoboy1 (replying to adamovera) 1. IMHO, enabling these settings would have made Opera more competitive and this article fairer.

3. Whoops, misread that. But this is a good benchmark. Robohornet and robohornet pro are complete jokes.

4. Just exclude the maze solver. It's bad coding, as any web developer can tell you.

5. That's exactly what I'm saying. This needs to be factored into the overall score. You want the browser UI to always remain smooth. UI choppiness is unacceptable and a sign of sloppy coding. We are not living in the '90s anymore.

The one thing I dislike in Chrome is the memory bloat when opening many tabs. In the 40-tab test, FF uses 600 MB. Chrome uses 1600 MB :O. That is probably an overhead of using separate processes for each tab. That is excellent for smoothness and UI fluidity, but shameful for memory consumption. I guess devs need to find a middle path.

-

mayankleoboy1 Both 'mozilla kraken' and 'Google sunspider' benchmarks need to be retired. They are old, and all the major browsers have optimizations to score better on them.

Plus, they heavily test features that are not used anywhere else on the web.

Example: Sunspider makes a billion manipulations to the "date" variable. Mozilla did not have any optimization for this, so it scored poorly on Sunspider. After numerous 'review sites' started using Sunspider to test FF vs. Chrome, Mozilla developers had to reluctantly add the same optimisation (which is basically a separate buffer to store the date). Of course, nowhere on the web is the date variable used in this manner. So it's optimization for an artificial test.

-

wilem_WAR246810 "The King Is Dead, Long Live The King!" Am I the only one who thought of Megadeth?

-

deepblue08 (replying to mayankleoboy1) As far as I heard, there are significant under-the-hood improvements in Win8, in terms of memory efficiency and multi-core usage.

-

epileptic Is it Opera x64 or x86? I remember having tested Opera 12 and the startup was very slow. I'm still using 11.64 atm. The only thing keeping me from moving to Firefox is how sluggish the UI feels... I'd also have to find a new mail client. :/