Zotac Sonix NVMe SSD Review: Our First E7 Tests


Four-Corner Performance Testing

Comparison Products

Creating a list of comparison hardware to go up against the Sonix wasn't difficult: only Intel and Samsung have 512GB-class SSDs with NVMe support. Not long ago, we also tested a Toshiba XG3 SSD with NVMe, but it remains an OEM model. Plus, we could only find a 128GB version to purchase.

The NVMe protocol reduces latency in the software stack, improving the I/O throughput of small blocks of data by cutting overhead. If you want to learn more about how the NVMe interface affects performance, check out this article.

To illustrate the differences more clearly, we're also including Kingston's AHCI-enabled Predator at the 480GB capacity point.

To read about our storage tests in depth, please check out How We Test HDDs And SSDs. Four-corner testing is covered on page six of our How We Test guide.

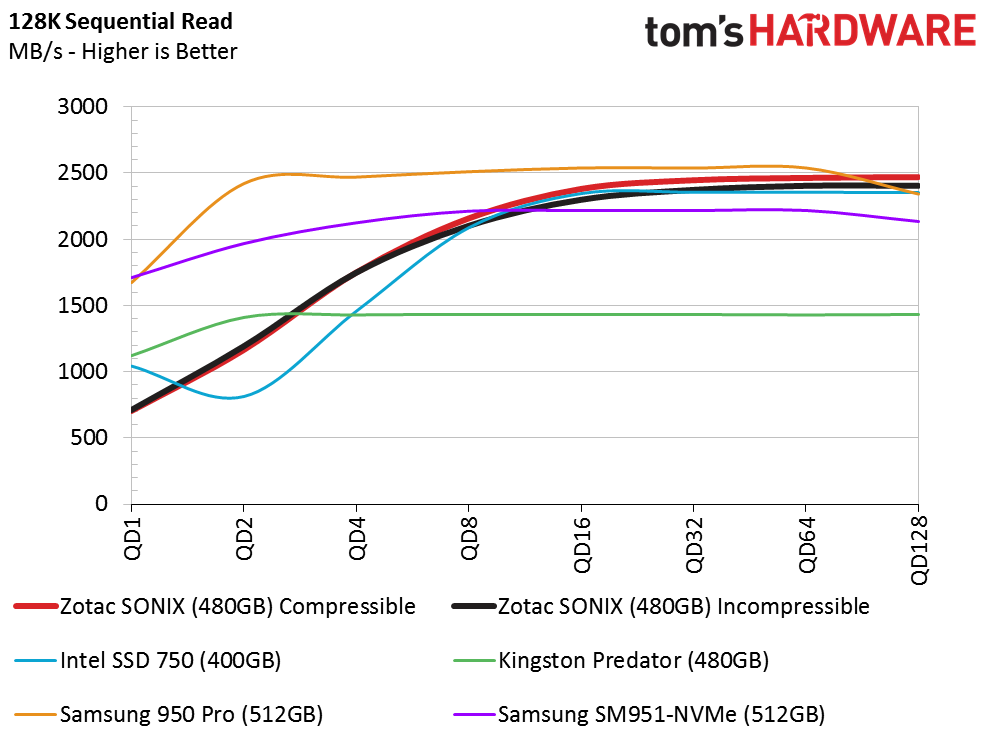

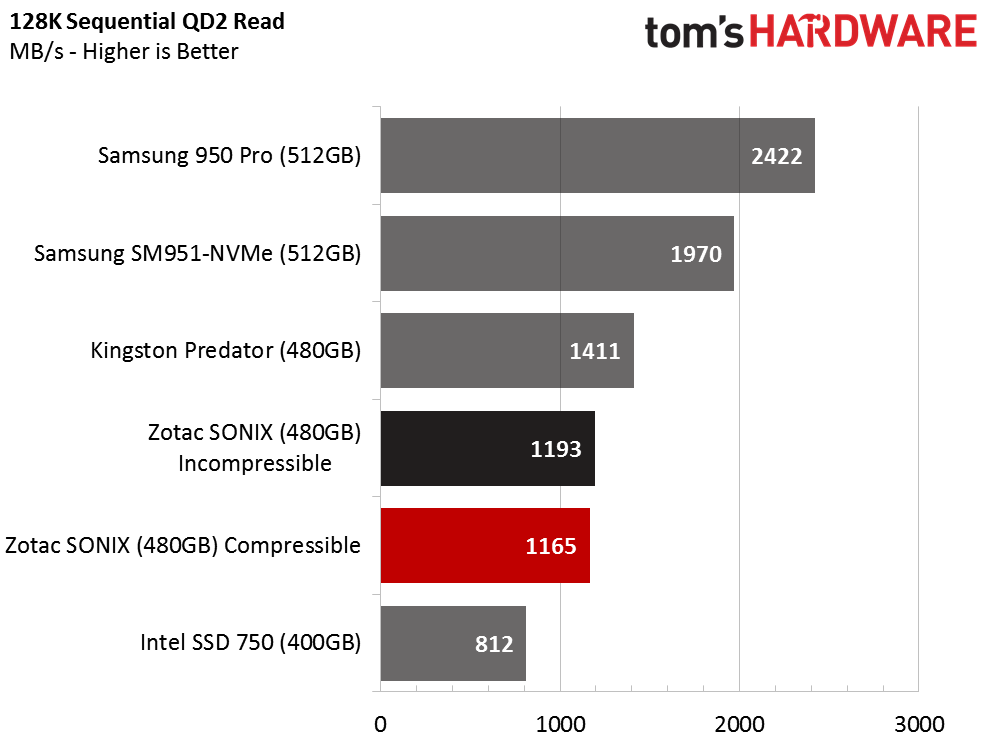

Sequential Read

Our first synthetic tests show the Sonix under the influence of compressible and incompressible data. Most enthusiasts will want to pay attention to performance at queues one or two commands deep. Power users running professional software can look further up the scale. However, anything over a queue depth of eight represents workloads found almost exclusively in enterprise environments.

Zotac's 480GB Sonix, at least in its current state, struggles at a queue depth of one compared to the competing drives already out there. Still, it moves 128KB blocks sequentially faster than what you'd see from a SATA-attached SSD. And the Sonix scales well as the workload intensity rises. At high queue depths, it performs as well as the best NVMe-based desktop drives sold today.

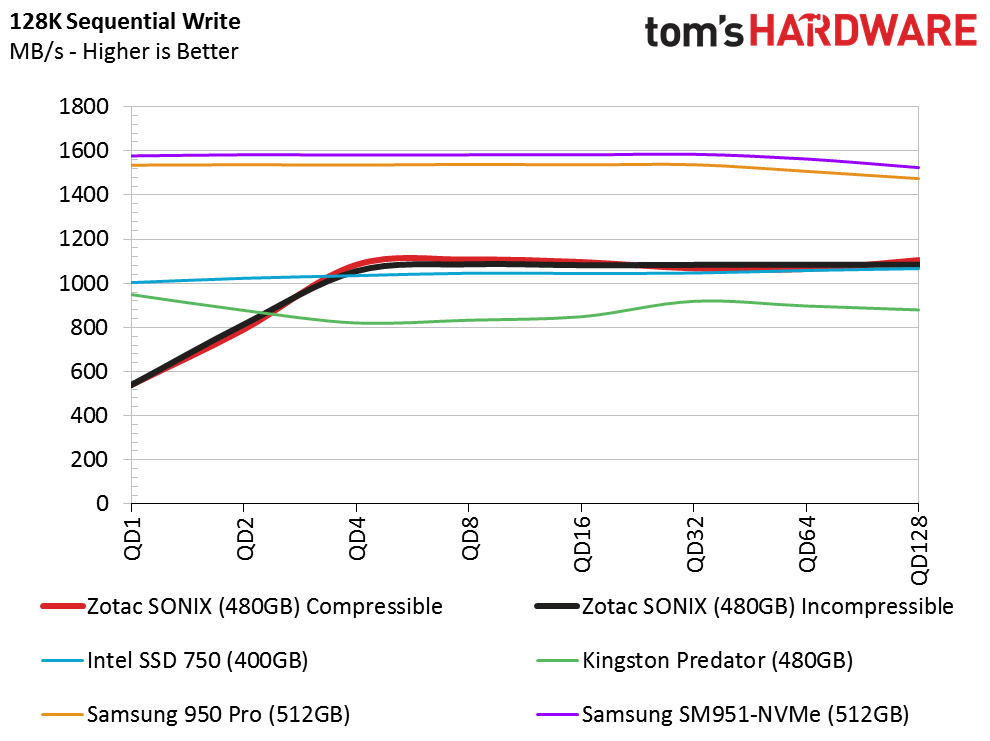

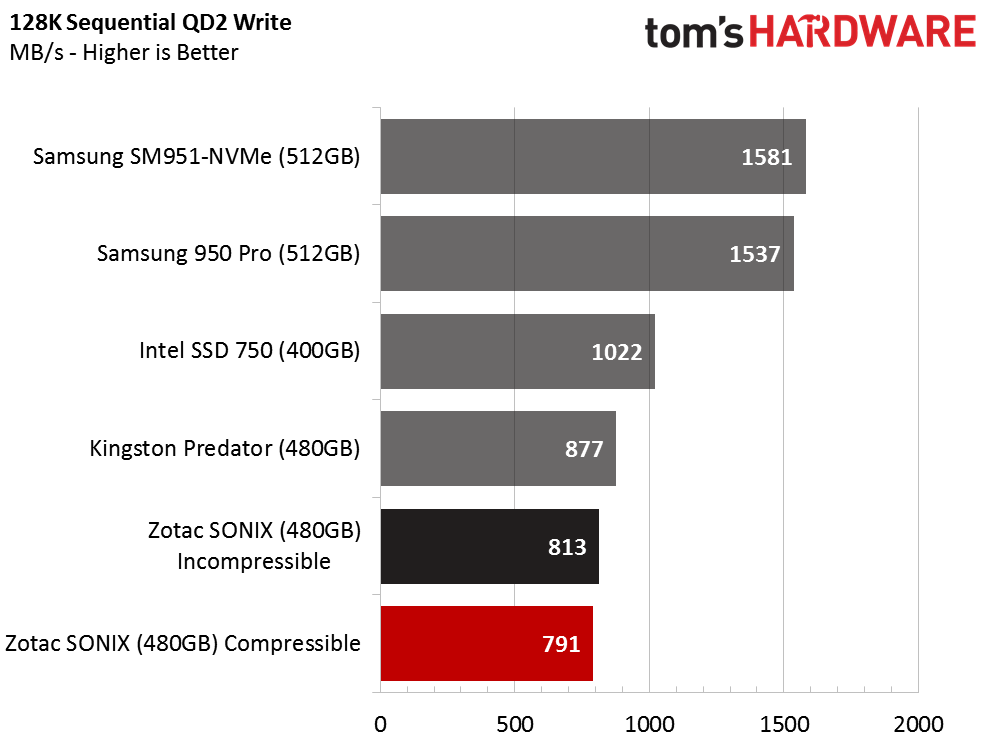

Sequential Write

In our sequential write test, the Sonix falls short of Zotac's performance claims. We expected this; it's an issue we've seen from other NVMe-based drives.


For a bit of context, AHCI provides a single command queue that holds up to 32 commands. NVMe supports as many as 65,535 queues, and each of those queues can hold up to 65,536 commands. Existing software doesn't come close to leveraging those advanced capabilities. Although we could certainly test under conditions more favorable to NVMe to make it look better, the fact is that you simply won't see the interface's full potential for years.
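To put those limits in perspective, the short Python sketch below multiplies out the figures from the AHCI and NVMe comparison a reader cites in the comments (one queue of 32 commands for AHCI; 65,535 queues of 65,536 commands each for NVMe). The constant and function names are ours, chosen purely for illustration, and the result is a ceiling defined by the interface, not something a drive or application is expected to reach.

```python
# Queue limits as cited in the reader correction in the comments.
AHCI_QUEUES = 1
AHCI_DEPTH = 32
NVME_QUEUES = 65_535
NVME_DEPTH = 65_536


def max_outstanding(queues: int, depth: int) -> int:
    """Upper bound on commands the interface can hold in flight at once."""
    return queues * depth


ahci_max = max_outstanding(AHCI_QUEUES, AHCI_DEPTH)
nvme_max = max_outstanding(NVME_QUEUES, NVME_DEPTH)

print(f"AHCI: {ahci_max:,} commands")
print(f"NVMe: {nvme_max:,} commands")
print(f"Ratio: {nvme_max // ahci_max:,}x")
```

By this count, NVMe can hold roughly 134 million times as many commands in flight as AHCI, which is why desktop workloads sitting at a queue depth of one or two barely touch what the interface allows.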

So, we record modest sequential write performance at a queue depth of one and two. At a queue depth of four, the Sonix hits its best performance using our testing methods and levels off through the rest of the range.

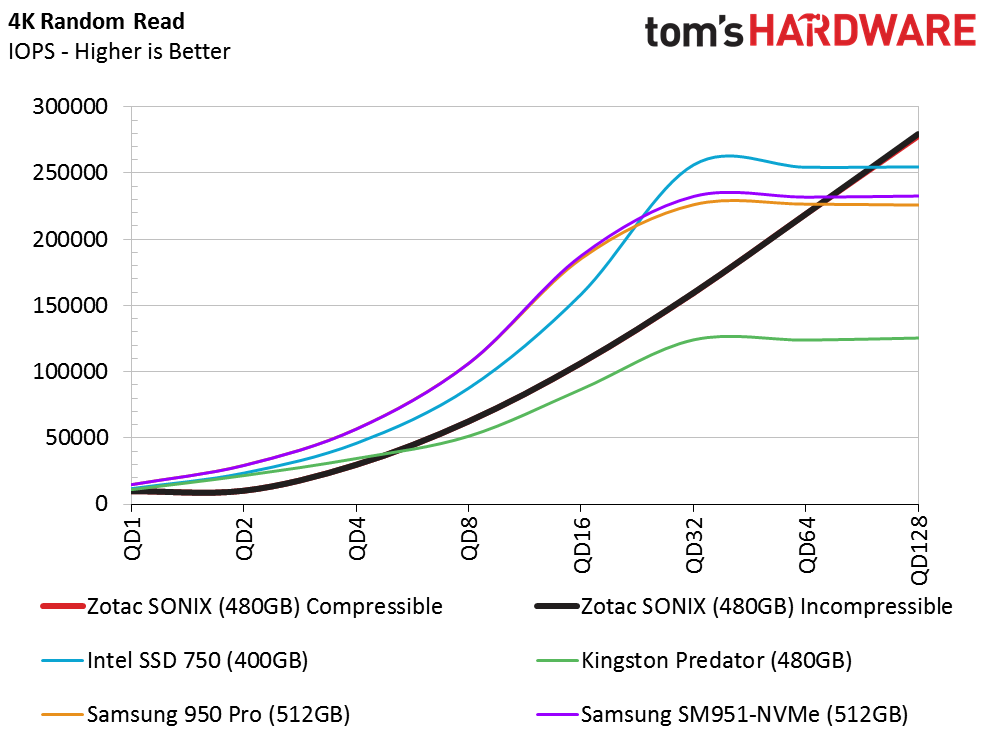

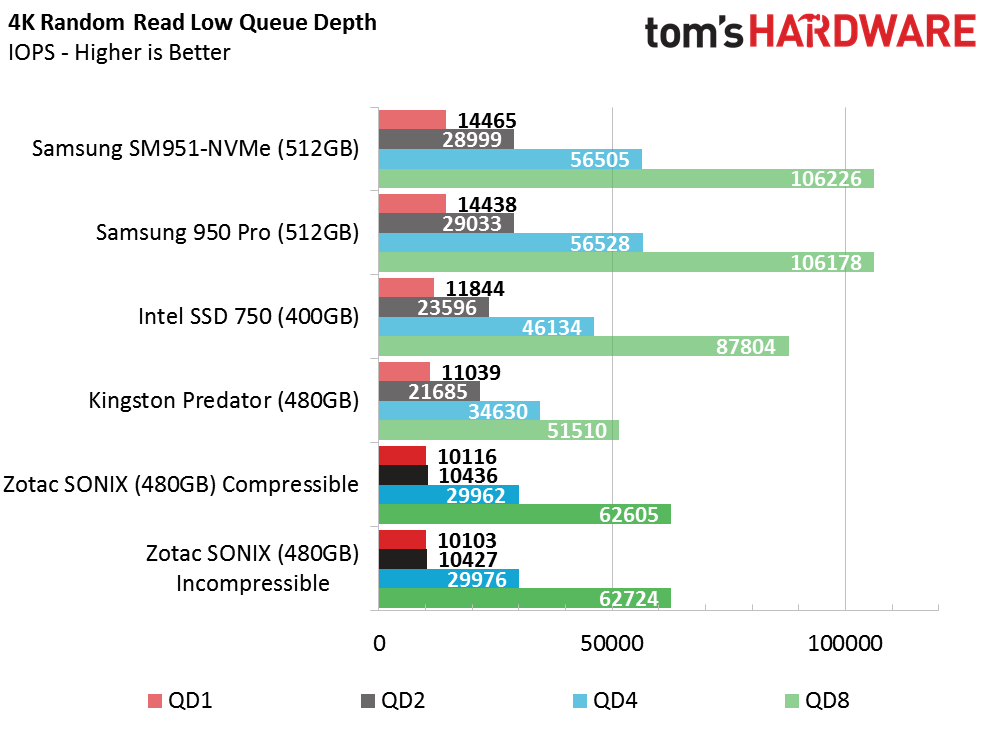

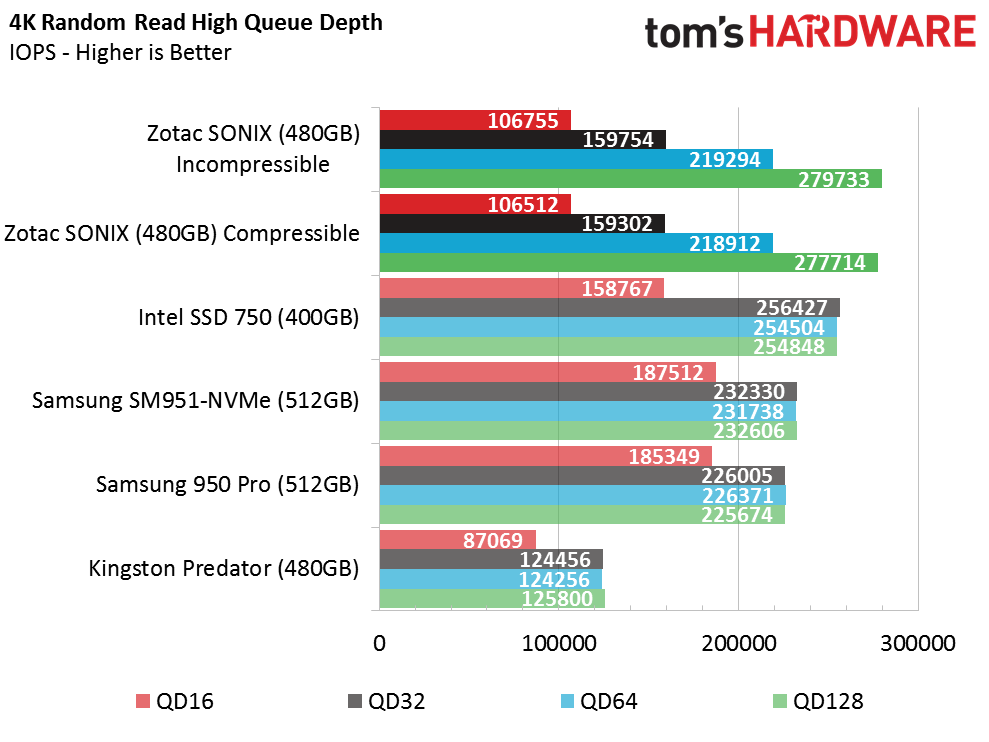

Random Read

Zotac's Sonix delivers just over 10,000 random read IOPS at a queue depth of one, but presents the weakest performance of the group at low queue depths. Conversely, at the other end of the chart, the Sonix delivers better random I/O throughput than its competition. You get exceptional scaling through a queue depth of 128.

Given these numbers, Phison is likely working hard to improve performance at low queue depths before pushing final firmware to eager drive partners. Stability almost assuredly took first priority. But now it's time to coax more speed and additional features from the platform. The next build should include the L1.2 power feature, and we wouldn't be surprised to see optimizations that ameliorate some of these preliminary soft spots.

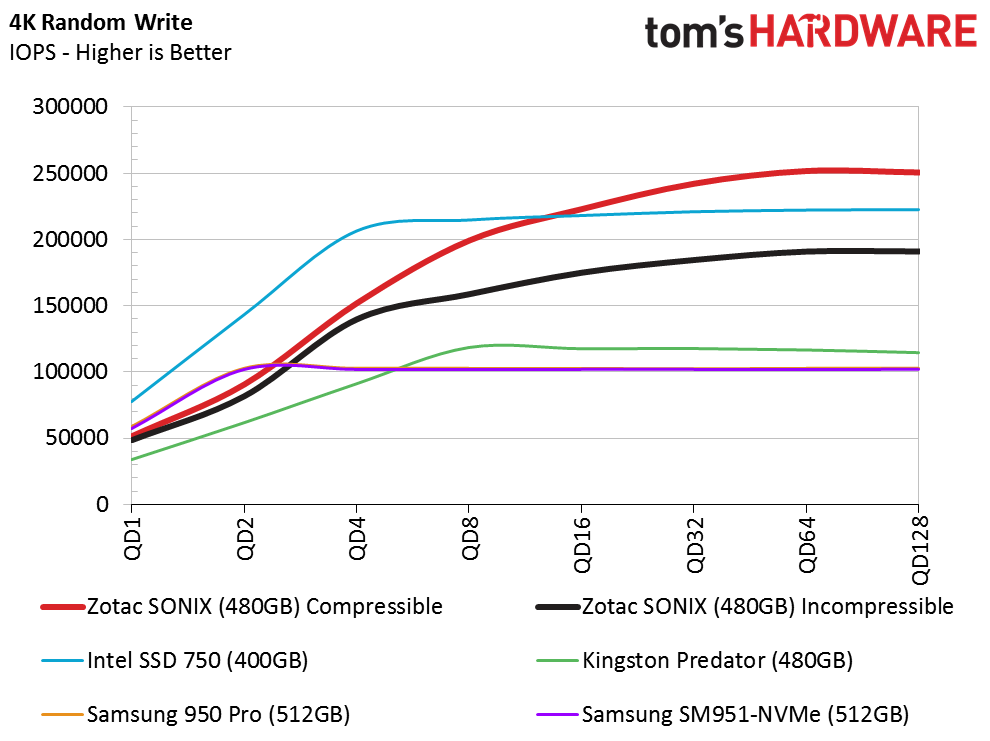

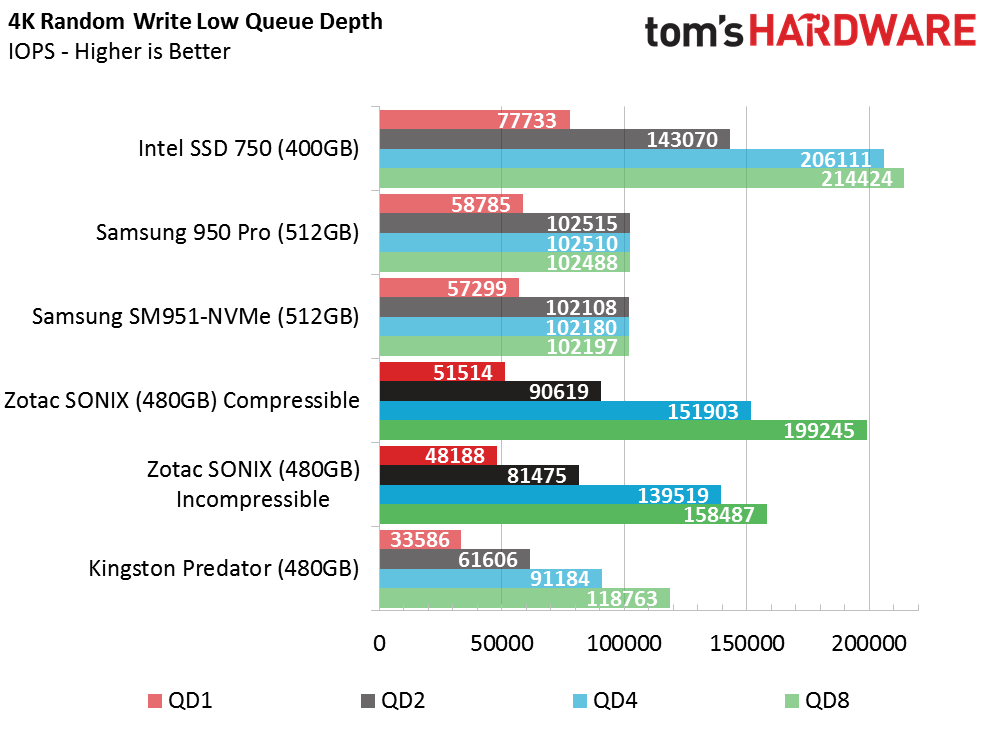

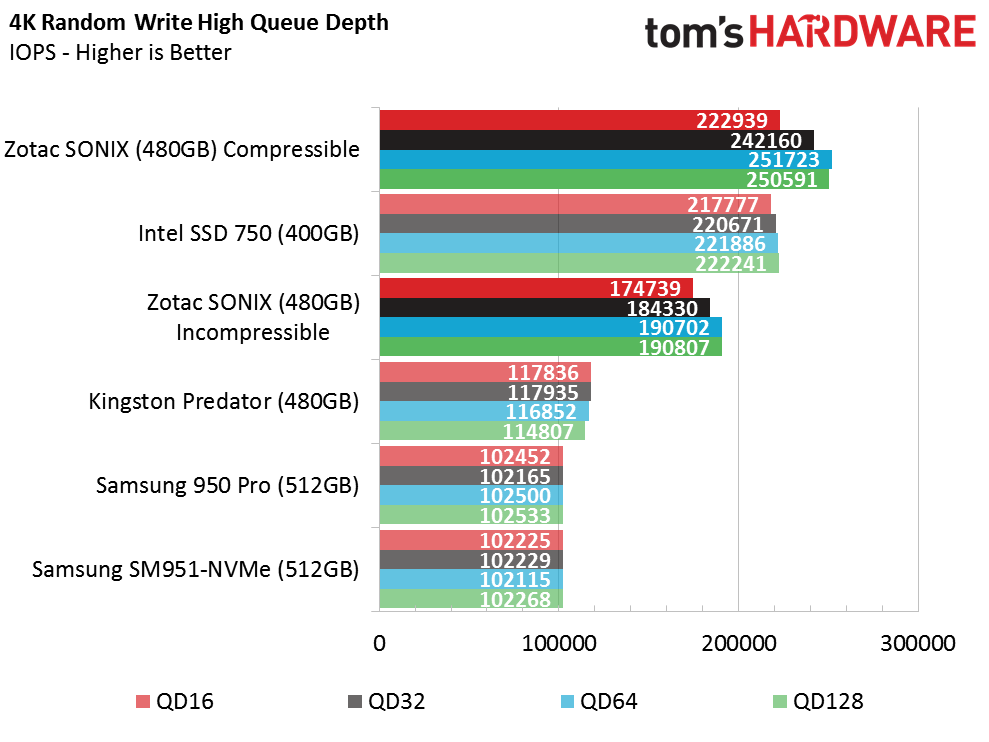

Random Write

The only test other than Anvil where we observed a performance difference between compressible and incompressible data involved small blocks of data written randomly. As we've seen, the Sonix struggles at low queue depths against the 950 Pro and SM951-NVMe. Those two drives don't scale well beyond a queue depth of two though, and hit a wall at 100,000 IOPS. Meanwhile, Zotac's 480GB Sonix continues to scale well, eventually peaking in excess of 250,000 IOPS.


Chris Ramseyer was a senior contributing editor for Tom's Hardware. He tested and reviewed consumer storage.


2Be_or_Not2Be Is it too much to ask that all of your graphs keep the same colors for each product? One page the Intel 750 is light blue; the next page, it's black. Consistency here would help the reader.

CRamseyer The different colors in the first and later parts of the review are a one-off. We added the compressible / incompressible tests early in the review, so there was a shift. In the future I'll just use a red dotted line if adding something special.

Kewlx25 Correction:

"For a bit of context, AHCI operates on one command at a time, but can queue up to 32 of them. NVMe can operate on as many as 256 commands simultaneously, and each of those commands can queue another 256."

https://en.wikipedia.org/wiki/NVM_Express#Comparison_with_AHCI

NVMe: 65535 queues; 65536 commands per queue

CRamseyer You are correct, that was my fault. I built a test that does 256 / 256 to see how it works. I'll make the correction.

josejones Good article, Chris Ramseyer.

"Real-world software rarely pushes fast storage devices to their limits"

Chris, I am curious about what holds NVMe SSDs back from reaching their full potential. What needs to come together for that to happen? Will Kaby Lake and the new 200-series Union Point chipset motherboards help to get better performance out of the new NVMe and Optane SSDs? I've heard we need a far bigger bus too. I am holding out for an NVMe SSD that will actually reach the claimed 32 Gb/s or close to it, minus overhead.

TbsToy So Chris, what is your opinion on which of these drives should be used where: workstation, desktop PC, laptop, etc.? Which drive in this group is the most suitable for which machine?

W.P.

TbsToy So Chris, which of these drives do you consider to be the most useful with which type of machine: server, workstation, PC, or laptop?

W.P.

Kewlx25 In reply to josejones's question about what holds NVMe SSDs back from their full potential:

This is my semi-educated guess.

1) The storage chips need to be faster, but they are pretty fast.

2) Controllers need to be faster: less complicated overhead, better command concurrency, etc.

3) There is a latency vs throughput issue. If most programs are making one request at a time and waiting for the response for that request, then you need really low latency to have high bandwidth. On the other hand, if a program makes many concurrent requests, then it just multiplied its theoretical peak bandwidth.

This is similar to why TCP has a transmit window: waiting for a response over a high-latency link slows you down. The main difference is that TCP pushes data, while reading from a drive pulls data.
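The latency-versus-throughput point above can be sketched with Little's law, which says the number of requests completed per second equals the number in flight divided by the time each one takes. The Python below is a minimal illustration, not a model of any drive in this review: the function name, the 100-microsecond latency, and the 4 KiB block size are all assumptions, and it holds latency constant as queue depth rises, which real drives only approximate until they saturate.

```python
def throughput_mb_s(queue_depth: int, latency_us: float, block_kib: int = 4) -> float:
    """Idealized bandwidth in MB/s from Little's law.

    Requests completed per second = requests in flight / time per request.
    """
    iops = queue_depth * 1_000_000 / latency_us
    return iops * block_kib * 1024 / 1_000_000


# Hypothetical figures: 100 us per request, 4 KiB blocks, latency held constant.
for qd in (1, 2, 4, 32):
    print(f"QD{qd}: {throughput_mb_s(qd, 100.0):.2f} MB/s")
```

In this model, a program that issues one request at a time can only go faster if latency drops, while a program that keeps 32 requests in flight gets 32 times the bandwidth at the same latency.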

CRamseyer Optane will change things a bit because it lowers the QD1 latency. It will make your computer feel faster, thus improving the user experience.

Beyond that, we need a complete overhaul to effectively utilize NVMe in regular computers. The software needs to reach out for more data at the same time. The Windows file systems (other than ReFS) are all aging. We need a big shift in software across the board. It's just like with video games and other software right now. Nothing pushes the limits of the hardware. VR could change that, but I suspect we are still 5 years away from VR for anyone other than enthusiasts.

TbsToy - NVMe accelerates all tasks by lowering latency. We're starting to see the tech ship in notebooks from MSI and Lenovo. Custom desktops from companies like Maingear and AVA sell with NVMe as well. I would say use it wherever you find a place. The Samsung NVMe drives sell for a very small premium over the SATA-based 850 Pro. You get workstation and in some cases enterprise-level performance capabilities for the rare instances when the load gets that high.

jt AJ In reply to CRamseyer:

CPUs are hitting a hard limit because software isn't capable of taking advantage of new instructions, which is why we see 5% CPU improvements year after year. That's also down to Microsoft's legacy support in Windows, which is why so many old programs still work on new versions of Windows.

When software is programmed to take advantage of new CPU instructions, we'll see a huge jump in CPU performance, which would mean more data pulled from the SSD overall, just faster.