Microsoft Azure Unveils First AMD EPYC Instances

AMD's EPYC server processors have finally landed in their first public cloud instances. Microsoft Azure's new Lv2 VM family is destined to host storage-optimized workloads, but we imagine these will be the first in a series of new cloud instances for various purposes.

The new instances are important to AMD's efforts from a broader perspective. AMD's EPYC, as a new and relatively unproven architecture, is going to require some time to gain broad acceptance in the data center. Hardware qualification cycles are arduous, and most enterprise operators are notoriously reluctant to bet on new architectures. That serves Intel well with its 99.4% market share in the data center, but it makes it difficult for AMD to come in and swipe market share.

Intel is defending its turf vigorously, and recently released a competitive performance analysis that claims superiority over AMD's EPYC in most workloads. Of course, benchmarks from a competitor aren't always a solid measure of performance. AMD has its strengths, and providing access to cloud instances is a great way to spread the message.

Two of AMD's 32C/64T EPYC 7551 processors power the new Azure instances, though they are carved up into varying numbers of vCPUs for customers. The instances come equipped with healthy rations of both memory and storage. According to Azure, the server instances are ideal for MongoDB, Cassandra, and Cloudera. These workloads are storage intensive and demand high levels of I/O.

| Size | vCPUs | Memory (GB) | Local SSD |
|---|---|---|---|
| L8s | 8 | 64 | 1 x 1.9TB |
| L16s | 16 | 128 | 2 x 1.9TB |
| L32s | 32 | 256 | 4 x 1.9TB |
| L64s | 64 | 512 | 8 x 1.9TB |
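The sizes in the table scale linearly, which is easier to see with the ratios worked out. The short Python sketch below only does arithmetic on the figures listed above; the names `LV2_SIZES`, `SSD_TB`, and `summarize` are made up for illustration and are not part of any Azure API.

```python
# Instance sizes copied from the table: (vCPUs, memory in GB, number of 1.9TB SSDs).
LV2_SIZES = {
    "L8s": (8, 64, 1),
    "L16s": (16, 128, 2),
    "L32s": (32, 256, 4),
    "L64s": (64, 512, 8),
}

SSD_TB = 1.9  # capacity of each local SSD, as listed in the table


def summarize(sizes):
    """Return per-size ratios derived purely from the table figures."""
    summary = {}
    for name, (vcpus, memory_gb, ssd_count) in sizes.items():
        summary[name] = {
            "memory_per_vcpu_gb": memory_gb / vcpus,
            "vcpus_per_ssd": vcpus / ssd_count,
            "raw_ssd_tb": round(ssd_count * SSD_TB, 1),
        }
    return summary


if __name__ == "__main__":
    for name, row in summarize(LV2_SIZES).items():
        print(name, row)
```

Every size works out to 8GB of memory per vCPU and one SSD per eight vCPUs, so choosing a size is mostly a question of how much raw local capacity a workload needs.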

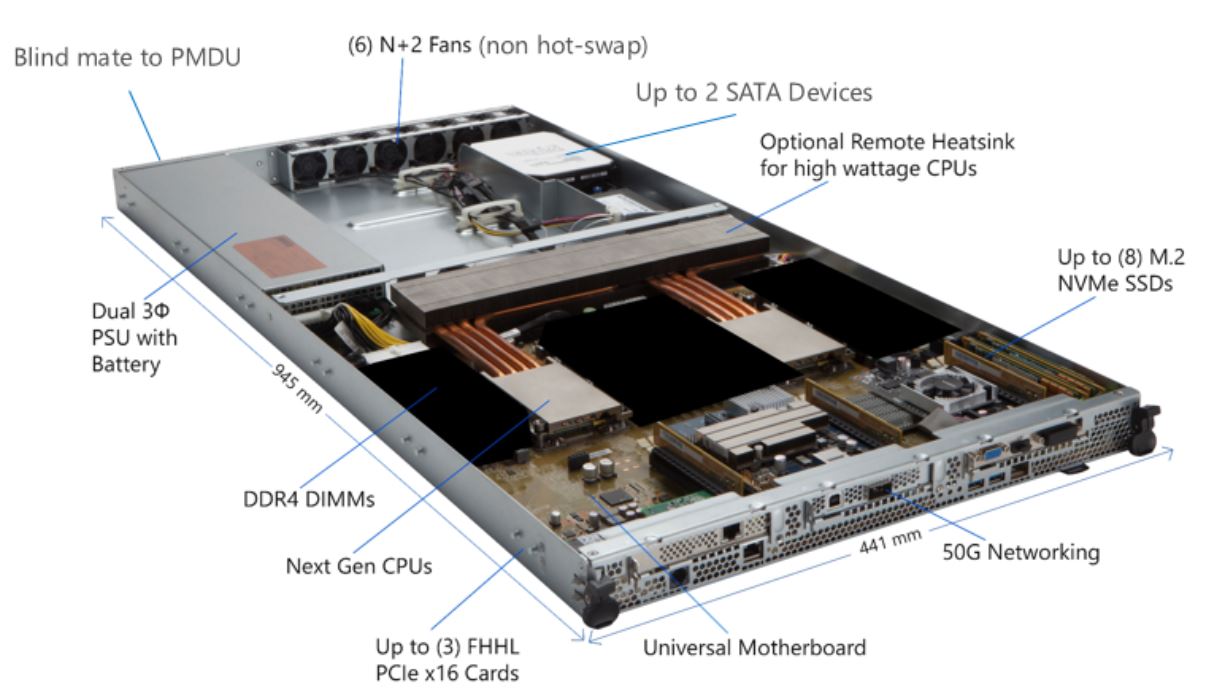

Microsoft is deploying the EPYC servers using its Project Olympus design, which is part of Microsoft's next-gen hyperscale cloud hardware platform. Microsoft shares the design freely with the Open Compute Project (OCP). That means other vendors, such as whitebox and ODM manufacturers, are free to use the same designs to bring less-expensive servers to market for hyperscale data centers.

Storage consists of up to eight M.2 NVMe SSDs that ostensibly consume four PCIe 3.0 lanes apiece. EPYC's hefty allotment of 128 PCIe lanes, which Intel can't match, easily supports the 32 lanes needed for the PCIe storage and leaves plenty of PCIe lanes available for the 50G networking and up to three PCIe 3.0 x16 add-in cards.
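The lane math is simple enough to check by hand, but a small sketch makes the headroom explicit. This Python snippet uses only the figures quoted above (128 lanes, eight x4 SSDs, three x16 cards). The lane width of the 50G networking is not stated, so it is left out of the tally and would come out of the remainder. The names `lane_budget` and `CONSUMERS` are hypothetical.

```python
TOTAL_LANES = 128  # PCIe lane count quoted in the article

# Device type -> (count, lanes per device), using only figures from the article.
# The 50G networking is omitted because its lane width is not given.
CONSUMERS = {
    "m2_nvme_ssd": (8, 4),       # up to eight M.2 SSDs at x4 each
    "x16_add_in_card": (3, 16),  # up to three PCIe 3.0 x16 cards
}


def lane_budget(total, consumers):
    """Return (lanes used, lanes remaining) for the given device mix."""
    used = sum(count * width for count, width in consumers.values())
    return used, total - used


if __name__ == "__main__":
    used, remaining = lane_budget(TOTAL_LANES, CONSUMERS)
    print(f"used={used} remaining={remaining}")
```

With storage and all three add-in cards populated, 80 lanes are spoken for, which leaves 48 for networking and anything else on the board.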

The new Azure instances give AMD a foot in the door with Microsoft, which will likely lead to other types of instances on Azure. AMD also tells us to expect other cloud vendors to adopt EPYC platforms. For now, Intel has a stranglehold on the cloud computing space, but AMD's EPYC presents a real threat. We haven't seen instances from the ARM camp, such as Qualcomm's Centriq and Cavium's ThunderX2, make their way to the cloud providers, at least not yet. Pending M&A activity with both Qualcomm (Broadcom) and Cavium (Marvell) has many skittish about the future of the respective ARM platforms. That certainly doesn't help engender confidence among prospective customers, who would have to expend significant resources to retool and qualify their software stacks for ARM.


AMD's x86 pedigree and corporate stability are very attractive to hyperscale cloud operators. AMD is likely to gain some amount of sales from these new endeavors, but more importantly, it allows the company to prove that its architecture is competitive in a production environment.

The cloud has proven disruptive in many ways, but using it to provide broad and easy access to a competing processor architecture is a great strategy for AMD. Now users who might not be willing to invest in hardware can get their hands on EPYC hardware, if only virtually.

For now, the instances are in preview mode only, but we expect them to be more broadly available soon.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

pravin.shevade: Good to see the first big customer for AMD. Hope they put pressure on Intel in the server market like they did in the desktop market with Ryzen.

Good luck to AMD, the future looks to be brilliant for team Red.

redgarl: @ Pravin, I would say better at least. As for brilliant, it will be AMD's job to prove it. However, I don't understand how a CPU priced more than half the price of the competition for similar performance can go wrong unless Intel prevents suppliers from selling competing hardware.