Intel Releases Bare-Metal oneAPI Level Zero Specification

Intel is plowing forward on a key component of its Xe Architecture

Intel has released the oneAPI Level-Zero Interface specification. Giving bare-metal access to accelerators, it complements the API-based and direct programming models of oneAPI, Intel’s open programming model for heterogeneous systems that launched in November.

oneAPI: No Transistor Left Behind

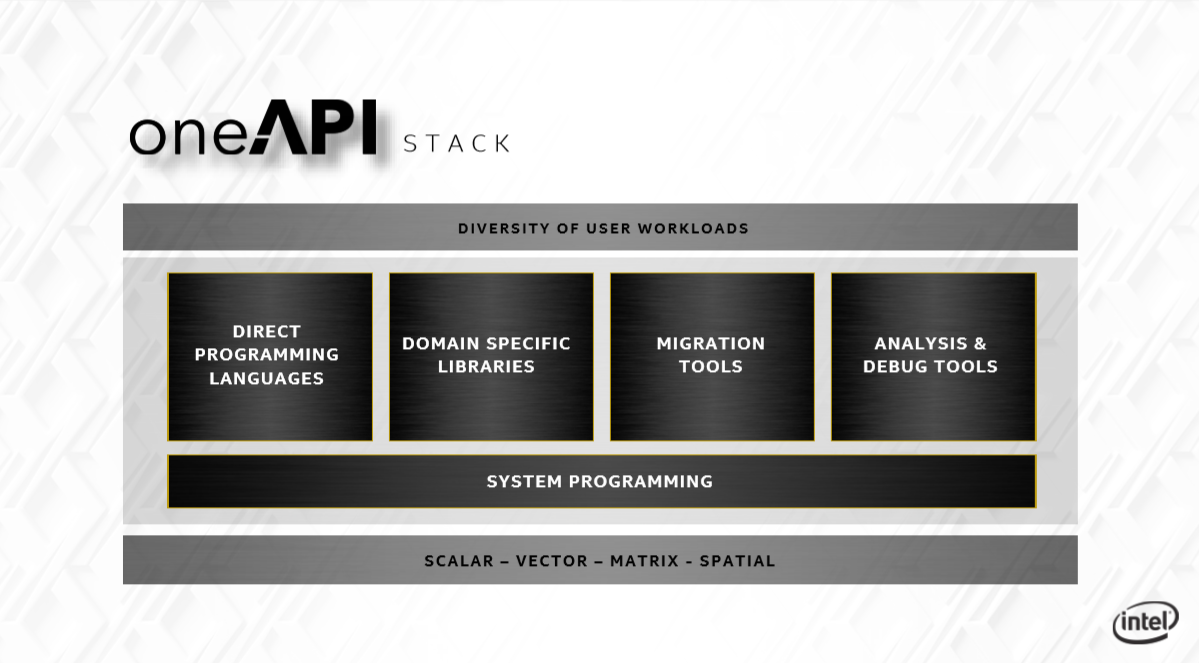

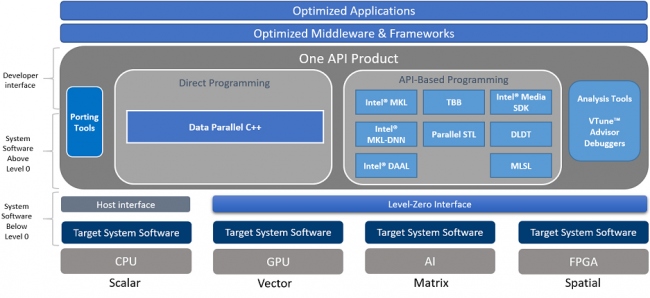

oneAPI is Intel’s ambitious initiative for a unified programming model for performance-driven, cross-architecture applications. It provides code reuse and aims to eliminate the complexity of separate code bases and multiple tools and workflows. Its beta was released to the public at Intel’s HPC DevCon in November. It will also power the Aurora exascale supercomputer.

oneAPI is based on industry standards and open specifications, and consists of both the industry initiative and an Intel implementation of oneAPI. The industry initiative, which is open to all hardware vendors, specifies both a direct programming language, Data Parallel C++ (based on C++ and the SYCL cross-platform abstraction layer), and API-based programming for accelerating domain-focused functions. Many parts of it are open source. For example, software developer Codeplay has already announced it is working on Nvidia GPU support for oneAPI.

The Intel product adds a compatibility tool (for CUDA code, for example), Intel’s distribution of Python, an FPGA add-on, and analysis and debug tools. It currently supports Intel CPUs (Core, Xeon and Atom), Intel’s integrated graphics and Arria FPGAs.

oneAPI Level Zero

Underlying those direct and API library programming models is also a low-level, direct-to-metal interface to accelerator hardware that was released this week, according to Phoronix: oneAPI Level Zero.

The Level-Zero API serves a dual purpose. It gives applications fine-grained access to low-level functions, although most applications won’t require such explicit control. It also provides that control to the higher-level runtime APIs and libraries.

Intel acknowledges that the Level-Zero APIs are influenced by other low-level APIs, such as OpenCL, and by GPU architecture. They differ in that they are designed to “evolve independently” and to support different compute device architectures, such as FPGAs and deep learning accelerators.

Intel says the interface can be published at a cadence that matches its own hardware releases while also providing more language functions: “It can be adapted to support broader set of languages features, such as function pointers, virtual functions, unified memory, and I/O capabilities,” according to the specification overview.

Its functionality includes:

- Device Discovery

- Memory Allocation

- Peer-to-Peer Communication

- Inter-Process Sharing

- Kernel Submission

- Asynchronous Execution and Scheduling

- Synchronization Primitives

- Metrics Reporting

- System Management Interface