GeForce RTX 3080 Max-Q Rumored to Use Full GA104 GPU

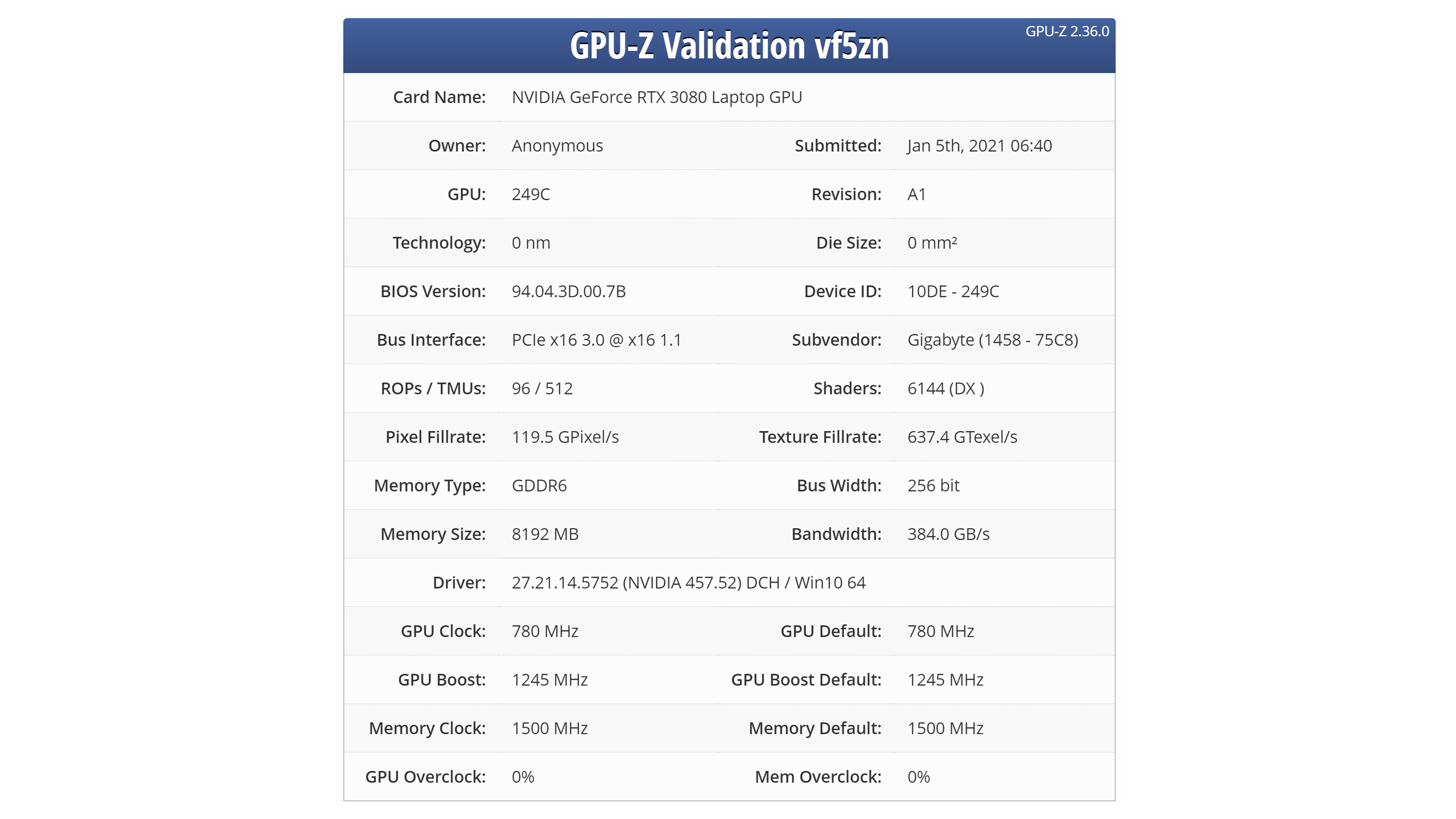

A GPU-Z validation file submitted to TechPowerUp yesterday has reportedly uncovered the GeForce RTX 3080 Max-Q's complete specifications. Gigabyte is reportedly the vendor of the device, which aligns with the brand's latest Aorus and Aero gaming laptops that feature the same GeForce RTX 3080 Max-Q. Nevertheless, the information should be taken with a pinch of salt since Nvidia hasn't officially announced its mobile Ampere graphics cards yet.

The GeForce RTX 3080 Max-Q reportedly utilizes the GA104 silicon, which is the same one that powers the GeForce RTX 3070 and RTX 3060 Ti. The die selection seems plausible, since the GA104 silicon is much smaller than the GA102 silicon that powers the GeForce RTX 3080, and GA104 GPUs have much lower TDPs. By comparison, the RTX 3070 desktop GPU has a 220W TDP while the 3080 has a 320W TDP. Similarly, GA104 measures 392 mm², while GA102 checks in at 628 mm². Given the more confined space in a laptop chassis, plus power restrictions, there's a strong incentive to use the smaller GA104 chip.

What's interesting is that the GPU-Z submission suggests that Nvidia has endowed the GeForce RTX 3080 Max-Q with the full GA104 die. As a result, the mobile flagship Ampere SKU would have 48 enabled Streaming Multiprocessors (SMs) at its disposal, which works out to 6,144 CUDA cores, 192 Tensor cores and 48 RT cores. For reference, the GeForce RTX 3070 only enjoys 46 out of the potential 48 SMs. Of course, it has the 3080 branding, so Nvidia had to do everything it could to help it bridge the gap between the desktop and mobile parts, but the rumored specs show just how far Nvidia has had to go.
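The unit counts follow directly from the SM count. Here is a minimal sketch of that arithmetic, assuming the per-SM layout Nvidia has published for consumer Ampere (128 CUDA cores, 4 Tensor cores and 1 RT core per SM); those constants and the `unit_counts` helper are illustrative assumptions, not part of the GPU-Z entry.

```python
# Per-SM resources for consumer Ampere (GA10x). Assumed from Nvidia's public
# architecture material, not read from the leaked GPU-Z submission.
CUDA_PER_SM = 128
TENSOR_PER_SM = 4
RT_PER_SM = 1


def unit_counts(enabled_sms: int) -> dict:
    """Return the CUDA, Tensor and RT core totals for a given number of SMs."""
    return {
        "cuda": enabled_sms * CUDA_PER_SM,
        "tensor": enabled_sms * TENSOR_PER_SM,
        "rt": enabled_sms * RT_PER_SM,
    }


# 48 SMs is the rumored Max-Q configuration; 46 SMs is the desktop RTX 3070.
for name, sms in (("RTX 3080 Max-Q (rumored)", 48), ("RTX 3070", 46)):
    print(name, unit_counts(sms))
```

Both results line up with the CUDA, Tensor and RT core counts quoted for the two parts.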

Nvidia GeForce RTX 3080 Max-Q Specifications

| Specification | GeForce RTX 3080 | GeForce RTX 3080 Max-Q* | GeForce RTX 3070 |
|---|---|---|---|
| Architecture (GPU) | Ampere (GA102) | Ampere (GA104) | Ampere (GA104) |
| CUDA Cores | 8,704 | 6,144 | 5,888 |
| RT Cores | 68 | 48 | 46 |
| Tensor Cores | 272 | 192 | 184 |
| Texture Units | 272 | 192 | 184 |
| Base Clock Rate | 1,440 MHz | 780 MHz | 1,500 MHz |
| Boost Clock Rate | 1,710 MHz | 1,245 MHz | 1,730 MHz |
| Memory Capacity | 10GB GDDR6X | 8GB GDDR6 | 8GB GDDR6 |
| Memory Speed | 19 Gbps | 12 Gbps | 14 Gbps |
| Memory Bus | 320-bit | 256-bit | 256-bit |
| Memory Bandwidth | 760 GBps | 384 GBps | 448 GBps |
| ROPs | 88 | 96 | 96 |
| L2 Cache | 5MB | 4MB | 4MB |
| TDP | 320W | 80W | 220W |
| Transistor Count | 28.3 billion | 17.4 billion | 17.4 billion |
| Die Size | 628 mm² | 392 mm² | 392 mm² |

*Specifications are unconfirmed.

The GeForce RTX 3080 Max-Q will purportedly rock a 780 MHz base clock and 1,245 MHz boost clock. However, bear in mind that this is the Max-Q variant that's tailored toward thin and light gaming laptops. The standard mobile variant, more commonly referred to as Max-P, has more thermal headroom to stretch its legs. It's not set in stone, but the Max-Q and Max-P variants typically feature TDP (thermal design power) ratings up to 80W and 150W, respectively.

Not that FP32 performance is the end-all and be-all metric, but the GeForce RTX 3080 Max-Q should be capable of delivering up to 15.30 TFLOPS. In contrast, the GeForce RTX 3070 delivers 20.31 TFLOPS, offering 32.7% higher FP32 performance, and the desktop 3080 is potentially twice as fast, though it also draws four times as much power.
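These figures come from the usual peak-throughput estimate: CUDA cores × 2 FLOPs per clock (one fused multiply-add) × boost clock. The sketch below reproduces them. Note one assumption: the 20.31 TFLOPS figure for the RTX 3070 corresponds to a 1,725 MHz boost clock, whereas the rounded 1,730 MHz value in the table would give roughly 20.37 TFLOPS. The `fp32_tflops` helper is illustrative.

```python
def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPS: one FMA (2 FLOPs) per core per clock."""
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12


max_q = fp32_tflops(6144, 1245)     # rumored Max-Q core count and boost clock
rtx_3070 = fp32_tflops(5888, 1725)  # assumption: 1,725 MHz, see note above
rtx_3080 = fp32_tflops(8704, 1710)  # desktop RTX 3080

print(f"RTX 3080 Max-Q: {max_q:.2f} TFLOPS")
print(f"RTX 3070:       {rtx_3070:.2f} TFLOPS ({rtx_3070 / max_q:.2f}x the Max-Q)")
print(f"RTX 3080:       {rtx_3080:.2f} TFLOPS ({rtx_3080 / max_q:.2f}x the Max-Q)")
```

These are theoretical peaks at the rated boost clock; sustained clocks in a thin laptop chassis may well be lower.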

It's worth noting that if these specs are correct, Nvidia is altering its recent strategy quite significantly for its mobile parts. The RTX 20-series mobile chips used the same GPU as their desktop counterparts (so 2080 Max-Q was TU104, just like the 2080 desktop). The same was true of most GTX 10-series and GTX 900-series mobile chips. The last time Nvidia took the "desktop minus one" approach to mobile GPUs was with the 700-series, where the 780M used GK104 while the GTX 780 desktop used GK110. Just like Nvidia skipped doing mobile 2080 Ti and 1080 Ti, likely due to power requirements, it's effectively skipping the 3080 and 3090 performance tiers with Ampere.


As for memory, the GeForce RTX 3080 Max-Q appears to come equipped with 8GB of GDDR6. However, this may be one of two configurations that Nvidia will offer, since we've already seen retailer listings of a GeForce RTX 3080 Max-Q with 16GB of GDDR6.

While we can't speak for the 16GB variant, the 8GB version evidently runs at 12 Gbps across a 256-bit memory interface, for a memory bandwidth of up to 384 GBps. That means the GeForce RTX 3070's memory throughput is only 16.7% higher than the GeForce RTX 3080 Max-Q's.
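The bandwidth numbers are simply the per-pin data rate multiplied by the bus width, divided by eight to convert bits to bytes. A short sketch of that calculation, with `bandwidth_gbytes` as an illustrative helper name:

```python
def bandwidth_gbytes(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from per-pin data rate and bus width."""
    return data_rate_gbps * bus_width_bits / 8


max_q = bandwidth_gbytes(12, 256)     # rumored RTX 3080 Max-Q (8GB)
rtx_3070 = bandwidth_gbytes(14, 256)  # desktop RTX 3070
rtx_3080 = bandwidth_gbytes(19, 320)  # desktop RTX 3080

advantage = (rtx_3070 / max_q - 1) * 100
print(f"Max-Q: {max_q:.0f} GB/s, 3070: {rtx_3070:.0f} GB/s, 3080: {rtx_3080:.0f} GB/s")
print(f"RTX 3070 advantage over Max-Q: {advantage:.1f}%")
```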

Since Ampere is a high performer, laptop vendors will probably pair the graphics card with the upcoming Intel Tiger Lake-H and AMD Ryzen 5000 (Cezanne) processors. The GeForce RTX 3080 Max-Q, like its other Ampere siblings, can leverage the PCIe 4.0 interface; however, only Tiger Lake-H supports that interface. That doesn't mean that Ryzen 5000 will lose out on any performance though, since it hasn't been demonstrated whether PCIe 4.0 actually boosts graphical performance in a mobile package.

We're looking forward to testing actual RTX 30-series mobile parts and seeing how they stack up to the existing RTX 20-series. Ampere desktop GPUs have increased TDP to help boost performance, but tuning a chip for maximum efficiency can still yield competitive results. A 20 percent cut in clock speeds, with lower voltages, often means close to half the power requirements. Will RTX 3080 Max-Q be able to surpass the RTX 2080 Super Max-Q? Almost certainly. The full power RTX 2080 Super Max-P will be a more difficult target.
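The "20 percent lower clocks, roughly half the power" rule of thumb can be illustrated with the textbook approximation that dynamic power scales with frequency times voltage squared. The sketch below is a rough model only: it ignores static leakage, and the voltage scale factors are hypothetical values chosen for illustration, not measured Ampere operating points.

```python
def relative_dynamic_power(freq_scale: float, voltage_scale: float) -> float:
    """Dynamic power relative to baseline, using P proportional to f * V^2."""
    return freq_scale * voltage_scale ** 2


# Assumption: voltage can be lowered roughly in step with the clock cut.
same_voltage = relative_dynamic_power(0.80, 1.00)  # clock cut only
lower_voltage = relative_dynamic_power(0.80, 0.80)  # clock and voltage cut

print(f"20% lower clock, same voltage:      {same_voltage:.0%} of baseline power")
print(f"20% lower clock, 20% lower voltage: {lower_voltage:.0%} of baseline power")
```

Under these assumptions, most of the saving comes from the voltage reduction rather than from the clock cut itself.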

Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.

-

cryoburner This "3080 Max-Q" likely won't perform all that much better than a desktop 3060 (non-Ti). Even hardware-wise it will be more like a massively-underclocked 3070, not even sharing the same chip as the similarly-named desktop part. The desktop 3080's graphics chip is nearly 60% larger than the one in the 3070 or this "Max-Q" card in terms of physical dimensions.

But at least they gave it a model number that can trick people into paying top dollar for laptops containing it, who hopefully won't be too surprised when they only get a little over half the performance of a desktop 3080 at higher resolutions.

-

JRB623 As the article says, "Not that FP32 performance is the end-all and be-all metric," so we won't know its performance for sure until it comes out. Point being that the 2080 Ti delivers 14.2 TFLOPS vs the 3070's 20.31 TFLOPS, yet based on UserBenchmark data the 2080 Ti ekes out a 4% real world performance lead in games over the 3070 and a 14% lead in synthetic benchmarks. (not counting raytracing)

A brief take on raytracing, and why I made the distinction above: I believe that, for at least the next year, it will not be as big a factor in gaming as the next gen wants it to be, because it is not yet mainstream. As of late Dec 2020 only 26 games utilize ray tracing, and some of the games that implement it don't always benefit graphically because of their graphic style, such as World of Warcraft, yet they still take the performance hit, which makes raytracing unbeneficial in such games. Standard 2k-4k gaming still seems to be the key focus, so that is what I base my personal performance standards on until that changes, and how I think others should too. To reinforce my assertion, keep in mind that the entire GTX 1000 series and its AMD counterparts were advertised for the future gaming gimmick that was to be VR, and the consoles soon followed suit, not unlike what we are seeing now with RT, but look where VR is today. ¯\(ツ)/¯

So I do not believe we can conclude from the given hardware specs alone whether the RTX 3080 Max-Q will perform around a desktop 3070, worse, or better. We know the RTX 2080 Max-Q was on par with the desktop RTX 2060. If they make no improvements to that formula, the RTX 3080 Max-Q will be on par with the RTX 3060 Ti they have out, which is almost on par with a desktop RTX 2080, being a smidge better. The best currently is the Mobile RTX 2080S, which is on par with the desktop RTX 2070S, and the RTX 2070S is roughly around the performance of the RTX 2080, only a smidge lower. It would be awfully foolish of NVidia to release a new flagship card that is only 3% better for a much larger price. While there will be ignorant purchasers, I don't see them making up the numbers needed for NVIDIA to not take a financial hit by doing so.

It would be in their best interest if the RTX 3080 Max-Q was closer to the performance of the RTX 3070 at minimum or they will be in trouble.

-

cryoburner

> JRB623 said: As the article says "Not that FP32 performance is the end-all and be-all metric " we won't know until it comes out for sure on its performance. Point being that the 2080 Ti delivers 14.2 TFLOPS vs the 3070's 20.31 TFLOPS yet based on UserBenchmark data the 2080 Ti ekes out a 4% real world performance lead in games over the 3070 and a 14% lead in synthetic benchmarks. (not counting raytracing)

While I would generally agree, that's mostly just the case across different generations of architectures, where the amount of potential compute performance could mean wildly different things for actual gaming performance. Within a generation of hardware though, the TFlops should usually be a lot more meaningful, especially since these are using the same chips as certain desktop parts, just at significantly lower clocks. If that "up to 15.3 TFlops" number pans out, that would make it slower than a "16+ Tflop" 3060 Ti, and due to the limitations of laptop cooling, many notebooks utilizing it might not even manage that.

And that kind of performance makes sense. Based on the specs rumored here, the "3080 Max-Q" will be utilizing the same graphics chip and memory configuration as a 3070, but with a little over 4% more cores enabled. However, its nearly 30% lower boost clocks (and 50% lower base clocks), combined with almost 15% lower memory clocks means it won't be coming close to a 3070. At best, it will probably be close to 25% slower than a 3070 in games limited by graphics performance, and slightly slower than a 3060 Ti, assuming it can even reliably maintain those boost clocks for extended periods.