Video Quality Tested: GeForce Vs. Radeon In HQV 2.0

Test Class 1: Video Conversion, Cont’d.

Chapter 2: Film Resolution Tests

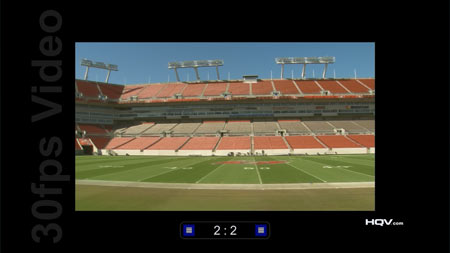

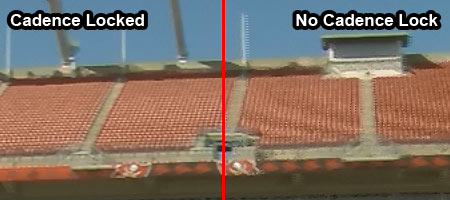

The tests in Chapters 2, 4, and 5 focus on cadence, and whether the video processor can perform appropriate pull-down detection. Cadences are used to stretch or compress video in different formats to fit the 60 interlaced fields/second NTSC standard. The concept may not be intuitive, but if a video processor cannot properly detect cadence, the resulting video may suffer from interpolation artifacts, such as moiré patterns. The benchmark includes indicator boxes to help the reviewer assess whether the cadence has been detected. Scoring is based on whether the video processor locks onto the correct cadence, and how quickly it does so. Five points are awarded for a lock within one half of a second, three points are awarded if the lock is achieved in under a second, and if the lock is not achieved or is intermittent throughout the clip, the score is zero.
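To make the idea of a cadence more concrete, here is a minimal Python sketch of how a pull-down pattern repeats source frames across interlaced fields. The function name `apply_cadence` and the frame labels are our own illustration, not part of the HQV benchmark or any graphics driver; a real video processor has to work backwards from a field sequence like this one to recover the original frames.

```python
from itertools import cycle


def apply_cadence(frames, cadence):
    """Repeat each source frame for the number of fields the cadence assigns it.

    frames:  list of frame labels, e.g. ["A", "B", "C", "D"]
    cadence: tuple of field counts, e.g. (3, 2) for 3:2 pull-down
    Returns a list of (frame, parity) pairs with alternating field parity.
    """
    fields = []
    for frame, count in zip(frames, cycle(cadence)):
        for _ in range(count):
            # Interlaced fields alternate between top and bottom lines.
            parity = "top" if len(fields) % 2 == 0 else "bottom"
            fields.append((frame, parity))
    return fields


film = ["A", "B", "C", "D"]
fields_32 = apply_cadence(film, (3, 2))
fields_22 = apply_cadence(film, (2, 2))

print("3:2 ->", "".join(frame for frame, _ in fields_32))
print("2:2 ->", "".join(frame for frame, _ in fields_22))
```

With the 3:2 pattern, four film frames fill ten fields, which is how 24 frames per second stretch to 60 fields per second. With 2:2, every frame simply occupies two fields.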

This is an objective test. Our observations reveal that all of the tested Radeon cards are able to detect 2:2 and 3:2 cadences. All of the Nvidia cards detect the 3:2 cadence, but the GeForce 210, GT 430, and GeForce GTX 460 do not detect the 2:2 cadence in our tests.

Cadence Test Results (out of 5)

| Test | Radeon HD 6850 | Radeon HD 5750 | Radeon HD 5670 | Radeon HD 5550 | Radeon HD 5450 |
|---|---|---|---|---|---|
| Stadium 2:2 | 5 | 5 | 5 | 5 | 5 |
| Stadium 3:2 | 5 | 5 | 5 | 5 | 5 |

| Test | GeForce GTX 470 | GeForce GTX 460 | GeForce 9800 GT | GeForce GT 240 | GeForce GT 430 | GeForce 210 |
|---|---|---|---|---|---|---|
| Stadium 2:2 | 5 | 0 | 5 | 5 | 0 | 0 |
| Stadium 3:2 | 5 | 5 | 5 | 5 | 5 | 5 |

Arguably, the 2:2 cadence is somewhat less important than 3:2. Most films are recorded at 24 FPS and this is converted with the 3:2 pulldown cadence, while the 2:2 cadence is only used in countries following the PAL and SECAM standards that shoot film destined for television at 25 FPS. As such, I question the wisdom of assigning these two cadences the same point value. The 3:2 cadence should be worth more points.

[EDIT: I'm going to clarify this a little. I'm not saying that PAL countries should be ignored, I'm saying 24 FPS film is probably more important in the big scheme of things. We're well aware that these graphics cards are to be used internationally.]

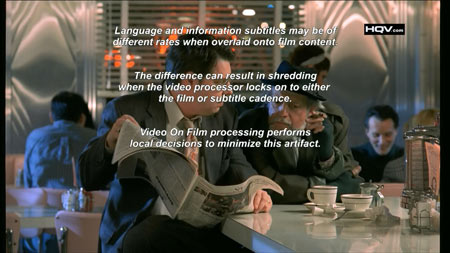

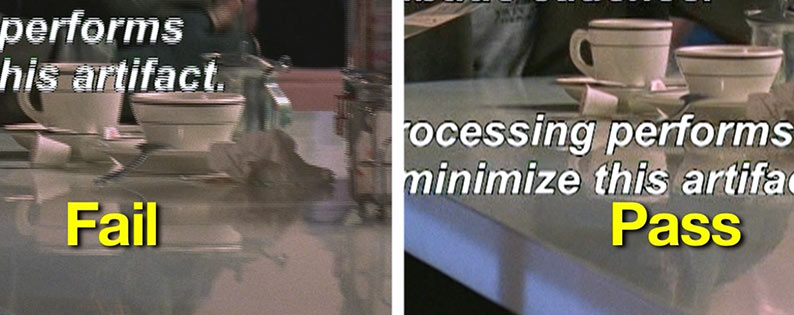

Chapter 3: Overlay on Film Tests

When text is overlaid on film, it’s possible that it may have a different cadence from the underlying video. If the video processor doesn’t detect the different cadences, the text or background may display shredding artifacts. If no shredding artifacts are seen, a perfect score of five is earned. If artifacts are seen at first but are quickly corrected, the score is lowered to three, and zero points are awarded if shredding is observed throughout the majority of the sequence. There are two tests in this chapter and each is scored separately: once for horizontal moving text and once for vertical moving text.

We found this one difficult to judge. Certainly, the text looks good across all graphics cards, but it doesn’t seem quite perfect, either, especially when it comes to the vertical moving text. Based on the good overall performance, we’re giving all graphics cards a full five points for both tests.

Overlay on Film Test Results (out of 5)

| Test | Radeon HD 6850 | Radeon HD 5750 | Radeon HD 5670 | Radeon HD 5550 | Radeon HD 5450 |
|---|---|---|---|---|---|
| Horizontal | 5 | 5 | 5 | 5 | 5 |
| Vertical | 5 | 5 | 5 | 5 | 5 |

| Test | GeForce GTX 470 | GeForce GTX 460 | GeForce 9800 GT | GeForce GT 240 | GeForce GT 430 | GeForce 210 |
|---|---|---|---|---|---|---|
| Horizontal | 5 | 5 | 5 | 5 | 5 | 5 |
| Vertical | 5 | 5 | 5 | 5 | 5 | 5 |

Chapter 4: Response Time Tests

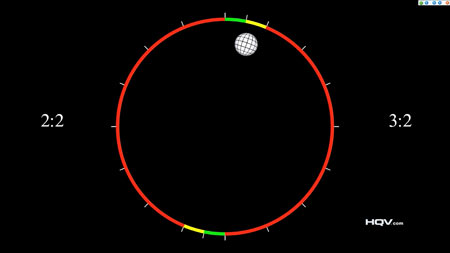

This test assesses the speed at which the two most common cadences are detected and locked in. A globe circling past a gauge helps the reviewer judge how quickly the cadence is locked. A full five points are awarded if the cadence is locked in under one half of a second, and this is lowered to two points if it takes between one half and one second to achieve lock. If it takes more than one second to lock cadence, no points are awarded, and, of course, no points are given if the graphics card does not detect the cadence at all.
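The scoring rule for this chapter can be written down as a small function. This is only a sketch of the rubric as described above: the name `response_time_score` is ours, a lock time of `None` stands in for "cadence never detected", and treating a lock at exactly one half of a second as falling in the two-point band is our assumption, since the rubric does not spell out the boundary.

```python
def response_time_score(lock_time_s):
    """Return the Chapter 4 score for a measured lock time in seconds.

    lock_time_s is None when the card never locks onto the cadence.
    """
    if lock_time_s is None:
        return 0  # cadence not detected at all
    if lock_time_s < 0.5:
        return 5  # locked in under half a second
    if lock_time_s <= 1.0:
        return 2  # locked between half a second and one second
    return 0      # took longer than one second


for measured in (0.3, 0.75, 1.5, None):
    print(measured, "->", response_time_score(measured))
```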

This is an objective test but it can be difficult to judge the time it takes to lock cadence because of the speed of the moving indicator ball. It appears to us that all of the graphics cards we’re testing can achieve a lock under one half of a second.

In the case of some GeForce cards, the 2:2 cadence is not detected and points are lost here. It’s interesting that response time is already a factor in the Chapter 2 cadence tests, and this test may be included as a way to assign more points to the notable 2:2 and 3:2 film cadences relative to the less-common ratios we look at next.

Response Time Test Results (out of 5)

| Test | Radeon HD 6850 | Radeon HD 5750 | Radeon HD 5670 | Radeon HD 5550 | Radeon HD 5450 |
|---|---|---|---|---|---|
| 2:2 Lock | 5 | 5 | 5 | 5 | 5 |
| 3:2 Lock | 5 | 5 | 5 | 5 | 5 |

| Test | GeForce GTX 470 | GeForce GTX 460 | GeForce 9800 GT | GeForce GT 240 | GeForce GT 430 | GeForce 210 |
|---|---|---|---|---|---|---|
| 2:2 Lock | 5 | 0 | 5 | 5 | 0 | 0 |
| 3:2 Lock | 5 | 5 | 5 | 5 | 5 | 5 |

Chapter 5: Multi-Cadence Tests

These tests are identical to the tests in Chapter 2, except that the cadences are different.

Multi-Cadence Test Results (out of 5)

| Test | Radeon HD 6850 | Radeon HD 5750 | Radeon HD 5670 | Radeon HD 5550 | Radeon HD 5450 |
|---|---|---|---|---|---|
| 2:2:2:4 24 FPS DVCAM | 5 | 5 | 5 | 5 | 5 |
| 2:3:3:2 24 FPS DVCAM | 5 | 5 | 5 | 5 | 5 |
| 3:2:3:2:2 24 FPS Vari-speed | 5 | 5 | 5 | 5 | 5 |
| 5:5 12 FPS Animation | 5 | 5 | 5 | 5 | 5 |
| 6:4 12 FPS Animation | 5 | 5 | 5 | 5 | 5 |
| 8:7 8 FPS Animation | 5 | 5 | 5 | 5 | 5 |

| Test | GeForce GTX 470 | GeForce GTX 460 | GeForce 9800 GT | GeForce GT 240 | GeForce GT 430 | GeForce 210 |
|---|---|---|---|---|---|---|
| 2:2:2:4 24 FPS DVCAM | 5 | 0 | 5 | 5 | 0 | 0 |
| 2:3:3:2 24 FPS DVCAM | 5 | 0 | 5 | 5 | 0 | 0 |
| 3:2:3:2:2 24 FPS Vari-speed | 5 | 0 | 5 | 5 | 0 | 0 |
| 5:5 12 FPS Animation | 5 | 0 | 5 | 5 | 0 | 0 |
| 6:4 12 FPS Animation | 5 | 0 | 5 | 5 | 0 | 0 |
| 8:7 8 FPS Animation | 5 | 0 | 5 | 5 | 0 | 0 |

Once again, the GeForce 210, GT 430, and GTX 460 are left behind, while the rest of the GeForce family and all the Radeon cards we test achieve full marks.
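The frame rates attached to each cadence in the table follow from simple arithmetic: every entry in a cadence is one source frame, and the entries add up to the number of fields in one cycle. The sketch below assumes a 60 fields/second output unless told otherwise; `source_frame_rate` is a hypothetical helper name, not something taken from the benchmark.

```python
from fractions import Fraction


def source_frame_rate(cadence, field_rate=60):
    """Source frames per second implied by a cadence at a given field rate.

    One cycle carries len(cadence) source frames spread over sum(cadence) fields.
    """
    return Fraction(field_rate * len(cadence), sum(cadence))


cadences = {
    "3:2": (3, 2),
    "2:2:2:4": (2, 2, 2, 4),
    "2:3:3:2": (2, 3, 3, 2),
    "3:2:3:2:2": (3, 2, 3, 2, 2),
    "5:5": (5, 5),
    "6:4": (6, 4),
    "8:7": (8, 7),
}

for name, pattern in cadences.items():
    print(f"{name}: {float(source_frame_rate(pattern))} FPS at 60 fields/s")

# 2:2 on a 50 fields/second (PAL-style) signal
print(f"2:2: {float(source_frame_rate((2, 2), field_rate=50))} FPS at 50 fields/s")
```

Most patterns land on the rate the table gives them: 24 FPS for the two DVCAM cadences, 12 FPS for 5:5 and 6:4, and 8 FPS for 8:7. The 3:2:3:2:2 pattern works out to 25 source frames per second, which would fit the "vari-speed" label if it refers to 24 FPS material played back slightly fast, though that reading is our assumption.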

It’s arguable that these cadences should be assigned far fewer points than the 3:2 and 2:2 film cadences. There are some relatively obscure ratios here that many viewers will never see. So, in our opinion, the weighting of these tests in the final score (30 points out of 210) may give an unrealistic picture of all-around video performance.
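As a rough check on that weighting, the share of the total score can be worked out from the test counts in the tables on this page (two tests each in Chapters 2 and 4, six in Chapter 5, five points apiece) and the 210-point total. The variable and function names below are ours.

```python
POINTS_PER_TEST = 5
TOTAL_POINTS = 210  # benchmark total quoted in the text

# Number of individually scored cadence tests per chapter, from the tables
tests_per_chapter = {
    "Chapter 2 (2:2 and 3:2 cadence)": 2,
    "Chapter 4 (2:2 and 3:2 response time)": 2,
    "Chapter 5 (multi-cadence)": 6,
}


def share_of_total(test_count):
    """Fraction of the overall score carried by a group of tests."""
    return test_count * POINTS_PER_TEST / TOTAL_POINTS


for chapter, count in tests_per_chapter.items():
    points = count * POINTS_PER_TEST
    print(f"{chapter}: {points} points, {share_of_total(count):.1%} of total")
```

On these numbers, the six multi-cadence tests carry 30 points, while the 2:2 and 3:2 cadences account for 20 points across Chapters 2 and 4 combined.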

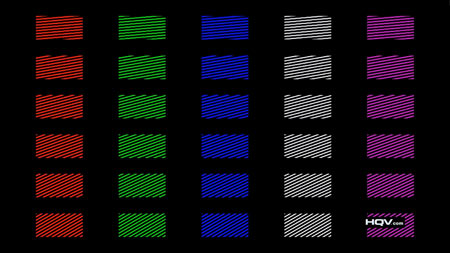

Chapter 6: Color Upsampling Error Tests

The tests in this chapter expose how the video processor handles video with downsampled color information. If it cannot upsample the color properly, color artifacts may result. The ICP test focuses on interlaced video while the CUE test examines progressive video. The full score of five is assigned if no color artifacts can be observed. This is reduced to two points if faint color halos or mild stair stepping are seen. A score of zero is the result of highly observable color artifacts.
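For readers unfamiliar with the idea, the sketch below shows in very simplified form why the upsampling method matters. It assumes color is stored at half the vertical resolution of the picture and models each color row as a single number; neither function reflects how any of the tested cards actually works, and both names are hypothetical.

```python
def upsample_replicate(chroma_rows):
    """Double the vertical color resolution by repeating each row."""
    out = []
    for value in chroma_rows:
        out.extend([value, value])
    return out


def upsample_linear(chroma_rows):
    """Double the vertical color resolution, blending adjacent rows in between."""
    out = []
    last = len(chroma_rows) - 1
    for i, value in enumerate(chroma_rows):
        nxt = chroma_rows[min(i + 1, last)]  # clamp at the bottom edge
        out.extend([value, (value + nxt) / 2])
    return out


# A hard color edge: two rows of one color, then two rows of another
chroma = [0, 0, 100, 100]

print("replicated:  ", upsample_replicate(chroma))
print("interpolated:", upsample_linear(chroma))
```

Row replication keeps the edge as an abrupt step, the kind of blockiness that can show up as stair stepping on diagonal color edges, while interpolation inserts an intermediate value at the transition. Real processors also have to pick the right method for interlaced versus progressive material, which this toy example does not attempt to model.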

We find that this test can be difficult to score when extremely subtle artifacts are seen. We’ll err on the side of generosity and give five points to the graphics cards that appear to produce very good results.

While we hand out the full five marks to all of the test cards for CUE progressive video output tests, all of the GeForce cards appear to produce some artifacts in the ICP interlaced video test, except for the GeForce GTX 470, a card that earns full marks.

Color Upsampling Error Test Results (out of 5)

| Test | Radeon HD 6850 | Radeon HD 5750 | Radeon HD 5670 | Radeon HD 5550 | Radeon HD 5450 |
|---|---|---|---|---|---|
| Interlaced Chroma Problem (ICP) | 5 | 5 | 5 | 5 | 5 |
| Chroma Upsampling Error (CUE) | 5 | 5 | 5 | 5 | 5 |

| Test | GeForce GTX 470 | GeForce GTX 460 | GeForce 9800 GT | GeForce GT 240 | GeForce GT 430 | GeForce 210 |
|---|---|---|---|---|---|---|
| Interlaced Chroma Problem (ICP) | 5 | 2 | 2 | 2 | 2 | 2 |
| Chroma Upsampling Error (CUE) | 5 | 5 | 5 | 5 | 5 | 5 |

Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

jimmysmitty: I second the test using SB HD graphics. It might be just an IGP but I would like to see the quality in case I want to make a HTPC and since SB has amazing encoding/decoding results compared to anything else out there (even $500+ GPUs) it would be nice to see if it can give decent picture quality.

But as for the results, I am not that surprised. Even when their GPUs might not perform the same as nVidia, ATI has always had great image quality enhancements, even before CCC. That's an area of focus that nVidia might not see as important when it is. I want my Blu-Ray and DVDs to look great, not just ok.

compton: Great article. I had wondered what the testing criteria was about, and Lo! Tom's to the rescue. I have 4 primary devices that I use to watch Netflix's streaming service. Each is radically different in terms of hardware. They all look pretty good. But they all work differently. Using my 47" LG LED TV I did an informal comparison of each.

My desktop, which uses a 460 really suffers from the lack of noise reduction options.

My Samsung BD player looks less spectacular than the others.

My Xbox looks a little better than the BD player.

My PS3 actually looks the best to me, no matter what display I use.

I'm not sure why, but it's the only one I could pick out just based on its image quality. Netflix streaming is basically all I use my PS3 for. Compared to it, my desktop looks good and has several options to tweak but doesn't come close. I don't know how the PS3 stacks up, but I'm thinking about giving the test suite a spin.

Thanks for the awesome article.

cleeve, quoting 9508697:

> Could you give the same evaluation to Sandy Bridge's Intel HD Graphics?

That's definitely on our to-do list!

Trying to organize that one now.

lucuis: Too bad this stuff usually makes things look worse. I tried out the full array of settings on my GTX 470 in multiple BD Rips of varying quality, most very good.

Noise reduction did next to nothing. And in many cases causes blockiness.

Dynamic Contrast in many cases does make things look better, but in some it revealed tons of noise in the greyscale which the noise reduction doesn't remove...not even a little.

Color correction seemed to make anything blueish bluer, even purples.

Edge correction seems to sharpen some details, but introduces noise after about 20%.

All in all, bunch of worthless settings.

killerclick, quoting jimmysmitty:

> Even when their GPUs might not perform the same as nVidia, ATI has always had great image quality enhancements

ATI/AMD is demolishing nVidia in all price segments on performance and power efficiency... and image quality.

alidan, quoting killerclick:

> ATI/AMD is demolishing nVidia in all price segments on performance and power efficiency... and image quality.

i thought they were losing, not by enough to call it a loss, but not as good as the latest nvidia refreshes. but i got a 5770 due to its power consumption, i didn't have to swap out my psu to put it in and that was the deciding factor for me.

haplo602: this made me lol ...

1. cadence tests ... why do you marginalise the 2:2 cadence ? these cards are not US exclusive. The rest of the world has the same requirements for picture quality.

2. skin tone correction: I see this as an error on the part of the card to even include this. why are you correcting something that the video creator wanted to be as it is ? I mean the movie is checked by video professionals for anything they don't want there. not completely correct skin tones are part of the product by design. this test should not even exist.

3. dynamic contrast: cannot help it, but the example scene with the cats had blown highlights on my laptop LCD in the "correct" part. how can you judge that if the constraint is the display device and not the GPU itself ? after all you can output on a 6-bit LCD or on a 10-bit LCD. the card does not have to know that ...

mitch074: "obscure" cadence detection? Oh, of course... Nevermind that a few countries do use PAL and its 50Hz cadence on movies, and that it's frustrating to those few people who watch movies outside of the Pacific zone... As in, Europe, Africa, and parts of Asia up to and including mainland China.

It's only worth more than half the world population, after all.

cleeve, quoting mitch074:

> "obscure" cadence detection? Oh, of course... Nevermind that a few countries do use PAL and its 50Hz cadence on movies...

You misunderstand the text, I think.

To clear it up: I wasn't talking about 2:2 when I said that, I was talking about the Multi-Cadence Tests: 8 FPS animation, etc.