Nvidia's Tegra 3 Optimizations: THD Android Games, Tested

Nvidia continues to encourage game developers to add Tegra-only details to their Android titles. Is Tegra 3 the killer SoC for Android gaming? How do Tegra-optimized games look compared to the same titles on iOS? Is it all just a bunch of marketing hype?


Nvidia's Tegra 3: King Of Android Gaming?

At least for the next couple of days, Tegra 3 is Nvidia’s flagship system-on-a-chip (SoC) for smartphones and tablets. Although the chip is no longer the fastest around, an aggressive developer relations team works with game developers to optimize their titles for Nvidia's hardware. As a result, Tegra 3's ULP GeForce graphics component and quad-core Cortex-A9-based processor continue to serve up some of the nicest-looking graphics available from mobile games. But does Tegra 3's advantage still hold up more than a year after its introduction, or has the rest of the industry caught up?

Armed with an Android-based HTC One X+ smartphone as our test platform, along with 12 purportedly Tegra-optimized titles, we logged some quality game time to find out.

Let’s be clear about what we’re actually measuring. Today's efforts aren't about benchmarking Tegra 3’s frame rates or drawing blanket generalizations about gaming on Android. Instead, we’re doing a visual comparison to see whether or not the optimizations made for Tegra 3 are significant, and if they have any negative impact on playability.

Before we get to the testing, let’s talk about how Tegra’s ULP GeForce is different from other mobile GPUs.

Tegra Versus PowerVR, Mali, And Adreno

Tegra 3 is quite different from other SoCs in terms of how the GPU operates. Nvidia's ULP GeForce is a more complex version of what came before in Tegra 2, employing 12 shader cores rather than eight (eight of them pixel shaders, up from four), and operating at up to 520 MHz, up from a maximum of 400 MHz.

Current mobile GPUs employ two basic types of rendering operations: Immediate Mode Rendering (IMR) and Tile-Based Rendering (TBR).


Nvidia’s Tegra operates using IMR, which has been the standard in PC GPUs for over a decade (the last desktop graphics processor to use tile-based rendering was STMicroelectronics' PowerVR Kyro II, back in '01). With immediate-mode rendering, polygons are received, transformed, textured, and displayed in the order they arrive, so areas of the screen where objects overlap are calculated several times. In the mobile world, this would generally be considered wasteful. But Nvidia offers graphics hardware fast enough to handle it.

I

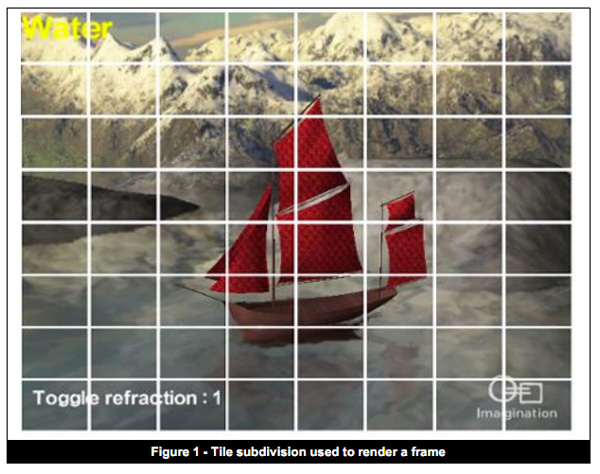

magination Technologies' PowerVR chipsets are the quintessential example of Tile-Based Deferred Rendering. TBR involves dividing each frame into small sections referred to as tiles (right). The rendering of each tile is performed separately, and only the visible pixels in each one are sent down the rendering pipeline. Any pixel not visible in the frame is not rendered (hence, deferred), such as those making up the part of the car obscured behind the tree (below).

Breaking up each frame into separately-rendered tiles gives PowerVR fairly linear scalability, since multiplying GPU cores multiplies GPU performance. Eliminating non-visible areas from the calculations also reduces the necessary bandwidth, which is a very important advantage in the mobile world. While Imagination Technologies' PowerVR technology is found in SoCs from Intel (Medfield), Samsung (Hummingbird), and Texas Instruments (OMAP), it's most notably the graphics force behind Apple’s venerable line of iOS-based devices (Ax).
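To make the difference between the two rendering approaches concrete, here is a minimal Python sketch that counts how many pixels get shaded under each one. It is a toy model, not how any real GPU is implemented: the 8x8 frame, the 4x4 tile size, the rectangle "car" and "tree," and the function names are all assumptions made up for illustration. The immediate-mode case also assumes the worst-case draw order (far object first), which is what causes overlapping areas to be calculated more than once.

```python
# Toy model (illustrative assumptions only): objects are axis-aligned
# rectangles given as (x0, y0, x1, y1, depth); smaller depth = closer.

def immediate_mode_shaded(rects):
    """Shade in submission order; a pixel is re-shaded whenever a closer object arrives."""
    nearest = {}
    shaded = 0
    for x0, y0, x1, y1, z in rects:
        for y in range(y0, y1):
            for x in range(x0, x1):
                if z < nearest.get((x, y), float("inf")):
                    nearest[(x, y)] = z
                    shaded += 1  # work is spent even if this pixel is later covered
    return shaded

def tile_deferred_shaded(rects, width, height, tile=4):
    """Per tile: work out the closest object for every pixel first, then shade once."""
    shaded = 0
    for ty in range(0, height, tile):
        for tx in range(0, width, tile):
            nearest = {}
            for x0, y0, x1, y1, z in rects:
                # Only the part of the object that falls inside this tile matters.
                for y in range(max(y0, ty), min(y1, ty + tile)):
                    for x in range(max(x0, tx), min(x1, tx + tile)):
                        if z < nearest.get((x, y), float("inf")):
                            nearest[(x, y)] = z
            shaded += len(nearest)  # one shading pass per visible pixel
    return shaded

# Hypothetical scene: a far "car" drawn first, then a near "tree" that hides part of it.
scene = [
    (0, 2, 6, 6, 5),  # car: 6x4 pixels, depth 5
    (2, 0, 4, 8, 1),  # tree: 2x8 pixels, depth 1
]

print(immediate_mode_shaded(scene))       # 40: the 8 hidden car pixels were shaded anyway
print(tile_deferred_shaded(scene, 8, 8))  # 32: only visible pixels were shaded
```

In this toy scene, the eight car pixels hidden behind the tree account for the entire gap between the two totals. Submitting objects front to back would close that gap for the immediate-mode path, which is one reason draw order matters on that kind of hardware.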

ARM and Qualcomm GPUs utilize a combination of the two basic rendering modes. ARM’s Mali uses a hybrid known as Tile-Based Immediate Mode Rendering (TBIMR). Meanwhile, Qualcomm employs a different kind of hybrid mode in the Adreno 320, which can render either in TBR or IMR. The most notable use of Mali is in Samsung’s Exynos, while Adreno is exclusive to Qualcomm’s own Snapdragon SoCs.

Although each rendering type has its pros and cons, suffice it to say, Nvidia’s choice is coherent. Ironically, Nvidia takes advantage of one of PowerVR’s limitations. Since many games are designed for iOS-based devices with PowerVR GPUs, their geometry is limited. So, by simply doubling the number of pixel shader units in Tegra 3, Nvidia allows developers to add extra effects to existing games. The company is known to leverage its close relationships with video game developers on the PC side, and now it's doing the same with the creators of mobile titles.

To be clear, Nvidia doesn’t currently have the fastest mobile GPU on the block. ARM, Imagination Technologies, and Qualcomm all have faster engines. One reason for that is simply that Tegra 3 is more than a year old, and we're on the cusp of seeing its successor at CES. Secondly, Nvidia isn’t in the same position as Samsung or, to a lesser degree, Apple. Creating the A5X SoC in the third-gen iPad, for example, required that Apple double the number of GPU cores in its A5, moving from an MP2 GPU to an MP4. Plus, Nvidia has to worry about selling its chips to device manufacturers, whereas Apple and Samsung build their own devices.

The company is still enjoying plenty of success, however, showing up in a range of devices and form factors, such as HTC's One X+, Microsoft's Surface, Lenovo's IdeaPad Yoga 11, and even Tesla's Model S electric sedan. So, what do optimized games look like, and how does Tegra 3 handle them?


aicom Interesting comparison. Maybe you could test if there are any differences in effects between models of the Tegra 3?

darkavenger123 Err...why bother compare with iOS?? No big deal. They should start comparing with PS VITA for some real games....hardware is useless without software....so sick of casual gaming...Angry birds runs just fine with Androids 2 years ago, doesn't need a hardware upgrade. They need to get developers to make some real games for it or it's just pointless expensive silicon.

I own both the Nexus 4 and a Tegra 3 equipped Asus Transformer Prime. The Nexus 4 is quite a bit faster in a lot of games, especially GTA3 and Vice City. But also Dead Trigger which is supposedly optimized for Tegra runs quite badly on the Transformer Prime, the framerate is much better on the Nexus 4.

gomerpile WTF is going on, jeez if it keeps up with the rat race of title games that have no graphics, pc gamers are all doomed. Why waste time and money on the next version of Battlefield 4 when we can develop monkey ball Android shooters.

abbadon_34 [quoting the article: "Nvidia continues to encourage game developers to add Tegra-only details to their Android titles."]

Exactly what is wrong with tech today, for 30 years companies learned to embrace compatibility after the Sony Betamax failure (technically better, but priced beyond consumer appreciation). Now everyone wants to follow Apple forgetting they too suffered from a "closed shop" for 20 years.

blubbey Are these phones now more powerful than gen 6 consoles? Dreamcast/PS2/Gamecube/Xbox. I would assume so.

darkchazz I've got a Nexus 7 OCed (1.5ghz CPU, 520mhz GPU) and a stock Galaxy Note II, and I have to say that every single game you can think of in the play store runs miles better on the Note II, even the so called optimized-for-tegra titles.

What's bothersome for me though is that some tegra optimized titles actually run on a low resolution by default on the N7 (to compensate for low memory bandwidth???) yet still play on a 30-40ish frame rate, luckily the devs have put resolution option in the setting (see Riptide, beach buggy blitz), but you'll be playing at 20-30fps if you bump it up to the max/native res.

Another title, Horn, which is actually a Tegra exclusive using UE3, actually runs on a low res but does not provide any option to change that, and even with the low resolution, it is extremely choppy at times I can easily notice 15-20fps.

One dev that I like is MADFINGER Games (dead trigger, shadowgun), their titles are so heavily optimized that they run at a consistent 35-45fps on my N7 and I do not notice lag nor inconsistent frame rate. still runs at constant 60fps on my Note II though...

Meanwhile I can't think of anything that doesn't run at 60fps on my Note II, so much that I wonder how much better the gpu performance is on Snapdragon S4 pro and apple A6 devices.

natoco [quoting blubbey: "Are these phones now more powerful than gen 6 consoles? Dreamcast/PS2/Gamecube/Xbox. I would assume so."] Yes, but tiny screens u put u fingers on ruin the experience of gaming, i get my old megadrive out and play or Nintendo 64 for some funny simple old school games. Phones look the 'tiny' part like it but its just not the same. I bought a whole heap of games on my iphone only to put them down and never play again since the format in which u play is just not 'fun' and thats the whole point of games is it not. Oh well, consoles or pc's FTW

gilgamex101 Did anyone else notice that the Heroes Call screen shots are the EXACT same as the Soulcraft screenshots??

[quoting blubbey: "Are these phones now more powerful than gen 6 consoles? Dreamcast/PS2/Gamecube/Xbox. I would assume so."]

definitely, GTAIII and Vice City were both PS2 games and the new mobile versions have upgraded textures