Adata Premier Pro SP920 SSD: From 128 To 1024 GB, Reviewed

Adata shifts away from SandForce in its Premier Pro SP920 SSD family. With promises of incredible performance and spiffy features like DevSlp, Adata's latest employs the Marvell controller we saw in Crucial's M550. But the two share quite a bit more...

Results: TRIM Testing With ULINK's DriveMaster 2012

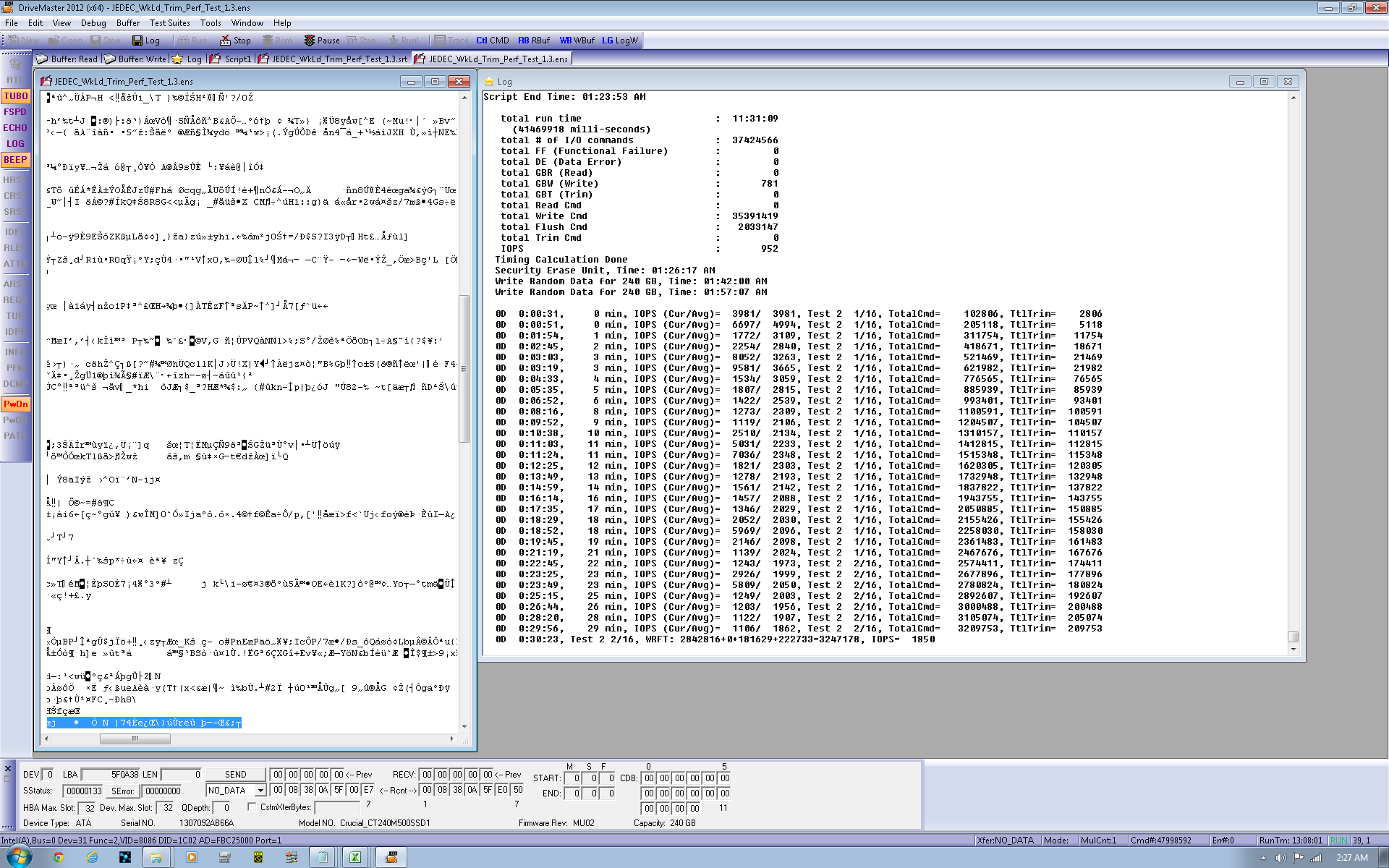

We've been using ULINK's DriveMaster 2012 software and hardware suite to introduce a new test for client drives. Using JEDEC's standardized 218A Master Trace, DriveMaster can turn a sequence of I/O (similar to our Tom's Hardware Storage Bench) into a TRIM test. JEDEC's trace captures months of drive activity, covering day-to-day use and background operating system tasks.

ULINK strips out the read commands for this benchmark, leaving us with the write, flush, and TRIM commands to work with. Execute the same workload with TRIM support and without, and you end up with a killer metric for further characterizing drive behavior.

DriveMaster is used by most SSD manufacturers to create and perform specific measurements. It's currently the only commercial product that can create the scenarios needed to validate TCG Opal 2.0 security, though it's almost unlimited in potential applications. Much of the benefit tied to a solution like DriveMaster is its ability to diagnose bugs, ensure compatibility, and issue low-level commands. In short, it's very handy for the companies actually building SSDs. And if off-the-shelf scripts don't do it for you, make your own. There's a steep learning curve, but the C-like environment and command documentation give you a fighting chance.

This product also gives us some new ways to explore performance. Testing the TRIM command is just the first example of how we'll be using ULINK's contribution to the Tom's Hardware benchmark suite.

On a 256 GB drive, each iteration writes close to 800 GB of data, so running the full JEDEC TRIM test suite once (all four iterations) generates almost 3.2 TB of mostly random writes (the mix is 75% random and 25% sequential). By the end of each run, over 37 million write commands have been issued.
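The figures above can be sanity-checked with a little arithmetic. This is only a back-of-the-envelope sketch: the variable names are ours, the inputs are the rounded numbers quoted in the text, and the four-run count assumes the two DMA and two NCQ passes that make up the suite.

```python
# Figures quoted in the text; the variable names are illustrative only.
GB_PER_RUN = 800                      # "close to 800 GB" written per iteration
RUNS = 4                              # assumed: two DMA runs plus two NCQ runs
WRITE_COMMANDS_PER_RUN = 37_000_000   # "over 37 million", so treat as a floor
DRIVE_CAPACITY_GB = 256

# Total data written across the whole suite, in decimal terabytes.
total_tb = GB_PER_RUN * RUNS / 1000

# How many times one run overwrites the drive's full capacity.
drive_fills_per_run = GB_PER_RUN / DRIVE_CAPACITY_GB

# Rough average size of a single write command, in kilobytes.
avg_write_kb = GB_PER_RUN * 1e9 / WRITE_COMMANDS_PER_RUN / 1e3

print(f"Total written: {total_tb:.1f} TB")
print(f"Capacity overwrites per run: {drive_fills_per_run:.1f}x")
print(f"Approximate average write size: {avg_write_kb:.1f} KB")
```

With these rounded inputs, the suite lands at about 3.2 TB in total, each run rewrites the drive's capacity a little more than three times, and the average write works out to roughly 22 KB, which is consistent with a workload dominated by small, random writes.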

The first two tests employ DMA to access the storage, while the last two use Native Command Queuing. Since most folks don't use DMA with SSDs (aside from some legacy or industrial applications) we don't concern ourselves with those. It can take up to 96 hours to run one drive through all four runs, though faster drives can roughly cut the time in half. Because so much information is being written to an already-full SSD (the drive is filled before each test, and then close to 800 GB are written per iteration), SSDs that perform better under heavy load fare best. Without TRIM, on-the-fly garbage collection becomes a big contributor to high IOPS. With TRIM, 13% of space gets TRIM'ed, leaving more room for the controller to use for maintenance operations.

TRIM Testing

Rolling Average

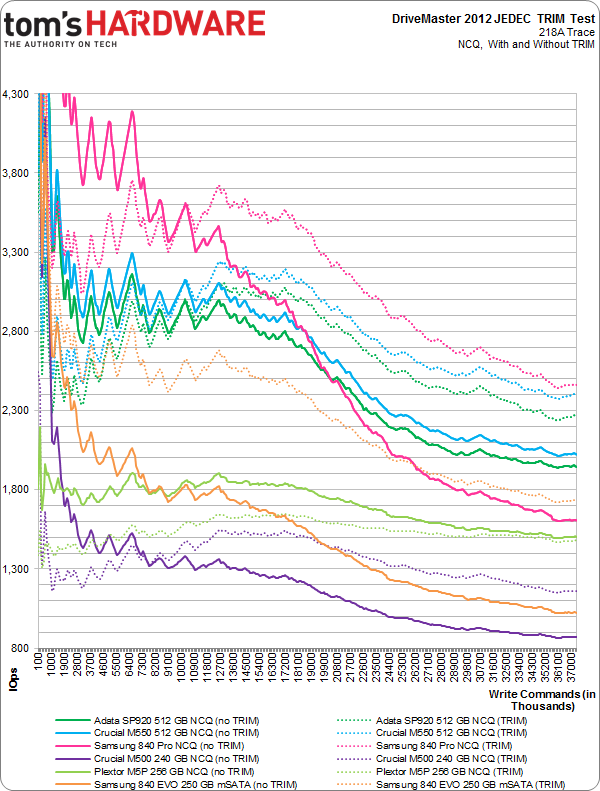

Here's the chart derived from our DriveMaster JEDEC TRIM test data. We have the new Adata SSDs, Crucial's M550s, Samsung's venerable 840 Pro at 256 GB, Crucial's more mainstream M500 (240 GB), Plextor's M5P, and the 250 GB 840 EVO. Each device's NCQ-based test is plotted. The solid line represents average IOPS every 100,000 commands, but without TRIM. The hashed line represents performance every 100,000 commands with TRIM. In each case, the workload is mixed in with tons of small, random writes.

Since performance is measured over each 100,000-command segment, time is factored out of the above chart. This rolling average also hides the trace's peaky nature.
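To make the per-segment measurement concrete, here is a minimal sketch of how average IOPS could be computed for each fixed-size block of commands. It is not DriveMaster's implementation: the function name, the `completion_times` input (command completion times in seconds), and the `start_time` parameter are all hypothetical, chosen only to illustrate the idea.

```python
def iops_per_window(completion_times, window=100_000, start_time=0.0):
    """Average IOPS for each full block of `window` commands.

    `completion_times` is an assumed input: a list of command completion
    times in seconds, sorted in ascending order. A trailing partial block
    is ignored.
    """
    results = []
    previous_end = start_time
    for end in range(window, len(completion_times) + 1, window):
        block_end = completion_times[end - 1]
        elapsed = block_end - previous_end
        if elapsed <= 0:
            raise ValueError("completion times must be strictly increasing")
        # Commands in the block divided by the time the block took.
        results.append(window / elapsed)
        previous_end = block_end
    return results


# Tiny illustration with a 4-command window instead of 100,000:
# the first block takes 4 s, the second takes 8 s.
times = [1.0, 2.0, 3.0, 4.0, 6.0, 8.0, 10.0, 12.0]
print(iops_per_window(times, window=4))
```

Because every point covers the same number of commands rather than the same amount of time, a slow stretch of the trace occupies no more of the horizontal axis than a fast one, which is why time drops out of the chart.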

There's a lot going on in the chart above, but pay particular attention to the 512 GB Crucial M550 in green and the SP920 in teal. The M550 again outpaces Adata's drive by a small but quantifiable margin, both with TRIM enabled and without it. We keep getting the sense that these drives are not as identical as the hardware suggests.

Instantaneous

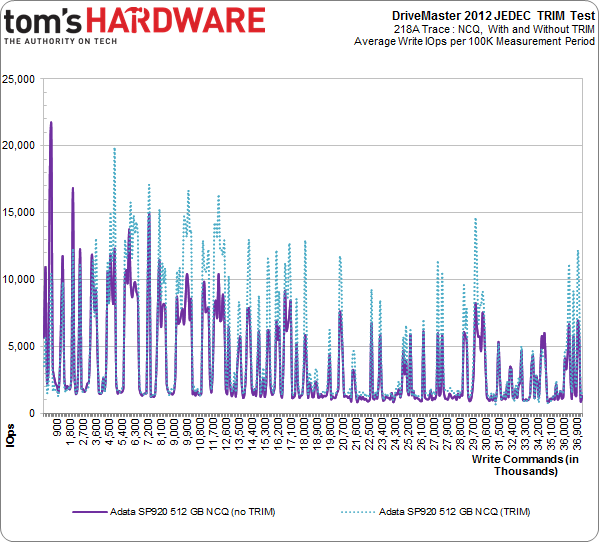

But I also want to know the instantaneous average of our TRIM testing. How does the drive fare servicing writes with and without TRIM during each 100,000-command window? The purple line represents IOPS across the entire trace, without TRIM. The teal line is with TRIM. Each data point represents write IOPS per 100,000-command test reporting period.

As we get more experience with this test, it's easier to identify the drives intended for enterprise-oriented environments and the ones destined to do desktop duty. The SP920 is readily identifiable as the latter. During periods of high I/O demand, the teal line shows the extra space created by TRIM allowing much higher performance. That is to say, when the system needs more write I/O, the SP920 delivers through its support for TRIM.

Throughput

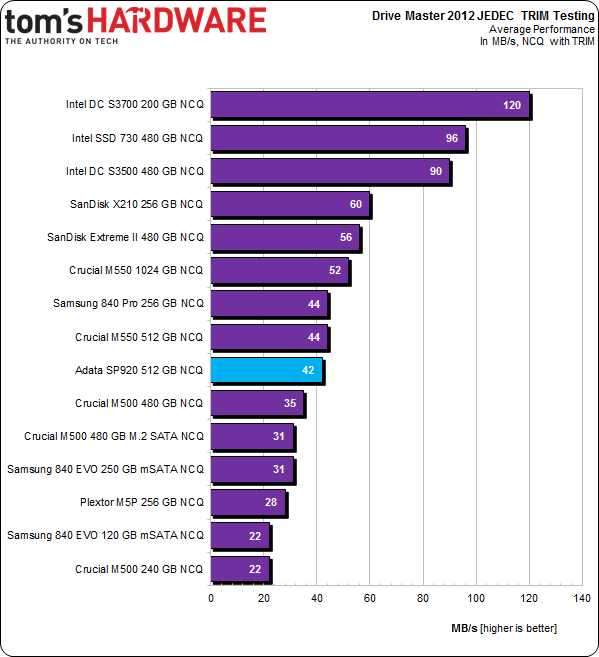

We collect and report the total throughput of each drive in the NCQ with TRIM test. It's one number that helps capture overall performance in the test.

Going back to our rolling average chart, where the 512 GB M550 beat the SP920 by a slim margin, these are the results in MB/s. Now we can see that the difference is just 2 MB/s across the test. Samsung's 840 Pro 256 GB, the Crucial M550, and the theoretically identical Adata SP920 are all very similar.

cryan, replying to "I prefer Sandisk, if you don't mind.":

The X210 is pretty awesome, but newer Marvell implementations are built with Haswell-style power features in mind. If you're looking for a drive to use in mobile applications, mind the heat and power consumption stats.

Regards,

Christopher Ryan

rajangel: Awhile back I purchased a few different SSD's to test out (OCZ, Crucial, Patriot, Adata). The Adata is the only one still running and was always the quickest. I don't know how this one is built, but the last Adata was built tough. The OCZ was so flimsy it felt like paper. The Crucial and the Patriot were slightly better in build quality. Now that I'm in the market for a new drive I may consider this.

cryan, replying to rajangel:

I have to say, the plastic or metal chassis a drive comes in doesn't mean much. In the lab, I like a nice heavy metal SSD casing, but in a laptop? You probably want a flimsy plastic chassis. It's not conductive and doesn't add much weight.

Regards,

Christopher Ryan

rajangel: It's a matter of opinion. I like things that are built well, and have a quality appearance. I think build quality does affect performance (read reliability). Especially when connectors/etc are cheap in construction. However, just my opinion.

cryan, replying to rajangel:

I agree that a substantial chassis tends to reinforce the perception of a drive's build quality, but much of the time it's aesthetic. The component choice on the PCB speaks more to quality. I've seen some downright terrible drives in the fanciest of cases.

Regards,

Christopher Ryan

rajangel: I think there should be a restriction that prevents the article author from replying, unless there is a substantial mistake that was noted. I feel like tomshardware authors troll their own threads. This has become a problem lately. I'm at the point where I feel my business and time would be better spent on a real tech website. Tomshardware is like the Yahoo of tech sites lately.

iltamies: Typo on last page: "Adata gets a solid product able to soften the wait, and Micron (Crucial's parent company) gets to more more volume." should read "move more volume."

Wisecracker: Impressive ... power consumption is a bit high though, compared to the Samsung 120GB Evo (my current $80 fav)

Are 'microseconds' considered 'milliseconds' ??