AMD Radeon R9 280X, R9 270X, And R7 260X: Old GPUs, New Names

AMD is introducing a handful of new model names today, based on existing GPUs. Do the company's price adjustments make this introduction newsworthy, or will the excitement need to wait for its upcoming Radeon R9 290 and 290X, based on fresh silicon?

TrueAudio: Dedicated Resources For Sound Processing

If you followed along with AMD’s tech day webcast, then you sat through a lot of TrueAudio discussion. In fact, given the amount of time dedicated to TrueAudio, the feature seemed like it’d be the day’s emphasis.

At the event, we were hearing the partner demos across eight channels, and the positional audio was certainly discernible, albeit overwhelmingly busy (on purpose, no doubt). But we all know that 7.1- and even 5.1-channel sound setups outside of a home theater are very uncommon. Two- and 2.1-channel configurations, including headsets, are far more common. Unfortunately, it didn’t sound like anyone tuned in over Livestream was hearing the same output over stereo.

If you were around in the late ’90s to hear Aureal’s and Sensaura’s technologies, before both were acquired by Creative, you know that the head-related transfer functions used to create effective positional audio over two channels are not new. The point of TrueAudio is to facilitate more complex sound effects (those HRTFs aren’t computationally free) without burdening the host processor. Today, AMD says that audio gets as much as 10% of a game’s CPU utilization budget, limiting what developers can do. But with TrueAudio, AMD wants to guarantee the availability of real-time processing resources specifically for sound, regardless of the host CPU you have installed.
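To give a rough sense of why those HRTFs aren’t computationally free, here is a minimal sketch of positional audio over two channels: one mono source filtered through a left-ear and a right-ear impulse response. The impulse responses are made-up toy values rather than measured HRTF data, and `convolve`, `render_binaural`, and `macs_per_second` are hypothetical helper names for illustration, not anything from AMD’s or Tensilica’s tooling.

```python
def convolve(signal, impulse_response):
    """Direct-form FIR convolution; returns len(signal) + len(ir) - 1 samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def render_binaural(mono, hrir_left, hrir_right):
    """Filter one mono source through a per-ear impulse response pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


def macs_per_second(sample_rate, taps, sources, ears=2):
    """Multiply-accumulates per second for direct-form filtering."""
    return sample_rate * taps * sources * ears


# Toy impulse responses (assumed values): the source sits to the listener's
# left, so the right ear hears it one sample later and quieter.
HRIR_LEFT = [1.0, 0.5, 0.0]
HRIR_RIGHT = [0.0, 0.5, 0.25]

left, right = render_binaural([1.0, 0.0, -1.0], HRIR_LEFT, HRIR_RIGHT)
```

With assumed figures of 48 kHz audio, 512-tap filters, and 32 simultaneous sources, `macs_per_second(48000, 512, 32)` works out to roughly 1.57 billion multiply-accumulates per second. Real engines can use cheaper FFT-based convolution, so treat this as an illustration of scale rather than a measurement.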

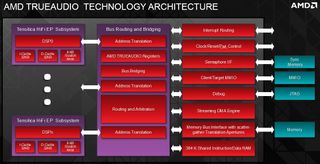

This is achieved through the Tensilica HiFi2 EP Audio DSP cores mentioned on the previous page. In the R7 260X, there are three cores integrated on the Bonaire GPU. The higher-end R9 290 and 290X will also feature three DSP cores dedicated to TrueAudio. Those DSPs employ Tensilica’s Xtensa ISA with fixed- and floating-point number support, which AMD says is equally useful for high-end gaming and embedded applications. Because the DSP is programmable by nature, you can really feed anything you want into it, so long as there’s a decoder available. To that end, professional audio software vendors are purportedly showing an interest, eager to see what dedicated hardware can do that host-based processing couldn’t.

The real-time nature of audio in a gaming environment means that fast access to compute cycles and memory is imperative, even if the cores themselves aren't particularly powerful. Each one includes 32 KB of instruction and data cache, along with 8 KB of scratch RAM. A fast routing interface connects the DSPs to 384 KB of shared internal memory organized in 8 KB banks. The local resources are fed by a multi-channel DMA engine able to keep the cores busy. And up to 64 MB of frame buffer memory is addressable through a low-latency bus interface shared with the display pipeline.
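The shared-memory figures above imply a bank count that is easy to check. The short sketch below does that arithmetic; the `bank_of` helper is hypothetical and assumes banks are laid out contiguously (offsets 0 to 8 KB in bank 0, the next 8 KB in bank 1, and so on), which AMD has not confirmed.

```python
KB = 1024

SHARED_MEMORY = 384 * KB  # shared internal memory, per AMD's description
BANK_SIZE = 8 * KB        # size of each bank, per AMD's description

BANK_COUNT = SHARED_MEMORY // BANK_SIZE


def bank_of(offset):
    """Map a byte offset to a bank index.

    Assumption: banks are contiguous, not interleaved. The real
    address-to-bank mapping is not described in the source.
    """
    if not 0 <= offset < SHARED_MEMORY:
        raise ValueError("offset outside shared memory")
    return offset // BANK_SIZE
```

Under that assumption, 384 KB split into 8 KB banks gives 48 banks, and the last byte of shared memory lands in bank 47.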

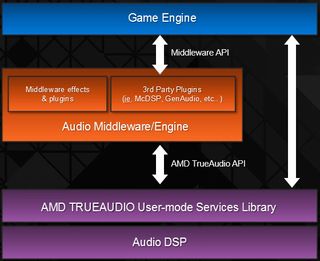

One of the first questions that came to mind upon hearing about TrueAudio was, “Will game developers, already strapped for time and money as they get their titles to market, put resources into sound when there’s so much going on in graphics, physics, and AI?” AMD seems to think that the impact on ISVs will be minimal, though. Because a majority of developers are utilizing middleware for their audio, TrueAudio needs support from those companies first and foremost. Once you get support in Audiokinetic’s Wwise and Firelight’s FMOD, detecting and utilizing TrueAudio becomes much easier. From there, the feature exerts its influence before getting handed off to a codec, and is consequently compatible with any output type.

What about the fact that AMD is only making TrueAudio available across three products, two of which aren’t even available yet? Representatives say that AMD has to start somewhere with TrueAudio, and this is simply the first public airing. I’d add that high-end graphics cards destined for high-end PCs also don’t need audio effects acceleration as much as less powerful platforms. But you can guess where this is going: expect the same technology to start showing up in AMD’s APUs and mobile GPUs, which are less powerful and might even realize power benefits from accelerating audio.


CaptainTom Wow what's with the AMD hate? As it stands they are doing the same thing Nvidia did except without the outrageous prices. The GTX 770 wasn't a great deal when the 7970 was $50 cheaper. Have fun trying to run BF3 with 2GB of VRAM...

slomo4sho Nothing revolutionary but better prices I suppose.

The MSI R9 280X Gaming at $299 appears to outperform the GTX 770 at 1600P and is within margin of error at 1080P according to Techpowerup. Not a bad value at $100 less and still overclocks well:

http://www.techpowerup.com/reviews/MSI/R9_280X_Gaming/26.html

jimmysmitty So long story short, if you have a HD7970GHz then these do nothing for you.

Best to hold out till the reviews on the R9-290X I guess. But considering the specs I hope for at least 20% performance increases over a 7970.

Shankovich What happened to Chris? I didn't see this kind of hate with all of the 700 series rebrands. Also, to the Canadians here, grab the $270 7970 GHz edition cards while you still can.

BigMack70 I don't like this new strategy AMD and Nvidia are taking of rebranding an old series at improved price points and then releasing only one new chip at a stupidly expensive price point.

Are the days of (nearly) annual simultaneous full line GPU launches from $100-500 with a dual GPU chip to follow at $750-1000 really over?

cangelini Hate? The R9 280X won an *award*. I think Tahiti at $300 is pretty much brilliant.

I wrote one of the least flattering GTX 780 stories out there. I only identified a couple of situations where a Titan made any sense at all. And although the 760 *did* change the balance at $250, that card still didn't get an award. I liked the 770 for the simple fact that it delivered better-than-680 performance for close to $100 less.

The rest of AMD's new line-up is a lot like what exists already. Again, the 7870 is a better value than 270X. So what are you getting worked up over? The fact that I'm pointing out these aren't new GPUs? They're not. ;)

Shankovich Ok Chris, I agree with you, sorry for the over reaction. But I really don't like how nVidia made price increases for some of the rebrands. Looking forward to your 290 and 290X reviews :D

ingtar33 i'll take a 7950 at $129 thank you very much (or two). There is a major retailer selling them for that this week. Best buy all year. two 7950s for the price of one r9-280x? yeah... i'll do that all day every day.

tomfreak Radeon 7790 has true Audio = but not enabled boooooo = as a 7790 owner I somewhat disappointed :( . Anyone have any idea if we can crossfire 1GB 7790 and 2GB 260x?

net_nakul By the time a R9 380X comes out, the GCN Tahiti XT architecture may be 4 years old (assuming end of 2015). AMD better come up with an awesome new architecture by then, considering the R&D time they have.

That goes to you too Mr. NVIDIA
