AMD Radeon R9 280X, R9 270X, And R7 260X: Old GPUs, New Names

AMD is introducing a handful of new model names today, based on existing GPUs. Do the company's price adjustments make this introduction newsworthy, or will the excitement need to wait for its upcoming Radeon R9 290 and 290X, based on fresh silicon?

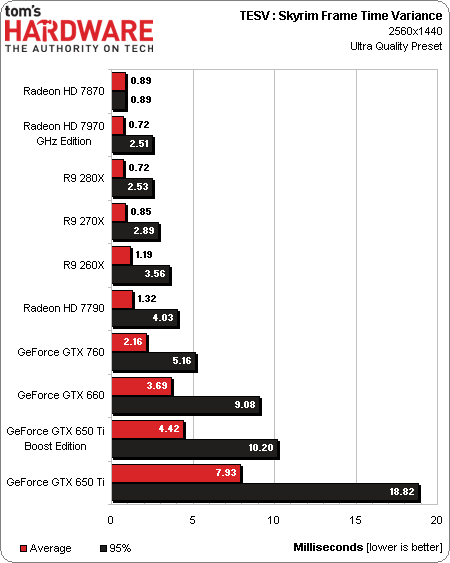

Results: The Elder Scrolls V: Skyrim

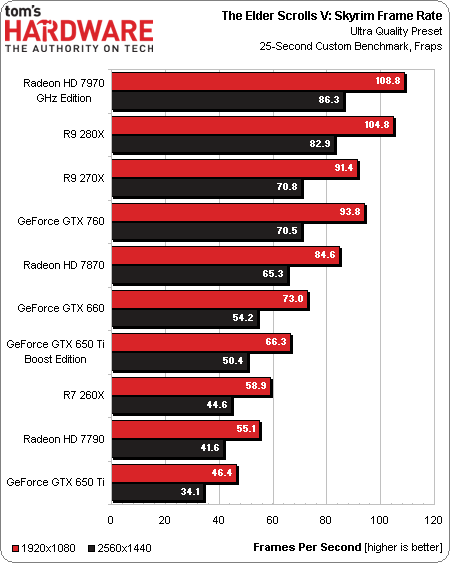

Skyrim tends to be more platform-bound than most of our other benchmarks, so an overclocked Ivy Bridge-E-based configuration with lots of fast memory lets these cards perform to their peak potential using the Ultra detail preset.

The thing is, this game just doesn’t tax graphics hardware very much. You’ll still find it playable at 2560x1440, even on a Bonaire-powered Radeon HD 7790 or R7 260X. Most notable, perhaps, is that a $200 R9 270X trades blows with a $250 GeForce GTX 760.

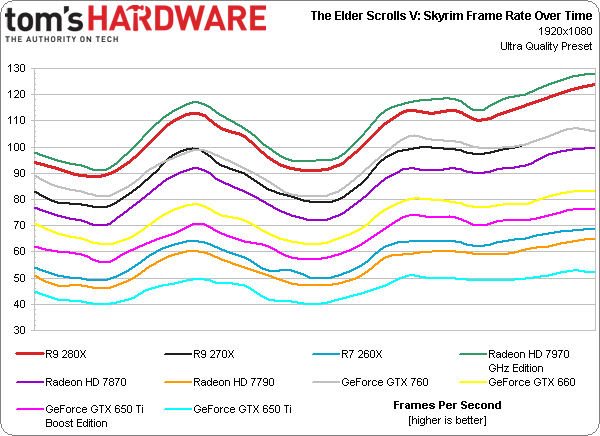

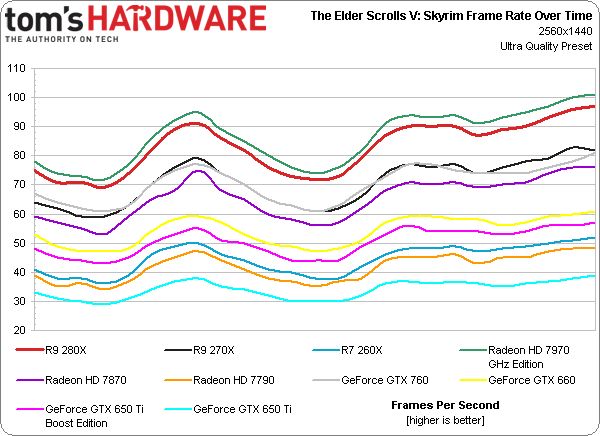

The smooth frame rate-over-time line graphs show playable performance across the entire field at 1920x1080, and mostly ample numbers at 2560x1440 using the game’s Ultra quality preset.

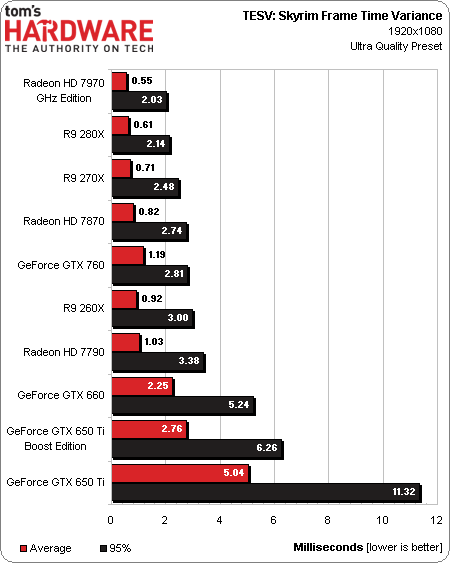

The GeForce cards experience higher frame time variance, on average. At 1920x1080, only the 650 Ti’s worst-case result is something you’d likely notice. At 2560x1440, however, the numbers using Nvidia’s latest beta drivers aren’t as good. Again, it’s the GeForce GTX 650 Ti that demonstrates the least-favorable behavior.


CaptainTom Wow what's with the AMD hate? As it stands they are doing the same thing Nvidia did except without the outrageous prices. The GTX 770 wasn't a great deal when the 7970 was $50 cheaper. Have fun trying to run BF3 with 2GB of VRAM...

slomo4sho Nothing revolutionary but better prices I suppose.

The MSI R9 280X Gaming at $299 appears to outperform the GTX 770 at 1600P and is within margin of error at 1080P according to Techpowerup. Not a bad value at $100 less and still overclocks well:

http://www.techpowerup.com/reviews/MSI/R9_280X_Gaming/26.html

jimmysmitty So long story short, if you have a HD7970GHz then these do nothing for you.

Best to hold out till the reviews on the R9-290X I guess. But considering the specs I hope for at least 20% performance increases over a 7970.

Shankovich What happened to Chris? I didn't see this kind of hate with all of the 700 series rebrands. Also, to the Canadians here, grab the $270 7970 GHz edition cards while you still can.

BigMack70 I don't like this new strategy AMD and Nvidia are taking of rebranding an old series at improved price points and then releasing only one new chip at a stupidly expensive price point.

Are the days of (nearly) annual simultaneous full line GPU launches from $100-500 with a dual GPU chip to follow at $750-1000 really over?

cangelini Hate? The R9 280X won an *award*. I think Tahiti at $300 is pretty much brilliant.

I wrote one of the least flattering GTX 780 stories out there. I only identified a couple of situations where a Titan made any sense at all. And although the 760 *did* change the balance at $250, that card still didn't get an award. I liked the 770 for the simple fact that it delivered better-than-680 performance for close to $100 less.

The rest of AMD's new line-up is a lot like what exists already. Again, the 7870 is a better value than the 270X. So what are you getting worked up over? The fact that I'm pointing out these aren't new GPUs? They're not. ;)

Shankovich Ok Chris, I agree with you, sorry for the overreaction. But I really don't like how nVidia made price increases for some of the rebrands. Looking forward to your 290 and 290X reviews :D

ingtar33 I'll take a 7950 at $129 thank you very much (or two). There is a major retailer selling them for that this week. Best buy all year. Two 7950s for the price of one R9 280X? Yeah... I'll do that all day every day.

tomfreak Radeon 7790 has TrueAudio, but it's not enabled. Boooooo. As a 7790 owner I'm somewhat disappointed :( . Anyone have any idea if we can CrossFire a 1GB 7790 and a 2GB 260X?

net_nakul By the time an R9 380X comes out, the GCN Tahiti XT architecture may be 4 years old (assuming end of 2015). AMD better come up with an awesome new architecture by then, considering the R&D time they have.

That goes to you too Mr. NVIDIA