Intel’s Second-Gen Core CPUs: The Sandy Bridge Review

Although the processing cores in Intel’s Sandy Bridge architecture are decidedly similar to Nehalem, the integration of on-die graphics and a ring bus improves performance for mainstream users. Intel’s Quick Sync is this design’s secret weapon, though.

Sandy Bridge’s Secret Weapon: Quick Sync

I don’t think it has ever been said that Intel caught AMD and Nvidia off guard in the graphics department. And yet, the Quick Sync engine remained an unknown to everyone outside of Intel, right up until IDF 2010. Would you believe that it was first conceptualized five years ago? Talk about keeping a secret!

At the time, the first BD-ROM drives were starting to ship, representing the shift from SD to HD media. Additionally, there was more growth in mobility than in the desktop space. Finally, Intel recognized that the PC was the sole platform for content creation, and the fact that editing a video could gobble up an entire weekend was flat-out unacceptable. It was at that point that Intel’s engineers decided to tackle decoding and encoding performance in Sandy Bridge, both pain points for content creators. They approached the video pipeline using dedicated fixed-function logic, which serves two purposes. First, it enables very compelling performance. And second, it keeps energy use to a minimum.

Of course, that fixed-function logic later came to be known as Quick Sync—a blanket marketing name for Sandy Bridge’s ability to accelerate decoding and encoding/transcoding.

“But wait,” you say. “AMD and Nvidia already accelerate those things using Stream (now referred to as APP) and CUDA, respectively.” That’s true. But both companies are using general-purpose hardware to improve performance beyond what a software-only implementation can do. And while we’ve all been trained to think that general-purpose GPU computing is the future, at least relative to the more limited parallelism offered by a CPU, the tasks we’re talking about here simply cannot run as quickly or as efficiently (power-wise) in general-purpose logic circuits.

So, what’s the thinking here? We know that video—whether you’re talking about playback or encoding—is a common use case. Dedicating processing cores to that workload ties them up and uses a lot of power. We’ve seen this in our CPU reviews for years now (think about the MainConcept and HandBrake metrics). Software developers have had to parallelize their applications to make video-related workloads finish faster. And that means higher utilization, more power, more heat, and so on. I mean, really, video is one of the most demanding benchmark scenarios we regularly throw at a new chip.

Intel’s answer was to build a dedicated block of silicon onto Sandy Bridge-based processors that does nothing but video. According to Dr. Hong Jiang, the senior principal engineer and chief media architect of Sandy Bridge, this decision was based on the pervasiveness of video. Intel is quite literally betting precious die space that video applies to a broader range of its customers than if it burnt transistor budget on more gaming performance. Of course, it helps that video is one of Intel’s competencies. The investment into Quick Sync ends up going a lot further than a more modest gain in 3D alacrity.

Needless to say, once word of Quick Sync spread, both AMD and Nvidia started burning rubber right away, working on their own answers to the fixed-function hardware built onto Sandy Bridge-based processors. But everything I’m hearing puts both companies a year away from having something able to compete. It’s like AMD with Eyefinity in that way—Intel took a major leap on the down-low, a number of ISVs were willing to play ball, seeing value added to their own products, and now the company has a major competitive advantage that’ll take a comparable effort to match.


What Does It Do?

There are two encompassing ideas here: encode and decode.

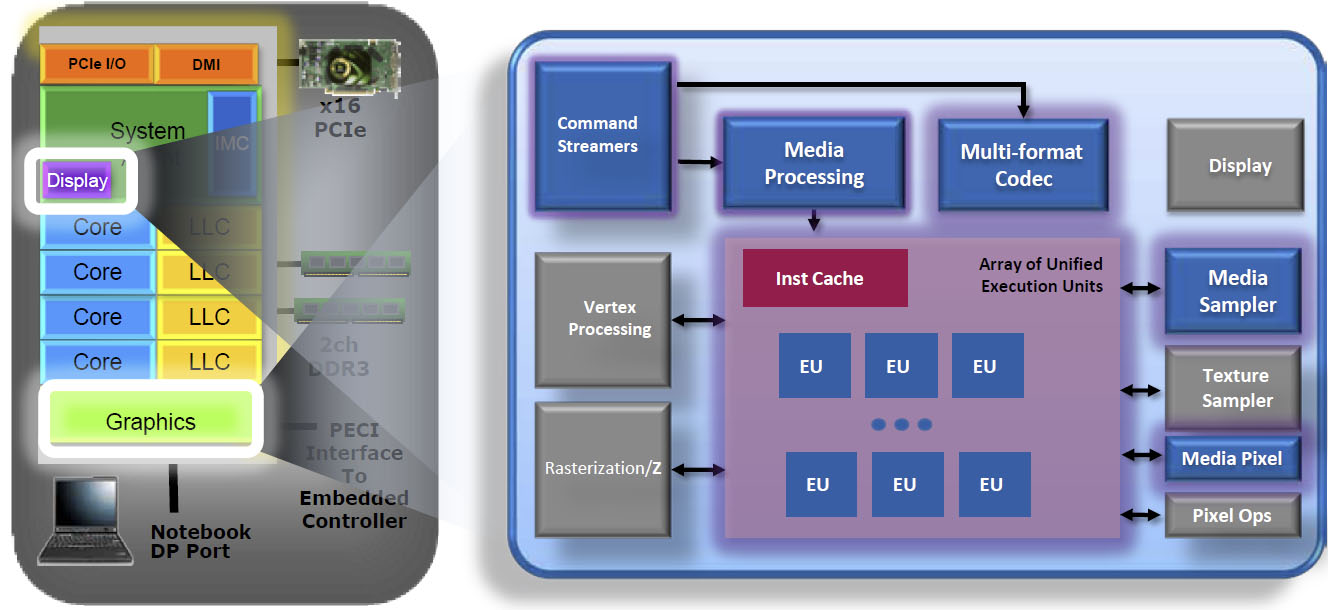

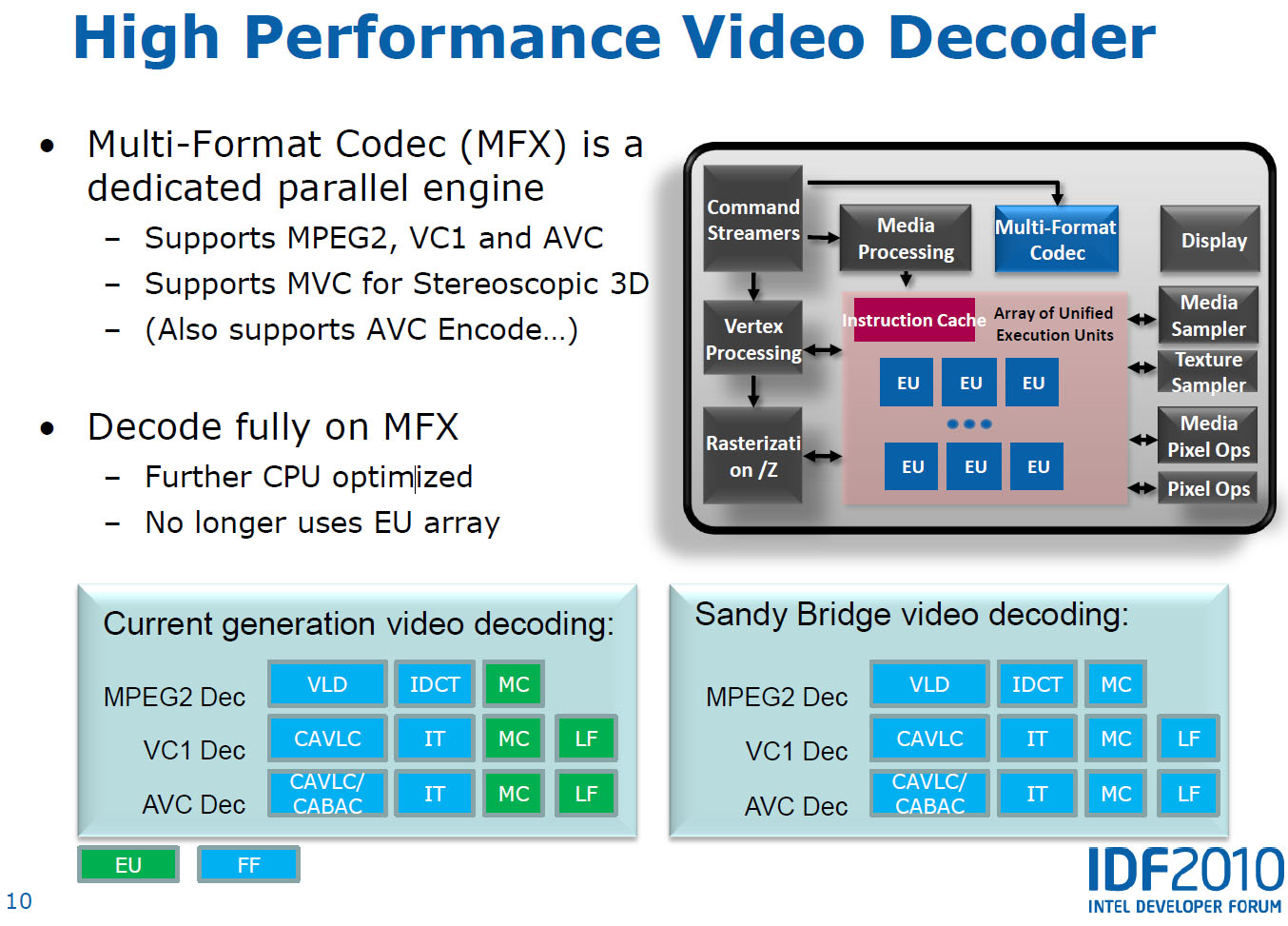

Intel already had a strong position on the decode front—its existing graphics-equipped processors are able to handle MPEG-2, VC-1, and AVC. However, motion compensation (the most complex piece of the decode pipeline) and loop filtering (applicable to VC-1 and AVC) have to be handled by the general-purpose execution units, eating up more power than necessary. Sandy Bridge rectifies this by moving the complete decode pipeline to an efficient fixed-function multi-format codec. It also adds MVC support, enabling Blu-ray 3D playback, too. Video scaling, denoise filtering, deinterlacing, skin tone enhancement, color control, contrast enhancement—all of those capabilities are addressed by blocks of logic in the graphics engine.

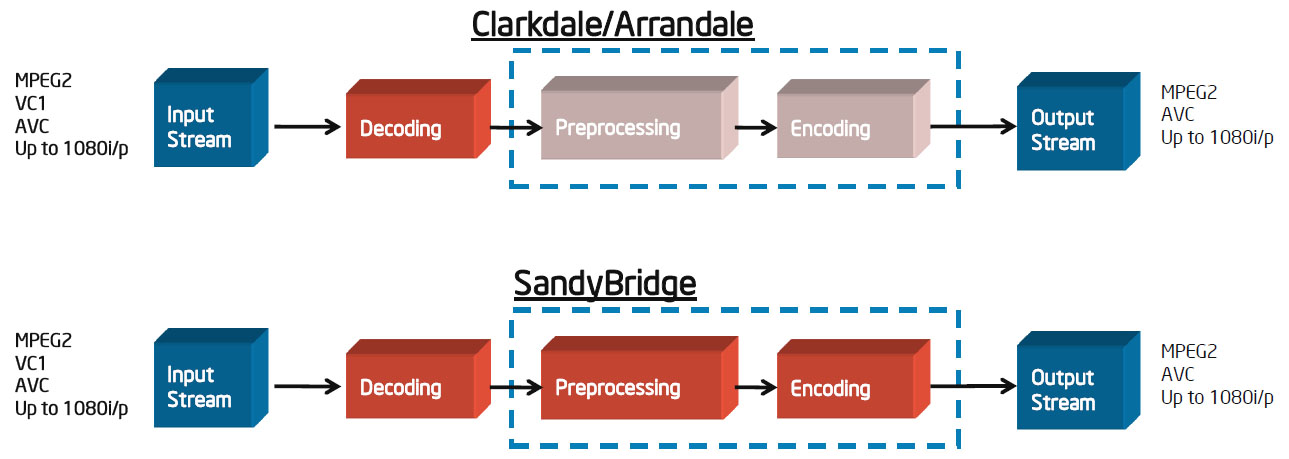

On the encode side, you have fixed-function logic working in concert with the programmable execution units. There’s a media sampler block attached to the EUs (Intel calls this a co-processor) that handles motion estimation, augmenting the programmable logic. Of course, the decoding tasks that happen during a transcode travel down the same fixed-function pipeline already discussed, so there’s additional performance gained there. Feed in MPEG-2, VC-1, or AVC, and you get MPEG-2 or AVC output from the other side.
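To make the transcode paths concrete, here is a minimal sketch of the input and output formats described above. The format names come straight from the text; the constant and function names are hypothetical, made up for illustration, and are not part of any Intel API.

```python
# Illustrative model only. Format names are taken from the article;
# every identifier below is hypothetical and not part of any Intel API.
TRANSCODE_INPUTS = frozenset({"MPEG-2", "VC-1", "AVC"})   # formats you can feed in
TRANSCODE_OUTPUTS = frozenset({"MPEG-2", "AVC"})          # formats you get out


def can_transcode(source: str, target: str) -> bool:
    """Return True if the article's description allows source -> target."""
    return source in TRANSCODE_INPUTS and target in TRANSCODE_OUTPUTS


def supported_transcodes() -> list[tuple[str, str]]:
    """List every (source, target) pair, sorted for stable output."""
    return sorted((s, t) for s in TRANSCODE_INPUTS for t in TRANSCODE_OUTPUTS)


if __name__ == "__main__":
    for source, target in supported_transcodes():
        print(f"{source} -> {target}")
```

Note that VC-1 shows up only on the input side: going by the description above, you can feed VC-1 in, but you won't get it back out.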

Now, the way each company employs Quick Sync is naturally going to be different, depending on the application in question. Take CyberLink, for example. PowerDVD 10 capitalizes on the pipeline’s decode acceleration. A MediaEspresso project is going to be significantly more involved: it’ll read the file in, decode, encode, and return the output stream. Then, in PowerDirector, a video editing app, you have to factor in post-processing, meaning the effects and compositing that happen before everything gets fed into the encode stage.
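The differences between those three CyberLink applications can be sketched as lists of pipeline stages. This is a rough model under stated assumptions: the stage lists paraphrase the paragraph above, the PowerDirector list is a guess at the minimum implied by the text, and treating only decode and encode as the accelerated stages is a simplification. All identifiers are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    READ = "read"
    DECODE = "decode"
    POST_PROCESS = "post-process"
    ENCODE = "encode"
    WRITE = "write"


# Stage lists paraphrase the article. The PowerDirector entry is an
# assumption: the text only says post-processing happens before encode.
APP_PIPELINES: dict[str, list[Stage]] = {
    "PowerDVD 10": [Stage.DECODE],
    "MediaEspresso": [Stage.READ, Stage.DECODE, Stage.ENCODE, Stage.WRITE],
    "PowerDirector": [Stage.DECODE, Stage.POST_PROCESS, Stage.ENCODE],
}

# Simplifying assumption: only decode and encode count as accelerated here.
QUICK_SYNC_STAGES = frozenset({Stage.DECODE, Stage.ENCODE})


def accelerated_stages(app: str) -> list[Stage]:
    """Return the stages of an app's pipeline that this model treats as accelerated."""
    return [stage for stage in APP_PIPELINES[app] if stage in QUICK_SYNC_STAGES]


if __name__ == "__main__":
    for app in APP_PIPELINES:
        names = ", ".join(stage.value for stage in accelerated_stages(app))
        print(f"{app}: {names}")
```

The point of the model is simply that a playback app touches one accelerated stage, while a transcoding or editing app touches both, with extra work in between.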


cangelini (quoting MoneyFace): "Editor, page 10 has mistakes. Its LGA1155, not LGA1555."

Fixed, thanks Money!

juncture "an unlocked Sandy Bridge chip for $11 extra is actually pretty damn sexy."

i think the author's saying he's a sexually active cyberphile

fakie Contest is limited to residents of the USA (excluding Rhode Island) 18 years of age and older.

Everytime there's a new contest, I see this line. =(

englandr753 Great article guys. Glad to see you got your hands on those beauties. I look forward to you doing the same type of review with bulldozer. =D

joytech22 Wow Intel owns when it came to converting video, beating out much faster dedicated solutions, which was strange but still awesome.

I don't know how AMD's going to fare but i hope their new architecture will at least compete with these CPU's, because for a few years now AMD has been at least a generation worth of speed behind Intel.

Also Intel's IGP's are finally gaining some ground in the games department.

cangelini (quoting fakie): "Contest is limited to residents of the USA (excluding Rhode Island) 18 years of age and older. Everytime there's a new contest, I see this line. =("

I really wish this weren't the case fakie--and I'm very sorry it is. We're unfortunately subject to the will of the finance folks and the government, who make it hard to give things away without significant tax ramifications. I know that's of little consolation, but that's the reason :(

Best,

Chris

LuckyDucky7 "It’s the value-oriented buyers with processor budgets between $100 and $150 (where AMD offers some of its best deals) who get screwed."

I believe that says it all. Sorry, Intel, your new architecture may be excellent, but unless the i3-2100 series outperforms anything AMD can offer at the same price range WHILE OVERCLOCKED, you will see none of my desktop dollars.

That is all.