Intel’s Second-Gen Core CPUs: The Sandy Bridge Review

Although the processing cores in Intel’s Sandy Bridge architecture are decidedly similar to Nehalem, the integration of on-die graphics and a ring bus improves performance for mainstream users. Intel’s Quick Sync is this design’s secret weapon, though.

HD Graphics On The Desktop: Intel Trips Up

Early previews benchmarking Sandy Bridge’s graphics suggested that we might have a solution capable of displacing some of the entry-level discrete market. Of course, Intel excitedly included that in the channel-oriented marketing material that press guys like me aren’t supposed to see.

As it pertains to the desktop market, though, you’re going to be disappointed for a few different reasons.

One Giant Leap…For Intel

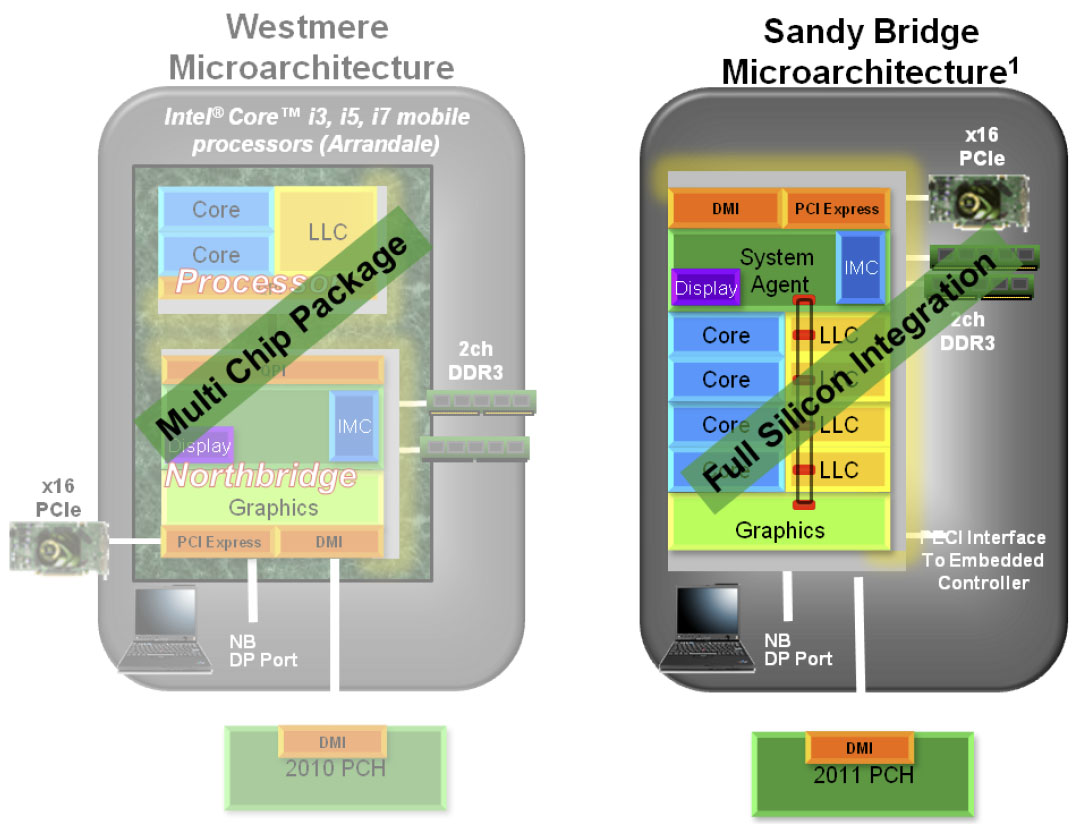

Let’s start from the beginning—or at least the previous-generation implementation. As you know, the Clarkdale-based processors that launched one year ago were the first to benefit from Intel’s manufacturing leadership. Specifically, the dual-core processor was manufactured at 32 nm. But Intel used 45 nm lithography for a second on-package die, composed of a memory controller, PCI Express controller, and its Ironlake graphics engine.

That was a solid first step toward integrating even more functionality into the CPU, but it wasn’t ideal. Graphics performance was better than previous chipset-based implementations, yes. Memory performance dropped compared to Lynnfield and Bloomfield, though, since the controller migrated off-die.

With Sandy Bridge, all of that logic gets glued together, giving Intel a lot more control over its behavior. For example, the graphics core now has access to last-level cache, so the architecture has a mechanism to prevent thrashing between the cores and graphics engine. As mentioned, in 3D-heavy workloads, the power control unit can bias toward the graphics core, allocating it more thermal budget to run at frequencies of up to 1350 MHz.

The nomenclature Intel uses is similar from last generation to this one. Its HD Graphics engine still employs 12 scalar execution units (or EUs, for short) with DirectX 10.1 compatibility. However, a number of architectural improvements, like larger registers, mathbox integration, and new instruction support, purportedly double the instruction throughput compared to the Ironlake GPU on Clarkdale. Factor in significant frequency increases and you’re looking at the potential for big performance gains.

A Tale Of Two GPUs

So far so good, right? Well, here’s where things start getting a little more…uh, weird.

There are two versions of the graphics core, inconspicuously dubbed HD Graphics 3000 (GT2) and HD Graphics 2000 (GT1). The former features all 12 EUs, while the latter is limited to six.
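
As a rough illustration of how EU count, the purported architectural doubling, and clock speed combine, the sketch below multiplies the three to get a theoretical ceiling relative to Clarkdale's Ironlake GPU. Several things here are assumptions rather than figures from this review: the 733 MHz Ironlake clock, the reading that the doubling applies per EU and per clock, running both new parts at the 1350 MHz peak, and the names `relative_throughput`, `IRONLAKE_EUS`, and `IRONLAKE_MHZ`. It ignores memory bandwidth, drivers, and thermal limits, so treat the output as an upper bound, not a prediction.

```python
# Back-of-envelope model only: peak throughput scaled by EU count,
# a per-EU architectural factor, and clock speed.
# Ignores memory bandwidth, drivers, and thermal limits.

IRONLAKE_EUS = 12   # stated in the article
IRONLAKE_MHZ = 733  # ASSUMPTION: baseline clock is not given in the article


def relative_throughput(eus, mhz, per_eu_gain=2.0,
                        base_eus=IRONLAKE_EUS, base_mhz=IRONLAKE_MHZ):
    """Return estimated peak throughput relative to the baseline GPU.

    per_eu_gain=2.0 encodes Intel's claimed doubling, interpreted here
    (as an assumption) as a per-EU, per-clock improvement.
    """
    return (eus / base_eus) * per_eu_gain * (mhz / base_mhz)


if __name__ == "__main__":
    # Both parts evaluated at the 1350 MHz peak purely for comparison.
    for name, eus in (("HD Graphics 3000", 12), ("HD Graphics 2000", 6)):
        ratio = relative_throughput(eus, 1350)
        print(f"{name}: {ratio:.2f}x Ironlake (theoretical ceiling)")
```

Under those assumptions, halving the EU count halves the ceiling, so the six-EU part gives back much of the generational gain before any benchmark is run.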

Of the 15 mobile Sandy Bridge-based SKUs being announced, all of them offer HD Graphics 3000. Some models run at up to 1300 MHz, others run at 1100 MHz, one does 950 MHz, and another tops out at 900 MHz. As you might guess, the final specification is largely dependent on TDP.

The desktop is a different story entirely. Of 14 new Sandy Bridge-based CPUs, only two of them come equipped with HD Graphics 3000. Almost humorously, those two chips are the K-series SKUs—enthusiast-oriented parts that I’m willing to bet will never get called on to perform a 3D task. The other 12 models—the ones that’ll go into more mainstream home and office desktops—get HD Graphics 2000. Those are the processors whose owners would actually appreciate saving $50 on an entry-level discrete card. And they get pegged with the handicapped version.

Power users spending an extra $20 on a K-series chip also buy discrete graphics. Period. This point gets hammered home even harder on the next page, where you learn that the H67 chipset needed to utilize on-die graphics doesn't support processor overclocking, so there's really no reason for a K-series/H67 combination.

There’s a fair chance my assessment will be different when it comes to testing Sandy Bridge in a mobile environment. Notebook LCD screens running lower native resolutions are a much better match for the more powerful HD Graphics 3000 engine. But before I even get into the benchmarks, it looks like Intel missed a real opportunity to show off its efforts on the desktop.

It’s A Numbers Game

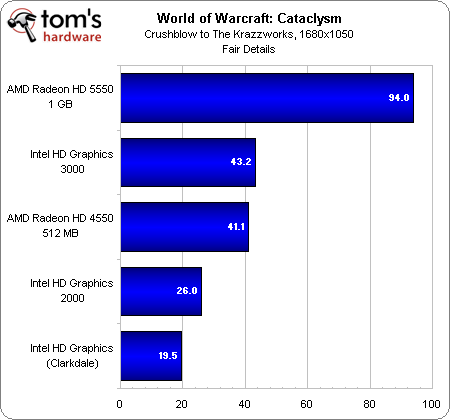

Let’s kick things off with a title right up Intel’s alley: World of Warcraft: Cataclysm. I’m using the same benchmark seen in our recent performance evaluation of the game—a flight from Crushblow to The Krazzworks in Twilight Highlands.

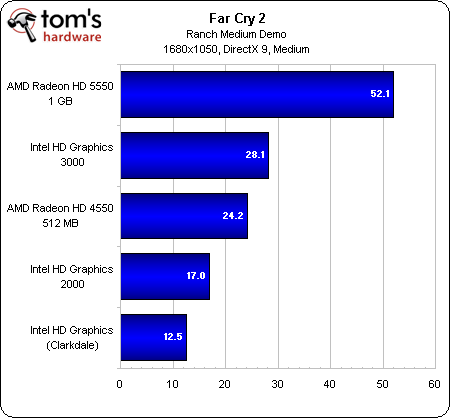

The HD Graphics 3000 engine actually stands up impressively to AMD’s Radeon HD 4550 512 MB, a card you can find for roughly $25 online. The HD Graphics 2000 core doesn’t fare well at all, even using the second-lowest detail preset available. It’s faster than Clarkdale’s on-package solution despite having half as many EUs, which I suppose says something. But if you want to play this game (even at very modest settings), you’re not doing it on the integrated graphics of a mainstream desktop Sandy Bridge processor.

I threw in a Radeon HD 5550 with 1 GB of DDR3 memory for the sake of comparison, found online for $55 or so. Offering more than twice the HD Graphics 3000's performance, that’s a pretty sizable upgrade if you want to bump the detail slider up a notch or run at 1920x1080.

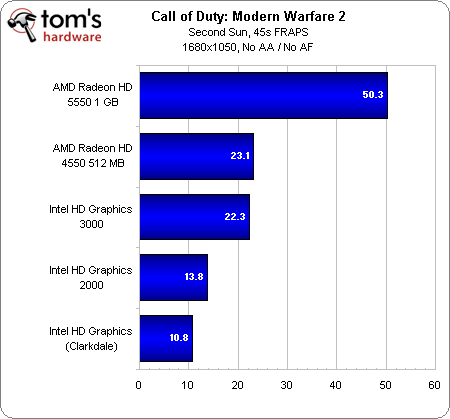

Nobody ever said integrated graphics were supposed to handle first-person shooters, but CoD is notoriously graphics-light, so I figured I’d give it a shot.

Once again, to Intel’s credit, HD Graphics 3000 stands up well to a very entry-level add-in card. But the HD Graphics 2000 implementation’s only real bragging point is beating Clarkdale’s Ironlake engine. In comparison, a relatively affordable Radeon HD 5550 is actually playable at 1680x1050.

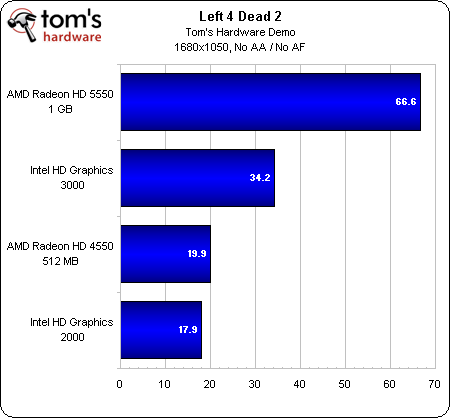

The outcome is as expected in these two tests: HD Graphics 3000 shows promise against entry-level AMD gear, but unless you’re going to spend extra on a K-series processor and use its graphics engine, you have to look at the HD Graphics 2000 results instead. And those are only marginally better than Clarkdale (which doesn't even complete the Left 4 Dead 2 benchmark successfully).

At least on the desktop, integrated graphics maintains the status quo. It’s fine for elementary tasks, and it’s too anemic for gaming. What a let-down.

cangelini (quoting MoneyFace) Editor, page 10 has mistakes. Its LGA1155, not LGA1555.

Fixed, thanks Money! -

juncture "an unlocked Sandy Bridge chip for $11 extra is actually pretty damn sexy."

i think the author's saying he's a sexually active cyberphile -

fakie Contest is limited to residents of the USA (excluding Rhode Island) 18 years of age and older.

Everytime there's a new contest, I see this line. =( -

englandr753 Great article guys. Glad to see you got your hands on those beauties. I look forward to you doing the same type of review with bulldozer. =D -

joytech22 Wow Intel owns when it came to converting video, beating out much faster dedicated solutions, which was strange but still awesome.

I don't know how AMD's going to fare but i hope their new architecture will at least compete with these CPU's, because for a few years now AMD has been at least a generation worth of speed behind Intel.

Also Intel's IGP's are finally gaining some ground in the games department. -

cangelini (quoting fakie) Contest is limited to residents of the USA (excluding Rhode Island) 18 years of age and older. Everytime there's a new contest, I see this line. =(

I really wish this weren't the case fakie--and I'm very sorry it is. We're unfortunately subject to the will of the finance folks and the government, who make it hard to give things away without significant tax ramifications. I know that's of little consolation, but that's the reason :(

Best,

Chris -

LuckyDucky7 "It’s the value-oriented buyers with processor budgets between $100 and $150 (where AMD offers some of its best deals) who get screwed."

I believe that says it all. Sorry, Intel, your new architecture may be excellent, but unless the i3-2100 series outperforms anything AMD can offer at the same price range WHILE OVERCLOCKED, you will see none of my desktop dollars.

That is all.