Intel Core i7-3960X Review: Sandy Bridge-E And X79 Express

Intel's Sandy Bridge design impressed us nearly a year ago, but it was intended for mainstream customers. The company took its time readying the enthusiast version, Sandy Bridge-E. Now, the LGA 2011-based platform and its accompanying CPUs are ready.

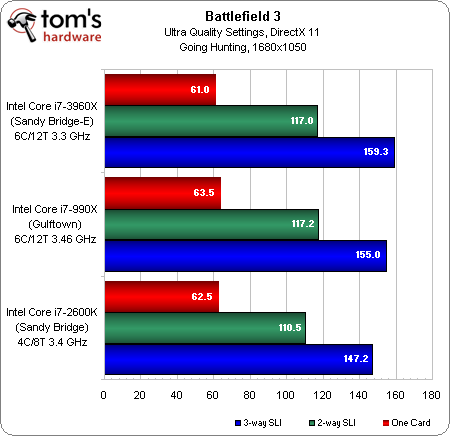

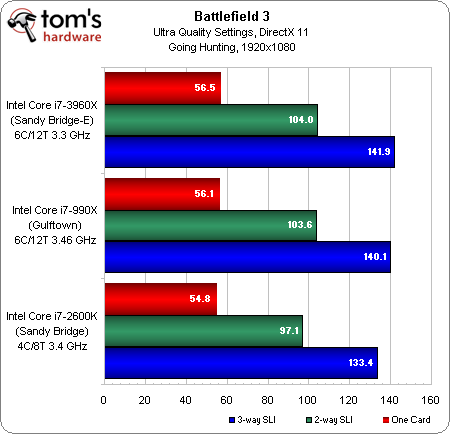

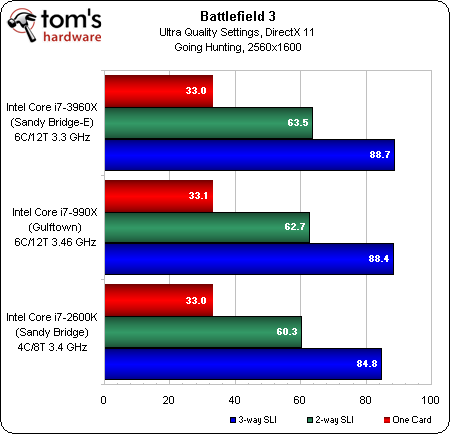

Battlefield 3 In SLI

Last week, Nvidia called to let us know that Sandy Bridge-E really allowed three-way SLI to shine in games like Battlefield 3. The company showed performance results up to 20% higher than on Intel’s prior-generation platform, but it didn’t say whether it used the campaign or a multi-player map for testing. I like the idea of benchmarking a 64-player Rush match. But I just can’t accept that the results would be reliable. I even reached out to Johan Andersson at DICE for guidance on testing, and he admitted there aren’t any good deterministic sequences to profile.

So, I fell back to the same campaign sequence used in "Battlefield 3 Performance: 30+ Graphics Cards, Benchmarked," hoping that it would be graphics-bound enough at Ultra settings to show off what three-way SLI can do.

It turns out that this sequence does demonstrate scaling at all three resolutions. A trio of GeForce GTX 580s yields great performance from 1680x1050 to 2560x1600. It just doesn’t shine significantly brighter on Core i7-3960X.

So, here’s my interpretation of Nvidia’s findings. It’s not that Core i7-3960X allows three-way SLI to stretch its legs in any particularly unique way. In a purely graphics-bound scenario, three-way SLI scales almost as well on a roughly $300 Core i7-2600K as it does on a $1000 Core i7-3960X. However, I suspect Nvidia did its benchmarking in a multi-player map, where processor performance is more influential. Less-powerful CPUs become bottlenecks with so much graphics muscle behind them, inhibiting scaling.

If anything, this serves as a reminder of why gamers shouldn’t skimp on the processor while loading up on GPUs. In a title like Battlefield 3, there are environments that tax graphics (the campaign) and others that place a heavier load on the CPU (multi-player). Balancing the two is critical. So, if you’re willing to splurge on three-way SLI, be prepared to also spend generously on a complementary platform. Today, Sandy Bridge-E, by virtue of its per-clock performance and six-core configuration, is unquestionably the best platform you can pair with a trio of potent GTX 580s.

SpadeM So no SAS/full SATA 3 ports, but you do get PCIe 3... no Quick Sync, but you do get 2 more cores and the added cache... no USB 3.0, but you get quad-channel memory, which in real-life everyday computing is a minimal gain at best. Feels an awful lot like a weak trade if you ask me. I'm basically asked to buy the P67 chipset with sprinkles on top. And for $1000 it feels like it falls short. For heavy workloads it's cheaper and faster to build yourself 2 systems based on 1155 or Bulldozer and render, fold, and crunch numbers that way. X79 should have launched with an Ivy Bridge-based CPU inside and a better chipset to live up to its name.

What we have today is simply a platform for bragging rights, not a serious contender to the X38, X48, and X58 family.

illfindu Not to take the review too far off topic, but it's worth bringing up because this review was so complete, covering a vast array of situations and programs. It's truly embarrassing for AMD that the FX-8XXX series is beaten not only by chips with half the cores, but by chips with half the cores that are a generation behind. In fact, as of this moment the FX lineup is almost inspiring in its lack of value: at first glance at some of these marks, one could say that AMD's most expensive chip, at over $200, is one of its slowest, beaten by both the X4 and X6 Phenoms.

redsunrises Illfindu, you are beating a dead horse... Old news, let's move on (sorry, just tired of the same thing being said over and over, which will end in an AMD fanboy fight). Great review though!

ohim This article tells me 2 things: either our current software is a total piece of crap, since it has absolutely no clue about multi-core CPUs, or the future without AMD is so grim that Intel makes you pay 1000 bucks for a CPU that doesn't perform all that fast... but for sure the software industry needs to take a better look at those multi-core optimisations.

stonedatheist I think Intel would be raking in the dough if they left all 8 cores enabled for the 3960X. I doubt that a later revision will enable them. 8c/16t will probably hit the desktop with IB-E (can't wait) :)

joytech22 :| Well, AMD is fighting a losing battle (in high-end CPUs, which I actually use for rendering, etc.).

I would LOVE to see them pick up their game and provide me with a worthy upgrade over my 4GHz i7-2600 (non-K). I would snap it up.

Look, BD had 4 modules with two "cores" each; each module is equivalent to a Sandy Bridge core.

They should just combine both of those cores into a single core, so we get 4 threads.

Then create 4-, 6-, and 8-core versions of those CPUs.

Think about it: the FX-8150 is more of a 4-core CPU where the resources are pretty much halved so you get two threads per core. It would have been MUCH, MUCH better if they had just kept 4 strong cores.

Not sure why either, but I always seem to start an AMD-related comment :\

JeanLuc Hi Chris,

The labels on the graphs on this page are wrong; shouldn't the last two read DDR3-2133?

JeanLuc