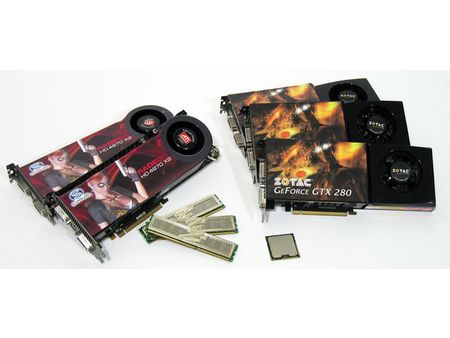

Core i7: 4-Way CrossFire, 3-Way SLI, Paradise?

Tempered Expectations

Intel’s Core i7 processor, formerly referred to as Bloomfield, has been architecturally dissected one bit at a time for the past two years. Talk about a rollercoaster of anticipation for gaming enthusiasts. Gone is the super-quick L2 cache that helped propel Core 2 processors so far ahead of AMD’s Phenom offerings in a myriad of games. In its place is a much smaller L2, a large L3, a HyperTransport-like processor-to-processor interconnect, an integrated memory controller, and the reemergence of Hyper-Threading. If we didn’t know any better, we’d suggest that Intel is going after a different market entirely.

Ah, but we do know better, and it is. At this fall’s Intel Developer Forum (IDF), Intel representatives gave us our closest look yet at production specs for its upcoming Core i7 lineup, along with preliminary performance numbers. In addition to exceptional results in video editing, media conversion, and professional workstation titles, we also received a healthy dose of reality about what we’d see when testing gaming performance. Quite simply, the Nehalem micro-architecture incorporates a lot of improved technology, which is reflected in the speed-ups seen throughout a cherry-picked benchmark suite. However, it reportedly wouldn’t gain much in the way of gaming, presumably as a result of the tradeoffs made as Intel’s engineers optimized their efforts to retake the enterprise computing space.

It’s A Platform Story Now

Thus, our attention shifted to the X58 platform, Intel’s chipset complementing Core i7 at launch. Initial rumblings were that X58 would extend Intel’s support for CrossFireX—AMD’s multi-card rendering technology—through divisible PCI Express links. A number of existing Intel chipsets already accommodated two or more cards, so it was hardly a surprise that core logic armed with two x16 PCI Express 2.0 links would continue that trend.

Then the rumors started bubbling up that X58 might also work with SLI, just like Intel’s “Skulltrail” does.

First, we were led to believe the feature would require Nvidia’s nForce 200 companion chip. But then, at its NVISION ’08 show in San Jose, the company made it clear that X58 would support SLI natively, in two- and three-way configurations. We’ll leave alone discussion of SLI and the supposed hardware requirements that kept it from appearing on non-Nvidia platforms previously. By now, it’s a foregone conclusion that the technology needed to enable SLI isn’t a chipset-specific feature, but rather a matter of licensing. Nvidia shared with us the “updated” requirements for what it’d take to enable SLI, and gave us some idea of the configurations made possible by combining Intel’s X58 and its own nForce 200.


DFGum: Yep, I hafta say being able to switch brands of graphics cards on a whim and selling off the old is great. Knowing I'm going to be getting the performance these cards are capable of (better price to performance ratio) is nice also.

cangelini, quoting randomizer: "SLI scales so nicely on X58."

Hey you even got a "First" in there Randomizer!

randomizer, quoting cangelini: "Hey you even got a 'First' in there Randomizer!"

And modest old me didn't even mention it. :lol:

enewmen: Still waiting for the 4870 X2s to be used in these benchmarks. I thought THG got a couple for the $4500 extreme system. But still happy to see articles like this so early!

cangelini, quoting enewmen: "Still waiting for the 4870 X2s to be used in these benchmarks. I thought THG got a couple for the $4500 extreme system. But still happy to see articles like this so early!"

Go check out the benchmark pages man! Every one with 1, 2, 4 4870s. The 2x and 4x configs are achieved with X2s, too.

Oh, and latest drivers all around, too. Crazy, I know! =)

enewmen, replying to cangelini: I found it, just read the article too quickly. - My bad.

"A single Radeon HD 4870 X2—representing our 2 x Radeon HD 4870 scores—is similarly capable of scaling fan speed on its own. "

Hope to see driver updates like you said.

spyde: Hi there, my question regarding these benchmarks with the HD card is, "was a 2G card used or a 1G". I am about to buy a new system and was looking to buy 2 x HD4870X2 2G cards, but with these results it's looking a bit iffy. I hope you can answer my question.

Cheers.

Proximon: That's a nice article. I especially like the way the graphs are done. Everything is scaled right, and you get an accurate representation.