IBM Challenges 3D XPoint With TLC Phase-Change Memory (PCM)

IBM announced that it has developed a method to store three bits of data per cell using Phase-Change Memory (PCM). Intel and Micron set the memory world afire with their 3D XPoint announcement, but they have not revealed the underpinnings of the technology, which many speculate is either ReRAM- or PCM-based. In either case, the IBM PCM products will line up as competitors for 3D XPoint, though the ability to store more data per cell could provide IBM with a cost and density advantage.

The future of data storage will consist of new materials and techniques that bring DRAM-like speed in tandem with the persistence of NAND (that is, it will retain data when power is removed). These new storage mediums will straddle the line between main memory and storage memory, though many are closer to the speed of DRAM than NAND, creating a new class called "Universal Memory" or "Storage-Class Memory."

PCM development began in the late 1960s. PCM relies upon switching cells between crystalline and amorphous states in a chalcogenide (glass-like) alloy. The material switches between states through targeted Joule heating. The crystalline state has low resistance and the amorphous state has high resistance, which determines if the binary bit stored in each cell is a 1 or 0.

| Metric | Memristor | PCM | STT-RAM | ReRAM | DRAM | Flash | HDD |
|---|---|---|---|---|---|---|---|
| Read Time (ns) | <10 | 20-70 | 10-30 | 10 | 10-50 | 25,000 | 5-8 x 10^6 |
| Write Time (ns) | 20-30 | 50-500 | 13-95 | 1-100 | 10-50 | 200,000 | 5-8 x 10^6 |
| Endurance (cycles) | 1 Trillion | 10 - 100 million | 10^15 | 10^10-10^12 | >10^17 | 500-10^6 | 10^15 |
| Retention (without power) | >10 Years | <10 Years | Weeks | Months | <Second | ~10 Years | ~10 Years |
| Energy Per Bit (pJ)2 | 0.1-3 | 2-100 | 0.1-1 | ? | 2-4 | 10^1-10^14 | 10^6-10^7 |
| Chip Area Per Bit (F^2) | 4 | 8-16 | 14-64 | ? | 6-8 | 4-8 | n/a |

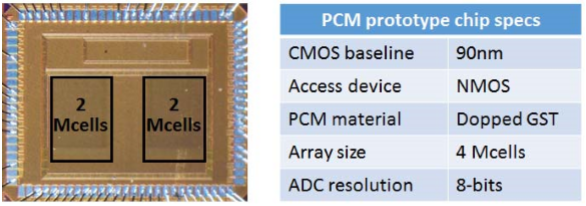

IBM researchers demonstrated the ability to store three bits of data per cell, much like Triple-Level Cell (TLC) NAND. The scientists achieved up to 1 million write cycles with the prototype memory chip array (64k-cell) when storing 8 levels of data per cell (which equates to three bits per cell).
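The relationship between levels and bits is simple arithmetic: a cell that can hold 8 distinguishable states encodes log2(8) = 3 bits. The Python sketch below works through it. It assumes that "64k-cell" means 64 x 1,024 cells and that levels map to bit patterns in plain binary order; IBM has not stated either detail here, and real devices may use a different mapping. All function names are illustrative.

```python
def bits_per_cell(levels: int) -> int:
    """Whole bits that `levels` distinguishable cell states can encode."""
    if levels < 2:
        raise ValueError("a cell needs at least two levels to store data")
    # bit_length() - 1 is floor(log2(levels)) without floating-point rounding.
    return levels.bit_length() - 1


def array_capacity_bits(cells: int, levels: int) -> int:
    """Raw capacity of an array of identical cells, before any ECC overhead."""
    return cells * bits_per_cell(levels)


def level_to_bits(level: int, levels: int) -> str:
    """Bit pattern for one stored level (plain binary order, an assumption)."""
    width = bits_per_cell(levels)
    if not 0 <= level < 2 ** width:
        raise ValueError("level out of range")
    return format(level, f"0{width}b")


if __name__ == "__main__":
    for levels in (2, 4, 8):  # single-level, MLC-like, TLC-like
        print(levels, "levels ->", bits_per_cell(levels), "bits per cell")

    cells = 64 * 1024  # assumed meaning of "64k-cell"
    total = array_capacity_bits(cells, 8)
    print(total, "bits =", total // 8, "bytes")
    print("level 5 of 8 ->", level_to_bits(5, 8))
```

Under those assumptions the prototype array holds 196,608 raw bits, or 24,576 bytes. Each added bit doubles the number of levels the cell has to distinguish.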

Planar TLC NAND offers 300-500 write cycles, and more-endurant planar MLC NAND offers up to 3,000 cycles. Both pale in comparison to the 10 million write cycles provided by standard PCM. The new TLC PCM displayed up to 1 million cycles in the lab, and its endurance will likely increase as the technique evolves.

IBM employed a set of drift-immune cell-state metrics and drift-tolerant coding and detection schemes to bring the bit error rate into an acceptable range. The scientists exposed the material to extreme temperature variations (25°C to 75°C) for 10 days and achieved a raw bit error rate of 3 x 10^-4. This is sufficient for many use cases, and the addition of standard ECC schemes will reduce the bit error rate to acceptable levels.
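To get a feel for what a raw bit error rate of 3 x 10^-4 means once ECC is layered on top, the Python sketch below estimates how often a codeword would contain more errors than the code can correct. It assumes independent bit errors (a binomial model), a 4,096-bit codeword, and a code that corrects up to 8 bits. None of these parameters come from IBM; they are placeholders for illustration.

```python
import math


def expected_raw_errors(codeword_bits: int, raw_ber: float) -> float:
    """Average number of flipped bits per codeword at the given raw BER."""
    return codeword_bits * raw_ber


def uncorrectable_probability(codeword_bits: int, raw_ber: float,
                              correctable_bits: int) -> float:
    """P(more than `correctable_bits` errors in one codeword).

    Assumes independent bit errors. The tail is computed as 1 minus the
    probability of 0..t errors, which is fine for illustration but loses
    precision once the result approaches floating-point epsilon.
    """
    n, p, t = codeword_bits, raw_ber, correctable_bits
    p_correctable = sum(
        math.comb(n, k) * p ** k * (1.0 - p) ** (n - k)
        for k in range(t + 1)
    )
    return max(0.0, 1.0 - p_correctable)


if __name__ == "__main__":
    RAW_BER = 3e-4        # figure reported for the TLC PCM prototype
    CODEWORD_BITS = 4096  # hypothetical codeword size
    for t in (0, 2, 4, 8):  # hypothetical correction strengths
        prob = uncorrectable_probability(CODEWORD_BITS, RAW_BER, t)
        print(f"corrects {t} bits -> P(uncorrectable) = {prob:.3e}")
    print("expected raw errors per codeword:",
          expected_raw_errors(CODEWORD_BITS, RAW_BER))
```

With these placeholder numbers a codeword averages a little over one raw error, so even a modest correction strength absorbs most of them.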

The cost of a storage medium is one of the most important factors that determine if it will be suitable for mass production, and storing more bits per cell increases density, which lowers cost. The increased PCM density will provide DRAM-like performance at NAND-like pricing.


PCM is orders of magnitude faster than NAND in both read and write speeds, but it is slower than DRAM in both metrics. PCM is faster at reading data than writing it, which is similar to many existing memory technologies, and it will be well suited for caching and tiering applications.

Unfortunately, PCM is still in the development stages, and IBM does not have a manufacturing partner. The company does not produce memory products, so it will license the patented technology to semiconductor manufacturers. IBM predicted that it will require roughly two years for a partner to bring a TLC PCM-based product to market.

The initial production stages of PCM will carry a higher cost as the vendors ramp to achieve economies of scale. In the initial stages, we can expect to see blended use cases for the new materials, such as an eDRAM-like implementation that would meld DRAM and PCM to marry the write speed of DRAM with the cost-effective and capacious attributes of PCM. Other leading-edge products will likely employ PCM as a write cache for large NAND repositories, which will magnify NAND endurance and increase its performance. This technique is attractive for less-endurant TLC NAND and the forthcoming Toshiba QLC NAND (four bits per cell).
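The endurance benefit of a write cache comes from coalescing: repeated writes to the same hot blocks are absorbed by the cache, and NAND only sees a block when it is evicted or flushed. The toy Python model below illustrates the idea. It is a minimal sketch, not a description of any shipping product; the class name, the LRU eviction policy, and the block-level granularity are all assumptions.

```python
from collections import OrderedDict


class WriteBackCache:
    """Toy write-back cache standing in for a PCM tier in front of NAND."""

    def __init__(self, capacity_blocks: int):
        if capacity_blocks < 1:
            raise ValueError("cache needs room for at least one block")
        self.capacity = capacity_blocks
        self.dirty = OrderedDict()  # block address -> latest data, LRU order
        self.cache_writes = 0       # writes absorbed by the cache tier
        self.nand_writes = 0        # writes that actually reach NAND

    def write(self, block: int, data: bytes) -> None:
        self.cache_writes += 1
        if block in self.dirty:
            # Overwrite in place; NAND never sees the superseded version.
            self.dirty.move_to_end(block)
        elif len(self.dirty) >= self.capacity:
            self._evict_oldest()
        self.dirty[block] = data

    def _evict_oldest(self) -> None:
        # Least recently written block goes to NAND as a single write.
        self.dirty.popitem(last=False)
        self.nand_writes += 1

    def flush(self) -> None:
        while self.dirty:
            self._evict_oldest()


if __name__ == "__main__":
    cache = WriteBackCache(capacity_blocks=4)
    for i in range(1000):
        cache.write(i % 2, b"x")  # hammer two hot blocks
    cache.flush()
    print("writes issued:", cache.cache_writes)
    print("writes reaching NAND:", cache.nand_writes)
```

In this run 1,000 writes to two hot blocks reach NAND as just two writes. The cache tier itself still takes every write, which is why the approach only makes sense when the cache medium has far higher endurance than the NAND behind it.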

PCM pricing will fall as uptake increases, which will eventually foster its use as a single pool of fast storage. The technology could be employed in a broad range of client computing devices (from mobile to desktop PCs), as well as in the enterprise space.

In the past, the development of new universal memories was hampered by the lack of optimized interfaces and protocols. The burgeoning NVDIMM ecosystem is paving the way for standardized access to new non-volatile memories, with examples including the pmem.io drivers in Linux and NVDIMM support in Windows Storage Spaces. IBM displayed PCM with its CAPI (Coherent Accelerator Processor Interface) on a POWER8-based server at the recent OpenPOWER summit, which indicates the company is also working on providing system-level support for the emerging technology.

The future holds new and promising technologies that will either complement or supplant existing memories, and HP/SanDisk also has the much-anticipated Memristor under development. The only question is which will emerge as the victor.

Paul Alcorn is a Contributing Editor for Tom's Hardware, covering Storage. Follow him on Twitter and Google+.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


The Wizzard: The line "...between crystalline and amorphous states in a chalcogenide (glass-like) alloy" should really read "between crystalline and amorphous (glass-like) states in a chalcogenide alloy" so as not to jumble definitions. Chalcogenides are sulfur, phosphorus et al (incl oxygen but usually not described as such); it does not describe the state of the matter, only its composition.

bit_user:

I really appreciate the table, but I wonder about the Energy Per Bit row. Does that represent the amount of energy needed to write a bit? Since few of these technologies are bit-addressable, did you compute the energy needed to write a block and then divide by the bits per block? And why is the range so big, for Flash? 10^1 to 10^14 ...it's very rare to see a range that big, anywhere.

And why would the units be (pj)^2? That's (pico-Joules)^2, correct?

Also, the areal density of HDDs could have been included, in the last cell. Even though it's not a chip, it would convey a sense of the relative densities.

I hadn't been following the development of memristor-based storage products, but now I will!

Anyway, nice article.