Micron Reveals GDDR6X Details: The Future of Memory, or a Proprietary DRAM?

Micron discusses graphics memory: GDDR6X is here, PAM4 & PAM8 for HBM patented

Micron Technology shared some additional details about its latest GDDR6X SGRAM used by Nvidia's GeForce RTX 30-series graphics cards at a virtual briefing last week. The company revealed that it has experimented for more than a decade with technologies enabling the new type of memory and said that GDDR6X SGRAM had not been standardized by JEDEC yet. Right now, only Nvidia uses GDDR6X memory, but Micron hopes this will change over time. Can it?

PAM4 Signaling: Explored for DRAM Since 2006

Micron's Graphics DRAM Design Center in Munich, Germany, has a history of graphics memory innovation dating back to when it belonged to Qimonda, a long-gone DRAM spinoff from Infineon. Engineers from these labs brought the industry's first GDDR5, GDDR5X, and now GDDR6X chips to mass production. In fact, Micron was the only maker of GDDR5X, and now it is the sole producer of GDDR6X.

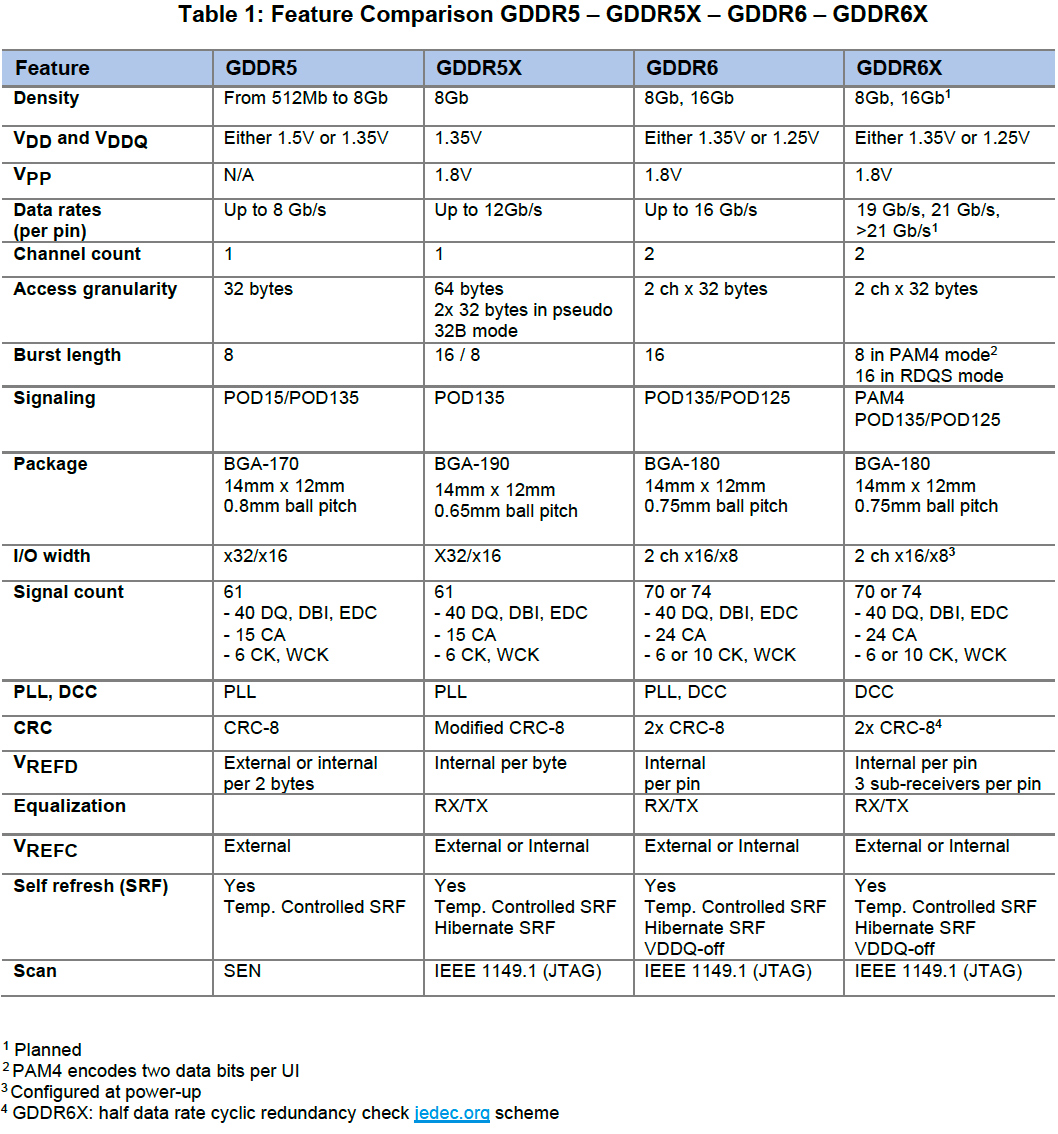

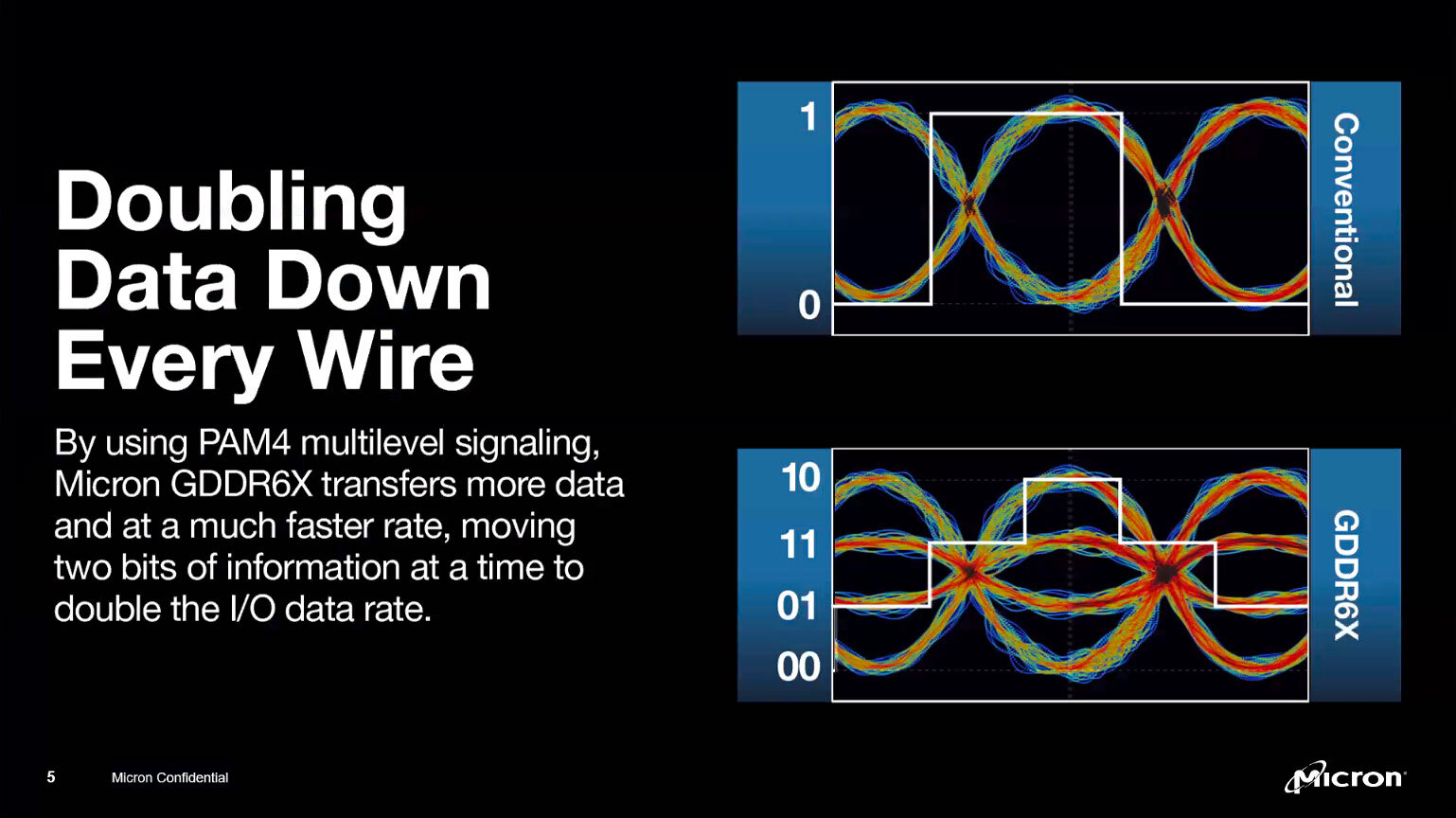

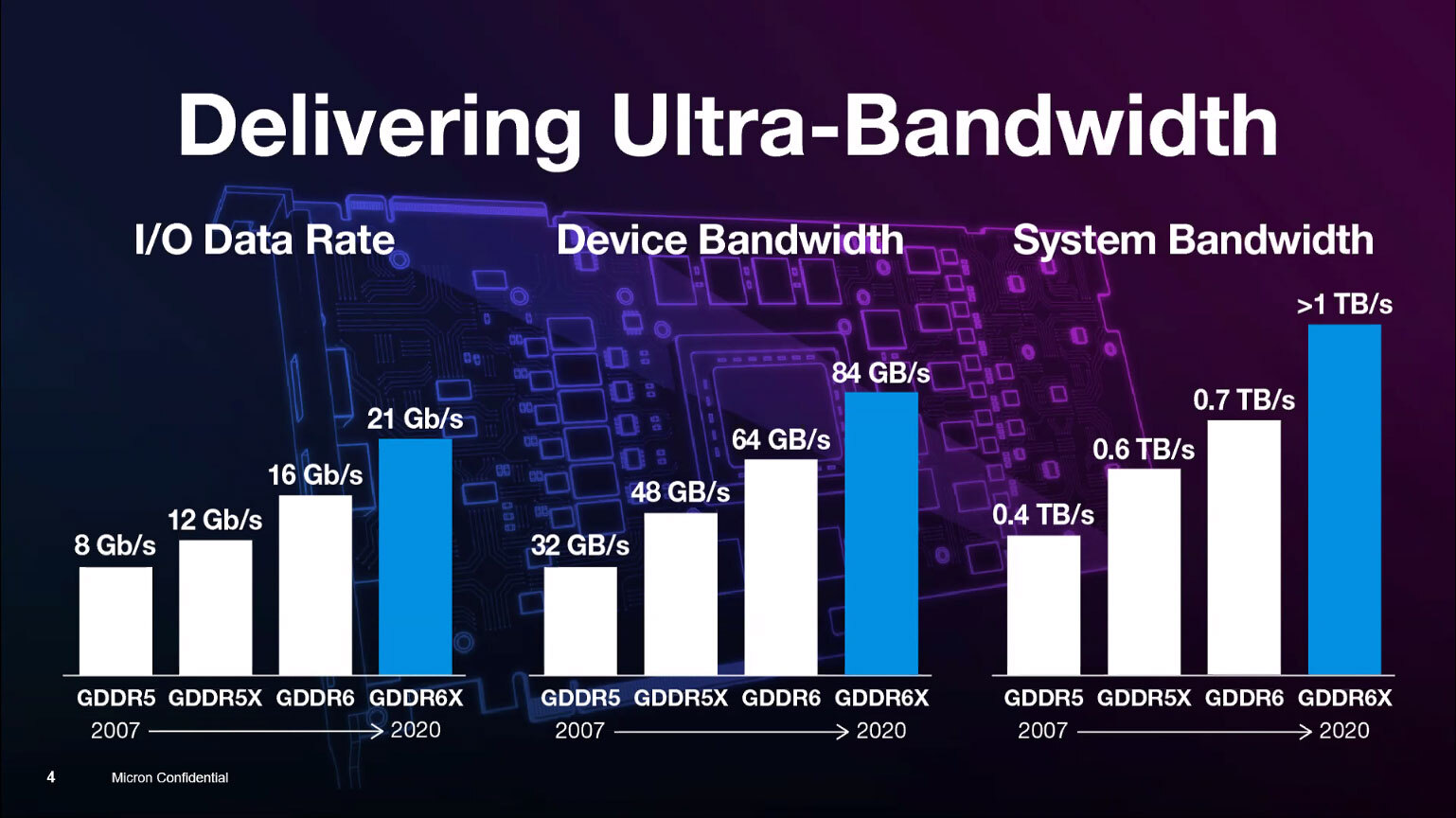

Four-level pulse amplitude modulation (PAM4) signaling is the key feature of GDDR6X memory. This technique transmits two data bits per cycle using four signal levels, thus doubling the effective bandwidth for any operating frequency vs. previous-generation SGRAM types. In addition, PAM4 opens doors to higher data transfer rates (albeit at a cost). As a result, PAM4 improves both efficiency-per-clock and speeds.
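
To make the two-bits-per-symbol idea concrete, here is a minimal Python sketch. The Gray-coded mapping of bit pairs to levels and the names `PAM4_LEVELS`, `pam4_encode`, and `nrz_encode` are illustrative assumptions; the article does not describe the encoding that GDDR6X actually uses.

```python
# Hypothetical Gray-coded mapping from bit pairs to four signal levels (0..3).
# Adjacent levels differ by one bit, a common choice for multi-level signaling.
PAM4_LEVELS = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}


def pam4_encode(bits):
    """Pack a bit sequence into PAM4 symbols, two bits per symbol."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [PAM4_LEVELS[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def nrz_encode(bits):
    """PAM2/NRZ sends one bit per symbol, so the symbols are the bits."""
    return list(bits)


bits = [1, 0, 0, 1, 1, 1, 0, 0]
print(len(nrz_encode(bits)), nrz_encode(bits))    # 8 [1, 0, 0, 1, 1, 1, 0, 0]
print(len(pam4_encode(bits)), pam4_encode(bits))  # 4 [3, 1, 2, 0]
```

The same eight bits need eight NRZ symbols but only four PAM4 symbols, which is where the doubling at a given symbol rate comes from.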

There is a slight caveat, though. GDDR6 has a burst length of 16 (BL16), meaning that each of its two 16-bit channels delivers 32 bytes per operation. GDDR6X has a burst length of 8 (BL8), but because each PAM4 symbol carries two bits, each of its 16-bit channels also delivers 32 bytes per operation. To that end, GDDR6X does not move more data per access than GDDR6; its gain comes from completing each burst in half as many symbol periods.
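
The per-access arithmetic can be checked in a few lines. This sketch reads the burst length as the number of transfers per pin (16 for GDDR6, 8 for GDDR6X); the helper name `bytes_per_access` is made up for illustration.

```python
def bytes_per_access(channel_width_bits, burst_length, bits_per_symbol):
    """Bytes that one channel moves in a single burst."""
    return channel_width_bits * burst_length * bits_per_symbol // 8


# GDDR6: 16-bit channel, BL16, one bit per symbol (NRZ).
gddr6_bytes = bytes_per_access(16, 16, 1)
# GDDR6X: 16-bit channel, BL8, two bits per symbol (PAM4).
gddr6x_bytes = bytes_per_access(16, 8, 2)

print(gddr6_bytes, gddr6x_bytes)  # 32 32
```

Both channels move 32 bytes per access, but the GDDR6X burst occupies 8 symbol periods on the wire instead of 16.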

PAM4 signaling has been used for data center networking standards, such as InfiniBand, for many years, and four-level coding itself is nothing particularly new. The main reason why PAM4 remained reserved for large data centers and supercomputers is its implementation cost when compared to traditional PAM2/NRZ modulation.

But high costs do not prohibit exploration of the technology in the lab, and this is what scientists from Micron's US branch have been doing since 2006. In the process, they have been granted 45 patents.

"At Micron, we had scientists look into how to utilize PAM4 in memory already since 2006," said Ralf Ebert, Micron's director of the graphics segment. "I intentionally said scientists because I would differentiate between developers and scientists. These were the guys really doing the groundwork for the innovation. They basically took this PAM4 technology and tried to figure out how can we use that in DRAM."

After years of PAM4 exploration, Micron felt that it was time to apply the technology to graphics memory. The evolution of GDDR from 2007 (GDDR5) to 2018 (GDDR6) was pretty straightforward in terms of architecture (albeit with a return to BL8), so introducing a new signaling scheme required Micron to bring together its scientists from the USA and engineers from Germany.

"The scientists had to work side by side with the GDDR developers, the guys that signed the chip," said Ebert. "They also collaborated very closely with the system and product engineers that understand the challenges from the system and mass manufacturing perspective."

The work on GDDR6X as we know it today started a little less than three years ago in late 2017. Typically, it takes a lot longer to bring a new type of DRAM to the market, but since this was mostly an in-house project (at least on the memory device level), the implementation of technologies that Micron already had went very quickly. There is a reason for that, though.

Developed in Close Collaboration with Nvidia

New types of memory are developed not only with certain applications in mind, but with certain customers in mind, too. Nvidia was the first company to use GDDR5X and GDDR6 (as well as GDDR2 and GDDR3 back in the early 2000s), so it's not particularly surprising that it engaged with Micron early for the GDDR6X project, too. In fact, according to Micron, Nvidia asked Micron for a discrete memory solution that could offer higher performance than GDDR6.

"Of course, […] you have to collaborate with the customer," said Ebert. "You have to identify a customer to work with and, ideally, rely on a close business and technical collaboration that has been established for many years. [We've got to make sure that] the product works in the application right from the beginning."

Nvidia had to develop an all-new memory controller and PHY for GDDR6X since PAM4 signaling changes how memory subsystems work in general. Based on the fact that no IP design houses have announced their GDDR6X offerings to date, it looks like Nvidia has designed everything in-house.

At present, Nvidia uses GDDR6X on its GeForce RTX 3080/3090 graphics cards, which are based on the GA102 GPU and aimed primarily at gamers. Eventually, the company will also offer Quadro RTX professional graphics cards featuring the same chip and GDDR6X memory. Meanwhile, Micron says that GDDR6X is also used for AI and HPC applications, both of which fall outside the focus of GeForce RTX cards (which are capped in terms of FP16 and FP32 tensor performance for AI as well as FP64 performance for HPC) and of Quadro RTX cards. Perhaps Micron means hypothetical usage, or it is implying an upcoming Nvidia Titan-series card powered by the GA102 that will offer the right performance (without capping) for AI and HPC.

Nvidia is Micron's only GDDR6X launch partner, but Micron stresses that it didn't design the new type of memory exclusively for the GPU developer. The DRAM maker plans to offer GDDR6X to other companies, too.

"We now start offering and opening this up to the industry, GDDR6X is not customer-specific," said Ebert. "We expect other customers to have interest moving forward, and then we will also engage with them."

GDDR6X with PAM4: Harder to Build, but Cheaper than HBM2

Micron says that PAM4 required it to redesign the write data capture circuitry (receiver) in its GDDR6X memory devices to sample and resolve four distinct signal levels accurately. To do so, each GDDR6X DRAM incorporates three input sub-receivers on each data I/O and data bus inversion (DQ/DBI) pin. The host can fine-tune the VREFD reference voltage levels during the write training sequence. GDDR6X's output driver also had to be redesigned, but Micron says the redesign relied on traditional methods.
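
One common way to resolve four levels with three comparators is a thermometer-style slicer, sketched below. This is a simplified model, not Micron's circuit: the normalized 0..1 voltage swing, the `THRESHOLDS` values, and the function name `pam4_slice` are all assumptions made for illustration.

```python
# Assumed nominal levels on a normalized 0..1 swing: 0, 1/3, 2/3, 1.
# Each threshold sits midway between two adjacent levels, one per sub-receiver.
THRESHOLDS = (1 / 6, 1 / 2, 5 / 6)


def pam4_slice(voltage, thresholds=THRESHOLDS):
    """Return the level index (0..3) by counting how many thresholds are exceeded."""
    return sum(voltage > t for t in thresholds)


samples = [0.02, 0.31, 0.70, 0.97]  # noisy versions of the four levels
print([pam4_slice(v) for v in samples])  # [0, 1, 2, 3]
```

In this simplified picture, adjusting the entries of `thresholds` stands in for the reference-voltage tuning that the host performs during write training.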

Micron admits that GDDR6X chips are costlier to produce than previous-generation GDDR6 devices. Furthermore, they require a very clean and stable signal, which is why the memory controller of Nvidia's GA102 GPU that powers GeForce RTX 3080/3090 cards now sits on its own power rail.

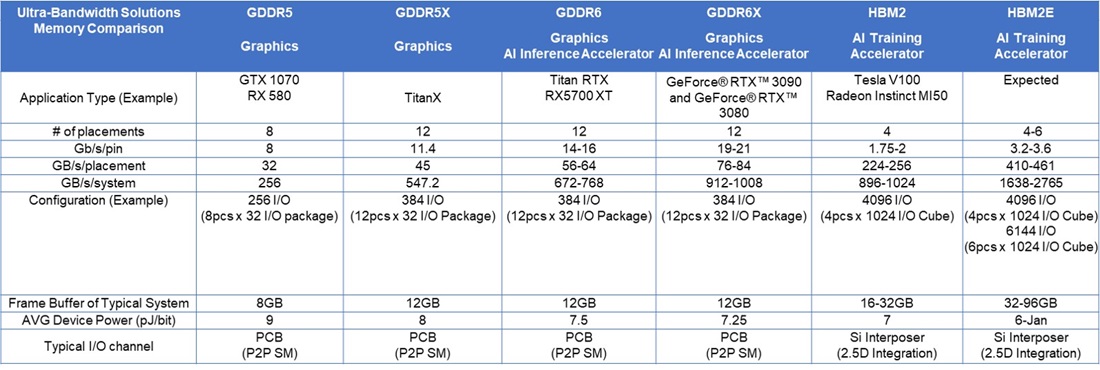

Speaking of power, it is necessary to note that because of its considerably increased performance, GDDR6X is 15% more power-efficient than GDDR6 at the device level, according to Micron. Note, however, that the per-bit figures the company quotes (7.25 pJ/bit vs. 7.5 pJ/bit) differ by only about 3%.

Overall, GDDR6X chips and their implementation are more expensive than GDDR6, but they are still considerably cheaper than HBM2-class memory, according to Micron. GDDR6X does not require stacking, and it's shipped as discrete chips that can be soldered down at a factory. The whole infrastructure for discrete DRAMs has existed for decades, and all processes are familiar and cheap. By contrast, HBM2 KGSDs (known-good stacked dies) have to be assembled at a semiconductor fab and then placed on an interposer next to a GPU in a cleanroom of another fab.

"Higher performance DRAM typically also comes at a higher cost," said Ebert. "The big advantage of GDDR6X is that we could push the bar of performance much higher while still remaining within a certain cost envelope. This is given by the fact that GDDR6X is still a discrete memory solution. The GDDR6X memory can be assembled like any other memory on a PCB by add-in-board makers in their standard environment. When you look into the different speed grades of memory, there's typically ranges in cost adders; we positioned our GDDR6X in line with typical ranges. This is not a product that comes at an extremely higher cost for the customer, mainly due to the fact because it is still a discrete memory solution."

Micron doesn't disclose the die size of its 8 Gb GDDR6X devices and doesn't compare it to its 8 Gb GDDR6 devices. The company emphasizes that this is the first type of memory to use PAM4 signaling, and the latter is a breakthrough that opens doors to various kinds of innovation.

"PAM4 was a challenge, and we believe that with this breakthrough, this can be done moving forward," said the director of graphics DRAM at Micron. "This will, we believe, change the DRAM industry. We were the first ones to have done this, and we have been working on this for quite a while."

GDDR6X to Scale Densities and Data Rates

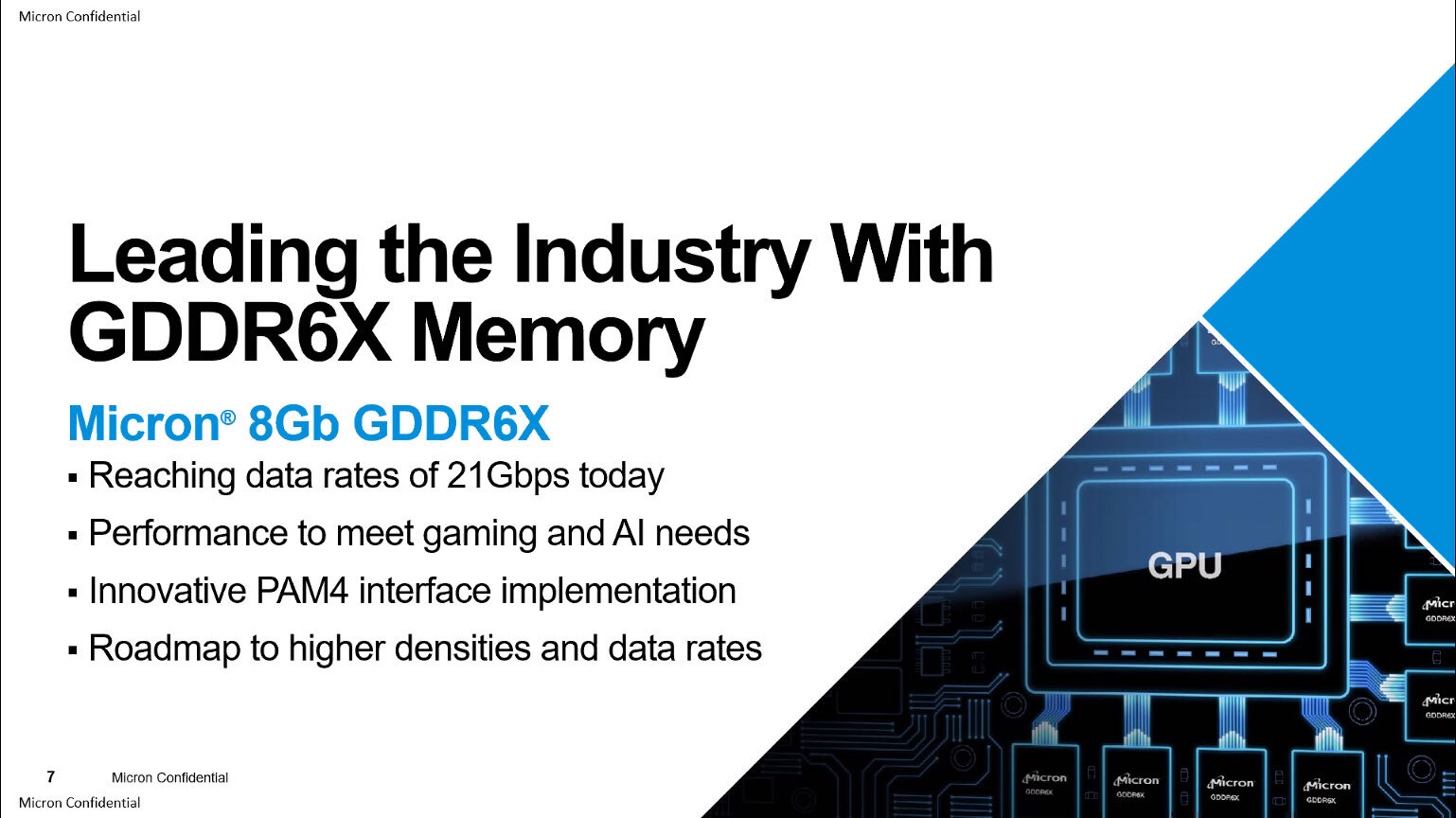

At present, Micron offers 8 Gb GDDR6X chips rated for 19 Gbps and 21 Gbps. The new memory devices are produced using the company’s proven 4th Generation 10 nm-class process technology (also known as 1α nm). The company has a roadmap to scale GDDR6X both in terms of capacity and speed bins.

Next year Micron intends to add 16 Gb densities to the lineup and offer faster chips over time, too. At present, Micron is the only producer of GDDR6X, and Nvidia is the only customer, so GDDR6X's evolution depends on Nvidia's demands and Micron's ability to mass-produce. The key message here is that GDDR6X is set to scale in terms of performance beyond 21 Gbps.
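
For a sense of scale, the speed bins translate into per-device bandwidth as sketched below. The figure of 32 data pins per device (two 16-bit channels) is an assumption for this calculation, and `device_bandwidth_gbytes` is a hypothetical helper name.

```python
def device_bandwidth_gbytes(data_rate_gbps, data_pins=32):
    """Peak bandwidth of one device in GB/s: per-pin rate times pins, in bytes."""
    return data_rate_gbps * data_pins / 8


for rate in (19, 21):
    print(rate, device_bandwidth_gbytes(rate))
# 19 76.0
# 21 84.0
```

Under that assumption, each step up in per-pin data rate adds 4 GB/s per Gbps to a single device's peak bandwidth.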

GDDR6X: Not a JEDEC-Standard, But Not Proprietary

To finalize GDDR6X as soon as possible and make it work with Nvidia's Ampere GPUs, the two companies worked nearly in stealth mode. The two companies have never submitted the specification to JEDEC for standardization, and therefore GDDR6X is a proprietary kind of memory only available from Micron at this point.

"At this point in time, it has not been submitted to JEDEC for standardization," said Ebert.

GDDR5X was largely developed by Micron with little (if any) input from the rest of the industry. JEDEC formally published the standard before Micron commenced mass production of GDDR5X and made it available to members of the organization. However, nobody except Nvidia used GDDR5X, and nobody except Micron produced this type of memory.

GDDR6X Could Be Used Beyond Graphics, Maybe

Traditionally, GDDR types of memory have been used almost solely for graphics cards and game consoles. With GDDR6, Micron and its industry peers started to promote graphics DRAM for other applications that require high bandwidth. Among the potential use-cases, they targeted automotive, networking, and FPGA applications. Micron hopes that GDDR6X will be able to address non-GPU markets, but it doesn't make any real promises here.

GPUs today are used widely for various AI applications, so naturally, training and inference applications were mentioned at Micron's briefing when the company talked about GDDR6X for non-graphics verticals. Meanwhile, since Nvidia targets its Titan-series graphics cards at gamers, AI enthusiasts, and various prosumers, Micron's GDDR6X will technically address these markets if Nvidia launches a Titan Ampere model.

To address emerging markets, Micron needs to offer not only the memory itself, but memory controller IP, PHY IP, and verification IP. These types of things are provided by IP design houses like Avery, Cadence, Rambus, and Synopsys. Since GDDR6X's journey has only just begun, IP houses will have to catch up, assuming that they see potential demand for GDDR6X by the industry. That's not exactly guaranteed, especially considering that GDDR6X is not an industry standard supported by JEDEC.

Micron made an interesting remark that CPUs could use GDDR6X:

"Historically, nothing would prevent the industry from GDDR DRAM with CPUs," said Ebert. "Same in this case. But this is a decision that has to be done by CPU companies."

The Future of Graphics Memory: PAM4 Is Here to Stay, Even for HBM

For Micron, GDDR6X is not only a highly competitive product, but a culmination of its work to bring PAM4 signaling to DRAM. While this type of coding will not be used for DDR5 SDRAM, Micron believes it is the future of memory in the longer run.

"So, GDDR6X is where we introduced PAM4, and we definitely can see that moving forward," said Micron's director of graphics memory. "Potentially, PAM4 can be used in other memory standards. It is possible or likely that this type of technology will get used by corporations with CPUs or our other processors."

PAM4 will indeed be used by the industry far more widely than it is used today. PCIe 6.0, which is due in 2021, uses PAM4 signaling to extract more efficiency and higher data rates. Keeping PCIe's broad adoption in mind, CPU and ASIC companies are bound to support both PCIe 6.0 and PAM4 eventually. As soon as the industry learns how to work with four-level pulse amplitude modulation with PCIe 6.0, it will certainly apply it elsewhere.

Micron said that it first implemented PAM4 into an LPDDR test chip to experiment with the technology. Moreover, a patent discovered while we were preparing this story says that Micron patented stacked HBM-class memory with PAM4 and PAM8 signaling three years ago.

HBM-type memory has yet to adopt loads of things that are used by discrete DRAM devices (QDR, BL8/BL16, etc.), so it is hard to predict when it could adopt new signaling. But if currently available HBM2E 3.6 Gbps chips adopted four-level pulse amplitude modulation, this would double per-device bandwidth to 922 GB/s. That means a six-module 6144-bit DRAM subsystem would provide a whopping 5.5 TB/s of bandwidth. This is pure speculation at this point, though.
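
The speculative figures above follow from simple arithmetic, reproduced here as a sketch. It assumes a 1024-bit interface per stack (6144 bits across six stacks) and that the symbol rate stays at 3.6 G symbols/s while PAM4 doubles the bits per symbol; `stack_bandwidth_gbytes` is a hypothetical helper.

```python
def stack_bandwidth_gbytes(symbol_rate_g, bus_width_bits=1024, bits_per_symbol=1):
    """Peak bandwidth of one stack in GB/s."""
    return symbol_rate_g * bits_per_symbol * bus_width_bits / 8


hbm2e_nrz = stack_bandwidth_gbytes(3.6)                      # roughly 460.8 GB/s
hbm2e_pam4 = stack_bandwidth_gbytes(3.6, bits_per_symbol=2)  # roughly 921.6 GB/s
six_stacks = 6 * hbm2e_pam4                                  # roughly 5529.6 GB/s

print(round(hbm2e_pam4), round(six_stacks / 1000, 1))  # 922 5.5
```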

Summary

Micron's GDDR6X is the industry's first mass-produced memory that uses four-level pulse amplitude modulation signaling, or PAM4. The new type of coding transmits two data bits per cycle using four signal levels (vs one bit in case of PAM2) and opens doors to higher frequencies. Having experimented with PAM4 since 2006, Micron sees PAM4 as the evolution not only for GDDR, but for DRAM at large. While DDR5 does not use PAM4, Micron has already patented PAM4 and even PAM8-enabled HBM memory.

The DRAM maker admits that GDDR6X is harder (and probably costlier) to build and implement when compared to GDDR6. Yet, even in its infancy, GDDR6X is cheaper than mature HBM2E because we are dealing with discrete memory chips here. Meanwhile, since GDDR6X returns to a burst length of 8 (down from 16 in the case of GDDR6), it is not faster than its predecessor, GDDR6, at the same per-pin data rate.

The biggest caveat of GDDR6X at this point is that it was developed solely by Micron with some input from Nvidia. Micron has not submitted the standard to JEDEC, and it is unclear whether GDDR6X will ever become an industry standard. Micron hopes that GDDR6X will be used for non-graphics applications, but it will be hard to promote the new type of memory without support from other companies.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

nofanneeded: PCIe 6.0 in 2021 ? are you kidding meeee ?

If things keep going this fast , then I think GDDR6 should replace DDR ... and DDR should be history just like SDR is history today .

hotaru251 (replying to nofanneeded, who said: "PCIe 6.0 in 2021 ? are you kidding meeee ?"):

pcie 4 was MEGA delayed.

should of came out yrs ago.

pcie 5 is already near completed iirc.

MHBGT: The article mentions on more than one occasion that GDDR6X, even with PAM4, is not faster than GDDR6 because of the BL8/16 difference, in fact it is the same.

Then how does GDDR6X achieve the higher bandwidth?