China's Moore Threads MTT S80 GPU Lags Behind GT 1030 in Gaming Showdown

China's GPU trails badly, despite an abundance of power and RAM.

China's Moore Threads MTT S80 graphics card has been tested in a suite of games by TechTuber BullsLab Jay. They say these GPUs only come up for sale occasionally, in small batches, so they were fortunate to be able to grab one.

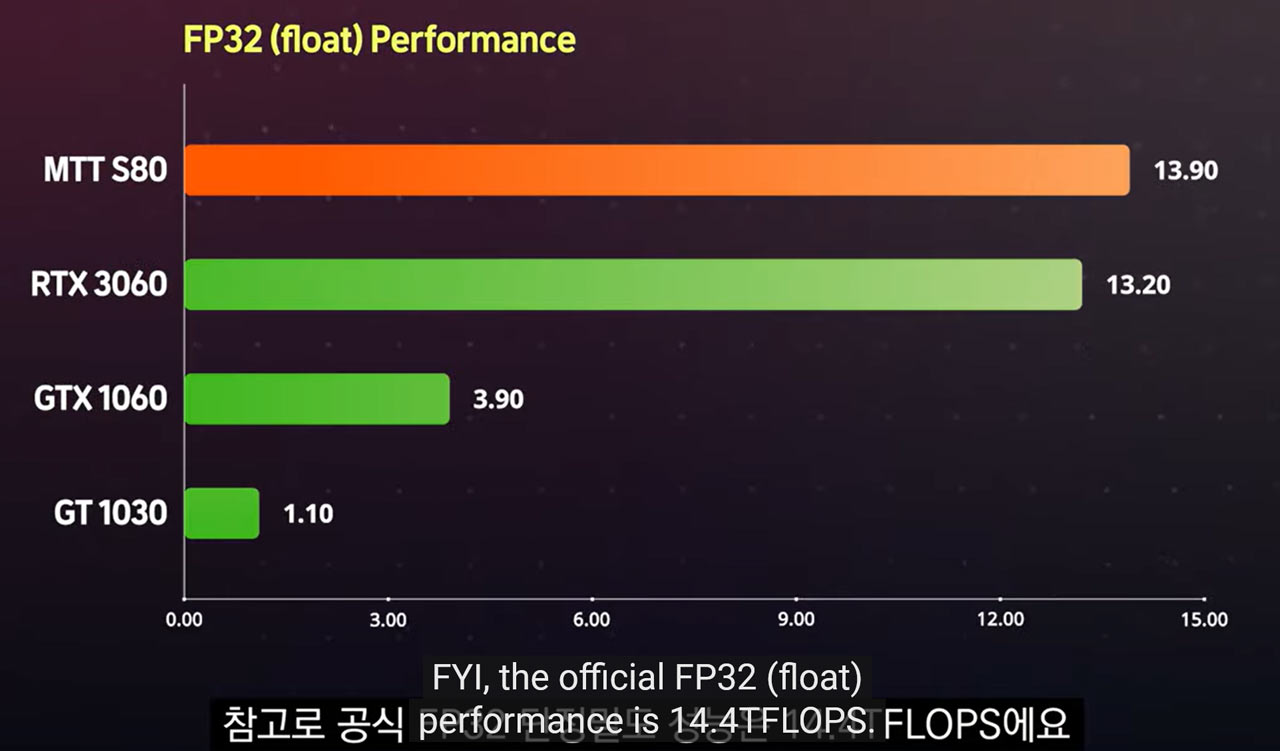

In brief, the 12nm, 4096-core GPU with 16GB of GDDR6 and a TGP of 250W is disappointing in gaming, as it was consistently outgunned by the GT 1030 2GB with a 30W TGP. Moreover, the card seemed to be restricted to DX9 gaming at the time of review (it is advertised as DX11-capable), and its AV1 codec acceleration was also lacking. Last but not least, BullsLab Jay's investigation shows that the MTT S80 relies on a PowerVR-based GPU architecture, so its mysterious Chunxiao GPU with MUSA cores has now been unmasked.

In the BullsLab Jay video, you can see the Moore Threads graphics card unboxed, tested, and disassembled. If you open the link you can watch it with English closed captions, but we have embedded the benchmarks-only video from the TechTuber's ENG channel below, so you can check out some of the benchmarking action without fiddling with caption controls.

The 250W MTT S80 has a bulky triple-fan cooler, but once it is removed you can see the PCB is only about two fans in length. Contrast this design with the 30W GT 1030, which can run with a passive heatsink. The other card in the tests, a 120W GTX 1060, offered stratospheric gaming performance compared to the MTT S80, yet it is usually manufactured in single- or dual-fan versions.

Getting to the benchmarks, the titles under comparative tests were limited to DX9 games, as that is all that worked with the Moore Threads review card and its driver. The architecture is claimed to support DX11, so it looks like some driver updates will be required. In the video's summary charts, the MTT S80 is often half as fast as the aging low-power GT 1030. Only an outlier or two indicates the MTT S80 has some potential.
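As a rough back-of-the-envelope illustration, the sketch below relates the "half as fast" observation to the two cards' rated power. It assumes a flat 0.5x frame-rate ratio (the video's charts vary from game to game) and uses rated TGP rather than measured power draw; the function name is hypothetical.

```python
def perf_per_watt_ratio(rel_perf: float, tgp_a_w: float, tgp_b_w: float) -> float:
    """Performance per TGP watt of card A relative to card B.

    rel_perf is card A's frame rate divided by card B's frame rate.
    """
    return rel_perf / (tgp_a_w / tgp_b_w)


# Assumption: MTT S80 runs at roughly 0.5x the GT 1030's frame rate.
# TGP figures (250 W and 30 W) are the rated values quoted in the article.
ratio = perf_per_watt_ratio(rel_perf=0.5, tgp_a_w=250, tgp_b_w=30)
print(f"MTT S80 perf per TGP watt vs GT 1030: {ratio:.2f}x")
```

Because TGP is a rating and not a measurement, this should be read only as an order-of-magnitude indication of the efficiency gap.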

Speaking of unfulfilled potential, the touted AV1 video codec support claims are also ringing hollow right now. Several tests showed that AV1 decoding was handled by the CPU, although the card was seen to offload VP9 video processing to the GPU in YouTube testing.

BullsLab Jay dug through old Moore Threads China presentations and concluded that the MTT S80 uses Imagination Technologies' PowerVR architecture. Moore Threads has not been very upfront about this, and some had hoped that the company was taking a different tack from the Innosilicon Fantasy (Fenghua) line, which also uses PowerVR IP. (Innosilicon, again, wasn't very upfront in letting people know about the underlying architecture.)


The Moore Threads MTT S80 graphics card sells for the equivalent of approximately $430 in China, bundled with an Asus TUF B660M motherboard. If we take away the cost of the board, the graphics card is roughly $260. Perhaps it will start to show more of its FP32 potential as drivers mature and these PowerVR cards for PCs will become more effective challengers.

Right now, with the demonstrably faster GT 1030 selling for $80 or less, the MTT S80 isn't exactly making a good case for itself at more than three times that price. It's possible that performance will improve substantially with driver updates, just as we saw with Intel over the past several months. But of course, that will depend on how much time and effort the company plans to spend on driver improvements, as well as the limitations of the architecture.
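The pricing comparison can be checked with a few lines of arithmetic. This is a minimal sketch using only the approximate dollar figures quoted in the article; the variable names are illustrative, and the implied motherboard value is simply the difference between the bundle price and the article's estimate for the card.

```python
# Approximate prices in USD, as quoted in the article.
BUNDLE_PRICE_USD = 430   # MTT S80 bundled with an Asus TUF B660M motherboard
CARD_PRICE_USD = 260     # article's estimate for the graphics card alone
GT1030_PRICE_USD = 80    # article's upper figure for a GT 1030

# Value the article implicitly assigns to the bundled motherboard.
implied_board_usd = BUNDLE_PRICE_USD - CARD_PRICE_USD

# How many times more the MTT S80 costs than a GT 1030.
price_ratio = CARD_PRICE_USD / GT1030_PRICE_USD

print(f"Implied motherboard value: ${implied_board_usd}")
print(f"MTT S80 vs GT 1030 price: {price_ratio:.2f}x")
```

At these figures the card costs 3.25 times as much as the GT 1030, which is consistent with the "more than three times" claim.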

Mark Tyson is a news editor at Tom's Hardware. He enjoys covering the full breadth of PC tech; from business and semiconductor design to products approaching the edge of reason.

-

Metal Messiah. The power consumption is really enormous. Luckily though, as evident from the review the card did run cool at 44C temps but that's the only good thing about it so far.

There were also instances where the card did not even run some games due to API and software compatibility issues. Given all these issues, the MTT S80 graphics card may not be that good of a side option even for the Chinese/ASIAN market.

https://cdn.wccftech.com/wp-content/uploads/2023/02/Moores-Threads-MTT-S80-Chinese-Gaming-Graphics-Card-Performance-Games-_3.png

As interesting as this may sound, the card was able to run the CRYSIS game. D3D9 version only though. lol

https://twitter.com/Loeschzwerg_3DC/status/1621573247383773184 -

bit_user I'd love to hear some more details on why they're believed to use Imagination IP, in these GPUs. I find it very surprising they perform so poorly, if true.

I know it's not trivial to scale up something like a GPU, but given how much success Apple had with Imagination, I'd have thought it would work much better.

BTW, did anyone profile the CPU, to see if the performance bottlenecks seem to be in the driver code, itself? I just wonder if maybe Imagination doesn't have Windows drivers, and maybe MT simply took their Linux/OpenGL driver and tried to reuse bits and pieces of it in the Windows/D3D driver framework. Where there are mismatches, it could involve a lot of overhead that I think would mainly show up as higher host CPU load. -

Metal Messiah. said: The power consumption is really enormous. Luckily though, as evident from the review the card did run cool at 44C temps but that's the only good thing about it so far.

There were also instances where the card did not even run some games due to API and software compatibility issues. Given all these issues, the MTT S80 graphics card may not be that good of a side option even for the Chinese/ASIAN market.

https://cdn.wccftech.com/wp-content/uploads/2023/02/Moores-Threads-MTT-S80-Chinese-Gaming-Graphics-Card-Performance-Games-_3.png

As interesting as this may sound, the card was able to run the CRYSIS game. D3D9 version only though. lol

https://twitter.com/Loeschzwerg_3DC/status/1621573247383773184

Nyce. It's only gonna get better -

kal326 I'm pretty sure the last time I had a PowerVR based desktop card was a PCI one in the mid to late 90s, but hard to recall. It might have been a S3 Virge at that point as well. -

bit_user

kal326 said: I'm pretty sure the last time I had a PowerVR based desktop card was a PCI one in the mid to late 90s, but hard to recall.

They did start out making PC graphics card chipsets! I think the last ones shipped back in 2000. They also made the GPU in the Sega Dreamcast.

https://en.wikipedia.org/wiki/PowerVR

They later flirted with selling hardware raytracing accelerator cards, but I'm not sure whatever came of these:

https://www.semiaccurate.com/2013/03/12/imagination-shows-off-rogue-and-caustic-silicon/ -

digitalgriffin Making graphics cards is hard mkaaaaay.

Had a feeling they were borrowing old S3 PowerVR ip -

digitalgriffin

kal326 said: I'm pretty sure the last time I had a PowerVR based desktop card was a PCI one in the mid to late 90s, but hard to recall. It might have been a S3 Virge at that point as well.

Yes they were. It was the first tile renderer. They were bought by VIA And they incorporated tech by bitboys from Finland. Remember them?

PowerVR graphics are still used as the 3d chips for a number of smart phones due to its power efficiency. -

bit_user

digitalgriffin said: Had a feeling they were borrowing old S3 PowerVR ip

digitalgriffin said: Yes they were. It was the first tile renderer. They were bought by VIA And they incorporated tech by bitboys from Finland. Remember them?

Woah, let's get a few things straight:

S3's graphics business was bought by VIA, and then later sold on to HTC

PowerVR is by Imagination Technologies, which is UK-based, but now owned by a Chinese-backed private equity group.

BitBoys, OY was acquired by ATI and then sold on to Qualcomm, forming the basis of its Adreno GPUs. Interestingly, they still have offices in the same building as part of AMD's graphics group.

None of those companies you mentioned collaborated in any way, as far as I can tell.

digitalgriffin said: PowerVR graphics are still used as the 3d chips for a number of smart phones due to its power efficiency.

Uh, not much since Apple dumped them and MediaTek switched to using ARM's Mali GPUs.

Maybe some off-brand phone SoCs use them? They've definitely fallen on hard times since Apple dumped them, which is why they were delisted and bought by that private equity firm. I think they have largely been kept afloat by IP licensing fees that Apple is paying them.

They're trying to reinvent themselves as a one-stop IP shop for RISC-V based SoCs, analogous to what ARM has become. So, you can go to them and license all of the blocks you need for your own SoC: Graphics, CPU, memory controller, interconnect, etc. -

kyzarvs As we all sit at our screens viewing data that has certainly passed through Chinese-designed kit at some point between where it's stored and us - I wouldn't mock this early effort, in a few years they could be leading the market. -

digitalgriffin

bit_user said: Woah, let's get a few things straight:

S3's graphics business was bought by VIA, and then later sold on to HTC

PowerVR is by Imagination Technologies, which is UK-based, but now owned by a Chinese-backed private equity group.

BitBoys, OY was acquired by ATI and then sold on to Qualcomm, forming the basis of its Adreno GPUs. Interestingly, they still have offices in the same building as part of AMD's graphics group.

None of those companies you mentioned collaborated in any way, as far as I can tell.

Uh, not much since Apple dumped them and MediaTek switched to using ARM's Mali GPUs.

Maybe some off-brand phone SoCs use them? They've definitely fallen on hard times since Apple dumped them, which is why they were delisted and bought by that private equity firm. I think they have largely been kept afloat by IP licensing fees that Apple is paying them.

They're trying to reinvent themselves as a one-stop IP shop for RISC-V based SoCs, analogous to what ARM has become. So, you can go to them and license all of the blocks you need for your own SoC: Graphics, CPU, memory controller, interconnect, etc.

I thought for sure via acquired them and merged with the s3 portfolio after apple dumped them. But I guess I was wrong.

Bitboys used a similar tile approach so I thought it was incorporated when they were sold. Botched that one up as well I guess.

Thanks for the correction.