Nvidia's New HGX A100 Supercharges AI

Chip giant announces new additions to HGX HPC platform.

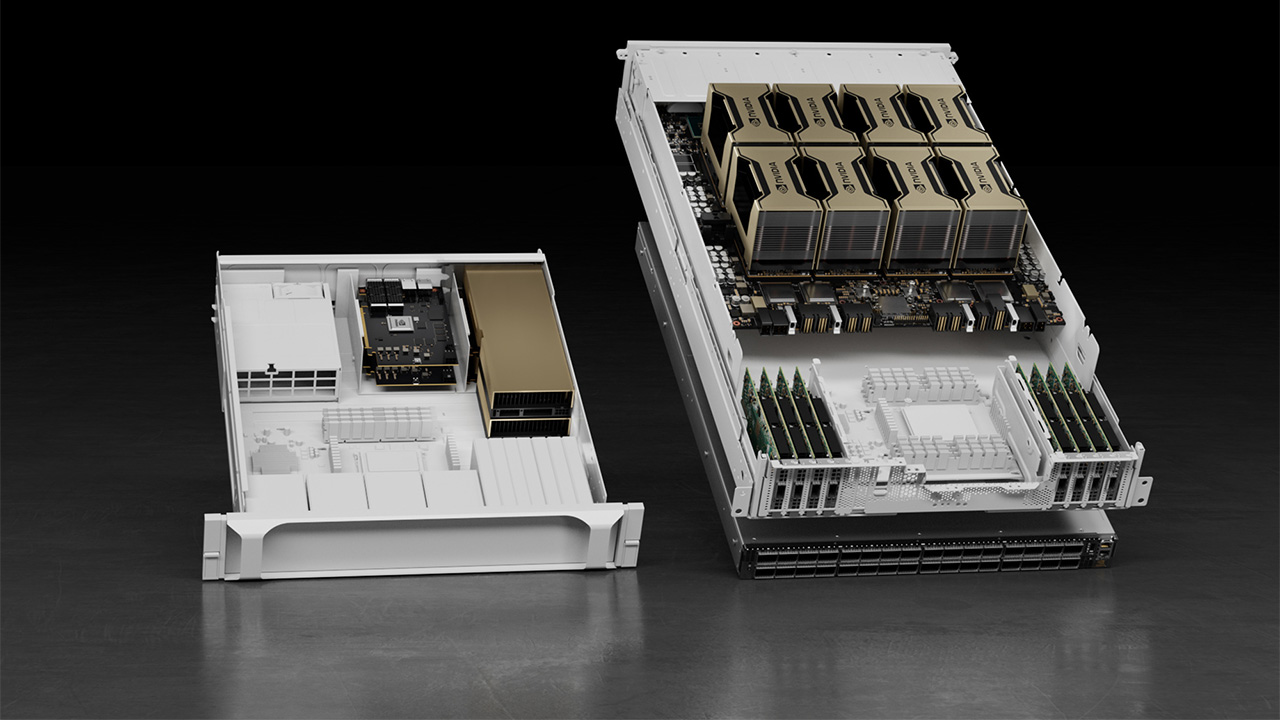

Nvidia’s powerful A100 GPUs will be part of its HGX AI supercomputing platform, the Californian graphics-crunching colossus announced today. Other technologies joining the platform include 80GB memory modules, 400G InfiniBand networking, and Magnum IO GPUDirect Storage software.

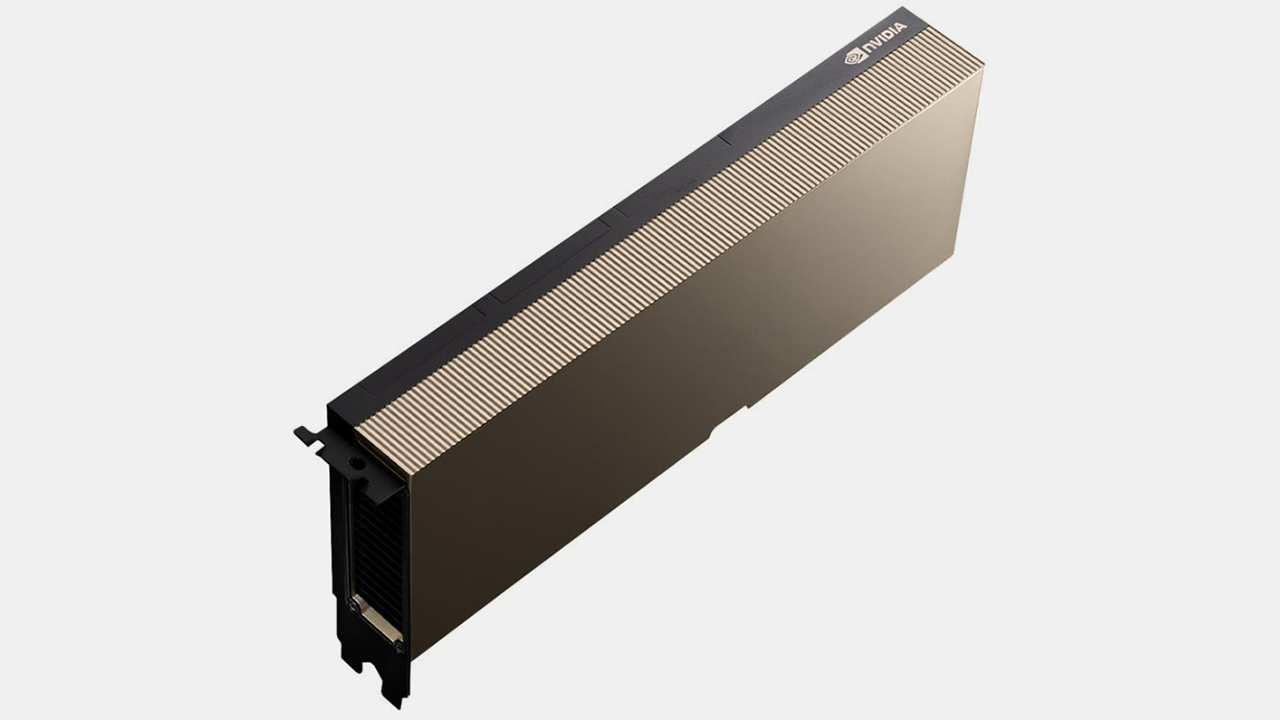

The A100, which recently flexed its Ampere-powered muscles to overtake the Titan V as the most powerful GPU in the OctaneBench benchmark, comes in two forms: one with 40GB of HBM2E and the other with 80GB. The larger model boasts the world’s highest memory bandwidth, transferring over two terabytes per second. Built on a 7nm process, the chip packs 54.2 billion transistors arranged into 6,912 shading units, 432 texture mapping units, 160 ROPs and 432 tensor cores.

Tying these GPUs together within the massive racks of supercomputers are technologies such as Magnum IO GPUDirect Storage, which lowers latency by allowing direct access between GPU memory and storage. InfiniBand networking at up to 400Gb/s per port gives an aggregate bandwidth of up to 1.64 Pb/s per 2,048-port switch, with the ability to connect more than a million nodes.
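For readers who want to check the switch figure, here is a quick back-of-the-envelope sketch. It uses only the port count and port speed quoted above, and it assumes the 1.64 Pb/s number counts traffic in both directions at once; that assumption is ours, not something stated in the announcement as reported here.

```python
# Rough sanity check of the quoted switch bandwidth.
PORTS = 2048            # ports per switch, as quoted in the article
PORT_SPEED_GBPS = 400   # Gb/s per port, as quoted in the article

# Aggregate bandwidth in one direction, converted from Gb/s to Tb/s.
one_way_tbps = PORTS * PORT_SPEED_GBPS / 1_000

# Assumption: the headline figure counts both directions,
# so double the one-way number and convert Tb/s to Pb/s.
both_ways_pbps = 2 * one_way_tbps / 1_000

print(f"one direction:   {one_way_tbps:.1f} Tb/s")
print(f"both directions: {both_ways_pbps:.2f} Pb/s")
```

Under that assumption the arithmetic lines up with the quoted 1.64 Pb/s; counted in one direction only, the same switch would come out at roughly half that.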

“The HPC revolution started in academia and is rapidly extending across a broad range of industries,” said Jensen Huang, founder and CEO of Nvidia. “Key dynamics are driving super-exponential, super-Moore’s law advances that have made HPC a useful tool for industries. Nvidia’s HGX platform gives researchers unparalleled high performance computing acceleration to tackle the toughest problems industries face.”

Not exactly designed for home use, the HGX platform is used by companies such as General Electric, which runs computational fluid dynamics simulations to design new large gas turbines and jet engines. It will also be used to build the next-generation supercomputer at the University of Edinburgh, optimized for computational particle physics and for analyzing data from massive experiments such as the Large Hadron Collider.

Ian Evenden is a UK-based news writer for Tom’s Hardware US. He’ll write about anything, but stories about Raspberry Pi and DIY robots seem to find their way to him.