Wintel VR: Intel, Microsoft, And Their Two-Pronged Plan To Democratize VR, AR And MR

Suddenly, Intel has been quite busy on the VR/AR/MR front. At IDF 2016, the company announced and outlined multiple facets of its VR plans, including its Project Alloy VR HMD and new RealSense 400 Series camera, as well as plans to develop specifications for mainstream HMDs--all of which involve partnering with Microsoft. Intel quietly demoed a couple of intriguing proofs of concept, and now it's made a couple of key acquisitions to bolster its deep learning IP, which pertains directly to its VR/AR/MR vision.

But those are the ingredients, and there’s a larger soup that Intel has cooking. We’ve dug deep to suss out what we do know, what we think we know, and what we suspect. Two of the most important components here are the two types of HMDs that Intel is developing, and Microsoft’s role in both.

Project Alloy VR HMD

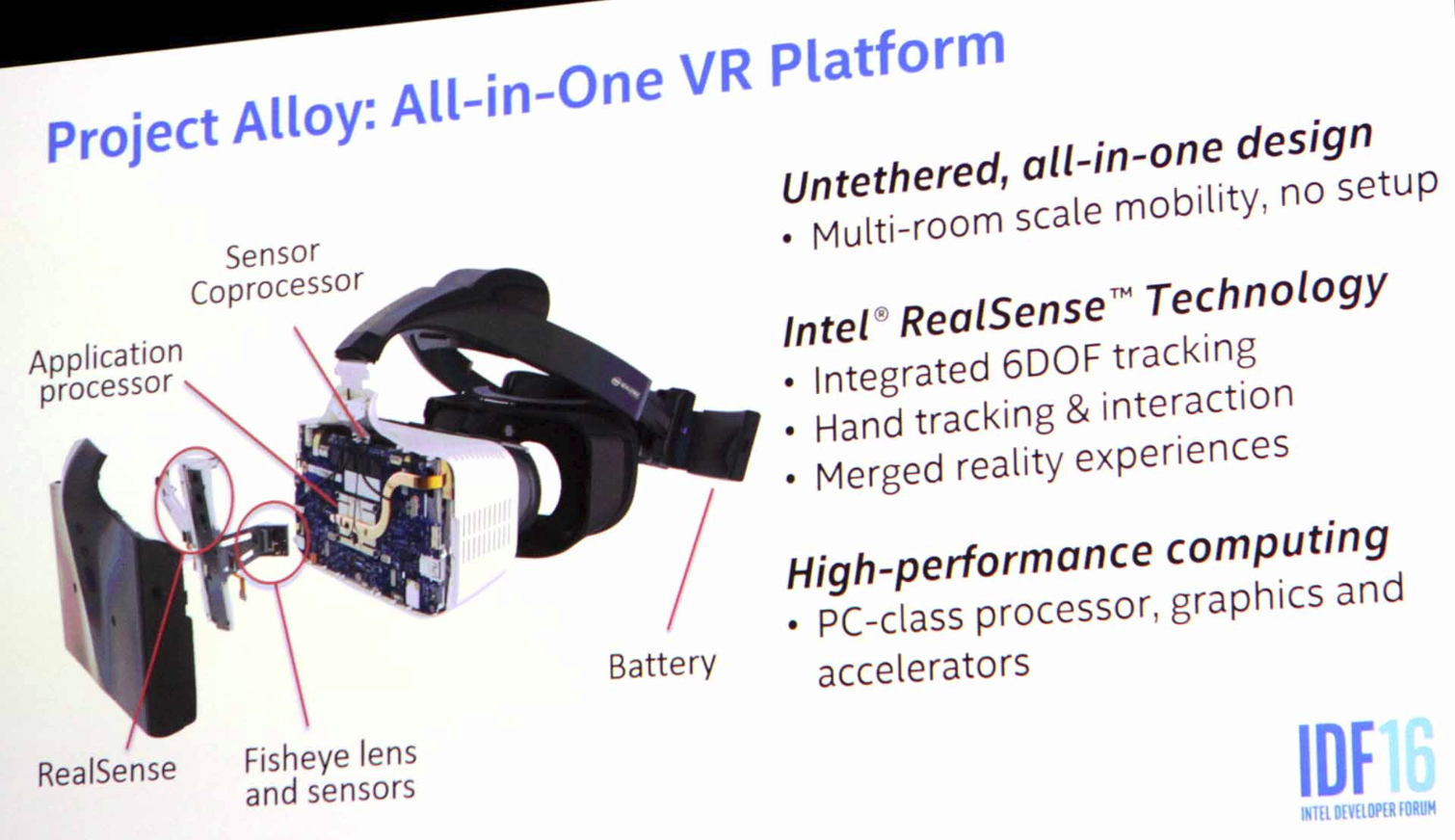

To be clear, Intel did not demo its Project Alloy VR HMD at IDF. Yes, there were onstage demos at the keynote, but the HMD wasn’t even using the newer version of the RealSense camera that it will eventually employ, and there were no further opportunities to see Alloy in action.

Project Alloy, in whatever stage of completion it’s in, is different from Rift/Vive and HoloLens. Although I chafe at the seemingly irresistible need companies have to invent new terminology for their technology, I see what Intel was going for when it called Project Alloy “Merged Reality.” Although the HMD is fully occluded like the Rift and Vive, there’s a RealSense camera mounted on the front that scans the real world in (essentially) real time and reproduces that imagery inside the HMD. In that sense, you can “see” the real world. It’s effectively a passthrough system, but whereas the HoloLens has clear lenses that let you see the real world, Alloy reproduces a facsimile of it for you.

A Brief Rant On Nomenclature: XR

So there’s VR (virtual reality), AR (augmented reality), MR (“mixed reality,” which is what Microsoft calls HoloLens) and MR (“merged reality,” which is what Intel calls Project Alloy). But whenever I hear someone from Microsoft explain why they call HoloLens “mixed reality,” it sounds like the dictionary definition of augmented reality. Intel has the best excuse for calling Project Alloy “merged reality” (as described in the main article copy), but in any case, we have a nomenclature problem on our hands. We’ve taken to calling the whole ball of wax “XR” as a shorthand for any and all of the above technologies, as in “[x] reality.” The “x” also winks at the notion of mixed or merged realities in a symbolic way.

Further, Project Alloy is a completely self-contained system. That is, there is a PC crammed inside the HMD, and there are no external wires. We don’t have full specifications--or really any specifications--but we know that it will run on an Intel Core i5 or i7 Skylake CPU and that there will not be a discrete GPU. It also has what a presentation slide referred to as a “sensor coprocessor,” which we believe is just another term for a vision processor (VPU). We can’t be certain at this point, but we believe that, in Alloy, this chip is (or will be) made by Movidius.

It’s highly unlikely that Alloy would employ Microsoft’s HPU (a proprietary term for that specific vision processor), because that chip was custom-made for the HoloLens, and further, an Intel-made device would likely sport a VPU from its new acquisition, Movidius. Whether Alloy might have a Movidius Myriad 2 VPU or a new, unannounced chip is anyone’s guess at this point.

It will rely on Windows 10 for its OS, so it’s an actual PC, and what’s more, it will make use of the Windows Holographic shell. Clearly, then, Microsoft and Intel are working closely together on Project Alloy.

On this point Intel is being a little cagey, but it did announce that Windows Holographic will run on “mainstream” PCs when it’s available in 2017. (As a teaser, Intel demoed a merged reality experience at 90 fps on a NUC.) But, in any case, the PC that comprises the business end of Project Alloy is decidedly “mainstream,” and doing the arithmetic, you can imagine that Project Alloy will be able to run any of the experiences that a HoloLens can. (Remember, the HoloLens is also a self-contained system, but it has a less powerful Cherry Trail SoC.)

To understand further how Project Alloy compares to other HMDs out there, here’s a simple but handy chart:

| | Rift/Vive/OSVR | Sony PSVR | Microsoft HoloLens | Intel Project Alloy | Mobile VR (Gear VR, Daydream) |
|---|---|---|---|---|---|
| Type | VR | VR | AR/“Mixed Reality” | VR/AR/“Merged Reality” | VR |
| System | Separate PC | PlayStation 4 | (Self-contained) | (Self-contained) | Separate smartphone |
| Tethered | Yes | Yes | No | No | No |
| Occluded | Yes | Yes | No | Yes | Yes |
| Passthrough | No | No | Yes | Yes | No |

This is where the first big head-scratcher lands: If Project Alloy is a fully occluded headset, how and why is it using Holographic technology? The whole idea behind holograms is that they’re placed within the real world (i.e., augmented reality). Further, the display technology HoloLens uses has more to do with projection than anything else, and that would seem to be completely at odds with a fully occluded HMD like Project Alloy.

On the other hand, putting low-GPU-intensive holograms into an HMD’s display should be pretty easy, provided the HMD has a virtual space in which to put them. On top of that, Intel and Microsoft have been clear that their collaborations will allow both 2D and 3D applications within a given XR environment, including those that already exist within Windows. (More on that later in this article.)

Further, as I mentioned, this is not merely an occluded HMD. It gets effective passthrough to the real world by way of the RealSense camera. And that’s where Project Alloy gets really (really, really) interesting.

RealSense 400 Series And Deep Learning

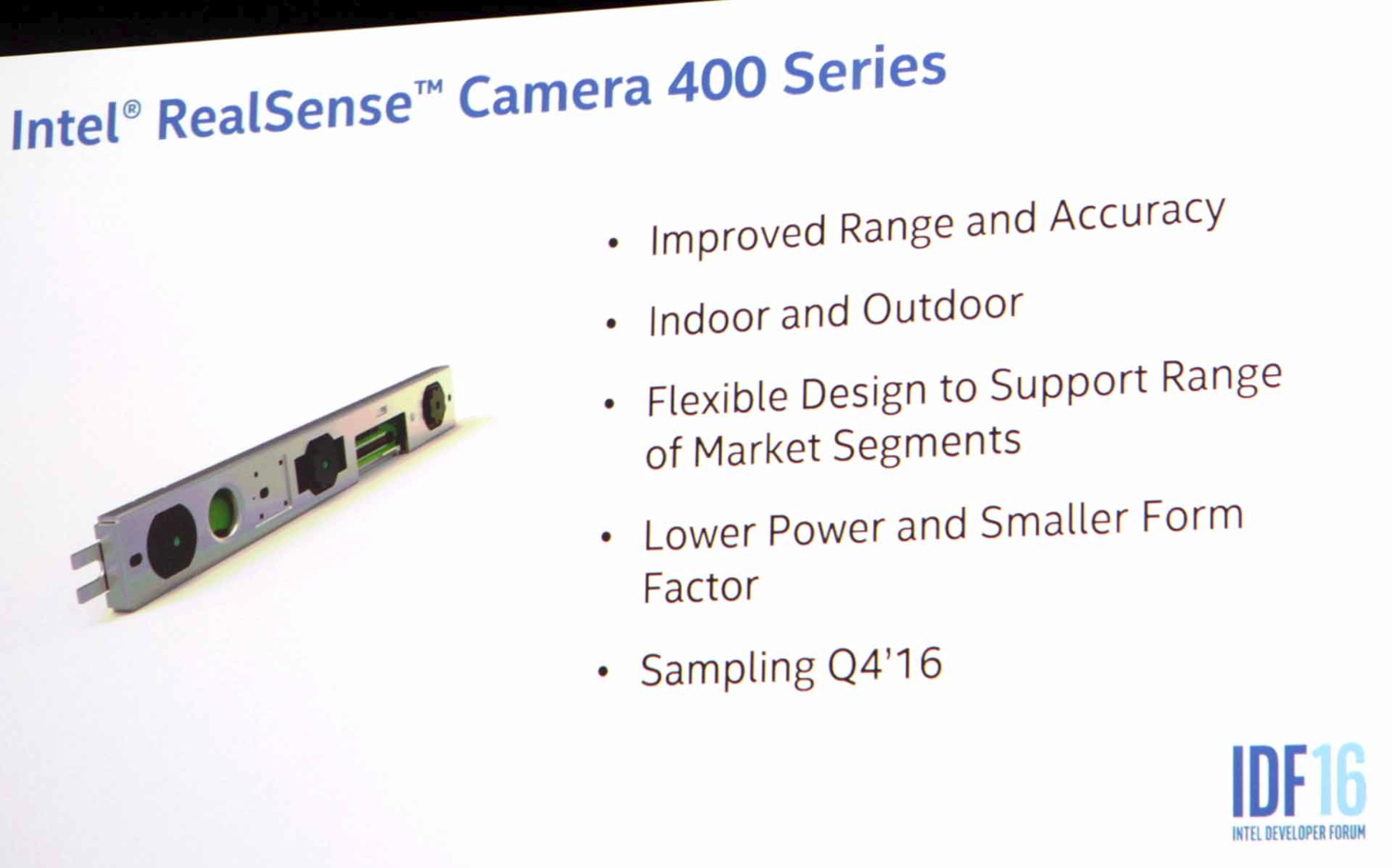

Just as we know few details on the internals of Project Alloy, we know little about Intel’s latest RealSense camera, the 400 series. I speculate that Intel hasn’t finalized it yet; the onstage demos at IDF used an existing RealSense camera, and even during the RealSense session later in the week, we never got any specifications on the new kit.

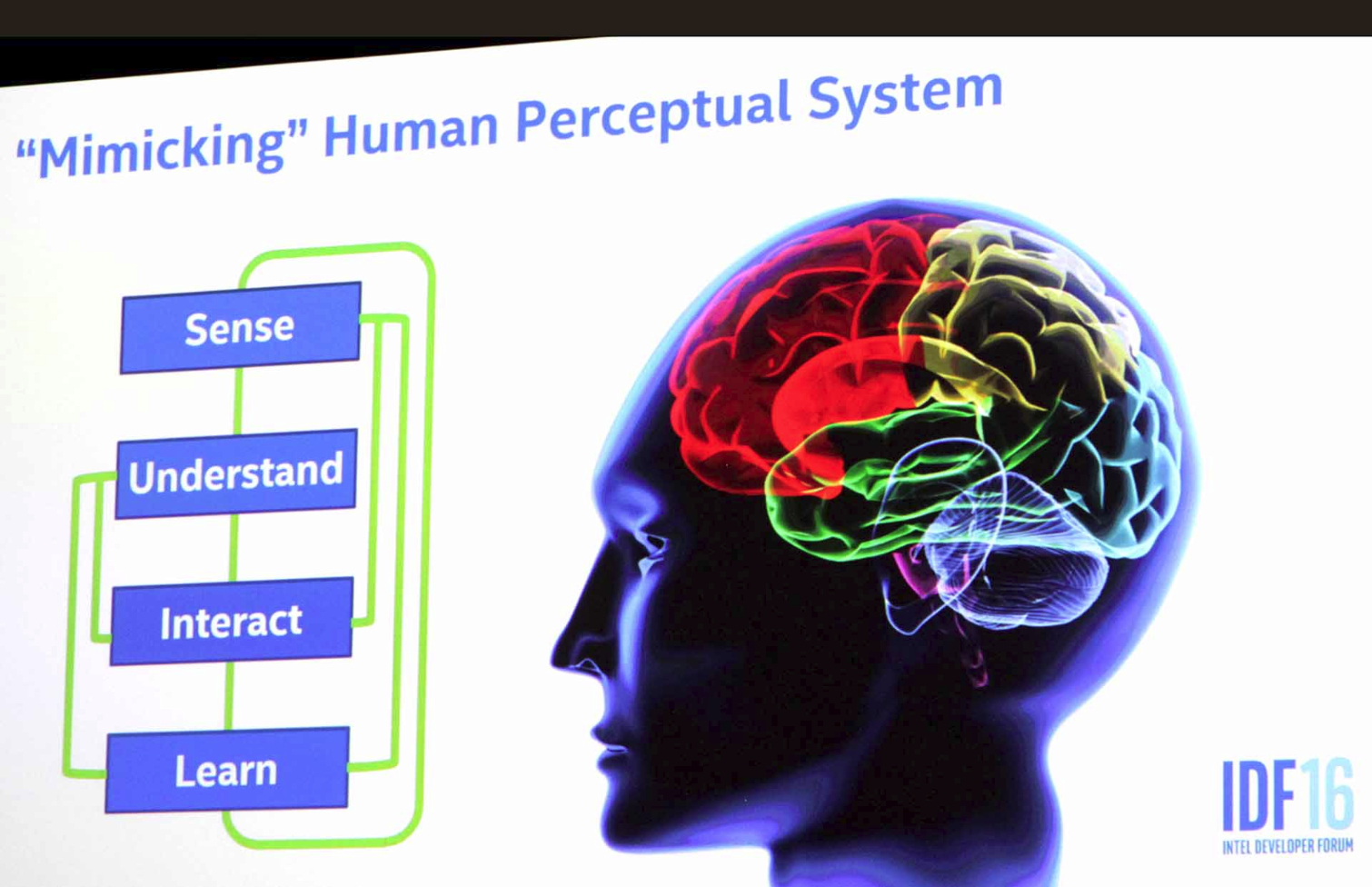

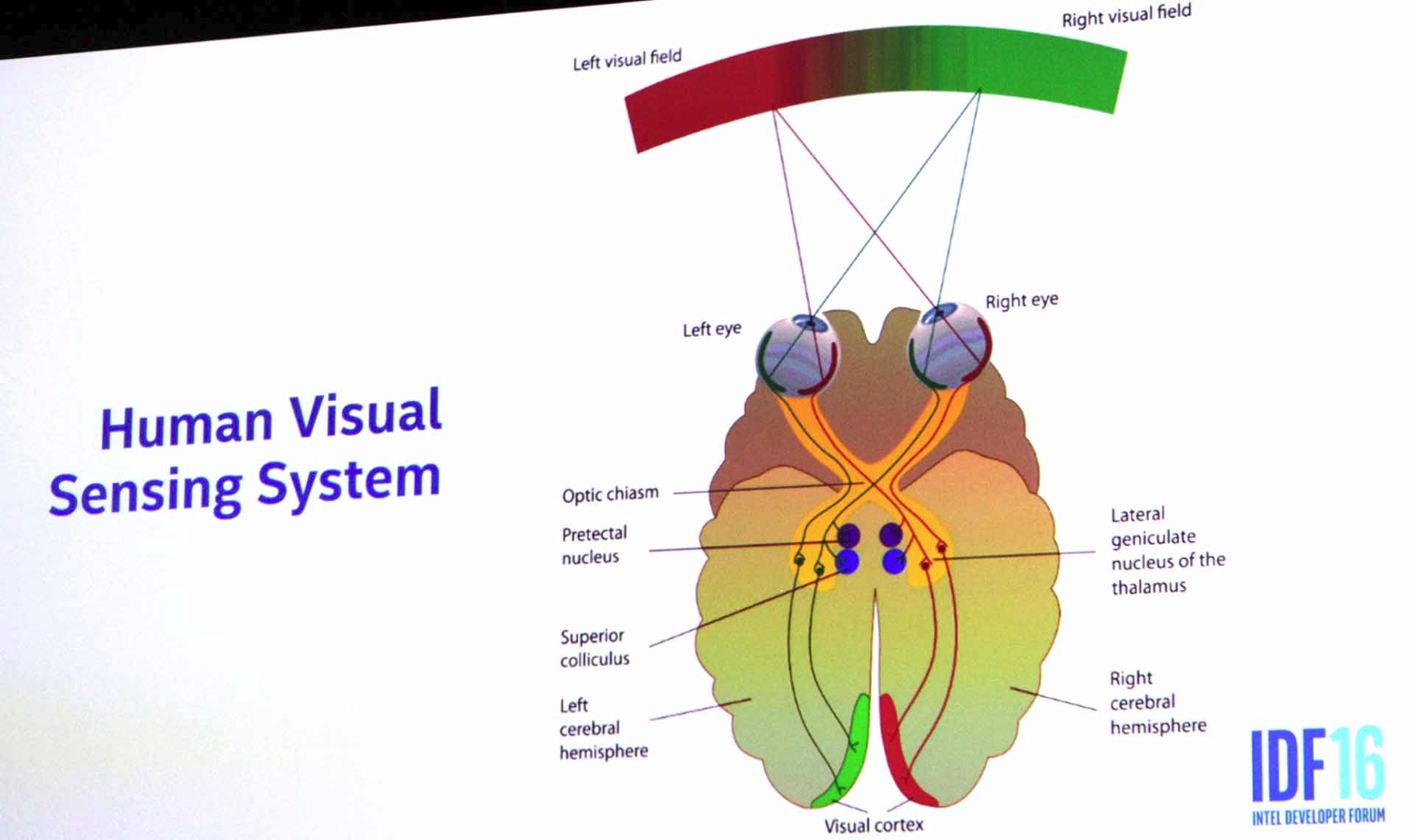

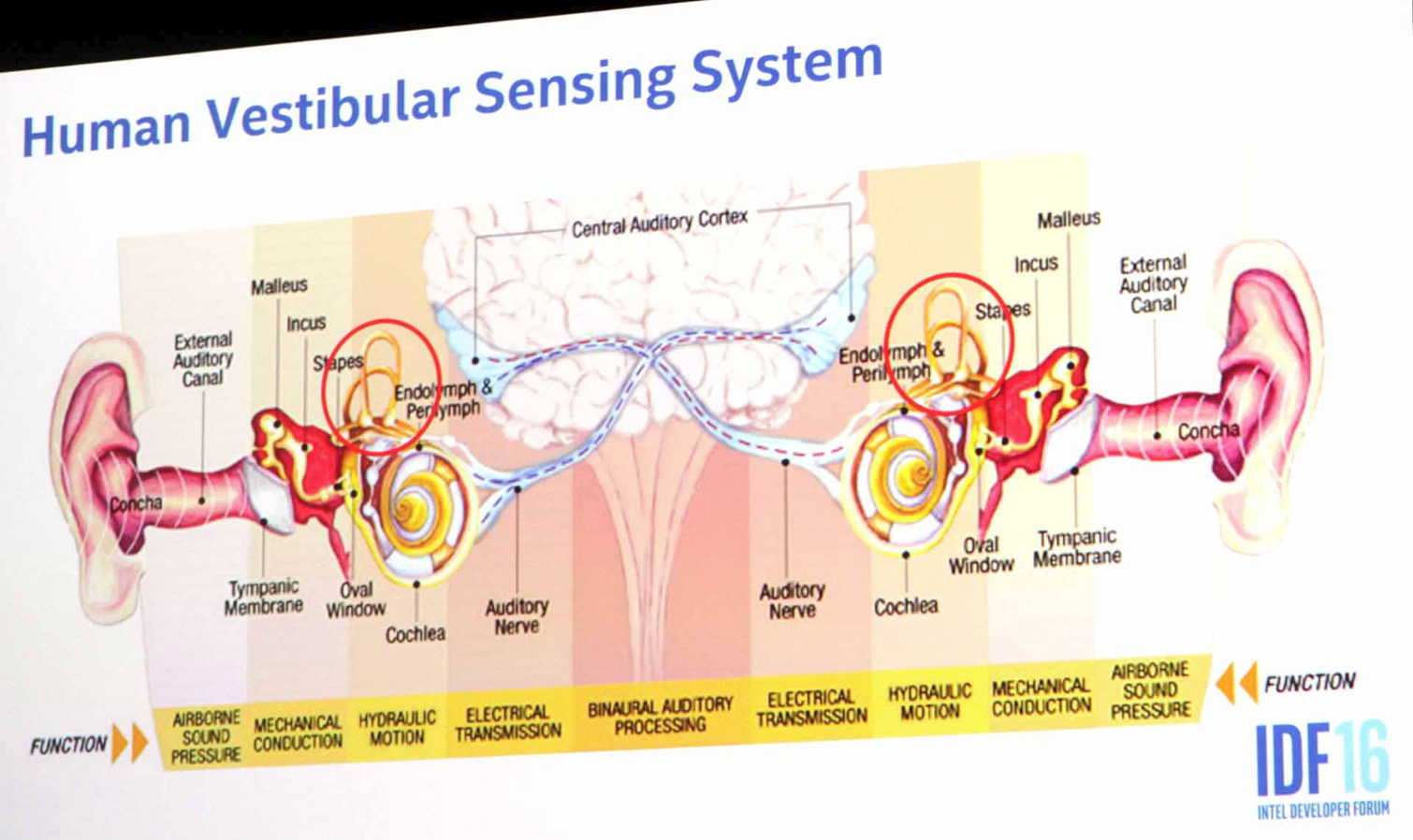

In any case, this new RealSense camera promises extraordinary capabilities. In an IDF presentation, Dr. Achin Bhowmik, VP and GM of Intel’s Visual Perceptual Computing Group, laid it out as simply as possible: The goal of RealSense technology is to mimic human perceptual systems--both human vision and the human vestibular system.

That is an incredibly lofty goal, but the Group is working towards it, and the latest iteration, the RealSense 400 Series, is a step closer. According to Dr. Bhowmik, the new camera will be more accurate than its predecessors, will work both indoors and outdoors, will come in a smaller and lighter package, and will have a flexible design so that it can be deployed in a number of different types of applications.

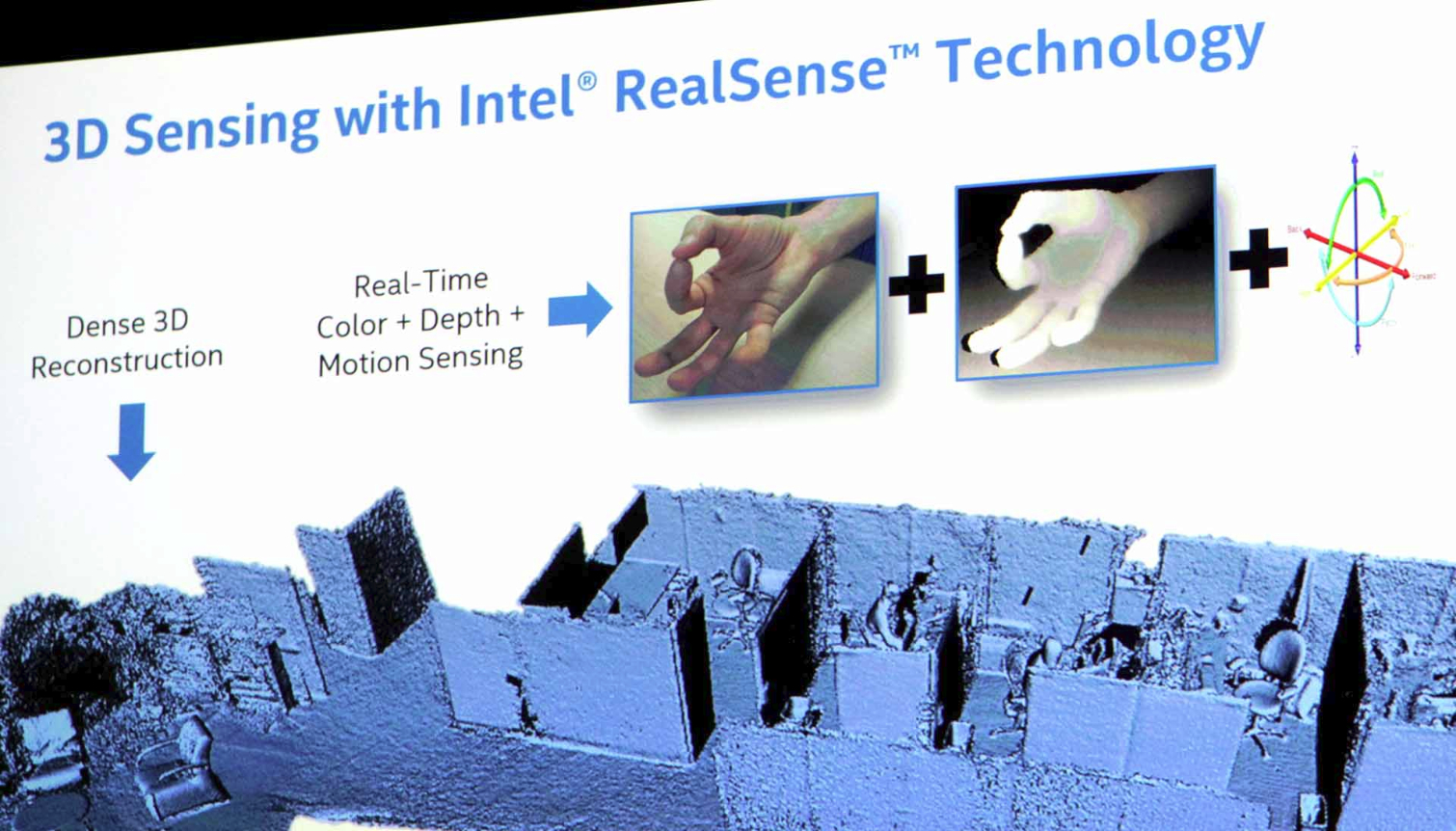

Paired with a device like Alloy, the RealSense 400 Series camera and its VPU will offer 6DoF hand and body virtualization/tracking and 3D scanning of objects and spaces, such that you can interact with them and avoid bumping into them (unless you want to). Unlike the HoloLens, which has to scan and “learn” a room before it can place objects within it, RealSense promises to perform multi-room scale movement and tracking in near real-time. It should also enable multiple people to enter the same virtual space, and let individuals interact with holograms.

Intel has been banging away on these capabilities for a while. A year ago at IDF, we saw a (pretty rough) demo of a Leap Motion camera strapped to an OSVR HMD. Intel has moved on from both, replacing the Leap Motion camera with its new RealSense camera, and creating its own HMD instead of using OSVR. Earlier this year at Mobile World Congress, we saw Intel demo a Project Tango smartphone that could perform hand and object virtualization. (Not “tracking,” but “virtualization.”) They had a mocked-up HMD for it, too, and this demo was far more impressive.

Then just weeks ago, word broke about an Intel-made depth camera that strapped onto an HTC Vive. Lo and behold, the horn shape of that device matches what we saw on Project Alloy.

It’s all culminated in the announcements of Project Alloy and the RealSense 400 camera. However, powerful though the RealSense camera may be, its most important aspect is the presence of deep learning.

A full discussion of what deep learning is and how it works is far beyond the scope of this article, so for more in-depth reading on the subject, check out this article on the rise of client-side deep learning (which is germane to the RealSense 400 series camera and Project Alloy).

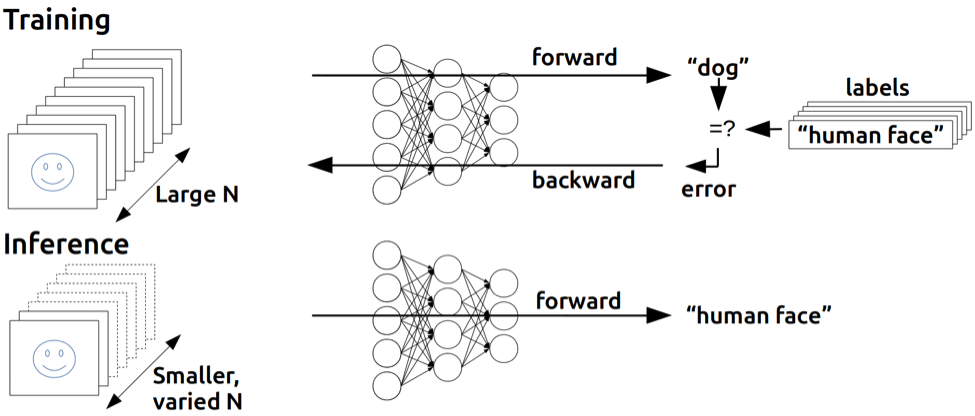

Deep learning is a component of artificial intelligence (AI), an area into which Intel is aggressively pushing. But how that pertains specifically to Project Alloy and the RealSense 400 series camera is simpler. In a (very small) nutshell, there are two components to deep learning: “training” and “inference.”

The training portion requires extraordinary resources. This is Big Data, supercomputing country. However, the inference piece can be done with extremely low compute power. Movidius, for example, has its Fathom neural compute stick, which we affectionately refer to as “deep learning on a stick.”

A device like the Fathom (or a chip that fits into such a tiny form factor) can be loaded with a neural net, which may take up just dozens of megabytes of space. The neural net has been trained so that when the device “sees” objects, people, animals and spaces, it can interpret what they are--not just that something is a dog, but that it’s a Golden Retriever, for example.

What’s truly extraordinary about devices equipped with neural nets is that they don’t need any cloud connection to work. They’ve already been trained. However, the neural nets are also learning as they “see” the world, and they can send that data back to the cloud to contribute to better training. Then, armed with more data, the training part gets smarter, and you can download a smarter neural net to your device, and so goes the virtuous cycle.
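To make the training/inference split concrete, here is a minimal Python sketch of the on-device half. Everything in it is an assumption for illustration: the labels, the hand-picked weights (standing in for a net that was trained elsewhere), and the `upload_queue` that represents observations saved for later cloud-side training. It is not how Movidius or Intel hardware actually works; it only shows that inference is a cheap forward pass that needs no network connection.

```python
import math

# Hypothetical labels and hand-picked weights standing in for a trained net.
# On a real device these would be downloaded, not written by hand.
LABELS = ["dog", "cat", "chair"]
WEIGHTS = [
    [2.0, -1.0, 0.5],   # row of weights for "dog"
    [-1.0, 2.0, 0.5],   # row of weights for "cat"
    [0.0, 0.0, 1.5],    # row of weights for "chair"
]
BIASES = [0.0, 0.0, -0.5]

# Observations kept locally until a connection is available (assumed design).
upload_queue = []


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def infer(features):
    """Inference: one cheap forward pass through fixed weights, fully offline."""
    scores = [
        sum(w * x for w, x in zip(row, features)) + bias
        for row, bias in zip(WEIGHTS, BIASES)
    ]
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs[best]


def observe(features):
    """Classify locally, then queue the observation for later training."""
    label, confidence = infer(features)
    upload_queue.append(
        {"features": features, "label": label, "confidence": confidence}
    )
    return label
```

Calling `observe([1.0, 0.0, 0.0])` classifies the input on the spot and leaves one record in `upload_queue`. The expensive step, retraining on everything the devices have queued, would happen somewhere else entirely.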

With Movidius in its stable, Intel has both parts covered--at IDF, the company announced that it acquired Nervana Systems, a deep learning/AI company.

On an HMD like Project Alloy, where a neural net could be paired with the RealSense 400 Series camera, the potentialities are mind-boggling.

An Unnamed Mainstream XR HMD (Or HMDs)

Lost in the bluster of the Project Alloy excitement is the fact that Alloy is not the only HMD project Intel is working on. Intel representatives would not discuss many particulars, but based on multiple conversations we had with them, we’re able to take some clues and piece them together.

Fundamentally, Intel is looking to address more than one HMD market. Project Alloy is a self-contained system with passthrough capabilities afforded by real-time mapping and tracking. The other unnamed pursuit here is a mainstream-level, fully occluded HMD designed to work with PCs and Windows--although language from Microsoft as well as breadcrumbs inadvertently dropped by Intel reps indicate that an augmented reality device like the HoloLens could be in the offing, too. (I find it striking, incidentally, that Intel hasn’t even named this second Thing yet. There’s no project codename. It’s just an idea, a strategy, a notion.) And in this unnamed effort, Intel and Microsoft are working closely together.

This other headset idea will ostensibly fit the mold of Rift or Vive more than Project Alloy does, insofar as it will be an occluded VR HMD designed as a PC peripheral, but it will be a decidedly more mainstream device. Intel sees a huge hole in the XR market--that vast unsettled territory between mobile devices like Gear VR and high-end HMDs like Rift and Vive.

Obviously, questions immediately arise as to what kind of quality one could expect from such a device, but Kim Pallister, Director of Intel’s Virtual Reality Center of Excellence, said, “You need more affordable solutions, and that doesn’t mean dumbing down the specs, because then you get a bad experience. You have to re-engineer how the pipeline works to a degree.”

That’s easier said than done, but Intel is looking to solve the problem by leveraging technology it knows best: processors.

Although we don’t have many specifics yet, we do know that Intel is working on solving problems in XR by offloading certain tasks to a CPU. Intel engineers have been working on a version of this with some VR game devs on existing PCs, to alleviate some of the GPU’s overwhelming VR responsibilities. It appears that from this experimentation, Intel is drawing up ways for HMDs themselves to offer similar assistance.

“In addition to having some sensors on the HMD and doing some of the sensor fusion-type work, there’s a theory we have that we’re going to go do a proof of concept and try and prove out that there may be advantages to doing things like barrel distortion, and time warp, and chromatic error correction on the HMD itself,” Pallister told us. “Because you can basically have those close to the sensors and be very responsive in terms of how late you latch the sensor data, and how quickly you update the timewarp, and you can do things like decouple that from the resolution and framerate of the application,” he added.

He further noted that some of the other advantages to this decoupling include rendering the back buffer and upscaling. “You can maybe update the HMD very quickly and time warp but have the simulation running at a different framerate,” said Pallister. He qualified Intel’s results thus far as “pretty good” and believes that the company can continue to extract more VR quality from lower-power hardware.
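The decoupling Pallister describes can be illustrated with a toy simulation. This is a sketch under stated assumptions, not Intel's pipeline: the head turns at a constant, made-up 60 degrees per second; the application renders at 45 fps while the display refreshes at 90 Hz; each frame is treated as rendered instantly at the start of its slot; and "time warp" is reduced to a single yaw correction. The names `head_yaw` and `simulate` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    index: int
    render_yaw: float  # head yaw (degrees) the application rendered with


def head_yaw(t):
    """Hypothetical head motion: a steady 60 degrees-per-second turn."""
    return 60.0 * t


def simulate(display_hz=90, app_fps=45, refreshes=6):
    """Return (refresh, frame index, yaw correction) for each display refresh.

    The application only produces a new frame every 1/app_fps seconds, but
    the HMD re-latches the head pose on every refresh and corrects the most
    recent frame by the difference (a very simplified time warp).
    """
    results = []
    for n in range(refreshes):
        t = n / display_hz
        # Newest frame the application has finished by this refresh
        # (integer arithmetic avoids floating-point edge cases).
        frame_index = n * app_fps // display_hz
        frame = Frame(frame_index, head_yaw(frame_index / app_fps))
        # Late latch: sample the sensor as close to scan-out as possible.
        latest_yaw = head_yaw(t)
        correction = latest_yaw - frame.render_yaw
        results.append((n, frame.index, round(correction, 3)))
    return results


if __name__ == "__main__":
    for refresh, frame_index, correction in simulate():
        print(f"refresh {refresh}: frame {frame_index}, warp {correction} deg")
```

In this model every other refresh reuses the previous application frame, and the correction makes up for the head movement that happened since that frame was rendered. That is the sense in which the display update can be fast while the simulation runs at a different framerate.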

One of the obstacles in Intel’s way, though, is the need for Microsoft’s help.

Intel’s Daydream With Microsoft

“This collaboration with Microsoft has two components to it,” said Pallister. “One of which is working with [Microsoft] to get to a set of specifications for PCs and for head-mounted displays that will match up and provide mainstream solutions--we use that word very deliberately--mainstream solutions for this Windows Holographic Windows Shell, and a set of APIs that will enable VR for a volume market on PCs.”

In other words, Intel is not looking to make a single, mainstream-level VR HMD. It’s trying to jumpstart a whole new ecosystem, and it’s working with Microsoft to get the software and OS pieces to jibe with the hardware. Then, Intel will provide hardware to other companies, who will make end user products.

This is, you will notice, exactly what the chipmaker has done for years in the CPU market. Just as you can’t buy an Intel PC, you won’t be able to buy an Intel VR HMD. So why is Intel building HMDs?

Pallister was quick to deflate any notion that Intel is getting into the headset business. “We built prototypes, proofs of concept and things like that, but that doesn’t mean we’re necessarily getting into that market,” he said. “As things stand right now, we’re far more interested in enabling the industry...than trying to get into a vertical business.” He noted that this prototyping is designed both for Intel’s engineers to understand what the system-level requirements will be for their Core processors and graphics and so on, and also to provide sufficient knowledge to its industry partners.

He added, “Part of why we’re doing this collaboration with Microsoft on both of these classes of devices [meaning Alloy and mainstream VR HMDs] is that VR is a very high performance, latency-sensitive usage model, so it’s not enough to just say, 'Hey we’ve done this layer, and you figure out the rest.' You kind of have to provide a really tight solution and then work from there.”

An apt comparison here would be what Google is attempting to do with its Daydream VR platform. Google is not interested in making HMDs. Daydream is a platform that Google is providing that OEMs will ostensibly use to build their own VR devices.

Pallister agreed that the above is a more or less sound way of thinking about Intel’s efforts in XR, then clarified with a light chuckle, “Substitute the PC ecosystem for the phone hardware ecosystem, and substitute Windows and Windows Holographic for Android and Daydream.”

In both cases, the goal is the same: to democratize, or even commoditize, XR in such a way that it’s a high-quality user experience that is also affordable and easy to acquire.

But that’s just the first couple of steps--making the stuff work. As we’ve seen time and again in the tech industry in recent years, content, or the lack thereof, is of utmost importance. To that end, though, Pallister seems wholly unconcerned, because assuming the Wintel bit works like it’s supposed to, the content already exists. The answer for the unnamed mainstream mystery HMD is the same as the one for Alloy. “Similar to what [Microsoft] did with HoloLens, they’re giving you a way to bring your existing Win32 apps and content into a VR space and interact with them in some ways. They’re also bringing the set of content they’ve done for Windows Holographic, and for the HoloLens, into the occluded headset Windows Holographic shell as well, so that’s another pool of content that they have to draw from.”

Although the everything-you-already-have-on-Windows pitch is a compelling potentiality, it’s one we’ve heard before, and it hasn’t worked out especially well, at least not yet. (Windows Mobile/Continuum, we’re looking at you.)

The first version of the Intel/Microsoft specification for these HMDs is expected in December.

So What’s The Grand Plan?

Taking all of the above into consideration, what does this XR utopia actually look like? Despite everything we know, Intel’s XR strategy frankly remains hazy.

Intel is not going to build and sell Alloy HMDs. Intel is building an Alloy platform that other companies can use as a basis upon which to develop shipping products.

Intel is not going to build and sell mainstream VR HMDs that are effectively lower-end versions of Rift and Vive. Intel is going to develop technology and possibly create prototypes to help develop IP with Microsoft that other companies can use as a basis to develop shipping products.

In both cases, Intel and Microsoft are an item, as it were.

Perhaps a helpful way to understand all of the above is to contrast Intel’s approach with that of a company like Oculus. It is true that Oculus has huge VR plans, but they center squarely on its Rift HMD. It starts with a single product; continues with a platform, a catalog of titles, and additions like the Touch controllers; and ends with--well, no one knows what it ends with yet. In any case, Oculus’ strategy of starting with a Thing and growing from there is what you could call an inside-out strategy.

Intel is taking on VR with an outside-in approach. Instead of focusing on one product, it’s building up a massive portfolio of IP--including RealSense cameras and deep learning--that could be leveraged for numerous aspects of XR.

This is exactly what Intel has done in the PC market: Be everywhere. Be in everything.

Seth Colaner previously served as News Director at Tom's Hardware. He covered technology news, focusing on keyboards, virtual reality, and wearables.

d_kuhn: First of all... Hololens is textbook AR. In fact - Hololens is more AR than current "AR" things like Pokémon Go. I think if people just reject a Microsoft branded AR term they'll eventually give in and call it what it is. There's VR and there's AR... there currently are no other fundamental technologies in play, just variants of those two things.

As far as the Intel approach of Merging camera input instead of using a passthrough... I see it as having some advantages and disadvantages. One advantage is it won't suffer from fading in very bright conditions, and the computer based content (Holograms on Hololens) won't have to be delivered through a more complicated viewing technology (which currently adds artifacts). On the downside... human vision is MUCH better than any camera (especially the super-compact ones used in headsets), and also the display tech used can't cover the full range of a real scene (in a number of ways... dynamic range, color reproduction, temporal reproduction, etc...). I also suspect that the Hololens pass-through approach will ultimately be more energy efficient (battery life).

I think short term it will be worth exploring both technologies and both will work better in some situations... long term I'd put my money on Hololens style pass-through for AR.

wifiburger: waste of time, right now VR is not worth the effort with putting up with accessories,

it's bad cause instead of having game studios create amazing games they dedicate their resources into this gimmick because it's the new thing !

it happened with wii mote, everybody jump on board for some truly garbage games that sold on the fake promise of that wii mote accessory, it's just another repeat and i'll pass no thank you

scolaner (in reply to wifiburger): Have you tried any VR/AR experiences?

alidan (in reply to scolaner): the only people who don't believe in vr have never tried vr, id willingly go back to what i used 15~ years ago if i could have it today, but what we have is currently too expensive for me, and i would need massive platform upgrades to deal with it.

scolaner (in reply to alidan): That's what Wintel is trying to solve--they're trying to bring the cost down without sacrificing (much) performance. I hope that happens. I don't care if it's Intel, MSFT, Google, Oculus, HTC, or whoever, just as long as it happens.

Virtual_Singularity: VR & AR, weren't enough? There has to be a "MR" too? Can't we just shorten the first two down to MR? Am interested to know what long term effects MR may have, or potentially have, on the human brain. Or even how many hardcore Pokemon Go players have started seein' those virtual critters in their dreams, and other places they aren't supposed to be, so far.

scolaner (in reply to Virtual_Singularity): Agreed, the additional "R"s are a little much. I addressed that very nomenclature issue in the sidebar.

alidan (in reply to scolaner): I honestly don't trust intel/microsoft to bring the hardware cost, as in the computer needed, down in price. they may be able to make a headset cheaper, but osvr also does that. and for my money, i see no use in room scale because i dont have the space for it, however a racing sim or even an arcade racer, that, just having that extra axis is enough to change how the game is played, even if its not in 3d.

Hell, there is a 4k vr out of china I have been looking at, not so much for gaming, but for watching movies.

scolaner (in reply to alidan): You don't "trust" them? Or you don't think they can do it?

I mean, they're not doing it to be altruistic. They want to dominate the market and make buckets of money, and the best way to do that is to exploit the mainstream portion. So it's one of those situations where the Venn diagram of what benefits those companies and what benefits end users aligns.

Also...as I wrote in the article, they're really trying to develop a set of specs that work and then will get OEMs to make the actual stuff.

Also also, I feel you on the roomscale issue...but that's where HoloLens and Alloy are ideal. They give you whatever environment you're in. The XR experiences don't have to be "room scale" the way Vive is. Besides, I think a lot of the value of AR headsets like the HoloLens will be around productivity and content consumption.

bit_user:

d_kuhn said: First of all... Hololens is textbook AR. In fact - Hololens is more AR than current "AR" things like Pokémon Go. I think if people just reject a Microsoft branded AR term they'll eventually give in and call it what it is.

I totally agree on this point. That MS will try to use their trademarked term is almost a given, but I really hope everyone rejects it, in favor of "AR".

Virtual_Singularity said: VR & AR, weren't enough? There has to be a "MR" too? Can't we just shorten the first two down to MR?

I disagree. What's truly novel about Intel's Alloy is its ability to introduce non-translucent imagery. They can also mess with your environment in other ways, such as replacing your room with a scenic vista, while still letting you see all the furniture. It can also selectively remove or alter items in your environment, to a large extent.

I think it's hard to really get your head around the differences between MS' approach vs. Intel's, without actually trying both. In the long term, I think Intel is on a better track. The downside is that it's currently more obtrusive, invasive, and fatiguing than MS' Hololens.

So, I accept the term MR as a seamless mixing of VR and AR that can achieve things not possible when limited strictly to either one.

Virtual_Singularity said: Am interested to know what long term effects MR may have, or potentially have, on the human brain.

The big question for me is how extended & frequent use of VR affects vision and depth perception. Lightfield displays would largely allay those concerns, I think.