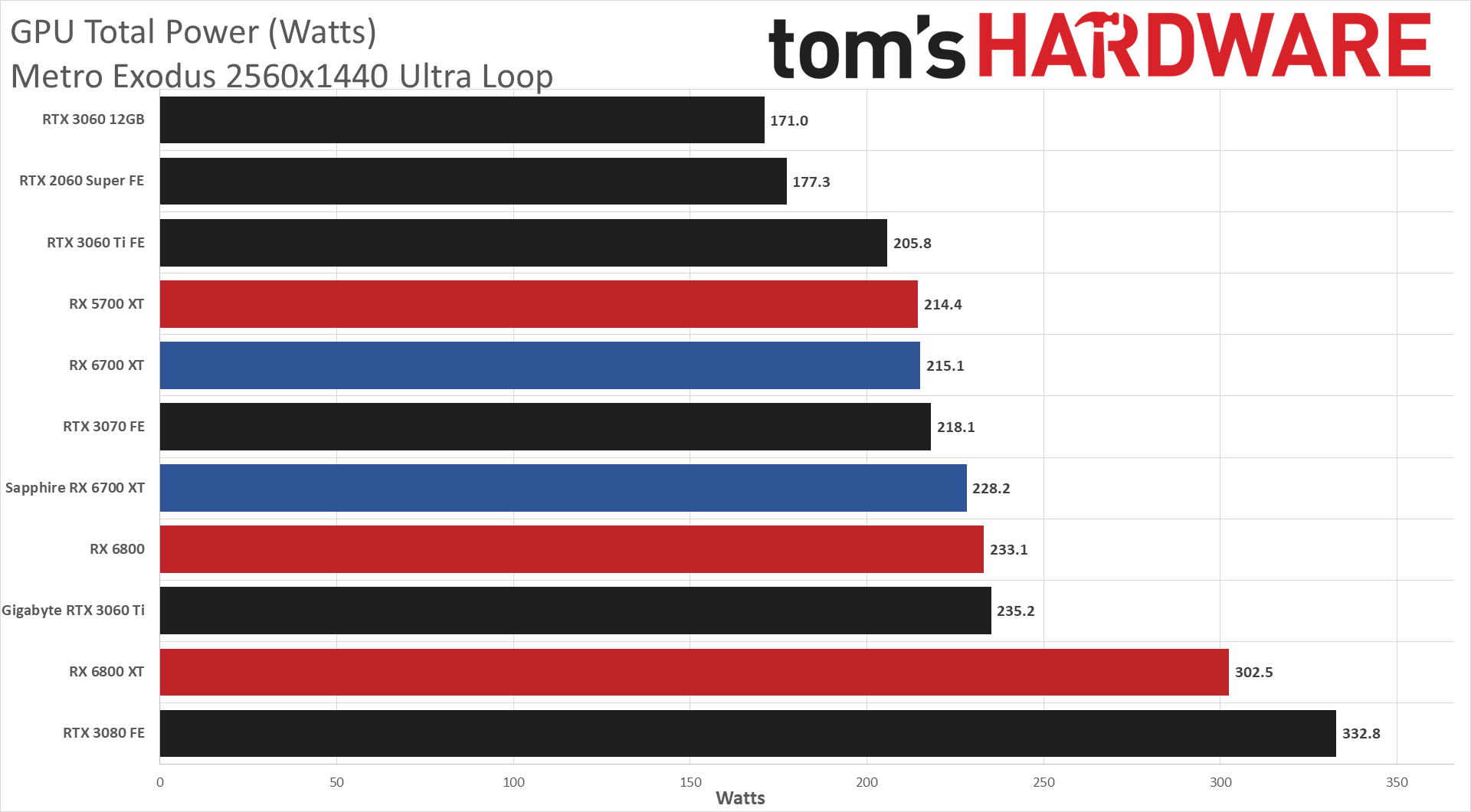

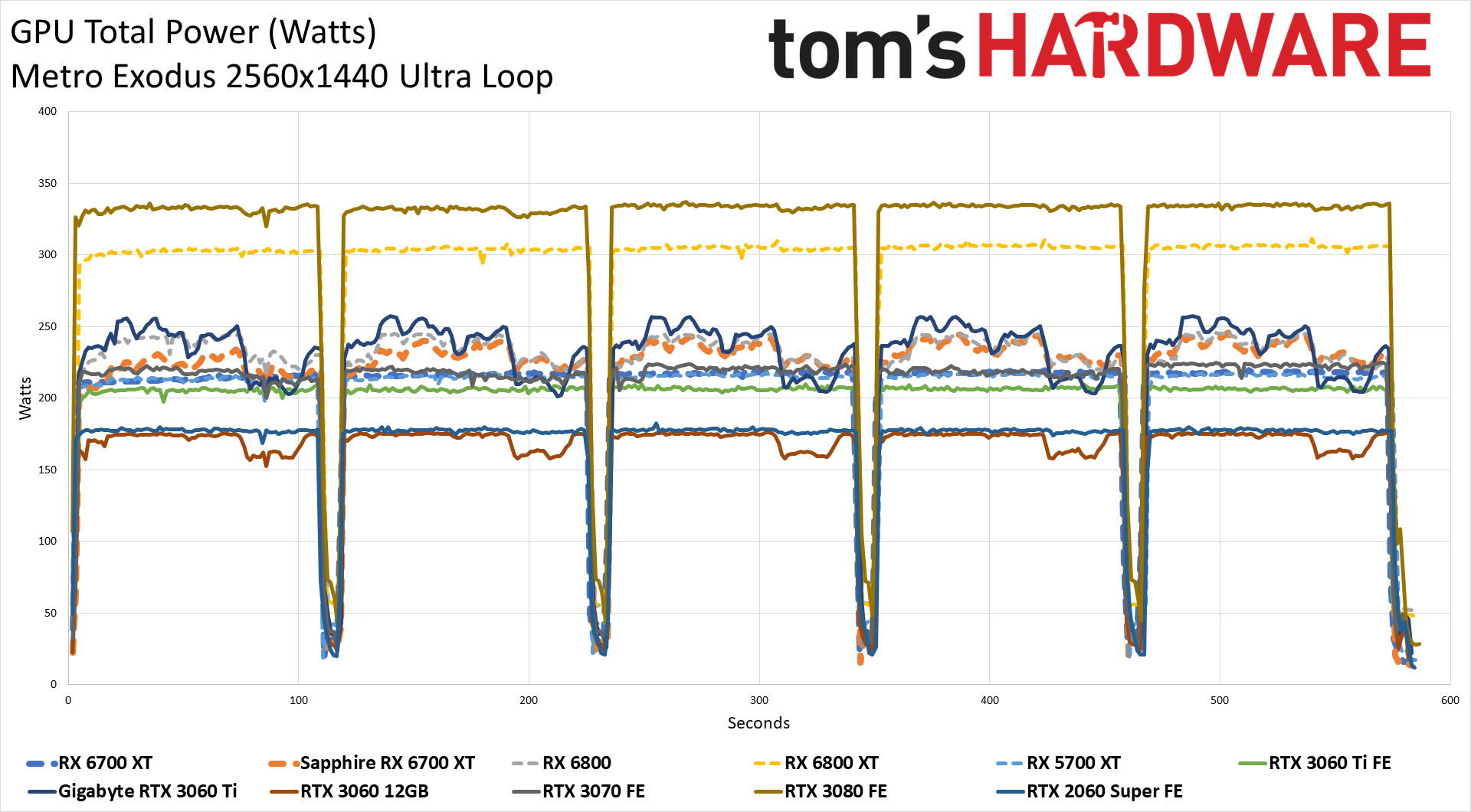

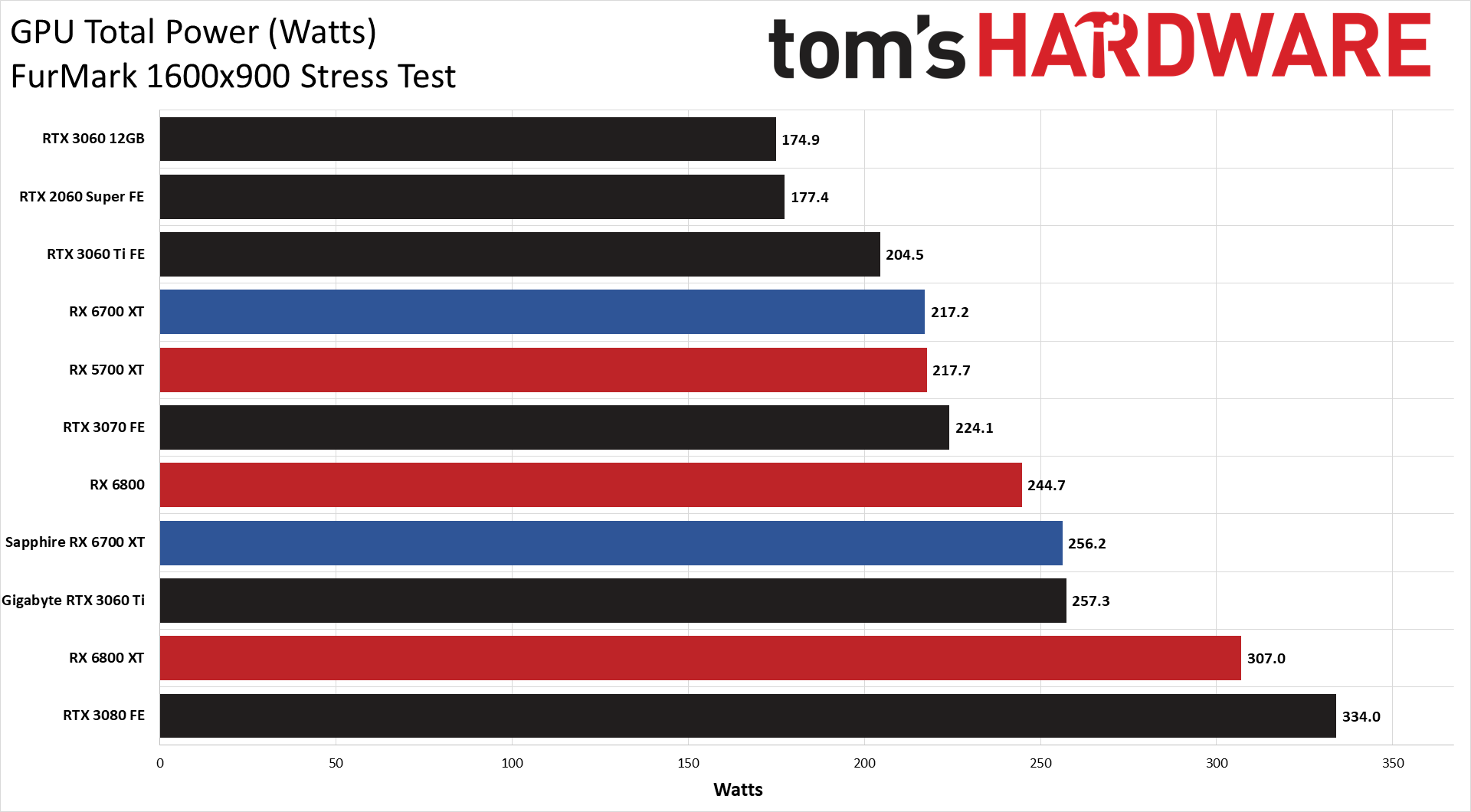

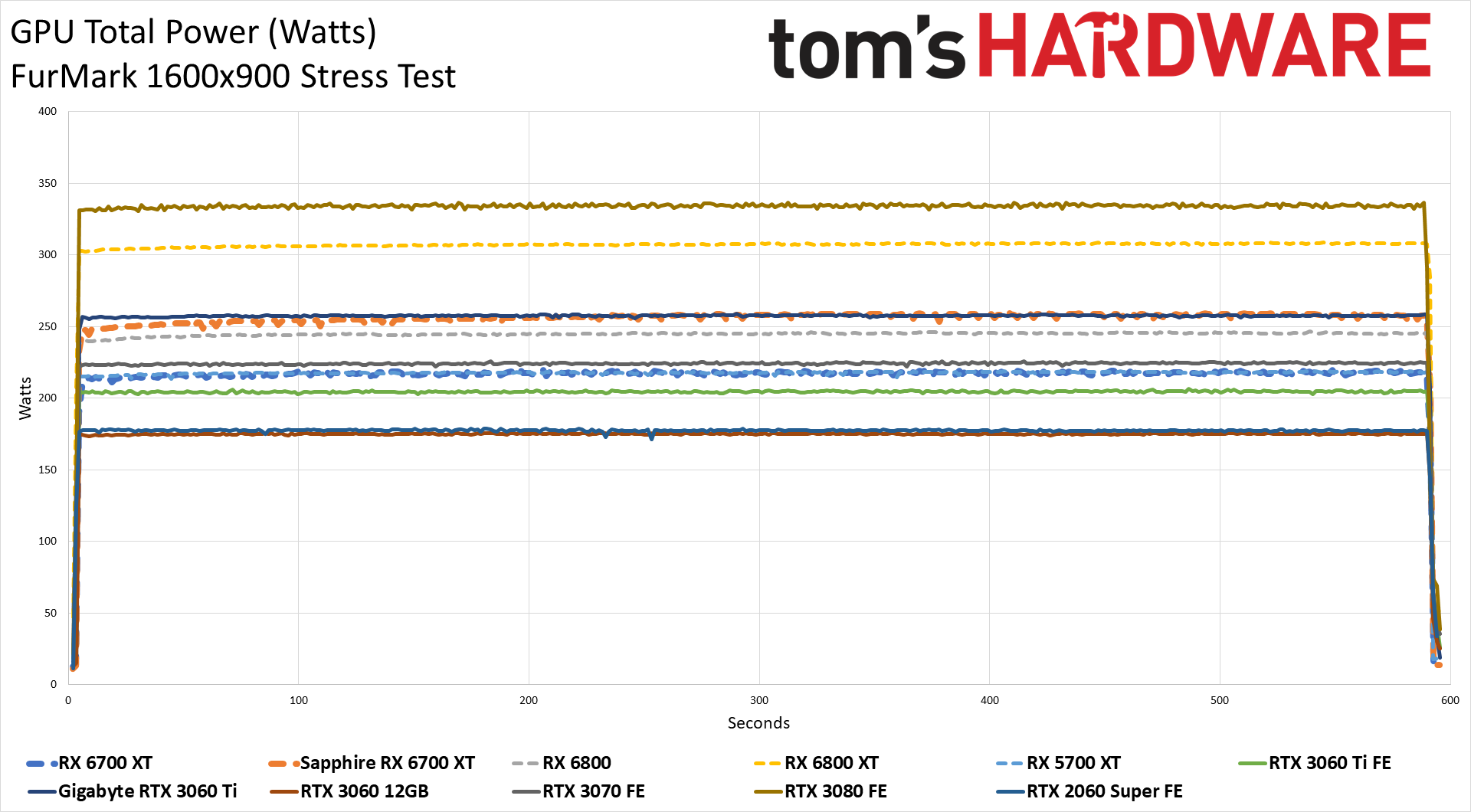

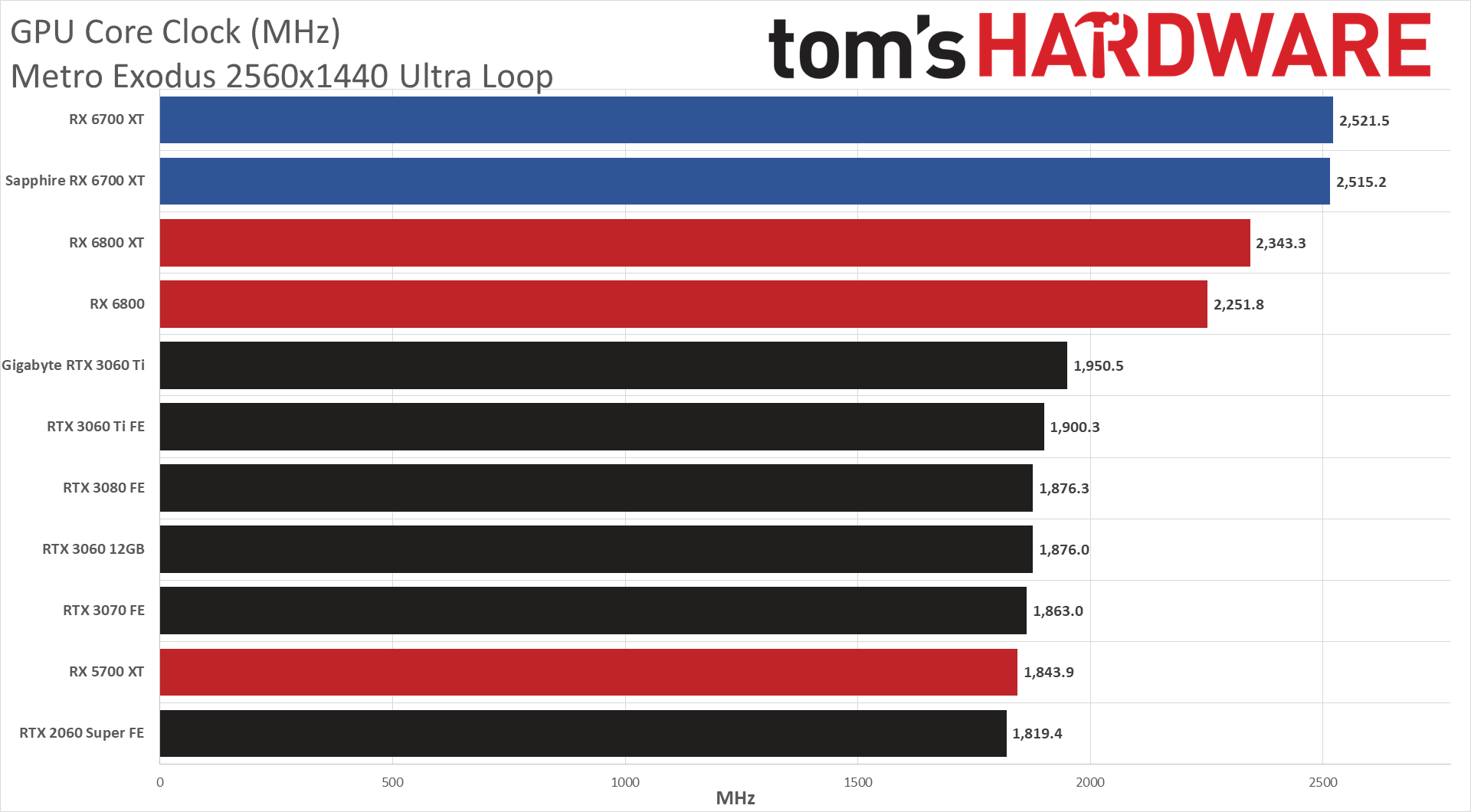

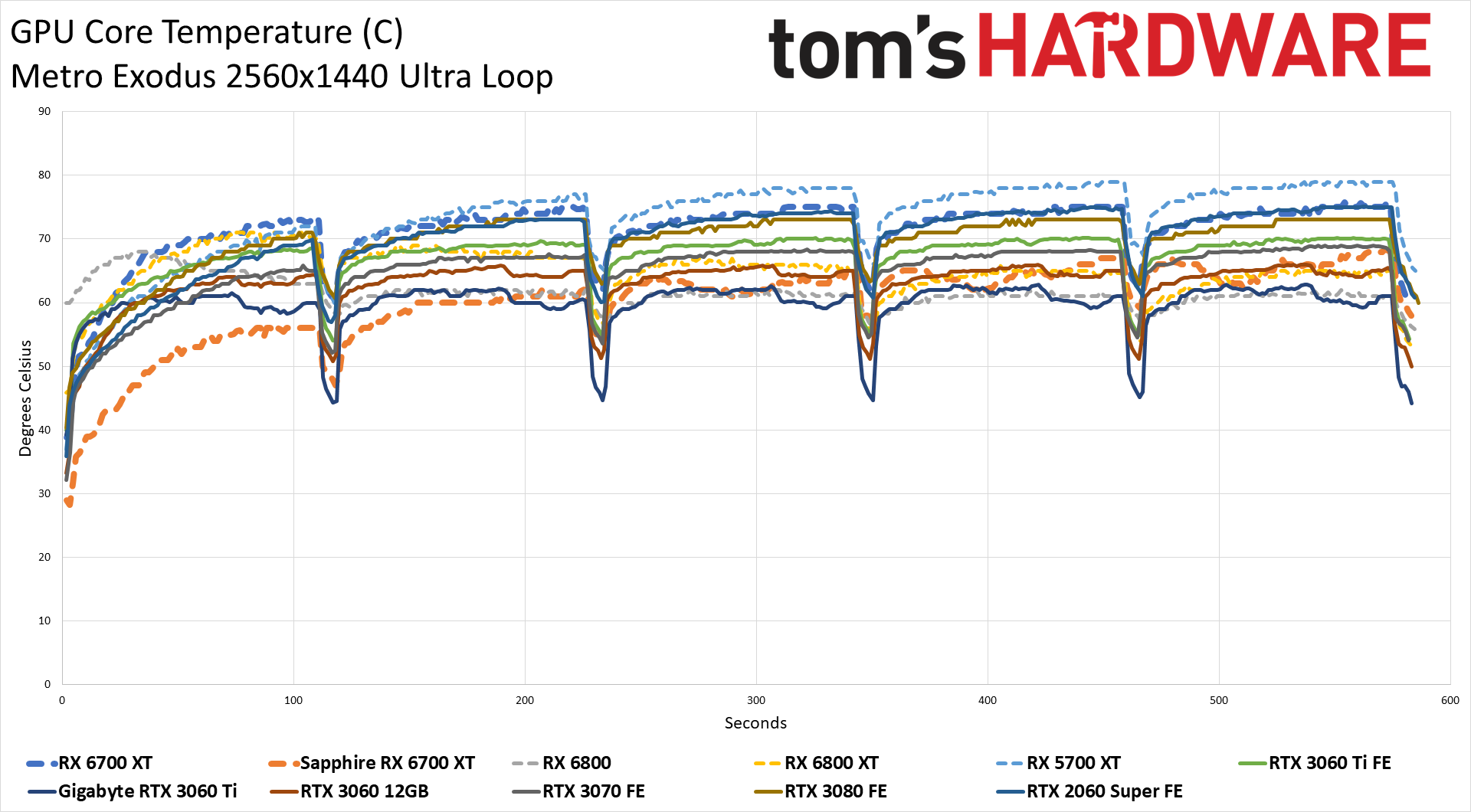

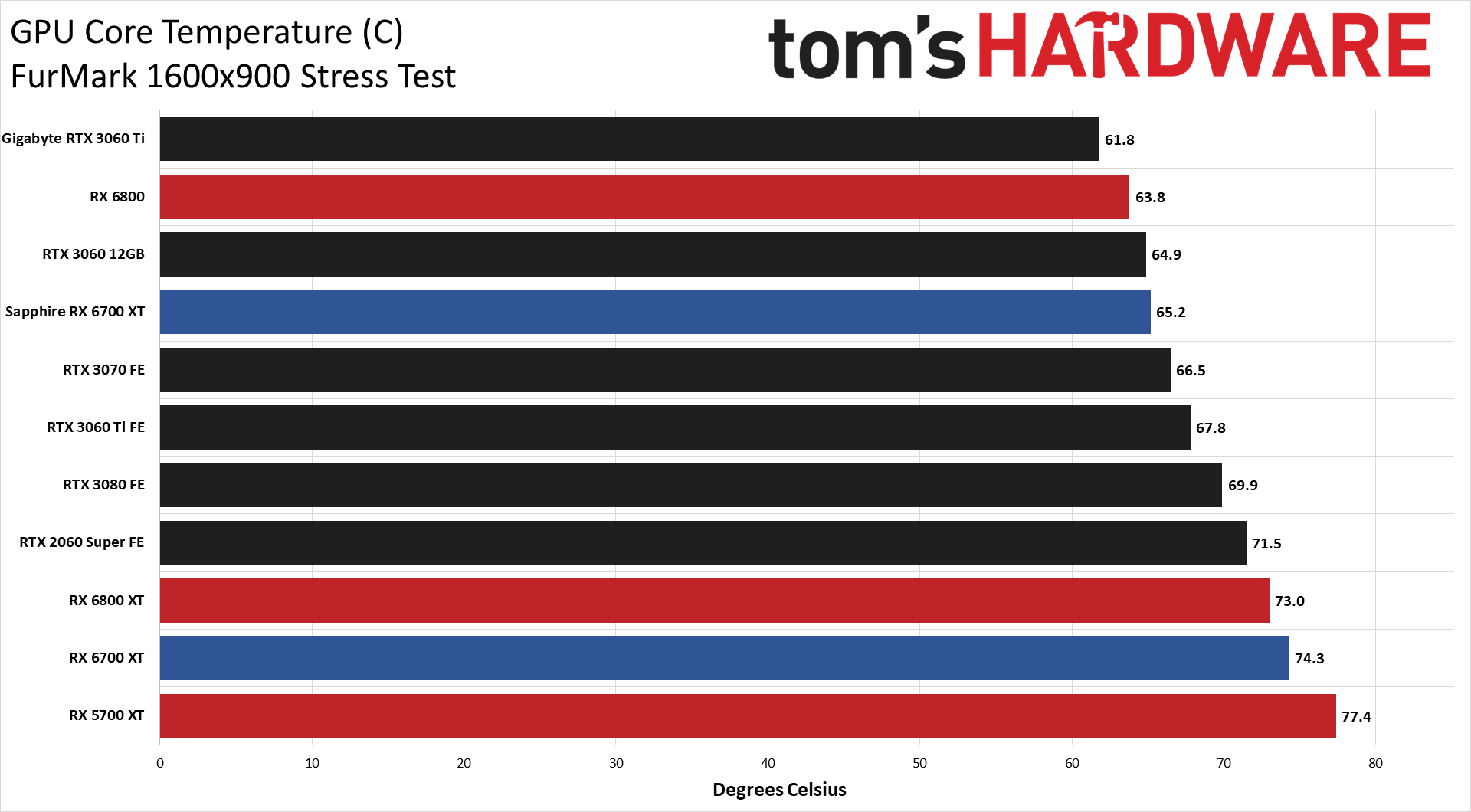

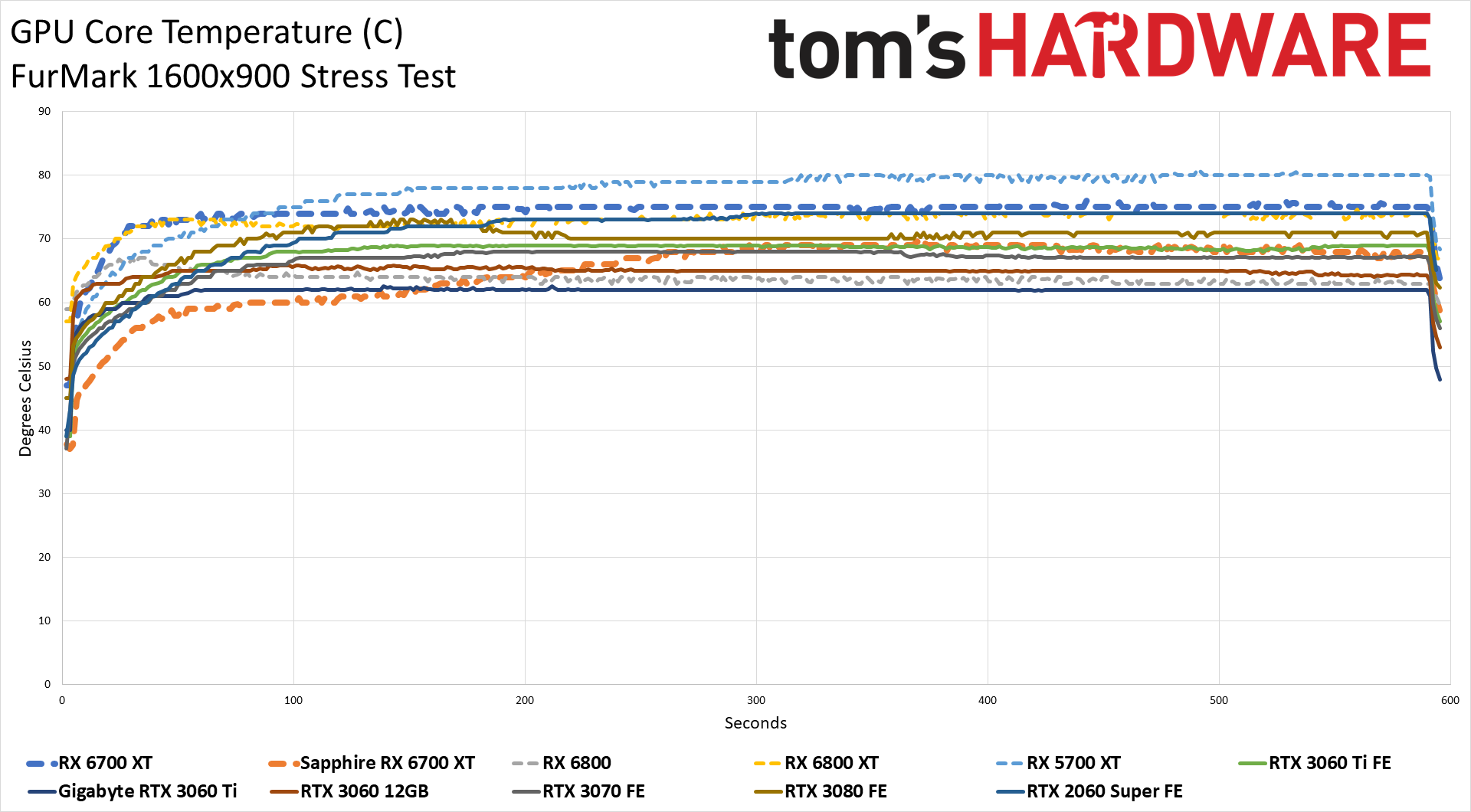

We noted at the start that the rated TBP comes in higher than we'd normally expect, likely thanks to the higher GPU clocks. We're not going to take the time (right now) to see how much power use drops at lower clocks, but we'll run our normal suite of Powenetics testing and check the GPU power consumption and other aspects. We run Metro Exodus at 1440p ultra and FurMark at 900p to collect these results.

It's good to see the RX 6700 XT come in a bit lower than the rated TBP, sitting at around 215W. As usual, FurMark required a bit more power, but it's only 2W in this case — nothing to worry about. It looks as though AMD was a bit conservative with its power ratings. That's better than the alternative, which we've seen quite a bit of lately, of cards using 5W-10W more than the rated TBP. Of course, third-party cards are free to increase the power limits, and clearly, the Sapphire card did.

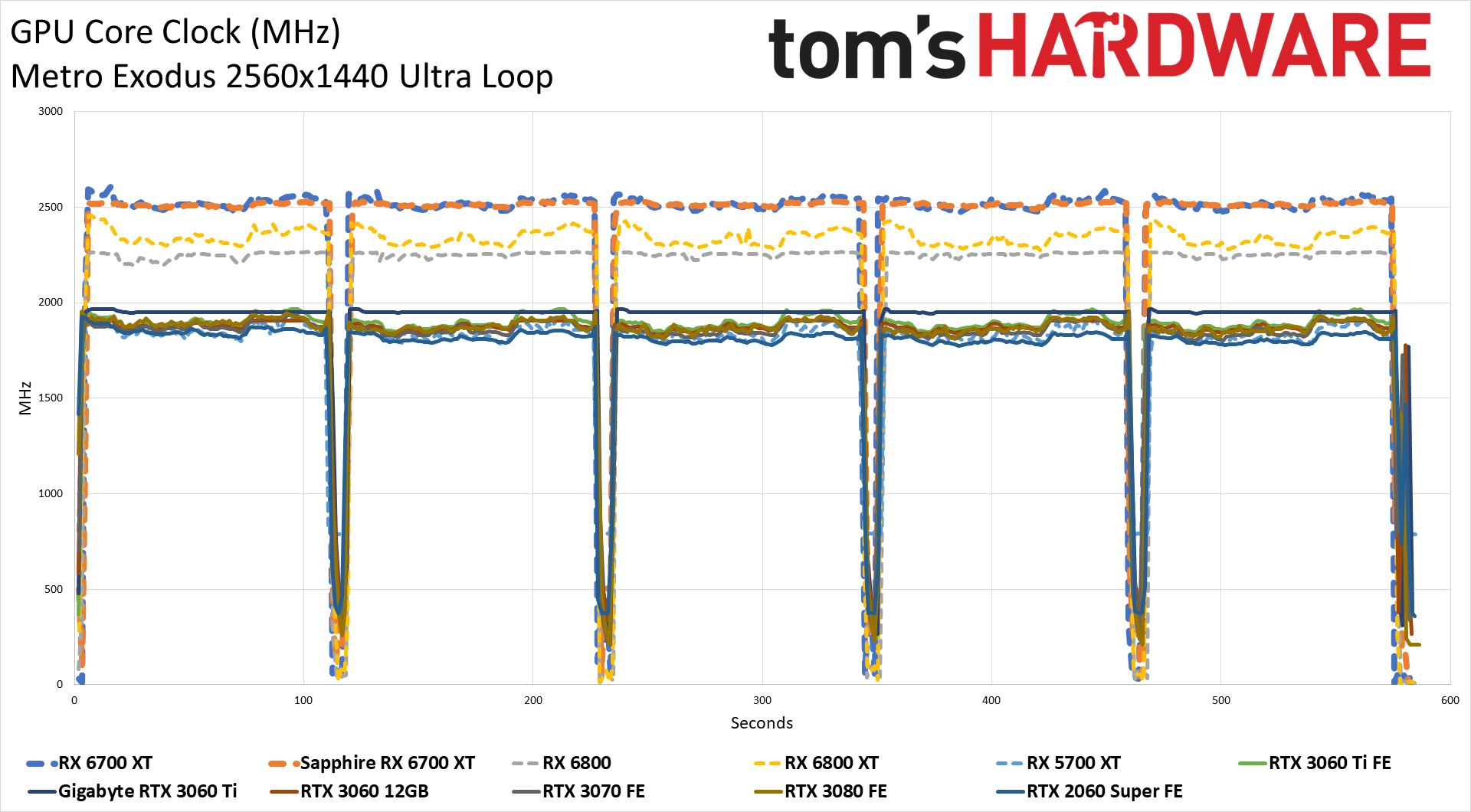

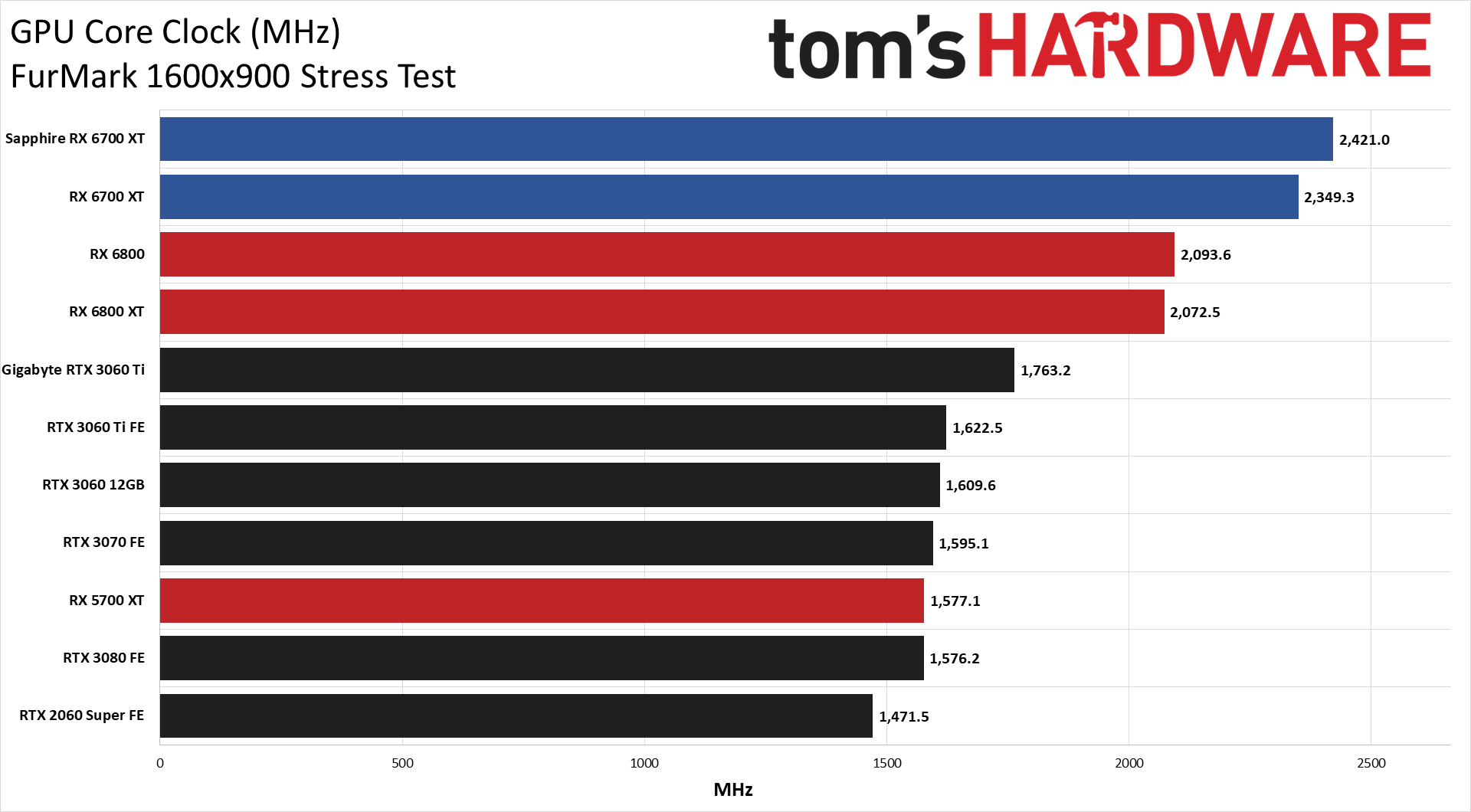

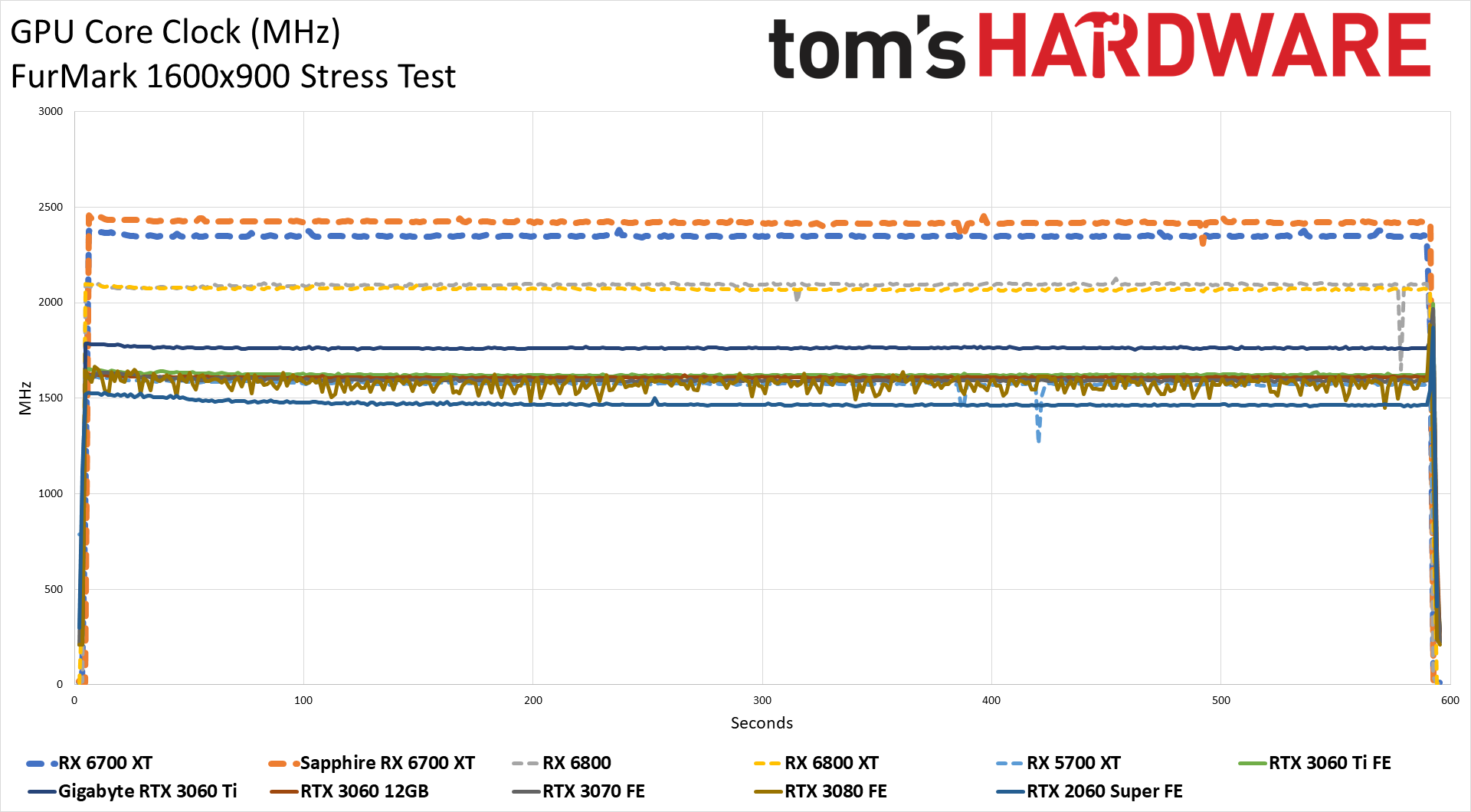

Power scales with voltage and clock speed, and the 6700 XT has the highest reference clocks of any GPU to date by about 175MHz. Interestingly, the Sapphire and reference cards are basically tied on average clocks in Metro Exodus, while the Nitro+ makes use of its additional power in FurMark. Maximum clocks during gaming tend to average roughly 2.5GHz, depending on the game, while the RX 6700 XT still cruises along at 2.35GHz in FurMark.
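As a rough illustration of why higher clocks cost power, here is a minimal sketch using the textbook approximation that dynamic (switching) power is proportional to capacitance times voltage squared times frequency. The ratios in the example are hypothetical and are not measurements from these cards, and the model ignores static (leakage) power.

```python
def relative_dynamic_power(clock_ratio: float, voltage_ratio: float) -> float:
    """Relative switching power under the textbook P ~ C * V^2 * f model.

    Both arguments are ratios against a baseline (1.0 = unchanged).
    Capacitance is treated as constant, and leakage power is ignored.
    """
    return clock_ratio * voltage_ratio ** 2


# Hypothetical example: 10% lower clock combined with 5% lower voltage.
example = relative_dynamic_power(clock_ratio=0.90, voltage_ratio=0.95)
print(f"Relative dynamic power: {example:.3f}")
```

Because voltage enters squared, even a small voltage reduction that accompanies a clock reduction has an outsized effect in this simplified model.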

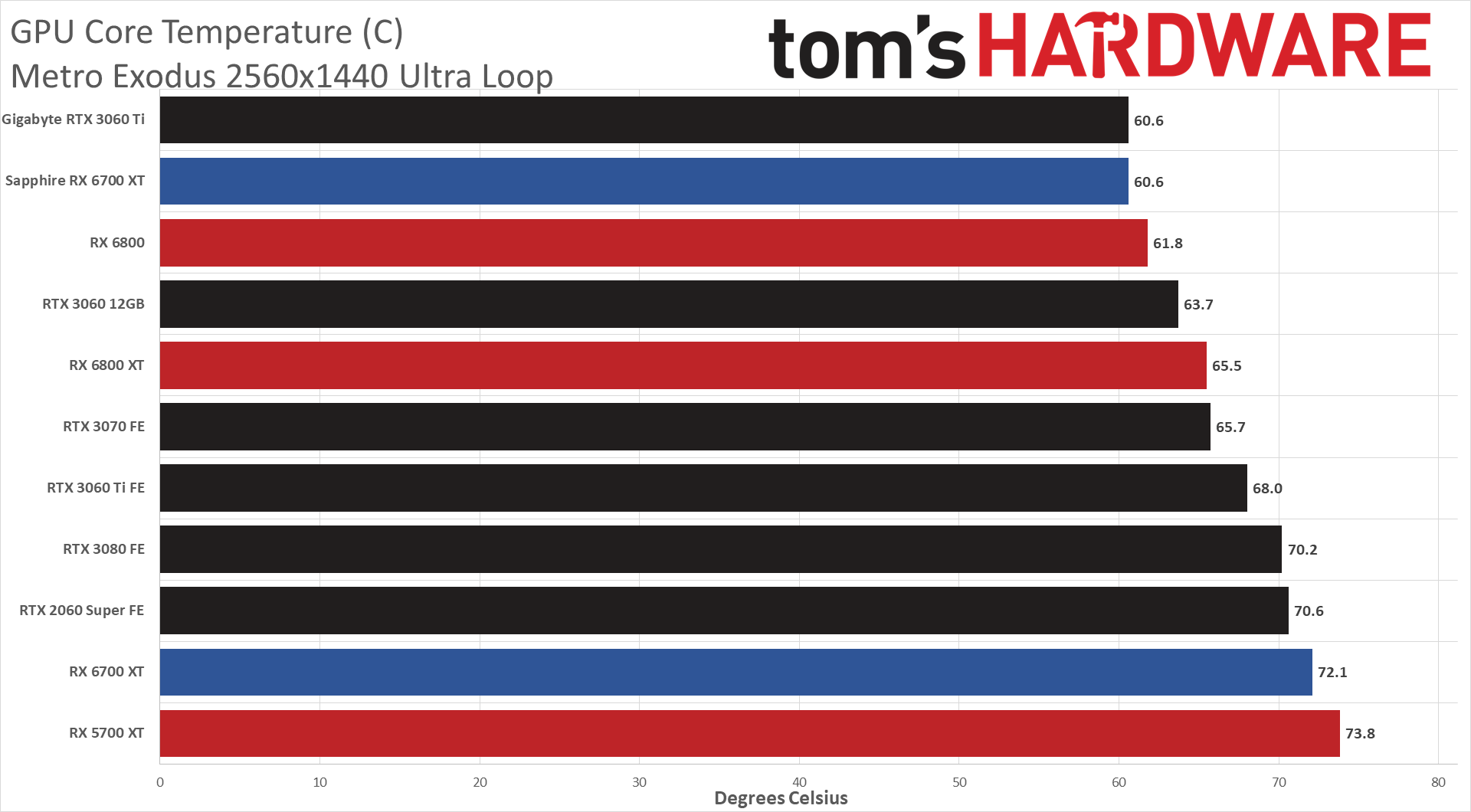

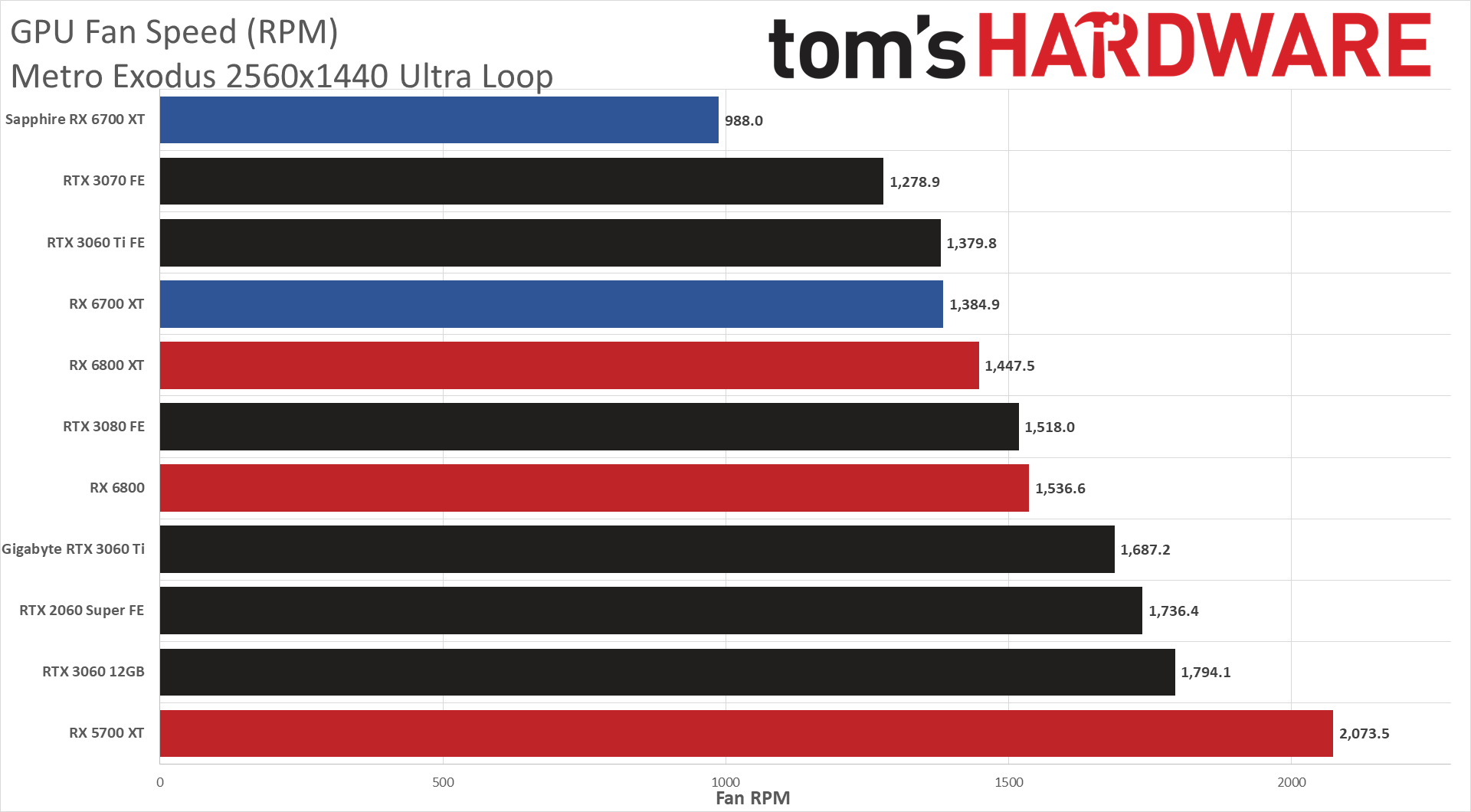

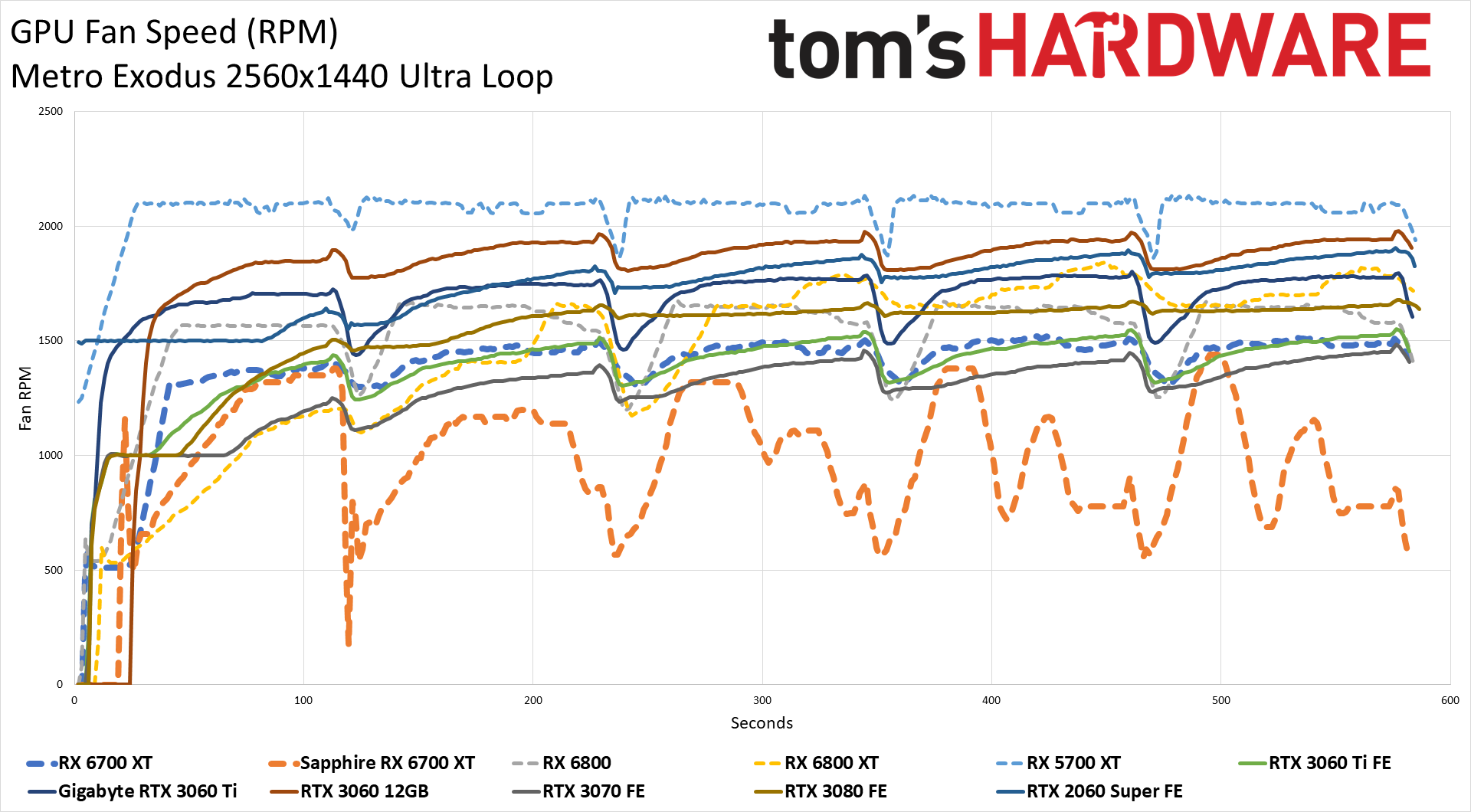

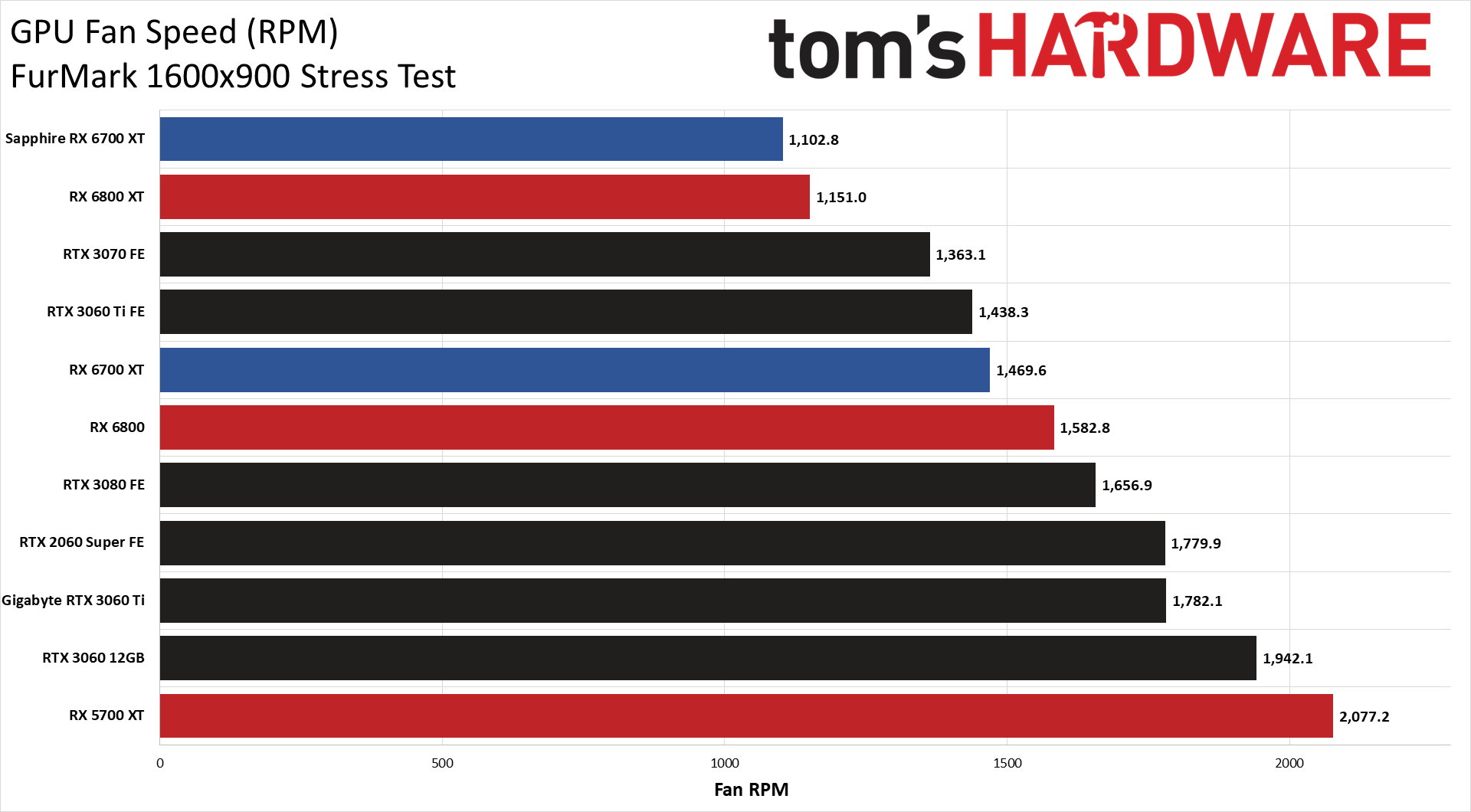

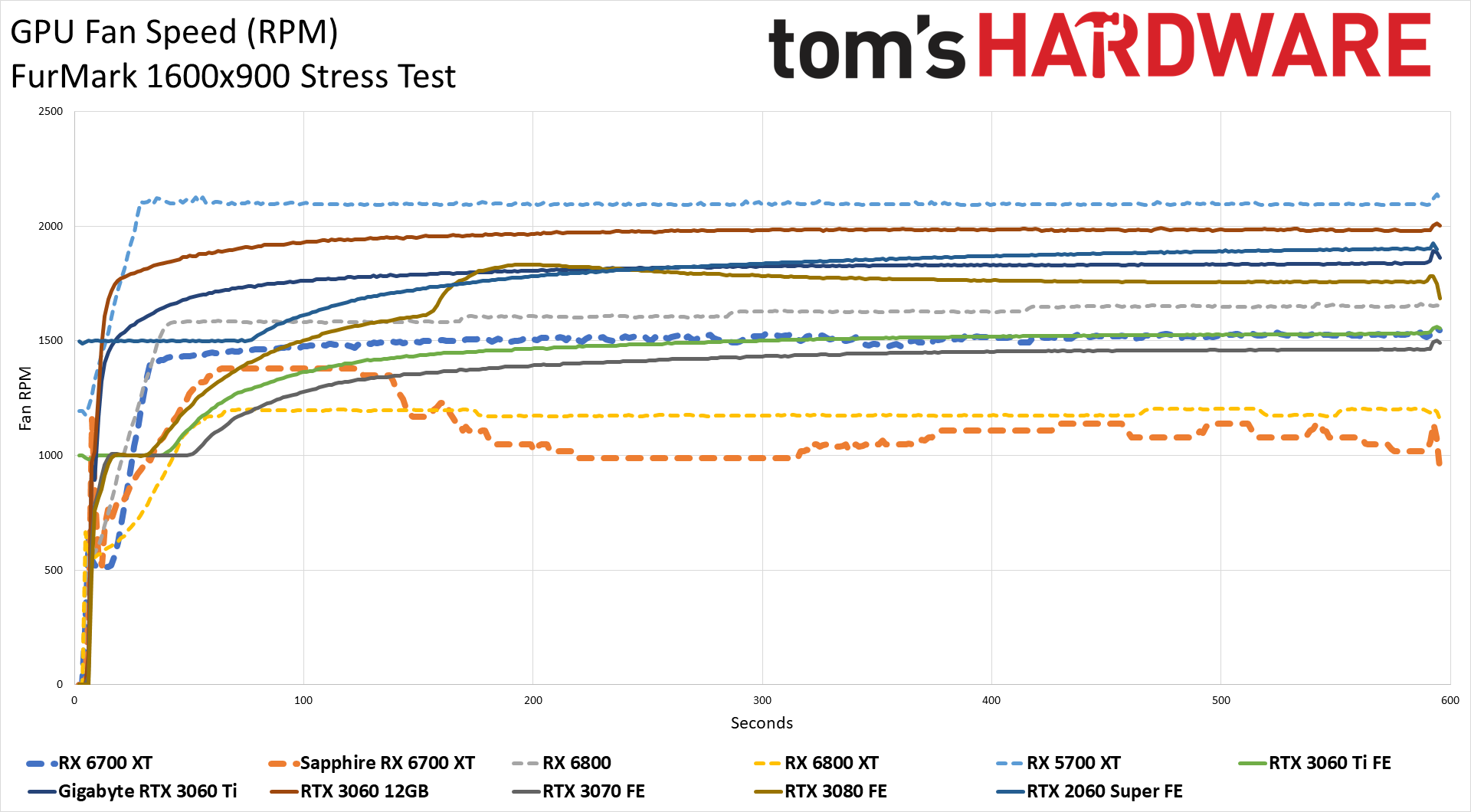

Fan speeds directly affect temperatures, and here we see the reduced cooling capacity of AMD's reference design. It's not loud, but it does hit higher temperatures — not that 72C is particularly hot. The larger fans help make up for the reduced number of fans, but the triple fan cards all achieve lower temperatures. Meanwhile, Sapphire's RX 6700 XT posts some of the lowest average fan speeds we've ever seen.

Lower fan speeds naturally mean lower noise levels. The noise floor of our test environment and equipment measures 34 dB(A), and we take readings at a distance of 15cm from the side of the GPU. We put the SPL (Sound Pressure Level) meter close to the GPU fans to focus on their noise, rather than case fans or other noise sources. The reference RX 6700 XT measured 40.4 dB(A), while Sapphire's card was only a touch above the noise floor at 36.0 dB(A).
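To put those readings in perspective, decibels are logarithmic, so a gap of a few dB is a larger ratio than it looks. The sketch below converts a dB difference into a sound pressure ratio using the standard 20 * log10 relationship. It only compares the raw readings quoted above. It does not subtract the noise floor's contribution, and it says nothing about perceived loudness.

```python
def pressure_ratio(db_difference: float) -> float:
    """Sound pressure ratio corresponding to a level difference in dB."""
    return 10 ** (db_difference / 20)


# Readings quoted in the text, in dB(A).
noise_floor = 34.0
reference_card = 40.4
sapphire_card = 36.0

ref_vs_sapphire = pressure_ratio(reference_card - sapphire_card)
print(f"Reference vs. Sapphire: {reference_card - sapphire_card:.1f} dB, "
      f"pressure ratio {ref_vs_sapphire:.2f}x")
print(f"Sapphire above noise floor: {sapphire_card - noise_floor:.1f} dB")
```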

Radeon RX 6700 XT Mining Performance

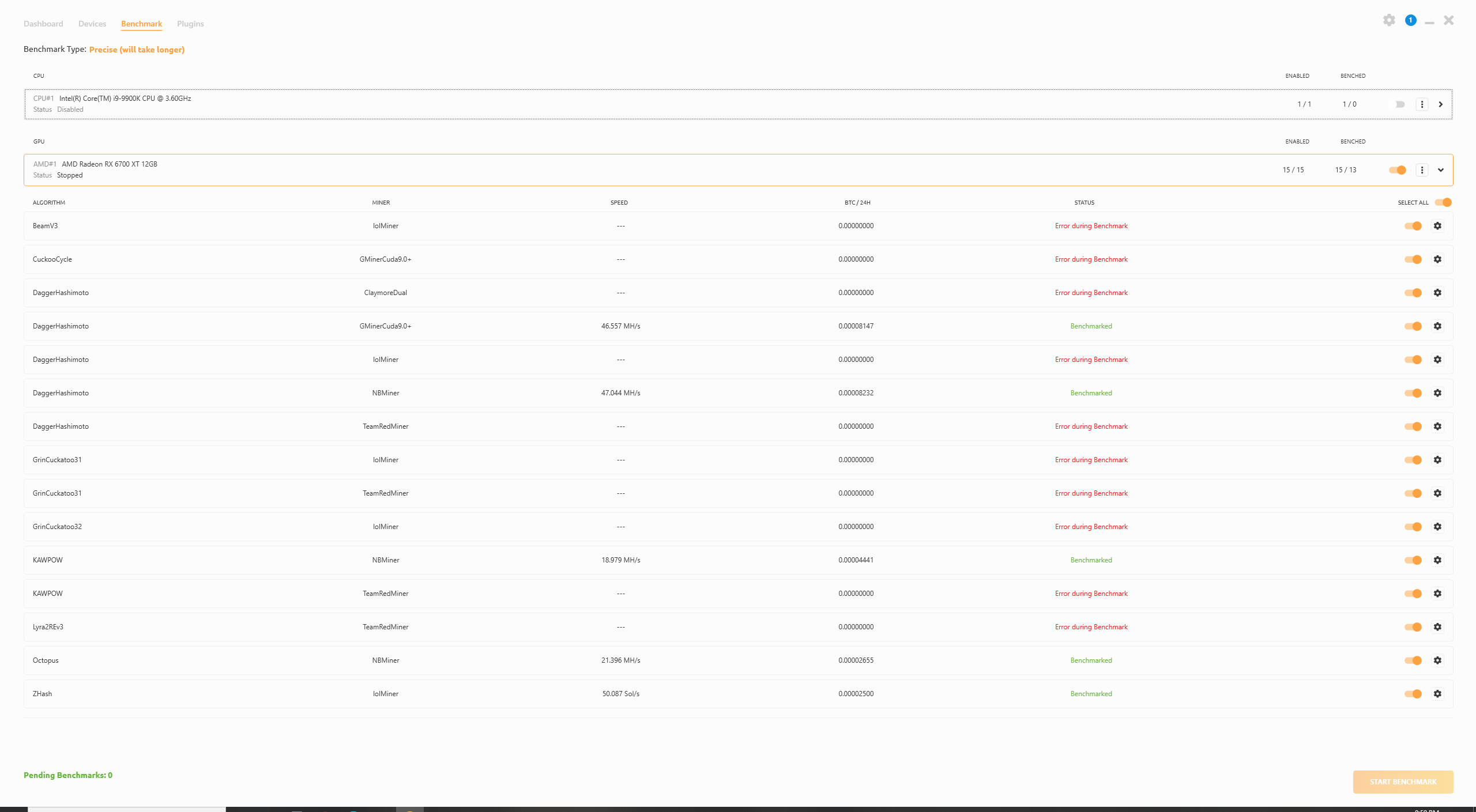

Unlike Nvidia's RTX 3060 12GB, AMD isn't even trying to stop miners from using its cards. On the one hand, that might seem like a poor decision, but we also saw how that all played out with Nvidia accidentally posting a development driver that doesn't fully implement the Ethereum mining speed limiter. Anyway, for better or worse, cryptocurrency mining is a thing right now, so we checked the hashing performance of the RX 6700 XT using NiceHashMiner.

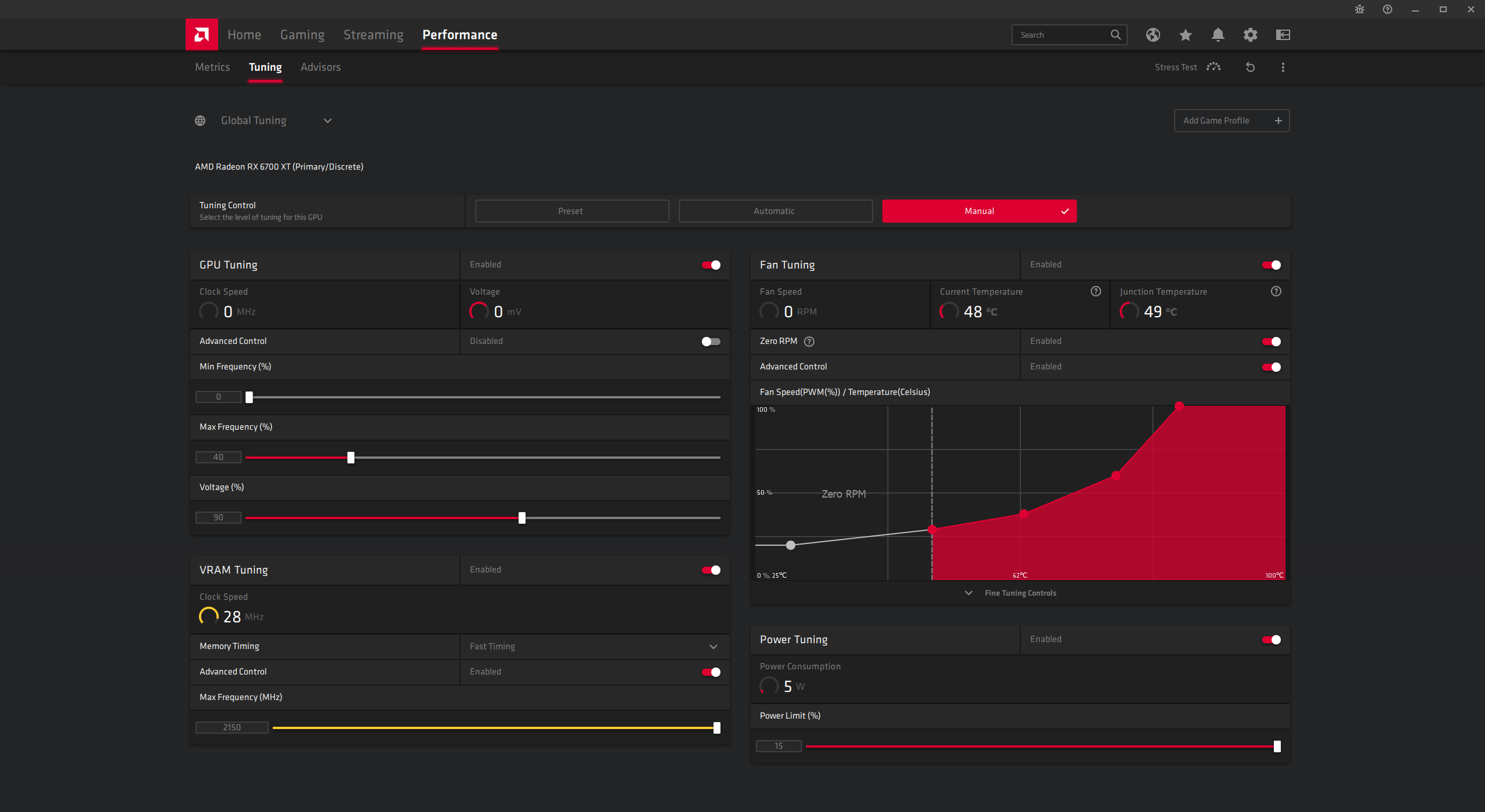

Before running the built-in benchmark in precision mode, we tuned for optimal Ethereum mining performance. Basically, that means finding the highest stable memory clocks and then dropping the GPU clocks (or power limit, depending on the card) until we find a good balance.

In the case of the RX 6700 XT, we settled on 50% maximum GPU clocks (around 1300MHz) with a 150MHz GDDR6 overclock (17.2Gbps effective) and fast memory timings enabled. We also set the power limit to the maximum — that appears to help AMD's RDNA2 cards make better use of the memory bandwidth even if GPU clocks don't improve. Actual power consumption was only about 120W with these settings.
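The memory figures above can be cross-checked with a little arithmetic. This is a minimal sketch, assuming the reported GDDR6 clock converts to an effective per-pin data rate with a multiplier of 8, which is the factor that makes 2150MHz line up with the 17.2Gbps quoted here. The stock clock is derived by subtracting the 150MHz overclock from that 2150MHz figure.

```python
# Assumption: effective data rate = reported memory clock x 8,
# chosen because it reproduces the article's 2150 MHz -> 17.2 Gbps figure.
GDDR6_TRANSFERS_PER_CLOCK = 8


def effective_gbps(memory_clock_mhz: float) -> float:
    """Effective per-pin data rate in Gbps for a reported GDDR6 clock in MHz."""
    return memory_clock_mhz * GDDR6_TRANSFERS_PER_CLOCK / 1000


tuned_clock_mhz = 2150                    # tuned memory clock
stock_clock_mhz = tuned_clock_mhz - 150   # before the 150 MHz overclock

print(f"Stock: {effective_gbps(stock_clock_mhz):.1f} Gbps")
print(f"Tuned: {effective_gbps(tuned_clock_mhz):.1f} Gbps")
```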

Considering Ethash tends to favor memory bandwidth over other factors, and the RX 6700 XT has a 192-bit bus instead of the 256-bit bus on the Navi 21 6800/6900 cards, the drop in hash rate pretty much matches the drop in bandwidth.

With tuning, we're able to get around 65MH/s out of the Navi 21 GPUs, while the RX 6700 XT maxed out at around 47.5MH/s. That's a 25% narrower memory bus and a roughly 27% lower hash rate. Maybe additional tuning would improve hash rates a bit more, but it's unlikely the 6700 XT will get much above 48MH/s with current mining software.
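Here is a quick sketch of that comparison, using only the figures quoted in this section (192-bit vs. 256-bit bus, 17.2Gbps tuned memory, 47.5MH/s vs. 65MH/s, and roughly 120W). The tuned memory speed of the Navi 21 cards isn't stated here, so the bandwidth comparison looks at bus width alone and assumes equal per-pin data rates.

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width x per-pin data rate / 8 bits."""
    return bus_width_bits * data_rate_gbps / 8


# Figures quoted in the article.
navi22_bus_bits, navi21_bus_bits = 192, 256
navi22_hash_mhs, navi21_hash_mhs = 47.5, 65.0
navi22_mining_power_w = 120.0  # "about 120W" with the tuned settings

bus_drop = 1 - navi22_bus_bits / navi21_bus_bits    # fraction of bus width lost
hash_drop = 1 - navi22_hash_mhs / navi21_hash_mhs   # fraction of hash rate lost
efficiency = navi22_hash_mhs / navi22_mining_power_w

print(f"Bus width drop: {bus_drop:.1%}")
print(f"Hash rate drop: {hash_drop:.1%}")
print(f"RX 6700 XT tuned bandwidth: {bandwidth_gb_s(192, 17.2):.1f} GB/s")
print(f"RX 6700 XT efficiency: {efficiency:.3f} MH/s per watt")
```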

It's worth pointing out that 48MH/s is actually lower than the previous-gen RX 5700/5700 XT, again, thanks to the narrower memory bus width. It's slightly faster than the RX 5600 XT due to the higher memory clocks and also matches the "oops, we accidentally unlocked it" RTX 3060 12GB in Ethash rates.

What does that mean for gamers? Not much, except that the other cards that can do around 48MH/s currently sell for $800 or more, which means you can pretty much count on the RX 6700 XT selling out, with only a very limited supply of cards priced remotely close to the official MSRP.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

bigdragon

These benchmarks show better performance than most of the others I've read this week. There's a few surprises in this review given that other sites showed performance in the 3060 to 3060 Ti range consistently. Seeing the 6700 XT beat the 3070 in a few tests is unexpected. I suppose that means AMD has been hard at work tweaking their drivers for better performance. The power consumption doesn't look as bad as I had been led to believe either. Looks like a solid GPU.

I think the 6700 XT could be a good replacement for my 1070. However, do I really want to waste even more time fighting bots, adding to cart only to be unable to checkout, or being led to believe I have a shot at getting a GPU when I really never did? No. I'm not waking up early to watch a page that instantly flips from "coming soon" to "sold out" again.

tennis2

Mining Performance -

"In the case of the RX 6700 XT, we settled on 50% maximum GPU clocks (which doesn't actually mean 50%, but whatever -- actual clocks settled in around 2.13GHz)"

Sooo, you don't actually know how to detune the CPU, or? Hint - toggle that "advanced control" slider to ON.

Power efficiency is poor as expected. Meh. That tiny chainsaw whacked off a few too many CUs.

oenomel

Admin said: The Radeon RX 6700 XT takes a step down from Big Navi, trimming the fat and coming in at $479 (in theory). Availability and actual street pricing are the keys to success, as the card otherwise looks promising. Here's our full review. AMD Radeon RX 6700 XT Review: Big Navi Goes on a Diet

Who really cares? They won't be available for months if ever?

JarredWaltonGPU

tennis2 said: Mining Performance - Sooo, you don't actually know how to detune the CPU, or? Hint - toggle that "advanced control" slider to ON. Power efficiency is poor as expected. Meh. That tiny chainsaw whacked off a few too many CUs.

And if you knew how AMD's drivers and tuning section work, you'd understand that toggling that "advanced control" slider just changes the percentage into a MHz number. Hint - toggle the "condescending tone" slider to OFF.

But I did make an error: I was looking at the memory clock, not the GPU clock, when I thought the GPU was still running at 2.13GHz. I've done a bit more investigating, now that I'm more awake (it was a late night, again — typical GPU launch). With the slider at 40% (which gives a MHz number of something like 1048MHz), I got nearly the same mining performance as with the slider at 65% (1702MHz). Here are three screenshots, showing 40%, 50%, and 65% Max Frequency settings (but with advanced control ticked on so you can see the MHz values). This is with the Sapphire Nitro+, so the clocks are slightly higher than the reference card, but the performance is pretty similar (actually, the reference card was perhaps slightly faster at mining for some reason — only like 0.3MH/s, but still.)

I've updated the text to remove the note about the max frequency not appearing to work properly. It does, my bad. The description of the tuned settings was and is still correct: 50% Max Freq, 112% power, 2150MHz GDDR6, slightly steeper fan curve, 115-120W.

Wendigo

"We're interested in hearing your thoughts on what features matter most as well. We know AMD and Nvidia make plenty of noise about certain technologies, but we question how many people actually use the tech. If you have strong feelings for or against a particular tech, let us know in the comments section."

To answer your question, I would say that the most interesting AMD feature is Chill. Sure, it's useless if you're only looking for max FPS or benchmark scores. But from a practical standpoint, for the average gamer, it works wonders. This is particularly true when used with a Freesync monitor and setting the Chill min and max values to the range supported by the monitor, thus always keeping the FPS in the Freesync range. There's no obvious difference when playing most games (particularly for the average gamer not involved in competitive esports), but the card then runs significantly cooler and thus quieter, making for an overall more pleasant gaming experience.

bigdragon

Asus released their 6700 XT cards about an hour ago...for $350 over AMD's MSRP. The 6700 XT is an absolutely horrific value at $829. The stupid things still sold out almost instantly. Plenty of humans on Twitter complaining about bots buying everything up and immediately flipping the cards on Ebay. AMD and Asus have a lot of explaining to do.

My 1070 is now worth double what I paid for it. I'm going to sell it this weekend and forget about AAA gaming for the rest of the year. Plenty of indie games get by happily with lower-end GPUs or iGPs.

InvalidError

bigdragon said: AMD and Asus have a lot of explaining to do.

No explaining to do here, it is simply the free market at work. Demand is higher than supply? Raise prices until equilibrium is reached.

deesider

InvalidError said: No explaining to do here, it is simply the free market at work. Demand is higher than supply? Raise prices until equilibrium is reached.

Not sure what the OP was hoping for - a golden ticket system like Willy Wonka?!

bigdragon

InvalidError said: No explaining to do here, it is simply the free market at work. Demand is higher than supply? Raise prices until equilibrium is reached.

AMD's release date isn't until 9 AM EDT tomorrow morning -- not today. There were also no promised anti-bot measures in place, again, as usual. AMD's AIB's are also far more aggressive about marking up Radeon prices as compared to RTX prices.

deesider said: Not sure what the OP was hoping for - a golden ticket system like Willy Wonka?!

Yeah, that would be swell.

What I seriously want to do is just checkout with 1 GPU without the GPU being ripped out of my cart mid-checkout or the vendor cancelling the order after it's been placed.

jeremyj_83

bigdragon said: AMD's release date isn't until 9 AM EDT tomorrow morning -- not today. There were also no promised anti-bot measures in place, again, as usual. AMD's AIB's are also far more aggressive about marking up Radeon prices as compared to RTX prices.

I forgot what time they went on sale and checked within 5 minutes of the release and they are sold out online everywhere. You can get a couple different ones from a physical Microcenter location as they are in store only. Two of those are at MSRP even.