The combination of the Zen 3 CPU architecture and the Radeon RX Vega graphics engine propels the six-core Ryzen 5 5600G to class-leading gaming performance from an integrated graphics engine at its price point. Overall, the chip serves up a compelling blend of price and performance for the target market, effectively destroying the value prop of its more expensive sibling, the Ryzen 7 5700G.

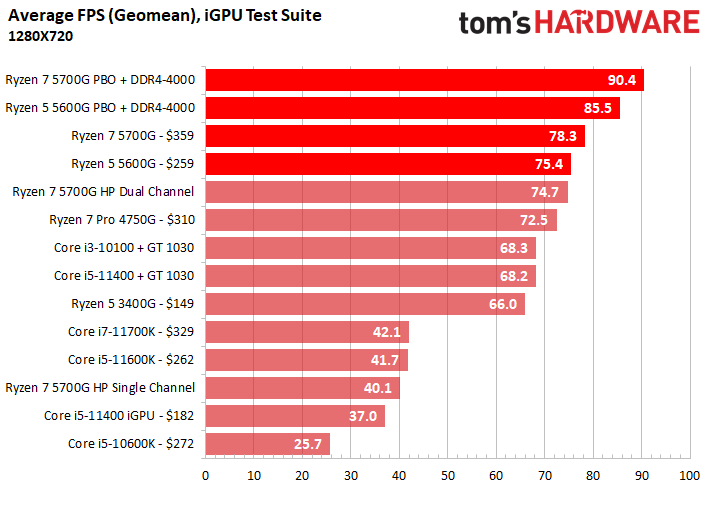

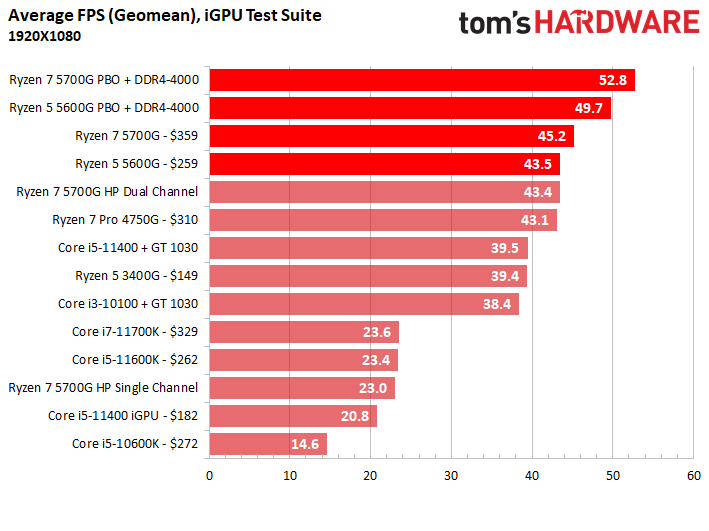

In fact, given that the $259 Ryzen 5 5600G's iGPU performance lands within 4% of the $359 Ryzen 7 5700G but for roughly 28% less cash, it's also the best value APU for gaming on the market.
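For readers who want to check the value math, here is a minimal Python sketch that uses only the two list prices and the "within 4%" figure quoted above. Treating the 5600G as exactly 4% slower is our assumption (the worst case of that range), and the function names are purely illustrative.

```python
# Prices and the iGPU performance gap as quoted in the review.
PRICE_5600G = 259.0
PRICE_5700G = 359.0
PERF_5700G = 1.00  # normalize the 5700G's iGPU performance to 1.0
PERF_5600G = 0.96  # assumption: worst case of "within 4%"


def percent_cheaper(cheap: float, expensive: float) -> float:
    """How much less the cheaper part costs, as a percentage of the pricier one."""
    return (expensive - cheap) / expensive * 100.0


def perf_per_dollar(perf: float, price: float) -> float:
    """Relative performance delivered per dollar spent."""
    return perf / price


discount = percent_cheaper(PRICE_5600G, PRICE_5700G)
value_ratio = (perf_per_dollar(PERF_5600G, PRICE_5600G)
               / perf_per_dollar(PERF_5700G, PRICE_5700G))

print(f"5600G costs {discount:.1f}% less than the 5700G")
print(f"5600G delivers {value_ratio:.2f}x the iGPU performance per dollar")
```

Under those assumptions, the 5600G comes out about 28% cheaper and delivers roughly a third more iGPU performance per dollar. Street prices move quickly, so swap in current numbers before drawing conclusions.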

Quick disclaimer here: The chip market is currently in a state of flux, so it's hard to give solid buying advice that won't expire in a few days — CPU and GPU pricing and availability are extremely volatile. Additionally, recent signs point to GPU supply and pricing slowly improving. Be sure to check updated pricing before pulling the trigger on your build.

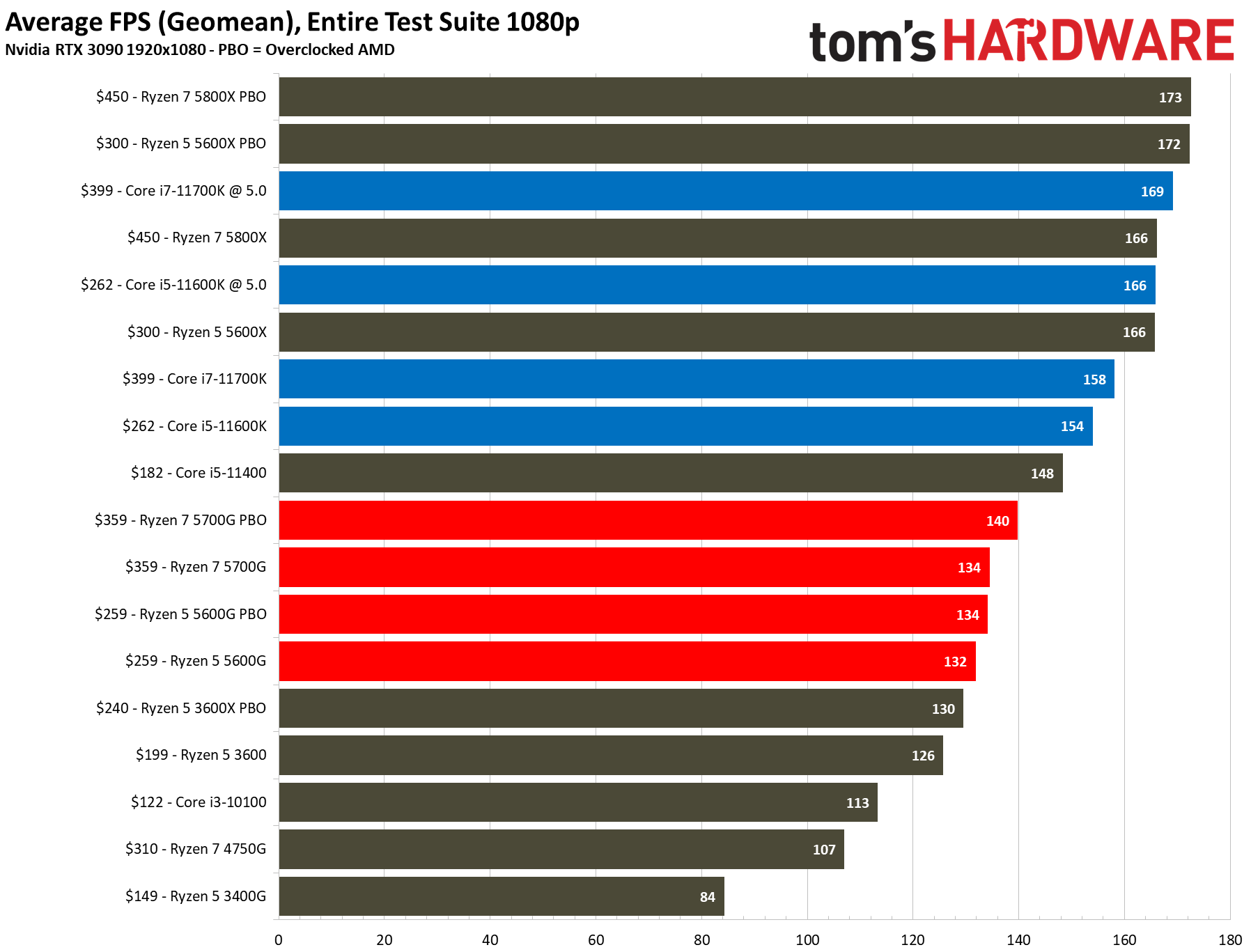

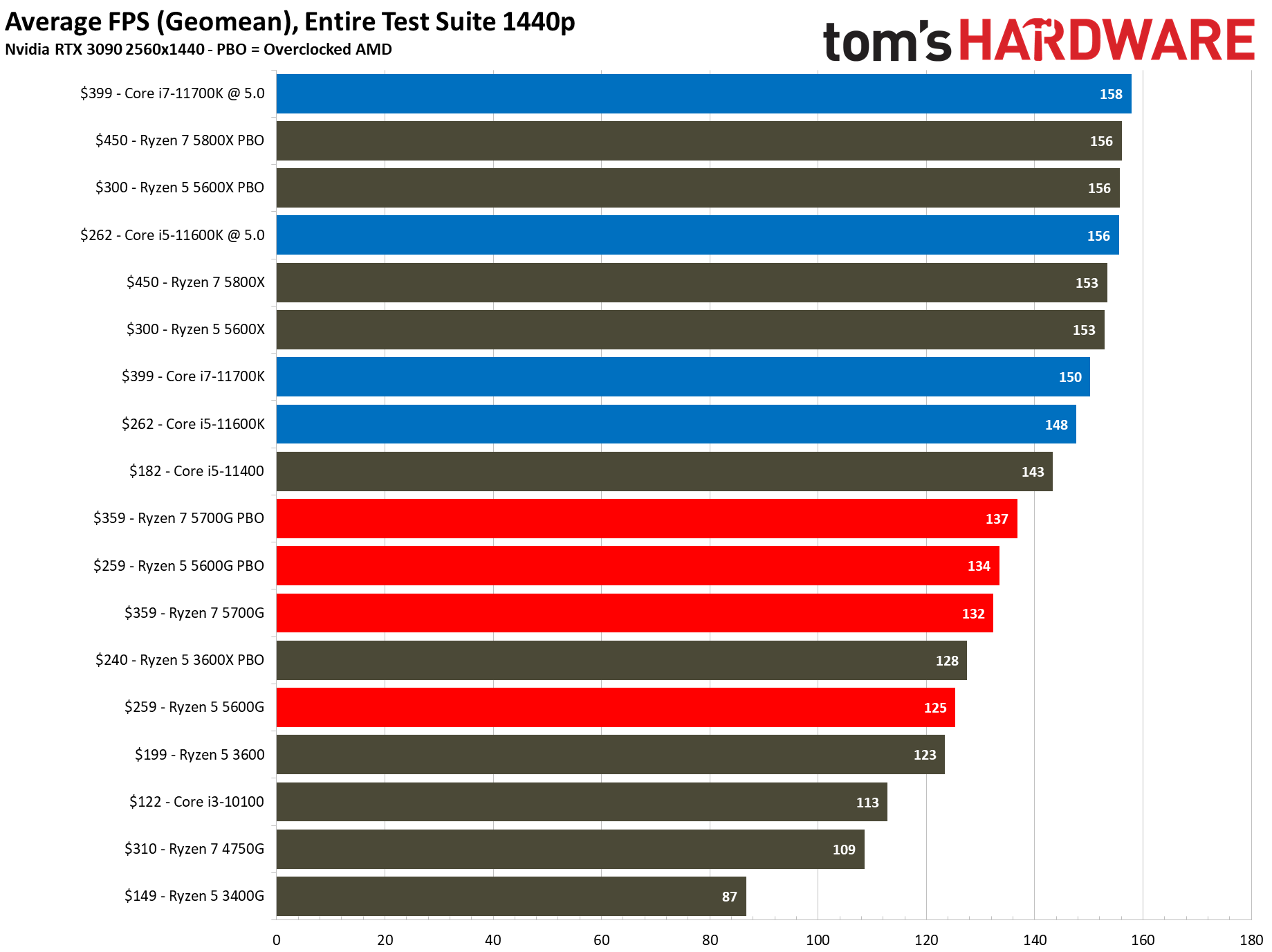

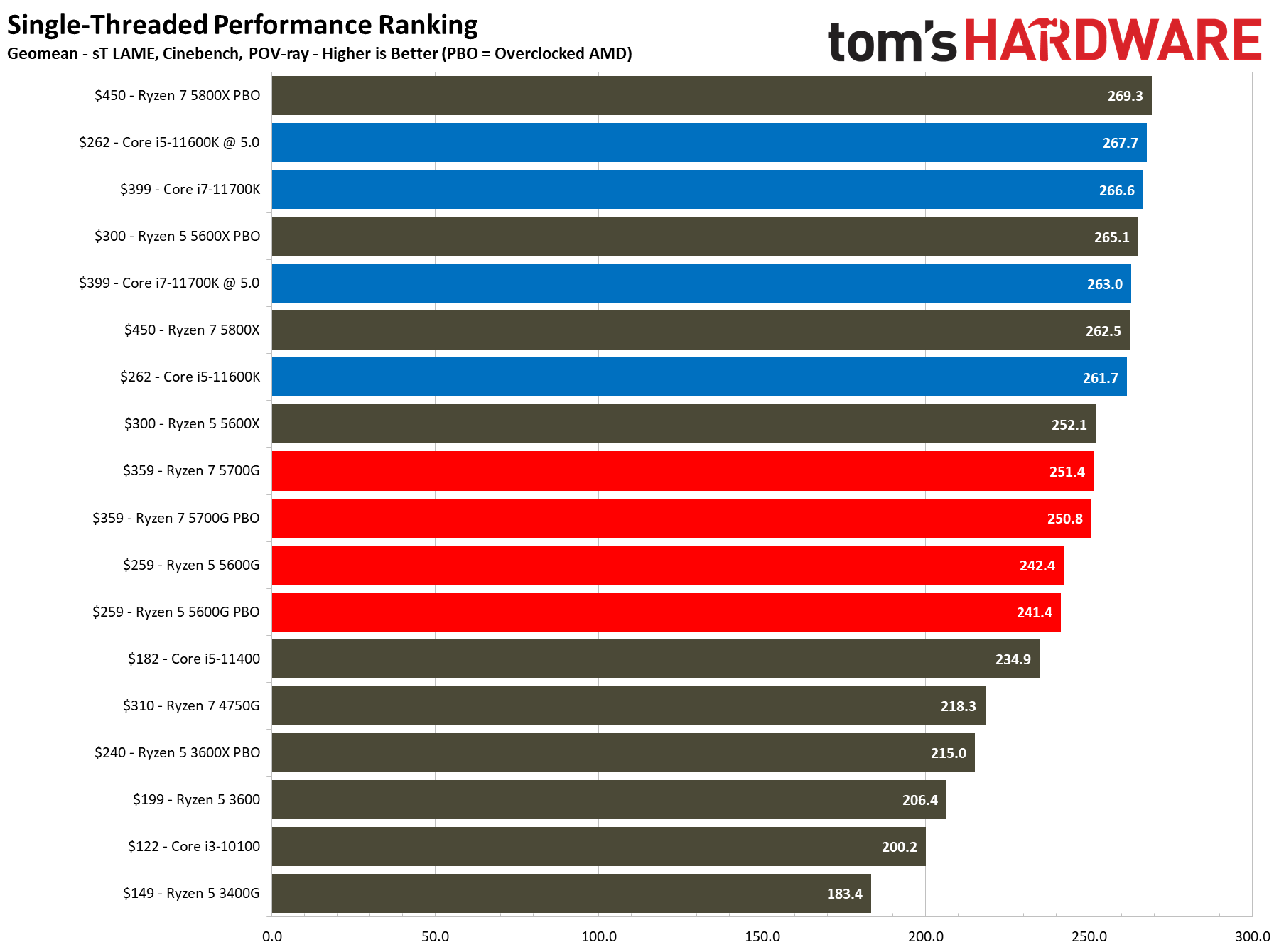

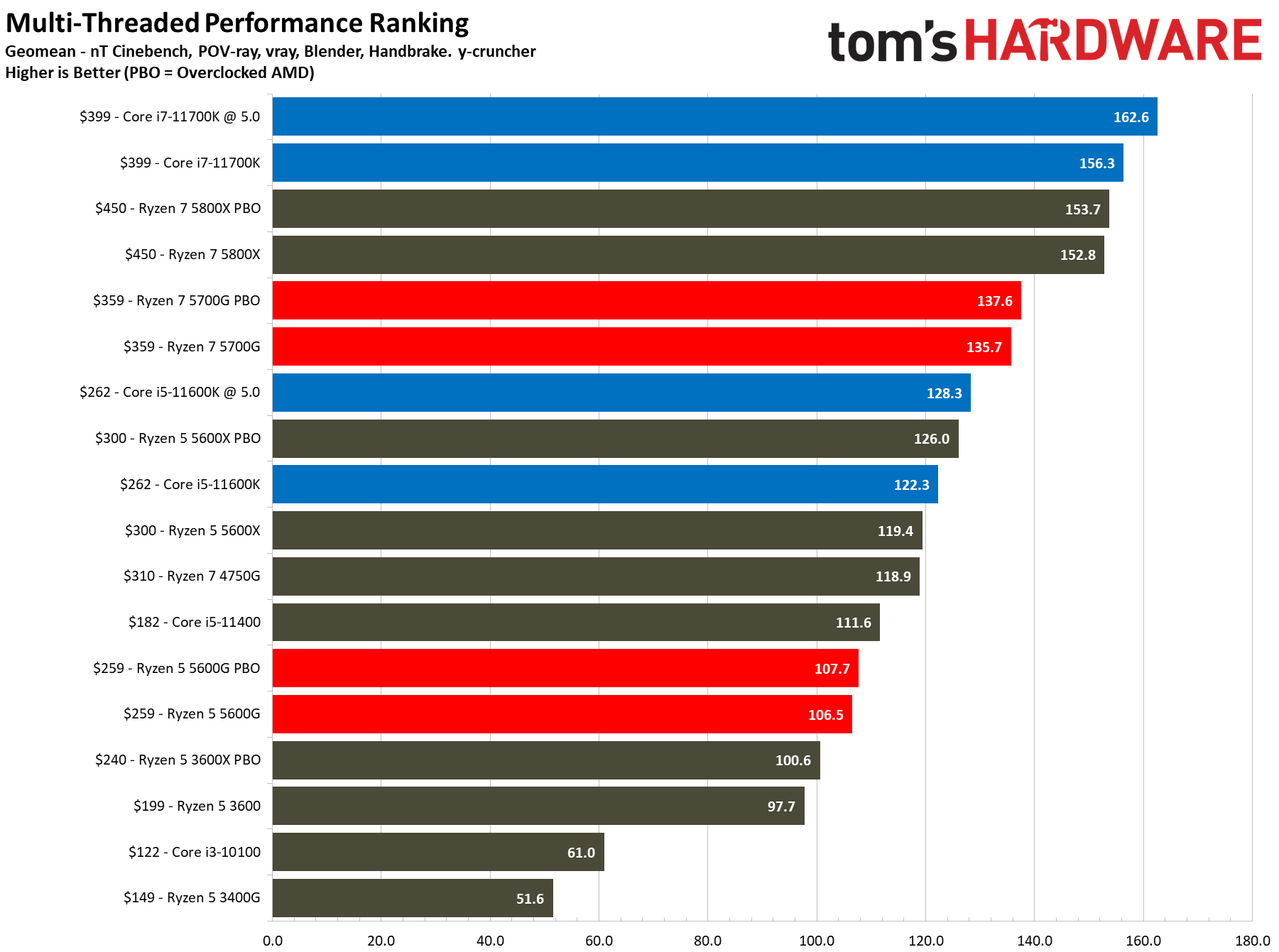

The charts below outline three areas of performance: The geometric mean of our suite of integrated graphics tests at both 1920x1080 (FHD) and 1280x720 resolutions, the geometric mean of performance with a discrete GPU, and performance in single- and multi-threaded workloads.
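As a rough illustration of how a geometric mean condenses a test suite into one score, the Python sketch below averages per-game frame rates. The frame rates and chip labels are made-up placeholders, not our test results, and `geomean` is simply an illustrative helper.

```python
import math


def geomean(values):
    """Geometric mean: the n-th root of the product of n positive values."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("geometric mean needs at least one value, all positive")
    # Summing logarithms avoids overflow when multiplying many results together.
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical average frame rates (fps) for two chips; placeholders only.
fps_chip_a = {"game_1": 60.0, "game_2": 30.0, "game_3": 120.0}
fps_chip_b = {"game_1": 50.0, "game_2": 33.0, "game_3": 100.0}

score_a = geomean(list(fps_chip_a.values()))
score_b = geomean(list(fps_chip_b.values()))

print(f"Chip A suite score: {score_a:.1f} fps")
print(f"Chip B suite score: {score_b:.1f} fps")
print(f"Chip A leads by {(score_a / score_b - 1) * 100:.1f}%")
```

The geometric mean is a common choice for this kind of summary because a single high-frame-rate title can't dominate the result the way it would in a plain arithmetic average.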

As long as you're willing to sacrifice fidelity and resolution, and keep your expectations in check, the Ryzen 5 5600G's Vega graphics have surprisingly good performance in gaming. The 5600G's Vega graphics served up comparatively great 1280x720 gaming across numerous titles, but options become more restricted at 1080p. Of course, you can get away with 1080p gaming, but you'll need to severely limit the fidelity settings with most titles.

The Ryzen 7 5700G's iGPU performance benefits more from overclocking than the 5600G due to its extra graphics and CPU resources. Still, we don't think that changes the value calculus much, especially since most folks using these chips won't splurge on expensive memory kits — you should shoot for a bog-standard DDR4-3200 kit for this class of chip. Additionally, you'll need a more robust cooler than the one that AMD chucks in the box. But again, spending a comparatively ridiculous amount of money for a cooler for this class of chip doesn't make much sense. The Ryzen 5 5600G is an excellent overclocker in all facets, like CPU, GPU, memory and fabric, so tuners will find plenty to tinker with.

We wouldn't recommend purchasing either Cezanne chip with the sole purpose of using it with a discrete GPU — that defeats the purpose of the Vega graphics. However, these strange times of the GPU shortage might compel some to use the Cezanne chips as a stopgap until pricing normalizes. When paired with a discrete GPU, the Ryzen 7 5700G will offer more runway for future GPU upgrades than the 5600G, but not by enough to justify the higher price tag. Additionally, the 5600G proved pretty agile, too, beating out the Ryzen 5 3600.

Based on CPU pricing alone, Intel's competing 11600K is much more potent when paired with a discrete GPU. However, its integrated graphics are lacking, so you'll be stuck with extremely limited gaming options, meaning it isn't a good stopgap solution unless you're only interested in very light games. For that purpose, at least in this price range, you'll need to look for a pairing like the Core i3-10100 and Nvidia GT 1030. That combo couldn't keep pace with the 5600G in our integrated graphics testing, but the Core i3-10100 will give you a bit more headroom when paired with a higher-powered GPU. However, that headroom shouldn't be meaningful enough to throw the decision in the 10100's favor, especially given that you would pair these chips with a lower-end discrete GPU than we've tested here.

In other words, if you're looking for a stop-gap solution that will pair well with a discrete GPU in the future, the Ryzen 5 5600G is the best choice. That calculus might change a bit if you broaden your options to the fickle and volatile second-hand GPU market, but most pairings of a used previous-gen GPU and Intel processor won't stack up well against the 5600G.

If you choose the Ryzen 5 5600G over the 'standard' six-core Ryzen 5 5600X, you gain the Radeon graphics engine but sacrifice 200 MHz of peak CPU boost clocks and half the L3 cache. The 5600G does have a 200 MHz higher base clock, though. Choosing Cezanne also means you step back from 24 lanes of PCIe 4.0 support to 24 lanes of PCIe 3.0. While PCIe 4.0 doesn't deliver any significant gains in gaming performance today, that could change in the future with the Windows 11 DirectStorage feature, which will utilize NVMe SSDs more fully. You'll also lose out on the (up to) doubled storage throughput for day-to-day file transfers and productivity applications.
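To put the "(up to) doubled" storage throughput in numbers, this short Python sketch computes theoretical link bandwidth from the published PCIe signaling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0, both with 128b/130b encoding). The x4 link width is our assumption, chosen because it is the typical connection for an NVMe SSD, and the results are ceilings before protocol overhead rather than measured drive speeds.

```python
# Per-lane signaling rates in gigatransfers per second.
TRANSFER_RATE_GT_S = {"3.0": 8.0, "4.0": 16.0}
# Both generations use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(generation: str, lanes: int) -> float:
    """Theoretical one-direction link bandwidth in GB/s, before protocol overhead."""
    gigabits_per_s = TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_s / 8  # bits to bytes


NVME_LANES = 4  # assumption: a typical NVMe SSD uses an x4 link

gen3 = link_bandwidth_gb_s("3.0", NVME_LANES)
gen4 = link_bandwidth_gb_s("4.0", NVME_LANES)

print(f"PCIe 3.0 x{NVME_LANES}: {gen3:.2f} GB/s")
print(f"PCIe 4.0 x{NVME_LANES}: {gen4:.2f} GB/s")
print(f"Ratio: {gen4 / gen3:.1f}x")
```

Real drives land below these ceilings, so the practical gap depends on the SSD and the workload.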

AMD positions the 5600G as the "non-X" equivalent to the Ryzen 5 5600X, but we don't think it lives up to the traditional value prop we've seen with prior non-X models. Overall, if you already have a discrete GPU for your build, most enthusiasts will be better served with other alternatives, be they from the Ryzen 5000 product stack or Intel's lineup. Pricing is fluid, though, so be sure to check our list of Best CPUs for the latest advice.

New builds are an entirely different matter, though. For extreme budget gaming, small form factor (SFF), and HTPC rigs, the Ryzen 5 5600G offers the lion's share of the more expensive 5700G's gaming performance, but at a much more palatable price point. If AMD frees the Ryzen 3 5300G from its OEM shackles, it could be the new budget champion. Unfortunately, the company hasn't committed to releasing it to retail. For extreme budget gaming rigs built around integrated graphics, or if you're looking for a fantastic stop-gap chip until the GPU shortage recedes, the Ryzen 5 5600G will be the chip to beat for the foreseeable future.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


hotaru251 i would personally add to the cons: Vega

the only problem with this chip is the vega graphics. if they had RDNA it would basically destroy Intel's lower cpu's in cost to performance.

pcie 3.0 isn't really a con at this type of chip. It's a budget chip.

ndperson Actually it isn't Vega that is limiting their performance but their ddr4 ram. That is why they don't update beyond vega. Vega itself is limited by the speed of the ram so upgrading to rdna really wouldn't help much

twotwotwo Probably not a priority for Intel, but a TGL desktop chip (that non-OEMs can get) might make this segment more interesting, going by laptop benchmarks.

That or desktop Van Gogh 🤣 but I imagine Valve has all those booked for a while.

brodon Building an RGB mATX non-gaming system on a budget, and, not understanding, at 79, much of the language from the tech savvy contributors, I rely a lot on certain comments and reviews to make purchase decisions.

This G series integrated graphic CPU, on a B450M DS3H v.2 mobo and Cooler Master G100M low profile cooler in a NZXT h501i case looks like a workable base configuration.

The rest of the components should be elementary.

hrudy Most of you won't care about this issue. I was evaluating the AMD Ryzen 5 5600G using an existing Linux image based on CentOS 7. I wanted to get away from Intel and try AMD. However, this processor consistently generated panic traps, both from the image and even from the CentOS 7.9 install disk. I was using a GIGABYTE B550M DS3H. I have never seen an x86_64 part generate Linux panic traps by just booting. Usually there are minor issues like not seeing the audio or ethernet components. And yes, the Ryzen 5 5600G will boot from later kernels.

And yes an AMD cpu without the GPU enabled works fine. I then ran into the second problem which is that even with a late Ubuntu distribution the AMD DRM gpu code did not want to install. I have never encountered this level of incompatibility since I've been using Linux which is around Y2k. Naturally AMD support doesn't care since it is Linux. For this level of incompatibility I might as well be running an ARM processor.

tracker1 hrudy said: "... AMD support doesn't care since it is Linux. For this level of incompatibility I might as well be running an ARM processor."

It's definitely a mixed bag. I learned this using an RX 5700XT at release. I had to run beta/alpha kernels for the first 6 months or so.. Ubuntu 20.04 was really the first release with decent in the box support and even then better with later kernels. So it's not too surprising.

I also had similar issues with Intel AX wireless at that time (Around x570 Master, motherboard). And that doesn't even cover trying to get RGB in Linux working.

I've since gone back to solid, no window, case. I'm also now on an RTX 3080, as I'm running some video AI stuff that didn't do well with AMD at the time.

Nvidia drivers haven't been the best either. At least at this point it's mostly mature. Unfortunately, bleeding edge hardware and Linux means a bit of pain.

I'd suggest only running a very recent kernel on hardware released at least 4 months before your OS release. CentOS stable is not that. Should run a recent Fedora instead. I'm not that up on rpm distros, preferring Debian based myself. I know there are a couple newer white box RedHat options though.

render1967 Hi, guys! I have a question. Help me, please! Does the built-in video in 5600G support analog signal via D-sub? I ask because I have a problem with this - there's no signal via D-sub. Computer configuration: CPU Ryzen 5 5600G, MB ASRock B450M Pro4-F R2.0, RAM ADATA XPG GAMMIX D10 16GB (2x8GB) DDR4 3000MHz, HDD ADATA Ultimate SSD SU650 120GB + Apacer AS2280P4 M.2 PCIe 512GB, PSU FORTRON HYPER K PRO 500W, monitor ASUS VH222S

Girl_Downunder hrudy said: "Most of you won't care about this issue. I was evaluating the AMD Ryzen 5 5600G using an existing Linux image based on CentOS 7. I wanted to get away from Intel and try AMD. However, this processor consistently generated panic traps, both from the image and even from the CentOS 7.9 install disk. I was using a GIGABYTE B550M DS3H. I have never seen an x86_64 part generate Linux panic traps by just booting. Usually there are minor issues like not seeing the audio or ethernet components. And yes, the Ryzen 5 5600G will boot from later kernels.

And yes an AMD cpu without the GPU enabled works fine. I then ran into the second problem which is that even with a late Ubuntu distribution the AMD DRM gpu code did not want to install. I have never encountered this level of incompatibility since I've been using Linux which is around Y2k. Naturally AMD support doesn't care since it is Linux. For this level of incompatibility I might as well be running an ARM processor."

I wondered if you asked at the Ubuntu forums on this issue. I personally run Mint Mate on an old 4th gen i5 desktop. I'm looking to upgrade to "nearly" the newest (not worried about PCIe 4) and have been eyeing the 5600G due to price as well as preferring iGPU as a backup if dedicated fails. Reading this has me a bit worried. Runs off to the Mint forums to see what I find there!