
Ryzen 5 5600G Overclocking and Thermals

| | CPU Cores | Radeon RX Vega Graphics | Memory | Infinity Fabric (FCLK) |
| --- | --- | --- | --- | --- |
| Ryzen 5 5600G Overclock | Precision Boost Overdrive (PBO) | 2300 MHz | DDR4-4000 | 2000 MHz |

The Ryzen 5 5600G will compete with budget processors paired with low-end discrete GPUs, like the Core i3-10100 paired with an Nvidia GT 1030 GPU, so we've added that setup to our line of test systems.

When it comes to overclocking, the Ryzen 5 5600G is shockingly similar to the Ryzen 7 5700G. That's a good thing. The integrated RX Vega graphics engine proved to be an easy overclocker, jumping up to 2.3 GHz (a 400 MHz improvement over stock settings) with the SoC dialed in at 1.35V (this power domain feeds both the iGPU and SoC). Higher settings introduced artifacting, though, and we didn't attempt to add in too much additional voltage to the graphics due to our memory and core overclocks. Notably, this is only 100 MHz less iGPU frequency than we achieved with the 5700G, and that was the only difference in overclocks between the two chips.

APUs are one of the trickiest types of chips to overclock, at least in terms of balancing the different units. Because gaming performance scales far better with iGPU and memory throughput than with core clocks, we enabled AMD's auto-overclocking Precision Boost Overdrive (PBO) for the CPU cores rather than setting a manual all-core overclock. PBO alleviated the lion's share of our difficulties balancing the CPU, iGPU and memory clocks, but further tuning might have yielded a better all-core overclock. The silicon lottery always comes into play, so your mileage might vary.

We've heard that good Cezanne chips support a fabric clock (FCLK) up to 2400 MHz, but again, it's a balancing act. We settled for a 2000 MHz FCLK and dialed in an easy DDR4-4000 with a 1:1 FCLK/memory ratio. This 'coupled mode' is the sweet spot for memory latency on AMD's Zen 2 and 3 platforms, but dialing in a higher FCLK can unlock higher coupled frequencies. We're aiming to represent a basic overclock, and a DDR4-4000 kit is already very pricey for a build with an APU, so we stopped there. You should shoot for a DDR4-3200 kit with this type of chip, provided you can nab one at a decent price.
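The 1:1 relationship described above comes down to simple arithmetic: a DDR4 rating counts transfers per second, and DDR memory transfers twice per clock, so the real memory clock is half the rating. The following is a minimal sketch of that calculation, not a tuning tool; the function names `coupled_fclk_mhz` and `is_coupled` are made up for illustration.

```python
def coupled_fclk_mhz(ddr_rating: int) -> float:
    """Return the FCLK (MHz) needed to run a DDR4 kit in 1:1 coupled mode.

    DDR memory transfers data twice per clock, so a DDR4-4000 kit
    runs at a 2000 MHz memory clock. In coupled mode the fabric
    clock matches that memory clock.
    """
    return ddr_rating / 2


def is_coupled(ddr_rating: int, fclk_mhz: float) -> bool:
    """True when the fabric clock matches the memory clock (1:1)."""
    return coupled_fclk_mhz(ddr_rating) == fclk_mhz


def max_coupled_rating(max_fclk_mhz: float) -> float:
    """Highest DDR4 rating that can stay coupled at a given FCLK ceiling."""
    return max_fclk_mhz * 2


if __name__ == "__main__":
    # The settings used in this review: DDR4-4000 with a 2000 MHz FCLK.
    print(coupled_fclk_mhz(4000), is_coupled(4000, 2000))
    # A chip that could hold the rumored 2400 MHz FCLK could, in
    # principle, keep a faster kit coupled.
    print(max_coupled_rating(2400))
```

This is why a higher stable FCLK matters: it raises the fastest memory speed that can still run without dropping out of coupled mode.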

We recorded a maximum of 73°C and an average of 65°C during an extended period of multi-threaded stress tests while a simultaneous instance of Furmark hammered the overclocked iGPU. However, this is with the Corsair H115i's fans cranking away at full speed. Most enthusiasts won't have such a powerful cooling solution for this class of chip, so you'll have to adjust your expectations accordingly. It should go without saying that you shouldn't expect to pull off such vigorous overclocks with the bundled cooler. As always, you should view our overclocking results as an exhibition.

We didn't have to adjust the VDDP voltage to ensure stability, but otherwise, our overclocking efforts were very similar to what we've achieved with the Ryzen 7 4750G in the past, highlighting the similarities between the two SoCs.

AMD says the Ryzen 5 5600G slots in as what we would traditionally think of as a non-X CPU. Additionally, some enthusiasts will seek out the chip to game with the iGPU until they can find a discrete GPU after the shortage recedes. As such, we also paired the chip with the Gigabyte GeForce RTX 3090 Eagle we use for our standard gaming suite to see how the chip would fare with a discrete GPU. One note about using a dedicated GPU with the 5600G is that, unlike the earlier AMD APUs that limited add-in boards to an x8 PCIe link, you get the full x16 link with Cezanne. We also ran our standard application test suite, so there are plenty of results to analyze.
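To put the x8 versus x16 difference in rough numbers, here is a small sketch of theoretical link bandwidth. It assumes a PCIe 3.0 link (8 GT/s per lane with 128b/130b encoding), which is our assumption about the slot in use rather than something measured here, and it ignores protocol overhead, so real-world throughput will be lower. The function name is hypothetical.

```python
def pcie3_bandwidth_gb_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe 3.0 link in GB/s.

    Assumes 8 GT/s per lane and 128b/130b line encoding.
    Packet and protocol overhead are not modeled.
    """
    transfers_per_second = 8e9      # 8 GT/s per lane (PCIe 3.0)
    encoding_efficiency = 128 / 130  # 128 payload bits per 130 bits sent
    bytes_per_second_per_lane = transfers_per_second * encoding_efficiency / 8
    return bytes_per_second_per_lane * lanes / 1e9


if __name__ == "__main__":
    for lanes in (8, 16):
        print(f"x{lanes}: {pcie3_bandwidth_gb_s(lanes):.2f} GB/s")
```

Under these assumptions an x16 link offers roughly twice the theoretical bandwidth of an x8 link, which is the headroom a high-end card like the RTX 3090 gets on Cezanne.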

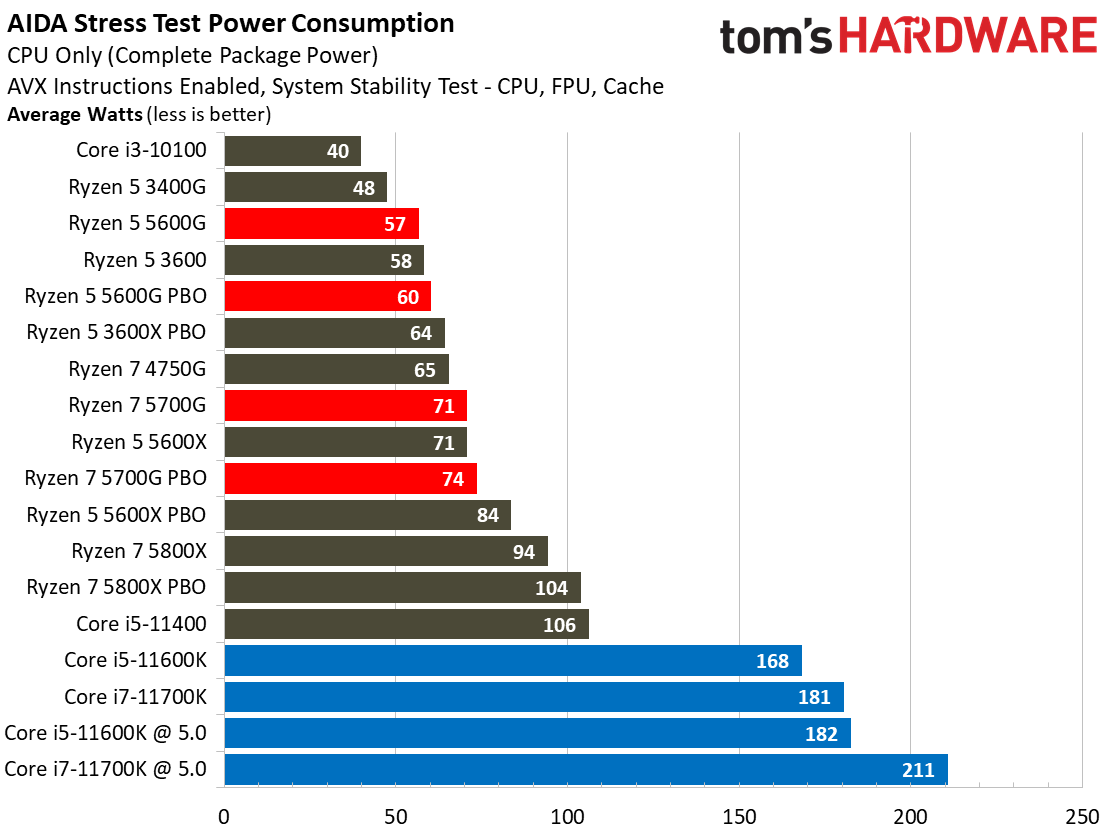

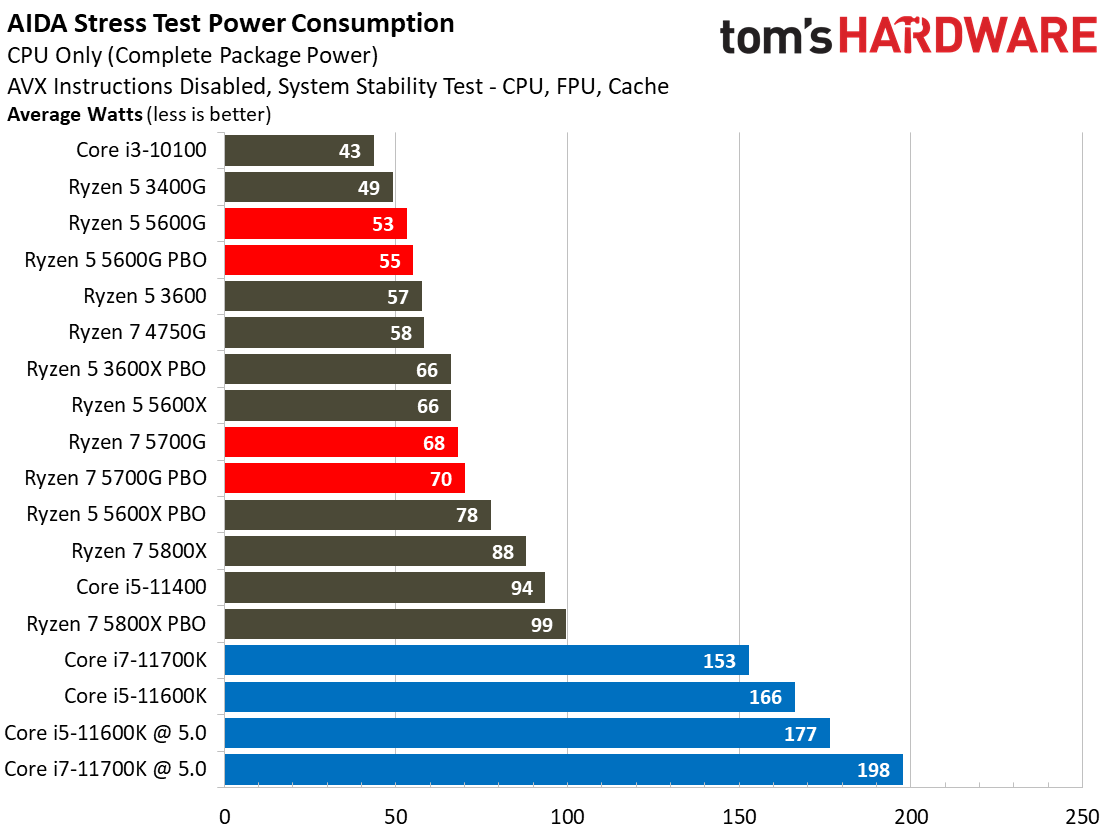

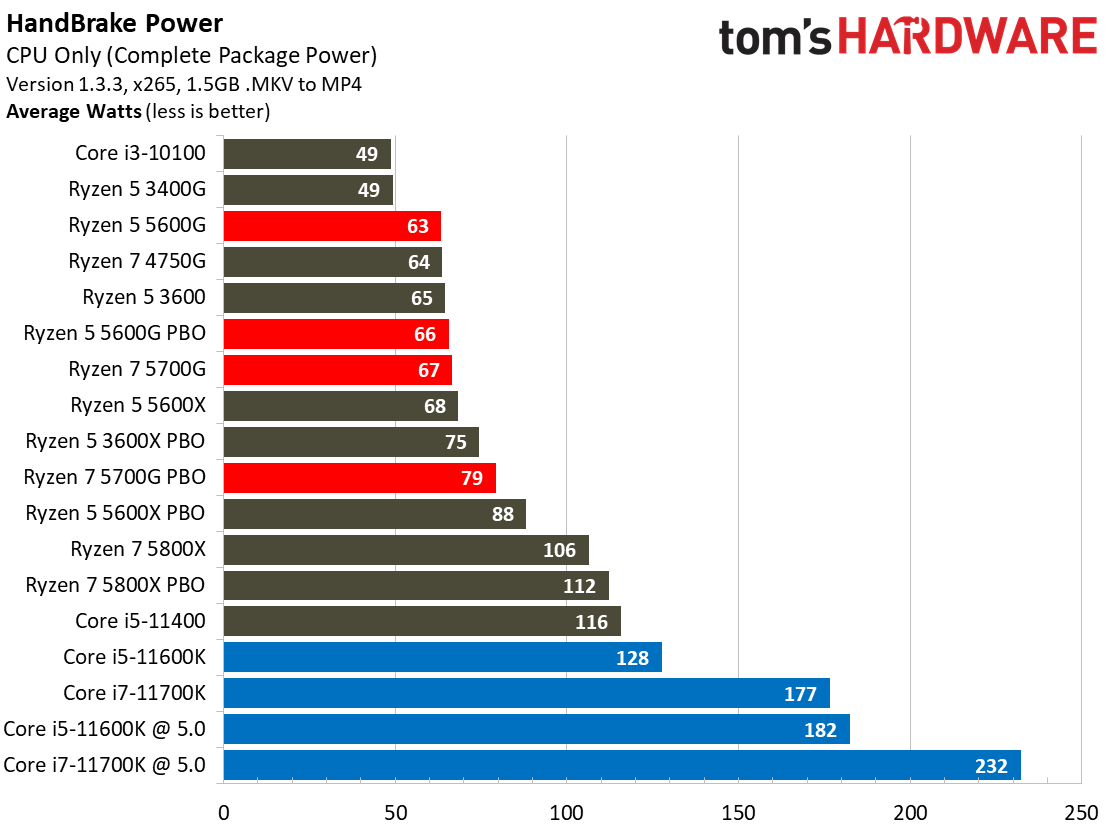

AMD Ryzen 5 5600G Power Consumption and Efficiency

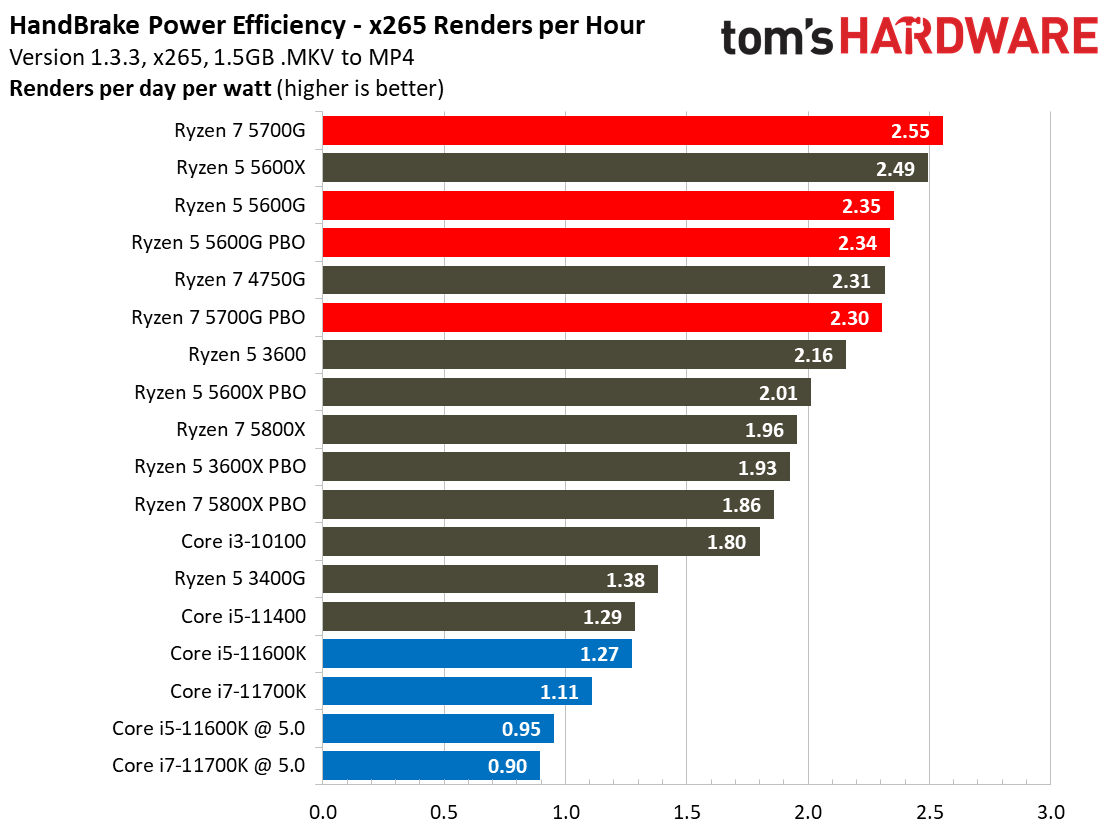

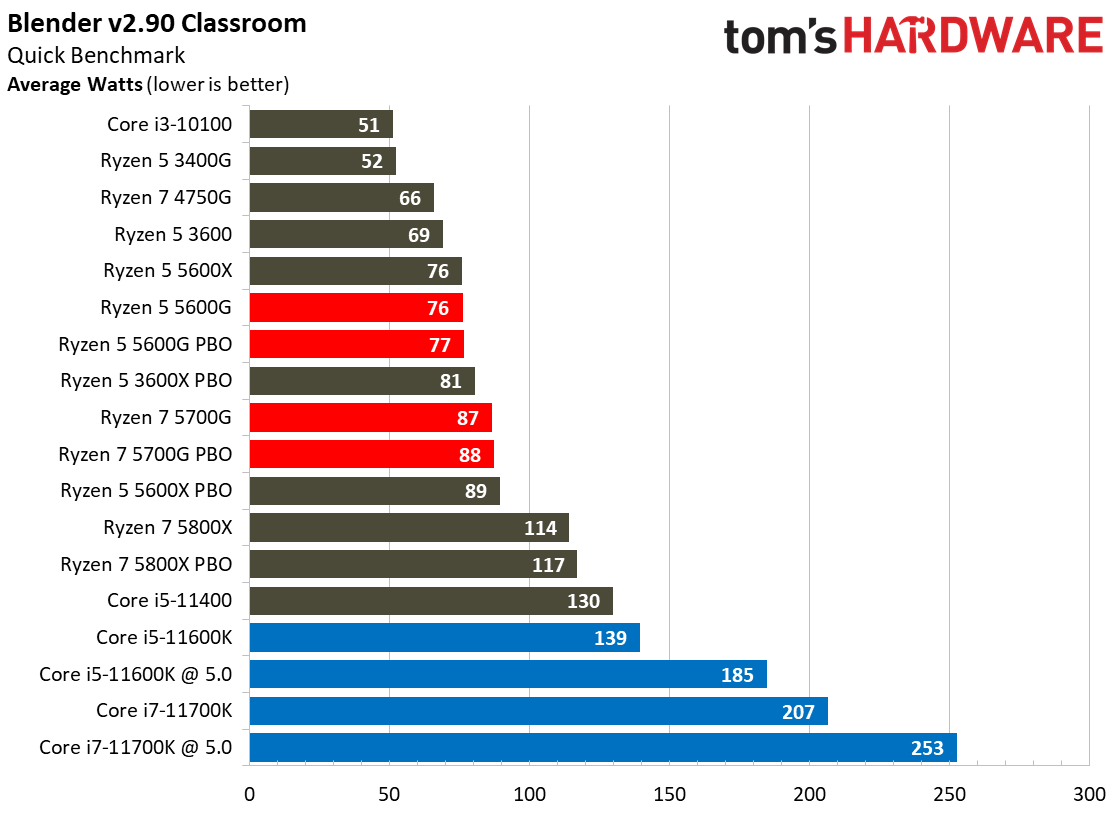

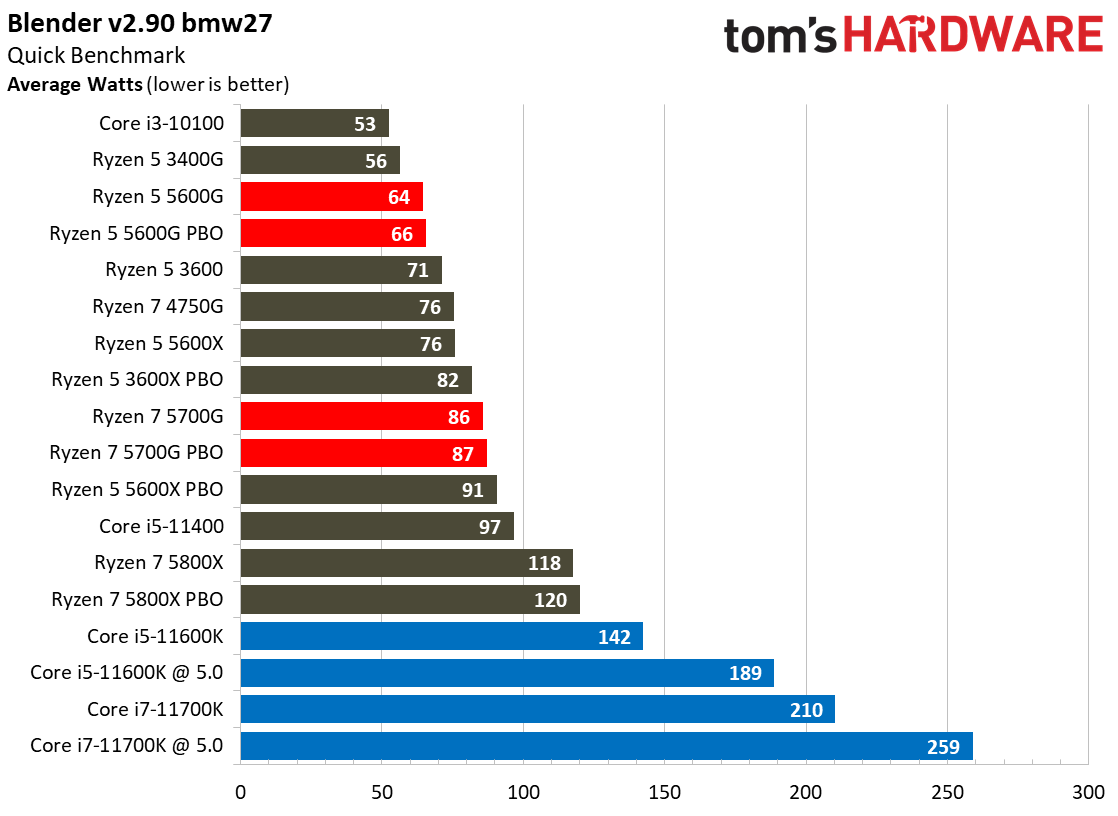

It's no secret that Intel has dialed up the power to compete with AMD's surprisingly efficient chips. As such, there are no real surprises here — Intel chips draw more power across the board.

AMD's Zen 3 models are the most power-efficient desktop PC chips we've ever tested, and the Ryzen 5000G series brings that same level of efficiency to the APU lineup. The 5700G turned out to be the more efficient of the two even though it consumed more power than the 5600G, because it completes more work for the power it draws. In either case, the Ryzen 5 5600G is a miserly chip, sipping very little power for a superb power-to-performance ratio that easily beats any Intel chip.


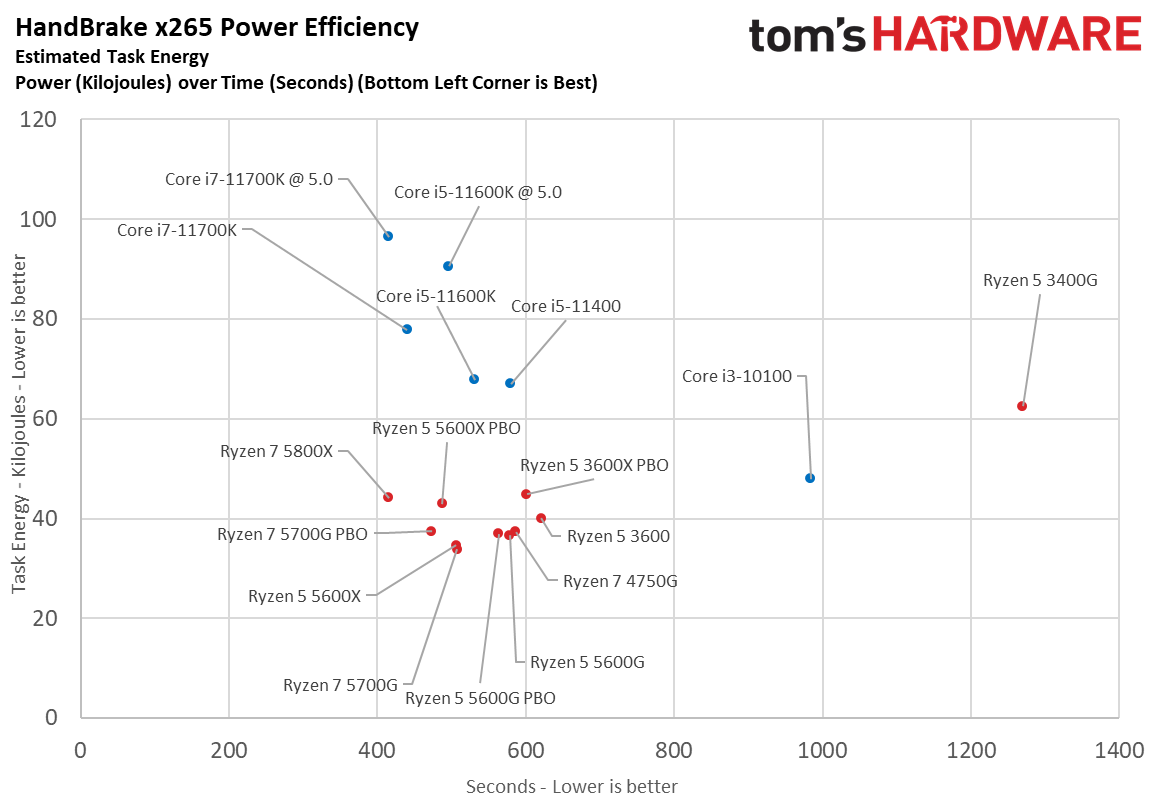

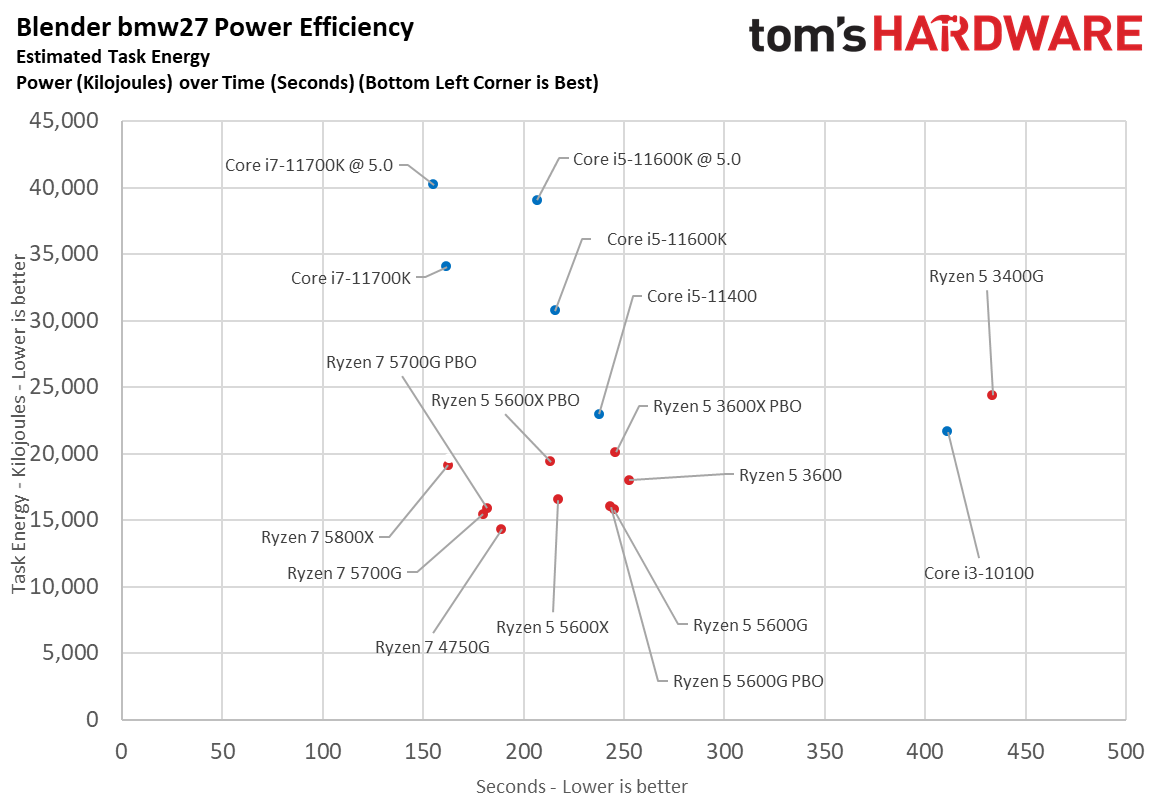

Here we take a slightly different look at power consumption by calculating the cumulative amount of energy required to perform Blender and x265 HandBrake workloads, respectively. We plot this 'task energy' value in kilojoules on the left side of the chart.

These workloads consist of a fixed amount of work, so we can plot the task energy against the time required to finish the job (bottom axis), thus generating a really useful power chart.

Bear in mind that faster compute times, and lower task energy requirements, are ideal. That means processors that fall the closest to the bottom left corner of the chart are best.
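For readers who want to reproduce a task energy figure from their own power logs, the idea can be sketched as integrating power over the duration of the job. The snippet below is a minimal illustration using the trapezoidal rule; the function name and all sample numbers are invented for the example and are not our measurements.

```python
from typing import List, Tuple


def task_energy_kj(samples: List[Tuple[float, float]]) -> float:
    """Integrate (time_s, power_w) samples into energy in kilojoules.

    Samples must be sorted by time. Energy in joules is power in
    watts multiplied by time in seconds; the trapezoidal rule
    averages adjacent power readings over each interval.
    """
    energy_j = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        energy_j += 0.5 * (p0 + p1) * (t1 - t0)
    return energy_j / 1000.0


if __name__ == "__main__":
    # Hypothetical chips running the same fixed workload:
    # chip_a draws less power but takes longer to finish.
    chip_a = [(0.0, 60.0), (100.0, 60.0)]  # 60 W for 100 s
    chip_b = [(0.0, 80.0), (60.0, 80.0)]   # 80 W for 60 s
    print(task_energy_kj(chip_a), task_energy_kj(chip_b))
```

In this made-up case the chip that draws more power still finishes with the lower task energy because it completes the job sooner, which is the same effect that places a faster chip closer to the bottom left corner of the chart.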

Ryzen 5 5600G Test Setup

| System | Configuration |
| --- | --- |
| AMD Socket AM4 (B550, OEM) | Ryzen 7 5700G, Ryzen 5 5600G, 4750G, 3400G |
| | ASUS ROG Strix B550-E, HP Pavilion TP01-2066 |
| | 2x 8GB Trident Z Royal DDR4-3600 @ 3200, Kingston DDR4-3200 |
| Intel Socket 1200 (Z590) | Core i5-11600K, Core i7-11700K, Core i5-11400, Core i3-10100 |
| | ASUS Maximus XIII Hero |
| | 2x 8GB Trident Z Royal DDR4-3600 - 10th-Gen: Stock: DDR4-2933, OC: DDR4-4000, 11th-Gen varies, outlined above (Gear 1) |
| AMD Socket AM4 (X570) | AMD Ryzen 7 5800X, Ryzen 5 5600X |
| | MSI MEG X570 Godlike |
| | 2x 8GB Trident Z Royal DDR4-3600 - Stock: DDR4-3200, OC: DDR4-4000, DDR4-3600 |
| All Systems | Gigabyte GeForce RTX 3090 Eagle - Gaming and ProViz applications |
| | Nvidia GeForce RTX 2080 Ti FE - Application tests |
| | 2TB Intel DC4510 SSD |
| | EVGA Supernova 1600 T2, 1600W |
| | Open Benchtable |
| | Windows 10 Pro version 2004 (build 19041.450) |
| Cooling | Corsair H115i, Custom loop |


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


hotaru251 i would personally add to the cons: Vega

the only problem with this chip is the vega graphics. if they had RDNA it would basically destroy Intel's lower cpu's in cost to performance.

pcie 3.0 isn't really a con at this type of chip. It's a budget chip.

ndperson Actually it isn't Vega that is limiting their performance but their ddr4 ram. That is why they don't update beyond vega. Vega itself is limited by the speed of the ram so upgrading to rdna really wouldn't help much.

twotwotwo Probably not a priority for Intel, but a TGL desktop chip (that non-OEMs can get) might make this segment more interesting, going by laptop benchmarks.

That or desktop Van Gogh 🤣 but I imagine Valve has all those booked for a while.

brodon Building an RGB matx non gaming system on a budget, and, not understanding, at 79, much of the language from the tech savvy contributors, I rely a lot on certain comments and reviews to make purchase decisions.

This G series integrated graphics CPU, on a B450M DS3H v.2 mobo and Cooler Master G100M low profile cooler in a NZXT h501i case looks like a workable base configuration.

The rest of the components should be elementary.

hrudy Most of you won't care about this issue. I was evaluating the AMD Ryzen5 5600G using an existing Linux Image based on Centos 7. I wanted to get away from Intel and try AMD. However, this processor consistently generated Panic Traps, both from the image and even from the Centos 7.9 install disk. I was using a GIGABYTE B550M DS3H. I have never seen an X86_64 part generate Linux Panic traps by just booting. Usually there are minor issues like not seeing the audio or ethernet components. And yes the Ryzen 5 5600G will boot from later kernels.

And yes an AMD cpu without the GPU enabled works fine. I then ran into the second problem which is that even with a late Ubuntu distribution the AMD DRM gpu code didn't want to install. I have never encountered this level of incompatibility since I've been using Linux which is around Y2k. Naturally AMD support doesn't care since it is Linux. For this level of incompatibility I might as well be running an ARM processor.

tracker1 hrudy said: ... AMD support doesn't care since it is Linux. For this level of incompatibility I might as well be running an ARM processor.

It's definitely a mixed bag. I learned this using an RX 5700XT at release. I had to run beta/alpha kernels for the first 6 months or so.. Ubuntu 20.04 was really the first release with decent in the box support and even then better with later kernels. So it's not too surprising.

I also had similar issues with Intel AX wireless at that time (Around x570 Master, motherboard). And that doesn't even cover trying to get RGB in Linux working.

I've since gone back to solid, no window, case. I'm also now on an RTX 3080, as I'm running some video AI stuff that didn't do well with AMD at the time.

Nvidia drivers haven't been the best either. At least at this point it's mostly mature. Unfortunately, bleeding edge hardware and Linux means a bit of pain.

I'd suggest only running a very recent kernel on hardware released at least 4 months before your OS release. CentOS stable is not that. Should run a recent Fedora instead. I'm not that up on rpm distros, preferring Debian based myself. I know there are a couple newer white box RedHat options though.

render1967 Hi, guys! I have a question. Help me, please! Does the built-in video in 5600G support analog signal via D-sub? I ask because I have a problem with this - there's no signal via D-sub. Computer configuration: CPU Ryzen 5 5600G, MB ASRock B450M Pro4-F R2.0, RAM ADATA XPG GAMMIX D10 16GB (2x8GB) DDR4 3000MHz, HDD ADATA Ultimate SSD SU650 120GB + Apacer AS2280P4 M.2 PCIe 512GB, PSU FORTRON HYPER K PRO 500W, monitor ASUS VH222S

Girl_Downunder hrudy said: Most of you won't care about this issue. I was evaluating the AMD Ryzen5 5600G using an existing Linux Image based on Centos 7. ...

I wondered if you asked at the Ubuntu forums on this issue. I personally run Mint Mate on an old 4th gen i5 desktop. I'm looking to upgrade to "nearly" the newest (not worried about PCIe 4) and have been eyeing the 5600G due to price as well as preferring iGPU as a backup if dedicated fails. Reading this has me a bit worried. Runs off to the Mint forums to see what I find there!