Can Your Old Athlon 64 Still Game?

Game Benchmarks: Oblivion

Oblivion was released in March 2006 and pushed all hardware available at the time beyond its limits. Many gamers upgraded their systems for a better Oblivion experience. Upgrade for one game? Well, given the right game, why not? Oblivion is not a 30-50 hour game. Most Oblivion gamers spend hundreds of hours questing and exploring, and with the vast number of available mods, some may spend thousands of hours on this game alone. So if any game warrants a system upgrade, it is a lengthy and demanding title such as Oblivion. Our 8800 GS test card is more powerful than any single GPU available at that time, but let's take an in-depth look to see how much CPU power is needed to let the 8800 GS breathe in this aging but still demanding and popular game.

In Oblivion, vast amounts of time are spent in towns, castles, and caves, or exploring the varied outdoor land of Cyrodiil. These areas stress the system in different ways, so to get the best idea of how these three processors perform in Oblivion, we went more in-depth and tested two different areas of the game. The Town benchmark takes place inside the walls of Chorrol, and the Foliage benchmark runs entirely through the thickest waist-high grass we could find in the Great Forest.

We used Fraps to record gaming performance and ran each test three times to ensure consistency. Results were once again typically less than 1 FPS apart, so we used the median score. All settings were on maximum except for the distracting self shadows. Oblivion doesn’t have an in-game option for anisotropic filtering, which makes the game look much better and is worth forcing in the drivers. To keep HDR on, we also have to force FSAA in the drivers. Both make the game better looking if you have a graphics card powerful enough to handle them.
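As a rough illustration of the three-run procedure, here is a minimal Python sketch that takes the average FPS from each pass, reports the median, and checks that the passes agree to within 1 FPS. The function name `summarize_runs`, the `max_spread` parameter, and the sample numbers are hypothetical and are not values from our test logs.

```python
from statistics import median


def summarize_runs(avg_fps_runs, max_spread=1.0):
    """Return (median, spread, consistent) for repeated benchmark passes.

    avg_fps_runs: average FPS of each pass (hypothetical input format).
    max_spread:   largest acceptable gap between best and worst pass, in FPS.
    """
    if not avg_fps_runs:
        raise ValueError("need at least one run")
    spread = max(avg_fps_runs) - min(avg_fps_runs)
    return median(avg_fps_runs), spread, spread <= max_spread


# Made-up averages from three passes of the same benchmark.
runs = [31.2, 31.8, 31.5]
score, spread, consistent = summarize_runs(runs)
print(f"median={score:.1f} FPS, spread={spread:.1f} FPS, consistent={consistent}")
```

With three passes, the median simply discards the best and worst run, so a single hitch during one pass does not skew the reported score.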

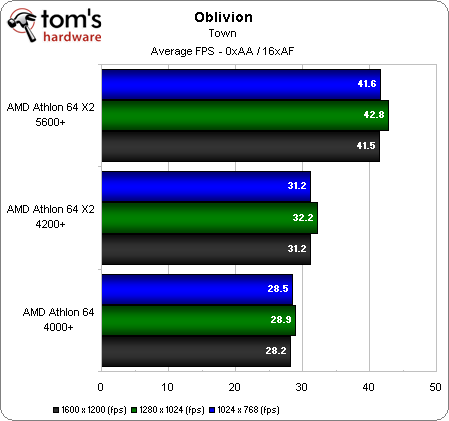

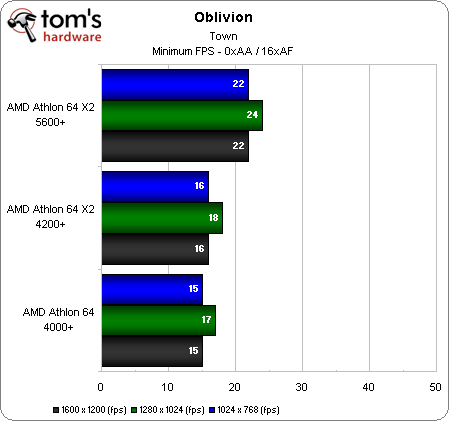

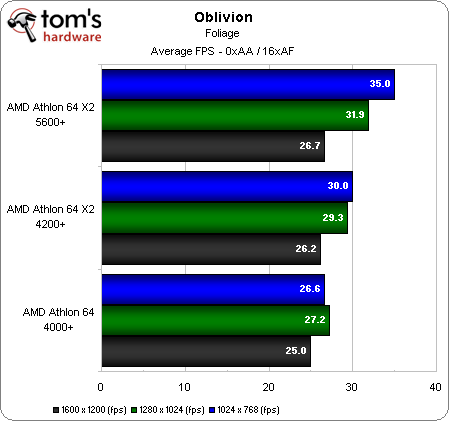

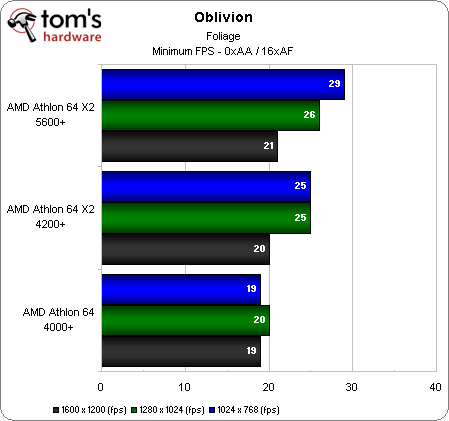

Without forcing FSAA in the drivers, we are extremely CPU-bound with all three of these processors. You can see that results do not vary by resolution, but they do vary by processor. The X2 4200+ is able to average over 30 FPS and slightly beats the A64 4000+ in both average and minimum FPS. But the high-clocked X2 5600+ really shines here, with a 10 FPS higher average than the X2 4200+ and minimums that stay above 20 FPS. Right at the start, we see that at max details Oblivion demands some serious CPU power; an even more powerful CPU would have helped keep up with our 8800 GS and maintain higher minimum frame rates.
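The reasoning used here (frame rates that stay flat as resolution rises point to a CPU limit, while frame rates that fall point to a GPU limit) can be sketched in a few lines of Python. This is only a rule of thumb under stated assumptions: the function name `classify_bottleneck`, the 10% tolerance, and every FPS figure below are made up for illustration and are not our measured results.

```python
def classify_bottleneck(fps_by_resolution, tolerance=0.10):
    """Guess the limiting component for one CPU from its average FPS per resolution.

    fps_by_resolution: dict mapping (width, height) -> average FPS.
    tolerance: relative FPS change still treated as "flat" (assumed value).
    """
    values = list(fps_by_resolution.values())
    if len(values) < 2:
        raise ValueError("need at least two resolutions to compare")
    highest, lowest = max(values), min(values)
    # If drawing more pixels barely changes the frame rate, the GPU is
    # waiting on the CPU rather than the other way around.
    if (highest - lowest) / highest <= tolerance:
        return "likely CPU-bound"
    return "likely GPU-bound at the slower resolutions"


# Hypothetical numbers for a single processor.
no_aa = {(1024, 768): 32.0, (1280, 1024): 33.0, (1600, 1200): 32.0}
with_aa = {(1024, 768): 32.0, (1280, 1024): 31.0, (1600, 1200): 14.0}

print(classify_bottleneck(no_aa))
print(classify_bottleneck(with_aa))
```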

One interesting side observation is that in this CPU-limited game area, performance at 1280x1024 was consistently higher than at 1024x768 or 1600x1200. Look at both charts above: the narrower 5:4 aspect ratio allows for a 2 FPS higher minimum and even a slightly higher average frame rate compared to the slightly wider 4:3 ratio. It would be interesting to explore this further and include 16:10 and 16:9 aspect ratios as well.
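For reference, the aspect ratio and pixel count of each tested resolution can be worked out with a short Python snippet. The helper name `describe` is ours and purely illustrative; the arithmetic itself depends only on the three resolutions used in the charts.

```python
from math import gcd


def describe(width, height):
    """Return the reduced aspect ratio and total pixel count of a resolution."""
    divisor = gcd(width, height)
    return {
        "aspect": f"{width // divisor}:{height // divisor}",
        "pixels": width * height,
    }


for w, h in [(1024, 768), (1280, 1024), (1600, 1200)]:
    info = describe(w, h)
    print(f"{w}x{h}: {info['aspect']}, {info['pixels']:,} pixels")
```

Note that 1280x1024 draws more pixels than 1024x768, so the higher frame rate at 5:4 cannot be explained by pixel count alone.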

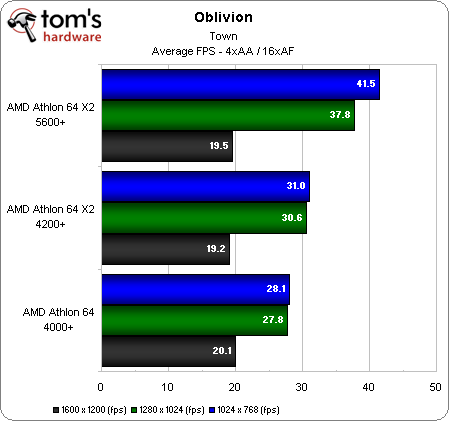

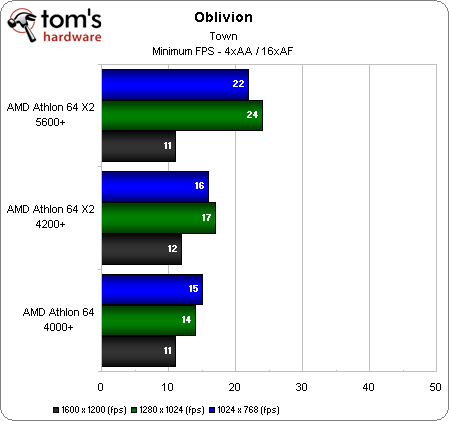

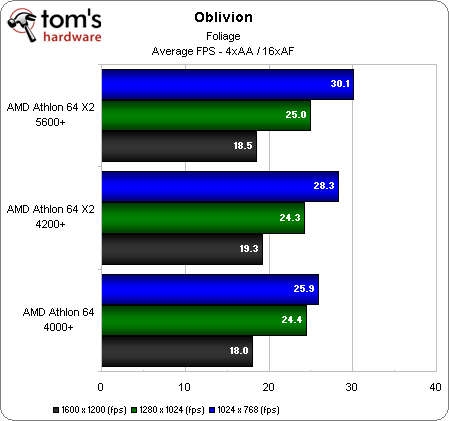

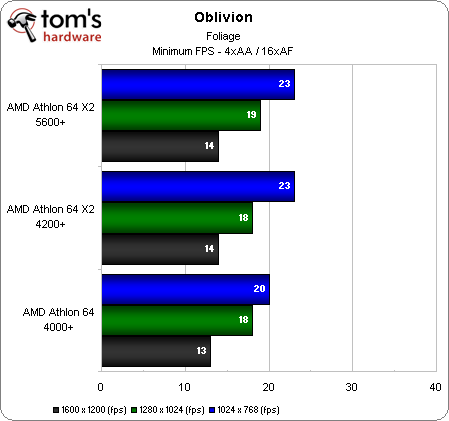

With 4xAA, we see no performance drop at 1024x768, and very little at 1280x1024. The A64 4000+ trails the X2 4200+, and the X2 5600+ still shines above the others. The 8800 GS is unable to handle 1600x1200 with 4xAA/16xAF, and we are now totally GPU-bound, with equally unplayable performance regardless of the CPU. At all playable settings, we see the importance of having a good CPU in this game area, as the X2 5600+ provided the best performance. Now, it’s time to see if it still shines outside the city walls.

Oblivion’s foliage is the most GPU-demanding part of the game, and you can see that even without FSAA we are already under 30 FPS average at 1600x1200 regardless of the CPU. But this game area also stresses the CPU. Take note of the single-core A64 4000+, and you’ll see that at 1024x768 it’s only able to average 26 FPS. At these settings it is CPU-limited, and as in the town benchmark, the narrower aspect ratio of 1280x1024 again gives higher performance. And once again, the X2 5600+ provides the best performance.


Without overclocking, the stock-clocked XFX 8800 GS struggles with 4xAA. The X2 5600+ at 1024x768 is the only configuration to average 30 FPS. Increasing the resolution led to fairly unplayable frame rates, and there is very little difference between the two dual-core CPUs.

Through all these tests, we can see that Oblivion, like many games, is both CPU- and GPU-demanding. To be able to play at max details at higher resolutions or with FSAA, you will need a good amount of GPU power. But we also see that a decent multi-core CPU is needed, too. Single-core CPU owners will need to reduce settings when playing Oblivion, and owners of entry-level dual-cores may find it necessary to overclock their CPU to play Oblivion at max details.


Schip: FIRST POST!!! Nice Article though. I knew my brother would soon be doomed with his P4 2.8c ;)

"AMD Athlon 64 X2 4200 + dual-core, which has a 2.2 GHz Manchester architecture with 512 MB L2 cache per core."

oau! that's a lot of cache :D

neiroatopelcc: I haven't read the actual article yet, but I bet the simple answer is no!

I've got a backup gaming rig at home that barely cuts it. An x2 1.9ghz (oc'ed to 2.4) with an 8800gtx and 3gb memory. That rig struggles at 1280x1024 in some situations, and it can only be attributed to the cpu really.

bf2gameplaya: 2.8GHz Opteron 185 (up from 2.6GHz) with 2x1MB L2 cache is the ultimate s939 CPU....blows these weak benchmarks away.

Who would have thought DDR would have such durability? There's something to be said for CAS2!

neiroatopelcc: But your opteron cpu still limits the modern graphics cards.

Two years back I bought my 8800gtx, and realized it wouldn't come to its full potential in my opteron 170 (@ 2.7). A friend with another gtx paired with an e6400 chip (@ 3ghz) scored a full 30% higher in 3dmark than I, and it showed in games. Even in wow where you'd expect a casio calculator would deliver enough graphics power.

In short - ye ddr still work if you've got enthusiast parts, but that can't negate the effect a faster cpu would give. At least at decent resolutions (22" wide)

dirtmountain: This is a great article! It will give me something to show when i'm talking to people about a new system or just a GPU/PSU upgrade. Great job by Henningsen.

NoIncentive: I'm still using a P4 3.0 @ 3.4 with 1 GB DDR 400 and an nVidia 6800GT...

I'm building a new computer next week.

randomizer: I can echo the findings in Crysis. It didn't matter what settings I ran with a 3700 Sandy and an X1950 pro, the framerate was almost the same (albeit low 20s because the card is slower). Added an E6600 to the mix and my framerate tripled at lower settings.

It would have been interesting to see how a 3000+ Clawhammer (C0 stepping) would do in Crysis. Single-channel memory, poor overclocking capabilities... FAIL!

ravenware: (quoting bf2gameplaya) "2.8GHz Opteron 185 (up from 2.6GHz) with 2x1MB L2 cache is the ultimate s939 CPU....blows these weak benchmarks away. Who would have thought DDR would have such durability? There's something to be said for CAS2!"

This is true about the DDR. I recall an article on toms right after the release of the AM2 socket which tested identical dual core processors against their 939 counterparts; the tests showed little to no performance gains.

Great article, there has been some discussion about this in the forums as well.

I currently own a 939 4200+ x2 that's paired with a 7800GT; and this article shows what I thought to be accurate about the AMD64 chips. They're not as fast as some of the C2D's but they still kick ass.

Good job pointing out the single core factor in newer games too. As soon as the crysis demo was released I upgraded my San Diego core to a dual core and noticed the difference in crysis immediately.

This article gives me further confidence in my decision to hold on upgrading my system. I want to hold out for Windows7 D3D11 and more money to build an ape sh** machine :D

Nice article!!