CrossFire Versus SLI Scaling: Does AMD's FX Actually Favor GeForce?

We've heard it said before that AMD's GPUs are more platform-dependent than Nvidia's. So, what happens when you drop a Radeon and a GeForce into an FX-8350-based system? Does AMD's CPU get in the way of its GPU running as well as it possibly could?


Power And Efficiency

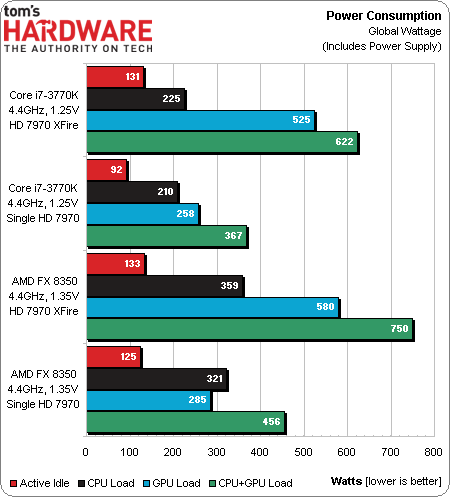

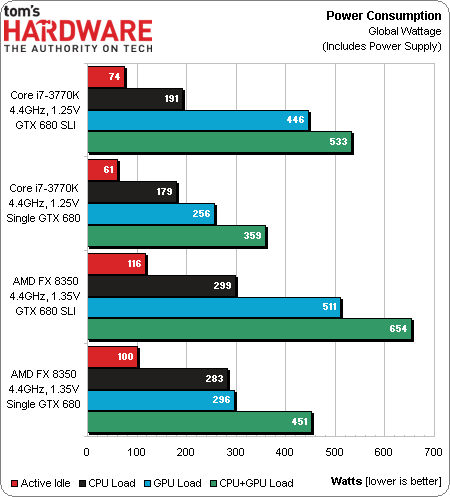

Despite the marketing behind ZeroCore, and indeed, the technology suite's effectiveness in single- and multi-card configurations, Radeon HD 7970s cannot idle with three monitors attached. The host processor's power use isn't bad, though. This is one of those instances where putting the AMD and Nvidia cards into separate charts makes sense, since we're trying to compare CPU-to-GPU pairing, rather than CPUs or GPUs alone.

Regardless of whether you're running an AMD or Intel processor, adding a second Radeon HD 7970 appears to increase power consumption far more than adding a second GeForce GTX 680. Single-GPU load power is comparable between the competing graphics cards.

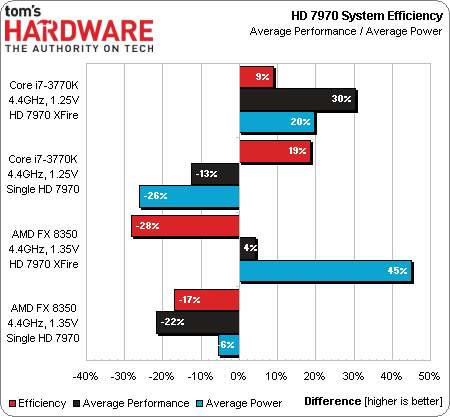

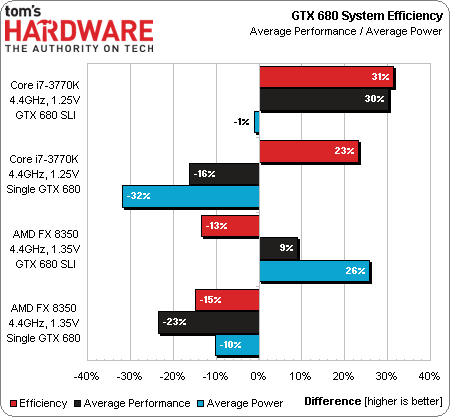

Those power draw differences are reflected in reduced efficiency. Comparing performance to power, GeForce efficiency appears to increase in SLI, while Radeon efficiency appears to drop in CrossFire. None of this gets us closer to figuring out whether AMD’s fastest CPUs allow Nvidia's graphics hardware to reach further than its own, however.
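As a rough illustration of the performance-to-power comparison, here is a minimal Python sketch. The function names and every number in it are hypothetical placeholders chosen for the example, not measurements from the charts above. It only shows how efficiency relative to a single-card baseline could be computed, assuming efficiency is defined as average performance divided by average power.

```python
def efficiency(avg_performance: float, avg_watts: float) -> float:
    """Performance per watt (assumed definition for this sketch)."""
    if avg_watts <= 0:
        raise ValueError("avg_watts must be positive")
    return avg_performance / avg_watts


def relative_efficiency(perf: float, watts: float,
                        base_perf: float, base_watts: float) -> float:
    """Efficiency of a configuration relative to a baseline (1.0 = equal)."""
    return efficiency(perf, watts) / efficiency(base_perf, base_watts)


if __name__ == "__main__":
    # Hypothetical placeholder values, NOT measured results.
    base_perf, base_watts = 100.0, 300.0   # single-card baseline
    config_a = (180.0, 450.0)              # dual-card setup with modest added power
    config_b = (170.0, 600.0)              # dual-card setup with large added power

    for name, (perf, watts) in (("A", config_a), ("B", config_b)):
        rel = relative_efficiency(perf, watts, base_perf, base_watts)
        print(f"Config {name}: {rel:.0%} of baseline efficiency")
```

A value above 100% means the dual-card setup delivers more performance per watt than the single card; a value below 100% means the second card costs more in power than it returns in performance.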


Crashman:

BigMack70 wrote: "This article was a good laugh... I sincerely hope nobody is throwing $800+ worth of graphics muscle onto an FX series CPU. I think AMD is just trolling gamers with their CPUs. Although they're definitely catching up to Intel while Intel just sorta sits there and doesn't do anything after declaring ultimate victory with Sandy Bridge."

The great thing about AMD is that its chipsets have a lot of PCIe lanes. That should make them great for multi-way graphics. The problem is, the more cards you add the worse the CPU looks. I can see someone doing 3-way SLI on a new 990FX board if they already had a few older/slower cards laying around.

Arfisy Perdana wrote: "what a pity for amd processor. So terrible"

Not terrible, AMD charges appropriately less for its slower CPU. It's no big deal, unless you're trying to push some high-end AMD graphics cards.

Nobody remembers that at the time AMD bought ATI, they already had a business partnership with Nvidia on the co-marketing of 650A chipsets (AMD Business Platform). Also at the time AMD bought ATI, ATI already had a business partnership with Intel to develop the RD600 as a replacement for the 975X. AMD's purchase left both Nvidia and Intel stranded, as it took Intel more than a year to develop a replacement for the abandoned RD600.

-

Crashman:

BigMack70 wrote: "Meh... they're PCI-e 2.0 lanes so they need twice as many to equal the PCI-e 3.0 lanes on Z77"

Depends on the cards you're using. 3-way at x8/x8/x4? Tom's Hardware did an article on how bad PCIe 2.0 x4 performed, so if you're carrying over a set of PCIe 2.0 cards from a previous system, well, I refer to the same comment that you referenced.

-

Thanks for the article, it was great.

AMD is actually doing fine with their products, especially with their GPUs.

Why so much hate on their CPUs I will never understand. They are cheaper, aren't they?

-

CaptainTom: I'm going to be honest, this article didn't prove anything for these reasons:

- The i7 is stronger so of course it scaled better.

- The 7970 is on average a stronger card than the 680, so of course it needs a little extra CPU power.

- The differences overall were very little anyways besides the obvious things like Skyrim preferring Intel.

-

smeezekitty:

CaptainTom wrote: "I'm going to be honest, this article didn't prove anything for these reasons:
- The i7 is stronger so of course it scaled better.
- The 7970 is on average a stronger card than the 680, so of course it needs a little extra CPU power.
- The differences overall were very little anyways besides the obvious things like Skyrim preferring Intel."

You hit the nail right on the head.

-

ohyouknow:

CaptainTom wrote: "I'm going to be honest, this article didn't prove anything for these reasons:
- The i7 is stronger so of course it scaled better.
- The 7970 is on average a stronger card than the 680, so of course it needs a little extra CPU power.
- The differences overall were very little anyways besides the obvious things like Skyrim preferring Intel."

Truth. Didn't really see anything other than the same games that show the FX falling behind did the same thing in this test as it would with anything involving Skyrim and so forth.

-

billcat479: I wish I could have seen a, let's say, better test. I'm not great at knowing which games to pick, but one thing I would have liked to see is games that are able to use more than one core of the CPU.

I know that when AMD came out with the new FX, its single-threaded performance was still not up to Intel's standards, but in many of the tests that used more cores the AMD chips could actually keep up with Intel's CPUs. Not as well all the time, but it's very, very easy to make these AMD CPUs look bad: just run a single-threaded and/or older game on them and presto.

I would have liked to see how the video card would play into this. If AMD's CPU is running more optimized software, would its CrossFire come out better as well, and what would the overall effect be in games that make use of this?

I mean how long have we had more than one cpu core running now?

And I know it's taken the software people a while to come up to speed, but the game board is changing and they are starting more and more to use more than one core, so don't you think this would be important to check out as well? And just maybe see some new data on how the AMD can use video cards if it's running software it was really designed for?

We can play this Intel single thread line till hell freezes over and we all know there will not be any surprises as long as we do.

And we have also seen a shift: low-cost game setups are starting to favor AMD's older CPUs because there is more software that can run on more cores. So let's start to even out the playing field a bit here, OK?