AMD FirePro W8100 Review: The Professional Radeon R9 290

After introducing the flagship FirePro W9100, AMD now adds the FirePro W8100 to its portfolio. With somewhat lower specs (8 GB of memory instead of 16 GB, a slower GPU, and fewer shader units), it should occupy the same position in the workstation world that the Radeon R9 290 holds in gaming.

OpenCL: Compute, Cryptography, and Bandwidth

Shader Performance: FP32 vs. FP64

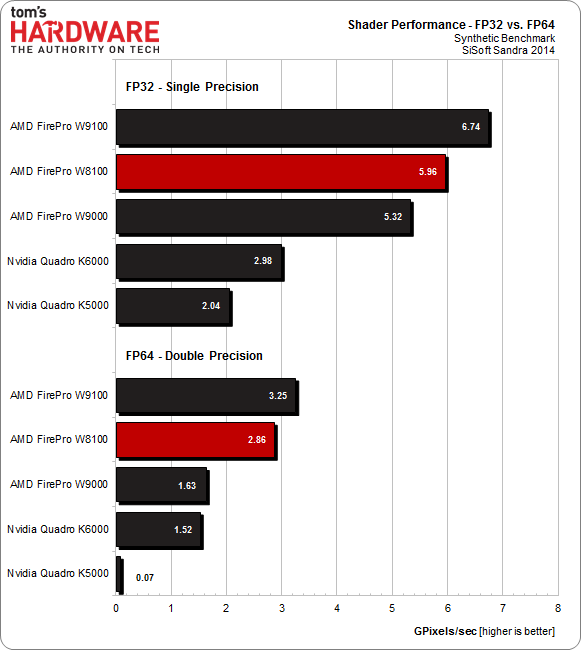

Let’s start with an OpenCL benchmark, which should push the theoretical ceiling of 32- and 64-bit compute performance.
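For context, the theoretical single-precision ceiling of a GCN-based card like this one is usually estimated as stream processors × clock × 2, because each stream processor can complete one fused multiply-add (two floating-point operations) per clock; double precision is that figure scaled by the chip's FP64 rate. The sketch below applies that rule. The inputs (2560 stream processors at 824 MHz, FP64 at half the FP32 rate) are assumptions based on AMD's published W8100 specifications, not measurements from this review.

```python
def peak_gflops(stream_processors: int, clock_mhz: float, fp64_ratio: float = 1.0) -> float:
    """Theoretical peak in GFLOPS.

    Assumes one FMA (counted as 2 FLOPs) per stream processor per clock.
    fp64_ratio scales the result for double precision (1.0 = full FP32 rate).
    """
    flops_per_clock = 2 * stream_processors  # FMA = multiply + add
    return flops_per_clock * clock_mhz / 1000.0 * fp64_ratio


# Assumed W8100 figures (verify against AMD's spec sheet): 2560 SPs, 824 MHz, FP64 at 1/2 rate
fp32 = peak_gflops(2560, 824)
fp64 = peak_gflops(2560, 824, fp64_ratio=0.5)
print(f"FP32: {fp32:.0f} GFLOPS, FP64: {fp64:.0f} GFLOPS")
```

A benchmark result well below these ceilings usually points to memory, driver, or kernel limitations rather than raw shader throughput.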

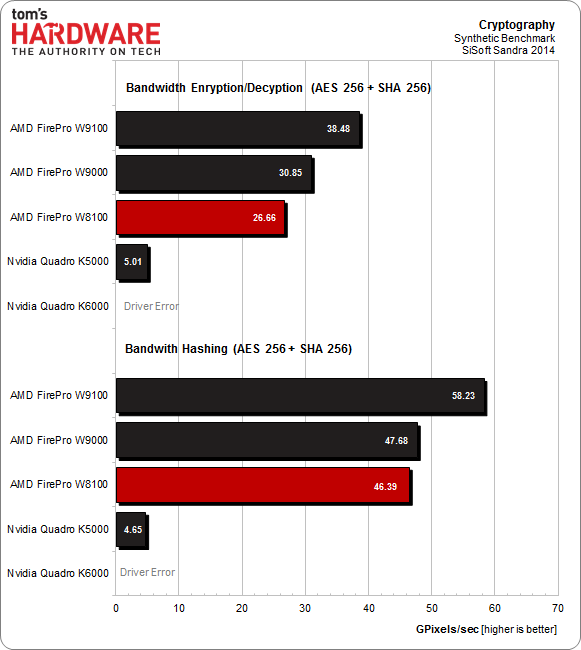

Sandra's Cryptography module is next. It’s remarkable how well the older FirePro W9000 keeps up. The distance between the W9100 and the W8100 is right around where it should be based on each card's specifications.
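"Where it should be based on each card's specifications" can be made concrete: for a workload limited purely by shader throughput, the expected lead is roughly the ratio of stream processors × clock. The minimal sketch below assumes 2816 stream processors at 930 MHz for the W9100 and 2560 at 824 MHz for the W8100, taken from AMD's published specifications rather than from this review; the `Card` type and `expected_ratio` helper are illustrative names only.

```python
from dataclasses import dataclass


@dataclass
class Card:
    name: str
    stream_processors: int
    clock_mhz: float


def expected_ratio(fast: Card, slow: Card) -> float:
    """Expected performance ratio for a purely shader-bound workload:
    raw shader throughput (SPs x clock) of one card divided by the other's."""
    return (fast.stream_processors * fast.clock_mhz) / (slow.stream_processors * slow.clock_mhz)


# Assumed specs (check against AMD's data sheets)
w9100 = Card("FirePro W9100", 2816, 930)
w8100 = Card("FirePro W8100", 2560, 824)
print(f"W9100 should lead by about {100 * (expected_ratio(w9100, w8100) - 1):.0f}%")
```

Memory-bound tests would instead track the ratio of memory bandwidth, so this estimate only applies to compute-heavy modules.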

Although these benchmarks are synthetic in nature, they still illustrate Nvidia’s half-hearted support for OpenCL. Yes, the company offers its proprietary CUDA API, and there are plenty of applications that support it. Increasingly, though, ISVs looking for a broader customer base don't want to support two languages, and OpenCL is gaining traction as a result. Even long-time bastions of CUDA support like Adobe are adopting OpenCL.

Folding It Up: Folding@Home

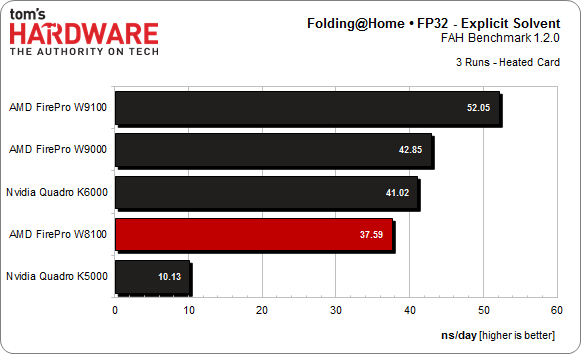

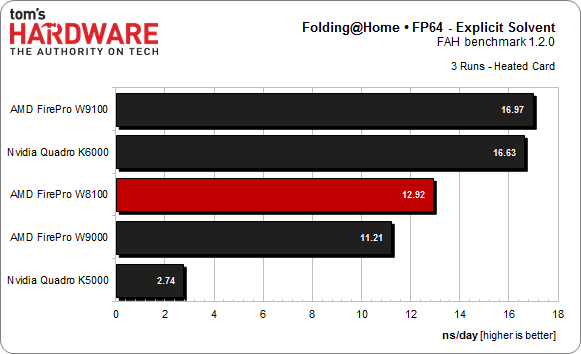

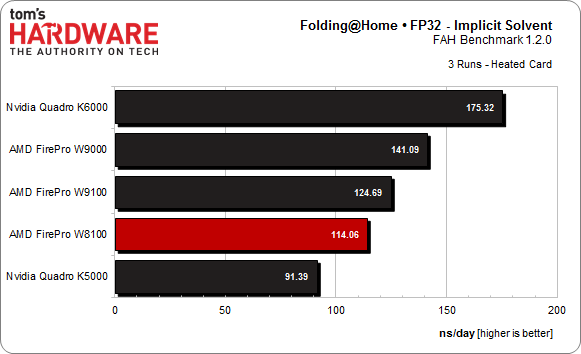

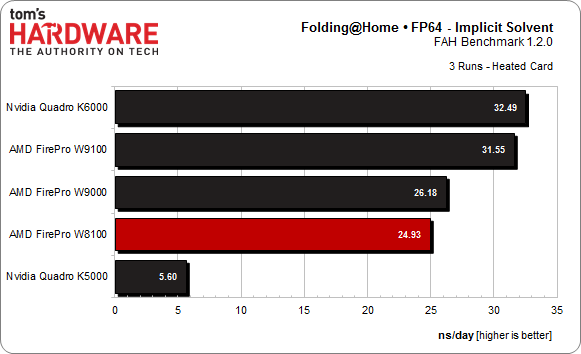

Let’s run the Folding@Home benchmark on this card. Even though few professionals would use a workstation-class board for this (or cryptocurrency mining), the test does give us a more real-world look at compute performance.

Once again, the gap between each card's performance is what we'd expect in light of their specifications. This chart demonstrates nicely how well AMD's architecture scales in scenarios without overhead.
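Why scaling looks this clean "in scenarios without overhead" can be illustrated with a simple Amdahl-style timing model (our illustration, not the review's methodology): if every run carries a fixed cost that does not depend on the GPU, such as driver, CPU, or I/O time, the measured speedup between two cards falls below their raw throughput ratio. The overhead values and the 1.24× throughput ratio below are hypothetical inputs.

```python
def observed_speedup(gpu_time_slow: float, throughput_ratio: float, overhead: float) -> float:
    """Speedup of the faster card when each run = fixed overhead + GPU-bound time.

    gpu_time_slow:    GPU-bound time on the slower card (arbitrary units)
    throughput_ratio: raw throughput of the faster card / slower card
    overhead:         time that does not scale with the GPU (driver, CPU, I/O)
    """
    t_slow = overhead + gpu_time_slow
    t_fast = overhead + gpu_time_slow / throughput_ratio
    return t_slow / t_fast


# Hypothetical example: a 1.24x throughput advantage with growing fixed overhead
for overhead in (0.0, 0.25, 1.0):
    print(f"overhead={overhead:.2f}: speedup={observed_speedup(1.0, 1.24, overhead):.3f}")
```

With zero overhead the measured gap equals the throughput ratio; as the fixed cost grows, the gap shrinks toward 1.0, which is why low-overhead tests like this one track the specifications so closely.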

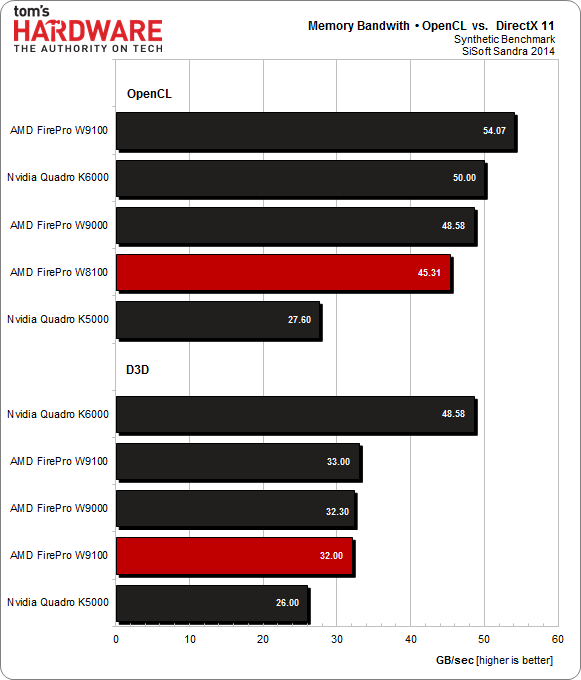

Memory Bandwidth

Conversely, when memory bandwidth under OpenCL is compared with Direct3D 11, Nvidia's results show that putting more effort into driver optimization makes a quantifiable difference.
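Measured bandwidth is easiest to judge against the theoretical peak, which is the bus width in bytes multiplied by the effective per-pin data rate. The minimal sketch below assumes a 512-bit bus with GDDR5 at an effective 5 Gbps per pin for the W8100, based on AMD's published specifications; the `efficiency` helper simply divides a measured figure by that ceiling.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical bandwidth in GB/s: bytes moved per transfer x effective Gbps per pin."""
    return bus_width_bits / 8 * data_rate_gbps


def efficiency(measured_gbs: float, peak_gbs: float) -> float:
    """Fraction of the theoretical peak that a benchmark actually achieved."""
    return measured_gbs / peak_gbs


peak = peak_bandwidth_gbs(512, 5.0)  # assumed W8100 memory configuration
print(f"Theoretical peak: {peak:.0f} GB/s")
```

Passing a value read from the bandwidth chart to `efficiency(measured, peak)` gives the share of the ceiling each API reaches, which makes driver differences between OpenCL and Direct3D 11 easier to compare across cards with different memory configurations.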

As we move on to our application benchmarks, keep these synthetic tests in mind. They help explain the results of real-world benchmarks, which are also influenced by other platform subsystems.

At least for now, we have to question whether Nvidia's lackluster support for OpenCL and emphasis on CUDA is the best strategy. Only time will tell.

Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

Memnarchon "The video shows that the AMD FirePro W8100 is bearable when it comes to maximum noise under load. This also demonstrates that a thermal solution originally designed for the Radeon HD 5800 (which hasn't changed much since) deals with the W8100’s nearly 190 W a lot better than the W9100's 250 W."

Wait a minute. If this kind of cooling is better than the one used on the R9 290, and they have had this technology since the HD 5800 series, then why the hell didn't they use it on the R9 290 series instead of the crap cooler they used?

FormatC It is the same cooler, but the power consumption of the W8100 is a lot lower. This cooler type can handle up to 190 watts more or less OK, but the R9 290(X) produces more heat due to its higher power consumption.

Cryio "Nvidia's Quadro K5000 is quite a bit cheaper, but comes with half the memory, less 4K connectivity, and is generally slower."

That's almost an understatement. The K5000 is almost consistently 50% slower than the W8100, with a few cases where the difference is 25%. For $700 more, the W8100 looks like a great buy.

Memnarchon

FormatC said: "It is the same cooler, but the power consumption of the W8100 is a lot lower. This cooler type can handle up to 190 watts more or less OK, but the R9 290(X) produces more heat due to its higher power consumption."

Well, this doesn't prove that the cooler they used is superior. It might have a higher TDP rating, but that doesn't mean it's better than one with a lower TDP rating. We know how these ratings work; the number is by no means absolute. And we have seen in the past (especially with CPU coolers) higher-TDP-rated coolers lose to lower-rated ones for a lot of reasons (better quality, better tech, better materials, heatpipe placement, etc.).

I think the real reason might come from your review.

I don't believe in coincidence, but they decided to use it on a more expensive professional GPU with great success.

How do we know that the cooler used on the W8100 wasn't approved for the R9 290(X) because of its higher cost, perhaps?

PS: Am I asking too much if I ask any reviewer at Tom's to test this cooler on an R9 290? (If it's compatible, of course...)

bambiboom Gentlemen,

Treating the FirePro W8100 and Quadro K5000 as direct competitors is a bit misleading and distracts attention from the impressive features of the FirePro W8100.

The W8100 does outperform the Quadro K5000 in some important ways, but to be marketing competitors, the cards should be in the same general performance league. The W8100 is 56% more expensive; the price difference of $900 is more than enough to buy a K4000 (about $750).

On a marketing basis, a $60,000 car that is 50% faster is not a direct competitor to a $38,000 one. The use and expectations of performance and quality are different. The logic is to say, "If you're thinking of buying a Quadro K5000, you should know that for 56% more you can have 25-50% higher performance in several important, but not all, categories." These purchases are most often budget-driven (how many have unlimited funds?), and the buyer of a $1,600 card will be a different person from someone with a $2,500 budget. The buyer's quest is more often based on how much performance is expected combined with how much is possible within the budget.

These cards may serve the same applications, but for the W8100 to be better value than a K5000, it should have a consistent 56% performance advantage. A better comparison would be, for example, the W7000 and Quadro K4000. Both cost about $750, but the W7000 has a 256-bit bus, 4GB, 154GB/s of bandwidth, and 1280 stream processors against the K4000's 192-bit bus, 3GB, 134GB/s, and 768 CUDA cores. In PassMark PerformanceTest, a W7000 scoring near, but not at, the top reaches about 4300 in 3D and about 1000 in 2D, while a K4000 near the top scores about 3000 in 3D and 1100 in 2D. The news for AMD is even better considering that a $1,600 Quadro K5000 (double the W7000's cost, but also 4GB and 256-bit) scores about 4300 in 3D and about 900 in 2D near the top. For me, a better marketing strategy would be to compare the K5000 to the W7000, and the W8100 to a mythological "K5500" that would cost $2,800 (midway between 4 and 12GB and between $1600 and $5000).

This means that someone looking for the best performance for $750, who uses the applications the W-series is good at, has an easy choice in the W7000.

Still, its features (especially the 512-bit bus and 8GB) plus its overall performance make the W8100 one to consider at the upper end of workstation cards. This should be a very good animation/film editing card. The comments about AMD being more forward-looking than NVIDIA may be correct, though the comments about the quality of Quadro drivers also seem true. This furthers the trend of GPUs concentrating on certain functions (the W8100 in OpenCL, for example), so that GPUs have to be considered one by one according to the applications used. More and more, with complex 3D modeling and animation software, specific software drives graphics card choices, and except for the very top of the line, the cards seem to be less all-rounders than before, not good at everything.

BambiBoom

nebun Call me stupid, but how is a $2600 GPU fair competition for a $1600 GPU? Am I missing something? Also, the AMD GPU has more cores. This is not a fair comparison. Take an Nvidia card with the same number of cores and we'll see who comes out on top. AMD HAS THE WORST DRIVERS

falchard I would like to see these matched against their desktop counterparts in productivity tasks as well. Over the years we have seen a shift in architecture where the desktop part is pretty much the same as the workstation part, just with different drivers. Some of us need to compare the benefits of workstation cards over desktop cards. A few years ago, in CAD-based programs we would see a 400% or greater increase in performance compared to desktop chips, and knowing whether this is still the case is very important.

mapesdhs Typo: "... our processor runs at a base close rate ... "

I assume that should be, 'clock rate'.

Btw, how come the test suite has changed so that there is no longer any app, such as AE, for which NVIDIA cards can be strong because of CUDA support?

Ian.

mapesdhs A down-vote, eh? I guess the proverbial NVIDIA-haters still lurk, unwilling to present any rationale, as usual. :D

And falchard is right, Viewperf tests showed enormous differences between pro & gamer cards in previous years, but it seems vendors are deliberately blurring the tech now, optimising for consumer APIs (i.e. not OGL), which means pro tests often run well on gamer cards. In which case, where is their rationale for the cost difference? Apart from support and supposedly better drivers, basic performance used to be a major factor in choosing a pro card and a sensible justification for the extra cost, but this no longer appears to be the case. Check Viewperf11 scores for any gamer vs. pro card: the only test where a gamer card isn't massively slower is ENSIGHT-04. For MAYA-03, a Quadro 4000 is 3X faster than a GTX 580; for PROE-05, a Q4K is 10X faster; for TCVIS-02, a Q4K is 30X faster.

Today though, with Viewperf12, a 580 is faster than a K5000 for MAYA-04, about the same for CREO-01, about the same for SHOWCASE-01, and not that much slower for SW-03. Only for CATIA-04 and SNX-02 does the expected difference persist.

Meanwhile, we get OpenCL touted everywhere, even though there are plenty of apps which can exploit CUDA, yet there is little attempt to properly compare the two when the option to use the latter is also available, e.g. 3DS Max, Maya, Cinema4D, AE, LW, SI, etc.

Ian.

PS. nebun, the core structure on these cards is completely different. The number of cores is a totally useless measure; it tells one nothing. One can't even compare between different cards from the same vendor, e.g. a GTX 770 has way more cores than a GTX 580, but a 580 hammers the 780 for CUDA. Indeed, a 580 beats all the 600 series cards for CUDA despite having far fewer cores (it's because the newer cards use a much lower core clock, less bandwidth per core, etc.)

ddpruitt Doesn't Tom's do copy editing? What's up with the chart with blanks at the bottom of page 13?

It's nice to see that AMD is starting to close the gap with its products. They seriously need to consider updating their cooling solutions and improving power efficiency. I would be interested to see whether these workstation cards throttle down as often as their desktop counterparts. In my experience, most of the current Hawaii chips run at higher voltages than needed, and they could save both power and heat by turning them down a bit. That should allow the boards to stay stable and compete better in many workloads.