SSD 102: The Ins And Outs Of Solid State Storage

The benefits introduced by solid state drives are undeniable. However, there are a few pitfalls to consider when switching to this latest storage technology. This article provides a rundown for beginners and decision makers.


Some Numbers: Performance and Power Consumption

There is no substitute for studying reviews and comparing benchmark data before purchasing SSDs, especially if you’re buying a larger number of drives for servers. All manufacturers promise 230+ MB/s read and 180+ MB/s write throughput, as well as thousands of I/O operations per second for their drives. While most products deliver on these expectations when it comes to peak performance, the minimum and average performance numbers can be significantly lower. This means that you should always plan with minimum performance numbers to avoid issues in your business environment. In the end, a drive that usually writes at around 200 MB/s will still be unsuitable for high-performance environments if it drops to 40 MB/s here and there.
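To illustrate planning against minimum rather than peak numbers, here is a minimal Python sketch. The sample values, the 150 MB/s requirement, and the function names are all hypothetical and chosen only for illustration; they are not measurements of any real drive.

```python
from statistics import mean


def summarize_throughput(samples_mb_s):
    """Return (minimum, average, peak) for throughput samples in MB/s."""
    if not samples_mb_s:
        raise ValueError("need at least one sample")
    return min(samples_mb_s), mean(samples_mb_s), max(samples_mb_s)


def meets_requirement(samples_mb_s, required_mb_s):
    """Judge a drive by its worst observed sample, not its peak or average."""
    minimum, _, _ = summarize_throughput(samples_mb_s)
    return minimum >= required_mb_s


# Hypothetical write-throughput samples: mostly around 200 MB/s,
# with occasional drops to roughly 40 MB/s.
samples = [200, 205, 198, 40, 202, 199, 45, 201]

minimum, average, peak = summarize_throughput(samples)
print(f"min={minimum} MB/s, avg={average:.1f} MB/s, peak={peak} MB/s")
print("Meets a 150 MB/s floor:", meets_requirement(samples, 150))
```

With these made-up samples the peak looks healthy, but the minimum is what decides whether the drive clears the assumed floor.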

I/O Enables Our Digital Lives

Ultimately, it does not matter much whether an SSD reads at 220 or at 250 MB/s, or if it writes at 210 or 180 MB/s. Only hardcore enthusiasts will be able to tell the difference. However, SSDs make much more of a difference in enterprise scenarios, where very large numbers of I/O operations per second are far more important than throughput.

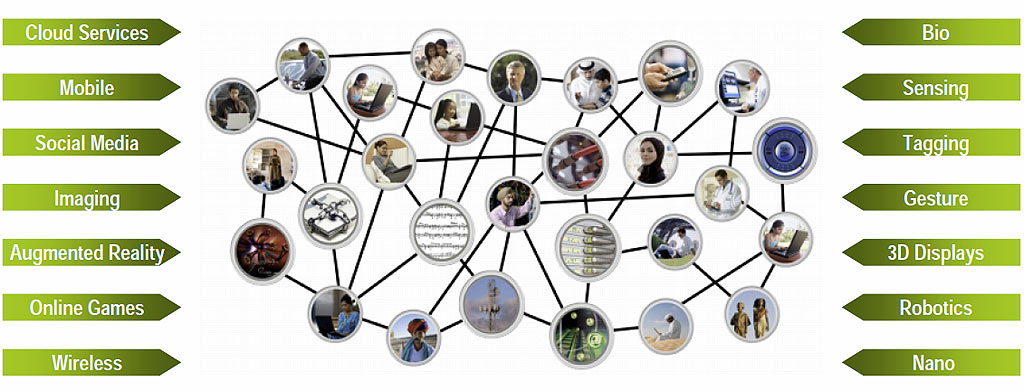

If you think about how many users log on to Web services at any point in time, you'll realize that we’re discussing a number of I/Os that no one can grasp anymore. Facebook alone reputedly has some 400 million active users, and each of their login requests triggers many read and write operations. Despite this massive traffic, we still expect immediate responses to all of our clicks and requests. Now consider how your digital footprint spreads: logins across various Web sites, analytics, tracking, reposts by other users, and so on. You get the point. We need SSD-class performance to help manage this data avalanche.

Power Consumption

The same thinking applies to power consumption. Why should we care if drives with less than 0.1 W idle power go from 2 W to 1.5 W from one SSD generation to the next? With laptops, this is mainly relevant for road warriors who want maximum battery runtime. But from a global perspective, especially including data centers, each watt can matter. According to IDC, servers worth $42.2 billion were purchased in 2009. The cost of the power required to operate those servers was $32.6 billion. Every watt of power required to run data center hardware requires 2.5 W of additional power for cooling.
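The cooling overhead makes small per-drive savings add up. The following Python sketch works through the arithmetic using the article's figures (2 W down to 1.5 W per drive, 2.5 W of cooling per watt of hardware power). The fleet size of 10,000 drives and the function names are assumptions made purely for illustration.

```python
# From the article: each watt of hardware power needs 2.5 W of extra cooling power.
COOLING_OVERHEAD_W_PER_W = 2.5


def total_power_w(hardware_w, cooling_overhead=COOLING_OVERHEAD_W_PER_W):
    """Hardware power plus the cooling power it causes, in watts."""
    return hardware_w * (1 + cooling_overhead)


def fleet_savings_w(old_drive_w, new_drive_w, drive_count):
    """Total watts saved (hardware plus cooling) across a fleet of drives."""
    return total_power_w(old_drive_w - new_drive_w) * drive_count


# Article figures: a drive generation going from 2 W to 1.5 W.
per_drive = total_power_w(2.0 - 1.5)

# Hypothetical fleet size, not taken from the article.
fleet = fleet_savings_w(2.0, 1.5, drive_count=10_000)

print(f"Saved per drive including cooling: {per_drive} W")
print(f"Saved across 10,000 drives: {fleet} W")
```

Under these assumptions, a 0.5 W improvement per drive becomes 1.75 W once cooling is counted, or 17.5 kW across the assumed fleet.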

Where To Go?


Clearly, the metrics are changing. Whereas companies used to look at gigabytes or performance per dollar, today's managers are more interested in I/Os per dollar, I/Os per watt, and sometimes gigabytes per watt. These metrics clearly favor SSDs. In the end, what matters is expanding data center performance and capacity without physically expanding the data center itself.
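The metrics named above are simple ratios, and a short Python sketch shows how they might be computed side by side. The `Drive` class and both sets of figures are hypothetical placeholders, not data for any real product, so the output says nothing about actual drives.

```python
from dataclasses import dataclass


@dataclass
class Drive:
    """Hypothetical drive description used only for this illustration."""
    name: str
    price_usd: float
    capacity_gb: float
    iops: float
    power_w: float

    def iops_per_dollar(self):
        return self.iops / self.price_usd

    def iops_per_watt(self):
        return self.iops / self.power_w

    def gb_per_watt(self):
        return self.capacity_gb / self.power_w

    def gb_per_dollar(self):
        return self.capacity_gb / self.price_usd


# Made-up figures; substitute measured minimum IOPS and real prices.
drive_a = Drive("drive A", price_usd=400.0, capacity_gb=128.0, iops=20000.0, power_w=2.0)
drive_b = Drive("drive B", price_usd=100.0, capacity_gb=1000.0, iops=200.0, power_w=8.0)

for d in (drive_a, drive_b):
    print(
        f"{d.name}: {d.iops_per_dollar():.1f} IOPS/$, "
        f"{d.iops_per_watt():.1f} IOPS/W, "
        f"{d.gb_per_watt():.1f} GB/W, "
        f"{d.gb_per_dollar():.2f} GB/$"
    )
```

Which drive comes out ahead depends entirely on the inputs and on which ratio a given workload cares about, which is why the choice of metric matters.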


Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.

-

Lewis57 A very good article. I love these articles explaining everything. I'm planning on buying two OCZ Vertex 2E 60GB for RAID-0 when I get enough money. Can't wait, should be one hell of an upgrade from a single 5400rpm WD green drive. -

ares1214 Memristors might make SSDs shorter lived than people thought, but who knows. Great article btw. -

JoeSchmuck From what I understand, TRIM is supported under IDE mode using Win7 as well, so you do not need AHCI. I have a Samsung device with VBM19C1Q firmware and am running in IDE mode. -

Earlier this year we deployed a 5 node failover cluster with an iSCSI backend. Each of the VM host servers utilizes a pair of solid state drives for booting and operating, with VMs running off of iSCSI shared cluster volumes. The servers are unbelievably fast and stable - 6 months of 100% uptime on Windows 2008R2. We only use magnetic HDDs now for transporting backups off site.

-

One thing that I'm very curious about: if we follow Tomshardware's advice to turn off disk defragmentation, the files on the SSD would become fragmented over time.

Upon SSD data loss, can we recover the data files if they are fragmented, especially on an SSD that has never been defragmented, as Tomshardware had recommended? -

randomizer Defragmentation of an SSD is not entirely unnecessary. It's important to distinguish between file fragmentation and free space fragmentation. The former is not an issue with SSDs because all parts of an SSD can be read at the same rate (the same is true for writing if the blocks are clean). But fragmentation of free space, whereby free space is largely distributed across partially-filled blocks, can severely reduce the performance of an SSD. Any time a file of <512kB is written to an SSD, it will take up only part of a block. However, the SSD will eventually run out of clean blocks and will need to re-arrange the data by erasing partially-filled blocks and consolidating them to free up more blocks for further writing. Running a free space defragmentation on the drive will aggressively consolidate the data on-demand so that you don't have the problem occurring when you didn't plan for it.

Most SSDs will perform this process themselves when idle for extended periods, but it happens at a slow rate. This is what most manufacturers refer to when they talk about Garbage Collection. -

Alvin Smith Please send me the four fastest 256GB SSDs on the market, so that I might perform my own comparison ... I'll just sit by the door and wait for UPS to arrive.

Thanks, in advance !!

= Alvin =

-

gordonaus I put an SSD in my new computer and it was good, but after I got the firmware update and changed to AHCI it was AMAZING (OCZ Vertex 2 60GB). I would say tho that 60 GB is not enough; I installed Windows, Photoshop and a few other design programs and I only have 20GB left.