For Honor Performance Review

Benchmarks: Frame Rate, Frame Time, and Smoothness

Benchmark Sequence

For Honor features a built-in benchmark that lasts about 70 seconds, and we use it for all of the tests on this page.

Some of the game's campaign missions (for example, Valkenheim in winter, which makes generous use of fog) are more graphics-bound than other scenarios, regardless of the detail options you choose.

Extreme Quality - 1080p, 1440p, and 4K

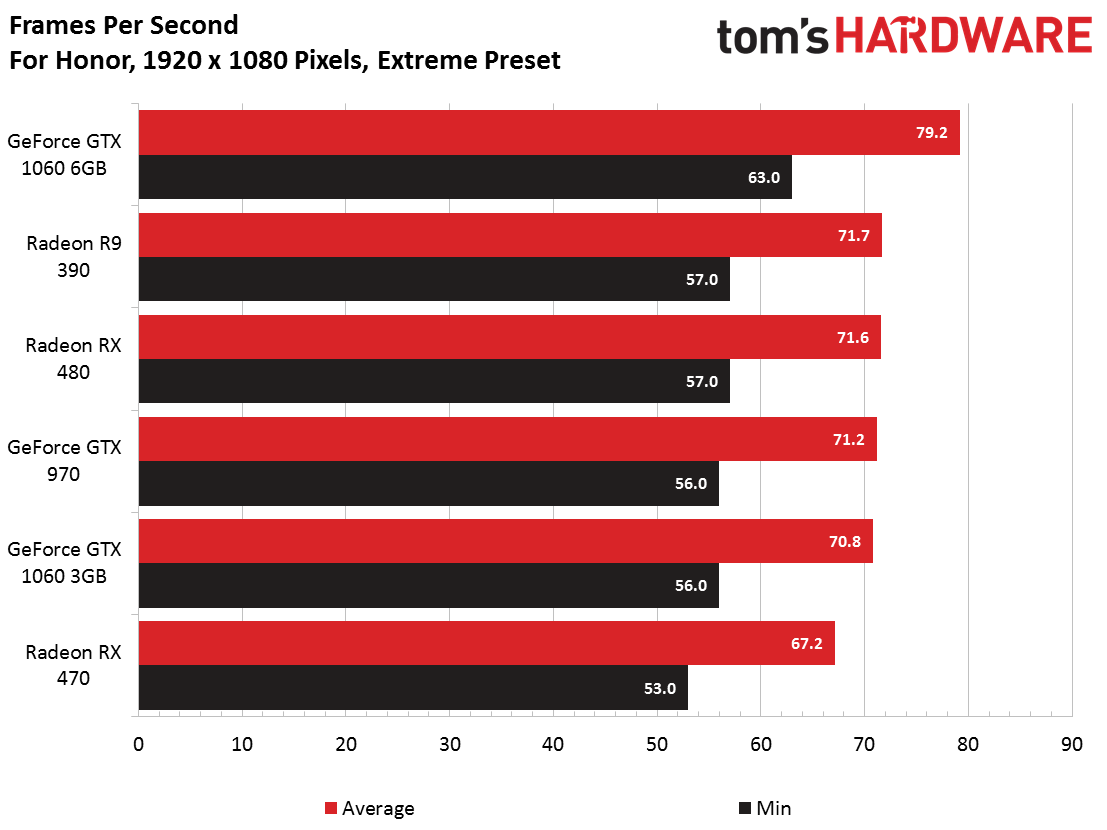

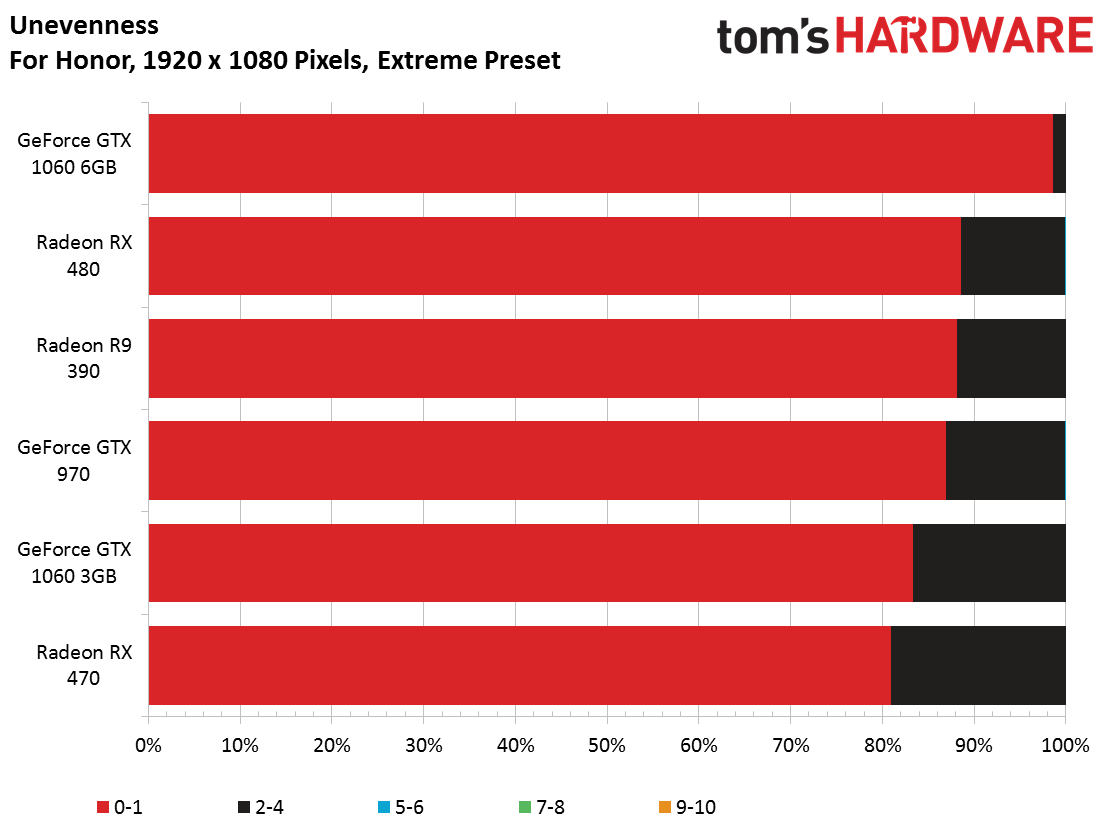

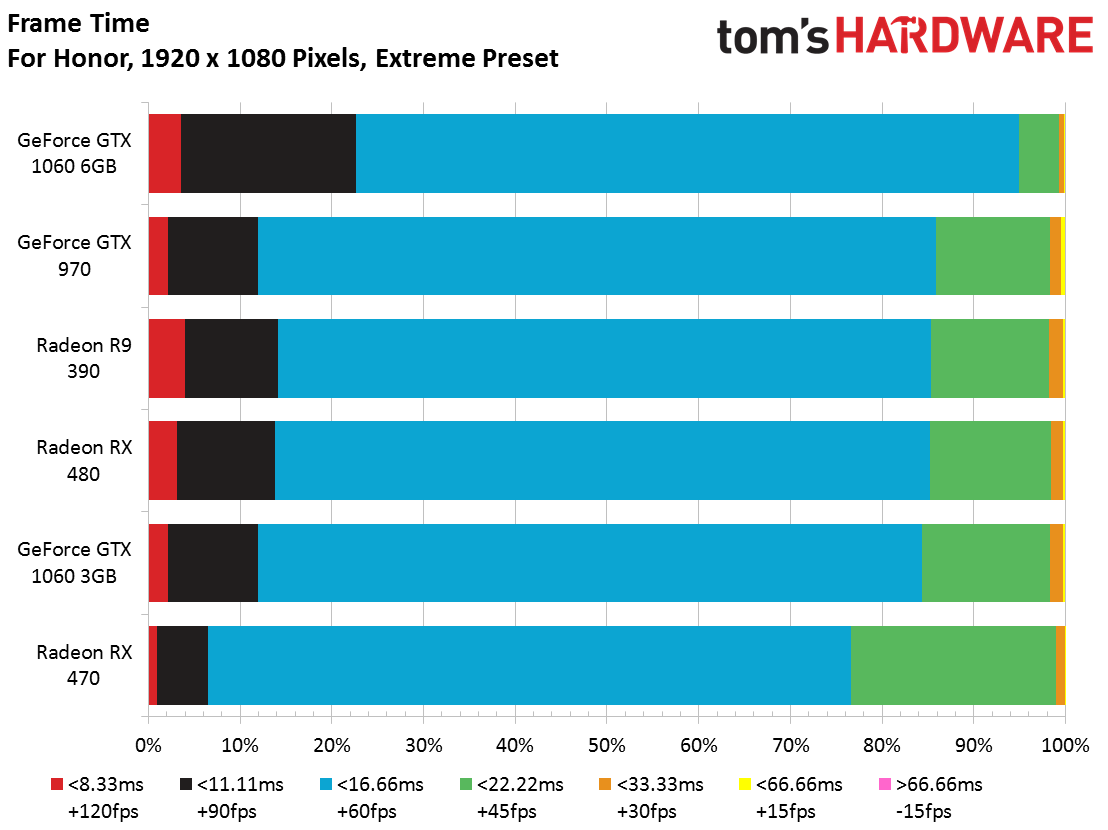

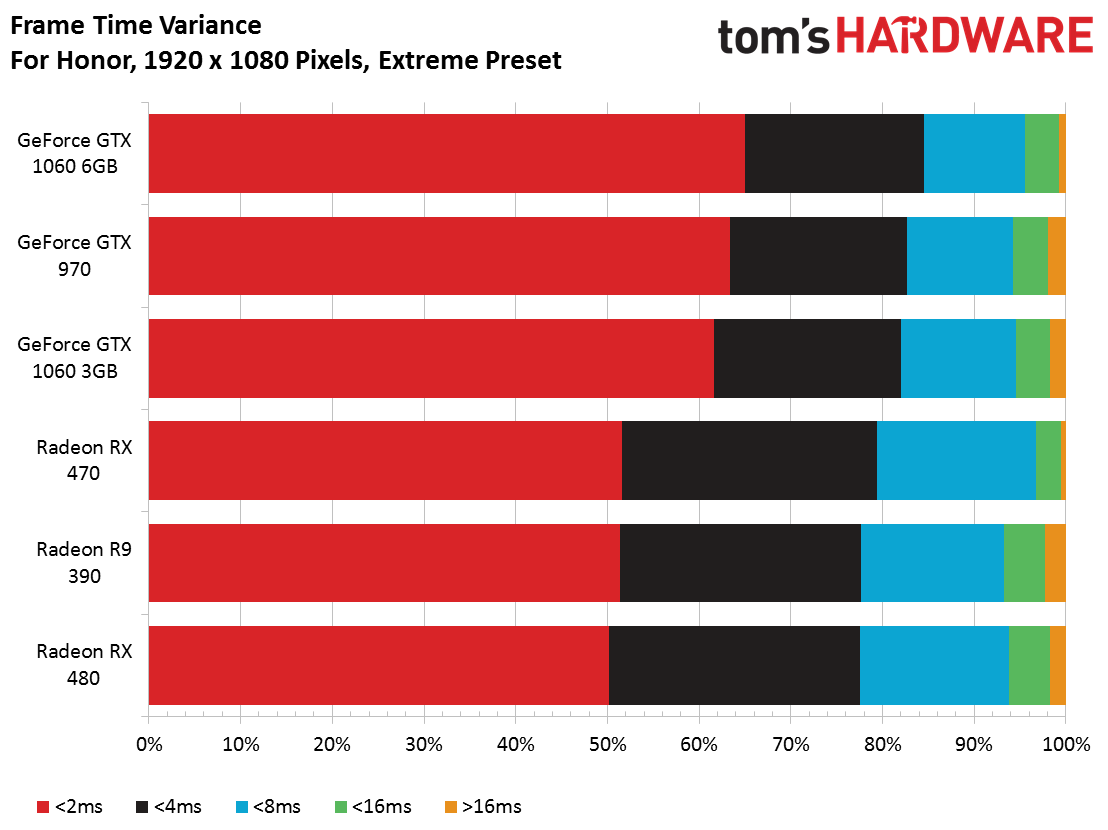

At Full HD, using the Extreme preset, a GeForce GTX 1060 6GB lands at the top of our charts. AMD's Radeon RX 470 represents the other end of the spectrum. The four cards between those two perform almost identically. From top to bottom, though, these six products achieve fairly smooth frame rates.

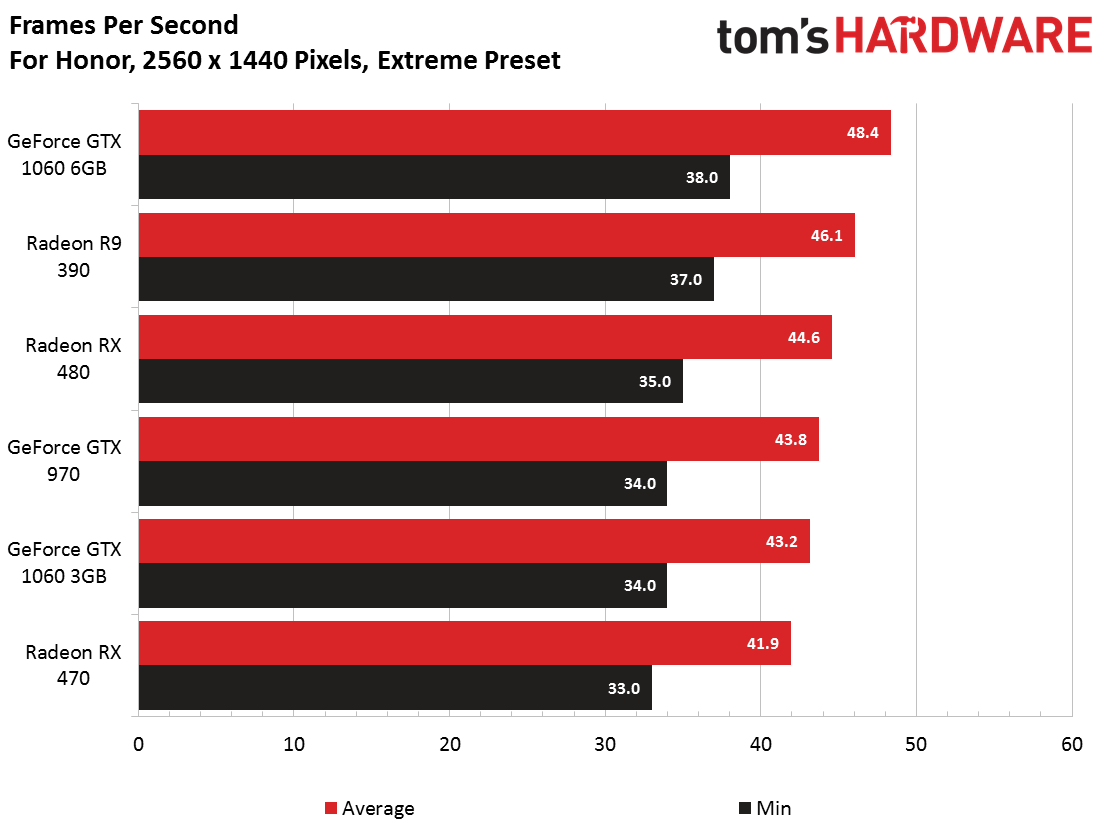

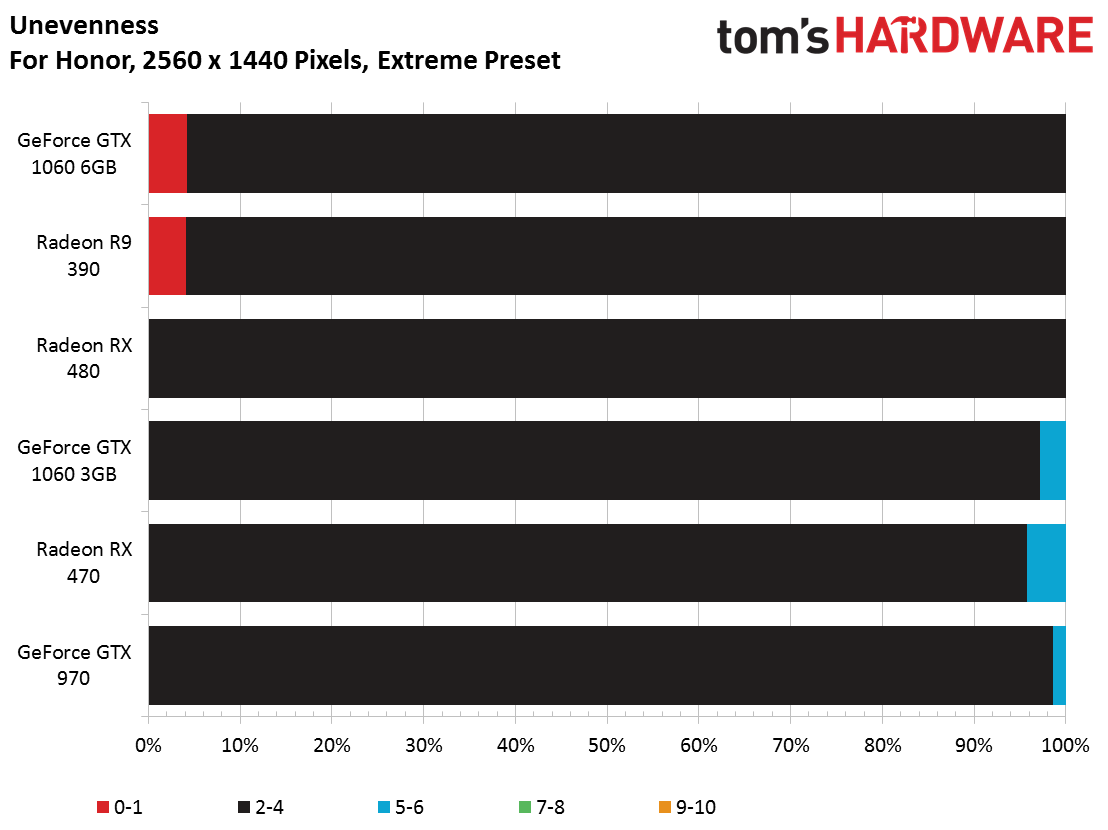

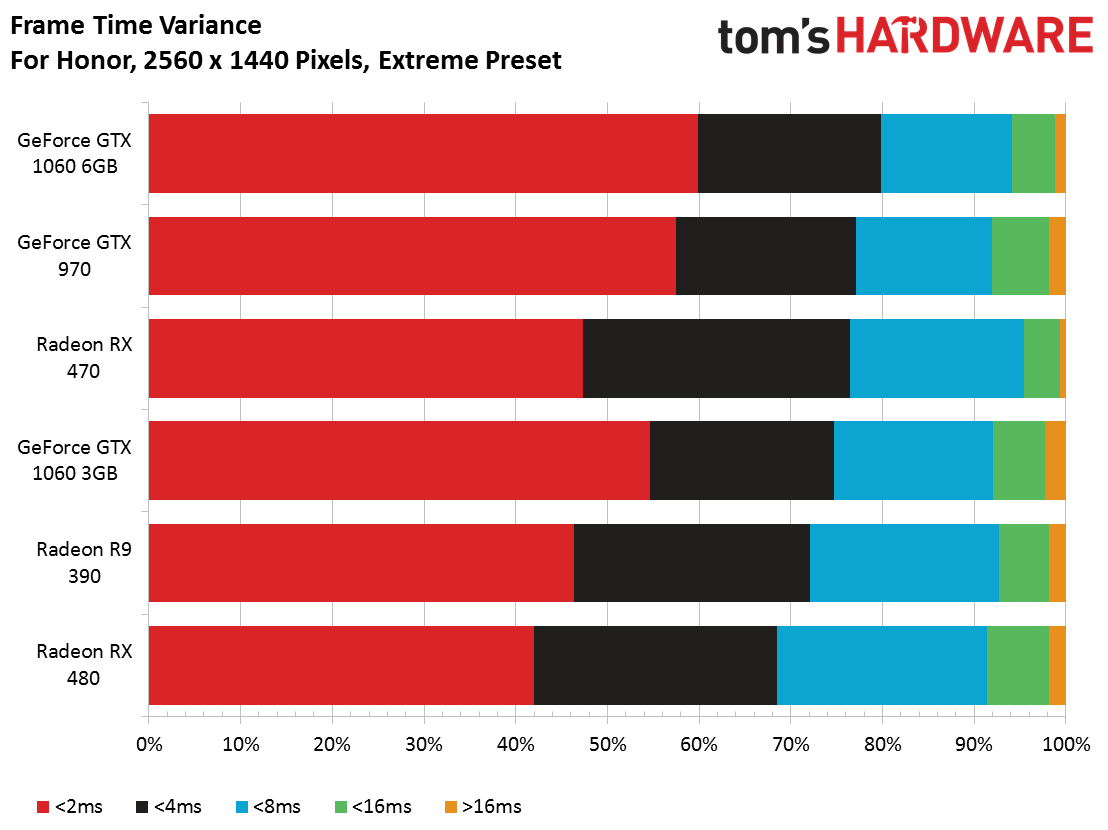

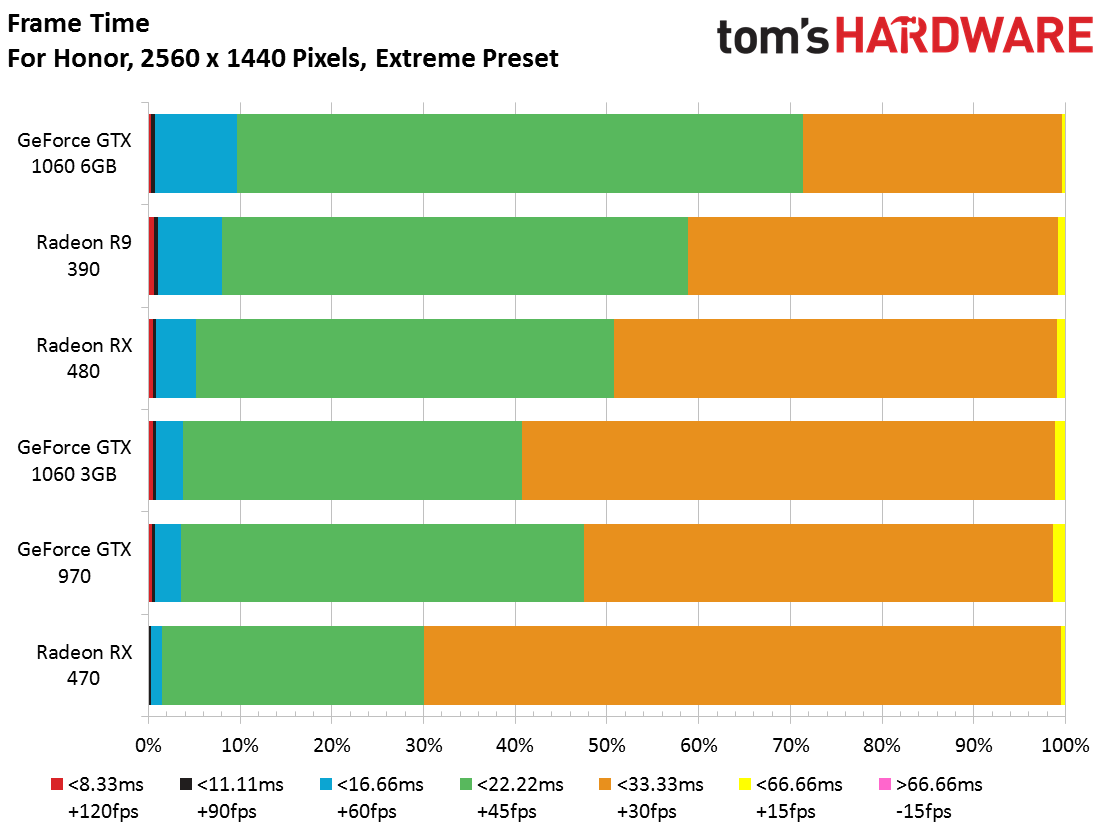

Not surprisingly, performance falls as we shift to 1440p (still at Extreme quality). While all of the cards average more than 40 frames per second, their minimum frame rates drop into the 30s during our benchmark sequence. Even the GeForce GTX 1060 6GB struggles, barely besting AMD's previous-gen Radeon R9 390.
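To illustrate how an average above 40 FPS can coexist with minimums in the 30s, here is a minimal Python sketch that derives both figures from a list of per-frame render times. It is not the tooling used for this review. The function name `summarize_frame_times` and the sample data are hypothetical, and the sketch assumes "minimum" means the instantaneous rate of the single slowest frame.

```python
from typing import Dict, Sequence


def summarize_frame_times(frame_times_ms: Sequence[float]) -> Dict[str, float]:
    """Return average and minimum FPS for a run of frame times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS is total frames divided by total elapsed time,
    # not the mean of each frame's instantaneous rate.
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds

    # Assumption: minimum FPS is the rate implied by the slowest frame.
    min_fps = 1000.0 / max(frame_times_ms)

    return {"avg_fps": avg_fps, "min_fps": min_fps}


# Hypothetical sample: mostly 20 ms frames with two 30 ms frames mixed in.
sample = [20.0, 20.0, 20.0, 20.0, 30.0, 30.0, 20.0, 20.0]
stats = summarize_frame_times(sample)
print(f"average: {stats['avg_fps']:.1f} FPS, minimum: {stats['min_fps']:.1f} FPS")
```

In this made-up run, two slow frames are enough to pull the minimum well below the average, which is why the charts report frame time and smoothness alongside average frame rate.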

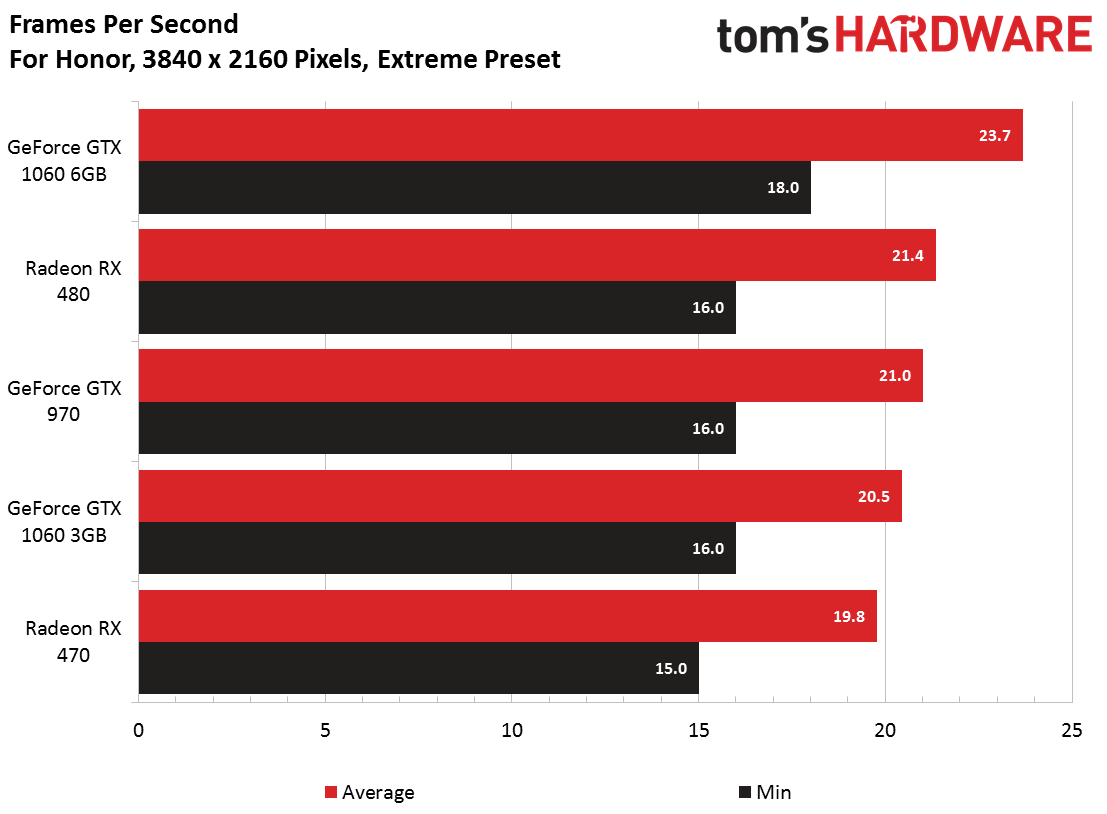

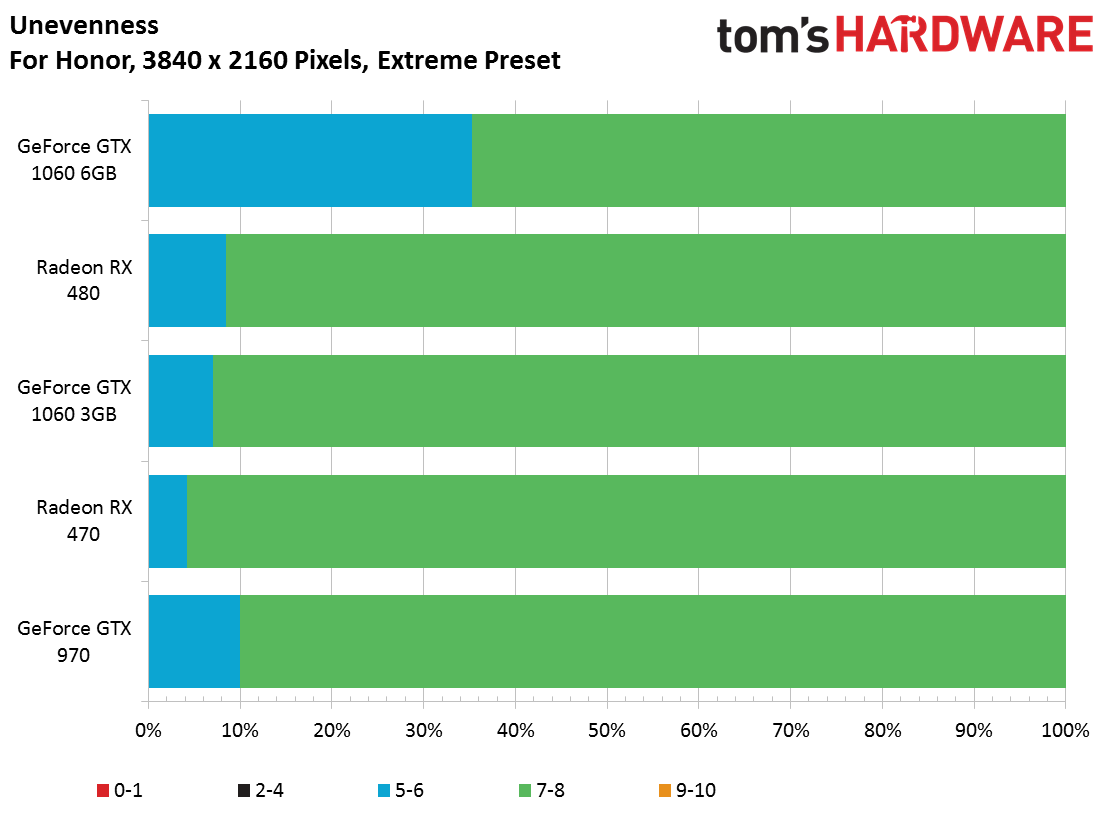

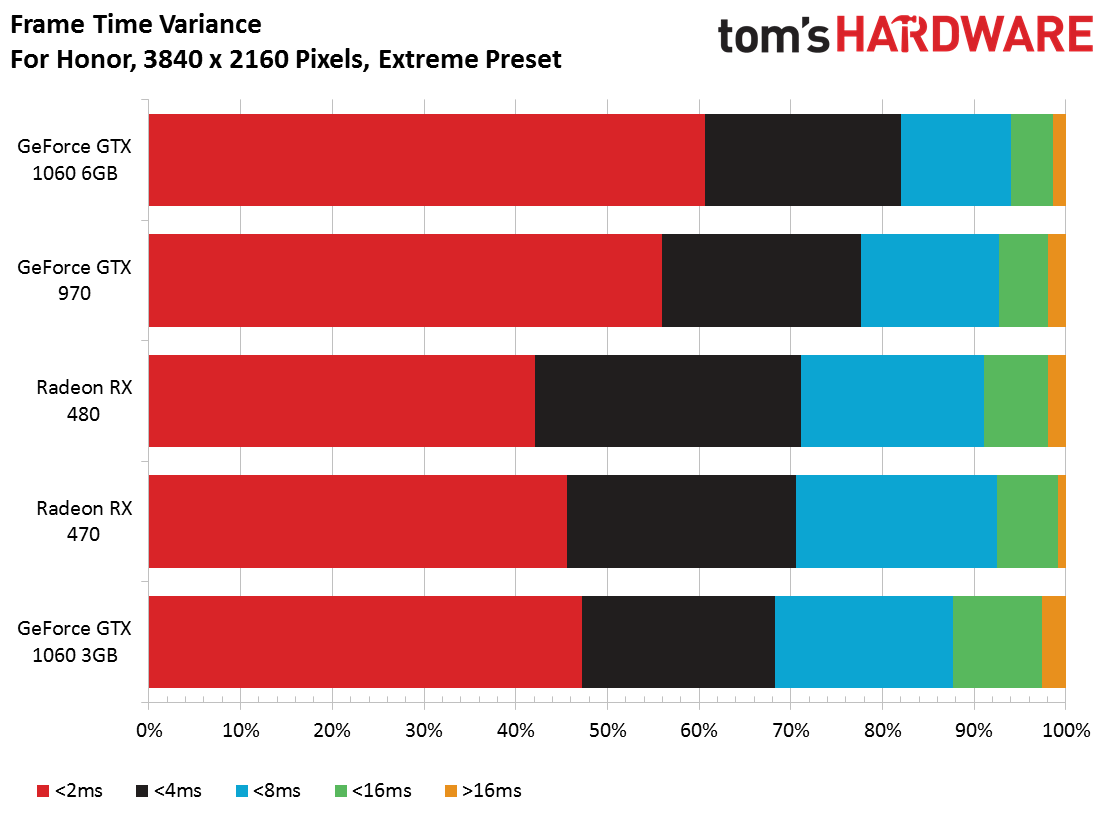

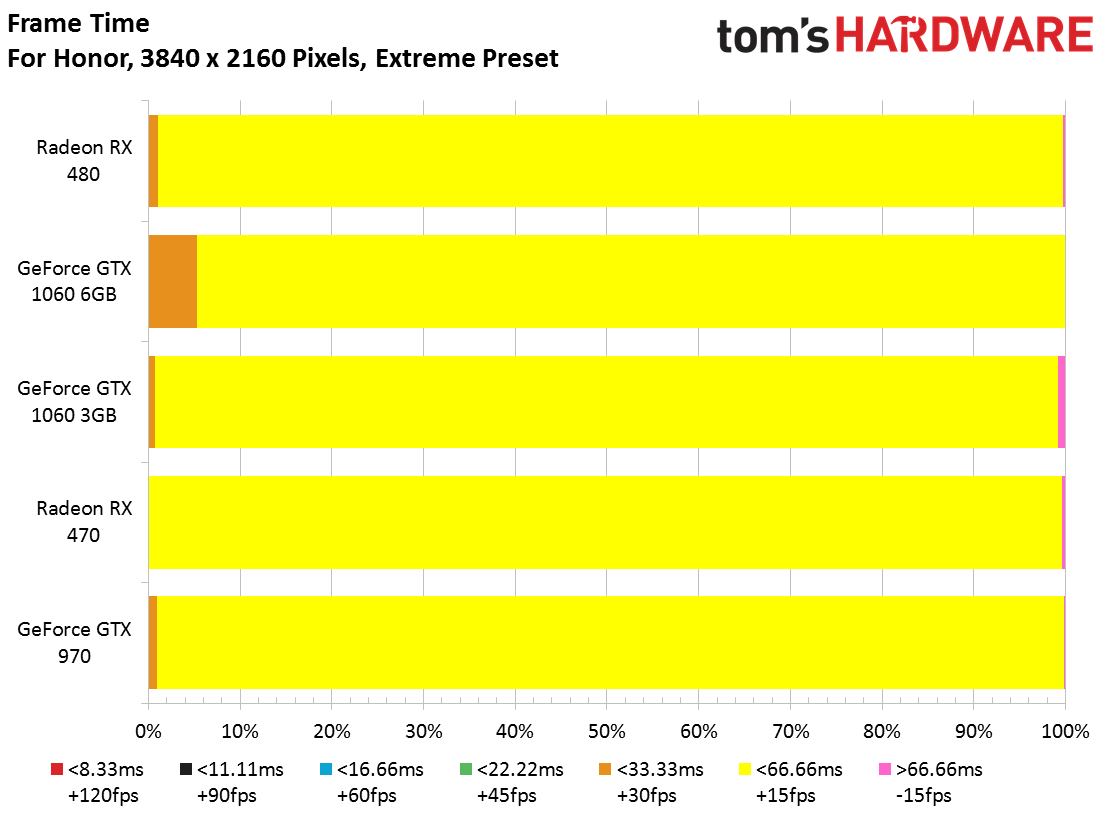

Gaming at 4K just isn't viable on mainstream hardware, even in a title like For Honor that doesn't demand a lot of rendering horsepower. Our Radeon R9 390 simply refused to launch the benchmark at this resolution.

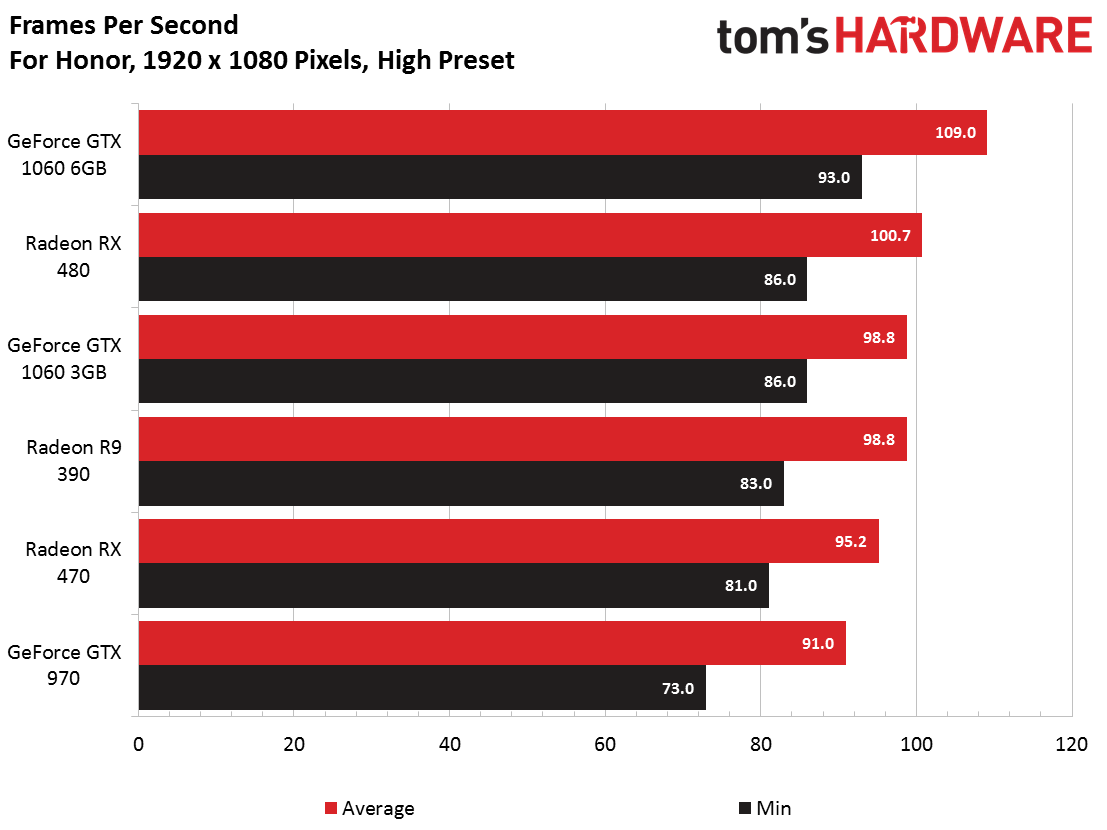

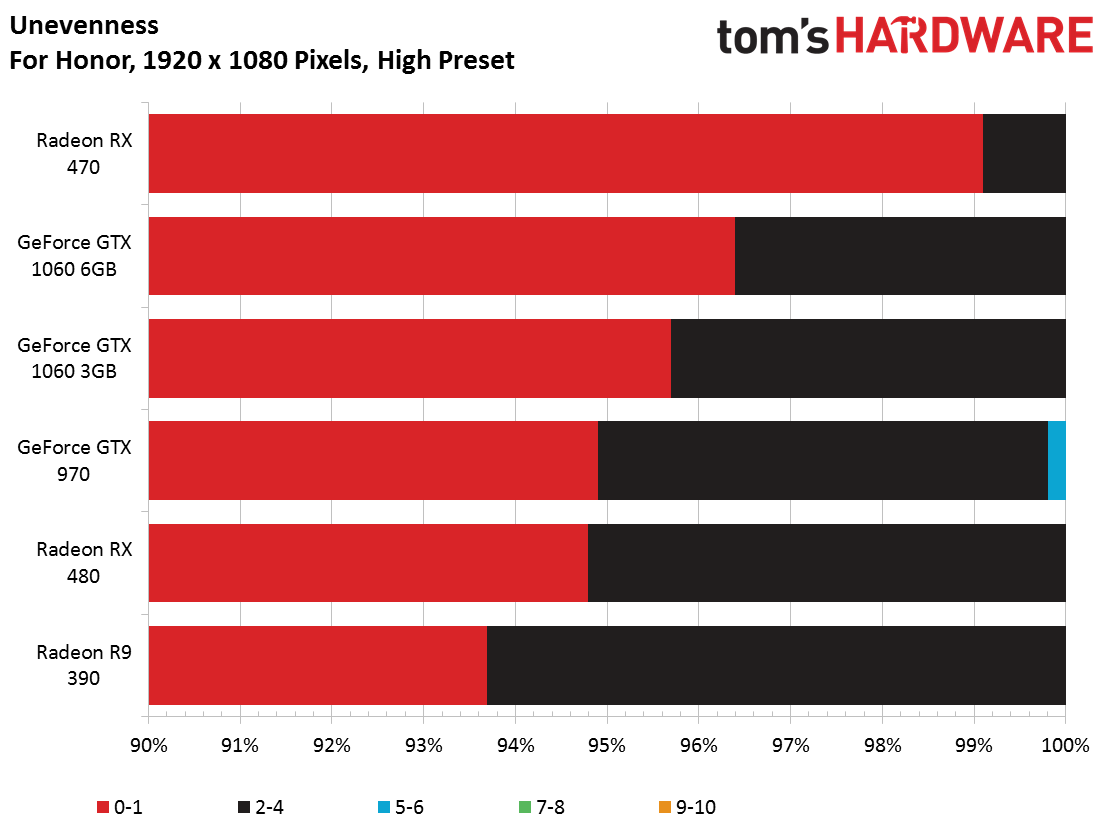

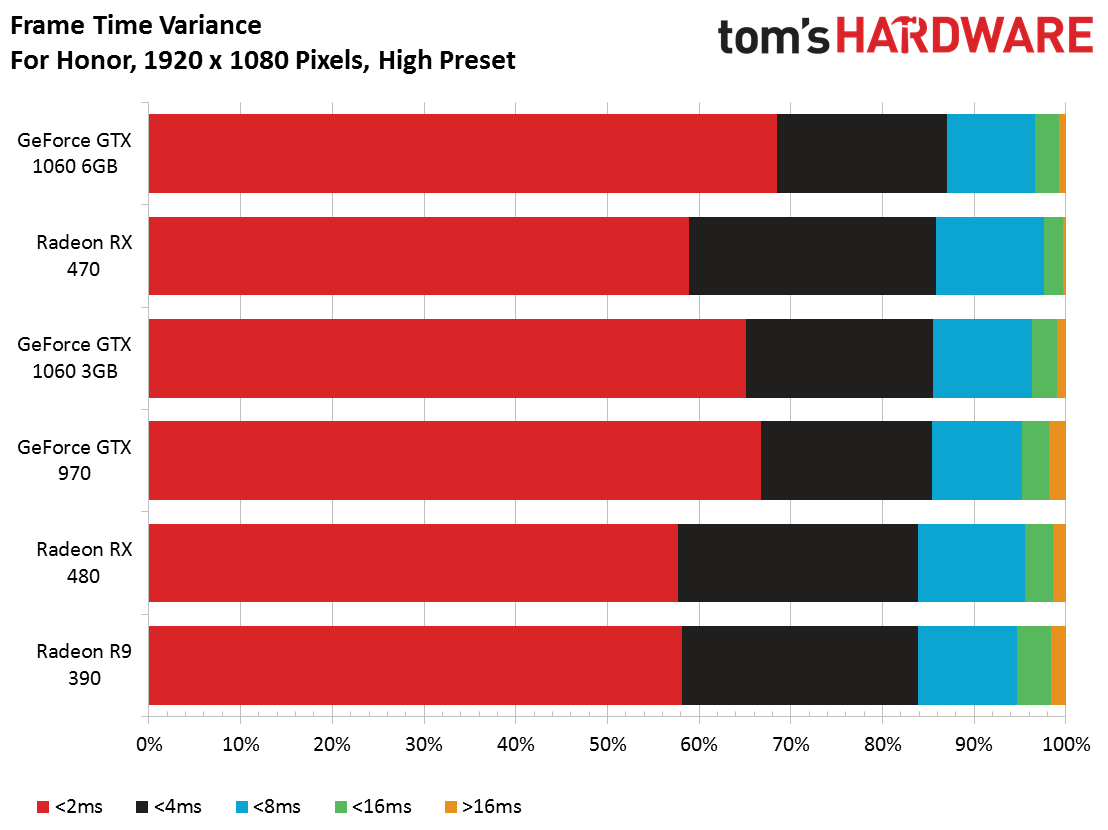

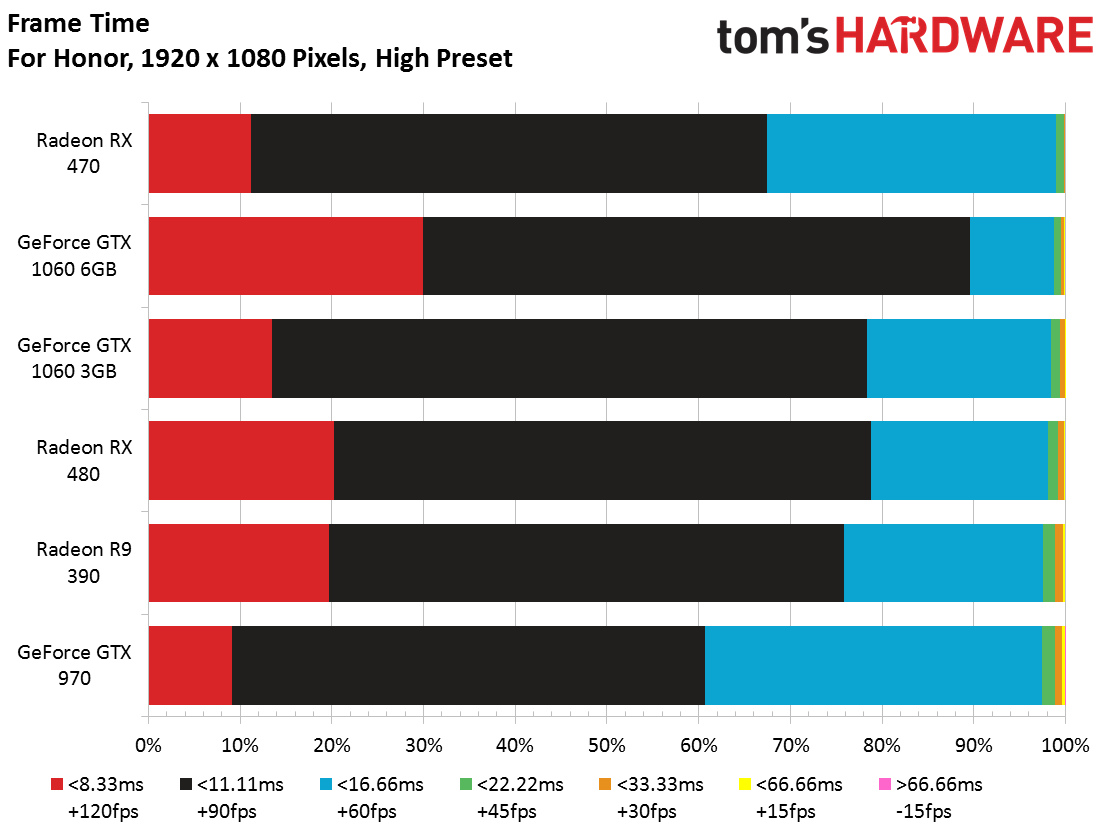

High Quality - 1080p, 1440p, and 4K

Pulling back to the High preset increases performance at 1080p, just as you'd expect. This time, all of the cards average more than 90 FPS, and the Radeon RX 480 and GeForce GTX 1060 6GB even pass the 100 FPS mark. Only the GeForce is able to keep its nose above 90 FPS throughout our benchmark run, though. Interestingly, the GeForce GTX 970 falls behind AMD's Radeon RX 470.

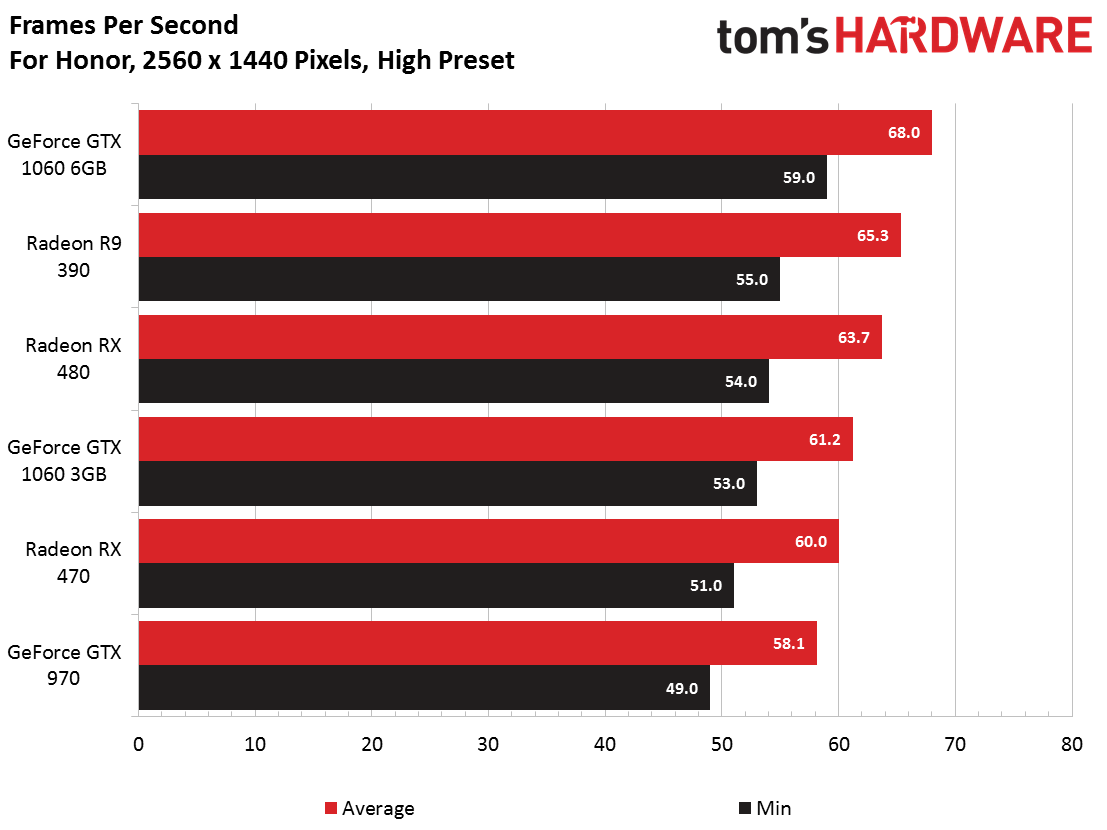

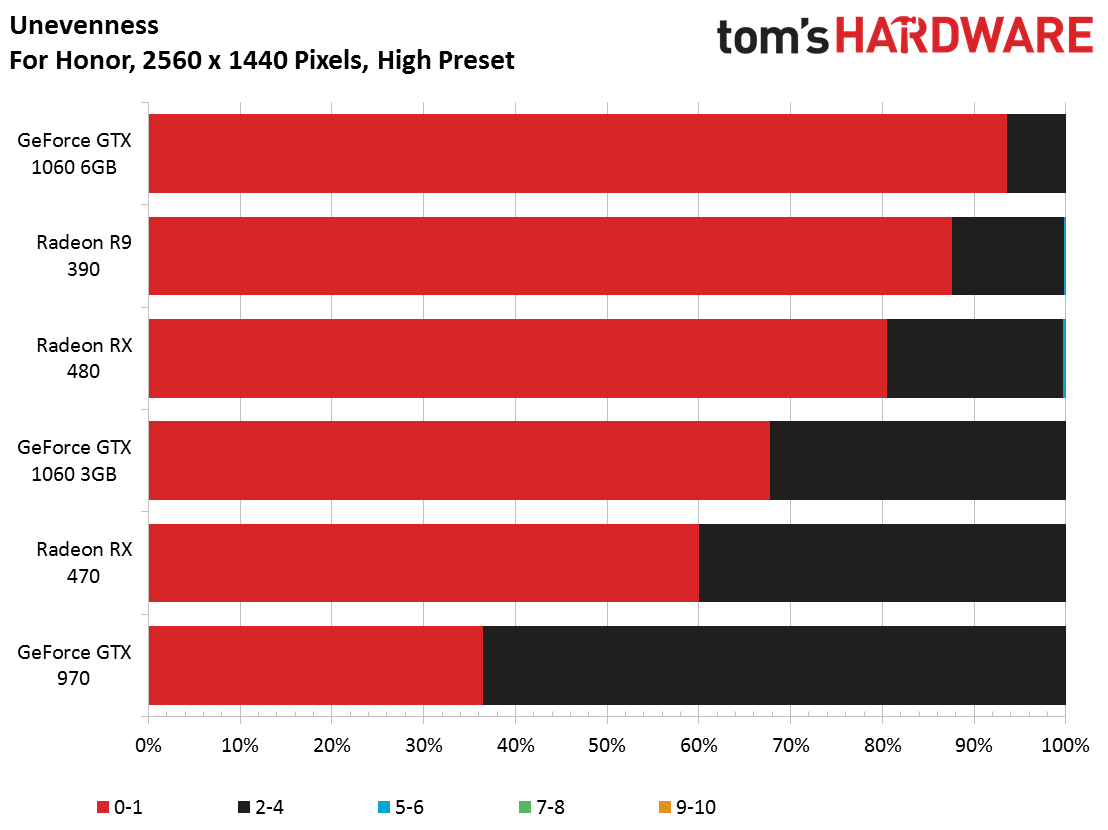

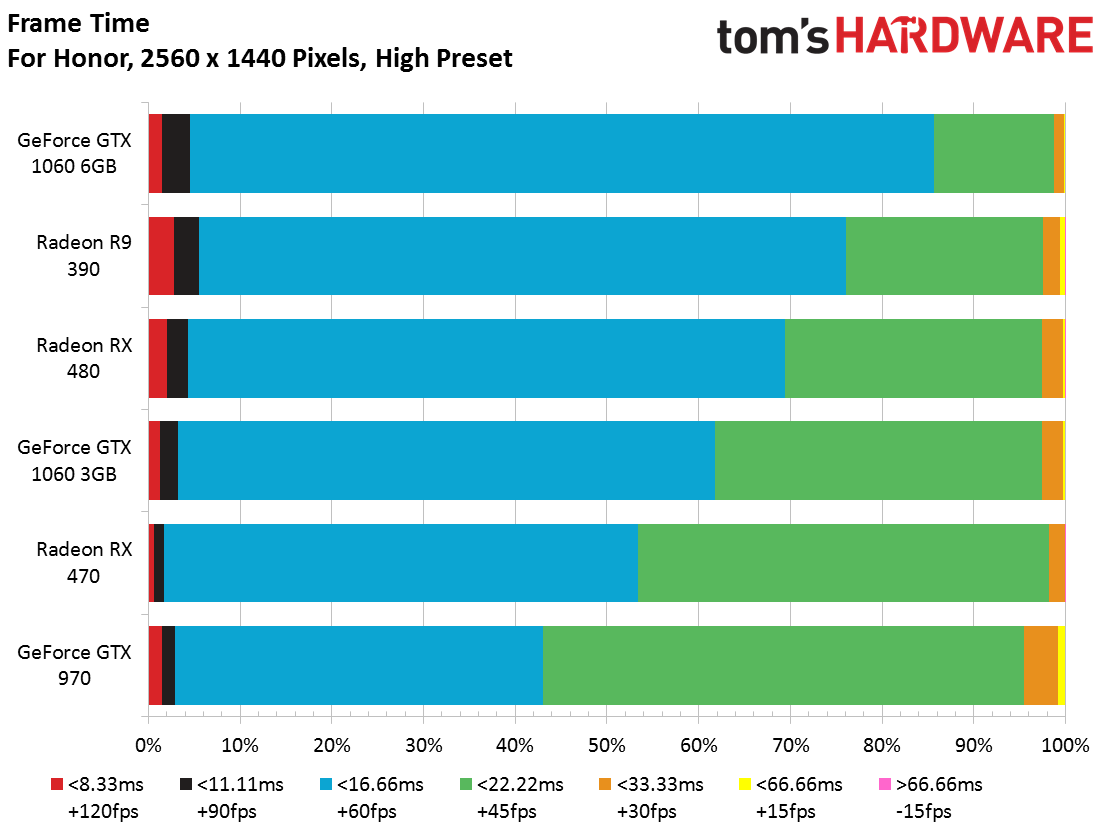

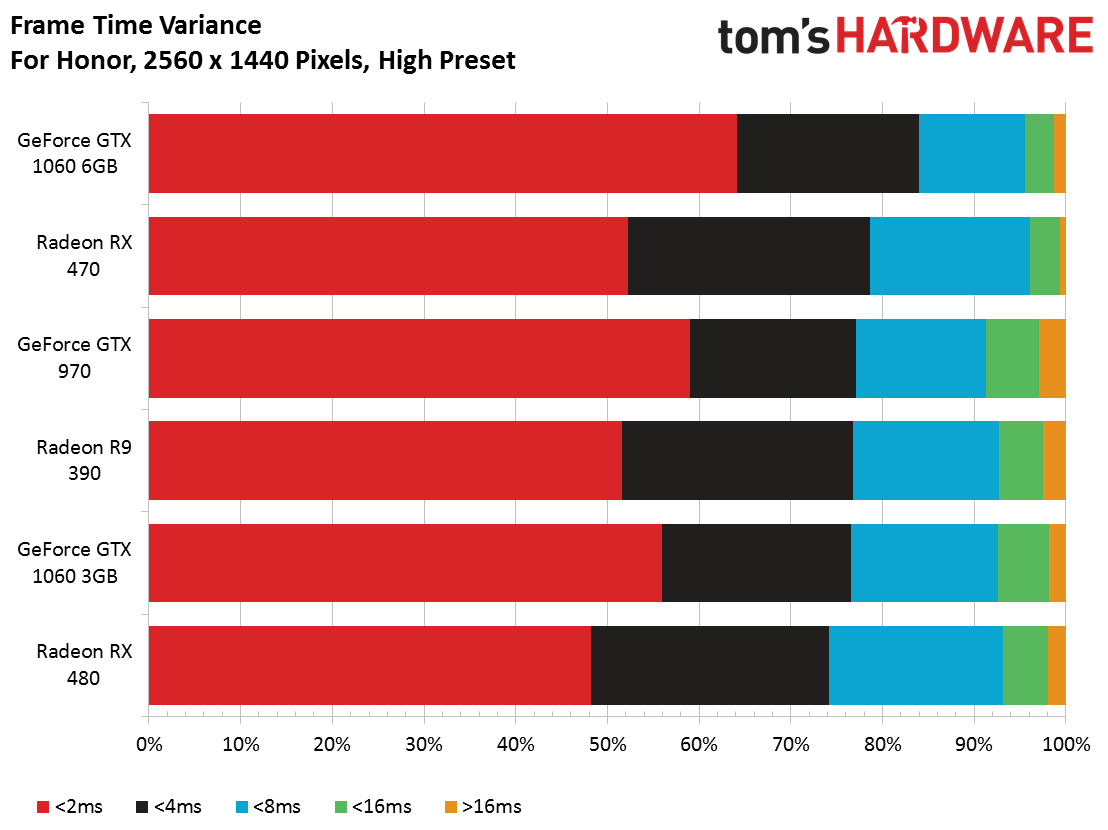

At 1440p and High-quality settings, every card except for the GTX 970 achieves an average of 60 FPS or more. The game plays through smoothly on all cards, though.

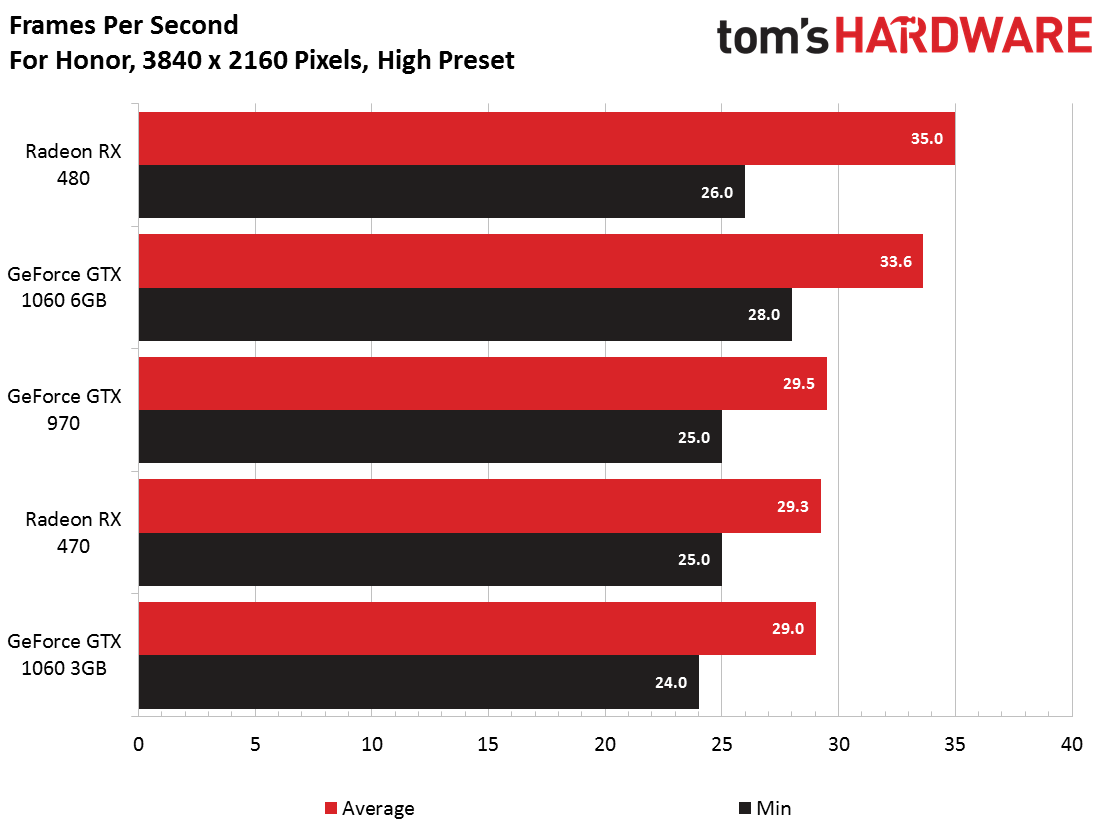

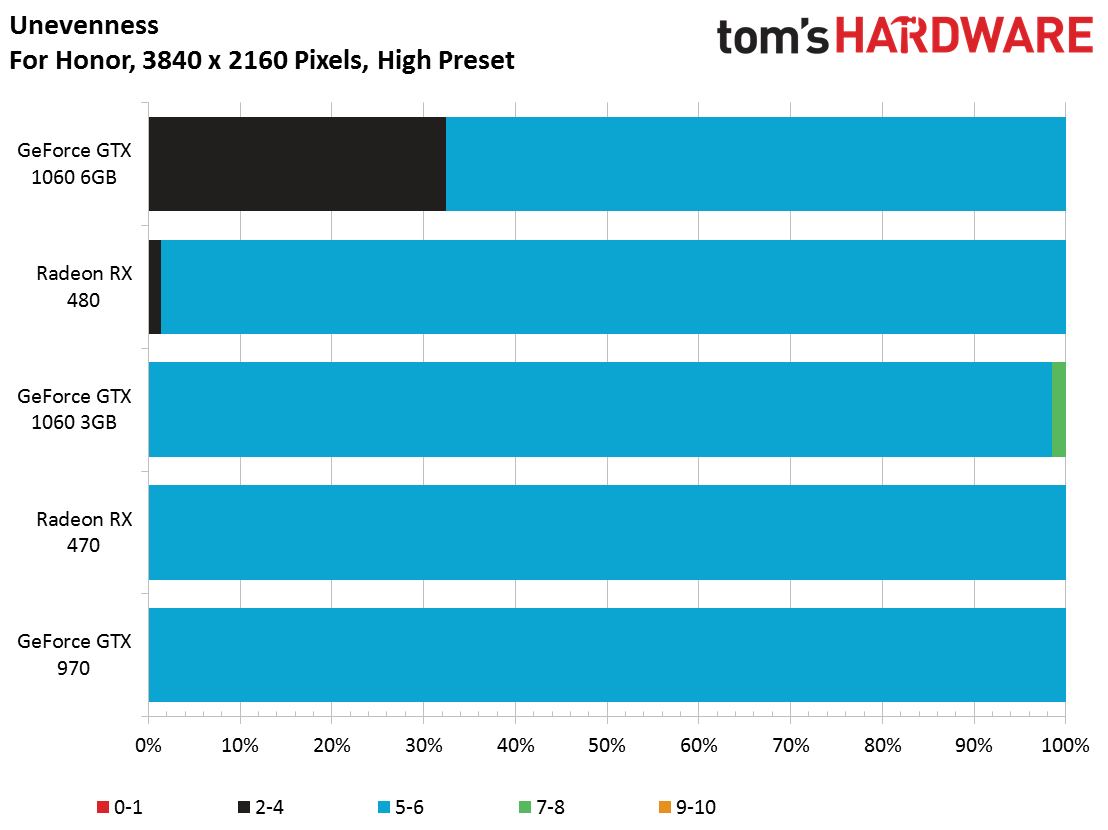

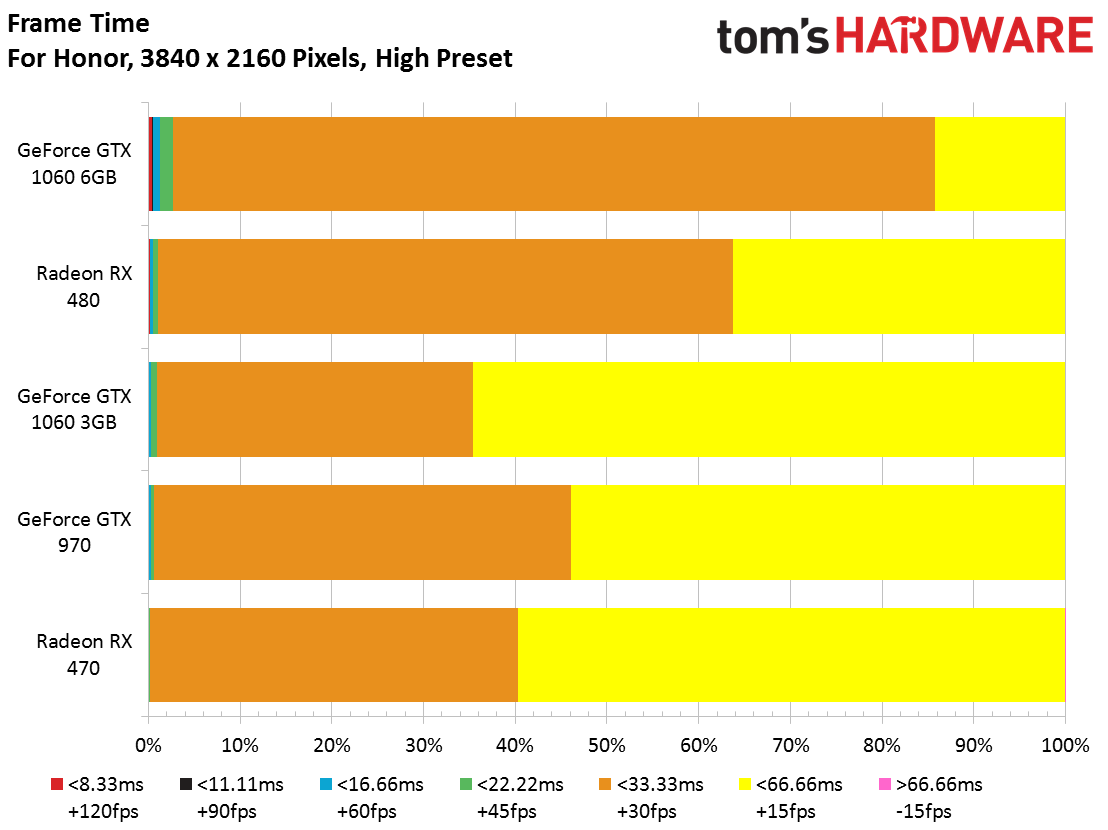

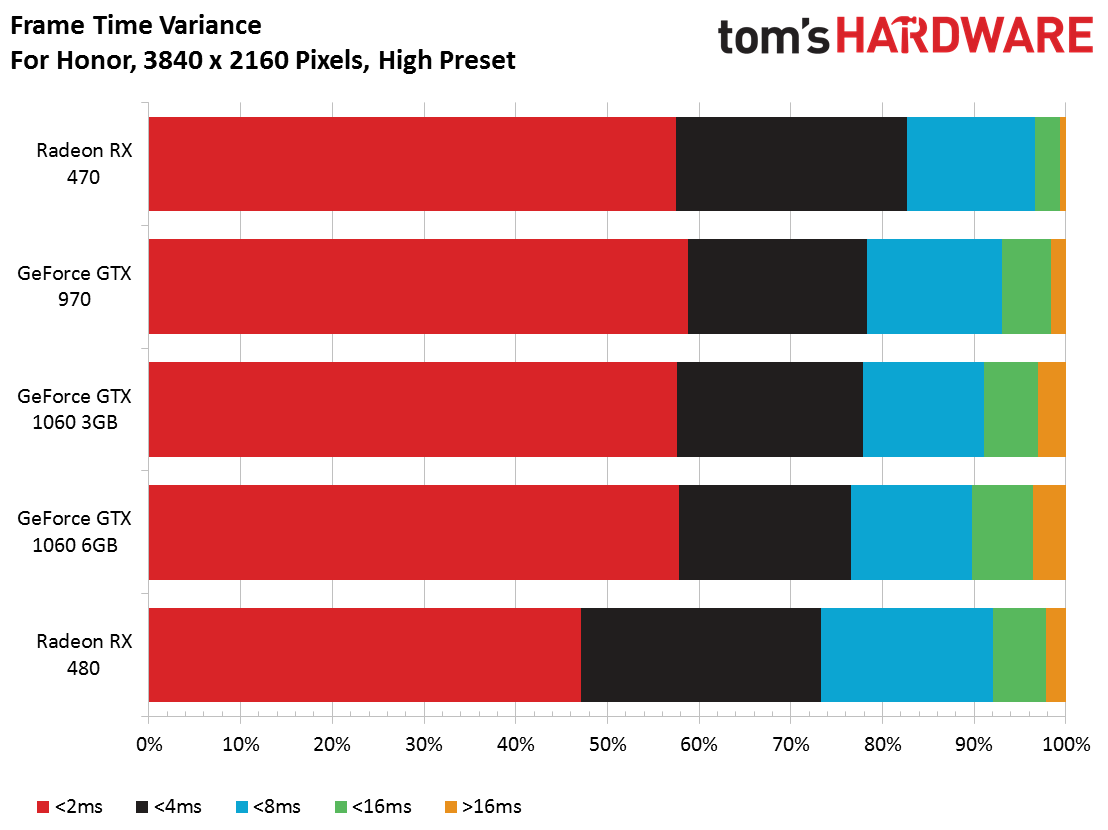

At 4K, only the Radeon RX 480 and GeForce GTX 1060 6GB average more than 30 FPS. But with minimums in the 20s, you're not going to enjoy this game or most others at such a high resolution with a mainstream graphics card. What's more, you wouldn't be able to play multiplayer mode, as the servers are supposed to boot players who drop below 30 FPS.

Once again, our Radeon R9 390 wouldn't run the test at this resolution.

Sakkura: While it is nice to see how last-gen cards like the 970 and 390 compare to current-gen cards, I think it would have been nice to see cards outside this performance category tested. How do the RX 460 and GTX 1050 do at 1080p High, for example?

coolitic: I find it incredibly stupid that Toms doesn't make the obvious point that TAA is the reason why it looks blurry for Nvidia.

alextheblue:

> coolitic said: I find it incredibly stupid that Toms doesn't make the obvious point that TAA is the reason why it looks blurry for Nvidia.

They had it enabled on both cards. Are you telling me Nvidia's TAA implementation is inferior? I'd believe you if you told me that, but you have to use your words.

irish_adam:

> coolitic said: I find it incredibly stupid that Toms doesn't make the obvious point that TAA is the reason why it looks blurry for Nvidia.

As has been stated, settings were the same for both cards. Maybe Nvidia is sacrificing detail for the higher FPS score? I also found it interesting that Nvidia used more system resources than AMD. I was confused why the 3GB version of the 1060 did so poorly considering the lack of VRAM usage, but after looking it up, Nvidia gimped the card. It seems a bit misleading for them to both be called the 1060. If you didn't look it up, you would assume they are the same graphics chip with just differing amounts of RAM.

anthony8989: ^ Yeah, it's a pretty shifty move. Probably marketing related. The 1060 3 GB and 6 GB have a similar relationship to the GTX 660 and 660 Ti from a few gens back. Only they were nice enough to differentiate the two with the Ti moniker. Not so much this time.

Martell1977:

> irish_adam said: I was confused why the 3GB version of the 1060 did so poorly considering the lack vram usage but after looking it up nvidia gimped the card, seems a bit misleading for them to both be called the 1060, if you didnt look it up you would assume they are the same graphics chip with just differing amounts of ram.

I've been calling this a dirty trick since release, and it is part of the reason I recommend people get the 480 4GB instead. Just a shady move on Nvidia's part, but they are known for such things.

Odd thing though, the 1060 3GB in laptops doesn't have a cut-down chip. It has all its cores enabled and is just running lower clocks and VRAM.