For Honor Performance Review

The Game, Graphics Engine, and Settings

For Honor, developed by Ubisoft Montreal, just launched for the PS4, Xbox One, and PC. The game is a mix of third-person combat and action-strategy. We're not here to talk about how you play it, though. Rather, we're digging into how it runs on your PC.

Behind the knights, vikings, and samurai is the AnvilNext 2.0 engine, which first surfaced in 2014 as the foundation for Assassin's Creed Unity. Although the engine is more advanced than its predecessor, AnvilNext, you're still limited to DirectX 11 API support. Does that negatively affect what the developers could do with For Honor? We don't think so.

Minimum and Recommended Hardware Requirements

Despite its attractive graphics, For Honor's hardware requirements are quite reasonable. In theory, it should be possible to play this title with a mid-range machine built four or five years ago.

| System Requirements | Minimum | Recommended |
|---|---|---|
| Processor | Core i3-550 or Phenom II X4 955 | Core i5-2500K or FX-6350 |
| Memory | 4GB | 8GB |
| Graphics Card | GeForce GTX 660/750Ti or Radeon HD 6970/7870 (2GB minimum) | GeForce GTX 680/760 or Radeon R9 280X/380 (2GB minimum) |
| Operating System | Windows 7, 8.1, 10 (64-bit only) | Windows 7, 8.1, 10 (64-bit only) |
| Disk Space | 40GB | 40GB |
| Audio | DirectSound-compatible | DirectSound-compatible |
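For readers who want to sanity-check a machine against the table, here is a minimal sketch in Python. It only compares the capacity figures listed above (memory, graphics memory, and disk space). The `Specs` class and the `tier` function are hypothetical names made up for this illustration, and the sketch deliberately ignores CPU and GPU model, which matter at least as much in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Specs:
    """Capacity figures only; CPU/GPU model class is not modeled here."""
    ram_gb: float
    vram_gb: float
    free_disk_gb: float


# Values copied from the requirements table above.
MINIMUM = Specs(ram_gb=4, vram_gb=2, free_disk_gb=40)
RECOMMENDED = Specs(ram_gb=8, vram_gb=2, free_disk_gb=40)


def meets(machine: Specs, target: Specs) -> bool:
    """True if every capacity on the machine is at least the target's."""
    return (
        machine.ram_gb >= target.ram_gb
        and machine.vram_gb >= target.vram_gb
        and machine.free_disk_gb >= target.free_disk_gb
    )


def tier(machine: Specs) -> str:
    """Classify a machine as 'recommended', 'minimum', or 'below minimum'."""
    if meets(machine, RECOMMENDED):
        return "recommended"
    if meets(machine, MINIMUM):
        return "minimum"
    return "below minimum"


if __name__ == "__main__":
    # An example machine with 8GB RAM, a 2GB card, and 120GB free.
    print(tier(Specs(ram_gb=8, vram_gb=2, free_disk_gb=120)))
```

Passing the capacity check is necessary but not sufficient: a system with 8GB of memory and a 2GB card older than those listed could still fall short.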

Radeon vs GeForce

On one hand, For Honor is a multi-platform game, and therefore optimized for AMD's Graphics Core Next architecture used in consoles. On the other, it's an Nvidia "The Way It's Meant to Be Played" title. Are there differences, then, in rendering quality between GeForce and Radeon cards at the same detail settings?

Whether you look at the flag on the left, the windows, the climbing vines, or the grass, Nvidia's picture appears blurrier and less crisp than AMD's.

The same observation applies to this image, particularly when you focus on the chain and castle wall textures. Nvidia renders a softer image, while the Radeon's picture is sharper (though it almost appears grainy as a result). We checked several times: the graphics settings are identical between test platforms (16x anisotropic texture filtering and TAA).

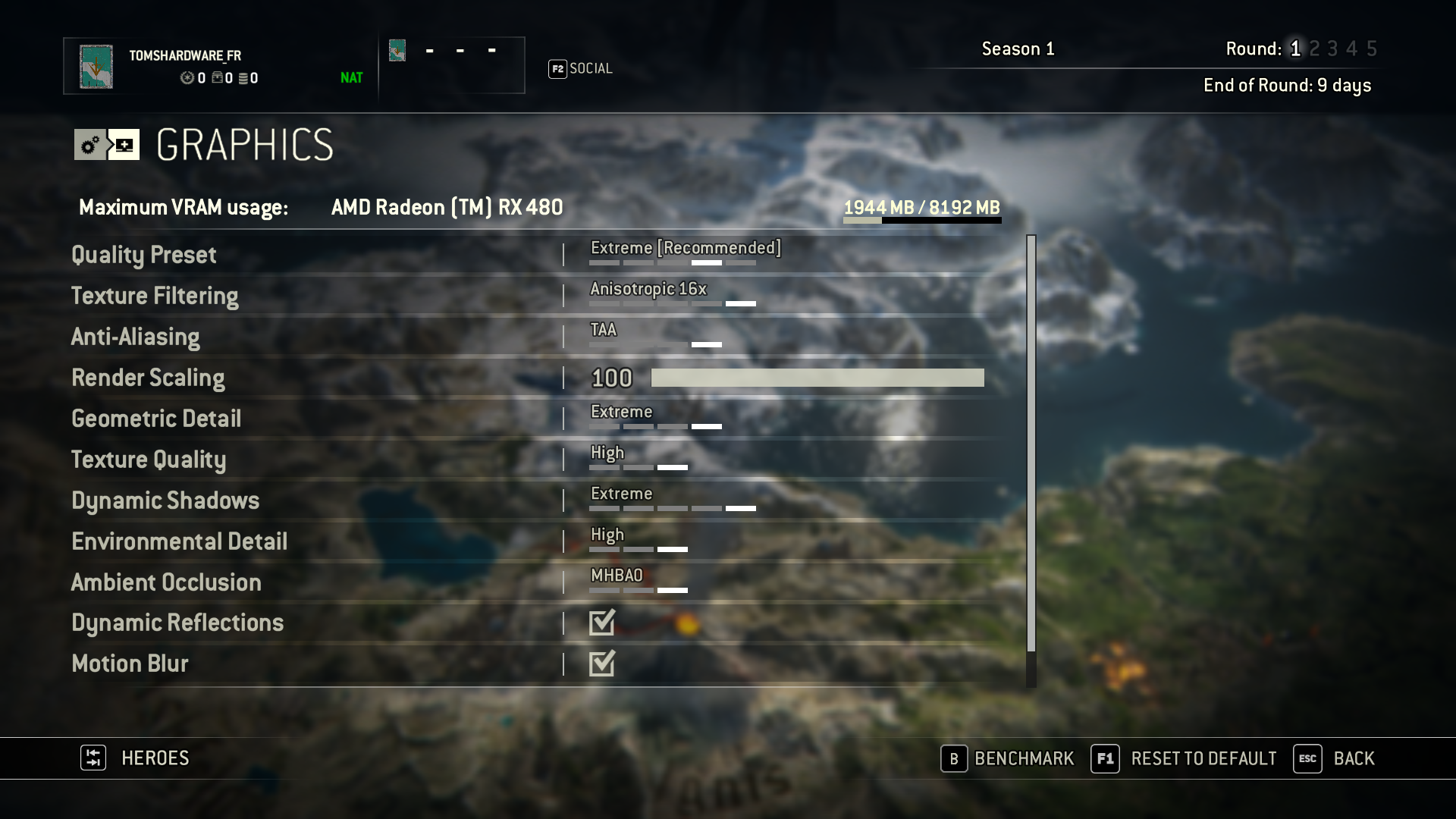
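The comparison above is made by eye. One common way to put a rough number on "softer" versus "sharper" is the variance of a Laplacian filter over the grayscale image: more fine detail tends to produce a higher value. The sketch below is an illustration in plain Python, not the method used for this review. The function names and the synthetic checkerboard are assumptions made up for the example, and real screenshots would first need to be loaded and converted to grayscale with an image library.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian over the interior of a 2D grid.

    `gray` is a list of equal-length rows of luminance values.
    Higher results suggest more high-frequency detail (a crisper image).
    """
    height, width = len(gray), len(gray[0])
    if height < 3 or width < 3:
        raise ValueError("need at least a 3x3 image")
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    return pvariance(responses)


def box_blur(gray):
    """3x3 box blur of interior pixels; border pixels are copied unchanged."""
    height, width = len(gray), len(gray[0])
    out = [row[:] for row in gray]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            window = [
                gray[y + dy][x + dx]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            out[y][x] = sum(window) / 9
    return out


# Synthetic stand-ins for two captures of the same scene:
# a hard-edged checkerboard and a blurred copy of it.
sharp = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
soft = box_blur(sharp)

if __name__ == "__main__":
    print("sharp:", laplacian_variance(sharp))
    print("soft: ", laplacian_variance(soft))
```

A metric like this only makes sense when both captures show the same frame from the same camera position. It also rewards noise, so a grainy image can score higher without actually containing more detail.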

Graphics Settings

Aside from the always-important FOV and resolution options, we get a texture filtering setting (trilinear or anisotropic), several anti-aliasing modes (FXAA, SMAA, or TAA), texture quality, dynamic reflections, and even ambient occlusion (HBAO+ or MHBAO). If you don't feel like experimenting with each knob and dial, there are also four quality presets.


Ansel

Nvidia Ansel support makes it possible to freeze the camera in For Honor and move around freely within the scene. From there, you can compose the perfect screenshot.

Ansel's configurable capture resolution allows far more detail than 1080p, or even 4K, and there are options to capture 360-degree images as well. For those enthusiasts who increasingly stop and marvel at how far graphics have come, Ansel can be a lot of fun to play with.


Sakkura While it is nice to see how last-gen cards like the 970 and 390 compare to current-gen cards, I think it would have been nice to see cards outside this performance category tested. How do the RX 460 and GTX 1050 do at 1080p High, for example?

coolitic I find it incredibly stupid that Toms doesn't make the obvious point that TAA is the reason why it looks blurry for Nvidia.

alextheblue

19351221 said: I find it incredibly stupid that Toms doesn't make the obvious point that TAA is the reason why it looks blurry for Nvidia.

They had it enabled on both cards. Are you telling me Nvidia's TAA implementation is inferior? I'd believe you if you told me that, but you have to use your words.

irish_adam

19351221 said: I find it incredibly stupid that Toms doesn't make the obvious point that TAA is the reason why it looks blurry for Nvidia.

As has been stated, settings were the same for both cards. Maybe Nvidia is sacrificing detail for the higher FPS score? I also found it interesting that Nvidia used more system resources than AMD. I was confused why the 3GB version of the 1060 did so poorly considering the lack of VRAM usage, but after looking it up, Nvidia gimped the card. It seems a bit misleading for them to both be called the 1060; if you didn't look it up, you would assume they are the same graphics chip with just differing amounts of RAM.

anthony8989 ^ yeah it's a pretty shifty move. Probably marketing related. The 1060 3GB and 6GB have a similar relationship to the GTX 660 and 660 Ti from a few gens back. Only they were nice enough to differentiate the two with the Ti moniker. Not so much this time.

Martell1977

19352376 said: I was confused why the 3GB version of the 1060 did so poorly considering the lack of VRAM usage, but after looking it up, Nvidia gimped the card. It seems a bit misleading for them to both be called the 1060; if you didn't look it up, you would assume they are the same graphics chip with just differing amounts of RAM.

I've been calling this a dirty trick since release, and it is part of the reason I recommend people get the 480 4GB instead. Just a shady move on Nvidia's part, but they are known for such things.

Odd thing though, the 1060 3GB in laptops doesn't have a cut-down chip; it has all its cores enabled and is just running lower clocks and VRAM.