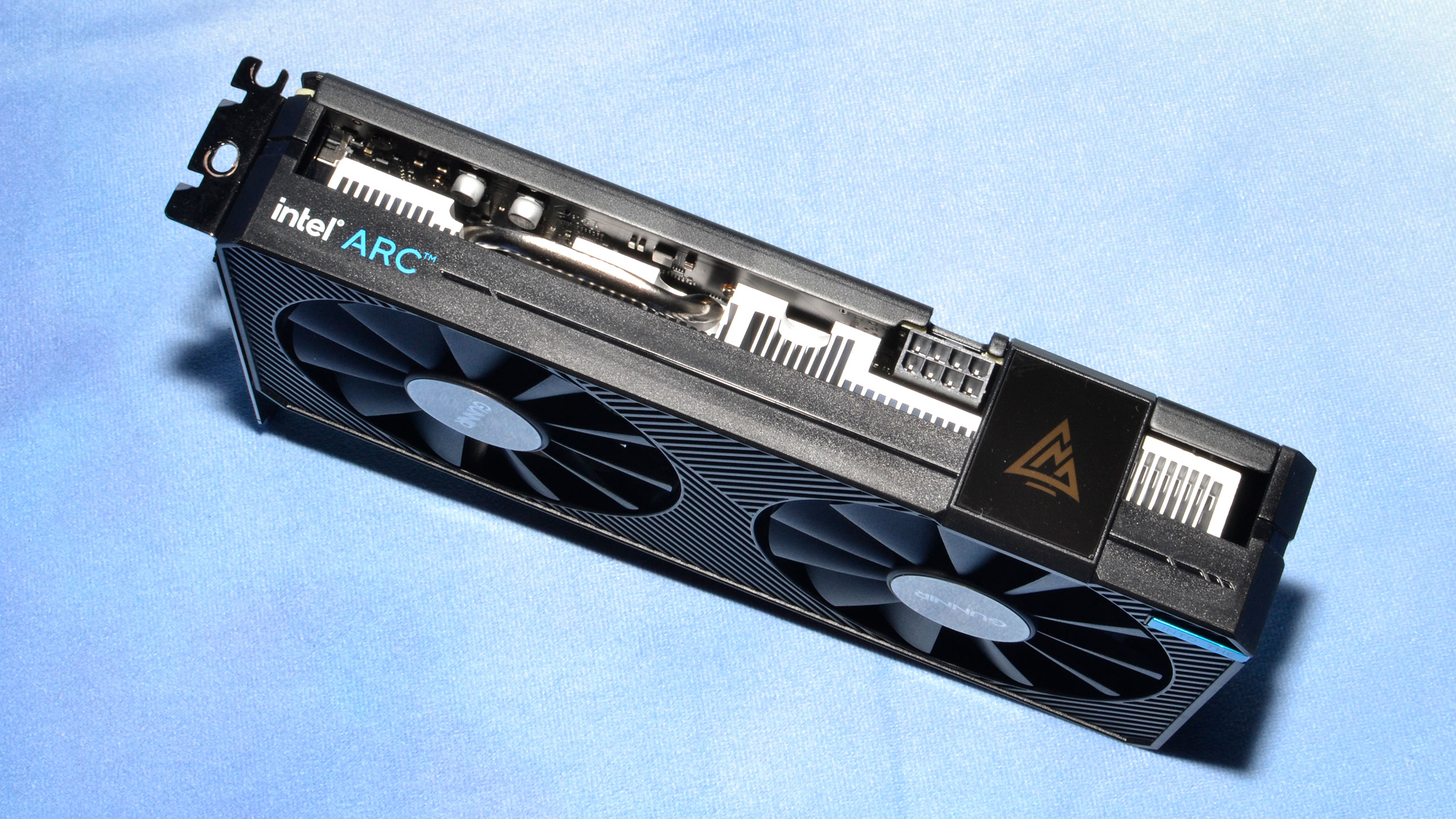

Gunnir isn't a brand we've reviewed before, though the company name has come up quite a bit, both with the previous Intel DG1 graphics cards and with the Arc A380. The Photon OC card comes with a modest factory overclock to 2450 MHz, and the power draw is slightly higher at 92W.

The Photon OC has two 90mm fans, with card dimensions of 222 x 114 x 42mm — slightly thicker than a traditional 2-slot design. The card is small enough to fit in most mini-ITX cases, and in testing it wasn't terribly loud. It's not particularly heavy, though, weighing 690g, with a milled aluminum heatsink. Gunnir seems to compensate for the lower quality heatsink by spinning the fans faster under load.

RGB lighting is limited, with the only concession being the Arc logo on the top of the card, which lights up. It's blue by default, but apparently will turn yellow or red if it detects problems with the PCIe power, so it's not really an RGB setup, though perhaps future software could change that.

The cooling and design seem a bit overkill, even with the higher power and clock settings. The Photon has an 8-pin power connector, which combined with the PCIe slot power can provide up to 225W while remaining in spec. That's way more than the card should ever see in practical use, and impractical use cases like liquid nitrogen don't make much sense with an otherwise budget GPU.
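As a rough sanity check on that 225W figure, the arithmetic can be sketched in a few lines of Python. The 75W slot and 150W 8-pin limits are the standard PCIe figures, the 92W value is the Photon OC's rated power from above, and the names (`power_budget`, `headroom_w`) are our own placeholders rather than anything from Gunnir or Intel.

```python
# Rough power-budget check for a card with one 8-pin connector.
# Assumption: standard PCIe limits of 75W (x16 slot) and 150W (8-pin).
PCIE_SLOT_W = 75      # maximum power the PCIe x16 slot may deliver
EIGHT_PIN_W = 150     # maximum power for one 8-pin PCIe connector
CARD_POWER_W = 92     # Photon OC rated power draw, from the review text


def power_budget(slot_w, connector_watts):
    """Return the in-spec power limit: slot power plus all aux connectors."""
    return slot_w + sum(connector_watts)


budget_w = power_budget(PCIE_SLOT_W, [EIGHT_PIN_W])
headroom_w = budget_w - CARD_POWER_W
utilization = CARD_POWER_W / budget_w

print(f"In-spec budget: {budget_w}W")
print(f"Headroom over rated draw: {headroom_w}W")
print(f"Share of budget used: {utilization:.0%}")
```

By this estimate the card's rated draw uses roughly 41% of what its connectors allow, leaving about 133W of headroom.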

Video outputs consist of the typical triple DisplayPort and single HDMI connectivity. However, Intel is the first GPU manufacturer out of the gate with DisplayPort 2.0 support. We don't even have DP 2.0 monitors to use with the card yet, but the card should be able to drive up to 8K at 60 Hz without the use of DSC (Display Stream Compression). It should also be possible to use MST (Multi Stream Transport) with an adapter to drive three 4K 60 Hz displays off a single port, meaning there's potential to have up to ten displays powered by a single A380 card. We couldn't test that use case, sadly.
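The ten-display figure is just port arithmetic, which a short Python sketch makes explicit. It assumes, as described above, that each DisplayPort output can feed three displays through an MST adapter and that the HDMI port drives one; `max_displays` is a hypothetical helper, and we haven't verified this configuration on real hardware.

```python
# Theoretical display count for the A380's output configuration.
# Assumption: every DisplayPort output fans out to three displays via MST,
# and the single HDMI output drives exactly one display.
DP_PORTS = 3
HDMI_PORTS = 1
MST_DISPLAYS_PER_DP = 3


def max_displays(dp_ports, hdmi_ports, mst_per_dp):
    """Return the theoretical number of displays across all outputs."""
    return dp_ports * mst_per_dp + hdmi_ports


total_displays = max_displays(DP_PORTS, HDMI_PORTS, MST_DISPLAYS_PER_DP)
print(f"Theoretical maximum displays: {total_displays}")
```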

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

cyrusfox Thanks for putting up a review on this. I really am looking for Adobe Suite performance, Photoshop and Lightroom. My experience is that even with a top-of-the-line CPU (12900K) it chugs through some GPU-heavy tasks, and I was hoping Arc might already be optimized for that.

brandonjclark While it's pretty much what I expected, remember that Intel has DEEP DEEP pockets. If they stick with this division they'll work it out and pretty soon we'll have 3 serious competitors.

Giroro What settings were used for the CPU comparison encodes? I would think that the CPU encode should always be able to provide the highest quality, but possibly with unacceptable performance.

I'm also having a hard time reading the charts. Is the GTX 1650 the dashed hollow blue line, or the solid hollow blue line?

A good encoder at the lowest price is not a bad option for me to have. Although, I don't have much faith that Intel will get their drivers in a good enough state before the next generation of GPUs.

JarredWaltonGPU

> Giroro said:
> What settings were used for the CPU comparison encodes? I would think that the CPU encode should always be able to provide the highest quality, but possibly with unacceptable performance.
>
> I'm also having a hard time reading the charts. Is the GTX 1650 the dashed hollow blue line, or the solid hollow blue line?
>
> A good encoder at the lowest price is not a bad option for me to have. Although, I don't have much faith that Intel will get their drivers in a good enough state before the next generation of GPUs.

Are you viewing on a phone or a PC? Because I know our mobile experience can be... lacking, especially for data dense charts. On PC, you can click the arrow in the bottom-right to get the full-size charts, or at least get a larger view, where you can then click the "view original" option in the bottom-right. Here are the four line charts, in full resolution, if that helps:

- https://cdn.mos.cms.futurecdn.net/dVSjCCgGHPoBrgScHU36vM.png
- https://cdn.mos.cms.futurecdn.net/hGy9QffWHov4rY6XwKQTmM.png
- https://cdn.mos.cms.futurecdn.net/d2zv239egLP9dwfKPSDh5N.png
- https://cdn.mos.cms.futurecdn.net/PGkuG8uq25fNU7o7M8GbEN.png

The GTX 1650 is a hollow dark blue dashed line. The AMD GPU is the hollow solid line, CPU is dots, A380 is solid filled line, and Nvidia RTX 3090 Ti (or really, Turing encoder) is solid dashes. I had to switch to dashes and dots and such because the colors (for 12 lines in one chart) were also difficult to distinguish from each other, and I included the tables of the raw data just to help clarify what the various scores were if the lines still weren't entirely sensible. LOL

As for the CPU encoding, it was done with the same constraints as the GPU: single pass and the specified bitrate, which is generally how you would set things up for streaming (AFAIK, because I'm not really a streamer). 2-pass encoding can greatly improve quality, but of course it takes about twice as long and can't be done with livestreaming. I did not look into other options that might improve the quality at the cost of CPU encoding time, and I also didn't check whether there were other options that could improve the GPU encoding quality.

> cyrusfox said:
> Thanks for putting up a review on this. I really am looking for Adobe Suite performance, Photoshop and Lightroom. My experience is that even with a top-of-the-line CPU (12900K) it chugs through some GPU-heavy tasks, and I was hoping Arc might already be optimized for that.

I suspect Arc won't help much at all with Photoshop or Lightroom compared to whatever GPU you're currently using (unless you're using integrated graphics I suppose). Adobe's CC apps have GPU accelerated functions for certain tasks, but complex stuff still chugs pretty badly in my experience. If you want to export to AV1, though, I think there's a way to get that into Premiere Pro and the Arc could greatly increase the encoding speed.

magbarn Wow, 50% larger die size (much more expensive for Intel vs. AMD) and performs much worse than the 6500XT. Stick a fork in Arc, it's done.

Giroro

> JarredWaltonGPU said:
> Are you viewing on a phone or a PC? Because I know our mobile experience can be... lacking, especially for data dense charts

I'm viewing on PC, just the graph legend shows a very similar blue oval for both cards.

JarredWaltonGPU

> magbarn said:
> Wow, 50% larger die size (much more expensive for Intel vs. AMD) and performs much worse than the 6500XT. Stick a fork in Arc, it's done.

Much of the die size probably gets taken up by XMX cores, QuickSync, DisplayPort 2.0, etc. But yeah, it doesn't seem particularly small considering the performance. I can't help but think with fully optimized drivers, performance could improve another 25%, but who knows if we'll ever get such drivers?

waltc3 Considering what you had to work with, I thought this was a decent GPU review. Just a few points that occurred to me while reading...

I wouldn't be surprised to see Intel once again take its marbles and go home and pull the ARCs altogether, as Intel did decades back with its ill-fated acquisition of Real3D. They are probably hoping to push it at a loss at retail to get some of their money back, but I think they will be disappointed when that doesn't happen. As far as another competitor in the GPU markets goes, yes, having a solid competitor come in would be a good thing, indeed, but only if the product meant to compete actually competes. This one does not. ATi/AMD have decades of experience in the designing and manufacturing of GPUs, as does nVidia, and in the software they require, and the thought that Intel could immediately equal either company's products enough to compete--even after five years of R&D on ARC--doesn't seem particularly sound, to me. So I'm not surprised, as it's exactly what I thought it would amount to.

I wondered why you didn't test with an AMD CPU...was that a condition set by Intel for the review? Not that it matters, but it seems silly, and I wonder if it would have made a difference of some kind. I thought the review was fine as far as it goes, but one thing that I felt was unnecessarily confusing was the comparison of the A380 in "ray tracing" with much more expensive nVidia solutions. You started off restricting the A380 to the 1650/Super, which doesn't ray trace at all, and the entry level AMD GPUs which do (but not to any desirable degree, imo)--which was fine as they are very closely priced. But then you went off on a tangent with 3060's 3050's, 2080's, etc. because of "ray tracing"--which I cannot believe the A380 is any good at doing at all.

The only thing I can say that might be a little illuminating is that Intel can call its cores and rt hardware whatever it wants to call them, but what matters is the image quality and the performance at the end of the day. I think Intel used the term "tensor core" to make it appear to be using "tensor cores" like those in the RTX 2000/3000 series, when they are not the identical tensor cores at all...;) I was glad to see the notation because it demonstrates that anyone can make his own "tensor core" as "tensor" is just math. I do appreciate Intel doing this because it draws attention to the fact that "tensor cores" are not unique to nVidia, and that anyone can make them, actually--and call them anything they want--like for instance "raytrace cores"...;)

JarredWaltonGPU

> waltc3 said:
> I wouldn't be surprised to see Intel once again take its marbles and go home and pull the ARCs altogether, as Intel did decades back with its ill-fated acquisition of Real3D. They are probably hoping to push it at a loss at retail to get some of their money back, but I think they will be disappointed when that doesn't happen. As far as another competitor in the GPU markets goes, yes, having a solid competitor come in would be a good thing, indeed, but only if the product meant to compete actually competes. This one does not. ATi/AMD have decades of experience in the designing and manufacturing of GPUs, as does nVidia, and in the software they require, and the thought that Intel could immediately equal either company's products enough to compete--even after five years of R&D on ARC--doesn't seem particularly sound, to me. So I'm not surprised, as it's exactly what I thought it would amount to.
>
> I wondered why you didn't test with an AMD CPU...was that a condition set by Intel for the review? Not that it matters, but it seems silly, and I wonder if it would have made a difference of some kind. I thought the review was fine as far as it goes, but one thing that I felt was unnecessarily confusing was the comparison of the A380 in "ray tracing" with much more expensive nVidia solutions. You started off restricting the A380 to the 1650/Super, which doesn't ray trace at all, and the entry level AMD GPUs which do (but not to any desirable degree, imo)--which was fine as they are very closely priced. But then you went off on a tangent with 3060's 3050's, 2080's, etc. because of "ray tracing"--which I cannot believe the A380 is any good at doing at all.

Intel seems committed to doing dedicated GPUs, and it makes sense. The data center and supercomputer markets all basically use GPU-like hardware. Battlemage is supposedly well underway in development, and if Intel can iterate and get the cards out next year, with better drivers, things could get a lot more interesting. It might lose billions on Arc Alchemist, but if it can pave the way for future GPUs that end up in supercomputers in five years, that will ultimately be a big win for Intel. It could have tried to make something less GPU-like and just gone for straight compute, but then porting existing GPU programs to the design would have been more difficult, and Intel might actually (maybe) think graphics is becoming important.

Intel set no conditions on the review. We purchased this card, via a go-between, from China, for WAY more than the card is worth, and then it took nearly two months to get things sorted out and have the card arrive. That sucked. If you read the ray tracing section, you'll see why I did the comparison. It's not great, but it matches an RX 6500 XT and perhaps indicates Intel's RTUs are better than AMD's Ray Accelerators, and maybe even better than Nvidia's Ampere RT cores, except Nvidia has a lot more RT cores than Arc has RTUs. I restricted testing to cards priced similarly, plus the next step up, which is why the RTX 2060/3050 and RX 6600 are included.

> The only thing I can say that might be a little illuminating is that Intel can call its cores and rt hardware whatever it wants to call them, but what matters is the image quality and the performance at the end of the day. I think Intel used the term "tensor core" to make it appear to be using "tensor cores" like those in the RTX 2000/3000 series, when they are not the identical tensor cores at all...;) I was glad to see the notation because it demonstrates that anyone can make his own "tensor core" as "tensor" is just math. I do appreciate Intel doing this because it draws attention to the fact that "tensor cores" are not unique to nVidia, and that anyone can make them, actually--and call them anything they want--like for instance "raytrace cores"...;)

Tensor cores refer to a specific type of hardware matrix unit. Google has TPUs, and various other companies are also making tensor core-like hardware. TensorFlow is a popular tool for AI workloads, which is why the "tensor cores" name came into being AFAIK. Intel calls them Xe Matrix Engines, but the same principles apply: lots of matrix math, focusing especially on multiply and accumulate as that's what AI training tends to use. But tensor cores have literally nothing to do with "raytrace cores," which need to take DirectX Raytracing structures (or VulkanRT) to be at all useful.

escksu The ray tracing shows good promise. The video encoder is the best. 3D performance is meh but still good enough for light gaming.

If its retail price is indeed what it shows, then I believe it will sell. Of course, Intel won't make much (if any) from these cards.