Meet Moorestown: Intel's Atom Platform For The Next 10 Billion Devices

Intel’s second-gen Atom platform, Moorestown, positions the chip giant to have a killer smartphone and MID platform in 2010. The old Atom Z5xx drawbacks seem fixed. Why does Moorestown rock, and will it be enough to let Intel advance in this market?

Processor Power

Intel likes to refer to Lincroft as having an “ultra-low-power,” or ULP, processing core. I’m not going to veer off into the basics of Atom microarchitecture or the differences in how it processes data compared to higher-end Intel chips. We’ve covered that business before. For now, I’ll simply note that Lincroft is a single-core part that uses Hyper-Threading to present two logical processors to the operating system. The chip supports 64-bit code and uses the same Intel Virtualization Technology (VT) as the Core 2 Duo. Lincroft features a 24K data cache and a 32K instruction cache at the L1 level. There’s 512K of L2 cache and no L3. As we discuss power and performance from here on, know that we’re talking about a 1.9 GHz Lincroft part. To the best of my knowledge, this will be the top of the Z6xx stack in the near term.

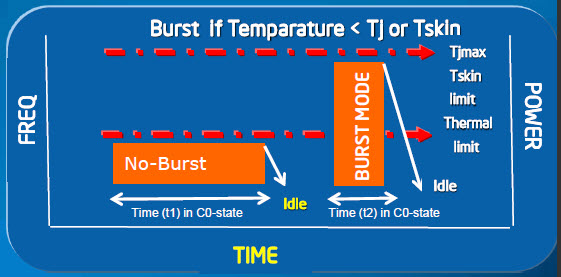

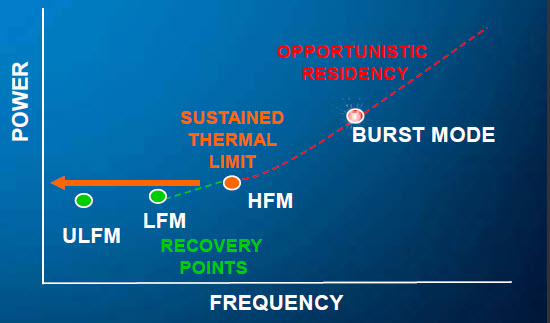

Now, it’s important to add that the 1.9 GHz clock is a “burst mode” rate, and that needs some explanation. There are four primary power states in Lincroft: Ultra-Low-Frequency Mode (ULFM), Low-Frequency Mode (LFM), High-Frequency Mode (HFM), and Burst Mode. The Lincroft model that specs 1.9 GHz is likely to spend a lot of its operational time in a 200 MHz ULFM.

Intel Burst Performance Technology (BPT) is a bit like the Turbo Boost we’ve seen implemented on the desktop in that it provides on-demand performance when needed, and when power and performance profiles will accommodate it. In the graph shown below, you can see that the HFM is the “sustained thermal limit,” meaning the actual TDP. At no time can the platform exceed its CPU thermal junction (Tj) or external chassis (Tskin) temperature limits as measured by thermal monitors. If these are exceeded, the platform will throttle back to the LFM or ULFM “recovery points” to cool off and then remain within the HFM threshold until enough headroom reappears for another burst. Naturally, these transitions all happen within fractions of a second.
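The throttle-and-recover behavior described above can be pictured as a small state machine. The Python sketch below is purely illustrative and is not Intel's algorithm: the temperature limits, the headroom margin, and the `next_state` function are all hypothetical, and picking LFM rather than ULFM as the recovery point is an arbitrary assumption.

```python
from enum import Enum


class PowerState(Enum):
    ULFM = "ultra-low-frequency mode"
    LFM = "low-frequency mode"
    HFM = "high-frequency mode"
    BURST = "burst mode"


# Hypothetical limits, chosen only for illustration (not Intel figures).
TJ_LIMIT_C = 90.0        # assumed CPU junction temperature limit
TSKIN_LIMIT_C = 45.0     # assumed chassis skin temperature limit
BURST_HEADROOM_C = 5.0   # assumed margin below each limit needed to burst


def next_state(tj_c, tskin_c, demand_high):
    """Pick the next power state from two thermal readings and CPU demand."""
    # At or over either limit: throttle to a recovery point to cool off.
    if tj_c >= TJ_LIMIT_C or tskin_c >= TSKIN_LIMIT_C:
        return PowerState.LFM
    # Little to do: sit in the lowest-frequency state.
    if not demand_high:
        return PowerState.ULFM
    # Busy with thermal headroom on both sensors: burst.
    has_headroom = (
        tj_c <= TJ_LIMIT_C - BURST_HEADROOM_C
        and tskin_c <= TSKIN_LIMIT_C - BURST_HEADROOM_C
    )
    if has_headroom:
        return PowerState.BURST
    # Busy but close to a limit: stay at the sustained (HFM) level.
    return PowerState.HFM


if __name__ == "__main__":
    for reading in [(60.0, 35.0, True), (88.0, 35.0, True), (95.0, 35.0, True)]:
        print(reading, next_state(*reading).name)
```

Real hardware would make these decisions continuously and far faster than any software loop; the sketch only captures the ordering of the checks (thermal limits first, then demand, then headroom).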

With Turbo Boost, there’s a defined, guaranteed frequency and a specific set limit. When X number of cores go idle, you know the remaining cores will jump to Y frequency, though the BIOS doesn’t know what that frequency is. With Burst Mode, by contrast, frequencies are governed by the BIOS. In fact, as Intel puts it, “Burst Mode frequencies [can be] enumerated as P-states” by the BIOS, and multiple Burst Mode exit policies can be defined.

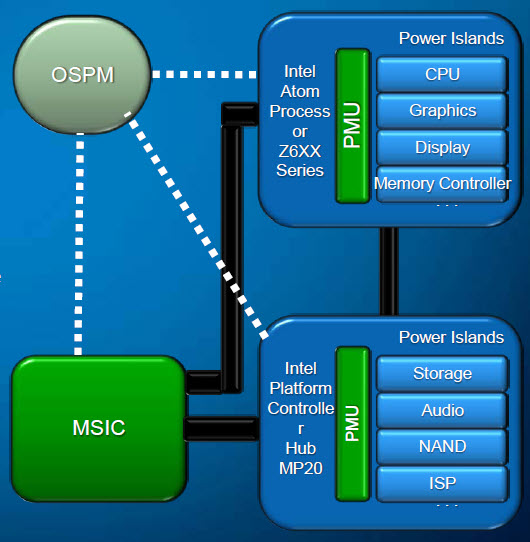

Another facet of Burst Mode is that it supports “race-to-halt” power profiles as driven by Operating System-directed Power Management (OSPM). Race-to-halt reflects the same concept found in server computing environments: the object of the game is to blast through work as quickly as possible in order to revert to a low-power idle state. While the burst uses a high-power mode, total work in CPU-bound loads gets finished in less time than if the load were to run at a “normal” speed at a standard power level, and thus net energy gets saved. OSPM functionality is directed through drivers and now supports power-down modes while the device is still active.
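The race-to-halt argument comes down to simple arithmetic: energy is power multiplied by time, summed over the busy and idle portions of a fixed window. The sketch below works through one case in Python. Every figure in it (the cycle count, the wattages, the 0.95 GHz "normal" clock, and the two-second window) is an assumption made up for illustration, not a measured Moorestown number, and `energy_joules` is a hypothetical helper.

```python
def energy_joules(cycles, freq_hz, active_w, idle_w, window_s):
    """Energy used over a fixed window: run the work, then idle for the rest."""
    busy_s = cycles / freq_hz
    if busy_s > window_s:
        raise ValueError("work does not fit in the window")
    idle_s = window_s - busy_s
    return active_w * busy_s + idle_w * idle_s


# All values below are assumed for illustration only.
WORK_CYCLES = 1.9e9   # size of a CPU-bound task
WINDOW_S = 2.0        # time window being compared
IDLE_W = 0.05         # power drawn in the low-power idle state

# Burst: finish in 1 s at higher power, then idle for 1 s.
burst = energy_joules(WORK_CYCLES, 1.9e9, active_w=2.0, idle_w=IDLE_W, window_s=WINDOW_S)
# "Normal": run at half the clock and lower power for the full 2 s.
normal = energy_joules(WORK_CYCLES, 0.95e9, active_w=1.2, idle_w=IDLE_W, window_s=WINDOW_S)

print(f"burst:  {burst:.2f} J")
print(f"normal: {normal:.2f} J")
```

With these assumed numbers the burst run comes out ahead, but the result depends entirely on how steeply power rises with frequency and how little the idle state draws. Raise the assumed burst power far enough and the comparison reverses.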

OSPM works in conjunction with hardware-based power controls, acting in a sort of advisory role. Software sets power policies and constraints, but hardware ultimately does the fine-grained power management. As you might expect, power and performance needs will vary depending on the application being used, and part of OSPM involves leveraging middleware profiles based on common hardware and software activities. In the common event of multitasking, where different usage profiles might apply concurrently, hardware ultimately gets the last word.


yannifb: Huh, I wonder how this will compete with Bobcat, which supposedly will have 90% of desktop chip performance according to AMD.

descendency: Why isn't this a 32nm product yet? If your concern (which it would be with said devices) is power consumption, shrinking the die can only help...

Greg_77, quoting silverx75 ("Man, and the HTC Incredible just came out...."): Man, and I just got the HTC Incredible... ;)

And so the march of technology continues!

well we can only wait till amd gets their ULV chips out with their on die graphics so we can get a nice comparison.

williamvw, quoting descendency ("Why isn't this a 32nm product yet? If your concern (which it would be with said devices) is power consumption, shrinking the die can only help..."): Time to market. 45 nm was quicker for development and it accomplished what needed to get done at this time. That's the official answer. Unofficially, sure, we all know 32 nm will help, but this is business for consumers. Right or wrong, you don't play all of your cards right away.

seboj: I've only had time to read half the article so far, but I'm excited! Good stuff, good stuff.

burnley14: This is more exciting to me than the release of 6-core processors and the like because these advances produce tangible results for my daily use. Good work Intel!

ta152h: Do we really need x86 plaguing phones now? Good God, why didn't they use a more efficient instruction set for this? Compatibility isn't very important with the PC, since all the software will be new anyway.

I like the Atom, but not in this role. x86 adds inefficiencies that aren't balanced by a need for compatibility in this market.