Part 1: DirectX 11 Gaming And Multi-Core CPU Scaling

We test five theoretical Intel CPUs in 10 different DirectX 11-based games to determine what impact core count has on performance.


Interview With Ubisoft Massive & The Division

Ubisoft Massive developed its Snowdrop engine for The Division, which we’ve been using as a graphics benchmark for several months. We’ve seen the developer tease Snowdrop’s capabilities since 2013, and we know efficiency was at the forefront of its goals. But there’s not much technical detail out there about what the next-gen platform enables. With that in mind, Ubisoft Massive technical director Anders Holmquist spent some time answering our questions about his team’s work on The Division.

Tom’s Hardware: How does The Division’s Snowdrop engine utilize multi-core CPUs? Is there a limit to the number of cores it’s able to use?

Anders: The Snowdrop engine, and in particular The Division, is very heavily threaded. The systems in the game are built around a task scheduler with dependencies set for each task. So, as soon as all of the dependencies for a task are completed, that task will execute. This means we aren’t tied to a specific number of cores and can scale fairly fluidly.
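To make that description concrete, here is a minimal sketch of a dependency-driven task scheduler. It is an illustration only, not Snowdrop's code: the function name `run_task_graph`, the task names, and the use of a Python thread pool are all our own assumptions. It shows the one property Anders describes, namely that a task starts as soon as every task it depends on has finished, regardless of how many workers are available.

```python
from collections import defaultdict
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def run_task_graph(tasks, deps, workers=4):
    """Run each task as soon as all of its dependencies have completed.

    tasks: dict mapping a task name to a zero-argument callable.
    deps:  dict mapping a task name to the names it depends on.
    Returns the task names in the order they completed.
    (Hypothetical helper for illustration; not an engine API.)
    """
    # Number of unfinished dependencies per task.
    remaining = {name: len(deps.get(name, ())) for name in tasks}
    # Reverse edges: which tasks are waiting on a given task.
    dependents = defaultdict(list)
    for name, needs in deps.items():
        for need in needs:
            dependents[need].append(name)

    finished = []
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Tasks without dependencies can start immediately.
        running = {pool.submit(tasks[n]): n for n, c in remaining.items() if c == 0}
        while running:
            done, _ = wait(list(running), return_when=FIRST_COMPLETED)
            for future in done:
                name = running.pop(future)
                future.result()  # re-raise any exception from the task
                finished.append(name)
                # Release every task whose last dependency just finished.
                for child in dependents[name]:
                    remaining[child] -= 1
                    if remaining[child] == 0:
                        running[pool.submit(tasks[child])] = child

    if len(finished) != len(tasks):
        raise ValueError("dependency cycle or unknown dependency in task graph")
    return finished
```

Because the graph, not the worker count, decides what may run next, the same call works with `workers=2` or `workers=10`; extra workers only help when enough independent tasks are ready at the same time.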

Tom’s Hardware: So, how meaningful is it to support four or more cores if most gameplay is graphics-bound? What does a more sophisticated host processor get a Division enthusiast, and is it better to pursue IPC/clock rate or more parallelism?

Anders: We are fairly CPU-heavy, and on many configurations we are bound by the CPU, not by the GPU, so more cores is definitely meaningful. Having eight or more cores is certainly not necessary, but if the cores are there, we will use them.

Tom’s Hardware: The Division is one of the few titles out there with a built-in benchmark, which we as enthusiasts and reviewers do appreciate…so long as it’s representative of actual gameplay. Based on your experience with the game’s benchmark, is this the case?

Anders: It should be fairly representative.


Tom’s Hardware: The Division is a DX11-based title. How will low-level APIs like DX12 and Vulkan affect future games from Massive?

Anders: It’s not as DX11-based as one may think. Consoles allow even greater control over resources and CPU-GPU communication, and we take advantage of that. In DX11, some parts are forced to run single-threaded because that’s the only option, but in DX12 most of those limitations are gone and the PC can work more efficiently. This typically enables higher and more consistent performance as well as reductions in CPU utilization for the render threads.

Tom’s Hardware: Is there anything else you’d like to say about the technology supported by Snowdrop, graphics/processing capabilities that you’re excited about, or developments from Massive that PC enthusiasts should keep an eye out for?

Anders: We’re excited about HDR and 4K being on the rise. HDR really has to be seen in person for the difference to be appreciated.


The Division

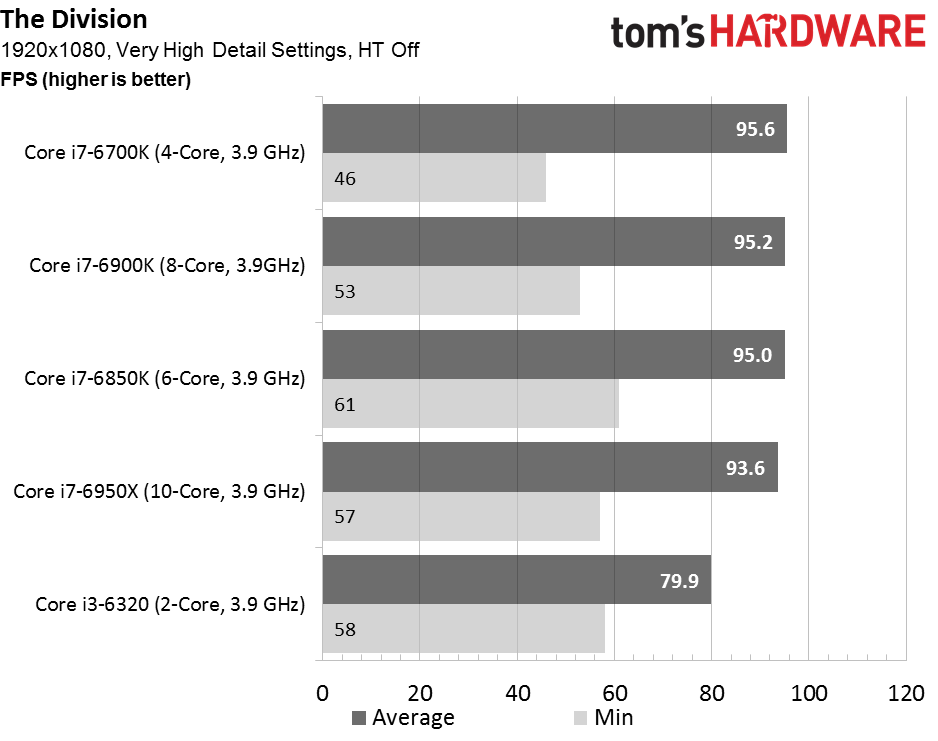

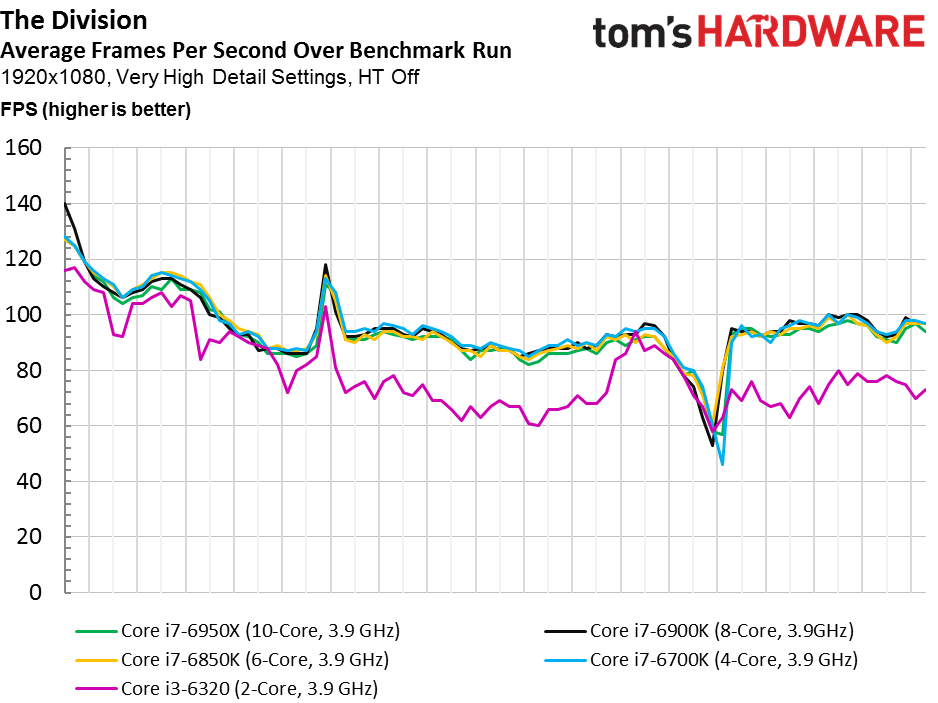

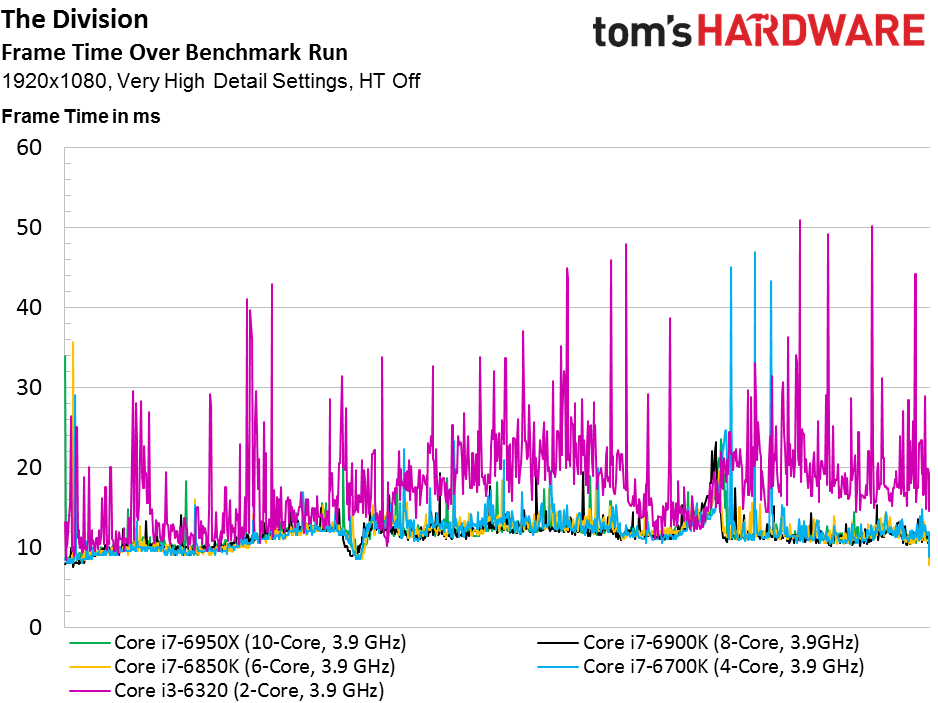

Despite Anders’ assertion that The Division is heavily threaded, there’s little observable benefit from anything over a quad-core configuration at 1920x1080. In fact, the Core i7-6700K averages the highest frame rate (though its minimum tellingly trails all three Broadwell-E CPUs with more cores). Perhaps we need to dial back clock rate to console-class performance to expose more of a difference. After all, the game and engine were both optimized for PC, Xbox One, and PS4.

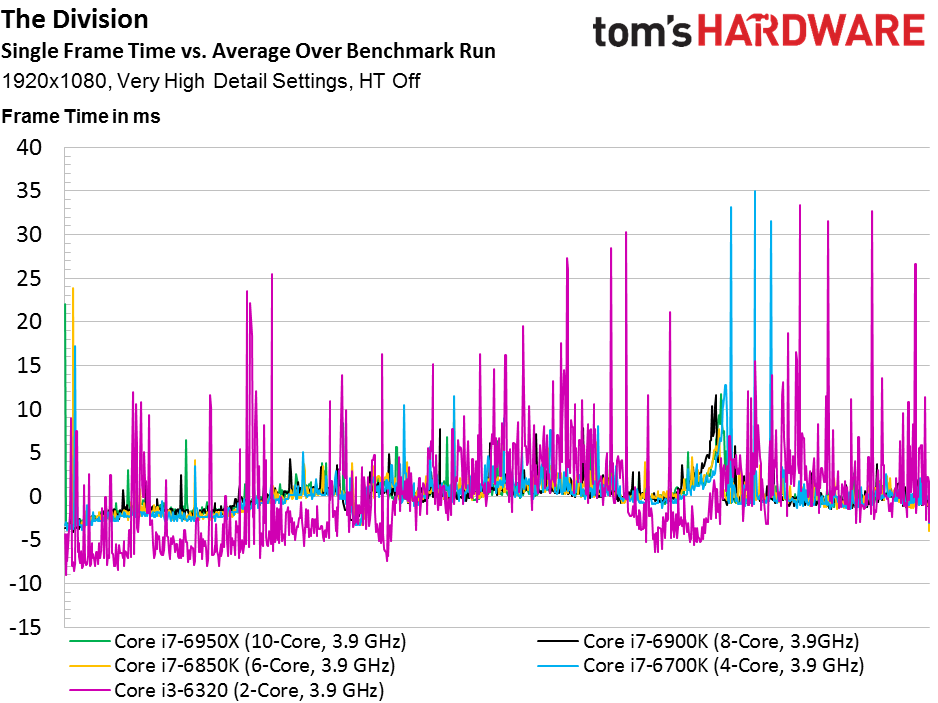

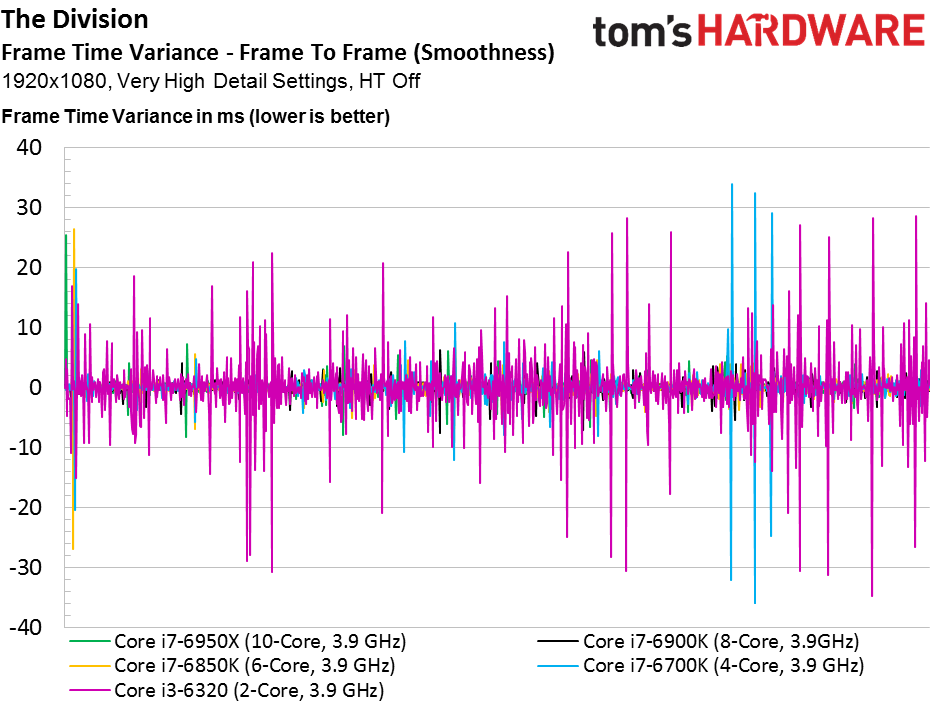

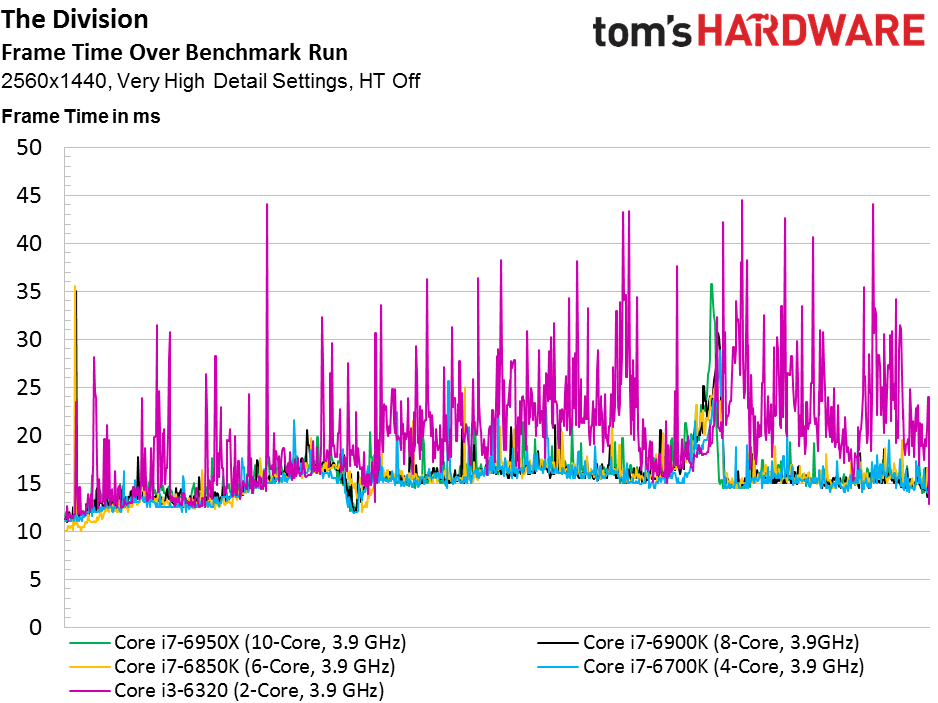

It does become clear that a dual-core processor falls short of satisfactory. Not only does our example track lower in frame rate over time, but it also exhibits sharp frame time variance spikes. Our four-core -6700K suffers a handful of spikes as well, but they’re far rarer.
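For readers who want to reproduce this kind of analysis from their own frame time logs, the sketch below shows one way to derive the metrics discussed here. It rests on assumptions we should state plainly: frame times are given in milliseconds, "variance" is taken to mean the absolute difference between consecutive frame times, the minimum frame rate is derived from the single slowest frame, and the 8 ms spike threshold is an arbitrary illustrative value. The function names are hypothetical, and the charts in this article may be computed differently.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average FPS, minimum FPS, frame-to-frame variance list).

    frame_times_ms: per-frame render times in milliseconds, in capture order.
    Variance is modeled here as |t[i+1] - t[i]| (an assumption, see lead-in).
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frames")
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds
    # The slowest single frame sets the instantaneous minimum frame rate.
    minimum_fps = 1000.0 / max(frame_times_ms)
    variance_ms = [
        abs(later - earlier)
        for earlier, later in zip(frame_times_ms, frame_times_ms[1:])
    ]
    return average_fps, minimum_fps, variance_ms


def count_spikes(variance_ms, threshold_ms=8.0):
    """Count frame-to-frame jumps larger than threshold_ms (illustrative cutoff)."""
    return sum(1 for value in variance_ms if value > threshold_ms)


if __name__ == "__main__":
    # Made-up sample values for demonstration only; not measured data.
    sample = [10.0, 10.0, 30.0, 10.0]
    avg, low, variance = summarize_frame_times(sample)
    print(f"avg {avg:.1f} FPS, min {low:.1f} FPS, spikes: {count_spikes(variance)}")
```

Two runs can share a similar average frame rate while one shows far more spikes, which is why the variance charts matter alongside the averages.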

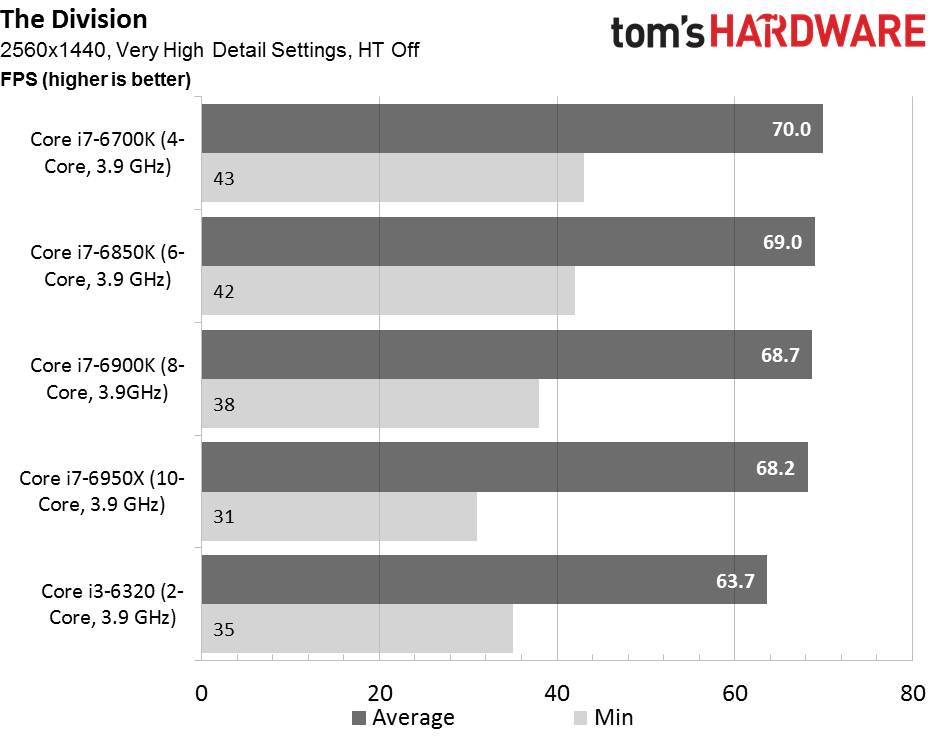

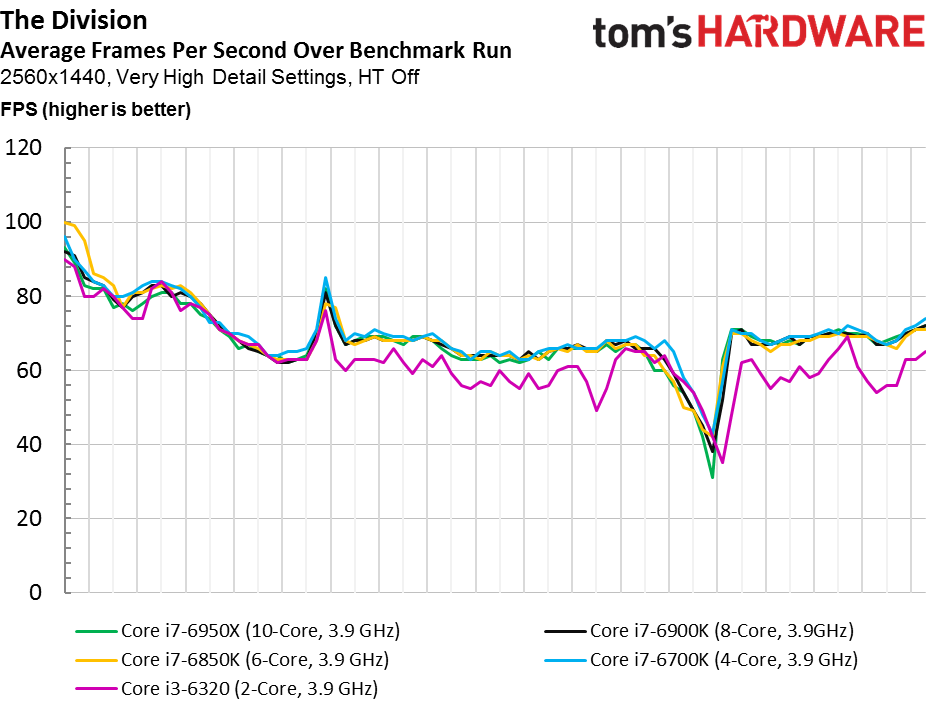

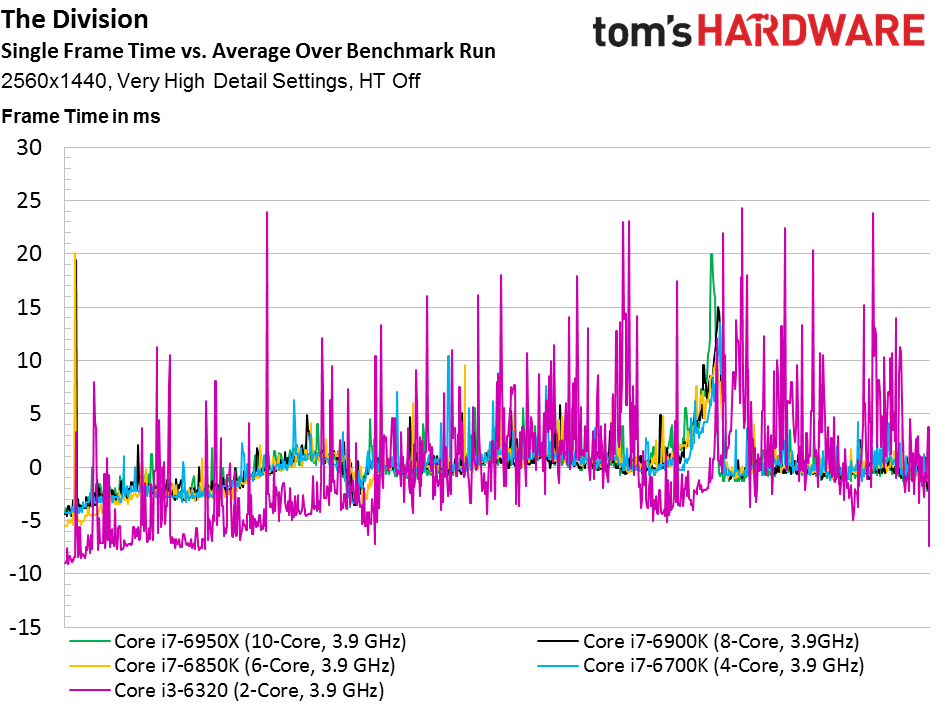

All five CPUs take a substantial hit as we move to 2560x1440. The quad-core config retains its slight lead, and now it enjoys the highest minimum frame rate as well. Three Broadwell-E processors follow, with the six-core chip beating the eight-core, which in turn is faster than the 10-core -6950X. Their respective minimums follow the same finishing order.

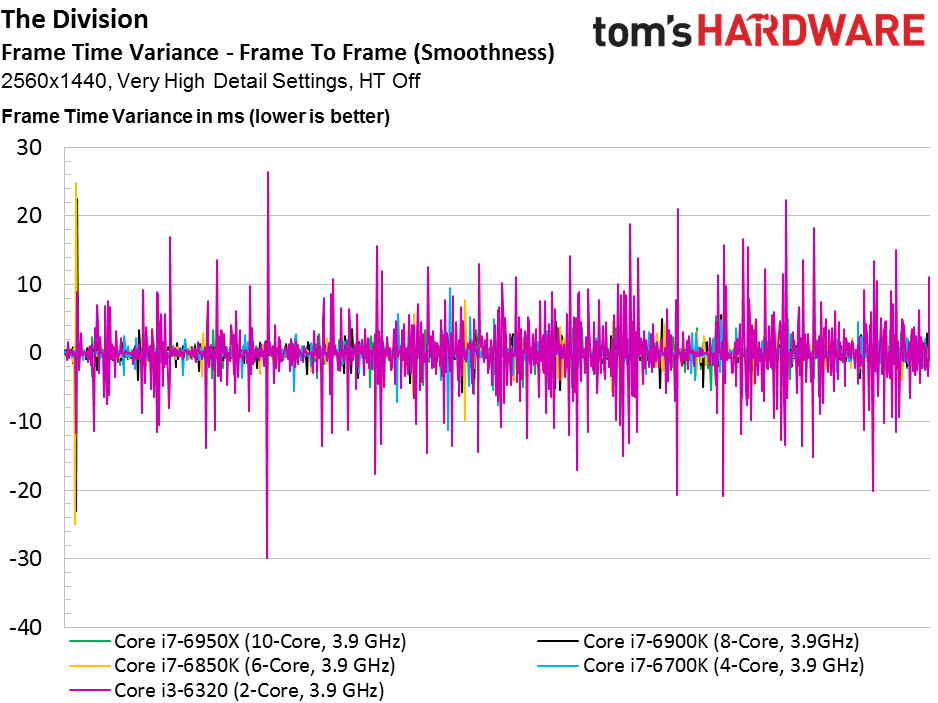

The Core i7-6700K averages about 20% higher frame rates than the -6320. However, the Core i3’s variance chart shows some big spikes that reinforce the message we’ve delivered several times already: when a developer tells you to use at least a quad-core processor, there’s a reason for it. Ubisoft Massive specifies a Core i5-2400, minimum, for The Division. In other words, even our Core i3 with Hyper-Threading enabled would pull up short.

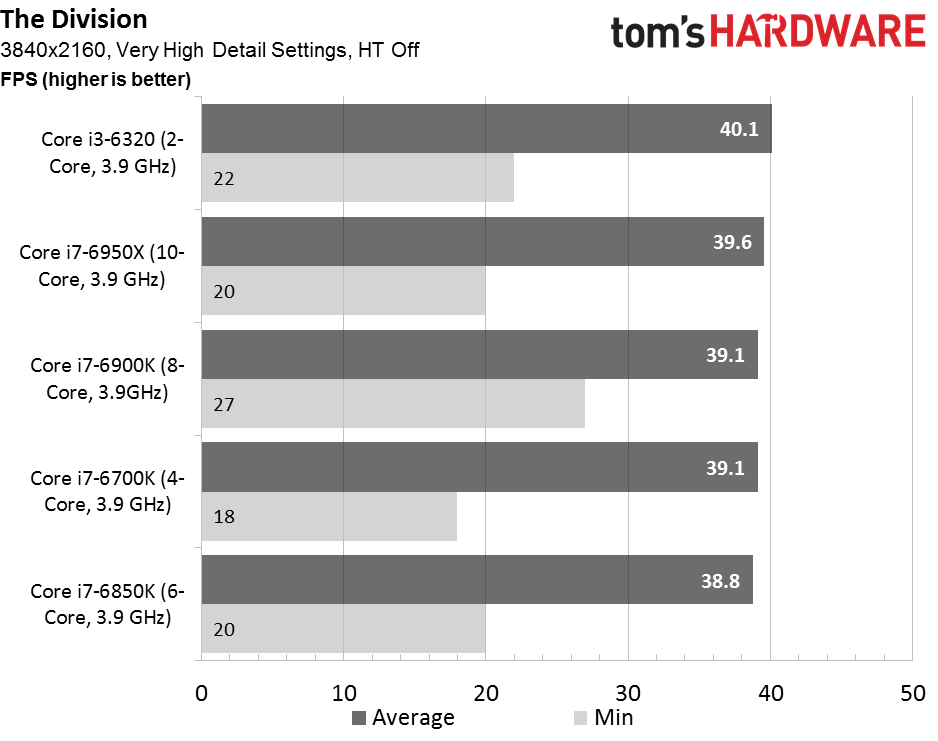

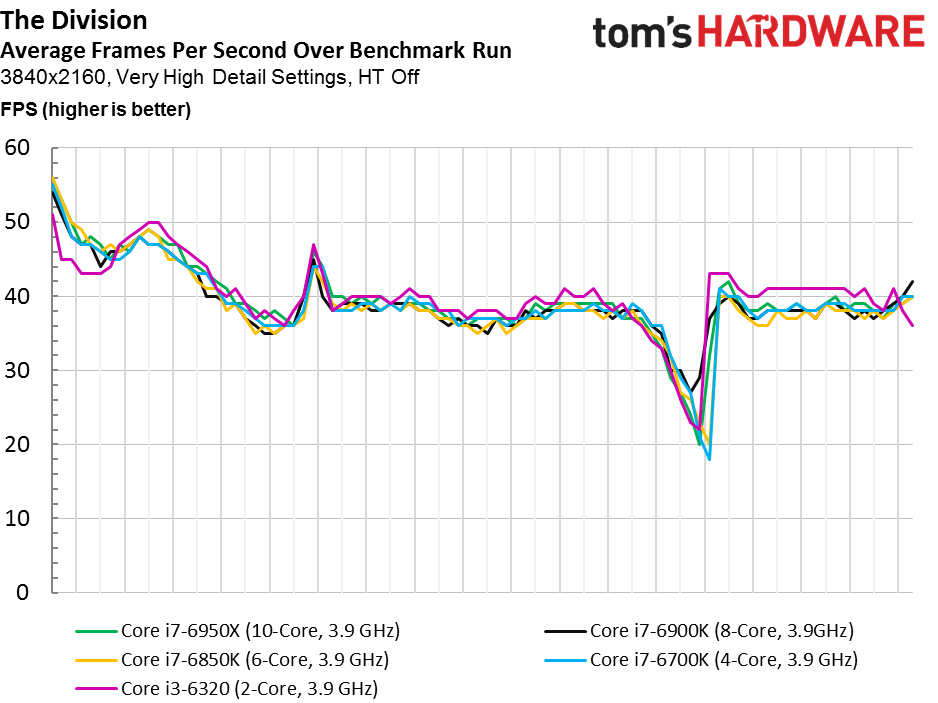

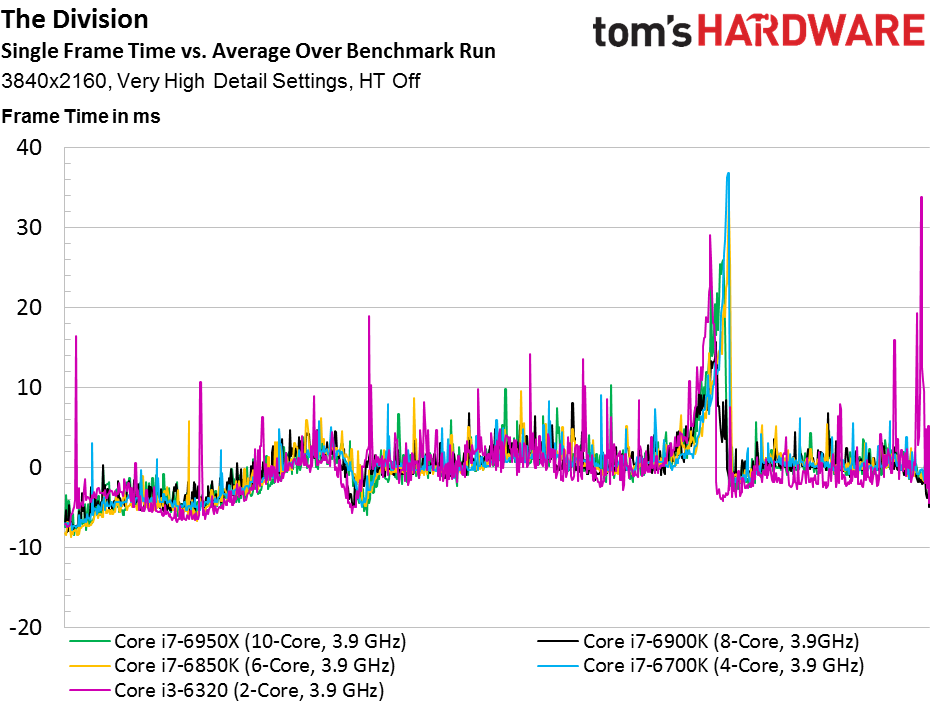

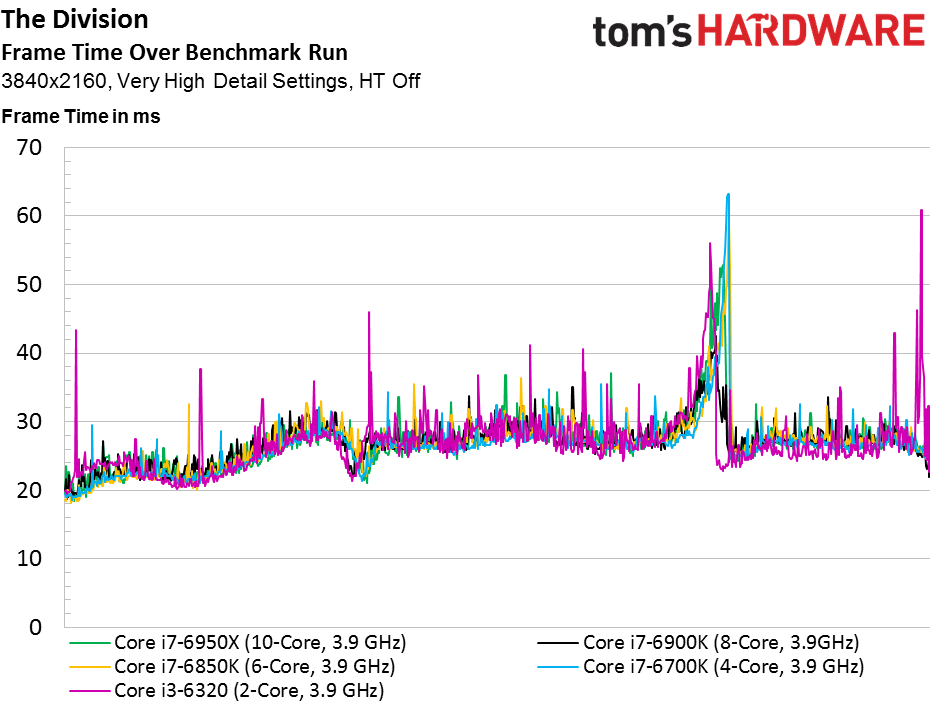

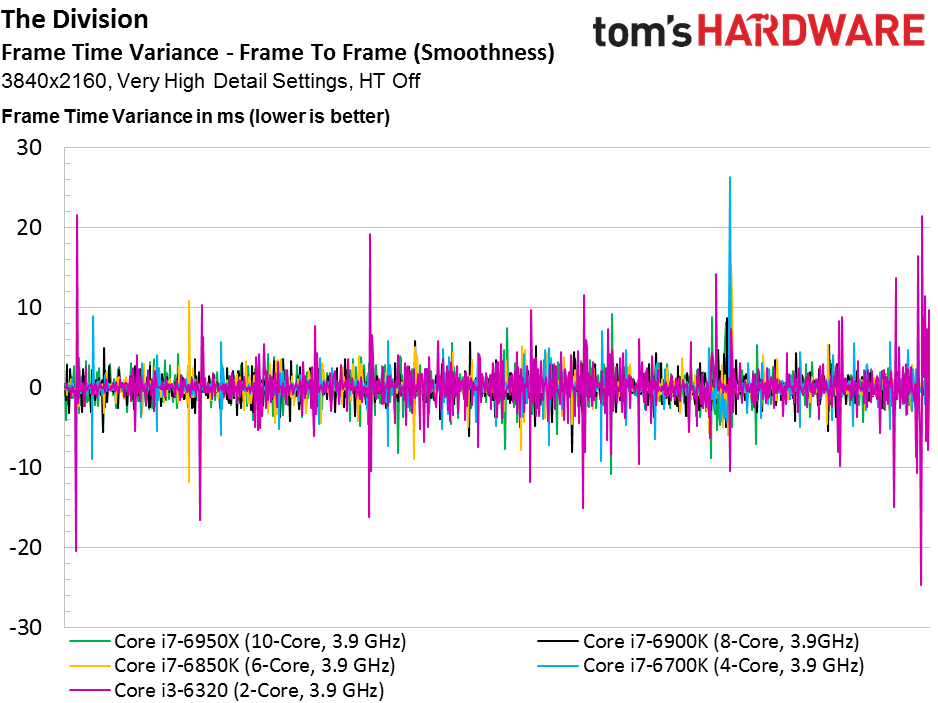

It’s always interesting to see what happens at 3840x2160 when the graphics workload is most intense. And sure enough, we’re surprised by the Core i3-6320 appearing at the top of our average frame rate chart. There’s no rhyme or reason to the finishing order (the other Skylake processor lands second to last, with Broadwell-E above and below).

And so we flip over to the variance graphs, which point to a handful of spikes, but nothing as severe as what we saw previously. There’s no reason to pair a Pentium (or Core i3) and a $700 graphics card, but if you did, the combo could apparently get through The Division without tipping anyone off.


ledhead11: Awesome article! Looking forward to the rest.

Any chance you can do a run-through with 1080 SLI or even Titan SLI? There was another article recently on Titan SLI that mentioned 100% CPU bottleneck on the 6700k with 50% load on the Titans @ 4k/60hz.

Wouldn't it have been a more representative benchmark if you just used the same CPU and limited how many cores the games can use?

Traciatim: Looks like even years later the prevailing wisdom of "Buy an overclockable i5 with the best video card you can afford" still holds true for pretty much any gaming scenario. I wonder how long it will be until that changes.

nopking: Your GTA V is currently listing at $3,982.00, which is slightly more than I paid for it when it first came out (about 66x)

TechyInAZ (quoting 18759076): Looks like even years later the prevailing wisdom of "Buy an overclockable i5 with the best video card you can afford" still holds true for pretty much any gaming scenario. I wonder how long it will be until that changes.

Once DX12 goes mainstream, we'll probably see a balance of "OCed Core i5 with most expensive GPU" for FPS shooters. But for the more CPU-demanding games, it will probably be "Core i7 with most expensive GPU you can afford" (or Zen CPU).

avatar_raq: Great article, Chris. Looking forward to part 2, and I second ledhead11's wish to see a part 3 and 4 examining SLI configurations.

problematiq: I would like to see an article comparing 1st, 2nd, and 3rd gen i-series to the current generation as far as "Should you upgrade?". Still cruising on my 3770k though.

Brian_R170: Isn't it possible to use the i7-6950X for all of the 2-, 4-, 6-, 8-, and 10-core tests by just disabling cores in the OS? That eliminates the other differences between the various CPUs and shows only the benefit of more cores.

TechyInAZ (quoting 18759510): Isn't it possible to use the i7-6950X for all of the 2-, 4-, 6-, 8-, and 10-core tests by just disabling cores in the OS? That eliminates the other differences between the various CPUs and shows only the benefit of more cores.

Possibly. But it would be a bit unrealistic because of all the extra cache the CPU would have on hand. No quad core has the amount of L2 and L3 cache that the 6950X has.

filippi: I would like to see both i3 w/ HT off and i3 w/ HT on. That article would be the perfect spot to show that.