Nvidia's Ion: Lending Atom Some Wings

GeForce 9400M Versus 945GC

A Three Year Age Difference

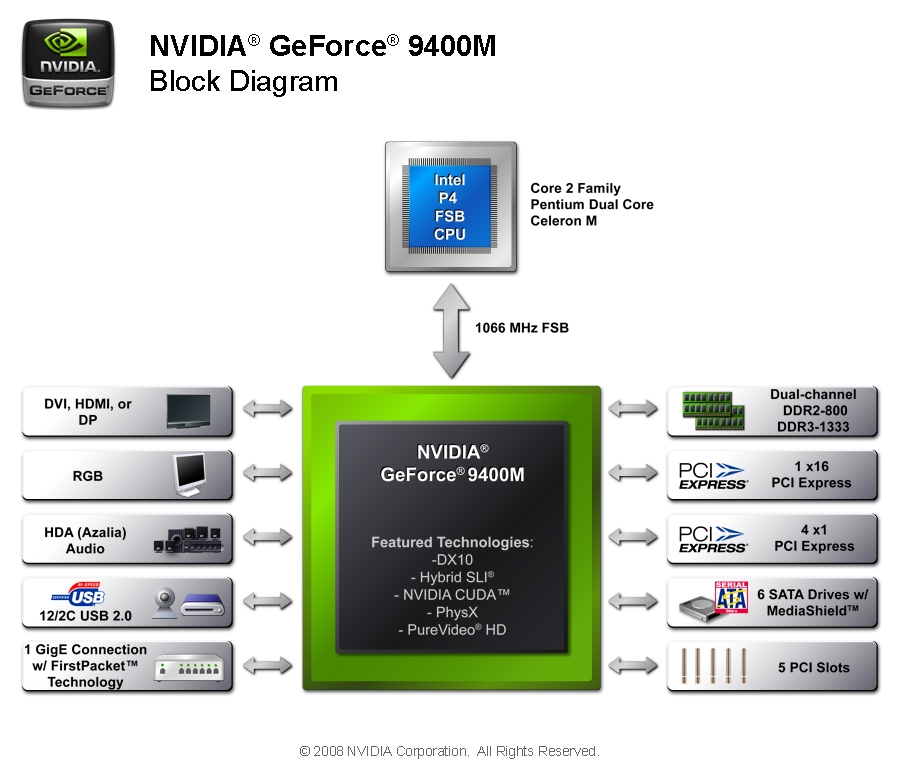

We already described the characteristics of the GeForce 9400/9300 chipset when we first tested it (see Move Over G45: Nvidia's GeForce 9300 Arrives). The GeForce 9400M is an almost identical product, featuring the same GeForce 9-series core with its 16 stream processors. The biggest difference between the desktop 9400 and its mobile version is the clock frequency of the core and shaders: 580 MHz/1,400 MHz for the 9400, and 450 MHz/1,100 MHz for the 9400M.

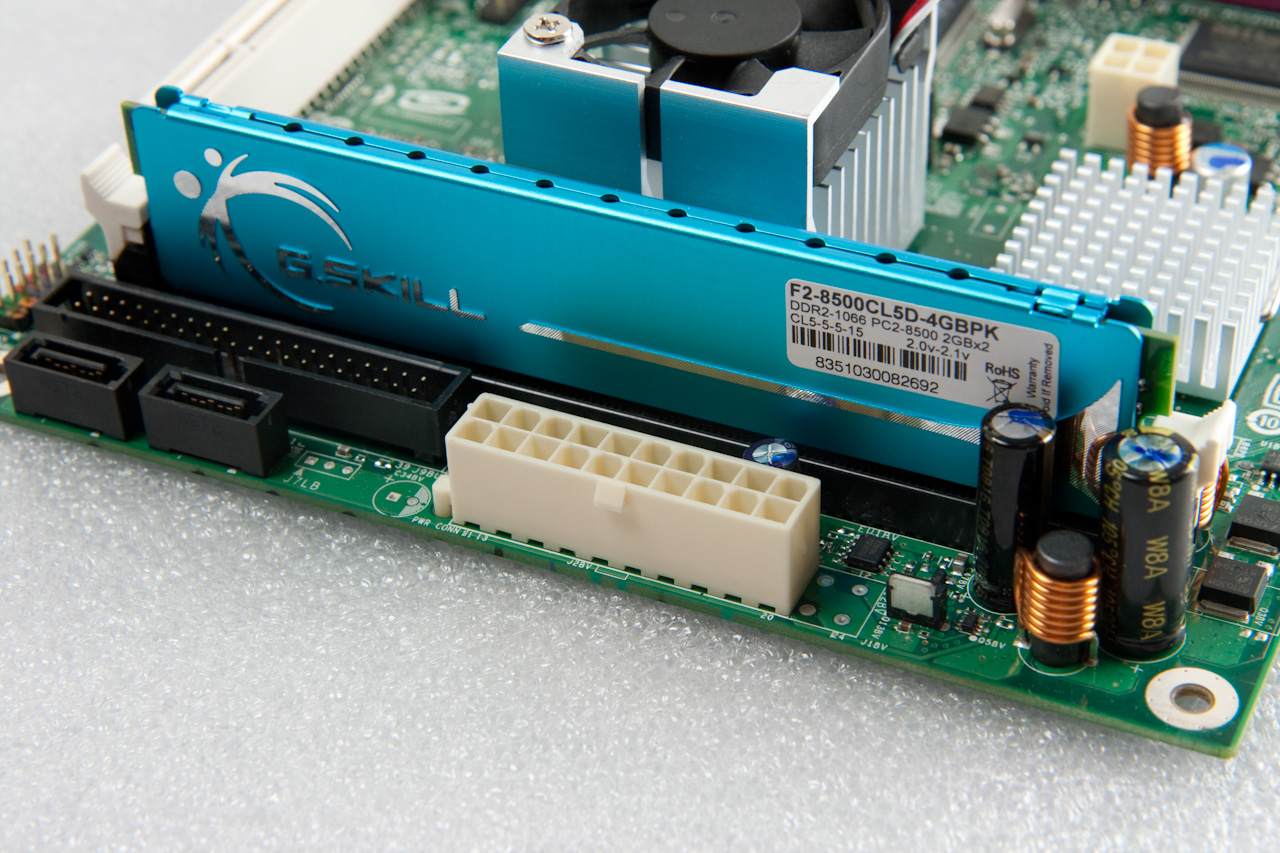

Aside from the slower clock speed, which is necessary to keep power consumption low, the features we liked on the 9400 are still there: DDR2-800 or DDR3-1333 memory compatibility, support for all Intel processors with a front-side bus of up to 1,066 MHz (so far there are no mobile Intel CPUs with a faster FSB), support for dual-link DVI, HDMI, and DisplayPort interfaces, Gigabit Ethernet, an HD Azalia audio codec, a maximum of 12 USB 2.0 ports, and 3 Gbps SATA ports. As for peripheral interfaces, the mobile GeForce manages one PCI Express x16 slot, four PCIe x1 slots, and five PCI slots. The GeForce uses a 65 nm fabrication process, and its package has a total surface area of 1,225 mm² (35 x 35 mm). Nvidia rates its TDP at 12 W.

The 945GC chipset is part of the large “Lakeport” family of Intel chipsets, which has no fewer than seven members. If you count their “mobile” cousins from the Calistoga family, there are a total of 13 members. That’s a lot of core logic, but the differences between each model are subtle. All you need to know is that the 945GC is the chipset Intel sells coupled with its Atom desktop processors (the 230 and 330). For its netbook mobile Atom processor (N270), on the other hand, Intel requires that its 945GSE chipset be used.

GeForce: Smaller, With Better Performance

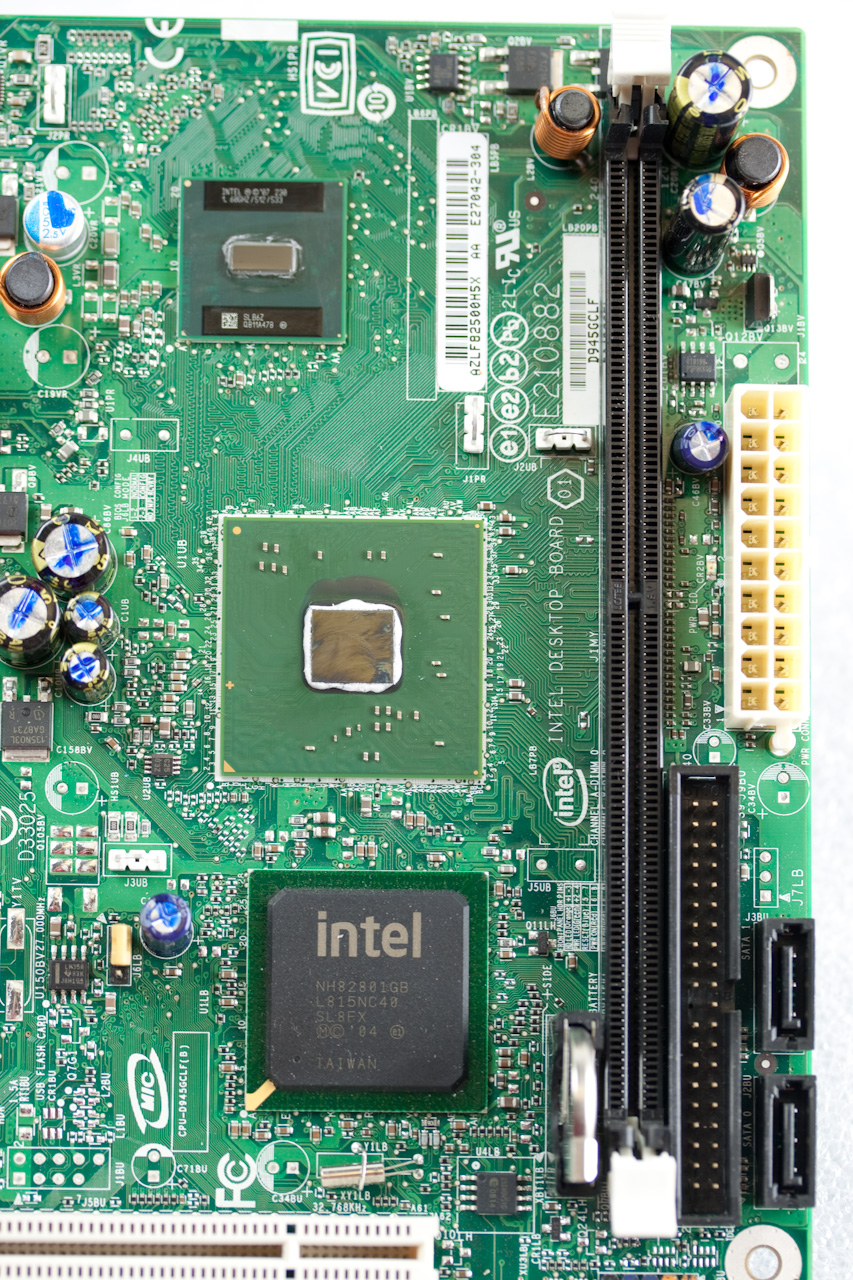

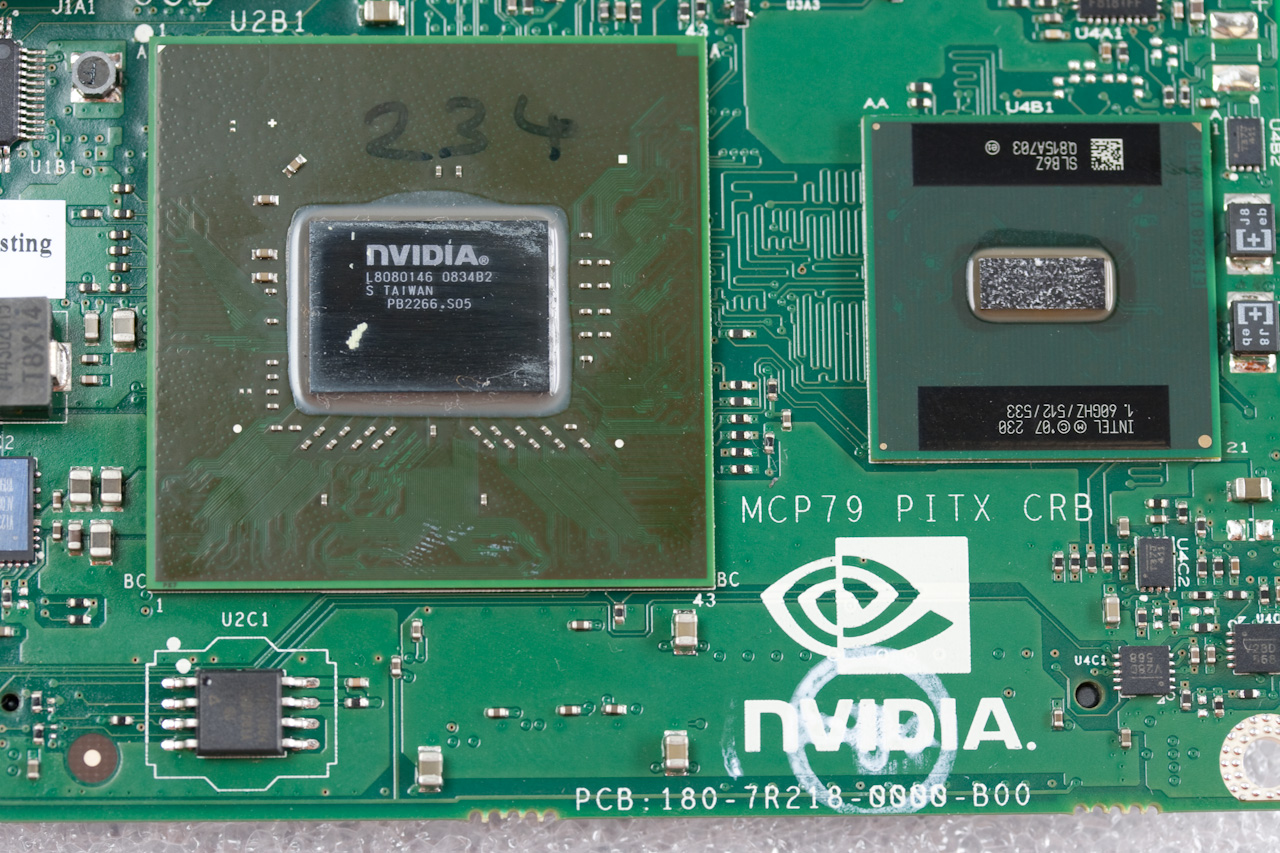

These chipsets are far from brand new. The first representatives of the Lakeport family hit the market in spring 2005 (early 2006 for the Calistogas). One consequence of their advanced age is that the 945GC uses a 90 nm fabrication process. The 945GC is also not a single-chip product like the GeForce 9400M. The 82945GC northbridge has to be paired with an 82801GB southbridge, also known as ICH7.

The consequences of this are twofold. First, the 945GC + ICH7 combination eats up a lot of real estate: the northbridge measures 34 x 34 mm, and the southbridge 31 x 31 mm. Add a few square millimeters of space between the two ICs and you end up with twice the surface area taken up on the PCB when compared with the GeForce 9400.
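To put rough numbers on that comparison, here is a minimal Python sketch that uses only the package dimensions quoted above. It assumes square packages and ignores the routing space between the two Intel chips, so the real-world ratio would be somewhat higher. The function and variable names are ours, purely for illustration.

```python
def package_area_mm2(side_mm: float) -> float:
    """Area of a square chip package, given the length of one side in mm."""
    return side_mm * side_mm


# Package sizes quoted in the article (square packages assumed).
northbridge_945gc = package_area_mm2(34)  # 82945GC northbridge
southbridge_ich7 = package_area_mm2(31)   # 82801GB (ICH7) southbridge
geforce_9400 = package_area_mm2(35)       # single-chip GeForce 9400

# Combined Intel footprint, packages only (no gap between the chips).
intel_total = northbridge_945gc + southbridge_ich7
ratio = intel_total / geforce_9400

print(f"Intel 945GC + ICH7: {intel_total:.0f} mm^2")
print(f"GeForce 9400:       {geforce_9400:.0f} mm^2")
print(f"Ratio (packages only): {ratio:.2f}x")
```

The packages alone come to roughly 1.7 times the GeForce's area; the spacing needed between the two Intel chips is presumably what pushes the estimate toward "twice."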

The second impact of the older design is that total power consumption is high. According to Intel, the 945GC’s TDP is 22.2 W, and the Atom 230 + 945GC + ICH7 platform has a TDP of 29.5 W. The GeForce 9400M claims a TDP of only 12 W. The Atom itself consumes 4 W, putting the Ion at 16 W total—just above half the TDP of the Intel platform.
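The arithmetic behind that comparison can be sketched in a few lines of Python. The figures are the vendor TDP ratings quoted above; the constant names are ours. Keep in mind that TDP is a thermal design rating rather than measured power draw, so adding the numbers together is only a rough approximation.

```python
# TDP figures quoted in the article, in watts.
INTEL_PLATFORM_TDP_W = 29.5  # Atom 230 + 945GC + ICH7, according to Intel
GEFORCE_9400M_TDP_W = 12.0   # according to Nvidia
ATOM_230_TDP_W = 4.0

# Ion platform = Atom CPU + single-chip GeForce 9400M.
ion_platform_tdp_w = GEFORCE_9400M_TDP_W + ATOM_230_TDP_W
ion_share = ion_platform_tdp_w / INTEL_PLATFORM_TDP_W

print(f"Ion platform TDP:   {ion_platform_tdp_w:.1f} W")
print(f"Intel platform TDP: {INTEL_PLATFORM_TDP_W:.1f} W")
print(f"Ion as a share of Intel: {ion_share:.0%}")
```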

Finally, let’s look at performance. The 945GC’s integrated graphics core is the well-known (and not necessarily for good reasons) GMA950. It’s DirectX 9- and Shader Model 2.0-compatible, clocked at 400 MHz, and armed with four pixel shaders, one vertex shader, and four ROPs. In other words, it dates from the era before DirectX 10 and unified shaders. It uses part of the main memory as video memory. On the D945GCLF, the inclusion of a single DIMM slot limits bandwidth to approximately 5 GB/s. By comparison, the GeForce 9400M, with its DDR3-1066 slot, has 8.3 GB/s of bandwidth.
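For readers who want to see where bandwidth figures like these come from, here is a hedged sketch of the usual peak-bandwidth formula: transfer rate times bus width times number of channels. Two assumptions are ours and not stated in the text: each memory channel is 64 bits wide, and the D945GCLF's single DIMM runs at DDR2-667. The results are in decimal GB/s, so they land slightly above the rounded figures quoted above, which may follow a different unit convention.

```python
def peak_bandwidth_gb_s(mt_per_s: float,
                        bus_width_bits: int = 64,
                        channels: int = 1) -> float:
    """Theoretical peak memory bandwidth in decimal GB/s.

    mt_per_s: transfer rate in megatransfers per second (e.g. 1066 for DDR3-1066).
    bus_width_bits: width of one memory channel (64 bits assumed here).
    channels: number of populated memory channels.
    """
    transfers_per_s = mt_per_s * 1e6
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_s * bytes_per_transfer * channels / 1e9


# Assumption: single-channel DDR2-667 on the D945GCLF (not stated in the article).
gma950_bw = peak_bandwidth_gb_s(667)
# Single DDR3-1066 slot on the Ion reference platform, as described in the article.
ion_bw = peak_bandwidth_gb_s(1066)

print(f"945GC, DDR2-667 x1:  {gma950_bw:.2f} GB/s")
print(f"9400M, DDR3-1066 x1: {ion_bw:.2f} GB/s")
```

These are theoretical ceilings; sustained bandwidth in practice is lower, and the integrated graphics core has to share it with the CPU.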

Theoretically, out of the starting gate, the GeForce 9400M has an enormous advantage over its only current competitor. But will that theoretical lead hold up in practice? Isn’t so much graphics power for a CPU as weak as the Atom casting pearls before swine? And is the Nvidia chipset good at anything besides graphics?

rootheday Let's be clear - the Ion reference platform is for a nettop - not a netbook. It's based on the dual core Atom 330. I doubt very much that Ion with a single core Atom 230 or N270 would have enough horsepower to do the BD decryption required for BD playback.

matthieu lamelot Please, let me be clear: the platform reviewed was equipped with an Atom 230, not a 330, as is perfectly obvious from the pictures (one single die on the CPU package).

And, yes, it's powerful enough (thanks to the 9400M) to smoothly playback a BD like Casino Royale (including the HDCP decryption). CPU utilization rose to around 67 % during that test.

And even though the Ion ref platform is kind of a nettop (and we tested it with that in mind, comparing it to Intel's nettop platform), it could also fit in a netbook since the 9400M's TDP is very close to that of the Intel 945GSE chipset that is found in most netbooks today: 12 W compared to 9.3 W. Nvidia and its partners would just need to drop the 9400M's frequency a bit.

randomizer Matthieu, I'm not sure who makes the onboard sound, but if it's Realtek you need to have Stereo Mix enabled for CoD 4 to run. Also, I found that I couldn't start CoD4 (with what appears to be the same error even though I can't really understand it) without plugging in speakers. Yes, speakers. Although any output device might have sufficed.

mitch074 Realtek codecs are quite common (thus I concur with randomizer); some are quite advanced in that they do automatic detection of what kind of hardware is connected to what pin (through best guess from device impedance; considering the low dB noise those codecs output, they are some precise piece of engineering), allowing autodetection of the sound setup (they will detect if you replace a microphone with a set of speakers, and switch configuration from stereo+microphone to 4.0 audio, for example; that requires driver support though).

If CoD4 requires sound (some games are funny this way) and no hardware is plugged in, then the sound card may report a status CoD4 wasn't expecting, and refuse to run.

randomizer Well you'd think that they would have patched the game so that it doesn't have problems with needing Stereo Mix and output devices by now. What if my speakers are dead? That's just poor...

amnotanoobie I immediately looked at the benchmark images, instead of reading the accompanying text around it, and I thought "WTH is AMD (green bar) doing on the nVidia ION platform." I thought I was linked to another page of another review.

hei man pls ...for now nvidia platform has some advantages but with DX11 you will not need gpu any more ...so for now it's ok for future this platform will be nothing but dust

nukemaster You may not need a GPU, but a GPU is still far faster than the CPU at running games.

Good review, it's about time Atom got a little help.

liemfukliang I won't buy Atom until it can run PCMark Vantage and 3DMark Vantage. I don't mean it has to get a high score, I just want it to finish the test without errors. :)

Tekkamanraiden I'd like to be able to buy one of those little reference systems. It would make a nice little HTPC.