AMD Radeon HD 7970: Promising Performance, Paper-Launched

A sample of AMD's next-generation Radeon HD 7970 landed in our lab just before Santa. Don't cross your fingers for one of these in your stocking, though; it's not available yet. Is it fast? Our benchmarks suggest yes, but more testing remains!

GPGPU Benchmarks: This Time, With A Preface

It is our goal to be as thorough as possible and to include as many real-world applications as we can, instead of simply relying on synthetic performance metrics. Unfortunately, by pulling its launch date forward to before Christmas, AMD ran out of time to square away the details. As a result, the company had to focus its development efforts on gaming (admittedly, the most important subject for an introduction like this one).

As a consequence, other areas didn't get any development love, and we're unable to test many of the features AMD is claiming. The blame certainly doesn't fall on the company's software partners, who were working toward a different timeline than the one that actually transpired. Regardless, while some general-purpose compute applications work to some degree, most don't, and the ones that do show little benefit over their predecessors, or even over the unaccelerated code path. Ironically, video acceleration is one of the casualties, so we can't even highlight one of Tahiti's marquee features: VCE (Video Codec Engine), a fixed-function block of video encoding logic not unlike Intel's Quick Sync.

So, this time around, we are forced to rely more heavily on synthetics. We will follow up with more real-world applications as soon as compatible versions of the software and supporting drivers are available.

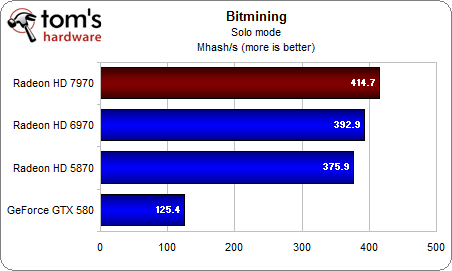

Bitmining

Bitmining (Bitcoin mining) is one of the few real-world applications we were able to run, although it's a bit one-sided in favor of the Radeons. Since the server would not let us verify, we had to use solo mode.

Now, Radeons have traditionally been very strong in Bitmining. However, efficiency is arguably more important than sheer performance here, and that's where things are less clear. Sure, the Radeon HD 7970 is the fastest single-GPU card in this group, but its comparatively small performance improvement comes with a steep increase in power consumption. For reference, while the aging Radeon HD 5870 attains its respectable performance using 190 W, the new Radeon HD 7970 guzzles down 254 W.
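To put those two power figures in perspective, the short Python sketch below works out how much faster the HD 7970 would need to be just to match the HD 5870's performance per watt. It uses only the 190 W and 254 W readings quoted above; the hash rates are left out because they cancel in the break-even ratio, and the helper names are our own, purely for illustration.

```python
# Power figures quoted in the Bitmining section, in watts.
HD5870_WATTS = 190.0
HD7970_WATTS = 254.0


def perf_per_watt(hash_rate: float, watts: float) -> float:
    """Efficiency metric: hashes per second delivered per watt consumed."""
    return hash_rate / watts


def break_even_speedup(old_watts: float, new_watts: float) -> float:
    """Speedup a new card needs over an old one just to match its perf-per-watt.

    If the new card is s times faster, its efficiency is s * rate / new_watts.
    Setting that equal to rate / old_watts gives s = new_watts / old_watts,
    so the actual hash rate drops out of the comparison.
    """
    return new_watts / old_watts


if __name__ == "__main__":
    s = break_even_speedup(HD5870_WATTS, HD7970_WATTS)
    extra_power = HD7970_WATTS / HD5870_WATTS - 1.0
    print(f"Extra power draw: {extra_power:.1%}")
    print(f"HD 7970 must be at least {s:.2f}x faster to match the HD 5870's efficiency")
```

Running it reports about 33.7 percent more power and a break-even speedup of roughly 1.34x. Any Bitmining lead smaller than that means the new card delivers fewer hashes per watt than the HD 5870.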

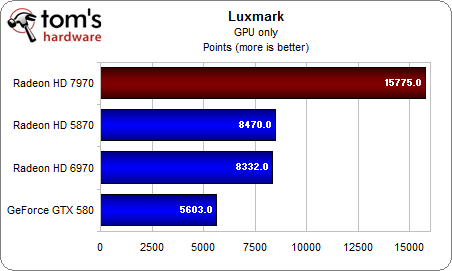

LuxMark


LuxMark is based on the freeware application LuxRender, making it the second real-world application in our GPGPU suite. The results are nothing short of spectacular, as the Radeon HD 7970 returns an almost twofold improvement over its predecessor, the Radeon HD 6970, which actually finishes third behind the older Radeon HD 5870.

Meanwhile, Nvidia's GeForce GTX 580 comes in last. Granted, this benchmark doesn't seem to favor GeForce cards to begin with, but the fact that the Radeon HD 7970 is almost three times as fast as Nvidia's current single-GPU flagship is a bit of an embarrassment. It also goes to show that a little optimization can go a long way.

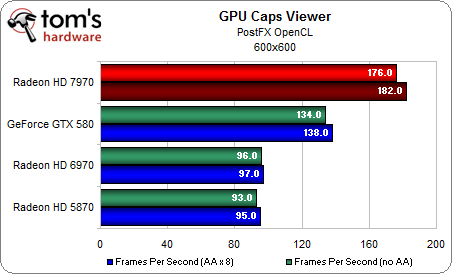

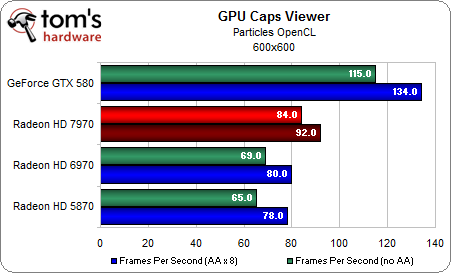

GPU Caps Viewer

That brings us to our synthetic benchmarks. GPU Caps Viewer uses a combination of OpenCL computations, post-processing, and normal graphics output without anti-aliasing, letting us draw some interesting conclusions.

The Post-FX test is a direct implementation of Nvidia's own oclPostprocessGL demo from the Nvidia GPU Computing SDK. A blur effect is added to the image output during post-processing. Interestingly, the Radeon HD 7970 is able to beat the GeForce GTX 580, even though the demo was originally developed by Nvidia. The older Radeons fall behind by a sizeable margin.
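For readers unfamiliar with post-processing, here is a minimal sketch of the kind of work a blur pass performs. It is a generic box blur on a grayscale image written in Python, not the kernel from Nvidia's oclPostprocessGL sample, which we haven't reproduced; the function name and the edge-clamping behavior are our own assumptions. Because every output pixel depends only on its neighbors in the input image, a GPU can compute a great many of them in parallel.

```python
from typing import List

Image = List[List[float]]


def box_blur(img: Image, radius: int = 1) -> Image:
    """Return a blurred copy of a grayscale image using a (2r+1) x (2r+1) box filter.

    Each output pixel is the mean of its neighborhood in the input image.
    Coordinates that fall outside the image are clamped to the nearest edge
    pixel. Output pixels are independent of one another, which is why this
    kind of filter maps well onto one GPU work-item per pixel.
    """
    height, width = len(img), len(img[0])
    out: Image = [[0.0] * width for _ in range(height)]
    taps = (2 * radius + 1) ** 2  # number of samples averaged per pixel
    for y in range(height):
        for x in range(width):
            total = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    sy = min(max(y + dy, 0), height - 1)  # clamp row
                    sx = min(max(x + dx, 0), width - 1)   # clamp column
                    total += img[sy][sx]
            out[y][x] = total / taps
    return out
```

A uniform image comes back unchanged, while a single bright pixel gets spread evenly over its 3x3 neighborhood, which is the softening effect a post-processing blur is after.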

In the particle test, the GeForce GTX 580 chalks up a clear win. Meanwhile, none of the Radeons can keep up, although the Radeon HD 7970 is able to close the gap a little.

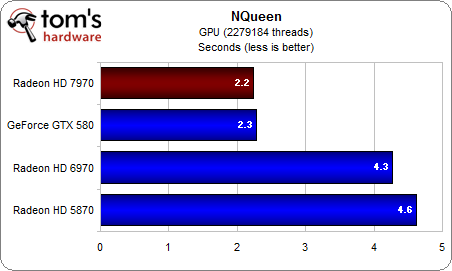

NQueen

The N-Queens puzzle (a generalization of the eight queens puzzle) is a complex mathematical problem from the world of chess. The goal is to arrange N queens on an N x N board (eight queens on a standard chessboard) in such a way that no two queens can attack each other according to the rules of chess. The color of the pieces is irrelevant, so any queen may attack any other queen. In the end, the point is to find the number of possible solutions as quickly as possible.
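The counting problem itself is easy to express on a CPU. The Python sketch below uses a common bitmask backtracking approach; it is an assumption on our part and not the NQueen benchmark's actual OpenCL code. It fills the board one row at a time and keeps three bitmasks of attacked columns and diagonals, which shows why the workload is so branch-heavy: every row involves data-dependent decisions about which squares remain free.

```python
def count_n_queens(n: int) -> int:
    """Count placements of n mutually non-attacking queens on an n x n board.

    Queens are placed one row at a time. Three bitmasks record which columns
    and which diagonals are already attacked, so each row only tries squares
    that are still free.
    """
    full = (1 << n) - 1  # one bit per column

    def place(cols: int, diag_left: int, diag_right: int) -> int:
        if cols == full:  # every column (and therefore every row) holds a queen
            return 1
        count = 0
        free = full & ~(cols | diag_left | diag_right)
        while free:
            bit = free & -free  # lowest free square in the current row
            free ^= bit
            # Diagonal threats move one column left/right for the next row.
            count += place(cols | bit,
                           ((diag_left | bit) << 1) & full,
                           (diag_right | bit) >> 1)
        return count

    return place(0, 0, 0)
```

Calling `count_n_queens(8)` returns 92, the well-known number of solutions on a standard chessboard. One common way to parallelize such a search on a GPU is to fix the first few rows on the host and hand each partial board to a separate thread, though we can't say whether this particular benchmark does so.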

This problem forms the basis of the benchmark, and the NQueen test shows once more that AMD's Radeon HD 7970 benefits tremendously from leaving the VLIW architecture behind in complex workloads. Both the HD 7970 and the GTX 580 are nearly twice as fast as the older Radeons. So, while the VLIW-based cards are great for crunching numbers, they're not as well suited to branch-heavy tasks like this one, where the compiler has a hard time filling every slot of each VLIW instruction.

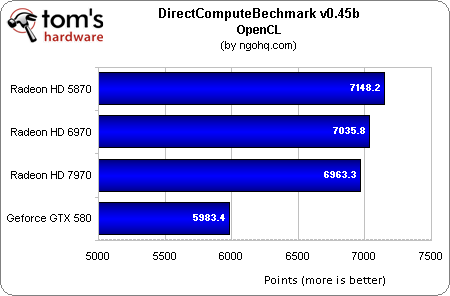

DirectComputeBenchmark

DirectComputeBenchmark was originally on our list too, since it is one of the few benchmarks out there that can test DirectCompute performance in addition to OpenCL. However, the DirectCompute result we got for the Radeon HD 7970 was far too high to be plausible. Until we can prove otherwise, we'll discard that result and chalk it up to a bug in the benchmark. The result of the OpenCL benchmark was more believable:

Interestingly, the Radeons rule this benchmark, with the HD 5870 taking the top spot ahead of the HD 6970 and the HD 7970. Despite using an architecture similar to that of the HD 7970, Nvidia's GeForce GTX 580 trails the AMD group by a wide margin.

First Impressions

While these results hold great promise, it’s certainly too early for the AMD fans out there to celebrate. Due to the distinct lack of usable real-world apps and the beta state of the drivers we had at our disposal, it’s hard to come to any conclusion about Tahiti’s real compute performance. There is definitely a very positive trend, though, so we can hope to see some compelling performance in real-world applications once they surface.

Moving away from the previous VLIW architecture doesn't hurt the Radeon HD 7970 much (if at all, as borne out in Bitmining) in areas where Radeons have traditionally ruled the roost, while simultaneously helping it gain ground in disciplines that Fermi-based cards dominated in the past. Thus, the card appears to be a potent solution able to leave behind the previous generation's limitations. Of course, the drivers and third-party apps have to come around, too. AMD certainly has its work cut out for it in this department.


Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


thepieguy: If Santa is real, there will be one of these under my Christmas tree in a few more days.

a4mula: From a gaming standpoint I fail to see where this card finds a home. For 1920x1080 pretty much any card will work, while at Eyefinity resolutions it's obvious that a single GPU still isn't viable. Perhaps this will be something that people would consider over 2x 6950, but that isn't exactly an ideal setup either. While much of the article was over my head from a technical standpoint, I hope the 7 series addresses microstuttering in CrossFire. If so, then perhaps 2x 7950 (assuming $449) becomes a viable alternative to 3x 6950 2GB. I was really hoping we'd see the 7970 come in at $449, with the 7950 at $349. Right now I'm failing to see the value in this card.

cangelini (replying to a4mula):

I'll be trolling Newegg for the next couple weeks on the off-chance they pop up before the 9th. A couple in CrossFire could be pretty phenomenal, but it remains to be seen if they maintain the 6900-series scalability.

cangelini (replying to thepieguy):

Hate to break it to you, but there won't be, unless you celebrate Christmas in mid-January.

Start treating your SO super-nice and ask for one for Valentine's Day!

danraies (replying to cangelini):

If I ever find someone who will buy me a $500 graphics card for Valentine's Day, I'll be proposing on the spot.

a4mula (replying to cangelini):

While I have little doubt that two of these cards would be very impressive, so would the $1,100+ price tag. I guess coming from a 580 SLI standpoint it might not seem like much, but if you've been considering the $750 ($900 with the motherboard and PSU difference) 3x 6950 route like myself, it seems like a major jump.

Of course, this is all just an initial reaction to the earliest of benchmarks. Giving it a while to really dig into the new 7xxx series, and allowing it to mature from a driver standpoint, might make the 3x 6950 route seem foolhardy.

Zombeeslayer143: WOW!!! I love the conclusion; all of it, which basically is interpreted as "I'm biased towards Nvidia," and tries to say don't buy this card! Has the nerve to mention Kepler as an alternative; right, Kepler, as in 1 year away. The GTX 580 just got "Radeon-ed" in its rear. I'm not biased towards either manufacturer, I just love to see and give credit to a team of people with passion, vision, and hard work coming together and putting their company back on the map, as is shown here today with AMD's launch of the 7970. It's AMD's version of "Tebow Time!!"