Update: Radeon R9 295X2 8 GB In CrossFire: Gaming At 4K

We spent our weekend benchmarking the sharp-looking iBuyPower Erebus loaded with a pair of Radeon R9 295X2 graphics cards. Do the new boards fare better than the quad-GPU configurations we've tested before, or should you stick to fewer cards in CrossFire?

Radeon R9 295X2s, Working Together

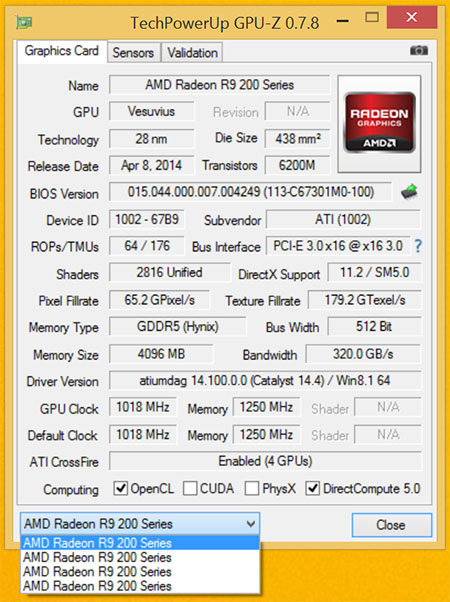

Update: After this story went live in mid-April, AMD let us know that a number of our results did not match what the company was seeing in its own lab. Today’s update is the product of hundreds of benchmark runs designed to diagnose the behavior of two Radeon R9 295X2 cards in quad-CrossFire.

According to AMD, the only officially-supported way to connect a 295X2 and a 4K display is through DisplayPort. The dual-HDMI method we’re forced to use (and described in detail here: Challenging FPS: Testing SLI And CrossFire Using Video Capture) is not considered valid. So, the first step was to run all of our tests using a DisplayPort cable and Fraps, comparing the outcome to FCAT-generated data.

Using that information, we identified a number of games that behaved the same, regardless of test method, and a couple that suggested something else was the matter. And so we dug…

All of the charts on the following pages were re-rendered. And much of what you’ll see confirms our original conclusions. But because we have so much more time invested into benchmarking and troubleshooting, all of the analysis is new.

We also gathered additional information about AMD's XDMA engine. The Catalyst driver dynamically calculates PCIe bandwidth in real-time. If there isn't enough available, either because your platform cannot keep up or because the resolution you're driving is too throughput-intensive, compositing happens in software instead. Ideally, then, you want x16 slots running at x16 transfer rates, without PLX switches in front of them adding latency. AMD says its dual-GPU boards will still work on links narrower than 16 lanes and through motherboard switches, though stuttering/timing issues become more likely, as you'd expect.

Does our revisit vindicate a pair of $1500 graphics cards working in parallel, or are you better off spending your budget elsewhere?
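To put the XDMA bandwidth point in rough numbers, here is a back-of-the-envelope sketch in Python. It is not how the Catalyst driver measures anything. It assumes 32-bit color, alternate-frame rendering in which every frame rendered away from the display GPU is copied over in full, and the theoretical PCIe 3.0 rate of 8 GT/s per lane with 128b/130b encoding. All function names are hypothetical.

```python
def frame_bytes(width, height, bytes_per_pixel=4):
    """Size of one uncompressed frame (assumes 32-bit color by default)."""
    return width * height * bytes_per_pixel


def afr_copy_rate(width, height, fps, gpus, bytes_per_pixel=4):
    """Bytes per second copied to the display GPU.

    Assumption: with alternate-frame rendering, (gpus - 1) out of every
    `gpus` frames are rendered elsewhere and transferred in full.
    """
    copied_fraction = (gpus - 1) / gpus
    return frame_bytes(width, height, bytes_per_pixel) * fps * copied_fraction


def pcie3_bytes_per_second(lanes):
    """Theoretical one-way PCIe 3.0 throughput for a link of `lanes` lanes."""
    # 8 GT/s per lane, 128b/130b line encoding, 8 bits per byte.
    return lanes * 8e9 * (128 / 130) / 8


def link_utilization(width, height, fps, gpus, lanes):
    """Fraction of the theoretical link rate consumed by frame copies alone."""
    return afr_copy_rate(width, height, fps, gpus) / pcie3_bytes_per_second(lanes)


if __name__ == "__main__":
    for lanes in (16, 8, 4):
        share = link_utilization(3840, 2160, fps=60, gpus=4, lanes=lanes)
        print(f"x{lanes}: frame copies use {share:.1%} of the theoretical link rate")
```

The estimate counts finished frames only. Real traffic also includes geometry, textures, and command data, and a switch in the path adds latency that a raw bandwidth figure does not capture, so treat the result as a lower bound on link pressure rather than a prediction of when the driver falls back to software compositing.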

Twenty-four point eight billion transistors. Eleven-thousand two-hundred and sixty-four shaders. Sixteen gigabytes of GDDR5 memory. Twenty-three teraflops of compute power. One thousand watts of rated board power.
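Those totals are simple multiples of per-GPU figures. The Python sketch below reproduces the arithmetic. The per-GPU and per-card inputs (6.2 billion transistors, 2816 shaders, 4 GB of memory, a 1018 MHz peak clock, 500 W per board) are commonly quoted specifications that do not appear on this page, so they are assumptions here.

```python
# Assumed per-GPU and per-card figures; none of them come from this page.
GPUS_PER_CARD = 2
CARDS = 2
TRANSISTORS_PER_GPU_BILLION = 6.2
SHADERS_PER_GPU = 2816
MEMORY_PER_GPU_GB = 4
PEAK_CLOCK_GHZ = 1.018
BOARD_POWER_PER_CARD_W = 500


def quad_crossfire_totals():
    """Aggregate specifications for two dual-GPU cards."""
    gpus = GPUS_PER_CARD * CARDS
    shaders = gpus * SHADERS_PER_GPU
    # Peak single-precision rate: one fused multiply-add (2 FLOPs)
    # per shader per clock cycle.
    tflops = 2 * shaders * PEAK_CLOCK_GHZ / 1000
    return {
        "gpus": gpus,
        "transistors_billion": round(gpus * TRANSISTORS_PER_GPU_BILLION, 1),
        "shaders": shaders,
        "memory_gb": gpus * MEMORY_PER_GPU_GB,
        "tflops": round(tflops, 1),
        "board_power_w": CARDS * BOARD_POWER_PER_CARD_W,
    }


if __name__ == "__main__":
    for name, value in quad_crossfire_totals().items():
        print(f"{name}: {value}")
```

Note that the 16 GB figure is a sum of four separate 4 GB pools. In alternate-frame rendering each GPU generally holds its own copy of the working set, so the capacity available to a game is closer to the per-GPU figure.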

No matter which specification you use to describe two Radeon R9 295X2 cards in CrossFire, the number is undoubtedly unlike any other describing a gaming PC’s capabilities. And yet, late last Friday, a box from iBuyPower showed up at the lab with a tall, majestic Erebus PC sporting a pair of the dual-GPU, liquid-cooled flagships I reviewed in Radeon R9 295X2 8 GB Review: Project Hydra Gets Liquid Cooling. iBuyPower’s builders dutifully matched my test bed’s specifications, including a Core i7-4960X overclocked to 4.2 GHz, 32 GB of memory in a quad-channel configuration, and an even beefier 1350 W power supply. Despite the box’s brawn (and the haste with which I asked to have it built), every cable was tied down neatly, giving the lighting on AMD’s latest a neat, roomy enclosure with a side window to illuminate.

Also nifty, but something we don’t typically think much about as enthusiasts, is that iBuyPower shipped the exceedingly-heavy system with two liquid-cooled cards and it arrived working perfectly. Polyurethane foam works wonders.

And so, with years of experience under our belts suggesting that four GPUs working cooperatively typically don’t behave well at all, regardless of vendor, we set off on an experiment to gauge whether history repeats itself, or if AMD’s best-built board breaks precedent in more ways than one.

Testing Two Radeon R9 295X2s

We have our reasons to believe that two Radeon R9 295X2s might behave differently from the quad-GPU solutions we tested previously. To begin, there's AMD's new DMA engine that enables CrossFire without a bridge connector. There's also the fact that we're testing at 3840x2160 and using an overclocked Ivy Bridge-E-based platform, hopefully minimizing the poor scaling you'd expect from a platform-bound configuration. AMD also sent over a new beta driver late last week, which was supposed to be optimized for four-way CrossFire.

| Test Hardware | |
|---|---|
| Processors | Intel Core i7-4960X (Ivy Bridge-E) 3.5 GHz Base Clock Rate, Overclocked to 4.2 GHz, LGA 2011, 15 MB Shared L3, Hyper-Threading enabled, Power-savings enabled |
| Motherboard | MSI X79A-GD45 Plus (LGA 2011) X79 Express Chipset, BIOS 17.8; ASRock X79 Extreme11 (LGA 2011) X79 Express Chipset |
| Memory | G.Skill 32 GB (8 x 4 GB) DDR3-2133, F3-17000CL9Q-16GBXM x2 @ 9-11-10-28 and 1.65 V |
| Hard Drive | Samsung 840 Pro SSD 256 GB SATA 6Gb/s |
| Graphics | 2 x AMD Radeon R9 295X2 8 GB |
| | 2 x AMD Radeon R9 290X 4 GB (CrossFire) |
| | AMD Radeon HD 7990 6 GB |
| | 2 x Nvidia GeForce GTX Titan 6 GB (SLI) |
| | 2 x Nvidia GeForce GTX 780 Ti 3 GB (SLI) |
| | Nvidia GeForce GTX 690 4 GB |
| Power Supply | Rosewill Lightning 1300 1300 W, Single +12 V rail, 108 A output |
| System Software And Drivers | |
| Operating System | Windows 8.1 Professional 64-bit |
| DirectX | DirectX 11 |
| Graphics Driver | AMD Catalyst 14.4 Beta |
| | Nvidia GeForce 337.50 Beta |

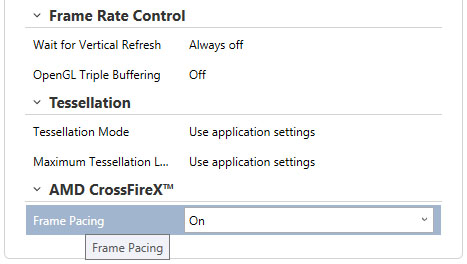

We're careful to make sure Frame Pacing is enabled, that Tessellation Mode is controlled by the applications, and v-sync is forced off in AMD's driver.


| Benchmarks And Settings | |
|---|---|
| Battlefield 4 | 3840x2160: Ultra Quality Preset, v-sync off, 100-second Tashgar playback. Fraps/FCAT for 3840x2160 |
| Arma 3 | 3840x2160: Ultra Quality Preset, 8x FSAA, Anisotropic Filtering: Ultra, v-sync off, Infantry Showcase, 30-second playback, FCAT and Fraps |
| Metro: Last Light | 3840x2160: Very High Quality Preset, 16x Anisotropic Filtering, Normal Motion Blur, v-sync off, Built-In Benchmark, FCAT and Fraps |
| Assassin's Creed IV | 3840x2160: Maximum Quality options, 4x MSAA, 40-second Custom Run-Through, FCAT and Fraps |
| Grid 2 | 3840x2160: Ultra Quality Preset, 120-second recording of built-in benchmark, FCAT and Fraps |
| Thief | 3840x2160: Very High Quality Preset, 70-second recording of built-in benchmark, FCAT and Fraps |
| Tomb Raider | 3840x2160: Ultimate Quality Preset, FXAA, 16x Anisotropic Filtering, TressFX Hair, 45-second Custom Run-Through, FCAT and Fraps |
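Both Fraps and FCAT ultimately yield a series of per-frame times, and the comparison between them comes down to summary statistics over that series. The Python sketch below shows one way such numbers could be derived. It assumes the input is a list of cumulative timestamps in milliseconds, one per frame, and it uses a nearest-rank percentile. The function names and the sample data are hypothetical and are not part of either tool.

```python
import math


def frame_times_ms(timestamps_ms):
    """Per-frame durations from cumulative timestamps (one per frame)."""
    return [later - earlier
            for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]


def average_fps(timestamps_ms):
    """Frames completed per second over the whole recording."""
    elapsed_ms = timestamps_ms[-1] - timestamps_ms[0]
    return 1000.0 * (len(timestamps_ms) - 1) / elapsed_ms


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def frame_to_frame_variation(timestamps_ms):
    """Absolute change in duration between consecutive frames."""
    times = frame_times_ms(timestamps_ms)
    return [abs(b - a) for a, b in zip(times, times[1:])]


if __name__ == "__main__":
    # Made-up sample: frames alternate between 10 ms and 20 ms.
    sample = [0, 10, 30, 40, 60]
    print("frame times (ms):", frame_times_ms(sample))
    print("average FPS:", round(average_fps(sample), 1))
    print("95th percentile frame time (ms):", percentile(frame_times_ms(sample), 95))
    print("frame-to-frame variation (ms):", frame_to_frame_variation(sample))
```

The made-up sample is chosen to show why an average alone can mislead: the frame rate looks healthy while every other frame takes twice as long as its neighbor, which is the kind of uneven pacing that multi-GPU testing tries to expose.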


redgarl I always said it, more than two cards takes too much resources to manage. Drivers are not there either. You are getting better results with simple Crossfire. Still, the way AMD corner Nvidia as the sole maker able to push 4k right now is amazing.

BigMack70 I personally don't think we'll see a day that 3+ GPU setups become even a tiny bit economical.

For that to happen, IMO, the time from one GPU release to the next would have to be so long that users needed more than 2x high end GPUs to handle games in the mean time.

As it is, there's really no gaming setup that can't be reasonably managed by a pair of high end graphics cards (Crysis back in 2007 is the only example I can think of when that wasn't the case). 3 or 4 cards will always just be for people chasing crazy benchmark scores.

frozentundra123456 I am not a great fan of mantle because of the low number of games that use it and its specificity to GCN hardware, but this would have been one of the best case scenarios for testing it with BF4.

I can't believe the reviewer just shrugged off the fact that the games obviously look cpu limited by just saying "well, we had the fastest cpu you can get" when they could have used mantle in BF4 to lessen cpu usage.

Matthew Posey The first non-bold paragraph says "even-thousand." Guessing that should be "eleven-thousand."

Haravikk How does a dual dual-GPU setup even operate under Crossfire? As I understand it the two GPUs on each board are essentially operating in Crossfire already, so is there then a second Crossfire layer combining the two cards on top of that, or has AMD tweaked Crossfire to be able to manage them as four separate GPUs? Either way it seems like a nightmare to manage, and not even close to being worth the $3,000 price tag, especially when I'm not really convinced that even a single of those $1,500 cards is really worth it to begin with; drool worthy, but even if I had a ton of disposable income I couldn't picture myself ever buying one.