Update: Radeon R9 295X2 8 GB In CrossFire: Gaming At 4K

We spent our weekend benchmarking the sharp-looking iBuyPower Erebus loaded with a pair of Radeon R9 295X2 graphics cards. Do the new boards fare better than the quad-GPU configurations we've tested before, or should you stick to fewer cards in CrossFire?

Results: Tomb Raider

Tomb Raider received the bulk of our attention because of its odd behavior. But it too typifies the issues AMD is facing.

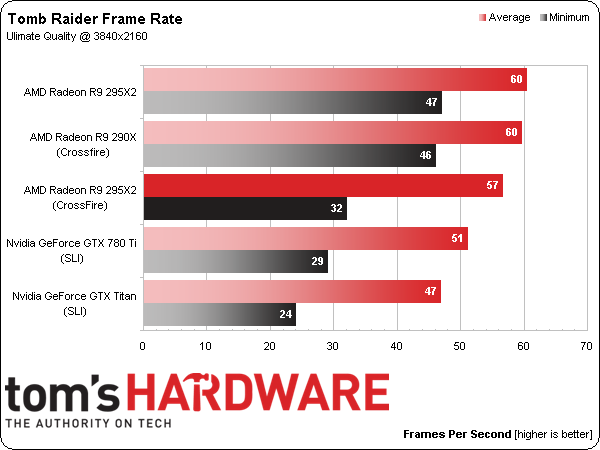

Let’s start with the chart above. It’s correct for the in-game benchmark we ran. FCAT says we’re seeing an average of 57 FPS. Fraps confirms that 57 FPS sounds about right. And our previous FCAT-generated chart said 51 FPS. In all cases, that’s negative scaling compared to one Radeon R9 295X2 at 60 FPS.

Now, bear in mind that this is a benchmark taken straight from the game. It was chosen by our very own Paul Henningsen for its load and repeatability compared to sequences elsewhere. If you instead run Tomb Raider's built-in benchmark, you'll see around 56 FPS with one Radeon R9 295X2 and around 100 FPS with two. That's the result AMD is expecting, and we replicated it on our side.
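To put the two sets of numbers side by side, the arithmetic is simply the percent change in average frame rate when the second card is added. The sketch below plugs in the approximate averages quoted above; `scaling_percent` is a hypothetical helper written for illustration, not part of FCAT or Fraps.

```python
def scaling_percent(baseline_fps: float, multi_fps: float) -> float:
    """Percent change in average FPS from the baseline configuration
    to the multi-card one. A negative result means negative scaling."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (multi_fps / baseline_fps - 1.0) * 100.0


# Approximate averages quoted in the text: (one R9 295X2, two R9 295X2s).
cases = {
    "custom sequence, FCAT": (60.0, 57.0),
    "custom sequence, earlier FCAT chart": (60.0, 51.0),
    "built-in benchmark": (56.0, 100.0),
}

for name, (one_card, two_cards) in cases.items():
    print(f"{name}: {scaling_percent(one_card, two_cards):+.1f}%")
```

Run as written, this reports roughly -5% and -15% for the gameplay sequence versus about +79% for the built-in benchmark, which is the gap this page is trying to explain.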

So we have a built-in benchmark that is helped along by four GPUs and a real sequence from Tomb Raider that scales negatively. Almost certainly, something is bottlenecking AMD's cards: folks at iBuyPower, running numbers concurrently using our test, are showing that you can use two, three, and four GeForce GTX Titans and still scale performance.

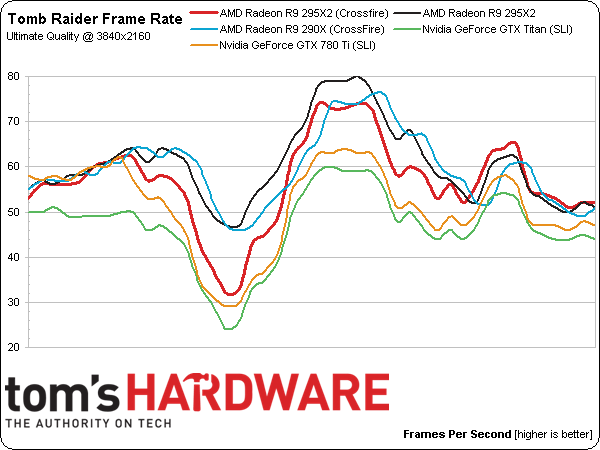

But why the negative scaling? It turns out that, with a single Radeon R9 295X2 under the hood, there's an aspect ratio bug that renders the scene offset to one side. As a result, less of Lara is rendered in many frames, lightening the load. Switching to quad-CrossFire fixes the aspect ratio, creating a more demanding workload. And thus, the frame rate drops compared to a single card.

The red line speaks for itself: one Radeon R9 295X2 outperforms two, but only because the scene it's rendering is incorrect. There are bugs that need to be fixed.

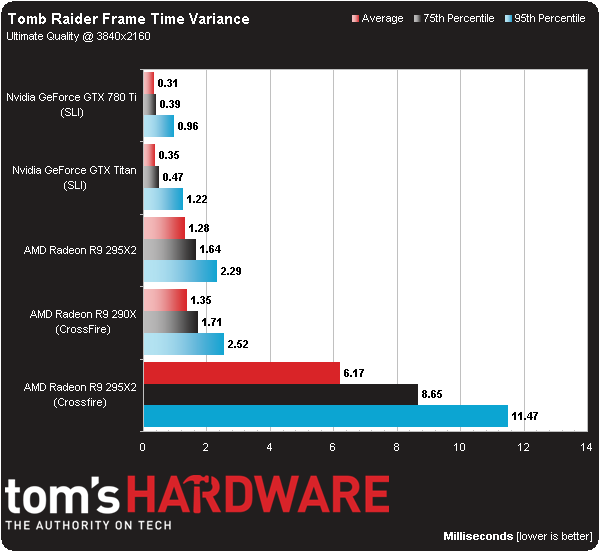

As two Radeon R9 295X2s struggle with whatever's going on in Tomb Raider, frame time variance is all over the place. As you might have guessed, stuttering is a prominent issue in this game as well, and it's much worse with four GPUs than with two.
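Average frame rate alone can hide stutter, which is why frame time variance gets its own chart. This page doesn't spell out the exact formula behind that chart, so the sketch below uses an assumed stand-in, the absolute change in render time between consecutive frames. The function names and both frame-time traces are hypothetical, not measured data from this review.

```python
import statistics


def average_fps(frame_times_ms):
    """Frames rendered divided by total run time in seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def consecutive_deltas(frame_times_ms):
    """Absolute change in render time between each pair of consecutive frames (ms).
    An illustrative variance measure, assumed rather than taken from the article."""
    return [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]


# Hypothetical frame-time traces in milliseconds.
smooth = [16.7] * 10          # even pacing, roughly 60 FPS
stuttery = [10.0, 25.0] * 5   # alternating fast and slow frames

for name, trace in (("smooth", smooth), ("stuttery", stuttery)):
    deltas = consecutive_deltas(trace)
    print(f"{name}: {average_fps(trace):.1f} FPS average, "
          f"mean delta {statistics.mean(deltas):.1f} ms, "
          f"worst delta {max(deltas):.1f} ms")
```

Both traces average in the high 50s, yet the second swings 15 ms from frame to frame. A player would feel that swing as stutter even though the average FPS figures look similar.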


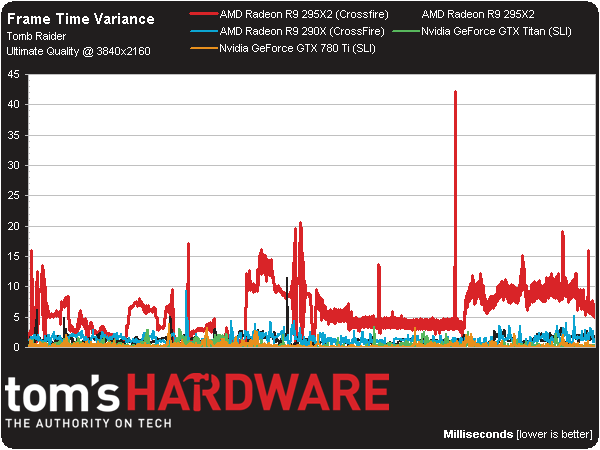

And there’s what it looks like over time. Ouch.



redgarl I always said it: more than two cards take too many resources to manage. Drivers are not there either. You are getting better results with simple CrossFire. Still, the way AMD corners Nvidia as the sole maker able to push 4K right now is amazing.

BigMack70 I personally don't think we'll see a day that 3+ GPU setups become even a tiny bit economical.

For that to happen, IMO, the time from one GPU release to the next would have to be so long that users needed more than 2x high-end GPUs to handle games in the meantime.

As it is, there's really no gaming setup that can't be reasonably managed by a pair of high-end graphics cards (Crysis back in 2007 is the only example I can think of when that wasn't the case). 3 or 4 cards will always just be for people chasing crazy benchmark scores.

frozentundra123456 I am not a great fan of Mantle because of the low number of games that use it and its specificity to GCN hardware, but this would have been one of the best-case scenarios for testing it with BF4.

I can't believe the reviewer just shrugged off the fact that the games obviously look CPU-limited by just saying "well, we had the fastest CPU you can get" when they could have used Mantle in BF4 to lessen CPU usage.

Matthew Posey The first non-bold paragraph says "even-thousand." Guessing that should be "eleven-thousand."

Haravikk How does a dual dual-GPU setup even operate under CrossFire? As I understand it, the two GPUs on each board are essentially operating in CrossFire already, so is there then a second CrossFire layer combining the two cards on top of that, or has AMD tweaked CrossFire to be able to manage them as four separate GPUs? Either way it seems like a nightmare to manage, and not even close to being worth the $3,000 price tag, especially when I'm not really convinced that even a single one of those $1,500 cards is really worth it to begin with; drool-worthy, but even if I had a ton of disposable income I couldn't picture myself ever buying one.