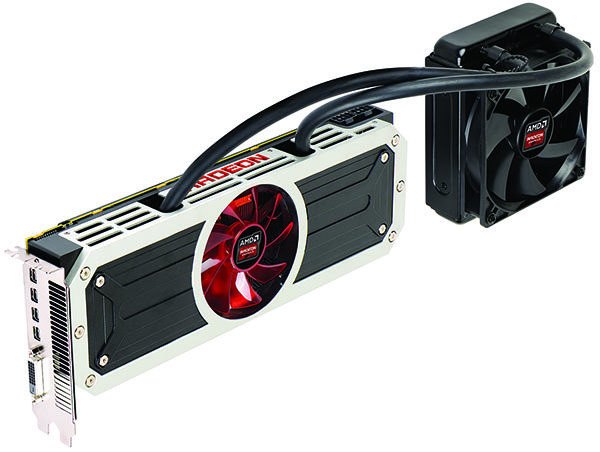

Radeon R9 295X2 8 GB Review: Project Hydra Gets Liquid Cooling

“Do you have what it takes?” AMD asks, purportedly referring to the big budget and beefy power supply you need before buying its new Radeon R9 295X2. We benchmark the 500 W, dual-GPU beast against several other high-end configs before declaring a winner.

Not For The Faint Of Heart, AMD Says

Dreadnought. Perhaps you know the word from Final Fantasy. Or maybe Warhammer. Or Star Trek, even.

But the dreadnoughts I was thinking about during my week locked up in the lab were the 20th-century battleships built by Britain, France, Germany, Italy, Japan, and the U.S. Before the signing of the Washington Naval Treaty in 1922, each of those countries (and several others) poured tons of resources into one-upping each other, commissioning capital ships able to move faster, fire farther, and withstand more damage. Eventually, the exercise became economically exhausting.

But all in the name of claiming superiority, right?

The graphics card market is in the midst of its own arms race. AMD fired a white-hot salvo back in 2011 with the introduction of its Radeon HD 7970, which easily dwarfed Nvidia’s GeForce GTX 580. A few short months later, Nvidia shot back with the GeForce GTX 680, hitting harder and for less money. Since then, both companies have traded broadsides, introducing the Radeon HD 7970 GHz Edition, GeForce GTX 690, Radeon HD 7990, GeForce GTX Titan, and Radeon R9 290X, all leveraging relatively similar architectures to push the performance envelope. Affluent gamers willingly paid the increased prices, which were offset by higher frame rates.

If those cards are the dreadnoughts of our industry, then we’re about to enter the era of super-dreadnoughts (yes, that’s a thing).

A couple of weeks ago, Nvidia announced its GeForce GTX Titan Z, a dual-GK110-powered, triple-slot behemoth. Jen-Hsun called it the perfect card for those in need of a supercomputer under their desk. And using his 8 TFLOP specification, I worked backward to a core clock rate around 700 MHz per GPU. That’s more than 100 MHz lower than the GK110 on a GeForce GTX Titan. Wouldn’t you be better off building that supercomputer using two, three, or even four Titans? We have to wait and see; the Titan Z isn’t available yet.
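For anyone who wants to retrace that back-of-the-envelope math, here is a minimal Python sketch. It assumes each shader retires one fused multiply-add (two FLOPs) per clock and that the Titan Z carries two fully enabled GK110s with 2880 shaders apiece; neither assumption is spelled out above, and the function name is purely illustrative.

```python
def implied_clock_mhz(tflops: float, shaders: int, flops_per_clock: int = 2) -> float:
    """Core clock (MHz) needed to reach a peak FP32 rating.

    Assumes every shader issues `flops_per_clock` FLOPs each cycle
    (2 = one fused multiply-add), which is the usual peak-rate convention.
    """
    flops = tflops * 1e12
    return flops / (shaders * flops_per_clock) / 1e6


# Assumption: two complete GK110 GPUs at 2880 shaders each.
TITAN_Z_SHADERS = 2 * 2880
# Single-GPU GeForce GTX Titan clock, taken from the spec table in this article.
TITAN_CLOCK_MHZ = 837

titan_z_clock = implied_clock_mhz(8.0, TITAN_Z_SHADERS)
print(f"Implied Titan Z clock: {titan_z_clock:.0f} MHz")
print(f"Deficit versus Titan:  {TITAN_CLOCK_MHZ - titan_z_clock:.0f} MHz")
```

Under those assumptions the result lands just shy of 700 MHz, which is where the "more than 100 MHz lower" comparison comes from.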

Although one GeForce GTX Titan Z appears destined to be quite a bit slower than a pair of Titans, Nvidia plans to ask an astounding $3000, or 50% more for it.

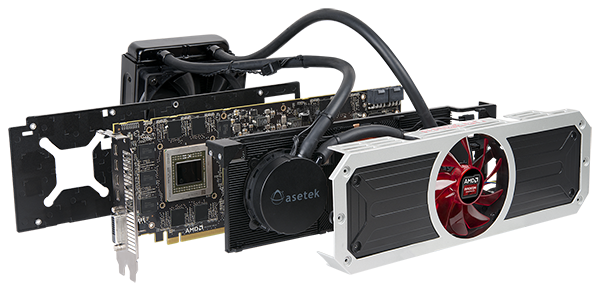

In response, AMD is escalating the arms race with its Radeon R9 295X2, another dual-GPU specimen. But this one is quite a bit different. To begin, it sports Hawaii GPUs that run just a bit faster than the single-processor Radeon R9 290X. Also, the 295X2 is a dual-slot board. How is such a feat possible? Closed-loop liquid cooling, of course.

AMD Fires Back With (Relative) Value

The existence of this card wasn’t a carefully-guarded secret. In fact, AMD had a marketing agency shipping out care packages alluding to its arrival. But a lot of the 295X2’s rumored specifications were completely wrong. Let's set the record straight, shall we?

Learn More About Hawaii

For more information on the Hawaii GPU, check out Radeon R9 290X Review: AMD's Back In Ultra-High-End Gaming

Again, AMD starts with two Hawaii processors, each manufactured at 28 nm and composed of 6.2 billion transistors. Those GPUs are unaltered, sporting a full 2816-shader configuration with 176 texture units, 64 ROPs, and an aggregate 512-bit memory bus. Four gigabytes of GDDR5 per processor are attached, yielding a card with 8 GB on-board.

AMD has a respectable track record of keeping its dual-GPU boards almost as fast as two single-GPU flagships. The Radeon HD 6990 ran something like 50 MHz slower than a Radeon HD 6970. But it still managed to accommodate two fully-operational Cayman processors. The Radeon HD 7990 did battle against the GeForce GTX 690 with Tahitis also operating 50 MHz slower than the then-fastest card in AMD’s stable. They too were fully-featured, with all 2048 shaders enabled.

|  | Radeon R9 295X2 | Radeon R9 290X | GeForce GTX Titan | GeForce GTX 780 Ti |
|---|---|---|---|---|
| Process | 28 nm | 28 nm | 28 nm | 28 nm |
| Transistors | 2 x 6.2 Billion | 6.2 Billion | 7.1 Billion | 7.1 Billion |
| GPU Clock | Up to 1018 MHz | Up to 1 GHz | 837 MHz | 875 MHz |
| Shaders | 2 x 2816 | 2816 | 2688 | 2880 |
| FP32 Performance | Up to 11.5 TFLOPS | 5.6 TFLOPS | 4.5 TFLOPS | 5.0 TFLOPS |
| Texture Units | 2 x 176 | 176 | 224 | 240 |
| Texture Fillrate | Up to 358.3 GT/s | 176 GT/s | 188 GT/s | 210 GT/s |
| ROPs | 2 x 64 | 64 | 48 | 48 |
| Pixel Fillrate | Up to 130.3 GP/s | 64 GP/s | 40 GP/s | 41 GP/s |
| Memory Bus | 2 x 512-bit | 512-bit | 384-bit | 384-bit |
| Memory | 2 x 4 GB GDDR5 | 4 GB GDDR5 | 6 GB GDDR5 | 3 GB GDDR5 |
| Memory Transfer Rate | Up to 5 GT/s | 5 GT/s | 6 GT/s | 7 GT/s |
| Memory Bandwidth | 2 x 320 GB/s | 320 GB/s | 288 GB/s | 336 GB/s |
| Board Power | 500 W | 250 W | 250 W | 250 W |
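
Most of the throughput rows in that table follow directly from the unit counts and clock rates. The Python sketch below shows the arithmetic for the two Radeon boards. It assumes the conventional peak-rate formulas (two FLOPs per shader per clock, one texel per texture unit per clock, one pixel per ROP per clock); the `Gpu` class and its field names are illustrative and do not come from AMD.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    name: str
    clock_mhz: float    # peak ("up to") core clock
    shaders: int        # per GPU
    texture_units: int  # per GPU
    rops: int           # per GPU
    bus_bits: int       # memory bus width per GPU
    mem_gtps: float     # memory transfer rate in GT/s
    gpus: int = 1       # number of GPUs on the board

    def fp32_tflops(self) -> float:
        # Assumes 2 FLOPs (one fused multiply-add) per shader per clock.
        return self.gpus * self.shaders * 2 * self.clock_mhz / 1e6

    def texture_fillrate_gtps(self) -> float:
        # Assumes one texel per texture unit per clock.
        return self.gpus * self.texture_units * self.clock_mhz / 1e3

    def pixel_fillrate_gpps(self) -> float:
        # Assumes one pixel per ROP per clock.
        return self.gpus * self.rops * self.clock_mhz / 1e3

    def bandwidth_gbps_per_gpu(self) -> float:
        # Bus width in bytes multiplied by transfers per second.
        return self.bus_bits / 8 * self.mem_gtps


r9_295x2 = Gpu("Radeon R9 295X2", 1018, 2816, 176, 64, 512, 5.0, gpus=2)
r9_290x = Gpu("Radeon R9 290X", 1000, 2816, 176, 64, 512, 5.0)

for card in (r9_295x2, r9_290x):
    print(
        f"{card.name}: {card.fp32_tflops():.1f} TFLOPS, "
        f"{card.texture_fillrate_gtps():.1f} GT/s, "
        f"{card.pixel_fillrate_gpps():.1f} GP/s, "
        f"{card.bandwidth_gbps_per_gpu():.0f} GB/s per GPU"
    )
```

The dual-GPU figures are simple sums across both processors, so they describe theoretical peaks rather than what a game will see once CrossFire scaling enters the picture.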

The Radeon R9 295X2's twin Hawaii GPUs go even further. Whereas a reference Radeon R9 290X runs at up to 1000 MHz, the 295X2 gets a small bump to 1018 MHz. Yes, the processors are still subject to the dynamic throttling behavior we illustrated in The Cause Of And Fix For Radeon R9 290X And 290 Inconsistency. But because cooling is better this time around, we’ve been told that throttling shouldn’t be an issue.

Between the two GPUs, their respective memory packages, and a bunch of power circuitry, AMD plants a PEX 8747 switch, the same 48-lane, five-port device found on its Radeon HD 7990 and Nvidia’s GeForce GTX 690. The switch interfaces with each Hawaii processor’s PCI Express 3.0 controller, facilitating a 16-lane connection between the GPUs and platform.

AMD also offers an array of display outputs similar to what we saw on the 7990, including one dual-link DVI-D connector and four mini-DisplayPort interfaces.

For all of that, AMD claims it will charge $1500 (or €1100 + VAT). The Radeon R9 295X2 won’t be available immediately, either. As of right now, the company says you’ll find it for sale online the week of April 21st. Don and I are in agreement here: we’ve seen too many missed price estimates and ship dates from AMD to take this one as gospel. We'll treat $1500 as general guidance for now.

Marsian Gustrianda: Many people doubt about Dual GPU Hawaii will be Blow Up. It seems AMD really do well job. Nice Looking Card

ohim: This card is like the Veyron of WV , show the world what you can do (R295x2) but you`ll still relay on the sales of your WV Golf for revenue (270x, 280x)

outlw6669: Impressive performance, temperatures and fairly low noise! I would prefer a bit lower price, but this looks like a great card for the gamer that has everything!

gunfighter zeck: the name Dreadnaught originated from Dread Nothing or, fear nothing. Boss ship.

Maxamus456: Hope this price stays low and not get bloated from bit con miners like its predecessors.

blubbey: So let me get this straight. It runs pretty cool, quiet, performs well and (for the moment) is able to play a good selection of games at 4k admirably and is priced competitively. Plus if you are going to drop a bit more on watercooling your GPUs (which is a possibility if you're spending $1200+) that gives this card even greater value. Nice work AMD.

marciocattini: Wheres Tom's Hardware seal of approval? =( clearly this card diserves some love!