Nvidia GeForce GTX Titan 6 GB: GK110 On A Gaming Card

After almost one year of speculation about a flagship gaming card based on something bigger and more complex than GK104, Nvidia is just about ready with its GeForce GTX Titan, based on GK110. Does this monster make sense, or is it simply too expensive?

Meet The Mighty GeForce GTX Titan 6 GB

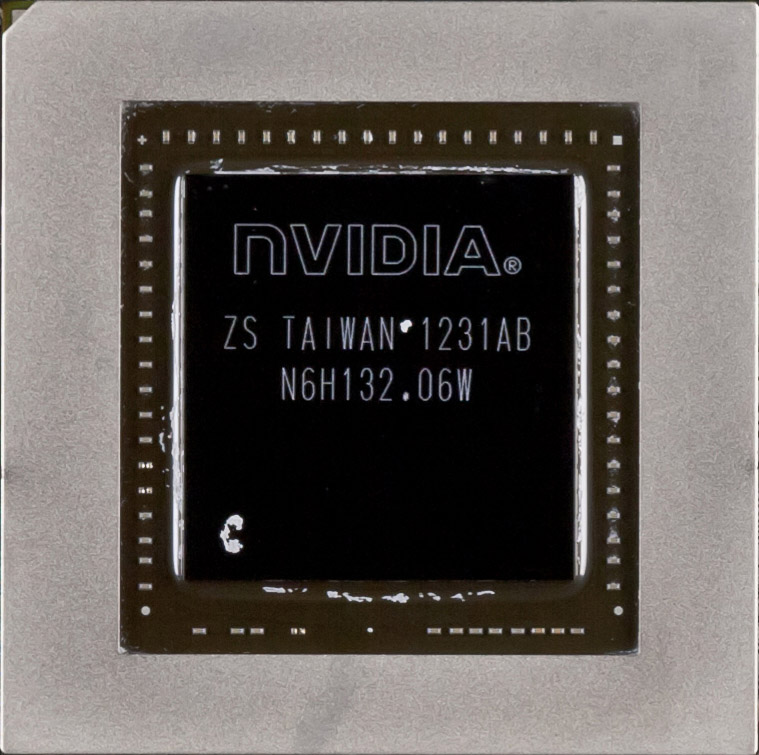

There are more than 7 billion humans on our fair planet. As it happens, Nvidia’s GK110 GPU—previously found only in the Tesla K20X and K20 cards—comprises 7.1 billion transistors. If you could break GK110 into single-transistor pieces, everyone on Earth could have one. And here I am, staring at three of these massive GPUs driving a trio of GeForce GTX Titan cards.

According to the folks at Nvidia, GK110 is the largest chip that TSMC can manufacture using its 28 nm node. Common sense dictates that this GPU would be expensive, power-hungry, hot, and susceptible to low yields. Two of those are absolutely true. The other assumptions, surprisingly, are not.

My, Titan, You Look Familiar

In spite of its large, complex graphics processor, GeForce GTX Titan isn’t a massive card by any means. It falls right in between the 10” GeForce GTX 680 and 11” GeForce GTX 690, measuring 10.5” long (half an inch shorter than AMD's Radeon HD 7970). It also replaces the 680’s comparatively cheap-feeling plastic shroud with a solid dual-slot shell clearly derived from Nvidia’s work on GeForce GTX 690.

There are notable differences between the two premium boards, though. Whereas the 690 needed an axial-flow fan to effectively cool two GPUs, GeForce GTX Titan employs a centrifugal blower to exhaust hot air out the back of the card. No more recirculating heat back into your case—that’s the good news. Unfortunately, Nvidia says the 690’s magnesium alloy fan housing was too expensive, so the entire cover is now aluminum (except for the polycarbonate window—another design cue that carries over from GeForce GTX 690).

Blower-style fans are sometimes derided for making more noise than axial-flow coolers. And if you manually force this card’s fan to the upper range of its duty cycle, it’ll scream at you. Let the board balance its own thermal situation, though, and the GeForce GTX Titan is hardly audible at all. Nvidia attributes this partly to vapor chamber cooling (a technology both Nvidia and AMD employ in their high-end cards), but also to more effective thermal interface material, the extended stack of aluminum cooling fins, and damping material around its fan.

Similar aesthetics and acoustics aren’t the only two qualities GeForce GTX Titan and GeForce GTX 690 share. Like the flagship board, this new card sports the GeForce GTX logo along its top edge. The green text is likewise LED-backlit, and the lighting is controllable through bundled software.


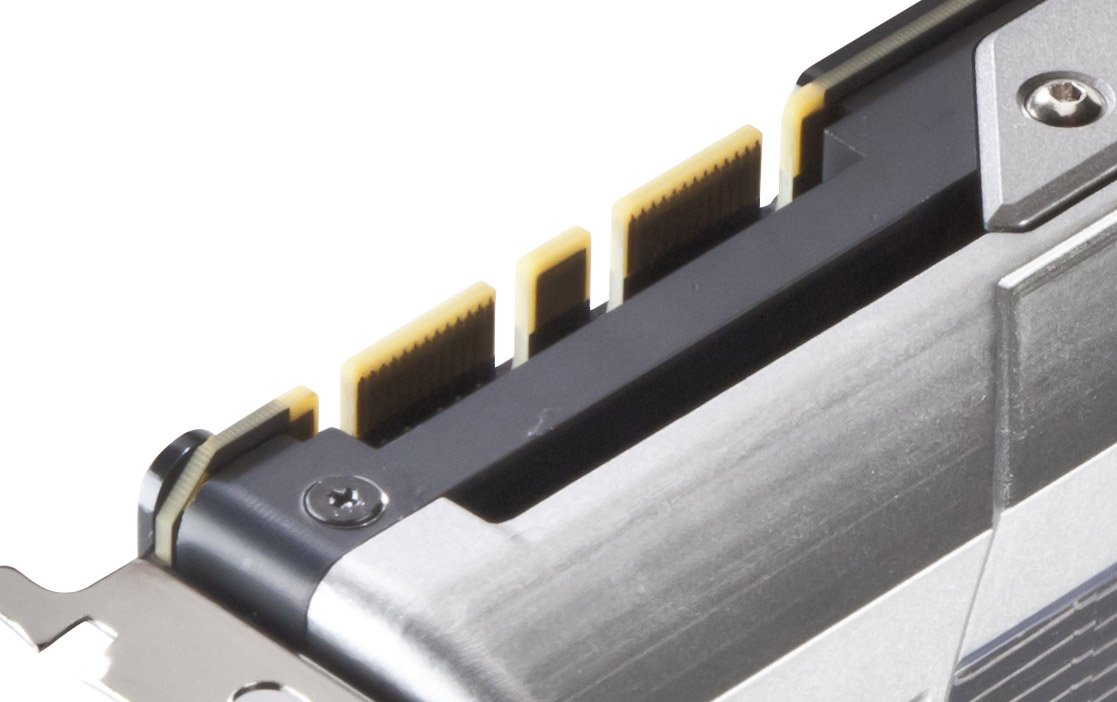

You’ll also find SLI connectors above the Titan’s rear I/O bracket. Nvidia enables two-, three-, and four-way configurations, though company reps readily admit that adding a fourth GPU is better for making runs at performance records than for real-world gaming gains.

Any combination of GeForce GTX Titan cards is able to output to four displays simultaneously—three screens in Surround, along with an accessory display. Obviously, with just one card plugged in, you’d need to use all of its outputs: two dual-link DVI connectors, one full-sized HDMI port, and one full-sized DisplayPort output. Two- and three-way SLI setups give you several other options for hooking up multi-monitor configurations.

Although the Titan’s GK110 processor is immense, it’s less power-hungry than two ~3.5 billion transistor GK104s working in tandem on a GeForce GTX 690. That card bears a 300 W TDP and consequently requires two eight-pin power leads.

GeForce GTX Titan is rated for 250 W—the same as a Radeon HD 7970 GHz Edition—necessitating one eight- and one six-pin connector. The math adds up to 75 W from a PCI Express x16 slot, 75 W from the six-pin plug, and 150 W from the eight-pin connection, leaving plenty of headroom to stay within spec. Nvidia recommends you match this card up to a 600 W power supply at least, though most of the shops building mini-ITX systems seem to be getting away with 450 and 500 W PSUs.
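The power-budget arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the per-connector limits are the 75 W and 150 W figures quoted in the paragraph. The TDP and connector counts come from the specification table in this article.

```python
# Per-source power limits quoted in the text, in watts.
SLOT_W = 75        # PCI Express x16 slot
SIX_PIN_W = 75     # six-pin auxiliary connector
EIGHT_PIN_W = 150  # eight-pin auxiliary connector


def available_power(six_pin: int, eight_pin: int) -> int:
    """Total power a card may draw from the slot plus its auxiliary connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


def headroom(tdp_w: int, six_pin: int, eight_pin: int) -> int:
    """Difference between what the connectors can deliver and the card's rated power."""
    return available_power(six_pin, eight_pin) - tdp_w


# (rated power in W, six-pin count, eight-pin count), taken from the spec table.
cards = {
    "GeForce GTX Titan": (250, 1, 1),
    "GeForce GTX 690": (300, 0, 2),
    "GeForce GTX 680": (195, 2, 0),
}

for name, (tdp, six, eight) in cards.items():
    print(f"{name}: {available_power(six, eight)} W available, "
          f"{headroom(tdp, six, eight)} W headroom")
```

By this count the Titan has 300 W available against its 250 W rating, which is the headroom the paragraph refers to.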

| | GeForce GTX Titan | GeForce GTX 690 | GeForce GTX 680 | Radeon HD 7970 GHz Ed. |
|---|---|---|---|---|
| Shaders | 2,688 | 2 x 1,536 | 1,536 | 2,048 |
| Texture Units | 224 | 2 x 128 | 128 | 128 |
| Full Color ROPs | 48 | 2 x 32 | 32 | 32 |
| Graphics Clock | 836 MHz | 915 MHz | 1,006 MHz | 1,000 MHz |
| Texture Fillrate | 187.5 Gtex/s | 2 x 117.1 Gtex/s | 128.8 Gtex/s | 134.4 Gtex/s |
| Memory Clock | 1,502 MHz | 1,502 MHz | 1,502 MHz | 1,500 MHz |
| Memory Bus | 384-bit | 2 x 256-bit | 256-bit | 384-bit |
| Memory Bandwidth | 288.4 GB/s | 2 x 192.3 GB/s | 192.3 GB/s | 288 GB/s |
| Graphics RAM | 6 GB GDDR5 | 2 x 2 GB GDDR5 | 2 GB GDDR5 | 3 GB GDDR5 |
| Die Size | 551 mm² | 2 x 294 mm² | 294 mm² | 365 mm² |
| Transistors (Billion) | 7.1 | 2 x 3.54 | 3.54 | 4.31 |
| Process Technology | 28 nm | 28 nm | 28 nm | 28 nm |
| Power Connectors | 1 x 8-pin, 1 x 6-pin | 2 x 8-pin | 2 x 6-pin | 1 x 8-pin, 1 x 6-pin |
| Maximum Power | 250 W | 300 W | 195 W | 250 W |
| Price (Street) | $1,000 | $1,000 | $460 | $430 |
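The texture fillrate row is related to two other rows in the table: texture units multiplied by the graphics clock. A minimal Python sketch, assuming that simple relation (the function name is hypothetical), reproduces the GeForce GTX 680 and 690 entries from their listed clocks. The Titan and Radeon entries in the table come out slightly higher than this product, which suggests they were calculated from a different clock; that is an inference, not something the table states.

```python
def texture_fillrate_gtex(texture_units: int, clock_mhz: float) -> float:
    """Texels per second in Gtex/s, assuming one texel per texture unit per clock."""
    return texture_units * clock_mhz / 1000


# (texture units, graphics clock in MHz) per GPU, from the table above.
gpus = {
    "GeForce GTX Titan": (224, 836),
    "GeForce GTX 690 (per GPU)": (128, 915),
    "GeForce GTX 680": (128, 1006),
    "Radeon HD 7970 GHz Ed.": (128, 1000),
}

for name, (units, clock) in gpus.items():
    print(f"{name}: {texture_fillrate_gtex(units, clock):.1f} Gtex/s")
```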

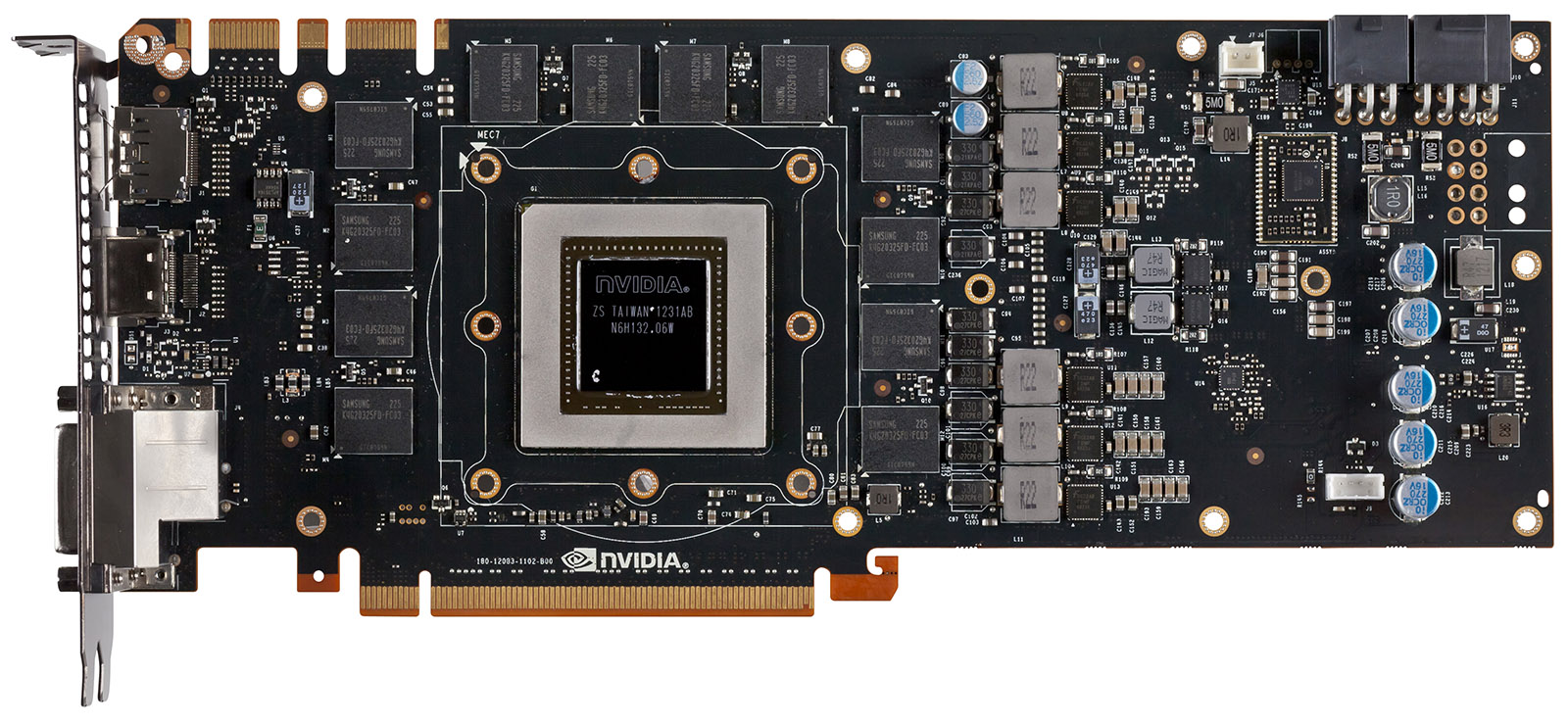

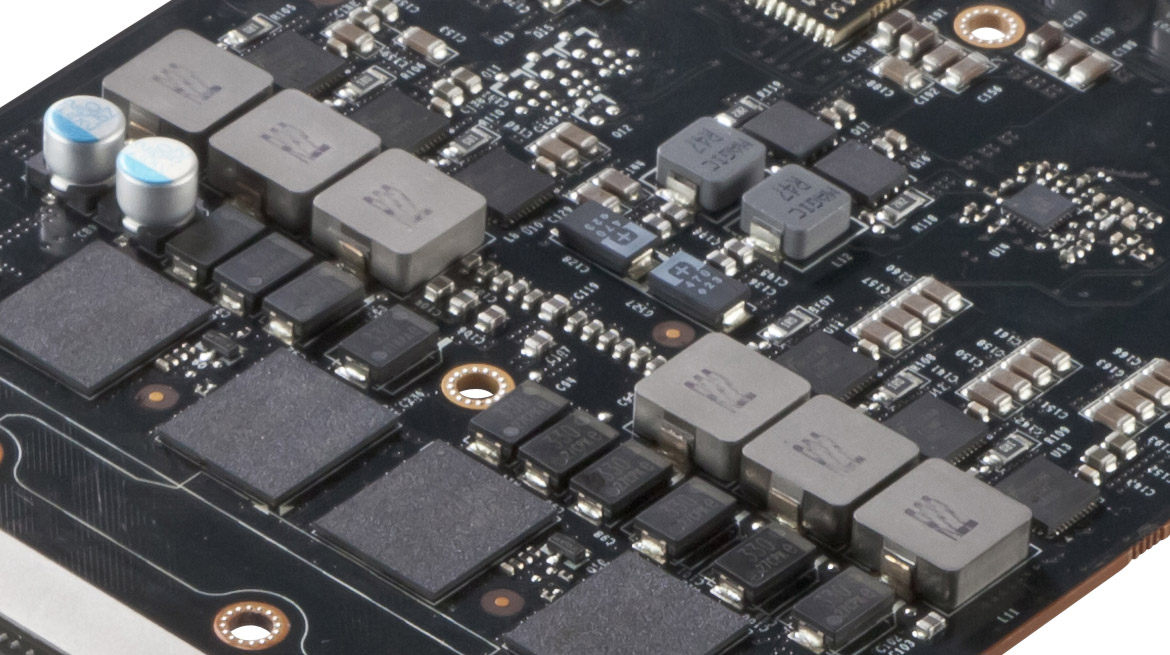

Cut away the beefy cooler and you’ll expose this card’s massive GPU, memory subsystem, and voltage regulation circuitry.

The Titan’s GK110 graphics processor runs at 836 MHz, minimum. However, it benefits from a reworked version of GPU Boost that Nvidia says can typically keep the chip operating at 876 MHz. We know from extensive testing in "GeForce GTX 680 2 GB Review: Kepler Sends Tahiti On Vacation," though, that the behavior of GPU Boost depends heavily on a number of factors, right down to the temperature in your room. In our World of Warcraft benchmark, for example, GeForce GTX Titan barely crests 70% of its board power, so the GPU ramps up to 993 MHz, even as its temperature hovers around 77 degrees Celsius. More on GPU Boost 2.0 shortly.

Twelve 2 Gb packages on the front of the card and 12 on the back add up to 6 GB of GDDR5 memory. The .33 ns Samsung parts are rated for up to 6,000 Mb/s, and Nvidia operates them at 1,502 MHz. On a 384-bit aggregate bus, that’s 288.4 GB/s of bandwidth.
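The capacity and bandwidth figures are easy to misread because the packages are rated in gigabits (Gb) while the card total is in gigabytes (GB). The Python sketch below works through both numbers. It assumes GDDR5 moves four bits per pin for every cycle of the quoted 1,502 MHz memory clock, which is consistent with the 6,000 Mb/s rating mentioned above. The function names are hypothetical.

```python
def capacity_gb(packages: int, gbit_per_package: int) -> float:
    """Total memory in gigabytes from a count of gigabit-rated packages (8 bits per byte)."""
    return packages * gbit_per_package / 8


def bandwidth_gbs(memory_clock_mhz: float, bus_width_bits: int,
                  transfers_per_clock: int = 4) -> float:
    """Peak bandwidth in GB/s. transfers_per_clock=4 is the assumed GDDR5 ratio."""
    data_rate_mtps = memory_clock_mhz * transfers_per_clock  # per-pin rate in MT/s
    return data_rate_mtps * bus_width_bits / 8 / 1000         # bits to bytes, MB/s to GB/s


# 12 packages on the front plus 12 on the back, 2 Gb each.
print(capacity_gb(12 + 12, 2))                 # 48 Gb in total, i.e. 6.0 GB
print(round(bandwidth_gbs(1502, 384), 1))      # GeForce GTX Titan
print(round(bandwidth_gbs(1502, 256), 1))      # GeForce GTX 680
```

The same function gives 288.4 GB/s for the Titan's 384-bit bus and 192.3 GB/s for the GeForce GTX 680's 256-bit bus, matching the specification table.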

Six power phases for the GPU and two for the memory are relatively easy to identify toward Titan’s back half. In comparison, the GeForce GTX 690 employed five phases per GPU (10 total) and one memory phase per GPU. This is significant because Nvidia enables overvolting on GeForce GTX Titan, explicitly affecting the card’s ability to hit higher GPU Boost frequencies. Maxing out the voltage settings exposed through EVGA’s Precision X software, we were able to increase our sample’s clock from a typical 876 MHz to nearly 1.2 GHz—all while keeping the GPU’s temperature under a defined threshold of 87 degrees Celsius.

jaquith: Hmm...$1K yeah there will be lines. I'm sure it's sweet.

Better idea, lower all of the prices on the current GTX 600 series by 20%+ and I'd be a happy camper! ;)

Crysis 3 broke my SLI GTX 560's and I need new GPU's...

Trull: Dat price... I don't know what they were thinking, tbh.

AMD really has a chance now to come strong in 1 month. We'll see.

tlg: The high price OBVIOUSLY is related to low yields, if they could get thousands of those on the market at once then they would price it near the gtx680. This is more like a "nVidia collector's edition" model. Also gives nVidia the chance to claim "fastest single gpu on the planet" for some time.

tlg: AMD already said in (a leaked?) teleconference that they will not respond to the TITAN with any card. It's not worth the small market at £1000...

wavebossa: "Twelve 2 Gb packages on the front of the card and 12 on the back add up to 6 GB of GDDR5 memory. The .33 ns Samsung parts are rated for up to 6,000 Mb/s, and Nvidia operates them at 1,502 MHz. On a 384-bit aggregate bus, that’s 288.4 GB/s of bandwidth."

12x2 + 12x2 = 6? ...

"That card bears a 300 W TDP and consequently requires two eight-pin power leads."

Shows a picture of a 6pin and an 8pin...

I haven't even gotten past the first page but mistakes like this bug me

wavebossa: Nevermind, the 2nd mistake wasn't a mistake. That was my own fail reading.

ilysaml: "The Titan isn’t worth $600 more than a Radeon HD 7970 GHz Edition. Two of AMD’s cards are going to be faster and cost less."

My understanding from this is that Titan is just 40-50% faster than HD 7970 GHz Ed that doesn't justify the Extra $1K.

battlecrymoderngearsolid: Can't it match GTX 670s in SLI? If yes, then I am sold on this card.

What? Electricity is not cheap in the Philippines.