Four SAS 6 Gb/s RAID Controllers, Benchmarked And Reviewed

We got our hands on four SAS 6 Gb/s RAID controllers from Adaptec, Areca, HighPoint, and LSI and ran them through RAID 0, 5, 6, and 10 workloads to test their mettle. Does your system need eight more ports of connectivity? We can answer that!

SAS: When SATA Is Not Enough

Have a look at today's motherboards (or even some of the older platforms out there). Is it really still necessary to buy a dedicated RAID controller? SATA 3Gb/s ports are found on pretty much every board, just like audio and networking connectivity. The most modern chipsets, like AMD's A75 and Intel's Z68, even incorporate SATA 6Gb/s support. Backed by reliable power circuitry, a powerful processor, and plenty of I/O, aren't you already getting the hallmarks of a solid add-in storage card? Where does it make sense to make that investment in a discrete controller?

In most cases, mainstream users are able to configure RAID 0, 1, 5, and 10 arrays using their motherboard's built-in SATA ports and a little bit of software, yielding reasonable performance. But in environments where more advanced RAID levels like 6, 50, or 60 are necessary, beefier disk management is desired, or scalability is needed, those chipset-based controllers come up short. That's when it's time for a professional-class solution.

And at that point, you're no longer limited to SATA storage. A great number of add-in cards facilitate support for Serial-Attached SCSI (SAS) or Fibre Channel (FC) disks, each interface offering unique advantages.

SAS and FC for Professional RAID

Each of the three available interfaces (SATA, SAS, and FC) brings different strengths and weaknesses to the table; none of them can be definitively labeled the best. The strengths of SATA-based drives include some of the highest capacities and low cost, while still managing great data rates. SAS disks generally emphasize reliability, scalability, and high I/O rates. FC storage focuses on continuous, fast data rates. As a legacy solution, some enterprises still use Ultra SCSI, although it's hampered by a maximum device count of 16 (which includes one controller and up to 15 disks). Moreover, its maximum aggregate bandwidth of 320 MB/s (in the case of Ultra-320 SCSI) is quite paltry compared to its successors.
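To put the Ultra-320 figure in perspective, here is a rough back-of-the-envelope sketch. The 320 MB/s bus and 15-disk limit come from the text above; the SAS side assumes a 6 Gb/s line rate with 8b/10b encoding, and the function names are purely illustrative.

```python
# Figures taken from the text above.
ULTRA320_BUS_MBPS = 320   # aggregate bandwidth shared by all devices on the bus
ULTRA320_MAX_DISKS = 15   # 16 devices minus the controller


def per_disk_share(bus_mbps: float, active_disks: int) -> float:
    """Bandwidth each disk gets if all disks stream at once on a shared bus."""
    if active_disks < 1:
        raise ValueError("active_disks must be at least 1")
    return bus_mbps / active_disks


def sas_link_mbps(line_rate_gbps: float = 6.0) -> float:
    """Payload rate of one SAS link in MB/s, assuming 8b/10b encoding
    (8 payload bits carried in every 10 bits on the wire)."""
    payload_mbit = line_rate_gbps * 1000 * 8 / 10
    return payload_mbit / 8  # bits to bytes


if __name__ == "__main__":
    share = per_disk_share(ULTRA320_BUS_MBPS, ULTRA320_MAX_DISKS)
    print(f"Ultra-320, 15 disks busy: {share:.1f} MB/s per disk")
    print(f"One SAS 6 Gb/s link:      {sas_link_mbps():.0f} MB/s")
```

Under these assumptions, a fully populated Ultra-320 bus leaves each disk roughly 21 MB/s, while a single SAS 6 Gb/s link carries about 600 MB/s on its own.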

Ultra SCSI used to be the standard for professional, enterprise storage solutions. SAS has, however, largely taken over now, offering not only significantly higher bandwidth, but also the flexibility to accommodate mixed SAS/SATA environments to really optimize cost, performance, dependability, and capacity even within a single JBOD. Additionally, many SAS disks have two ports for the purpose of redundancy. If one controller card goes out, connecting the drive to a second controller enables fail-over. Thus, SAS can support high-availability setups.

Furthermore, SAS is not merely a point-to-point protocol between a controller and a storage device. It supports up to 255 storage devices per SAS cable via expanders. With a two-tiered SAS expander structure, 255 x 255 (slightly more than 65,000) storage devices could theoretically be connected to a single SAS channel, assuming the controller chip supports such a large quantity internally.
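The expander arithmetic can be checked with a few lines of Python. This is only a sketch of the theoretical ceiling: the fan-out of 255 is the figure quoted above, and `max_devices` is a hypothetical helper, not part of any SAS tool.

```python
# Fan-out per expander tier, as quoted in the text above.
DEVICES_PER_EXPANDER = 255


def max_devices(tiers: int, fanout: int = DEVICES_PER_EXPANDER) -> int:
    """Theoretical device count when every port on each tier feeds the next tier."""
    if tiers < 1:
        raise ValueError("tiers must be at least 1")
    return fanout ** tiers


if __name__ == "__main__":
    for tiers in (1, 2):
        print(f"{tiers} tier(s): {max_devices(tiers):,} devices")
```

Two tiers work out to 65,025 devices, which matches the "slightly more than 65,000" figure. Real deployments are bounded well below that by what the controller chip can address.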

Adaptec, Areca, HighPoint, and LSI: Four SAS RAID Controllers Tested

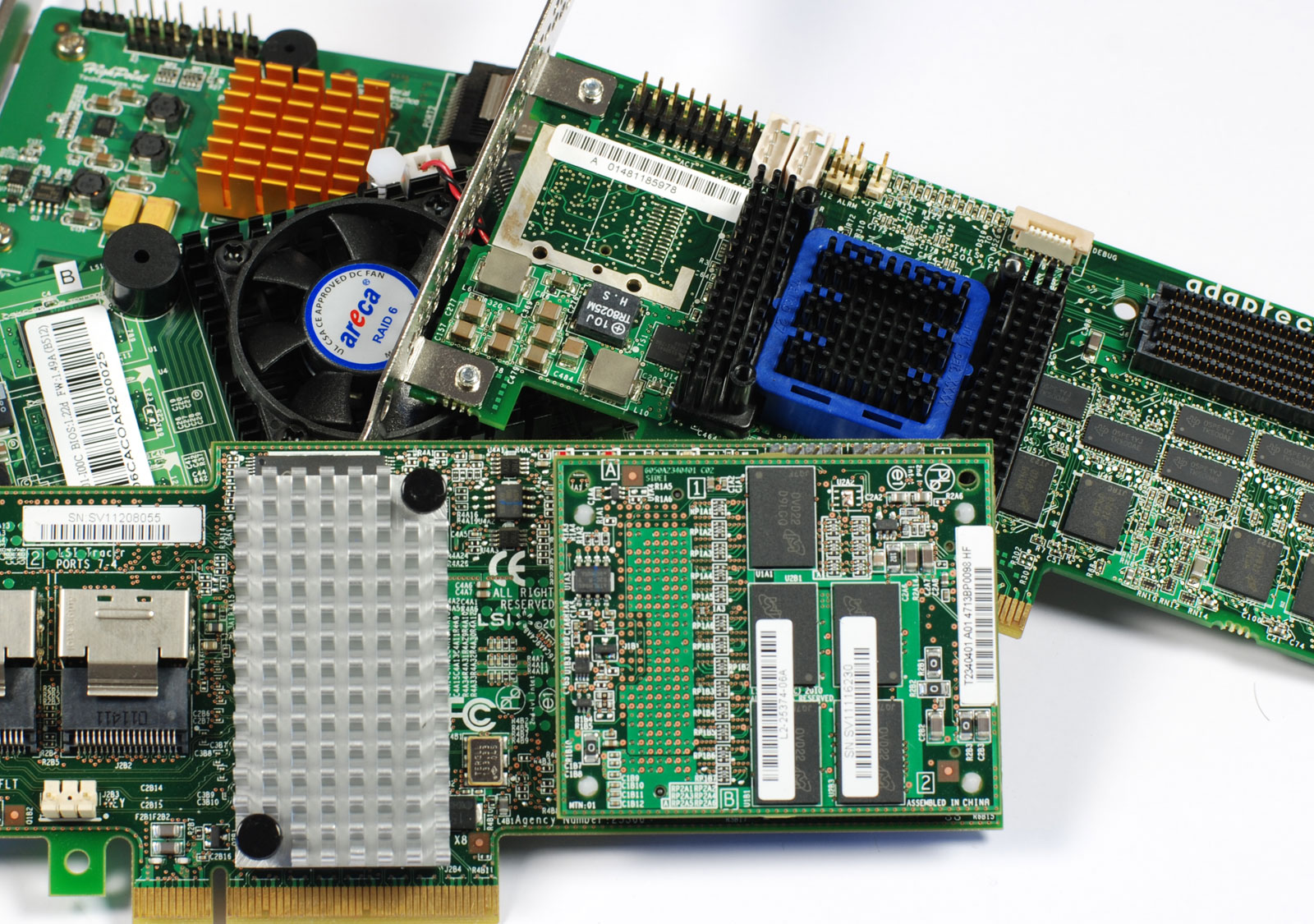

In this comparison test, we're scrutinizing the performance of current SAS RAID controllers, represented by four products: Adaptec's RAID 6805, Areca's ARC-1880i, HighPoint's RocketRAID 2720SGL, and LSI's MegaRAID 9265-8i.

Why SAS and not FC? On one hand, SAS is the more interesting and relevant architecture. It offers features like zoning that can be highly attractive for professional use. On the other hand, market data shows that FC’s role in the professional storage market is declining, and some analysts even predict its demise based on the number of shipped hard disks. While the future of FC seems bleak, IDC predicts that SAS hard disks will claim a 72 percent share of the enterprise hard disk market in 2014.

americanherosandwich Great review! Though I would have liked to see some RAID 1 and RAID 10 benchmarks. Don't usually see RAID 0 for expensive SAS RAID controllers, and more RAID 10 configurations than RAID 5.

purrcatian I just sold my HighPoint RocketRAID 2720 because of the terrible drivers. Not only do the drivers add about 60 seconds to the Windows boot, they also cause random BSODs. The support was a joke, and the driver that came on the disc caused the Windows 7 x64 setup to instantly BSOD even though the box had a Windows 7 compatible logo on it. I even RMAed the card and the new one was exactly the same.

dealcorn Very cool, fast and expensive means not home server stuff. For that, try the IBM BR10I, 8-port PCI-e SAS/SATA RAID controller, which is generally available on eBay for $40 with no bracket (I live for danger). You are stuck with 3 Gb/sec per port, but if you add $34 for a pair of forward breakout cables you have 8 SATA ports at a cost of under $10 per port. The card requires a PCIe x8 slot but if you only give it 4 lanes (the number of lanes offered by our Atom's NM10) it will give each port 1.5 Gb/sec. Cheap SAS makes software RAID 6 prudent in a home storage server.

slicedtoad I have pretty much no use for anything other than RAID 0 but it was still an interesting read. I think I prefer this type of article over the longer type with actual benchmarks thrown in (not for GPU or CPU reviews though).

pxl9190 Only wish this review had come earlier!

I had a hard time deciding between the 9265-8i, 1880 and 6805 a month ago. I bought the 6805 and always wondered why RAID 10 was not as fast as I thought it should be. This review confirmed my worries.

I eventually went to RAID 6 with 6 Constellation ES 1TB disks. Here's where the Adaptec really shines. This is for a photo/video storage/editing disk array.

Admittedly, if I had the choice again, I would have picked the Areca after seeing the numbers. Adaptec was the cheapest among all of them so it's not too much of a regret.

Great review! As I am in the process of building a new home file server and always have a habit of going overboard in such situations, I will be referring back to this article many more times before purchasing.

That said, can you please talk more about the performance differences between SATA and SAS? I understand the reliability argument, however I wonder if for my purposes I would not be better served by using cheaper SATA disks over SAS disks?

I would also love some direction with regard to good enclosures/power supplies for a hard drive only enclosure. I realize I am quickly priced out of an enterprise solution in this arena, but have seen at least a couple cheaper options online such as the Sans Digital TR8M+B. (This enclosure is normally bundled with some RocketRAID controller which I would probably discard in favor of either the Adaptec or LSI solution.)

You are missing a huge competitor in this space. Atto RAID Adapters are on par and I think the only other one out there, why are they not compared in this review?

Marco925 I bought the HighPoint, for its money, it was incredible value at a little under $120.

stuckintexas I evaluated all but the HighPoint for work. What isn't shown, and would be unrealistic for a home user, is that the LSI destroys the competition when you throw on a SAS expander. With 24 15k SAS drives, the LSI card tops out at 3500MB/s, RAID0 sequential write, while the Areca is